Since the launch of ChatGPT in November 2022, the GenAI landscape has undergone rapid cycles of experimentation, improvement, and adoption across a wide range of use cases. Applied to the software engineering industry, GenAI assistants primarily help engineers write code faster by providing autocomplete suggestions and generating code snippets based on natural language descriptions. This approach is used for both generating and testing code. While we recognise the tremendous potential of using GenAI for forward engineering, we also acknowledge the significant challenge of dealing with the complexities of legacy systems, in addition to the fact that developers spend a lot more time reading code than writing it.

Through modernizing numerous legacy systems for our clients, we have found that an evolutionary approach makes legacy displacement both safer and more effective at achieving its value goals. This method not only reduces the risks of modernizing key business systems but also allows us to generate value early and incorporate frequent feedback by gradually releasing new software throughout the process. Despite the positive results we have seen from this approach over a “Big Bang” cutover, the cost/time/value equation for modernizing large systems is often prohibitive. We believe GenAI can turn this situation around.

For our part, we have been experimenting over the last 18 months with LLMs to tackle the challenges associated with the modernization of legacy systems. During this time, we have developed three generations of CodeConcise, an internal modernization accelerator at Thoughtworks. The motivation for building CodeConcise stemmed from our observation that the modernization challenges faced by our clients are similar. Our goal is for this accelerator to become our sensible default in legacy modernization, enhancing our modernization value stream and enabling us to realize the benefits for our clients more efficiently.

We intend to use this article to share our experience applying GenAI for Modernization. While much of the content focuses on CodeConcise, this is simply because we have hands-on experience with it. We do not suggest that CodeConcise or its approach is the only way to apply GenAI successfully for modernization. As we continue to experiment with CodeConcise and other tools, we will share our insights and learnings with the community.

GenAI era: A timeline of key events

One main reason for the current wave of hype and excitement around GenAI is the versatility and high performance of general-purpose LLMs. Each new generation of these models has consistently shown improvements in natural language comprehension, inference, and response quality. We are seeing numerous organizations leveraging these powerful models to meet their specific needs. Additionally, the introduction of multimodal AIs, such as text-to-image generative models like DALL-E, along with AI models capable of video and audio comprehension and generation, has further expanded the applicability of GenAIs. Moreover, the latest AI models can retrieve new information from real-time sources, beyond what is included in their training datasets, further broadening their scope and utility.

Since the launch of ChatGPT, we have observed the emergence of new software products designed with GenAI at their core. In other cases, existing products have become GenAI-enabled by incorporating new features previously unavailable. These products typically utilize general-purpose LLMs, but these soon hit limitations when their use case goes beyond prompting the LLM to generate responses purely based on the data it has been trained with (text-to-text transformations). For instance, if your use case requires an LLM to understand and access your organization’s data, the most economically viable solution often involves implementing a Retrieval-Augmented Generation (RAG) approach. Alternatively, or in combination with RAG, fine-tuning a general-purpose model might be appropriate, especially if you need the model to handle complex rules in a specialized domain, or if regulatory requirements necessitate precise control over the model’s outputs.

The widespread emergence of GenAI-powered products can be partly attributed to the availability of numerous tools and development frameworks. These tools have democratized GenAI, providing abstractions over the complexities of LLM-powered workflows and enabling teams to run quick experiments in sandbox environments without requiring AI technical expertise. However, caution must be exercised in these relatively early days not to fall into traps of convenience with frameworks, as Thoughtworks’ recent Technology Radar attests.

Problems that make modernization expensive

When we began exploring the use of “GenAI for Modernization”, we focused on problems that we knew we would face again and again: the problems we knew were causing modernization to be time or cost prohibitive.

- How can we understand the existing implementation details of a system?
- How can we understand its design?
- How can we gather knowledge about it without having a human expert available to guide us?
- Can we help with idiomatic translation of code at scale to our desired tech stack? How?
- How can we minimize risks from modernization by improving and adding automated tests as a safety net?
- Can we extract from the codebase the domains, subdomains, and capabilities?
- How can we provide better safety nets so that differences in behavior between old systems and new systems are clear and intentional? How can we enable cut-overs to be as headache free as possible?

Not all of these questions may be relevant in every modernization effort. We have deliberately drawn our problems from the most challenging modernization scenarios: Mainframes. These are some of the most significant legacy systems we encounter, both in terms of size and complexity. If we can answer these questions in this scenario, then the approach will certainly bear fruit for other technology stacks.

The Architecture of CodeConcise

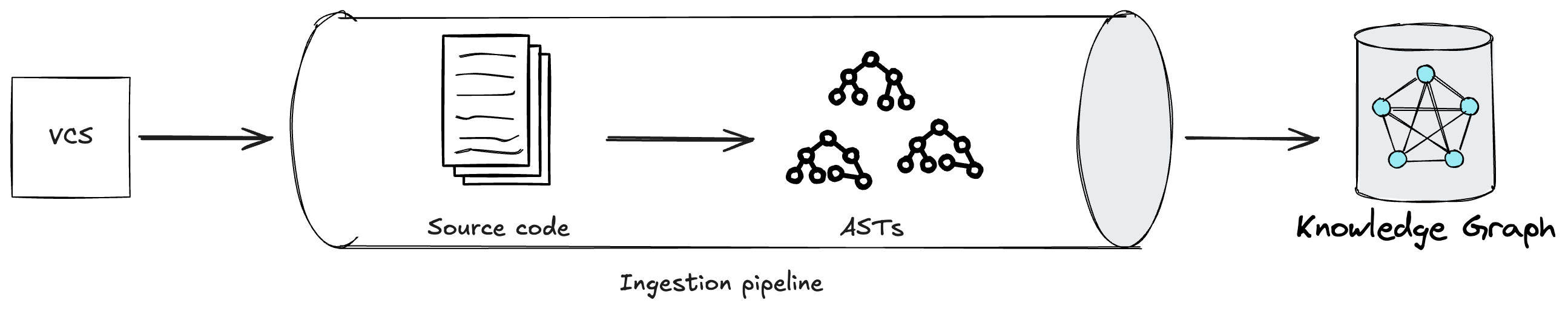

Figure 1: The conceptual approach of CodeConcise.

CodeConcise is inspired by the Code-as-data concept, where code is treated and analyzed in ways traditionally reserved for data. This means we are not treating code just as text; through the use of language-specific parsers, we can extract its intrinsic structure and map the relationships between entities in the code. This is done by parsing the code into a forest of Abstract Syntax Trees (ASTs), which are then stored in a graph database.

Determine 2: An ingestion pipeline in CodeConcise.

Edges between nodes are then established, for instance an edge may be saying

“the code on this node transfers management to the code in that node”. This course of

doesn’t solely permit us to grasp how one file within the codebase may relate

to a different, however we additionally extract at a a lot granular degree, for instance, which

conditional department of the code in a single file transfers management to code within the

different file. The flexibility to traverse the codebase at such a degree of granularity

is especially essential because it reduces noise (i.e. pointless code) from the

context supplied to LLMs, particularly related for recordsdata that don’t include

extremely cohesive code. Primarily, there are two advantages we observe from this

noise discount. First, the LLM is extra more likely to keep focussed on the immediate.

Second, we use the restricted house within the context window in an environment friendly approach so we

can match extra data into one single immediate. Successfully, this permits the

LLM to research code in a approach that isn’t restricted by how the code is organized in

the primary place by builders. We confer with this deterministic course of because the ingestion pipeline.

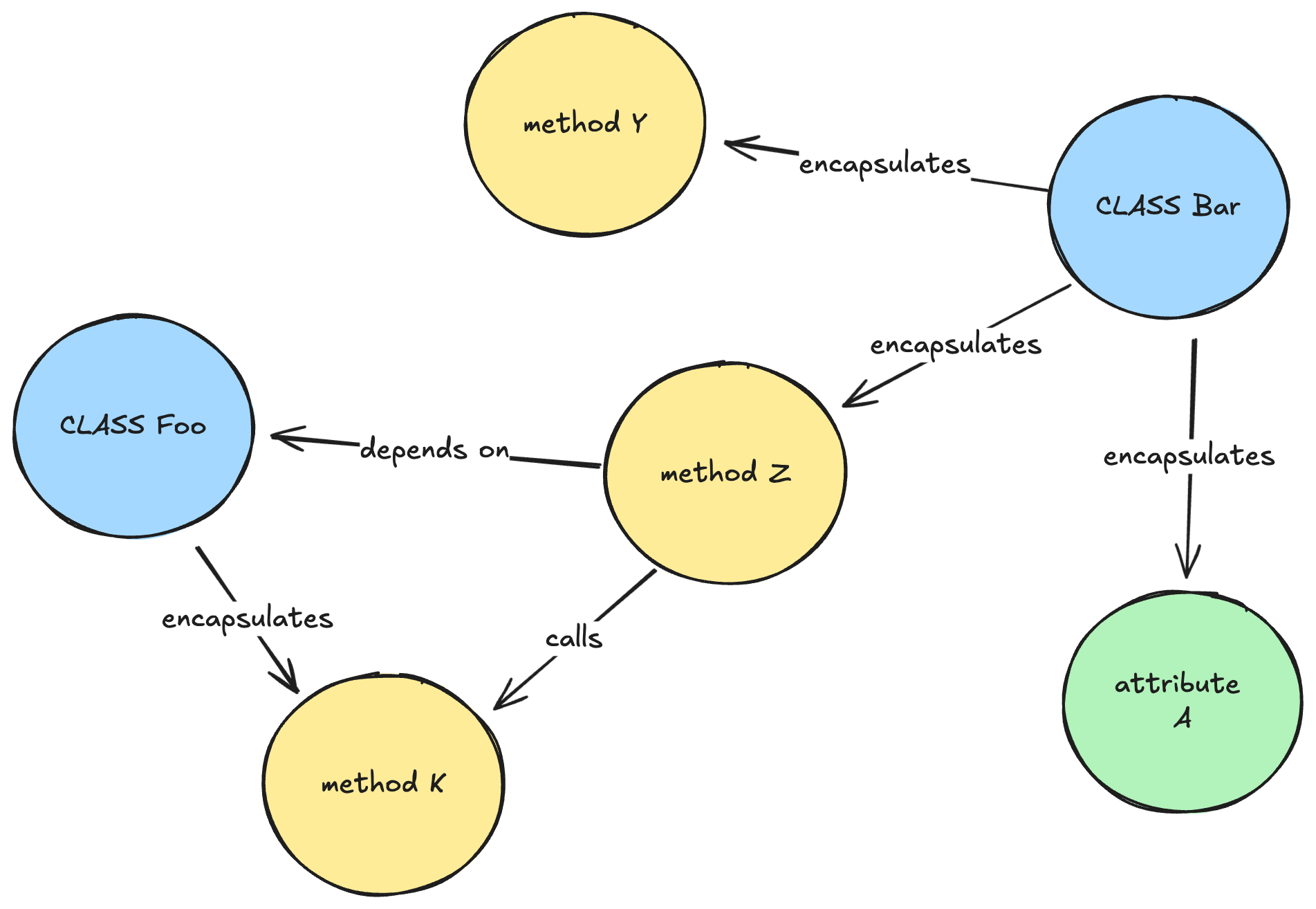

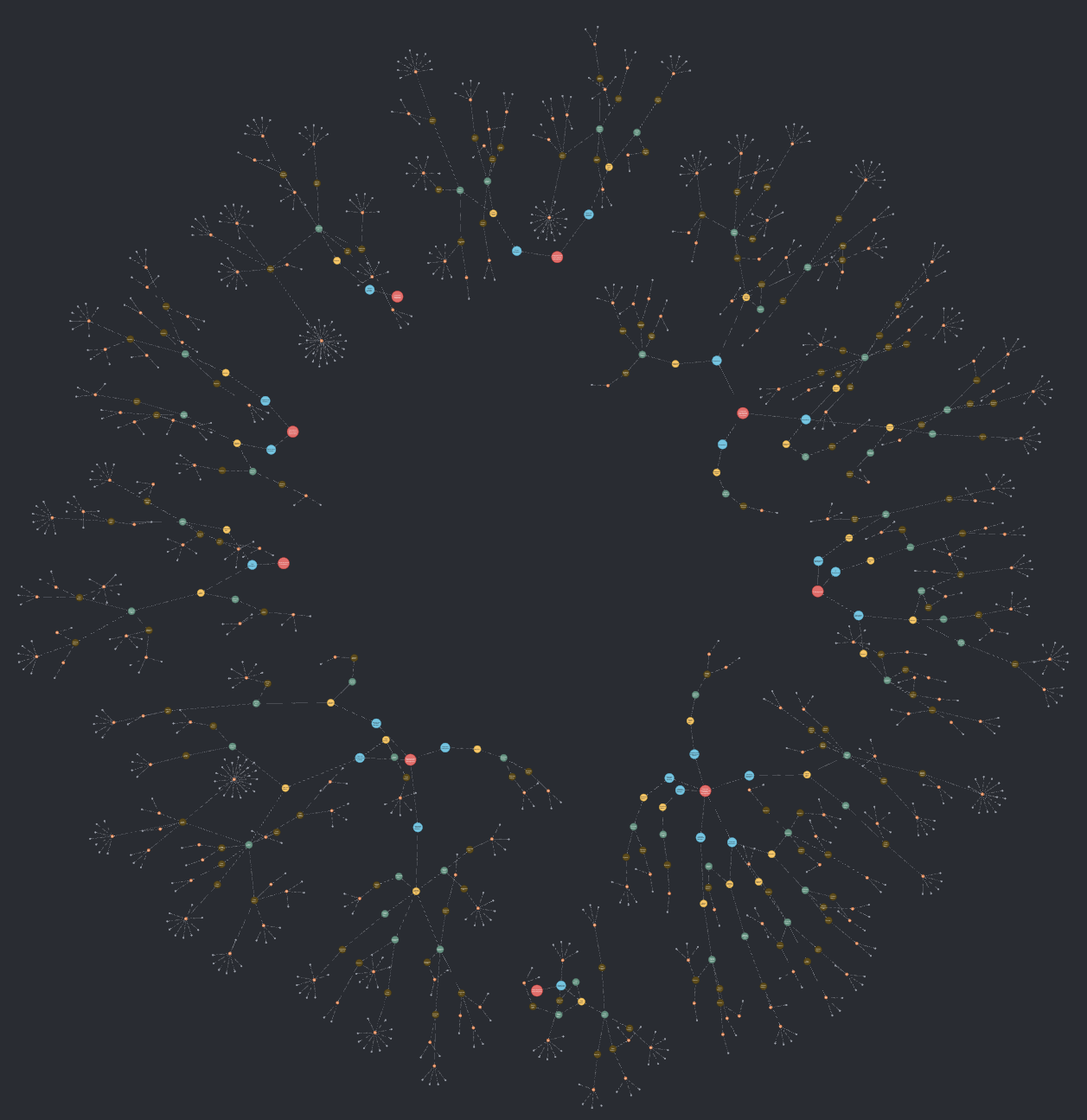

Figure 3: A simplified representation of what a knowledge graph might look like for a Java codebase.
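To make the ingestion idea more concrete, here is a minimal sketch, not CodeConcise itself. It assumes Python's built-in `ast` module as a stand-in for a language-specific parser and plain dictionaries as a stand-in for the graph database; the `SOURCE` snippet, the `ingest` function, and the `CALLS` edge label are all hypothetical names chosen for illustration.

```python
import ast
from collections import defaultdict

# Hypothetical source file to ingest.
SOURCE = '''
def load(path):
    return open(path).read()

def validate(record):
    return bool(record)

def process(path):
    data = load(path)
    if validate(data):
        return data.upper()
    return None
'''

def ingest(source: str):
    """Parse source into an AST and return (nodes, edges) of a small code graph."""
    tree = ast.parse(source)
    # One graph node per function definition, keyed by function name.
    nodes = {
        fn.name: {"kind": "function", "lineno": fn.lineno}
        for fn in ast.walk(tree)
        if isinstance(fn, ast.FunctionDef)
    }
    # A CALLS edge records that control transfers from the caller to the callee.
    edges = defaultdict(set)
    for fn in ast.walk(tree):
        if not isinstance(fn, ast.FunctionDef):
            continue
        for call in ast.walk(fn):
            is_plain_call = isinstance(call, ast.Call) and isinstance(call.func, ast.Name)
            # Only keep calls to functions defined in the ingested code.
            if is_plain_call and call.func.id in nodes:
                edges[fn.name].add(("CALLS", call.func.id))
    return nodes, dict(edges)

nodes, edges = ingest(SOURCE)
for caller, outgoing in sorted(edges.items()):
    for label, callee in sorted(outgoing):
        print(f"{caller} -[{label}]-> {callee}")
```

Because the step is purely structural, running it twice on the same source gives the same graph, which is the sense in which the ingestion pipeline is deterministic. A finer-grained version could attach edges to individual branches rather than whole functions.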

Subsequently, a comprehension pipeline traverses the graph using multiple algorithms, such as Depth-first Search with backtracking in post-order traversal, to enrich the graph with LLM-generated explanations at various depths (e.g. methods, classes, packages). While some approaches at this stage are common across legacy tech stacks, we have also engineered prompts in our comprehension pipeline tailored to specific languages or frameworks. As we began using CodeConcise with real, production client code, we recognised the need to keep the comprehension pipeline extensible. This ensures we can extract the knowledge most valuable to our users, considering their specific domain context. For example, at one client, we discovered that a query to a specific database table implemented in code would be better understood by Business Analysts if described using our client’s business terminology. This is particularly relevant when there is not a Ubiquitous Language shared between technical and business teams. While the (enriched) knowledge graph is the main product of the comprehension pipeline, it is not the only valuable one. Some enrichments produced during the pipeline, such as automatically generated documentation about the system, are valuable on their own. When provided directly to users, these enrichments can complement or fill gaps in existing systems documentation, if any exists.

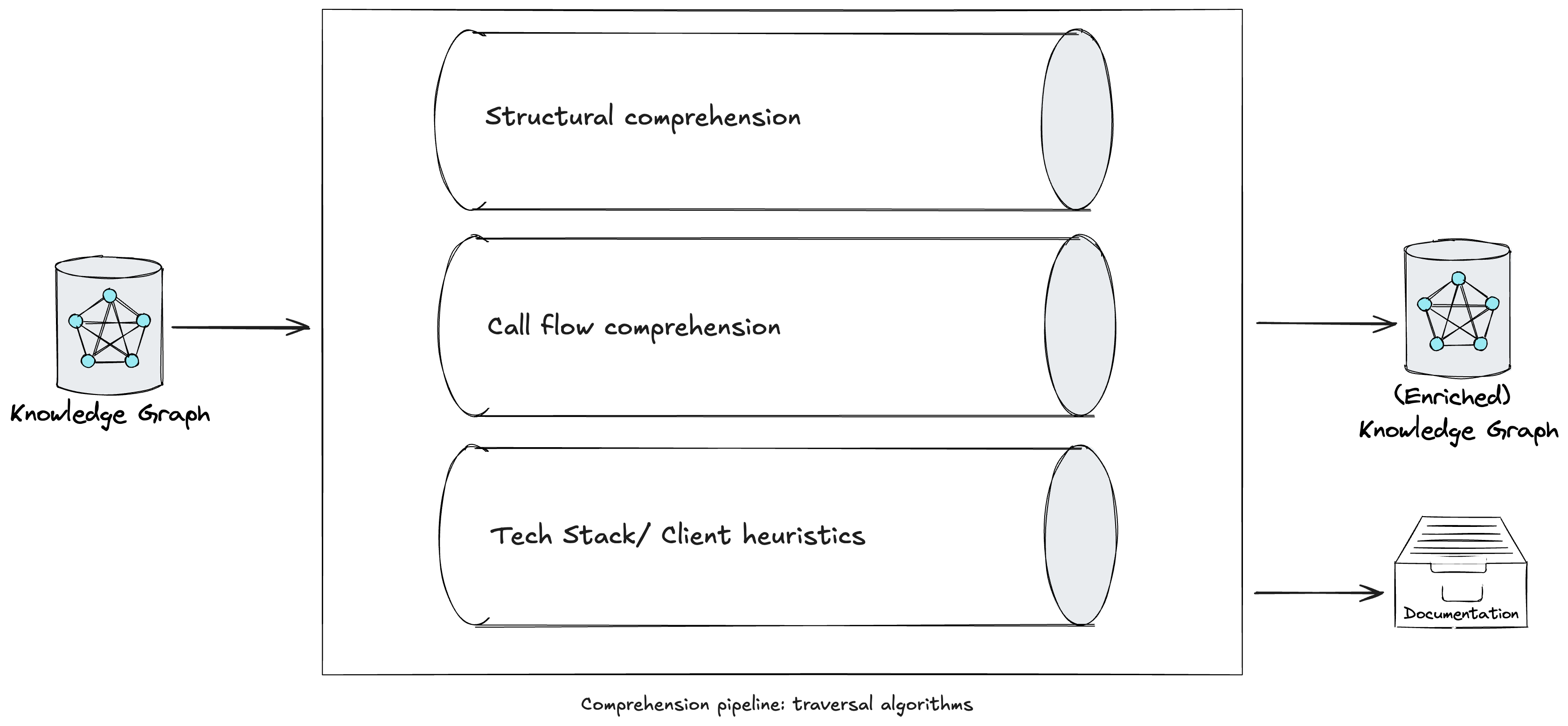

Figure 4: A comprehension pipeline in CodeConcise.
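The post-order traversal mentioned above can be sketched as follows. This is an illustration under stated assumptions, not the CodeConcise implementation: the `CHILDREN` containment graph is hypothetical and assumed to be acyclic, and `stub_explain` stands in for a real LLM call. The point is the ordering: a node is explained only after all of its children, so each prompt can include the explanations already produced one level down.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical containment hierarchy: package -> classes -> methods.
CHILDREN: Dict[str, List[str]] = {
    "pkg.cards": ["CardService", "CardRepository"],
    "CardService": ["CardService.view", "CardService.update"],
    "CardRepository": ["CardRepository.find"],
}

def stub_explain(node: str, child_explanations: List[str]) -> str:
    """Stand-in for an LLM call; a real one would receive code plus child summaries."""
    if child_explanations:
        return f"{node}: combines {len(child_explanations)} child explanation(s)"
    return f"{node}: explained from its own source"

def enrich(
    node: str,
    children: Dict[str, List[str]],
    explain: Callable[[str, List[str]], str],
    explanations: Optional[Dict[str, str]] = None,
) -> Dict[str, str]:
    """Depth-first, post-order enrichment of an acyclic graph."""
    if explanations is None:
        explanations = {}
    if node in explanations:  # already explained via another path
        return explanations
    child_explanations = []
    for child in children.get(node, []):
        enrich(child, children, explain, explanations)
        child_explanations.append(explanations[child])
    # Children are done; now explain this node (the post-order step).
    explanations[node] = explain(node, child_explanations)
    return explanations

explanations = enrich("pkg.cards", CHILDREN, stub_explain)
for text in explanations.values():
    print(text)
```

Swapping `stub_explain` for language- or framework-specific prompt functions is one way the extensibility described above could be realised.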

Neo4j, our graph database of selection, holds the (enriched) Data Graph.

This DBMS options vector search capabilities, enabling us to combine the

Data Graph into the frontend software implementing RAG. This strategy

supplies the LLM with a a lot richer context by leveraging the graph’s construction,

permitting it to traverse neighboring nodes and entry LLM-generated explanations

at varied ranges of abstraction. In different phrases, the retrieval element of RAG

pulls nodes related to the consumer’s immediate, whereas the LLM additional traverses the

graph to collect extra data from their neighboring nodes. For example,

when on the lookout for data related to a question about “how does authorization

work when viewing card particulars?” the index could solely present again outcomes that

explicitly cope with validating consumer roles, and the direct code that does so.

Nonetheless, with each behavioral and structural edges within the graph, we are able to additionally

embrace related data in known as strategies, the encircling package deal of code,

and within the information buildings which have been handed into the code when offering

context to the LLM, thus upsetting a greater reply. The next is an instance

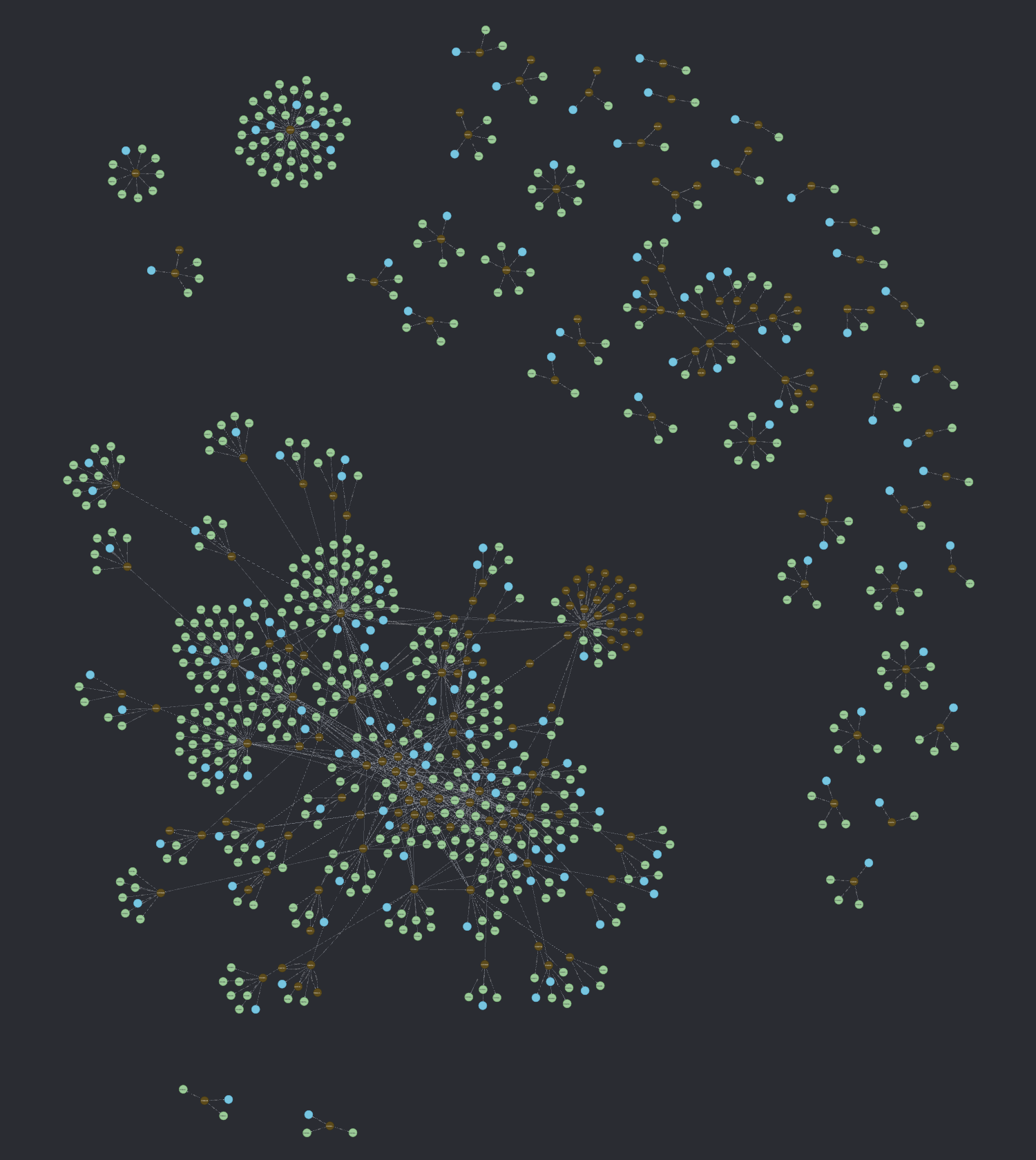

of an enriched data graph for AWS Card

Demo,

the place blue and inexperienced nodes are the outputs of the enrichments executed within the

comprehension pipeline.

Determine 5: An (enriched) data graph for AWS Card Demo.
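The retrieve-then-traverse pattern described above can be sketched in a few lines. Everything here is an assumption made for illustration: the node names and descriptions are invented, word overlap stands in for vector search, and the neighbor expansion is done in plain code rather than by the LLM or a Neo4j query.

```python
import re
from typing import Dict, List, Set

# Hypothetical enriched nodes: name -> LLM-generated explanation.
NODES: Dict[str, str] = {
    "AuthCheck": "validates user roles before viewing card details",
    "CardViewScreen": "renders the card details screen",
    "CardRecord": "data structure holding card number, holder and status",
    "BatchInterest": "nightly batch job that computes interest",
}
# Hypothetical behavioral/structural edges: source -> targets.
EDGES: Dict[str, List[str]] = {
    "CardViewScreen": ["AuthCheck", "CardRecord"],
    "AuthCheck": ["CardRecord"],
}

def tokens(text: str) -> Set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))

def retrieve(query: str, top_k: int = 1) -> List[str]:
    """Stand-in for vector search: rank nodes by word overlap with the query."""
    q = tokens(query)
    ranked = sorted(NODES, key=lambda n: len(q & tokens(NODES[n])), reverse=True)
    return ranked[:top_k]

def expand(seeds: List[str], hops: int = 1) -> List[str]:
    """Add neighbors of the seed nodes, following edges in both directions."""
    selected = list(seeds)
    frontier = list(seeds)
    for _ in range(hops):
        next_frontier = []
        for node in frontier:
            outgoing = EDGES.get(node, [])
            incoming = [src for src, targets in EDGES.items() if node in targets]
            for neighbor in outgoing + incoming:
                if neighbor not in selected:
                    selected.append(neighbor)
                    next_frontier.append(neighbor)
        frontier = next_frontier
    return selected

query = "how does authorization work when viewing card details?"
seeds = retrieve(query)
context = expand(seeds)
print("seeds:", seeds)
print("context:", context)
```

The seed alone would give the LLM only the role check; the expansion also brings in the screen that calls it and the record it reads, while unrelated nodes stay out of the prompt.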

The relevance of the context provided by further traversing the graph ultimately depends on the criteria used to construct and enrich the graph in the first place. There is no one-size-fits-all solution for this; it will depend on the specific context, the insights one aims to extract from the code, and, ultimately, on the principles and approaches that the development teams followed when constructing the solution’s codebase. For instance, heavy use of inheritance structures might require more emphasis on INHERITS_FROM edges, whereas a codebase that favors composition might call for more emphasis on COMPOSED_OF edges.

For further details on the CodeConcise solution model, and insights into the progressive learning we had through the three iterations of the accelerator, we will soon be publishing another article: Code comprehension experiments with LLMs.

In the next sections, we delve deeper into specific modernization challenges that, if solved using GenAI, could significantly impact the cost, value, and time for modernization, factors that often discourage us from making the decision to modernize now. In some cases, we have begun exploring internally how GenAI might address challenges we have not yet had the opportunity to experiment with alongside our clients. Where this is the case, our writing is more speculative, and we have highlighted these instances accordingly.

Reverse engineering: drawing out low-level requirements

When undertaking a legacy modernization journey and following a path like Rewrite or Replace, we have learned that, in order to draw up a comprehensive list of requirements for our target system, we need to examine the source code of the legacy system and perform reverse engineering. These requirements will guide your forward engineering teams. Not all of them will necessarily be incorporated into the target system, especially for systems developed over many years, as some requirements may no longer be relevant in today’s business and market context. Nonetheless, it is crucial to understand existing behavior to make informed decisions about what to retain, discard, and introduce in your new system.

The process of reverse engineering a legacy codebase can be time consuming and requires expertise from both technical and business people. Let us consider below some of the activities we perform to gain a comprehensive low-level understanding of the requirements, including how GenAI can help enhance the process.

Manual code reviews

Manual code reviews encompass both static and dynamic code analysis. Static analysis involves reviewing the source code directly, sometimes aided by specific tools for a given technical stack. These aim to extract insights such as dependency diagrams, CRUD (Create Read Update Delete) reports for the persistence layer, and low-level program flowcharts. Dynamic code analysis, on the other hand, focuses on the runtime behavior of the code. It is particularly useful when a section of the code can be executed in a controlled environment to observe its behavior. Analyzing logs produced during runtime can also provide valuable insights into the system’s behavior and its components. GenAI can significantly enhance the understanding and explanation of code through code reviews, especially for engineers unfamiliar with a particular tech stack, which is often the case with legacy systems. We believe this capability is invaluable to engineering teams, as it reduces the often inevitable dependency on a limited number of experts in a specific stack. At one client, we have leveraged CodeConcise, utilizing an LLM to extract low-level requirements from the code. We have extended the comprehension pipeline to produce static reports containing the information Business Analysts (BAs) needed to effectively derive requirements from the code, demonstrating how GenAI can empower non-technical people to be involved in this specific use case.

Abstracted program flowcharts

Low-level program flowcharts can obscure the overall intent of the code and overwhelm BAs with excessive technical details. Therefore, collaboration between reverse engineers and Subject Matter Experts (SMEs) is crucial. This collaboration aims to create abstracted versions of program flowcharts that preserve the essential flows and intentions of the code. These visual artifacts aid BAs in harvesting requirements for forward engineering. We have learnt with our client that we could employ GenAI to produce abstract flowcharts for each module in the system. While it may be cheaper to manually produce an abstract flowchart at a system level, doing so for each module (~10,000 lines of code, with a total of 1500 modules) would be very inefficient. With GenAI, we were able to provide BAs with visual abstractions that revealed the intentions of the code, while removing most of the technical jargon.

SME validation

SMEs are consulted at multiple stages during the reverse engineering process by both developers and BAs. Their combined technical and business expertise is used to validate the understanding of specific parts of the system and the artifacts produced during the process, as well as to clarify any outstanding queries. This expertise, developed over many years, makes them a scarce resource within organizations. Often, they are stretched too thin across multiple teams just to “keep the lights on”. This presents an opportunity for GenAI to reduce dependencies on SMEs. At our client, we experimented with the chatbot featured in CodeConcise, which allows BAs to clarify uncertainties or request additional information. This chatbot, as previously described, leverages LLM and Knowledge Graph technologies to provide answers similar to those an SME would provide, helping to mitigate the time constraints BAs face when working with them.

Thoughtworks worked with the client mentioned earlier to explore ways to accelerate the reverse engineering of a large legacy codebase written in COBOL/IDMS. To achieve this, we extended CodeConcise to support the client’s tech stack and developed a proof of concept (PoC) utilizing the accelerator in the manner described above. Before the PoC, reverse engineering 10,000 lines of code typically took 6 weeks (2 FTEs working for 4 weeks, plus wait time and an SME review). At the end of the PoC, we estimated that our solution could reduce this by two-thirds, from 6 weeks to 2 weeks for a module. This translates to a potential saving of 240 FTE years for the entire mainframe modernization program.
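The article does not spell out how the 240 FTE-year figure was derived. The sketch below shows one reading that happens to reproduce it, using the figures quoted above (1500 modules, 2 FTEs, 6 weeks reduced to 2). The 50 working weeks per year and the choice to apply both FTEs to every week saved are our assumptions, not statements from the source, so treat this as a plausibility check rather than the authors' calculation.

```python
# Figures quoted in the text.
MODULES = 1500            # modules of roughly 10,000 lines each
FTES_PER_MODULE = 2       # people working on one module
WEEKS_BEFORE = 6          # elapsed weeks per module before the PoC
WEEKS_AFTER = 2           # estimated elapsed weeks per module with the accelerator

# Assumption (not from the source): working weeks in one FTE year.
WORKING_WEEKS_PER_YEAR = 50

weeks_saved_per_module = WEEKS_BEFORE - WEEKS_AFTER
fte_weeks_saved = MODULES * FTES_PER_MODULE * weeks_saved_per_module
fte_years_saved = fte_weeks_saved / WORKING_WEEKS_PER_YEAR

print(f"FTE weeks saved: {fte_weeks_saved}")
print(f"FTE years saved: {fte_years_saved:.0f}")
```

Under different assumptions (for example, counting only the 4 weeks of hands-on effort per module) the result would differ, which is why the inputs are kept as named constants.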

High-level, abstract explanation of a system

We have experienced that LLMs can help us understand low-level requirements more quickly. The next question is whether they can also help us with high-level requirements. At this level, there is much information to take in and it is tough to digest it all. To tackle this, we create mental models which serve as abstractions that provide a conceptual, manageable, and comprehensible view of the applications we are looking into. Usually, these models exist only in people’s heads. Our approach involves working closely with experts, both technical and business focussed, early on in the project. We hold workshops, such as Event Storming from Domain-driven Design, to extract SMEs’ mental models and store them on digital boards for visibility, continuous evolution, and collaboration. These models contain a domain language understood by both business and technical people, fostering a shared understanding of a complex domain among all team members. At a higher level of abstraction, these models may also describe integrations with external systems, which can be either internal or external to the organization.

It is becoming evident that access to, and availability of, SMEs is essential for understanding complex legacy systems at an abstract level in a cost-effective manner. Many of the constraints previously highlighted are therefore applicable to this modernization challenge.

In the era of GenAI, especially in the modernization space, we are seeing good outputs from LLMs when they are prompted to explain a small subset of legacy code. Now, we want to explore whether LLMs can be as useful in explaining a system at a higher level of abstraction.

Our accelerator, CodeConcise, builds upon Code as Data techniques by utilizing the graph representation of a legacy system codebase to produce LLM-generated explanations of code and concepts at different levels of abstraction:

- Graph traversal strategy: We leverage the entire codebase’s representation as a graph and use traversal algorithms to enrich the graph with LLM-generated explanations at various depths.
- Contextual information: Beyond processing the code and storing it in the graph, we are exploring ways to process any available system documentation, as it often provides valuable insights into business terminology, processes, and rules, assuming it is of good quality. By connecting this contextual documentation to code nodes on the graph, our hypothesis is that we can further enhance the context available to LLMs during both upfront code explanation and when retrieving information in response to user queries.

Ultimately, the goal is to enhance CodeConcise’s understanding of the code with more abstract concepts, enabling its chatbot interface to answer questions that typically require an SME, keeping in mind that such questions might not be directly answerable by examining the code alone.

At Thoughtworks, we are observing positive results in both traversing the graph and generating LLM explanations at various levels of code abstraction. We have analyzed an open-source COBOL repository, AWS Card Demo, and successfully asked high-level questions such as detailing the system features and user interactions. On this occasion, the codebase included documentation, which provided additional contextual information for the LLM. This enabled the LLM to generate higher-quality answers to our questions. Additionally, our GenAI-powered team assistant, Haiven, has demonstrated at multiple clients how contextual information about a system can enable an LLM to provide answers tailored to the specific client context.

Discovering a capability map of a system

One of the first things we do when beginning a modernization journey is catalog existing technology, processes, and the people who support them. Within this process, we also define the scope of what will be modernized. By assembling and agreeing on these elements, we can build a strong business case for the change, develop the technology and business roadmaps, and consider the organizational implications.

Without having this at hand, there is no way to determine what needs to be included, what the plan to achieve it is, what incremental steps to take, and when we are done.

Before GenAI, our teams used a number of techniques to build this understanding when it was not already present. These techniques range from Event Storming and Process Mapping through to “following the data” through the system, and even targeted code reviews for particularly complex subdomains. By combining these approaches, we can assemble a capability map of our clients’ landscapes.

While this may seem like a large amount of manual effort, these can be some of the most valuable activities: they not only build a plan for the future delivery, but the thinking and collaboration that goes into making it ensures alignment of the involved stakeholders, especially around what is going to be included or excluded from the modernization scope. Also, we have learnt that capability maps are invaluable when we take a capability-driven approach to modernization. This helps modernize the legacy system incrementally by gradually delivering capabilities in the target system, in addition to designing an architecture where concerns are cleanly separated.

GenAI changes this picture a lot.

One of the most powerful capabilities that GenAI brings is the ability to summarize large volumes of text and other media. We can use this capability across existing documentation that may be present regarding technology or processes to extract, if not the end knowledge, then at least a starting point for further conversations. There are a number of techniques that are being actively developed and released in this area. In particular, we believe that GraphRAG, which was recently released by Microsoft, could be used to extract a level of knowledge from these documents through Graph Algorithm analysis of the body of text.

We have also been trialing GenAI over the top of the knowledge graph that we build out of the legacy code, as mentioned earlier, by asking what key capabilities modules have and then clustering and abstracting these through hierarchical summarization. This then serves as a map of capabilities, expressed succinctly at both a very high level and a detailed level, where each capability is linked to the source code modules where it is implemented. This is then used to scope and plan for the modernization in a faster manner. The following is an example of a capability map for a system, along with the source code modules (small grey nodes) they are implemented in.

However, we have learnt not to view this completely LLM-generated capability map as mutually exclusive from the traditional methods of creating capability maps described earlier. These traditional approaches are valuable not only for aligning stakeholders on the scope of modernization, but also because, when a capability already exists, it can be used to cluster the source code based on the capabilities implemented. This approach produces capability maps that resonate better with SMEs by using the organization’s Ubiquitous language. Additionally, comparing both capability maps might be a valuable exercise, surely one we look forward to experimenting with, as each might offer insights the other does not.
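The idea of labelling modules with capabilities and then abstracting those labels into a hierarchy can be sketched as below. This is a toy illustration under assumptions: the module names and capability labels are invented, and `parent_of` is a trivial rule standing in for the LLM summarization step that would group related capabilities under a broader one.

```python
from collections import defaultdict
from typing import Dict, List

# Hypothetical output of asking an LLM "what key capability does this module have?"
MODULE_CAPABILITIES: Dict[str, str] = {
    "ACCTVIEW": "View account",
    "ACCTUPDT": "Update account",
    "CARDLIST": "List card",
    "CARDUPDT": "Update card",
    "TRANPOST": "Post transaction",
}

def parent_of(capability: str) -> str:
    """Stand-in for an LLM abstraction step: group by the business object named last."""
    business_object = capability.split()[-1]
    return f"{business_object.capitalize()} management"

def build_capability_map(module_capabilities: Dict[str, str]) -> Dict[str, Dict[str, List[str]]]:
    """Return {high-level capability: {detailed capability: [modules]}}."""
    tree = defaultdict(lambda: defaultdict(list))
    for module, capability in module_capabilities.items():
        tree[parent_of(capability)][capability].append(module)
    return {parent: dict(children) for parent, children in tree.items()}

capability_map = build_capability_map(MODULE_CAPABILITIES)
for parent, children in capability_map.items():
    print(parent)
    for capability, modules in children.items():
        print(f"  {capability}: {', '.join(modules)}")
```

Because every leaf keeps its list of modules, the same structure can be read at a very high level for scoping or drilled into for planning work on specific source code.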

Discovering unused / dead / duplicate code

Another part of gathering information for your modernization efforts is understanding, within your scope of work, “what is still being used at all”, or “where have we got multiple instances of the same capability”.

Currently this can be addressed quite effectively by combining two approaches: static and dynamic analysis. Static analysis can find unused method calls and statements within certain scopes of interrogation, for instance, finding unused methods in a Java class, or finding unreachable paragraphs in COBOL. However, it is unable to determine whether whole API endpoints or batch jobs are used or not.

This is where we use dynamic analysis, which leverages system observability and other runtime information to determine if these functions are still in use, or can be dropped from our modernization backlog.
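As a small illustration of the dynamic-analysis check described above, the sketch below compares declared endpoints against what actually shows up in runtime data. The endpoint names and the log line format are hypothetical; a real system would query its observability or APM tooling rather than parse strings.

```python
from collections import Counter
from typing import Iterable, List

# Hypothetical endpoints declared in the codebase.
DECLARED_ENDPOINTS = [
    "/cards/view",
    "/cards/update",
    "/accounts/view",
    "/reports/legacy-export",
]

# Hypothetical access log lines: "<timestamp> <method> <path> <status>".
ACCESS_LOG = [
    "2024-05-01T10:00:00Z GET /cards/view 200",
    "2024-05-01T10:00:05Z POST /cards/update 200",
    "2024-05-01T10:01:10Z GET /cards/view 200",
    "2024-05-01T10:02:30Z GET /accounts/view 403",
]

def usage_counts(lines: Iterable[str]) -> Counter:
    """Count requests per path, skipping lines that do not match the expected shape."""
    counts: Counter = Counter()
    for line in lines:
        parts = line.split()
        if len(parts) >= 3:
            counts[parts[2]] += 1
    return counts

def unused_endpoints(declared: List[str], counts: Counter, min_hits: int = 1) -> List[str]:
    """Endpoints seen fewer than min_hits times in the observation window."""
    return [endpoint for endpoint in declared if counts[endpoint] < min_hits]

counts = usage_counts(ACCESS_LOG)
candidates = unused_endpoints(DECLARED_ENDPOINTS, counts)
print("candidates to drop from the backlog:", candidates)
```

An endpoint with no hits is only a candidate: the observation window has to be long enough to cover rare jobs such as year-end processing before anything is dropped.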

When looking to find duplicate technical capabilities, static analysis is the most commonly used tool as it can do chunk-by-chunk text similarity checks. However, there are major shortcomings when applied to even a modest technology estate: we can only find code similarities in the same language.

We speculate that by leveraging the results of our capability extraction approach, we can use these technology-agnostic descriptions of what large and small abstractions of the code are doing to perform an estate-wide analysis of duplication, which will take our future architecture and roadmap planning to the next level.
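A speculative sketch of that estate-wide comparison follows. It assumes each code unit already has a plain-language description (for example, one produced by a comprehension step), and it uses Jaccard similarity over word sets as a simple stand-in for whatever semantic comparison, such as embeddings, a real implementation would choose. The unit names, descriptions, stopword list, and threshold are all hypothetical.

```python
import re
from itertools import combinations
from typing import Dict, List, Set, Tuple

Unit = Tuple[str, str]  # (language, unit name)

# Hypothetical technology-agnostic descriptions of code units across the estate.
DESCRIPTIONS: Dict[Unit, str] = {
    ("cobol", "CUSTADDR"): "validates a customer postal address and normalises the postcode",
    ("java", "AddressValidator"): "validates the postal address of a customer and normalises its postcode",
    ("java", "InterestCalculator"): "calculates monthly interest on an account balance",
}

STOPWORDS = {"a", "an", "the", "of", "and", "its", "on"}

def terms(text: str) -> Set[str]:
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}

def jaccard(a: Set[str], b: Set[str]) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def likely_duplicates(
    descriptions: Dict[Unit, str], threshold: float = 0.6
) -> List[Tuple[Unit, Unit, float]]:
    """Pairs of units whose descriptions overlap at or above the threshold."""
    pairs = []
    for (unit_a, text_a), (unit_b, text_b) in combinations(descriptions.items(), 2):
        score = jaccard(terms(text_a), terms(text_b))
        if score >= threshold:
            pairs.append((unit_a, unit_b, round(score, 2)))
    return pairs

duplicates = likely_duplicates(DESCRIPTIONS)
for unit_a, unit_b, score in duplicates:
    print(f"{unit_a} ~ {unit_b} (similarity {score})")
```

The comparison never looks at the source text, which is what lets a COBOL program and a Java class be flagged as candidates for the same capability.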

When it comes to unused code, however, we see very little use in applying GenAI to the problem. Static analysis tools in the industry for finding dead code are very mature, leverage the structured nature of code, and are already at developers’ fingertips, like IntelliJ or Sonar. Dynamic analysis from APM tools is so powerful there is little that tools like GenAI can add to the extraction of information itself.

On the other hand, these two complex approaches can yield a vast amount of data to understand, interrogate, and derive insight from. This could be one area where GenAI could provide a minor acceleration for discovery of little-used code and technology.

Similar to having GenAI refer to large reams of product documentation or specifications, we can leverage its knowledge of the static and dynamic tools to help us use them in the right way, for instance by suggesting potential queries that can be run over observability stacks. NewRelic, for instance, claims to have integrated LLMs into its solutions to accelerate onboarding and error resolution; this could be turned to a modernization advantage too.

Idiomatic translation of tech paradigm

Translation from one programming language to another is not something new. Most of the tools that do this have applied static analysis techniques, using Abstract Syntax Trees (ASTs) as intermediaries. Although these techniques and tools have existed for a long time, results are often poor when judged through the lens of “would someone have written it like this if they had started authoring it today”.

Typically the produced code suffers from:

Poor overall code quality

Usually, the code these tools produce is syntactically correct, but leaves a lot to be desired regarding quality. A lot of this can be attributed to the algorithmic translation approach that is used.

Non-idiomatic code

Typically, the code produced does not fit idiomatic paradigms of the target technology stack.

Poor naming conventions

Naming is as good or bad as it was in the source language/tech stack, and even when naming is good in the older code, it does not translate well to newer code. Imagine automatically naming classes/objects/methods when translating procedural code that transfers files to an OO paradigm!

Isolation from open-source libraries/frameworks

- Modern applications typically use many open-source libraries and frameworks (as opposed to older languages), and generated code most of the time does not seamlessly do the same
- This is even more complicated in enterprise settings when organizations tend to have internal libraries (that tools will not be familiar with)

Lack of precision in data

Even with primitive types, languages have different precisions, which is likely to lead to a loss in precision.

Loss in relevance of source code history

Many times when trying to understand code we look at how that code evolved to that state with git log [or equivalents for other SCMs], but now that history is not useful for the same purpose.
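The precision issue listed above is easy to reproduce. The sketch below assumes a monetary field that a COBOL program would hold as exact fixed-point decimal (for example a `PIC S9(7)V99` field) and compares what happens when a translation maps it to a binary floating-point type versus a decimal type. The sample amounts are invented for illustration.

```python
from decimal import Decimal

# Hypothetical amounts as they might be read from a fixed-point decimal field.
amounts = ["0.10", "0.20"]

# Naive translation: map the field to a binary floating-point type.
total_as_float = sum(float(a) for a in amounts)

# Precision-preserving translation: map the field to a decimal type.
total_as_decimal = sum(Decimal(a) for a in amounts)

print("float total matches 0.30:  ", total_as_float == 0.3)
print("decimal total matches 0.30:", total_as_decimal == Decimal("0.30"))
```

The float comparison fails because 0.1 and 0.2 have no exact binary representation, so a behaviour-preserving translation has to choose target types deliberately rather than by default.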

Assuming an organization embarks on this journey, it will soon face lengthy testing and verification cycles to ensure the generated code behaves exactly the same way as before. This becomes even more challenging when little to no safety net was in place initially.

Despite all the drawbacks, code conversion approaches continue to be an option that attracts some organizations because of their allure as potentially the lowest cost/effort solution for leapfrogging from one tech paradigm to the other.

We have also been thinking about this and exploring how GenAI can help improve the code produced/generated. It cannot help with all of those issues, but maybe it can help alleviate at least the first three or four of them.

From an approach perspective, we are trying to apply the principles of Refactoring to this: essentially, to figure out a way we can safely and incrementally make the jump from one tech paradigm to another. This approach has already seen some success; two examples that come to mind:

Conclusion

Today’s landscape has numerous opportunities to leverage GenAI to achieve outcomes that were previously out of reach. In the software industry, GenAI is already playing a significant role in helping people across various roles complete their tasks more efficiently, and this impact is expected to grow. For instance, GenAI has produced promising results in assisting technical engineers with writing code.

Over the past decades, our industry has evolved significantly, developing patterns, best practices, and methodologies that guide us in building modern software. However, one of the biggest challenges we now face is updating the vast amount of code that supports key operations daily. These systems are often large and complex, with multiple layers and patches built over time, making behavior difficult to change. Additionally, there are often only a few experts who fully understand the intricate details of how these systems are implemented and operate. For these reasons, we use an evolutionary approach to legacy displacement, reducing the risks involved in modernizing these systems and generating value early. Despite this, the cost/time/value equation for modernizing large systems is often prohibitive. In this article, we discussed ways GenAI can be harnessed to turn this situation around. We will continue experimenting with applying GenAI to these modernization challenges and share our insights through this article, which we will keep up to date. This will include sharing what has worked, what we believe GenAI could potentially solve, and what, nonetheless, has not succeeded. Additionally, we will extend our accelerator, CodeConcise, with the aim of further innovating within the modernization process to drive greater value for our clients.

Hopefully, this article highlights the great potential of harnessing this new technology, GenAI, to address some of the challenges posed by legacy systems in the industry. While there is no one-size-fits-all solution to these challenges (each context has its own unique nuances), there are often similarities that can guide our efforts. We also hope this article inspires others in the industry to further develop experiments with “GenAI for Modernization” and share their insights with the broader community.