Introduction

In this blog, we share the journey of building a Serverless-optimized Artifact Registry from the ground up. The main goals are to ensure that container image distribution both scales seamlessly under unpredictable, bursty Serverless traffic and remains available under challenging conditions such as major dependency failures.

Containers are the modern cloud-native deployment format, offering isolation, portability, and a rich tooling ecosystem. Databricks internal services have been running as containers since 2017. We deployed a mature, feature-rich open source project as the container registry. It worked well because the services were generally deployed at a controlled pace.

Fast forward to 2021, when Databricks started to launch its Serverless DBSQL and ModelServing products: hundreds of thousands of VMs were expected to be provisioned each day, and each VM would pull 10+ images from the container registry. Unlike other internal services, Serverless image pull traffic is driven by customer usage and can reach a much higher upper bound.

Figure 1 shows one week of production traffic load (e.g. customers launching new data warehouses or MLServing endpoints). The Serverless Dataplane peak traffic is more than 100x that of internal services.

Based on our stress tests, we concluded that the open source container registry couldn’t meet the requirements of Serverless.

Serverless challenges

Figure 2 shows the main challenges of serving Serverless workloads with an open source container registry:

- Hard to keep up with Databricks’ growth:

  - Container image metadata is backed by relational databases, which scale vertically and slowly.

  - At peak traffic, hundreds of registry instances need to be provisioned within a few seconds, which often becomes a bottleneck on the critical path of image pulling.

- Not sufficiently reliable:

  - Request serving is complex in the OSS-based architecture, which introduces more failure modes.

  - If a dependency such as the relational database or cloud object storage goes down, the result is a total regional outage.

- Costly to operate: the OSS registries aren’t performance optimized and tend to have high resource usage (they are CPU intensive). Running them at Databricks’ scale is prohibitively expensive.

What about cloud managed container registries? They’re generally more scalable and offer an availability SLA. However, different cloud provider services have different quotas, limitations, reliability, scalability, and performance characteristics. Databricks operates in multiple clouds, and we found that this heterogeneity across clouds didn’t meet our requirements and was too costly to operate.

Peer-to-peer (P2P) image distribution is another common approach to improving scalability, at a different infrastructure layer. It mainly reduces the load on registry metadata but is still subject to the aforementioned reliability risks. We later also introduced a P2P layer to reduce cloud storage egress throughput. At Databricks, we believe that each layer needs to be optimized to deliver reliability for the entire stack.

Introducing the Artifact Registry

We concluded that it was necessary to build a Serverless-optimized registry to meet the requirements and stay ahead of Databricks’ rapid growth. We therefore built Artifact Registry, a homegrown multi-cloud container registry service. Artifact Registry is designed with the following principles:

- Everything scales horizontally:

  - Removed the relational database (PostgreSQL); instead, the metadata is persisted in cloud object storage (an existing dependency for image manifest and layer storage). Cloud object stores are much more scalable and are well abstracted across clouds.

  - Removed the cache instance (Redis) and replaced it with a simple in-memory cache.

- Scaling up/down in seconds: added extensive caching for image manifest and blob requests to reduce hits on the slow code path (the registry). As a result, only a few instances (provisioned within a few seconds) need to be added instead of hundreds.

- Simple is reliable: consolidated 3 open source microservices (nginx, metadata service, and registry) into a single service, artifact-registry. This removes 2 extra networking hops and improves performance and reliability.

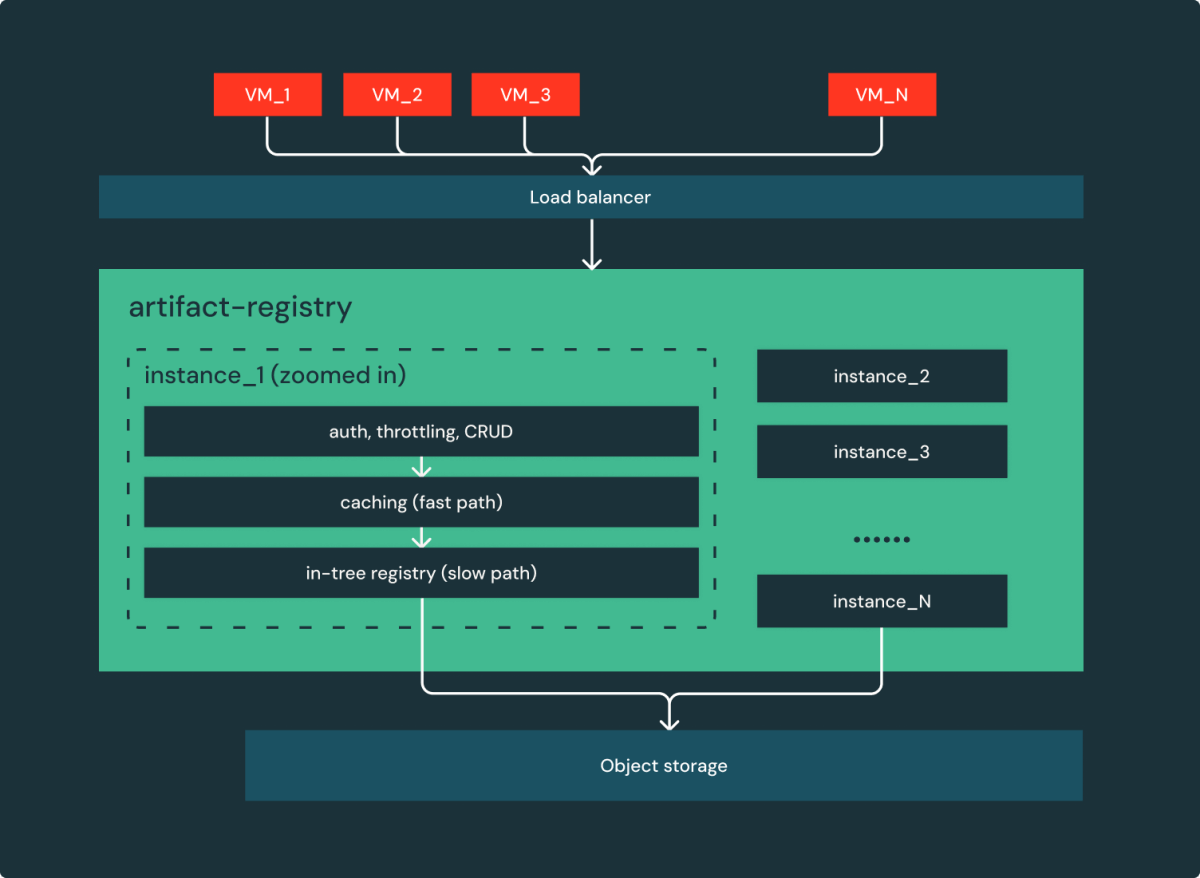

As shown in Figure 3, we essentially transformed the original system of 5 services into a simple web service: a set of stateless instances behind a load balancer serving requests!
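To make the in-memory caching principle concrete, here is a minimal Python sketch of a read-through cache that sits in front of a slow path. It is an illustration only, not Artifact Registry's actual implementation: the class name `ManifestCache`, the LRU-plus-TTL policy, the default sizes, and the `load_from_storage` stand-in are all assumptions made for this example.

```python
import time
from collections import OrderedDict
from typing import Callable


class ManifestCache:
    """Small in-process LRU cache with a TTL (hypothetical, for illustration).

    Not thread-safe; a real service would add locking around get().
    """

    def __init__(self, load: Callable[[str], bytes], max_entries: int = 1024,
                 ttl_seconds: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._load = load              # slow path, e.g. a read from object storage
        self._max_entries = max_entries
        self._ttl = ttl_seconds
        self._clock = clock            # injectable so expiry can be tested
        self._entries: "OrderedDict[str, tuple]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, key: str) -> bytes:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            self._entries.move_to_end(key)     # mark as most recently used
            self.hits += 1
            return entry[1]
        # Miss or expired entry: take the slow path and remember the result.
        self.misses += 1
        value = self._load(key)
        self._entries[key] = (now, value)
        self._entries.move_to_end(key)
        if len(self._entries) > self._max_entries:
            self._entries.popitem(last=False)  # evict the least recently used
        return value


def load_from_storage(key: str) -> bytes:
    # Stand-in for the slow code path (assumed, not a real storage client).
    return b"manifest for " + key.encode()


cache = ManifestCache(load_from_storage, max_entries=2)
cache.get("serverless/dbsql:v1")
cache.get("serverless/dbsql:v1")
print(cache.hits, cache.misses)  # prints "1 1"
```

Because the cache lives inside each stateless instance, there is no remote cache to provision or fail; the tradeoff is that every instance warms its own copy.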

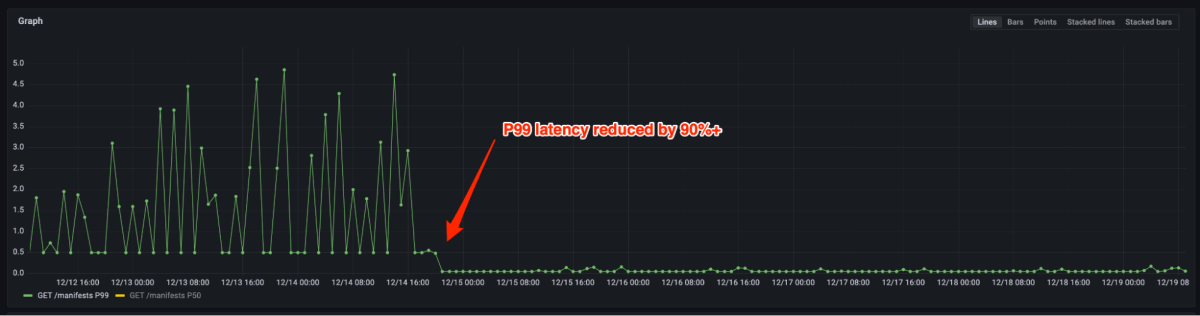

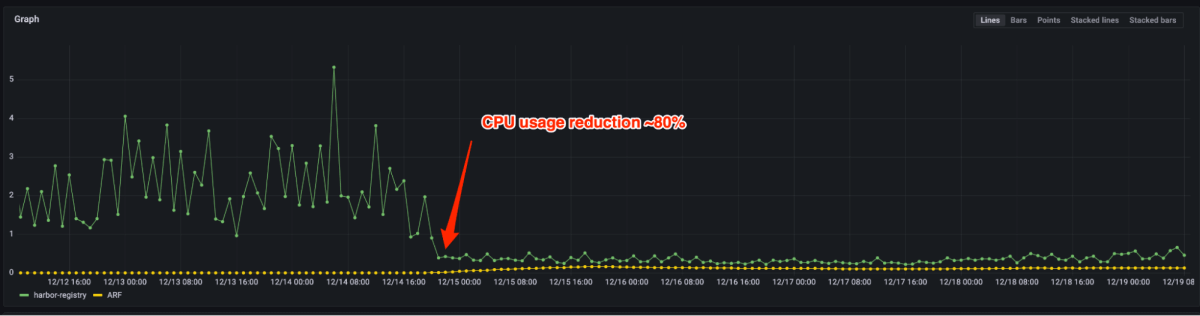

Figures 4 and 5 show that P99 latency decreased by 90%+ and CPU usage decreased by 80% after migrating from the open source registry to Artifact Registry. Now we only need to provision a few instances for the same load, versus hundreds previously. In fact, handling production peak traffic doesn’t require scaling out in most cases. When auto-scaling is triggered, it completes within a few seconds.

The main design decision was to completely replace relational databases with cloud object storage for image metadata. Relational databases are ideal for consistency and rich query capability, but have limitations in scalability and reliability. For example, every image pull request requires authentication/authorization, which was served by PostgreSQL in the open source implementation. Traffic spikes regularly caused performance hiccups. The lookup query used by auth can easily be replaced with a GET operation against a more scalable key/value store. We also made careful tradeoffs between convenience and reliability. For instance, with a relational database it’s easy to aggregate image counts and total sizes grouped by different dimensions. Supporting such features, however, is non-trivial on object storage. In favor of reliability and scalability, we decided that Artifact Registry would not support such stats.
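The idea of replacing an auth lookup query with a key/value GET could look roughly like the Python sketch below. Everything specific here is assumed for illustration: the `ObjectStore` interface, the `InMemoryStore` stand-in for a cloud object store, the `metadata/acl/<repository>.json` key layout, and the `pullers` field are hypothetical and do not describe Artifact Registry's real schema.

```python
import json
from typing import Dict, Optional, Protocol


class ObjectStore(Protocol):
    """Minimal interface assumed for a cloud object store client."""

    def get(self, key: str) -> Optional[bytes]: ...


class InMemoryStore:
    """Local stand-in for object storage so the sketch runs anywhere."""

    def __init__(self) -> None:
        self._objects: Dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> Optional[bytes]:
        return self._objects.get(key)


def acl_key(repository: str) -> str:
    # Hypothetical key layout: one small JSON object per repository.
    return f"metadata/acl/{repository}.json"


def can_pull(store: ObjectStore, repository: str, principal: str) -> bool:
    """Authorize a pull with a single GET instead of a SQL query."""
    raw = store.get(acl_key(repository))
    if raw is None:
        return False  # unknown repository: deny by default
    acl = json.loads(raw)
    return principal in acl.get("pullers", [])


store = InMemoryStore()
store.put(acl_key("serverless/dbsql"),
          json.dumps({"pullers": ["vm-fleet"]}).encode())
print(can_pull(store, "serverless/dbsql", "vm-fleet"))  # prints "True"
print(can_pull(store, "serverless/dbsql", "stranger"))  # prints "False"
```

A point lookup like this maps naturally onto object storage. Aggregations such as image counts grouped by dimension would require listing and scanning many objects, which is the convenience the paragraph above describes giving up.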

Surviving cloud object storage outages

With service reliability significantly improved after eliminating the dependencies on a relational database, remote caching, and internal microservices, there is still one failure mode that occasionally occurs: cloud object storage outages. Cloud object stores are generally very reliable and scalable; however, when they are unavailable (sometimes for hours), they can cause regional outages. Databricks holds a high bar on reliability, aiming to minimize the impact of underlying cloud outages and continue to serve our customers.

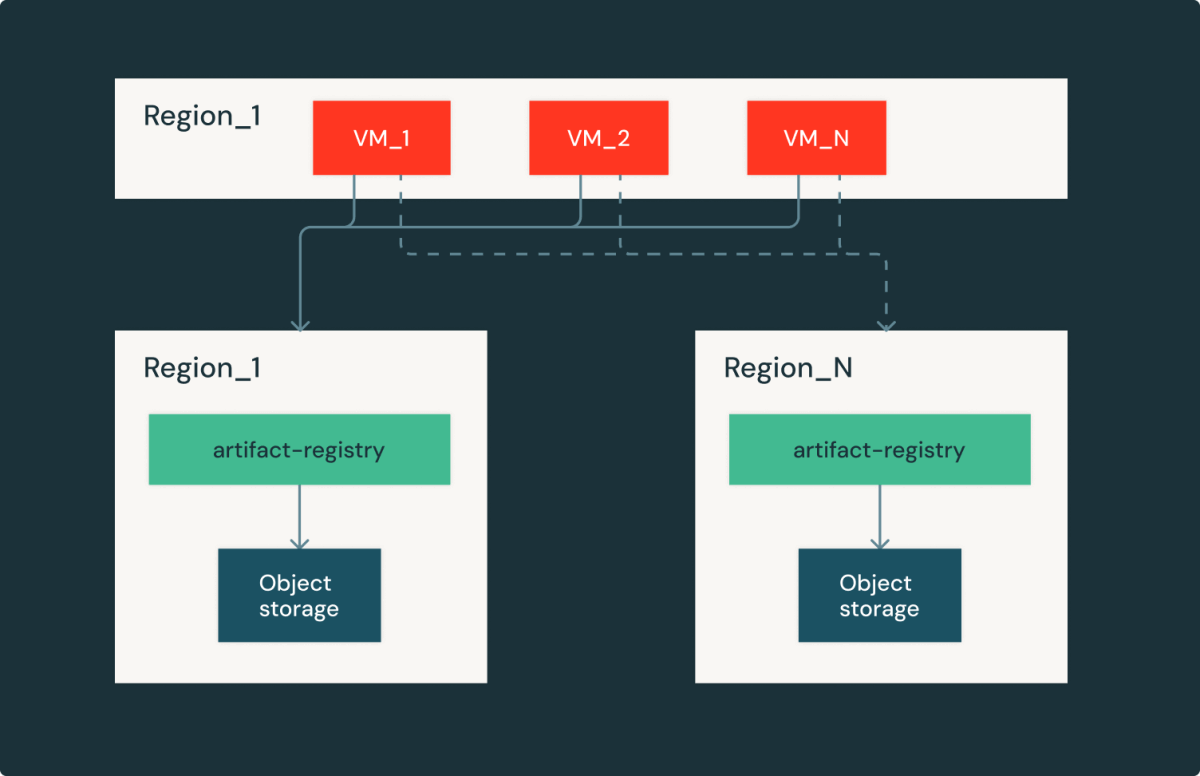

Artifact Registry is a regional service, which means each cloud/region has an identical replica within the region. This setup gives us the ability to fail over to different regions, with a tradeoff in image download latency and egress cost. By carefully managing latency and capacity, we were able to quickly recover from cloud provider outages and continue serving Databricks’ customers.
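As a rough illustration of regional failover, the Python sketch below tries the local region first and falls back to other regions in a preferred order. It is a simplified model under stated assumptions: the `RegionUnavailable` exception, the `pull_with_failover` function, the region names, and the idea of ordering candidates by expected latency are all hypothetical, and the post does not describe how failover is actually triggered or routed.

```python
from typing import Callable, Iterable, List, Tuple


class RegionUnavailable(Exception):
    """Raised by a fetcher when its region cannot serve the request."""


Fetcher = Callable[[str], bytes]


def pull_with_failover(image: str,
                       regions: Iterable[Tuple[str, Fetcher]]) -> Tuple[str, bytes]:
    """Try each region in order and return (region_name, image_bytes).

    `regions` is assumed to be ordered by preference, e.g. the local
    region first, then neighbors by expected latency and egress cost.
    """
    failures: List[str] = []
    for name, fetch in regions:
        try:
            return name, fetch(image)
        except RegionUnavailable as err:
            failures.append(f"{name}: {err}")  # remember why, then move on
    raise RegionUnavailable("all regions failed: " + "; ".join(failures))


def local_region_down(image: str) -> bytes:
    # Simulates an object storage outage in the local region.
    raise RegionUnavailable("object storage outage")


def neighbor_region(image: str) -> bytes:
    return b"layers of " + image.encode()


served_by, data = pull_with_failover(
    "serverless/dbsql:v1",
    [("region-a", local_region_down), ("region-b", neighbor_region)],
)
print(served_by)  # prints "region-b"
```

Pulling from another region costs more in latency and egress, which is why the fallback order and the spare capacity in each region matter.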

Conclusions

In this blog post, we shared our journey of building the Databricks container registry, from serving low-churn internal traffic to customer-facing, bursty Serverless workloads. We purpose-built a Serverless-optimized Artifact Registry. Compared to the open source registry, it delivered a 90% reduction in P99 latency and an 80% reduction in resource usage. In addition, we designed the system to tolerate regional cloud provider outages, further improving reliability. Today, Artifact Registry continues to be a solid foundation that makes reliability, scalability, and efficiency seamless amid Databricks’ rapid Serverless growth.

Acknowledgement

Building reliable and scalable Serverless infrastructure is a team effort from our main contributors: Robert Landlord, Tian Ouyang, Jin Dong, and Siddharth Gupta. The blog is also a team effort; we appreciate the insightful reviews provided by Xinyang Ge and Rohit Jnagal.