Ever since generative AI captured public attention, there has been no shortage of speculation about the future of tech jobs. Might these models displace entire roles, rendering some job categories obsolete? The thought of being replaced by AI can be unsettling. Yet when it comes to software development and testing, generative AI is better suited to be a partner than a threat: an assistant poised to enhance human capabilities rather than replace them.

While generative AI has the potential to increase productivity and quality if used responsibly, the inverse is true if it is used irresponsibly. That responsibility hinges on humans maintaining control, both in directing the AI and in evaluating its outputs. Responsible AI supervision often requires domain expertise in order to recognize errors and hazards in the AI’s output. In skilled hands, AI can be a powerful amplifier; but in the hands of people without sufficient understanding, it can just as easily mislead, potentially resulting in undesirable outcomes.

Generative AI’s Limitations: The Need for Critical Thinking

Generative AI’s ability to swiftly produce code snippets, test cases, and documentation has led many to regard it as an extraordinary tool capable of human feats. Yet despite these apparent displays of “intelligence,” generative AI doesn’t truly think. Instead, it operates on a predictive basis, choosing the next most likely word or action based on patterns in its training data. This approach often leads to “hallucinations,” where the system provides plausible-sounding but inaccurate or misleading output.
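To make the idea of prediction without understanding concrete, here is a deliberately tiny sketch in Python. It is not how production language models are built (those use neural networks over tokens, not word counts); the corpus, function names, and bigram approach are all illustrative assumptions. It only shows the general principle of picking the most likely next word from patterns in training data.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follower_counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follower_counts[current_word][next_word] += 1
    return follower_counts


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical toy "training data" for illustration only.
corpus = "the test passed the test failed the test passed the build failed"
model = train_bigram_model(corpus)

print(predict_next(model, "test"))    # most frequent word seen after "test"
print(predict_next(model, "deploy"))  # a word the model has never seen
```

The toy model answers “passed” after “test” simply because that pairing was most frequent in its data, not because any test actually passed. That gap between a plausible continuation and a verified fact is the same gap a human reviewer has to close when checking AI output.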

Because it is bound by the prompt it is given and the data on which it was trained, generative AI can miss critical details, make incorrect assumptions, and perpetuate existing biases. It also lacks genuine creativity, since it merely recognizes, replicates, and randomizes learned patterns to generate output. Moreover, while it excels at producing human-like text, proficiency in replicating patterns in language is not the same as domain expertise; AI may appear confident while delivering fundamentally flawed recommendations. This risk is magnified by the opaque nature of the models, which makes their internal reasoning difficult to understand and their errors harder to detect.

Ultimately, AI’s limitations underscore the importance of human oversight. Software developers and testers must recognize the technology’s inherent constraints, leveraging it as a helpful assistant rather than a standalone authority. By guiding AI tools with contextualized critical thinking and specialized expertise, and by scrutinizing and correcting their outputs, human software practitioners can harness the benefits of generative AI while mitigating its shortcomings.

Quality Software Requires Human Ingenuity

Although automation can streamline many testing tasks, the broader discipline of software testing is fundamentally anchored in human judgment and expertise. After all, testing is aimed at helping deliver quality software to people. Skilled testers draw on both explicit and tacit knowledge to verify capabilities and track down potential problems. Even when using automation to extend their reach, human testers combine their knowledge, skill, experience, curiosity, and creativity to test their products effectively.

Machines can execute test suites at high speed, but they lack the discernment to design, prioritize, and interpret tests in the context of their potential users or shifting business priorities. Human testers combine insights about the product, the project, and the people involved, balancing technical considerations and business objectives while accounting for regulatory and social implications.

Generative AI doesn’t fundamentally alter the nature of testing. While AI can suggest test ideas and relieve testers of repetitive tasks in ways that other automation can’t, it lacks the contextual awareness and critical thinking necessary to sufficiently evaluate software functionality, safety, security, performance, and user experience. Responsible use of generative AI in testing requires human oversight by testers who direct and check the AI. Since generative AI relies on what it was trained on and how it was prompted, human expertise remains indispensable for applying context, intent, and real-world constraints. When guided properly, generative AI can empower skilled testers to test their products more effectively and efficiently without replacing human ingenuity.

The Symbiotic Relationship Between Humans and AI

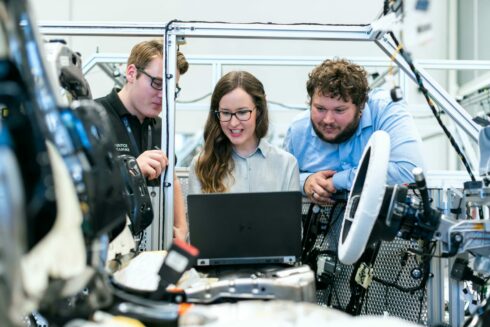

The intersection of AI and human expertise has never been more promising in the world of software testing. By functioning as a supportive collaborator under the direction and correction of a skilled tester, AI can offer suggestions and perform tedious tasks, helping make testing faster, more thorough, and better attuned to people’s needs. A combination of human insight and AI-driven efficiency is the future of software testing.

In this sense, the human plays the part of a musical conductor, interpreting the score (the requirements, both explicit and implicit) and guiding the AI to perform in a way that fits the venue (the software’s context and constraints), all while providing continuous direction and correction. Far from rendering testers obsolete, generative AI encourages us to broaden our skills. In effect, it invites testers to become more adept conductors, orchestrating AI-driven solutions that resonate with their audience, rather than focusing on a single instrument.

Ultimately, the rise of AI in testing should not be seen as a threat, but rather as an opportunity to elevate the testing discipline. By combining artificial intelligence with human creativity, contextual awareness, and ethical oversight, testers can help ensure that software systems are delivered with better quality, safety, and user satisfaction.