Agent Mode in Gemini Code Assist now available in VS Code and IntelliJ

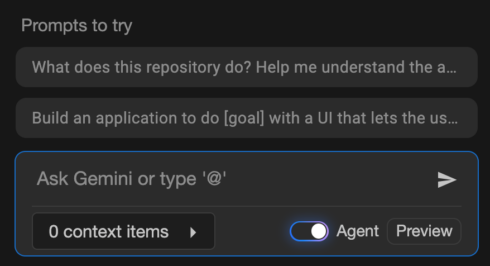

This mode was launched last month in the Insiders Channel for VS Code to expand the capabilities of Code Assist beyond prompts and responses, adding support for actions like multiple file edits, full project context, built-in tools, and integration with ecosystem tools.

Since its debut in the Insiders Channel, several new features have been added, including the ability to edit code changes using Gemini’s inline diff, user-friendly quota updates, real-time shell command output, and state preservation between IDE restarts.

Separately, the company also announced new agentic capabilities in its AI Mode in Search, such as the ability to set dinner reservations based on factors like party size, date, time, location, and preferred type of food. U.S. users who have opted into the AI Mode experiment in Labs will also now see results that are more specific to their own preferences and interests. Google also announced that AI Mode is now available in over 180 new countries.

GitHub’s coding agent can now be launched from anywhere on the platform using new Agents panel

GitHub has added a new panel to its UI that enables developers to invoke the Copilot coding agent from anywhere on the site.

From the panel, developers can assign background tasks, monitor running tasks, or review pull requests. The panel is a lightweight overlay on GitHub.com, but developers can also open the panel in full-screen mode by clicking “View all tasks.”

The agent can be launched from a single prompt, like “Add integration tests for LoginController” or “Fix #877 using pull request #855 as an example.” It can also run multiple tasks simultaneously, such as “Add unit test coverage for utils.go” and “Add unit test coverage for helpers.go.”

Anthropic adds Claude Code to Enterprise, Team plans

With this change, both Claude and Claude Code will be available under a single subscription. Admins will be able to assign standard or premium seats to users based on their individual roles. By default, seats include enough usage for a typical workday, but extra usage can be added during periods of heavy use. Admins can also set a maximum limit for extra usage.

Other new admin settings include a usage analytics dashboard and the ability to deploy and enforce settings, such as tool permissions, file access restrictions, and MCP server configurations.

Microsoft adds Copilot-powered debugging features for .NET in Visual Studio

Copilot can now suggest appropriate locations for breakpoints and tracepoints based on the current context. Similarly, it can troubleshoot non-binding breakpoints and walk developers through the potential cause, such as mismatched symbols or incorrect build configurations.

Another new feature is the ability to generate LINQ queries on massive collections in the IEnumerable Visualizer, which renders data into a sortable, filterable tabular view. For example, a developer could ask for a LINQ query that surfaces the problematic rows causing a filter issue. Additionally, developers can hover over any LINQ statement to get an explanation from Copilot of what it is doing, evaluate it in context, and highlight potential inefficiencies.

Copilot can also now help developers deal with exceptions by summarizing the error, identifying potential causes, and offering targeted code fix suggestions.

Groundcover launches observability solution for LLMs and agents

The eBPF-based observability provider groundcover announced an observability solution specifically for monitoring LLMs and agents.

It captures every interaction with LLM providers like OpenAI and Anthropic, including prompts, completions, latency, token usage, errors, and reasoning paths.

Because groundcover uses eBPF, it operates at the infrastructure layer and can achieve full visibility into every request. This allows it to do things like follow the reasoning path of failed outputs, investigate prompt drift, or pinpoint when a tool call introduces latency.

IBM and NASA release open-source AI model for predicting solar weather

The model, Surya, analyzes high-resolution solar observation data to predict how solar activity impacts Earth. According to IBM, solar storms can damage satellites, impact airline travel, and disrupt GPS navigation, which can negatively impact industries like agriculture and disrupt food production.

The solar images that Surya was trained on are 10x larger than typical AI training data, so the team had to create a multi-architecture system to handle them.

The model was released on Hugging Face.

Preview of NuGet MCP Server now available

Last month, Microsoft announced support for building MCP servers with .NET and then publishing them to NuGet. Now, the company is announcing an official NuGet MCP Server to integrate NuGet package information and management tools into AI development workflows.

“Since the NuGet package ecosystem is always evolving, large language models (LLMs) get out-of-date over time and there’s a need for something that assists them in getting information in realtime. The NuGet MCP server provides LLMs with information about new and updated packages that have been published after the models as well as tools to complete package management tasks,” Jeff Kluge, principal software engineer at Microsoft, wrote in a blog post.

Opsera’s Codeglide.ai lets developers easily turn legacy APIs into MCP servers

Codeglide.ai, a subsidiary of the DevOps company Opsera, is launching its MCP server lifecycle platform that will enable developers to turn APIs into MCP servers.

The solution constantly monitors API changes and updates the MCP servers accordingly. It also provides context-aware, secure, and stateful AI access without the developer needing to write custom code.

According to Opsera, large enterprises may maintain 2,000 to 8,000 APIs, 60% of which are legacy APIs, and MCP provides a way for AI to efficiently interact with those APIs. The company says that this new offering can reduce AI integration time by 97% and costs by 90%.
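To put those percentages in concrete terms, here is a rough back-of-the-envelope sketch in Python. It only restates the figures Opsera cites and is not the company's methodology. The 100-hour and $10,000 baselines, along with the function and variable names, are hypothetical values chosen purely for illustration.

```python
# Rough arithmetic on the figures Opsera cites; not Opsera's own methodology.

LEGACY_SHARE_PCT = 60    # share of enterprise APIs described as legacy
TIME_REDUCTION_PCT = 97  # claimed cut in AI integration time
COST_REDUCTION_PCT = 90  # claimed cut in cost


def legacy_api_count(total_apis: int, legacy_share_pct: int = LEGACY_SHARE_PCT) -> int:
    """Estimated number of legacy APIs in an estate of `total_apis`."""
    return total_apis * legacy_share_pct // 100


def remaining_after_reduction(baseline: float, reduction_pct: int) -> float:
    """What is left of `baseline` after cutting it by `reduction_pct` percent."""
    return baseline * (100 - reduction_pct) / 100


# Range of API estate sizes quoted in the article.
legacy_low = legacy_api_count(2_000)
legacy_high = legacy_api_count(8_000)

# Hypothetical baselines (assumptions for illustration only).
BASELINE_HOURS = 100.0
BASELINE_COST = 10_000.0
hours_left = remaining_after_reduction(BASELINE_HOURS, TIME_REDUCTION_PCT)
cost_left = remaining_after_reduction(BASELINE_COST, COST_REDUCTION_PCT)

print(f"Legacy APIs: {legacy_low:,} to {legacy_high:,}")
print(f"Integration time: {BASELINE_HOURS:.0f}h -> {hours_left:.0f}h")
print(f"Cost: ${BASELINE_COST:,.0f} -> ${cost_left:,.0f}")
```

Under these assumptions, the quoted range works out to between 1,200 and 4,800 legacy APIs per enterprise, and a 97% reduction would shrink a 100-hour integration effort to about three hours.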

Confluent announces Streaming Agents

Streaming Agents is a new feature in Confluent Cloud for Apache Flink that brings agentic AI into data stream processing pipelines. It enables users to build, deploy, and orchestrate agents that can act on real-time data.

Key features include tool calling via MCP, the ability to connect to models or databases using Flink, and the ability to enrich streaming data with non-Kafka data sources, like relational databases and REST APIs.

“Even your smartest AI agents are flying blind if they don’t have fresh business context,” said Shaun Clowes, chief product officer at Confluent. “Streaming Agents simplifies the messy work of integrating the tools and data that create real intelligence, giving organizations a solid foundation to deploy AI agents that drive meaningful change across the business.”