Implementing Production-Grade Analytics on a Databricks Data Warehouse

High-concurrency, low-latency data warehousing is essential for organizations where data drives critical business decisions. This means supporting hundreds of concurrent users, delivering fast query performance for interactive analytics and enabling real-time insights for fast, informed decision-making. A production-grade data warehouse is more than a support system; it's a catalyst for growth and innovation.

Databricks pioneered the lakehouse architecture to unify data, analytics and AI workloads, eliminating costly data duplication and complex system integrations. With built-in autonomous performance optimizations, the lakehouse delivers competitive price/performance while simplifying operations. As an open lakehouse, it also ensures fast, secure access to critical data through Databricks SQL, powering BI, analytics and AI tools with unified security and governance that extend across your entire ecosystem. Open interoperability is essential since most users interact with the warehouse through these external tools. The platform scales effortlessly, not only with data and users but also with the growing number of tools your teams rely on, and offers powerful built-in capabilities like Databricks AI/BI, Mosaic AI and more, while maintaining flexibility and interoperability with your existing ecosystem.

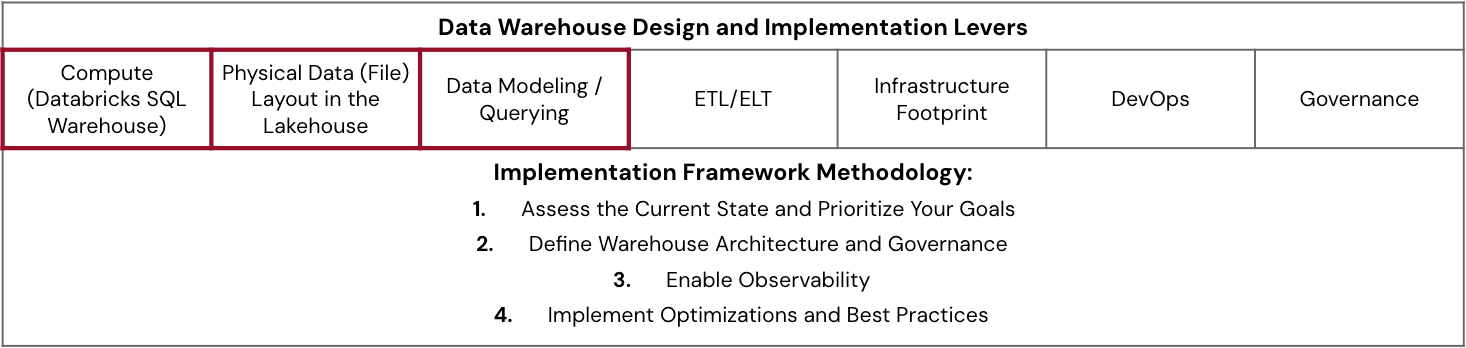

This blog provides a comprehensive guide for organizations at any stage of their lakehouse architecture journey (from initial design to mid-implementation to ongoing optimization) on maximizing high-concurrency, low-latency performance with the Databricks Data Intelligence Platform. We'll explore:

- Core architectural components of a data warehouse and their collective impact on platform performance.

- A structured performance-tuning framework to guide the optimization of these architectural elements.

- Best practices, monitoring strategies and tuning methodologies to ensure sustained performance at scale.

- A real-world case study demonstrating how these principles work together in practice.

Key Architectural Considerations

While many foundational principles of traditional data warehouses still apply (such as sound data modeling, robust data management and embedded data quality), designing a modern lakehouse for production-grade analytics requires a more holistic approach. Central to this is a unified governance framework, and Unity Catalog (AWS | Azure | GCP) plays a critical role in delivering it. By standardizing access controls, lineage tracking and auditability across all data and AI assets, Unity Catalog ensures consistent governance at scale, something that is increasingly important as organizations grow in data volume, user concurrency and platform complexity.

Effective design requires:

- Adoption of proven architectural best practices

- An understanding of tradeoffs between interconnected components

- Clear objectives for concurrency, latency and scale based on business requirements

In a lakehouse, performance outcomes are influenced by architectural choices made early in the design phase. These deliberate design decisions highlight how modern lakehouses represent a fundamental departure from legacy data warehouses across five critical axes:

With these architectural considerations in mind, let's explore a practical framework for implementing a production-grade data warehouse that can deliver on the promise of high concurrency and low latency at scale.

Technical Solution Breakdown

The following framework distills best practices and architectural principles developed through real-world engagements with enterprise customers. Whether you're building a new data warehouse, migrating from a legacy platform or tuning an existing lakehouse, these guidelines will help you accelerate time to production while delivering scalable, performant and cost-efficient outcomes.

Start With a Use Case-Driven Assessment

Before implementing, we recommend a rapid assessment of a critical workload, often your slowest dashboard or most resource-intensive pipeline. This approach helps you identify performance gaps and prioritize areas for optimization.

Ask the following questions to frame your assessment:

- What performance metrics matter most (e.g., query latency, throughput, concurrency) and how do they compare to business expectations?

- Who uses this workload, when and how frequently?

- Are compute costs proportional to the workload's business value?

This assessment creates a foundation for targeted improvements and helps align your optimization efforts with business impact.

Implementation Framework

The framework below outlines a step-by-step approach to implementing or modernizing your warehouse on Databricks:

- Assess the Current State and Prioritize Your Goals

  - Evaluate and compare the existing architecture against performance, cost and scalability targets.

  - Define business (and technology) requirements for concurrency, latency, scale, cost, SLAs and other factors so the goal posts don't keep moving.

  - Identify gaps that impact the business most and prioritize remediation based on value and complexity (whether designing new, mid-migration or in production).

- Define Warehouse Architecture and Governance

  - Design logical segmentation: Determine which teams or use cases will share or require dedicated SQL Warehouses.

  - Right-size your warehouse instances, apply tagging and define defaults (e.g., cache settings, timeouts, etc.).

  - Understand and plan for fine-grained configurations like default caching, warehouse timeouts, JDBC timeouts from BI tools and SQL configuration parameters (AWS | Azure | GCP).

  - Establish a governance model for warehouses covering administrator (AWS | Azure | GCP) and end user (AWS | Azure | GCP) roles and responsibilities.

  - Invest in training and provide implementation templates to ensure consistency across teams.

- Enable Observability

  - Enable observability and monitoring for SQL warehouse usage to detect anomalies, uncover inefficient workloads and optimize resource utilization.

  - Activate out-of-the-box functionality (AWS | Azure | GCP) alongside custom telemetry and automate alerts/remediations where possible.

  - Learn to leverage system tables, warehouse monitoring and query profiles to identify issues like spill, shuffle or queuing.

  - Integrate cost data and lineage metadata (e.g., BI tool context via query history tables) to correlate performance and spend.

- Implement Optimizations and Best Practices

  - Leverage insights from observability to align workload performance with business and technology requirements.

  - Implement AI features for cost, layout and compute efficiency.

  - Codify learnings into reusable templates, documentation and checklists to scale best practices across teams.

  - Optimize incrementally using an effort (complexity, timeline, expertise) vs. impact (performance, cost, maintenance overhead) matrix to prioritize.

In the sections below, let's walk through each stage of this framework to understand how thoughtful design and execution enable high concurrency, low latency and business-aligned cost performance on Databricks.

Assess the Current State and Prioritize Your Goals

Before diving into best practices and tuning techniques, it's essential to understand the foundational levers that shape lakehouse performance, such as compute sizing, data layout and data modeling. These are the areas teams can directly influence to meet high-concurrency, low-latency and scale goals.

The scorecard below provides a simple matrix to assess maturity across each lever and identify where to focus your efforts. To use it, evaluate each lever across three dimensions: how well it meets business needs, how closely it aligns with best practices, and the level of technical capability and governance your team has in that area. Apply a Red-Amber-Green (RAG) rating to each intersection to quickly visualize strengths (green), areas for improvement (amber) and critical gaps (red). The best practices and evaluation techniques later in this blog will inform the rating; use this directionality in conjunction with a more granular maturity assessment. This exercise can guide discussions across teams, surface hidden bottlenecks and help prioritize where to invest, whether in training, architecture changes or automation.

With the components that drive lakehouse performance and a framework to implement them defined, what's next? The combination of best practices (what to do), tuning techniques (how to do it) and assessment methods (when to do it) provides the actions to take to achieve your performance objectives.

The focus will be on specific best practices and granular configuration techniques for a few critical components that work together to operate a high-performing data warehouse.

Define Warehouse Architecture and Governance

Compute (Databricks SQL Warehouse)

While compute is often seen as the primary performance lever, compute sizing decisions should always be considered alongside data layout design and modeling/querying, as these directly impact the compute needed to achieve the required performance.

Right-sizing SQL warehouses is critical for cost-effective scaling. There is no crystal ball for precise sizing upfront, but these are several key heuristics to follow for organizing and sizing SQL warehouse compute.

- Enable SQL Serverless Warehouses: They offer instant compute and elastic autoscaling and are fully managed, simplifying operations for all types of uses, including bursty and inconsistent BI/analytics workloads. Databricks fully manages the infrastructure, with that infrastructure cost baked in, offering the potential for TCO reductions.

- Understand Workloads and Users: Segment users (human/automated) and their query patterns (interactive BI, ad hoc, scheduled reports) to use different warehouses scoped by application context, a logical grouping by purpose, team, function, etc. Implement a multi-warehouse architecture, by these segments, to have more fine-grained sizing control and the ability to monitor independently. Ensure tags for cost attribution are enforced. Reach out to your Databricks account contact to access upcoming features intended to prevent noisy neighbors.

- Iterative Sizing and Scaling: Don't overthink the initial warehouse size or min/max cluster settings. Adjustments based on monitoring real workload performance, using mechanisms in the next section, are far more effective than upfront guesses. Data volumes and the number of users do not accurately estimate the compute needed. The types of queries, patterns and concurrency of query load are better metrics, and there's an automatic benefit from Intelligent Workload Management (IWM) (AWS | Azure | GCP).

- Understand When to Resize vs. Scale: Increase warehouse size (“T-shirt size”) when you need to accommodate resource-heavy, complex queries like large aggregations and multi-table joins, which require high memory; monitor the frequency of disk spills and memory usage. Increase the number of clusters for autoscaling when dealing with bursty concurrent usage and when you see persistent queuing due to many queries waiting to execute, not a few intensive queries pending.

- Balance Availability and Cost: Configure auto-stop settings. Serverless's rapid cold start makes auto-stopping a significant cost-saver for idle periods.

Physical Data (File) Layout in the Lakehouse

Fast queries begin with data skipping, where the query engine reads only relevant files using metadata and statistics for efficient file pruning. The physical organization of your data directly impacts this pruning, making file layout optimization critical for high-concurrency, low-latency performance.

The evolution of data layout strategies on Databricks offers various approaches for optimal file organization:

For new tables, Databricks recommends defaulting to managed tables with Auto Liquid Clustering (AWS | Azure | GCP) and Predictive Optimization (AWS | Azure | GCP). Auto Liquid Clustering intelligently organizes data based on query patterns, and you can specify initial clustering columns as hints to enable it in a single command. Predictive Optimization automatically handles maintenance jobs like OPTIMIZE, VACUUM and ANALYZE.

For existing deployments using external tables, consider migrating to managed tables to fully leverage these AI-powered features, prioritizing high-read and latency-sensitive tables first. Databricks provides an automated solution (AWS | Azure | GCP) with the ALTER TABLE...SET MANAGED command to simplify the migration process. Additionally, Databricks supports managed Iceberg tables as part of its open table format strategy.

Data Modeling / Querying

Modeling is where business requirements meet data structure. Always start by understanding your end consumption patterns, then model to those business needs using your organization's preferred methodology: Kimball, Inmon, Data Vault or denormalized approaches. The lakehouse architecture on Databricks supports them all.

Unity Catalog features extend beyond observability and discovery with lineage, primary keys (PKs), constraints and schema evolution capabilities. They provide crucial hints to the Databricks query optimizer, enabling more efficient query plans and improving query performance. For instance, declaring PKs and foreign keys with RELY allows the optimizer to eliminate redundant joins, directly impacting speed. Unity Catalog's robust support for schema evolution also ensures agility as your data models adapt over time. Unity Catalog provides a standard governance model based on ANSI SQL.

Additional relevant resources include Data Warehousing Modeling Techniques and a three-part series on Dimensional Data Warehousing (Part 1, Part 2 and Part 3).

Enable Observability

Activating monitoring and acting on tuning decisions highlights the interconnectedness of data warehouse components: compute, physical file layout, query efficiency and more.

- Start by establishing observability through dashboards and applications.

- Define learned patterns for identifying and diagnosing performance bottlenecks and then correcting them.

- Iteratively build in automation through alerting and agentic corrective actions.

- Compile common trends causing bottlenecks and incorporate them into development best practices, code review checks and templates.

Continuous monitoring is essential for sustained, consistently high performance and cost efficiency in production. Understanding standard patterns allows you to refine your tuning decisions as usage evolves.

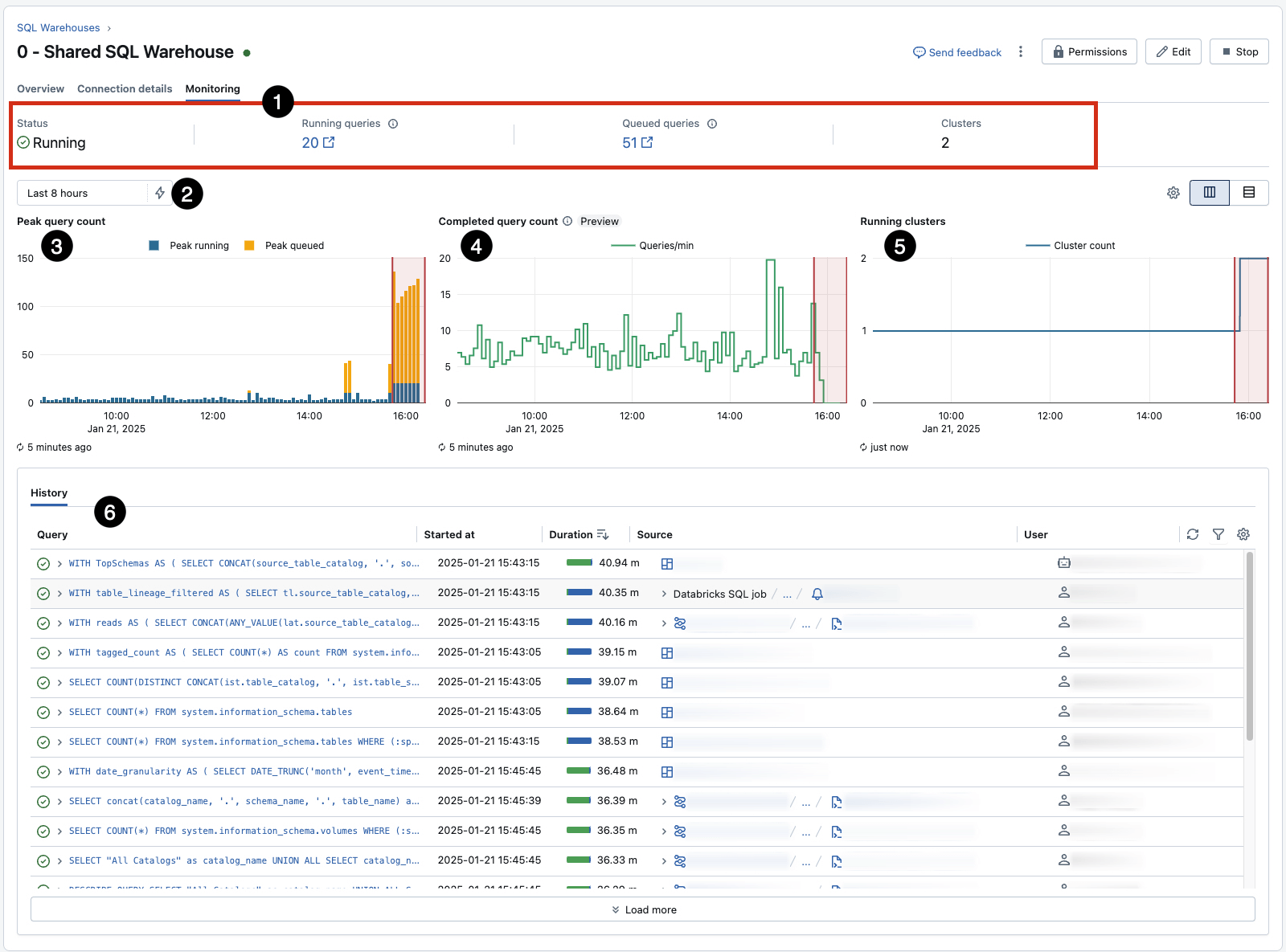

Monitor and Adjust: Use each warehouse's built-in Monitoring tab (AWS | Azure | GCP) for real-time insights into peak concurrent queries, utilization and other key statistics. This provides a quick reference for observation, but should be supplemented with further techniques to drive alerts and action.

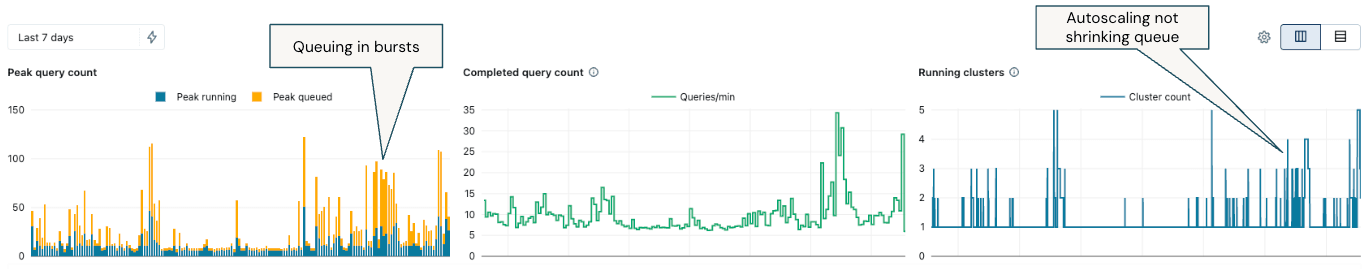

- In the screenshot above, pay particular attention to callout 3, which shows queueing due to concurrency limits for a given warehouse (and can be influenced by resizing), and callout 5, which shows autoscaling events in response to the queue. Callout 6 captures query history, a great starting point for identifying and investigating long-running and inefficient workloads.

Leverage system tables: System tables support more granular, bespoke monitoring. Over time, develop custom dashboards and alerts, but take advantage of ready-made options:

- The Granular SQL Warehouse Monitoring Dashboard provides a comprehensive view for making informed scaling decisions by understanding who and what drives costs.

- The DBSQL Workflow Advisor provides a view across scaling, query performance (to identify bottlenecks) and cost attribution.

- Introduce custom SQL Alerts (AWS | Azure | GCP) to send notifications based on patterns learned from the monitoring events above.

For customers interested in cost attribution and observability beyond just the SQL Warehouse, the dedicated blog From Chaos to Control: A Cost Maturity Journey with Databricks is a valuable resource on the cost maturity journey.

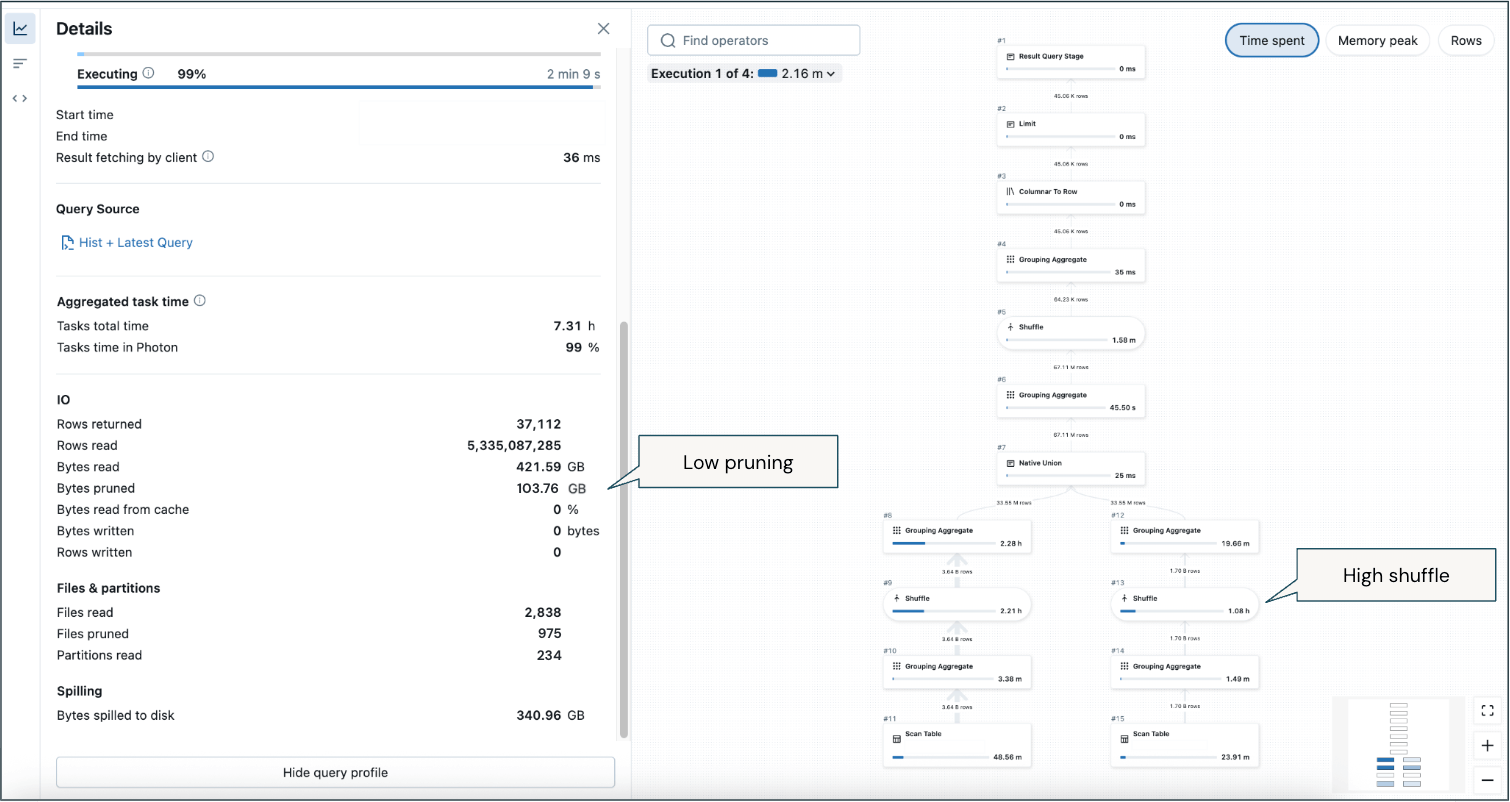

Utilize Query Profiles: The Query Profile (AWS | Azure | GCP) tool is your primary diagnostic for individual query performance issues. It provides detailed execution plans and helps pinpoint bottlenecks that affect required compute.


A few starting-point suggestions for what to look for in the query profile:

- Check if pruning occurs. If there should be pruning (AWS | Azure | GCP) (i.e., reducing data read from storage using metadata/statistics of tables), which you'd expect if applying predicates or joins, but it's not occurring, then analyze the file layout strategy. Ideally, files/partitions read should be low and files pruned should be high.

- A significant amount of wall-clock time spent in “Scheduling” (greater than a few seconds) suggests queuing.

- If the ‘Result fetching by client’ duration takes most of the time, it indicates a potential network issue between the external tool/application and the SQL warehouse.

- Bytes read from the cache will vary depending on usage patterns, as users running queries using the same tables on the same warehouse will naturally leverage the cached data rather than re-scanning files.

- The DAG (Directed Acyclic Graph; AWS | Azure | GCP) allows you to identify steps by the amount of time they took, memory utilized and rows read. This can help narrow down performance issues for highly complex queries.

- To detect the small file problem (where data files are significantly smaller than the optimal size, causing inefficient processing), look for the signs below. Ideally, the average file size should be between 128MB and 1GB, depending on the size of the table:

  - The majority of the query plan is spent scanning source table(s).

  - Run DESCRIBE DETAIL [Table Name]. To find the average file size, divide sizeInBytes by numFiles. Or, in the query profile, use [Bytes read] / [Files read].

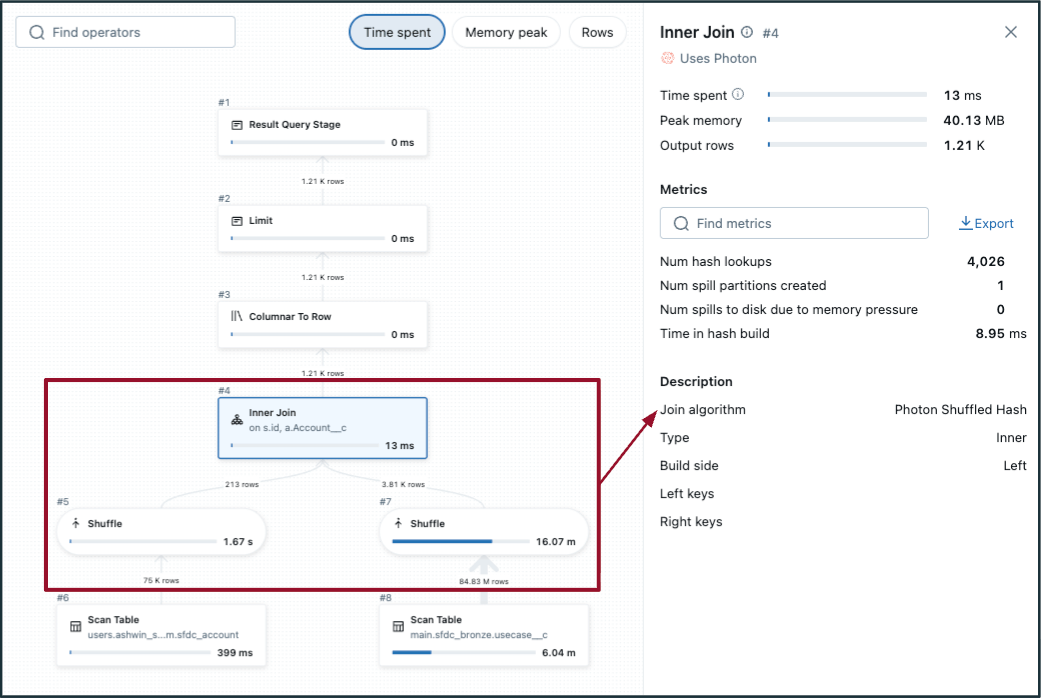

- To detect a potentially inefficient shuffle hash join:

  - Select the join step in the DAG and check the “Join algorithm”.

  - No/low file pruning.

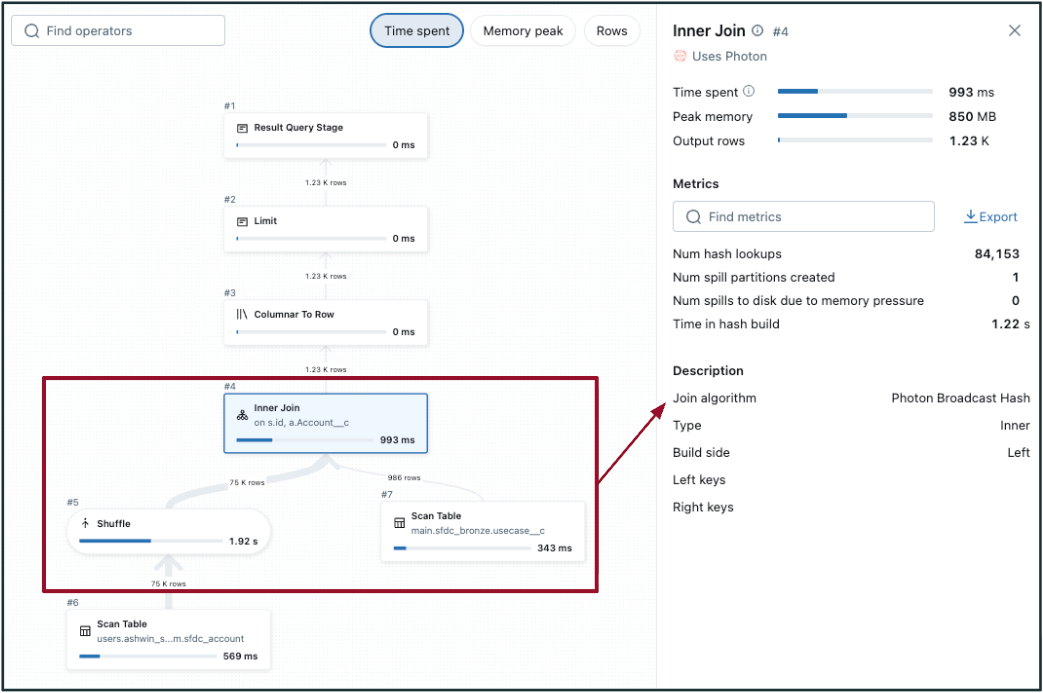

  - In the DAG, shuffle occurs on both tables (on either side of the join, like in the image to the left). If one of the tables is small enough, consider broadcasting to perform a broadcast hash join instead (shown in the image to the right).

  - Adaptive query execution (AQE) defaults to

- Always ensure filters are being applied to reduce source datasets.
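
The average-file-size check described above is simple arithmetic, and a small sketch may make it easier to apply across many tables. The example below is illustrative only: it assumes you have already copied sizeInBytes and numFiles from DESCRIBE DETAIL by hand, and the TableDetail class, the function names and the status labels are hypothetical, not part of any Databricks API. The 128MB to 1GB range is the guideline quoted in the text.

```python
from dataclasses import dataclass

MB = 1024 ** 2
GB = 1024 ** 3

# Guideline from the text: average file size between 128MB and 1GB.
MIN_AVG_FILE_BYTES = 128 * MB
MAX_AVG_FILE_BYTES = 1 * GB


@dataclass
class TableDetail:
    """Hypothetical holder for two DESCRIBE DETAIL values, entered by hand."""
    name: str
    size_in_bytes: int  # sizeInBytes
    num_files: int      # numFiles


def average_file_size(detail: TableDetail) -> float:
    """Average file size in bytes: sizeInBytes / numFiles (0.0 for an empty table)."""
    if detail.num_files == 0:
        return 0.0
    return detail.size_in_bytes / detail.num_files


def file_size_status(detail: TableDetail) -> str:
    """Return 'empty', 'small_files', 'large_files' or 'ok' (labels are made up)."""
    if detail.num_files == 0:
        return "empty"
    avg = average_file_size(detail)
    if avg < MIN_AVG_FILE_BYTES:
        return "small_files"
    if avg > MAX_AVG_FILE_BYTES:
        return "large_files"
    return "ok"


if __name__ == "__main__":
    # Hypothetical table: 50 GB spread over 20,000 files.
    events = TableDetail("events", size_in_bytes=50 * GB, num_files=20_000)
    avg_mb = average_file_size(events) / MB
    print(f"{events.name}: avg {avg_mb:.2f} MB per file -> {file_size_status(events)}")
```

A table flagged as small_files here is a candidate for the compaction and clustering options discussed later, but the right target size still depends on the size of the table.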


Implement Optimizations and Best Practices

Performance Issues: The 4 S's + Queuing

Whether configuring compute for a new workload or optimizing an existing one, it's necessary to understand the most common performance issues. These fit into a common moniker, “The 4 S's”, with a fifth (queuing) added on:

To reduce query latency on your SQL warehouse, determine whether spill, queuing and/or shuffle (skew and small files will come up later) is the primary performance bottleneck. This comprehensive guide provides more details. After identifying the root cause, apply the guidelines below to adjust SQL warehouse sizing accordingly and measure the impact.

- Disk Spill (from memory to disk): Spill occurs when a SQL warehouse runs out of memory and writes temporary results to disk, which is significantly slower than in-memory processing. In a Query Profile, any amounts against “spill (bytes)” or “spill time” indicate this is occurring.

To mitigate spills, increase the SQL warehouse T-shirt size to provide more memory. Query memory usage can also be reduced through query optimization techniques such as early filtering, reducing skew and simplifying joins. Improving file layout (using appropriately sized files or applying Liquid Clustering) can further limit the amount of data scanned and shuffled during execution.

Helper query on system tables that can be converted to a SQL Alert or AI/BI Dashboard

- Query Queuing: If the SQL Warehouse Monitoring screen shows persistent queuing (where peak queued queries are >10) that does not immediately resolve with an autoscaling event, increase the max scaling value for your warehouse. Queuing directly adds latency as queries wait for available resources.

Helper query on system tables that can be converted to a SQL Alert or AI/BI Dashboard

- High Parallelization/Low Shuffle: For queries that can be split into many independent tasks (such as filters or aggregations across large datasets) and show low shuffle in Query Profiles, increasing the SQL warehouse T-shirt size can improve throughput and reduce queuing. Low shuffle indicates minimal data movement between nodes, which enables more efficient parallel execution.

  - Narrow transformations (e.g., point lookups, aggregate lookups) often benefit from more scaling for concurrent query handling. Wide transformations (complex joins with aggregation) often benefit more from larger warehouse sizes than from scaling.

- High Shuffle: Conversely, when shuffle is high, large amounts of data are exchanged between nodes during query execution, often due to joins, aggregations or poorly organized data. This can be a significant performance bottleneck. In Query Profiles, high shuffle is indicated by large values under “shuffle bytes written”, “shuffle bytes read” or long durations in shuffle-related stages. If these metrics are consistently elevated, it is best to optimize the query or improve the physical data layout rather than simply scaling up compute.

Helper query on system tables that can be converted to a SQL Alert or AI/BI Dashboard
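
To make the triage above concrete, here is a minimal sketch of how the spill, queuing and shuffle signals could be turned into a first-pass diagnosis for a single query. It is an illustration under stated assumptions rather than a Databricks feature: the QueryMetrics fields are hypothetical names for numbers copied by hand from a Query Profile, and both thresholds (3 seconds of scheduling time, shuffle bytes at or above bytes read) are invented placeholders that should be replaced with values derived from your own SLAs.

```python
from dataclasses import dataclass

# Hypothetical thresholds for illustration only; derive real ones from your SLAs.
QUEUE_WAIT_SECONDS = 3.0  # stands in for "greater than a few seconds" of scheduling time
HIGH_SHUFFLE_RATIO = 1.0  # shuffle bytes at or above bytes read is treated as high shuffle

# Suggested first action per bottleneck, paraphrasing the guidelines above.
ACTIONS = {
    "spill": "increase the warehouse T-shirt size or reduce query memory usage",
    "queuing": "increase the max scaling value for the warehouse",
    "high_shuffle": "optimize the query or improve the physical data layout",
}


@dataclass
class QueryMetrics:
    """Numbers copied by hand from a Query Profile; the field names are made up."""
    spill_bytes: int
    scheduling_seconds: float
    shuffle_bytes: int
    bytes_read: int


def diagnose(q: QueryMetrics) -> list:
    """Return the bottleneck labels that apply to one query, in check order."""
    found = []
    if q.spill_bytes > 0:
        found.append("spill")
    if q.scheduling_seconds > QUEUE_WAIT_SECONDS:
        found.append("queuing")
    if q.bytes_read > 0 and q.shuffle_bytes / q.bytes_read >= HIGH_SHUFFLE_RATIO:
        found.append("high_shuffle")
    return found


if __name__ == "__main__":
    # Hypothetical long-running query: spills, barely queues, shuffles 3x what it reads.
    slow = QueryMetrics(
        spill_bytes=2_000_000_000,
        scheduling_seconds=0.4,
        shuffle_bytes=30_000_000_000,
        bytes_read=10_000_000_000,
    )
    for label in diagnose(slow):
        print(f"{label}: {ACTIONS[label]}")
```

A query can carry more than one label, which mirrors the point that these issues are interdependent; the sketch only orders the checks and does not rank which fix to try first.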

Taking a Macro Monitoring View

While these analyses and rules help you understand how queries impact the warehouse at the micro level, sizing decisions are made at the macro level. Generally, start by enabling the monitoring capabilities in the previous section (and customize them) to identify what is happening, and then establish threshold measures for spill, skew, queuing, etc., to serve as indicators for when resizing is needed. Evaluate these thresholds to generate an impact score based on the frequency with which the thresholds are met or the percentage of time the thresholds are exceeded during regular operation. A few example measures (define these using your specific business requirements and SLAs):

- Percentage of time each day that peak queued queries > 10

- Queries that are in the top 5% of highest shuffle for an extended period or consistently in the top 5% highest shuffle during peak usage

- Periods where at least 20% of queries spill to disk or queries that spill to disk on more than 25% of their executions
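
As a rough sketch of how such threshold measures could be scored, the example below computes two of the percentages listed above from a list of monitoring intervals. Everything about the data shape is an assumption: the Interval class and its fields are hypothetical, and the samples are made up rather than read from any system table. The two thresholds (peak queued queries above 10, at least 20% of queries spilling) are the example values from the text.

```python
from dataclasses import dataclass

# Example thresholds from the text; replace them with values from your own SLAs.
PEAK_QUEUED_THRESHOLD = 10
SPILL_SHARE_THRESHOLD = 0.20


@dataclass
class Interval:
    """One monitoring interval for a warehouse (hypothetical shape, e.g. 5 minutes)."""
    peak_queued: int
    queries_run: int
    queries_spilled: int


def pct_time_queued(intervals) -> float:
    """Percentage of intervals where peak queued queries exceed the threshold."""
    if not intervals:
        return 0.0
    hits = sum(1 for i in intervals if i.peak_queued > PEAK_QUEUED_THRESHOLD)
    return 100.0 * hits / len(intervals)


def pct_time_spilling(intervals) -> float:
    """Percentage of intervals where at least 20% of queries spilled to disk."""
    if not intervals:
        return 0.0
    hits = sum(
        1
        for i in intervals
        if i.queries_run > 0
        and i.queries_spilled / i.queries_run >= SPILL_SHARE_THRESHOLD
    )
    return 100.0 * hits / len(intervals)


if __name__ == "__main__":
    # Made-up samples for one day, shortened to four intervals.
    day = [
        Interval(peak_queued=0, queries_run=10, queries_spilled=1),
        Interval(peak_queued=12, queries_run=10, queries_spilled=2),
        Interval(peak_queued=15, queries_run=20, queries_spilled=10),
        Interval(peak_queued=3, queries_run=8, queries_spilled=4),
    ]
    print("time over queue threshold (%):", pct_time_queued(day))
    print("time over spill threshold (%):", pct_time_spilling(day))
```

Counting intervals rather than individual queries keeps the score tied to how long users were affected, which is one reasonable reading of "percentage of time"; a per-query variant would be equally valid for the second measure.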

It's important to ground this in recognizing that there are tradeoffs to consider, not a single recipe to follow or a one-size-fits-all answer for every data warehouse. If queue latency is not a concern, for example for queries that refresh overnight, then don't tune for ultra-low latency; accept higher latency in exchange for cost efficiency. This blog provides a guide on best practices and methodologies to diagnose and tune your data warehouse based on your unique implementation needs.

Optimizing Physical Data (File) Layout in the Lakehouse

Below are several best practices for managing and optimizing physical data files stored in your lakehouse. Use these and monitoring techniques to diagnose and resolve issues impacting your data warehouse analytic workloads.

- Adjust the data skipping of a table (AWS | Azure | GCP) if necessary. Delta tables store min/max and other statistics metadata for the first 32 columns by default. Increasing this number can increase DML operation execution times, but may decrease query runtime if the additional columns are filtered in queries.

- To identify if you have the small file problem, review table properties (numFiles, sizeInBytes, clusteringColumns, partitionColumns) and use either Predictive Optimization with Liquid Clustering or ensure you run OPTIMIZE compaction routines on top of properly organized data.

- While the recommendation is to enable Auto Liquid Clustering and take advantage of Predictive Optimization to remove manual tuning, it is helpful to understand underlying best practices and be empowered to tune manually in select instances. Below are useful rules of thumb for selecting clustering columns:

  - Start with a single column, the one most naturally used as a predicate (and using the suggestions below), unless there are a few obvious candidates. Often, only huge tables benefit from >1 cluster key.

  - Choosing clustering columns prioritizes optimizing reads over writes. They should be 1) used as filter predicates, 2) used in GROUP BY or JOIN operations and 3) MERGE columns.

  - Generally, the column should have high cardinality (but not be unique). Avoid meaningless values like UUID strings unless you require quick lookups on those columns.

  - Don't reduce cardinality (e.g., convert from timestamp to date) as you would when setting a partition column.

  - Don't use two related columns (e.g., timestamp and datestamp); always choose the one with the higher cardinality.

  - The order of keys in the CREATE TABLE syntax does not matter. Multi-dimensional clustering is used.

Bringing it All Together: A Systematic Approach

This blog focuses on the first three architectural levers. Other critical implementation components contribute to architecting a high-concurrency, scalable, low-latency data warehouse, including ETL/ELT, infrastructure footprint, DevOps and Governance. Additional product perspective on implementing a lakehouse can be found here, and an array of best practices is available from the Comprehensive Guide to Optimize Databricks, Spark and Delta Lake Workloads.

The foundational components of your data warehouse (compute, data layout and modeling/querying) are highly interdependent. Addressing performance effectively requires an iterative process: continuously monitoring, optimizing and ensuring new workloads adhere to an optimized blueprint. Evolve that blueprint as technology best practices and your business requirements change. You need the tools and know-how to tune your warehouse to meet your precise concurrency, latency and scalability requirements. Robust governance, transparency, monitoring and security enable this core architectural framework. These are not separate considerations but the bedrock for delivering best-in-class data warehouse experiences on Databricks.

Now, let's explore a recent customer example in which the framework and the foundational best practices, tuning and monitoring levers were applied in practice, and an organization significantly improved its data warehouse performance and efficiency.

Real-World Scenarios and Tradeoffs

Email Marketing Platform Optimization

Business Context

An email marketing platform provides e-commerce merchants with tools to create personalized customer journeys based on rich customer data. The application allows users to orchestrate email campaigns to targeted audiences, helping clients craft segmentation strategies and track performance. Real-time analytics are critical to their business: customers expect immediate visibility into campaign performance metrics like click-through rates, bounces and engagement data.

Initial Challenge

The platform was experiencing performance and cost issues with its analytics infrastructure. They were running a Large SQL Serverless warehouse with autoscaling from 1-5 clusters and even needed to upgrade to XL during peak reporting periods. Their architecture relied on:

- Real-time streaming data from a message queue into Delta Lake via continuous structured streaming

- A nightly job to consolidate streamed records into a historical table

- Query-time unions between the historical table and streaming data

- Complex aggregations and deduplication logic executed at query time

This approach meant that every customer dashboard refresh required intensive processing, leading to higher costs and slower response times.

From monitoring the SQL warehouse, there was significant queueing (yellow columns), with bursty periods of usage, where autoscaling properly engaged but was not able to keep up with workloads:

To diagnose the cause of queueing, we identified a few long-running queries and the most frequently executed queries using the query history (AWS | Azure | GCP) and system tables to determine whether queueing was simply due to a high volume of relatively basic, narrow queries or if optimization was needed to improve poor-performing queries.

A few critical callouts from this example profile from a long-running query:

- Low pruning (despite significant filtering on time period to return the most recent 2 weeks) means a considerable amount of data is being scanned.

- High shuffle: some shuffle is inherent in analytical aggregations, but here it accounts for the majority of memory usage across historical and recent data.

- Spill to disk in some instances.

These learnings from observing critical queries led to optimization actions across compute, data layout and query techniques.

Optimization Approach

Working with a Databricks Delivery Solutions Architect, the platform implemented several key optimizations:

- Increased merge frequency: Changed from nightly to hourly merges, significantly reducing the volume of streaming data that needed processing at query time.

- Implemented Materialized Views: Converted the aggregation table into a materialized view that refreshes incrementally each hour, pre-computing complex aggregation logic during refresh so that query-time processing is limited to only the most recent hour's data.

- Modern data organization: Switched from Hive-style partitioning to automatic liquid clustering, which intelligently selects optimal clustering columns based on query patterns and adapts over time.

Results

After a six-week discovery and implementation process, the platform saw immediate and remarkable improvements once deployed:

- Reduced infrastructure costs: Downsized from a Large serverless warehouse with autoscaling to a Small serverless warehouse with no autoscaling.

- Improved query performance: Lower latency for end-user dashboards, improving customer experience.

- Streamlined operations: Eliminated operational overhead from frequent end-user performance complaints and support cases.

An example of a query profile after optimization:

- As the file layout was optimized, more file pruning occurred to reduce the amount of data/files that needed to be read.

- No spill to disk.

- Shuffle still occurs because of analytical aggregations, but the amount of shuffling is significantly reduced due to more efficient pruning and pre-aggregated elements that don't need to be calculated at runtime.

This transformation demonstrates how applying data modeling best practices, leveraging serverless compute and utilizing Databricks advanced features like materialized views and liquid clustering can dramatically improve both performance and cost-efficiency.

Key Takeaways

- Focus your requirements on data warehouse concurrency, latency and scale. Then, use best practices, observability capabilities and tuning techniques to meet those requirements.

- Focus on right-sizing compute, implementing strong data layout practices (significantly helped by AI) and addressing data models and queries as the priority.

- The best data warehouse is a Databricks lakehouse; take advantage of innovative approaches that lead to new features, married with foundational data warehouse principles.

- Meet traditional data warehousing needs without sacrificing AI/ML (you're capitalizing on them with Databricks).

- Don't size and tune blindly; leverage built-in observability to monitor, optimize and automate cost-saving actions.

- Adopt Databricks SQL Serverless for optimal price performance and to support the variable usage patterns typical of BI and analytics workloads.

Next Steps and Additional Resources

Achieving a high-concurrency, low-latency data warehouse that scales does not happen by following a boilerplate recipe. There are tradeoffs to consider, and many components all work together. Whether you're cementing your data warehousing strategy, in progress with an implementation and struggling to go live, or optimizing your current footprint, consider the best practices and framework outlined in this blog to tackle it holistically. Reach out if you'd like help or to discuss how Databricks can support all your data warehousing needs.

Databricks Delivery Solutions Architects (DSAs) accelerate Data and AI initiatives across organizations. They provide architectural leadership, optimize platforms for cost and performance, enhance developer experience and drive successful project execution. DSAs bridge the gap between initial deployment and production-grade solutions, working closely with various teams, including data engineering, technical leads, executives and other stakeholders to ensure tailored solutions and faster time to value. To benefit from a custom execution plan, strategic guidance and support throughout your data and AI journey from a DSA, please get in touch with your Databricks Account Team.