Apache Flink is an open source framework for stream and batch processing applications. It excels in handling real-time analytics, event-driven applications, and complex data processing with low latency and high throughput. Flink is designed for stateful computation with exactly-once consistency guarantees for the application state.

Amazon Managed Service for Apache Flink is a fully managed stream processing service that you can use to run Apache Flink jobs at scale without worrying about managing clusters and provisioning resources. You can focus on implementing your application using your integrated development environment (IDE) of choice, and build and package the application using standard build and continuous integration and delivery (CI/CD) tools.

With Managed Service for Apache Flink, you can control the application lifecycle through simple AWS API actions. You can use the API to start and stop the application, and to apply any changes to the code, runtime configuration, and scale. The service takes care of managing the underlying Flink cluster, giving you a serverless experience. You can implement automation such as CI/CD pipelines with tools that can interact with the AWS API or AWS Command Line Interface (AWS CLI).

You can control the application using the AWS Management Console, AWS CLI, AWS SDK, and tools using the AWS API, such as AWS CloudFormation or Terraform. The service is not prescriptive about the automation tool you use to deploy and orchestrate the application.

Paraphrasing Jackie Stewart, the famous racing driver, you don’t need to understand how to operate a Flink cluster to use Managed Service for Apache Flink, but some Mechanical Sympathy will help you implement robust and reliable automation.

In this two-part series, we explore what happens during an application’s lifecycle. This post covers core concepts and the application workflow during normal operations. In Part 2, we look at potential failures, how to detect them through monitoring, and ways to quickly resolve issues when they occur.

Definitions

Before examining the application lifecycle steps, we need to clarify the usage of certain terms in the context of Managed Service for Apache Flink:

- Application – The main resource you create, control, and run in Managed Service for Apache Flink is an application.

- Application code package – For each Managed Service for Apache Flink application, you implement the application code package (application artifact) of the Flink application code you want to run. This code is compiled and packaged along with dependencies into a JAR or a ZIP file, which you upload to an Amazon Simple Storage Service (Amazon S3) bucket.

- Configuration – Each application has a configuration that contains the information to run it. The configuration points to the application code package in the S3 bucket and defines the parallelism, which also determines the application resources, in terms of Kinesis Processing Units (KPUs). It also defines security, networking, and runtime properties, which are passed to your application code at runtime.

- Job – When you start the application, Managed Service for Apache Flink creates a dedicated cluster for you and runs your application code as a Flink job.

The following diagram shows the relationship between these concepts.

There are two additional important concepts: checkpoints and savepoints, the mechanisms Flink uses to guarantee state consistency across failures and operations. In Managed Service for Apache Flink, both checkpoints and savepoints are fully managed.

- Checkpoints – These are controlled by the application configuration and enabled by default with a period of 1 minute. In Managed Service for Apache Flink, checkpoints are used when a job automatically restarts after a runtime failure. They are not durable and are deleted when the application is stopped or updated and when the application automatically scales.

- Savepoints – These are called snapshots in Managed Service for Apache Flink, and are used to persist the application state when the application is deliberately restarted by the user, due to an update or an automatic scaling event. Snapshots can be triggered by the user. Snapshots (if enabled) are also automatically used to save and restore the application state when the application is stopped and restarted, for example to deploy a change or automatically scale. Automatic use of snapshots is enabled in the application configuration (enabled by default when you create an application using the console).

Lifecycle of an application in Managed Service for Apache Flink

Starting with the happy path, a typical lifecycle of a Managed Service for Apache Flink application includes the following steps:

- Create and configure a new application.

- Start the application.

- Deploy a change (update the runtime configuration, update the application code, change the parallelism to scale up or down).

- Stop the application.

Starting, stopping, and updating the application use snapshots (if enabled) to retain application state consistency during operations. We recommend enabling snapshots on every production and staging application, to support the persistence of the application state across operations.

In Managed Service for Apache Flink, the application lifecycle is controlled through the console, API actions in the kinesisanalyticsv2 API, or equivalent actions in the AWS CLI and SDK. On top of these fundamental operations, you can build your own automation using different tools, directly using low-level actions or using higher-level infrastructure-as-code (IaC) tooling such as AWS CloudFormation or Terraform.

In this post, we refer to the low-level API actions used at each step. Any higher-level IaC tooling will use a combination of these operations. Understanding these operations is fundamental to designing robust automation.

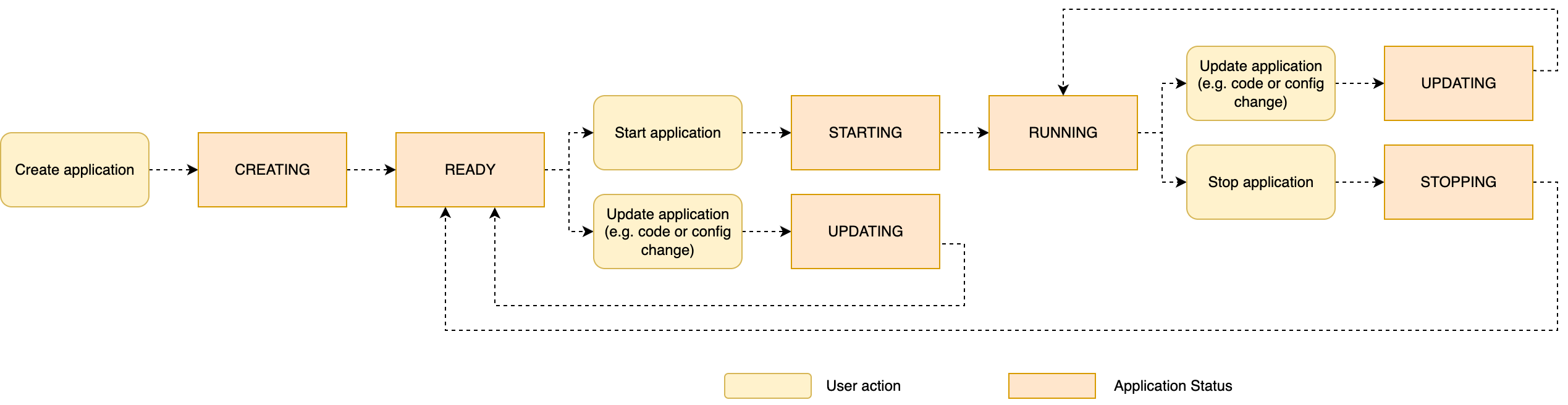

The following diagram summarizes the application lifecycle, showing typical operations and application statuses.

The status of your application (READY, STARTING, RUNNING, UPDATING, and so on) can be observed on the console and using the DescribeApplication API action.

In the following sections, we analyze each lifecycle operation in more detail.

Create and configure the application

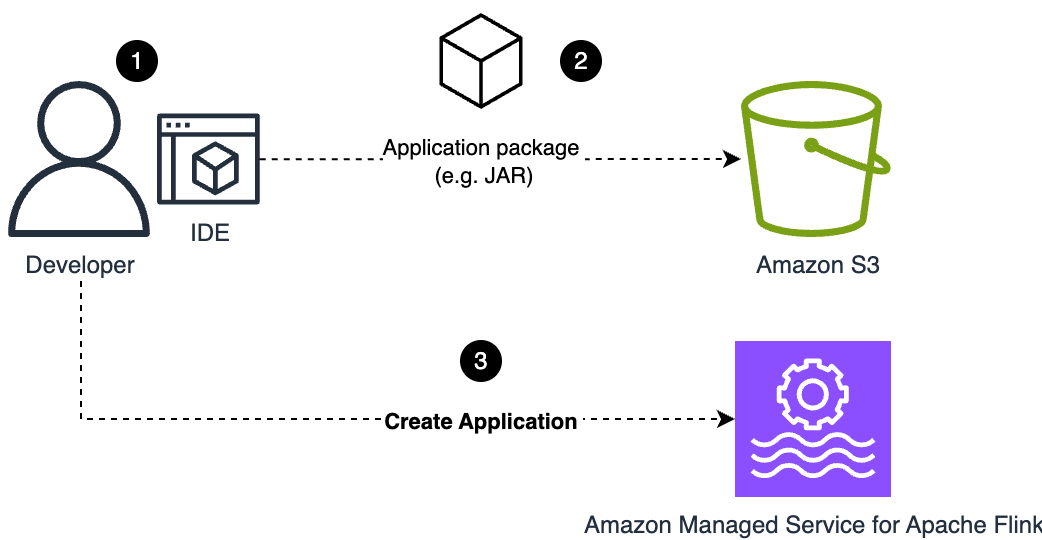

The first step is creating a new Managed Service for Apache Flink application, including defining the application configuration. You can do this in a single step using the CreateApplication action, or by creating the basic application configuration and then updating the configuration before starting it using UpdateApplication. The latter approach is what you do when you create an application from the console.

In this phase, the developer packages the application they have implemented in a JAR file (for Java) or ZIP file (for Python) and uploads it to an S3 bucket the user has previously created. The bucket name and the path to the application code package are part of the configuration you define.

When UpdateApplication or CreateApplication is invoked, Managed Service for Apache Flink takes a copy of the application code package (JAR or ZIP file) referenced by the configuration. The configuration is rejected if the file it points to doesn’t exist.

The following diagram illustrates this workflow.

Simply updating the application code package in the S3 bucket doesn’t trigger an update. You must run UpdateApplication to make the new file visible to the service and trigger the update, even when you overwrite the code package with the same name.
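To make the shape of the configuration more concrete, the following is a minimal sketch of how a CreateApplication request could be assembled in Python. The function name, the default runtime label (`FLINK-1_20`), and the example ARNs are assumptions for illustration. The field names follow the kinesisanalyticsv2 API as we understand it and should be checked against the current API reference.

```python
def build_create_application_request(app_name, role_arn, bucket_arn, file_key,
                                     parallelism=1, runtime="FLINK-1_20"):
    """Return keyword arguments for the CreateApplication action.

    The helper and its defaults are hypothetical. The runtime label is an
    assumption and must match a Flink runtime available in your account.
    """
    return {
        "ApplicationName": app_name,
        "RuntimeEnvironment": runtime,
        "ServiceExecutionRole": role_arn,
        "ApplicationConfiguration": {
            # Points to the code package (JAR or ZIP file) in the S3 bucket.
            "ApplicationCodeConfiguration": {
                "CodeContent": {
                    "S3ContentLocation": {
                        "BucketARN": bucket_arn,
                        "FileKey": file_key,
                    }
                },
                "CodeContentType": "ZIPFILE",
            },
            # Enable automatic use of snapshots, as recommended in this post.
            "ApplicationSnapshotConfiguration": {"SnapshotsEnabled": True},
            "FlinkApplicationConfiguration": {
                "ParallelismConfiguration": {
                    "ConfigurationType": "CUSTOM",
                    "Parallelism": parallelism,
                }
            },
        },
    }
```

With boto3 installed and credentials configured, the request could be passed as `boto3.client("kinesisanalyticsv2").create_application(**request)`. Building the request as a plain dictionary keeps it easy to inspect in a CI/CD pipeline before any call is made.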

Start the application

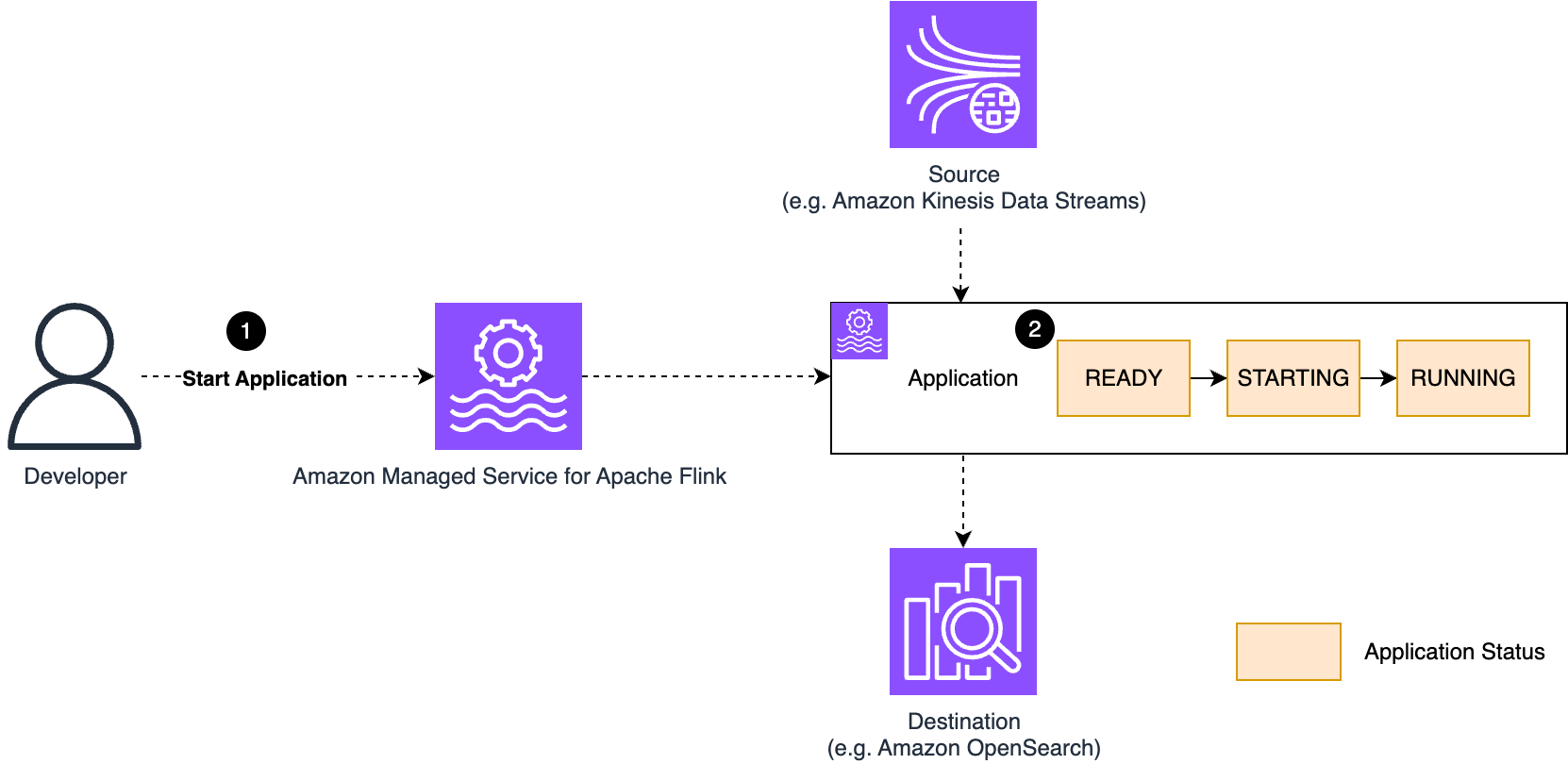

Managed Service for Apache Flink provisions resources only while the application is actually running, and you only pay for the resources of running applications. You explicitly control when to start the application by issuing a StartApplication action.

Managed Service for Apache Flink prioritizes high availability and runs your application in a dedicated Flink cluster. When you start the application, Managed Service for Apache Flink deploys a dedicated cluster, then deploys and runs the Flink job based on the configuration you defined.

When you start the application, the status of the application moves from READY to STARTING, and then to RUNNING.

The following diagram illustrates this workflow.

Managed Service for Apache Flink supports both streaming mode, the default for Apache Flink, and batch mode:

- Streaming mode – In streaming mode, after an application is successfully started and goes into RUNNING status, it keeps running until you stop it explicitly. From this point on, the behavior on failure is automatically restarting the job from the latest checkpoint, so there is no data loss. We discuss more details about this failure scenario later in this post.

- Batch mode – A Flink application running in batch mode behaves differently. After you start it, it goes into RUNNING status, and the job continues running until it completes the processing. At that point the job will gracefully stop, and the Managed Service for Apache Flink application goes back to READY status.

This post focuses on streaming applications only.
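Automation that starts an application usually needs to wait for the status transitions described in this section (READY, STARTING, RUNNING). The following sketch polls DescribeApplication until a target status is reached. The helper name, the polling interval, and the timeout are assumptions. The `client` argument is expected to behave like `boto3.client("kinesisanalyticsv2")`, and the `sleep` parameter is injectable only so the function can be exercised without waiting.

```python
import time


def wait_for_status(client, app_name, target="RUNNING", poll_seconds=10.0,
                    timeout_seconds=900.0, sleep=time.sleep):
    """Poll DescribeApplication until the application reaches `target`.

    Returns the list of distinct statuses observed, in order.
    Raises TimeoutError if the target is not reached in time.
    """
    seen = []
    waited = 0.0
    while True:
        detail = client.describe_application(ApplicationName=app_name)["ApplicationDetail"]
        status = detail["ApplicationStatus"]
        # Record each status once, when it changes.
        if not seen or seen[-1] != status:
            seen.append(status)
        if status == target:
            return seen
        if waited >= timeout_seconds:
            raise TimeoutError(f"{app_name} still {status} after {waited:.0f}s")
        sleep(poll_seconds)
        waited += poll_seconds
```

Reaching RUNNING status only tells you that the job has been deployed. As described later in this post, the subtasks still have to initialize before data is processed, so a status check like this one is not a complete health check.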

Update the application

In Managed Service for Apache Flink, you handle the following changes by updating the application configuration, using the console or the UpdateApplication API action:

- Application code changes, replacing the package (JAR or ZIP file) with one containing a new version

- Runtime properties changes

- Scaling, which means changing the parallelism and the resources (KPU)

- Operational parameter changes, such as checkpoint, logging level, and monitoring setup

- Networking configuration changes

When you modify the application configuration, Managed Service for Apache Flink creates a new configuration version, identified by a version ID number that is automatically incremented at every change.

Update the code package

We mentioned how the service takes a copy of the code package (JAR or ZIP file) when you update the application configuration. The copy is associated with the new application configuration version that has been created. The service uses its own copy of the code package to start the application. You can safely replace or delete the code package after you have updated the configuration. The new package is not taken into account until you update the application configuration again.
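As an illustration, the following sketch builds an UpdateApplication request that points the configuration to a new code package. The helper name and example values are hypothetical. The field names follow the kinesisanalyticsv2 API as we understand it. `CurrentApplicationVersionId` is the version ID described earlier, which can be read from the `ApplicationVersionId` field returned by DescribeApplication.

```python
def build_code_update_request(app_name, current_version_id, file_key, bucket_arn=None):
    """Return keyword arguments for UpdateApplication that replace the code package.

    Hypothetical helper: only the S3 object key is required. The bucket is
    updated only when a new bucket ARN is passed.
    """
    location = {"FileKeyUpdate": file_key}
    if bucket_arn is not None:
        location["BucketARNUpdate"] = bucket_arn
    return {
        "ApplicationName": app_name,
        # The update applies to the current configuration version.
        "CurrentApplicationVersionId": current_version_id,
        "ApplicationConfigurationUpdate": {
            "ApplicationCodeConfigurationUpdate": {
                "CodeContentUpdate": {"S3ContentLocationUpdate": location}
            }
        },
    }
```

Because the service copies the package when the update is accepted, a request like this is needed even when the new file overwrites the previous one under the same key.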

Update a READY (not running) application

If you update an application in READY status, nothing special happens beyond creating the new configuration version that will be used the next time you start the application. However, in production, you will normally update the configuration of an application in RUNNING status to apply a change. Managed Service for Apache Flink automatically handles the operations required to update the application with no data loss.

Update a RUNNING application

To understand what happens when you update a running application, you need to remember that Flink is designed for strong consistency and exactly-once state consistency. To maintain these features when a change is applied, Flink must stop the data processing, take a copy of the application state, restart the job with the changes, and restore the state, before processing can restart.

This is standard Flink behavior, and applies to any change, whether it’s a code change, a runtime configuration change, or a new parallelism to scale up or down. Managed Service for Apache Flink automatically orchestrates this process for you. If snapshots are enabled, the service will take a snapshot before stopping the processing and restart from the snapshot when the change is deployed. This way, the change can be deployed with zero data loss.

If snapshots are disabled, the service restarts the job with the change, but the state will be empty, like the first time you started the application. This might cause data loss. You normally don’t want this to happen, particularly in production applications.

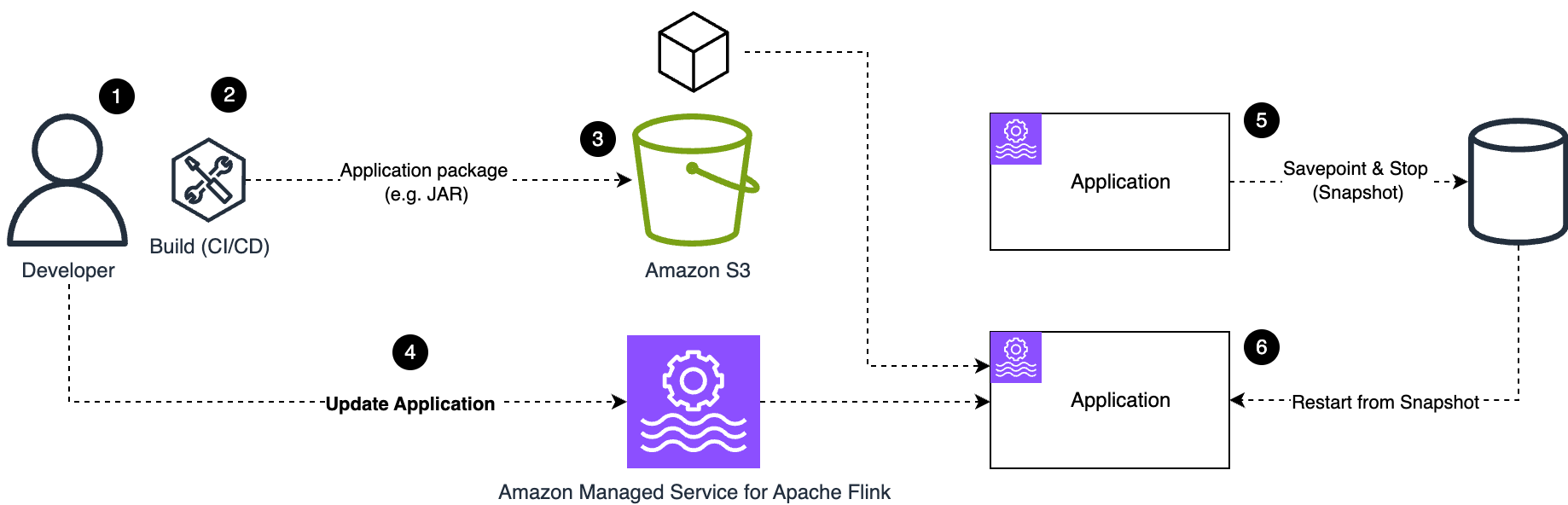

Let’s explore a practical example, illustrated by the following diagram. For instance, if you want to deploy a code change, the following steps typically happen (in this example, we assume that snapshots are enabled, which they should be in a production application):

- Make changes to the application code.

- The build process creates the application package (JAR or ZIP file), either manually or using CI/CD automation.

- Upload the new application package to an S3 bucket.

- Update the application configuration pointing to the new application package.

- As soon as you successfully update the configuration, Managed Service for Apache Flink starts the operation for updating the application. The application status changes to UPDATING. The Flink job is stopped, taking a snapshot of the application state.

- After the changes have been applied, the application is restarted using the new configuration, which in this case includes the new application code, and the job restores the state from the snapshot. When the process is complete, the application status goes back to RUNNING.

The process is similar for changes to the application configuration. For example, you can change the parallelism to scale the application by updating the application configuration, causing the application to be redeployed with the new parallelism and the amount of resources (CPU, memory, local storage) based on the new number of KPU.
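A scaling change follows the same pattern as a code change. The sketch below builds an UpdateApplication request that sets a new parallelism. The helper name is hypothetical, and the `ParallelismPerKPU` setting is not discussed in this post: it is included here as an assumption about how the API expresses the ratio between parallelism and KPU.

```python
def build_scaling_update_request(app_name, current_version_id, parallelism,
                                 parallelism_per_kpu=1):
    """Return keyword arguments for UpdateApplication that change the parallelism."""
    if parallelism < 1 or parallelism_per_kpu < 1:
        raise ValueError("parallelism and parallelism_per_kpu must be >= 1")
    return {
        "ApplicationName": app_name,
        "CurrentApplicationVersionId": current_version_id,
        "ApplicationConfigurationUpdate": {
            "FlinkApplicationConfigurationUpdate": {
                "ParallelismConfigurationUpdate": {
                    # CUSTOM means the values below override the service defaults.
                    "ConfigurationTypeUpdate": "CUSTOM",
                    "ParallelismUpdate": parallelism,
                    "ParallelismPerKPUUpdate": parallelism_per_kpu,
                }
            }
        },
    }
```

When this request is applied to a RUNNING application, the status is expected to move to UPDATING and back to RUNNING, as in the code change example above.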

Update the application’s IAM role

The application configuration contains a reference to an AWS Identity and Access Management (IAM) role. In the unlikely case you want to use a different role, you can update the application configuration using UpdateApplication. The process is the same as described earlier.

More commonly, however, you want to modify the existing IAM role to add or remove permissions. This operation doesn’t use the Managed Service for Apache Flink application lifecycle and can be done at any time. No application stop and restart is required. IAM changes take effect immediately, potentially inducing a failure if, for example, you inadvertently remove a required permission. In this case, the Flink job’s response might vary, depending on the affected component.

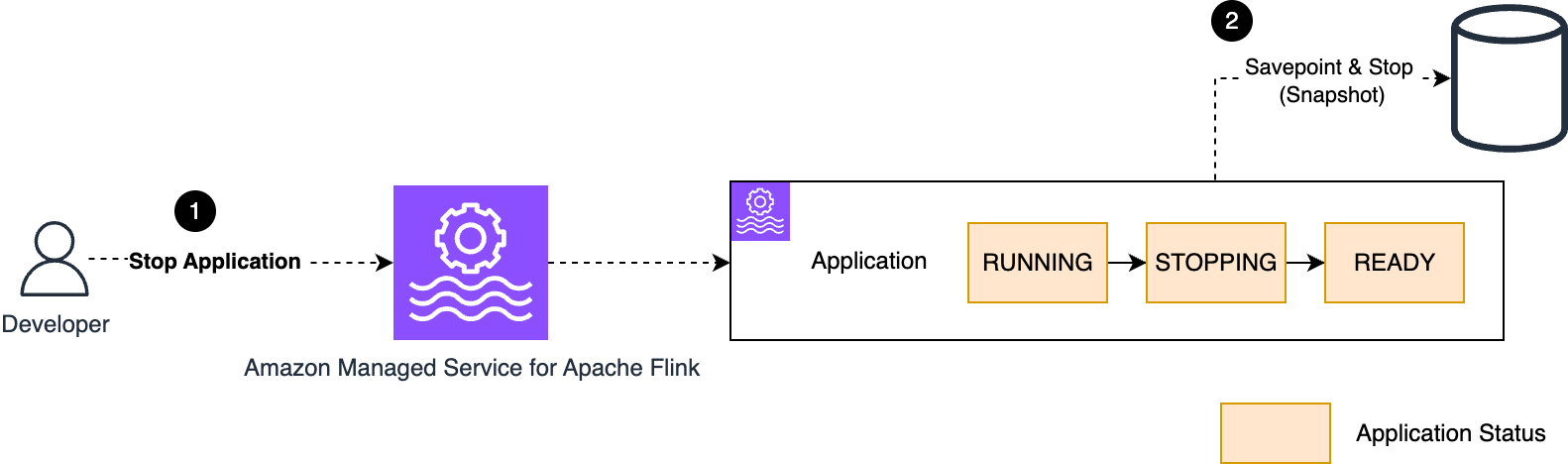

Stop the application

You can stop a running Managed Service for Apache Flink application using the StopApplication action or the console. The service gracefully stops the application. The status changes from RUNNING to STOPPING, and finally to READY.

When snapshots are enabled, the service will take a snapshot of the application state when it is stopped, as shown in the following diagram.

After you stop the application, any resource previously provisioned to run your application is reclaimed. You incur no cost while the application is not running (READY).

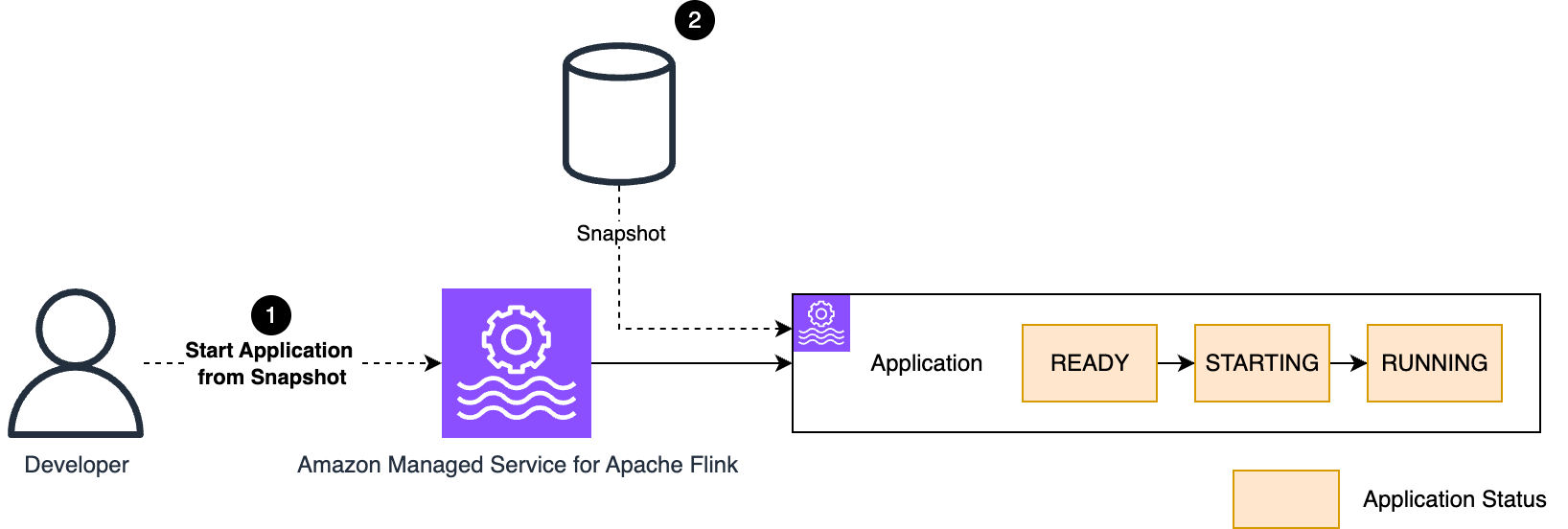

Start the application from a snapshot

Sometimes, you might want to stop a production application and restart it later, restarting the processing from the point it was stopped. Managed Service for Apache Flink supports starting the application from a snapshot. The snapshot saves not only the application state, but also the position in the source (the offsets in a Kafka topic, for example) where the application stopped consuming.

When snapshots are enabled, Managed Service for Apache Flink automatically takes a snapshot when you stop the application. This snapshot can be used when you restart the application.

The StartApplication API command has three restore options:

- RESTORE_FROM_LATEST_SNAPSHOT: Restore from the latest snapshot.

- RESTORE_FROM_CUSTOM_SNAPSHOT: Restore from a custom snapshot (you must specify which one).

- SKIP_RESTORE_FROM_SNAPSHOT: Skip restoring from the snapshot. The application will start with no state, as it did the very first time you ran it.

When you start the application for the very first time, no snapshot is available yet. Regardless of the restore option you choose, the application will start with no snapshot.

The process of starting the application from a snapshot is visualized in the following diagram.

In production, you normally want to restore from the latest snapshot (RESTORE_FROM_LATEST_SNAPSHOT). This will automatically use the snapshot the service created when you last stopped the application.

Snapshots are based on Flink’s savepoint mechanism and maintain the exactly-once consistency of the internal state. Also, the risk of reprocessing duplicate records from the source is minimized because the snapshot is taken synchronously while the Flink job is stopped.
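The three restore options map to the restore configuration of a StartApplication request. The following is a minimal sketch under the assumption that the request uses the `RunConfiguration` and `ApplicationRestoreConfiguration` structures of the kinesisanalyticsv2 API. The helper name and its validation rules are illustrative.

```python
RESTORE_TYPES = (
    "RESTORE_FROM_LATEST_SNAPSHOT",
    "RESTORE_FROM_CUSTOM_SNAPSHOT",
    "SKIP_RESTORE_FROM_SNAPSHOT",
)


def build_start_request(app_name, restore_type="RESTORE_FROM_LATEST_SNAPSHOT",
                        snapshot_name=None):
    """Return keyword arguments for the StartApplication action."""
    if restore_type not in RESTORE_TYPES:
        raise ValueError(f"unknown restore type: {restore_type}")
    restore = {"ApplicationRestoreType": restore_type}
    if restore_type == "RESTORE_FROM_CUSTOM_SNAPSHOT":
        # A custom restore must name the snapshot to start from.
        if not snapshot_name:
            raise ValueError("snapshot_name is required for a custom snapshot")
        restore["SnapshotName"] = snapshot_name
    elif snapshot_name:
        raise ValueError("snapshot_name is only valid with RESTORE_FROM_CUSTOM_SNAPSHOT")
    return {
        "ApplicationName": app_name,
        "RunConfiguration": {"ApplicationRestoreConfiguration": restore},
    }
```

Defaulting to RESTORE_FROM_LATEST_SNAPSHOT reflects the production recommendation above. Rejecting a snapshot name with the other two options is a design choice of this sketch, meant to catch automation mistakes early.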

Start the application from an older snapshot

In Managed Service for Apache Flink, you can schedule taking periodic snapshots of a running production application, for example using the Snapshot Manager. Taking a snapshot from a running application doesn’t stop the processing and only introduces a minimal overhead (comparable to checkpointing). With the second option, RESTORE_FROM_CUSTOM_SNAPSHOT, you can restart the application back in time, using a snapshot older than the one taken on the last StopApplication.

Because the source positions (for example, the offsets in a Kafka topic) are also restored with the snapshot, the application will revert to the point it was processing when the snapshot was taken. This will also restore the state at that exact point, providing consistency.

When you start an application from an older snapshot, there are two important considerations:

- Only restore snapshots taken within the source system retention period – If you restore a snapshot older than the source retention, data loss might occur, and the application behavior is unpredictable.

- Restarting from an older snapshot will likely generate duplicate output – This is often not a problem when the end-to-end system is designed to be idempotent. However, this might cause problems if you are using a Flink transactional connector, such as File System sink or Kafka sink with exactly-once guarantees enabled. Because these sinks are designed to guarantee no duplicates (preventing them at any cost), they might prevent your application from restarting from an older snapshot. There are workarounds to this operational problem, but they depend on the specific use case and are beyond the scope of this post.
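The first consideration can be enforced in automation before a custom restore is attempted. The sketch below filters snapshot summaries, shaped like the entries we expect ListApplicationSnapshots to return (`SnapshotName`, `SnapshotStatus`, `SnapshotCreationTimestamp`), keeping only completed snapshots younger than the source retention period. The helper name is hypothetical, and the retention value is something you must supply from your own source configuration.

```python
from datetime import datetime, timedelta, timezone


def restorable_snapshots(snapshot_summaries, source_retention, now=None):
    """Return READY snapshots taken within the source retention, newest first.

    `source_retention` is a timedelta, for example the retention of the
    Kafka topic the application reads from. Timestamps are assumed to be
    timezone-aware datetimes.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - source_retention
    usable = [
        s for s in snapshot_summaries
        if s.get("SnapshotStatus") == "READY"
        and s["SnapshotCreationTimestamp"] >= cutoff
    ]
    return sorted(usable, key=lambda s: s["SnapshotCreationTimestamp"], reverse=True)
```

A check like this only covers the retention constraint. It does not address duplicate output or the behavior of transactional sinks described in the second consideration.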

Understanding what happens when you start your application

We have learned the fundamental operations in the lifecycle of an application. In Managed Service for Apache Flink, these operations are controlled by a few API actions, such as StartApplication, UpdateApplication, and StopApplication. The service controls every operation for you. You don’t have to provision or manage Flink clusters. However, a better understanding of what happens during the lifecycle will give you sufficient Mechanical Sympathy to recognize potential failure modes and implement more robust automation.

Let’s see in detail what happens when you issue a StartApplication command on an application in READY (not running) status. When you issue an UpdateApplication command on a RUNNING application, the application is first stopped with a snapshot, and then restarted with the new configuration, following a process identical to the one described in the rest of this section.

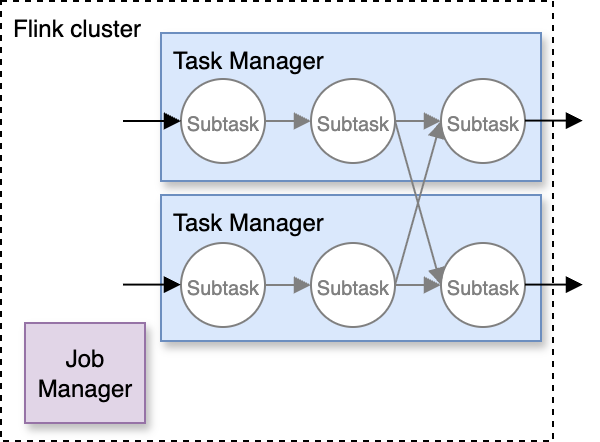

Composition of a Flink cluster

To understand what happens when you start the application, we need to introduce a couple of additional concepts. A Flink cluster is composed of two types of nodes:

- A single Job Manager, which acts as a coordinator

- One or more Task Managers, which do the actual data processing

In Managed Service for Apache Flink, you can see the cluster nodes in the Flink Dashboard, which you can access from the console.

Flink decomposes the data processing defined by your application code into multiple subtasks, which are distributed across the Task Manager nodes, as illustrated in the following diagram.

Remember, in Managed Service for Apache Flink, you don’t need to worry about provisioning and configuring the cluster. The service provides a dedicated cluster for your application. The total amount of vCPU, memory, and local storage of Task Managers matches the number of KPU you configured.
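As a rough worked example of how the number of KPU relates to resources, the following sketch derives the KPU count from the parallelism and estimates the total Task Manager resources. It rests on assumptions not stated in this post: that the KPU count is the parallelism divided by the parallelism per KPU, rounded up, and that one KPU corresponds to 1 vCPU, 4 GB of memory, and 50 GB of local storage. Verify these figures against the current service documentation before relying on them.

```python
import math


def allocated_kpus(parallelism, parallelism_per_kpu=1):
    """KPU count for a given parallelism (assumed formula: ceiling division)."""
    return math.ceil(parallelism / parallelism_per_kpu)


def task_manager_resources(kpus):
    """Estimated total resources, assuming 1 vCPU, 4 GB memory, 50 GB storage per KPU."""
    return {"vcpu": kpus, "memory_gb": 4 * kpus, "storage_gb": 50 * kpus}
```

For example, under these assumptions a parallelism of 10 with a parallelism per KPU of 4 gives `allocated_kpus(10, 4) == 3`, which corresponds to 3 vCPU, 12 GB of memory, and 150 GB of local storage.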

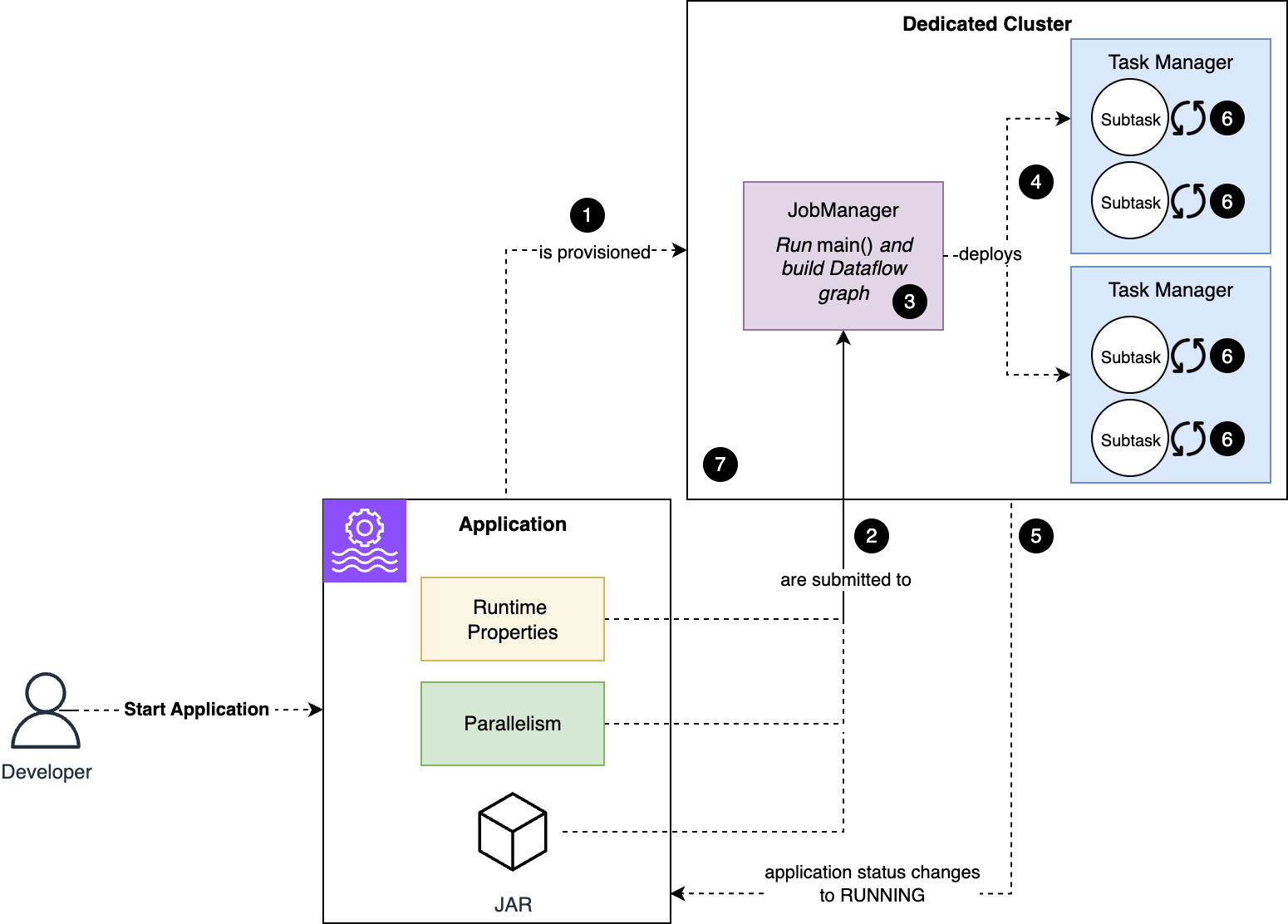

Starting your Managed Service for Apache Flink application

Now that we’ve discussed how a Flink cluster is composed, let’s explore what happens when you issue a StartApplication command, or when the application restarts after a change has been deployed with an UpdateApplication command.

The following diagram illustrates the process. Everything is performed automatically for you.

The workflow consists of the following steps:

- A dedicated cluster, with the amount of resources you requested, based on the number of KPU, is provisioned for your application.

- The application code, runtime properties, and other configurations such as the application parallelism are passed to the Job Manager node, the coordinator of the cluster.

- The Java or Python code in the main() method of your application is executed. This generates the logical graph of operators of your application (called dataflow). Based on the dataflow you defined and the application parallelism, Flink generates the subtasks, the actual nodes Flink will execute to process your data.

- Flink then distributes the job’s subtasks across Task Managers, the actual worker nodes of the cluster.

- When the previous step succeeds, the Flink job status and the Managed Service for Apache Flink application status change to RUNNING. However, the job is still not completely running and processing data. All subtasks must be initialized.

- Each subtask independently restores its state, if starting from a snapshot, and initializes runtime resources. For example, Flink’s Kafka source connector restores the partition assignments and offsets from the savepoint (snapshot), establishes a connection to the Kafka cluster, and subscribes to the Kafka topic. From this step onward, a Flink job will stop and restart from its last checkpoint when encountering any unhandled error. If the problem causing the error is not transient, the job keeps stopping and restarting from the same checkpoint in a loop.

- When all subtasks are successfully initialized and change to RUNNING status, the Flink job starts processing data and is now properly running.

Conclusion

In this post, we discussed how the lifecycle of a Managed Service for Apache Flink application is controlled by simple AWS API commands, or the equivalent using the AWS SDK or AWS CLI. If you are using high-level automation tools such as AWS CloudFormation or Terraform, the low-level actions are also abstracted away for you. The service handles the complexity of operating the Flink cluster and orchestrating the Flink job lifecycle.

However, with a better understanding of how Flink works and what the service does for you, you can implement more robust automation and troubleshoot failures.

In Part 2, we continue examining failure scenarios that can happen during normal operations or when you deploy a change or scale the application, and how to monitor operations to detect and recover when something goes wrong.
