Human-in-the-loop is a way to build machine learning models with people involved at the right moments. In human-in-the-loop machine learning, experts label data, review edge cases, and give feedback on outputs. Their input shapes goals, sets quality bars, and teaches models how to handle gray areas. The result is human-AI collaboration that keeps systems useful and safe for real use. Many teams treat HITL as a last-minute manual fix. That view misses the point.

HITL works best as planned oversight inside the workflow. People guide data collection, annotation rules, model training checks, evaluation, deployment gates, and live monitoring. Automation handles the routine. Humans step in where context, ethics, and judgment matter. This balance turns human feedback in ML training into steady improvements, not one-off patches.

Here is what this article covers next.

We define HITL in clear terms and map where it fits in the ML pipeline. We outline how to design a practical HITL system and why it lifts AI training data quality. We pair HITL with intelligent annotation, show how to scale without losing accuracy, and flag common pitfalls. We close with what HITL means as AI systems grow more autonomous.

What is Human-in-the-Loop (HITL)?

Human-in-the-Loop (HITL) is a model development approach in which human expertise guides, validates, and improves AI/ML systems. Instead of leaving data processing, training, and decision-making solely to algorithms, HITL brings people in to improve accuracy, reliability, and safety.

In practice, HITL can involve:

- Data labeling and annotation: Humans provide ground truth data that trains AI models.

- Reviewing edge cases: Experts validate or correct outputs where the model is uncertain.

- Continuous feedback: Human corrections refine the system over time, improving adaptability.

This collaboration helps AI systems remain transparent, fair, and aligned with real-world needs, especially in complex or sensitive domains like healthcare, finance, or real estate. In essence, HITL combines the efficiency of automation with human judgment to build smarter, safer, and more trustworthy AI solutions.

What is Human-in-the-Loop Machine Learning?

Human-in-the-loop machine learning is an ML workflow that keeps people involved at key steps. It is more than manual fixes. Think planned human oversight in data work, model checks, and live operations.

Automation has grown fast. We moved from rule-based scripts to statistical methods, then to deep learning and today's generative models. Systems now learn patterns at scale. Even so, models still miss rare cases and shift with new data. Labels age. Context changes by region, season, or policy. That is why edge cases, data drift, and domain quirks keep showing up.

The cost of errors is real. Facial recognition can show bias on skin tone and gender. Vision models in autonomous vehicles can misclassify the side of a truck as open space. In healthcare, a triage score can skew toward a subgroup if training data lacked proper coverage. These errors erode trust.

HITL helps close that gap.

A simple human-in-the-loop architecture adds people to model training and review so decisions stay grounded in context.

- Experts write labeling rules, pull hard examples, and settle disputes.

- They set thresholds, review risky outputs, and document rare cases so the model learns.

- After launch, reviewers audit alerts, fix labels, and feed those changes into the next training cycle.

The model takes routine work. People handle judgment, risk, and ethics. This steady loop improves accuracy, reduces bias, and keeps systems aligned with real use.

Why HITL is essential for high-quality training data

Human-in-the-Loop (HITL) is essential for high-quality training data and effective data preparation for machine learning because AI models are only as good as the data they learn from. Without human expertise, training datasets risk being inaccurate, incomplete, or biased. Automated labeling hits a ceiling when data is noisy or ambiguous. Accuracy plateaus and errors spread into training and evaluation.

Rechecks of popular benchmarks found label error rates of around 3 to 6 percent, enough to flip model rankings, and this is where trained annotators come in. HITL ensures:

- Domain expertise. Radiologists for medical imaging. Linguists for NLP. They set rules, spot edge cases, and fix subtle misreads that scripts miss.

- Clear escalation. Tiered review with adjudication prevents single-pass errors from becoming ground truth.

- Targeted effort. Active learning routes only uncertain items to people, which raises signal without bloating cost.
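The "targeted effort" point can be sketched as uncertainty sampling, one common form of active learning. This is a minimal illustration, not a prescribed method: it assumes each item already has a predicted class distribution, and the function names, item ids, and review budget are hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of one predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_review(predictions, budget):
    """Return the ids of the `budget` most uncertain items, most uncertain first.

    `predictions` maps an item id to its list of class probabilities.
    """
    ranked = sorted(
        predictions,
        key=lambda item_id: entropy(predictions[item_id]),
        reverse=True,
    )
    return ranked[:budget]


# Hypothetical model outputs for four images and three classes.
predictions = {
    "img_001": [0.98, 0.01, 0.01],  # confident
    "img_002": [0.40, 0.35, 0.25],  # very uncertain
    "img_003": [0.55, 0.44, 0.01],  # torn between two classes
    "img_004": [0.90, 0.05, 0.05],  # fairly confident
}

review_queue = select_for_review(predictions, budget=2)
print(review_queue)
```

Entropy is only one possible uncertainty score. Margin between the top two classes or disagreement across an ensemble would slot into the same ranking step.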

Quality box: GIGO (garbage in, garbage out) in ML

- Better labels lead to better models.

- Human feedback in ML training breaks error propagation and keeps datasets aligned with real-world meaning.

Here is evidence that it works:

- Re-labeled ImageNet. When researchers replaced single labels with human-verified sets, reported gains shrank and some model rankings changed. Cleaner labels produced a more faithful test of real performance.

- Benchmark audits. Systematic reviews show that small fractions of mislabeled examples can distort both evaluation and deployment choices, reinforcing the need for a human in the loop on high-impact data.

Human-in-the-loop machine learning offers planned oversight that upgrades training data quality, reduces bias, and stabilizes model behavior where it counts.

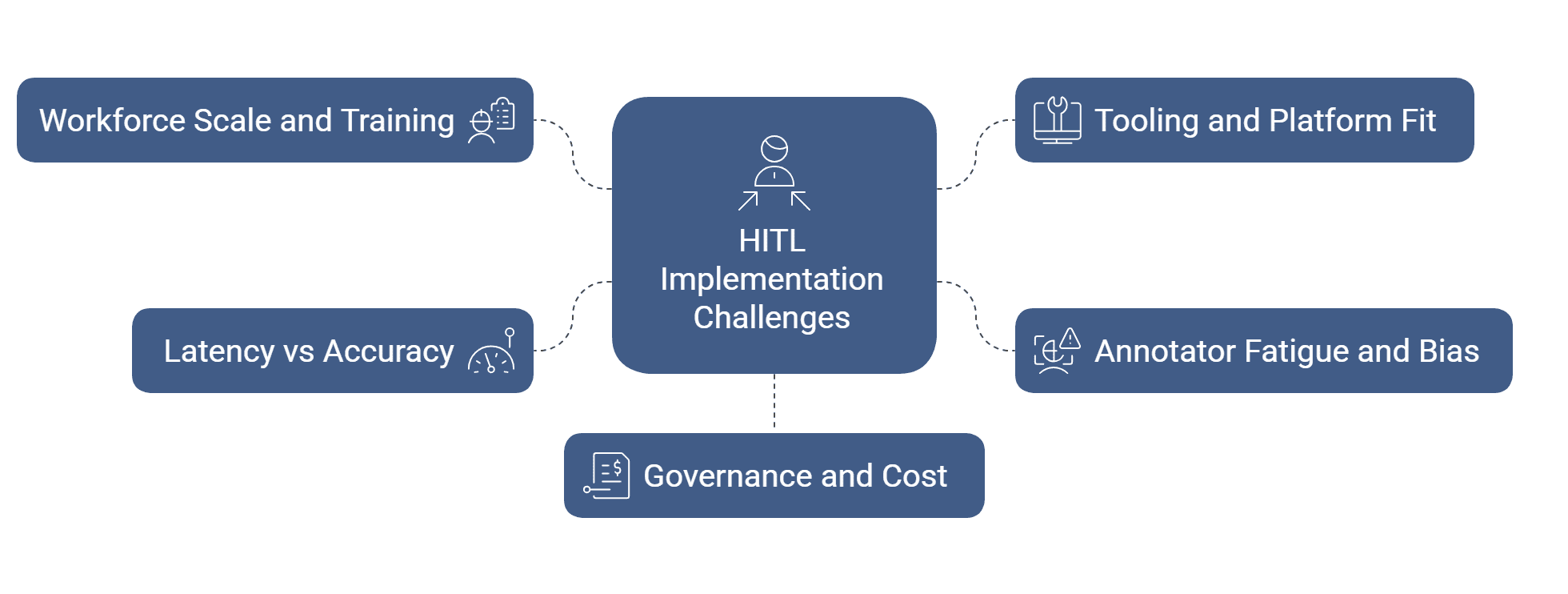

Challenges and considerations in implementing HITL

Implementing Human-in-the-Loop (HITL) comes with challenges such as scaling human involvement, ensuring consistent data labeling, managing costs, and integrating feedback efficiently. Organizations must balance automation with human oversight, address potential biases, and maintain data privacy, all while designing workflows that keep the ML pipeline both accurate and efficient.

- Workforce scale and training: You need enough trained annotators at the right time. Create clear guides, short training videos, and quick quizzes. Track agreement rates and give fast feedback so quality improves week by week.

- Tooling and platform fit: Check that your labeling tool speaks your stack. Support for versioned schemas, audit trails, RBAC, and APIs keeps data moving. If you build custom tools, budget for ops, uptime, and user support.

- Annotator fatigue and bias: Long queues and repetitive items lower accuracy. Rotate tasks, cap session length, and mix easy with hard examples. Use blind review and conflict resolution to reduce personal bias and groupthink.

- Latency vs accuracy in real time: Some use cases need instant results. Others can wait for review. Triage by risk. Route only high-risk or low-confidence items to humans. Cache decisions and reuse them to cut delay.

- Governance and cost: Human-in-the-loop machine learning needs clear ownership. Define acceptance criteria, escalation paths, and budget alerts. Measure label quality, throughput, and unit cost so leaders can trade speed for accuracy with eyes open.

How to design an effective human-in-the-loop system

Start with decisions, not tools.

List the points where judgment shapes outcomes. Write the rules for those moments, agree on quality targets, and fit human-in-the-loop machine learning into that path. Keep the loop simple to run and easy to measure.

Use the right types of data labeling

Use expert-only labeling for risky or rare classes. Add model-assist where the system pre-fills labels and people confirm or edit. For hard items, collect two or three opinions and let a senior reviewer decide. Bring in light programmatic rules for obvious cases, but keep people in charge of edge cases.
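The "two or three opinions, then a senior reviewer" rule can be sketched as a small consensus check. This is an illustrative assumption about how the rule might be encoded: `resolve_label` and its `min_agreement` parameter are hypothetical names, and the strict-majority requirement is one possible policy.

```python
from collections import Counter


def resolve_label(opinions, min_agreement=2):
    """Return (label, needs_senior_review) for one hard item.

    A label is accepted only when at least `min_agreement` annotators chose it
    and it holds a strict majority. Anything else goes to a senior reviewer.
    """
    if not opinions:
        return None, True
    label, votes = Counter(opinions).most_common(1)[0]
    if votes >= min_agreement and votes > len(opinions) / 2:
        return label, False
    return None, True


# Two of three annotators agree, so the label is accepted.
print(resolve_label(["tumor", "tumor", "benign"]))
# A split between two annotators is escalated.
print(resolve_label(["tumor", "benign"]))
```

Returning a flag instead of guessing keeps disputed items out of the training set until someone senior has ruled on them.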

Setting up HITL in your company

- Pick one high-value use case and run a short pilot.

- Write guidelines with clear examples and counter-examples.

- Set acceptance checks, escalation steps, and a service level for turnaround.

- Wire active learning so low-confidence items reach reviewers first.

- Track agreement, latency, unit cost, and error themes.

- When the loop holds steady, expand to the next dataset using the same HITL architecture.
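For the "track agreement" step, one widely used measure for two annotators is Cohen's kappa, which corrects raw agreement for agreement expected by chance. The sketch below is a plain-Python illustration under that assumption. The article does not name a specific metric, and the sample labels are made up.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both annotators must label the same non-empty set of items.")
    n = len(labels_a)
    # Fraction of items where the two annotators gave the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected if each annotator labeled at random with their own class rates.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # Both annotators used a single identical class throughout.
    return (observed - expected) / (1 - expected)


annotator_1 = ["spam", "spam", "ham", "ham", "spam", "ham"]
annotator_2 = ["spam", "ham", "ham", "ham", "spam", "ham"]

kappa = cohens_kappa(annotator_1, annotator_2)
print(round(kappa, 3))
```

A value near 1 indicates strong agreement and a value near 0 indicates agreement no better than chance. For more than two annotators, a measure such as Fleiss' kappa or Krippendorff's alpha would be the usual replacement.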

Is a human-in-the-loop system scalable?

Yes, if you route by confidence and risk. Here is how to make the system scalable:

- Auto-accept clear cases.

- Send medium cases to trained reviewers.

- Escalate only the few that are high impact or unclear.

- Use label templates, ontology checks, and periodic audits to keep consistency as volume grows.
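The three routing tiers above can be sketched as a single function. This is a minimal illustration: the `Prediction` type, the `high_impact` flag, and the 0.95 and 0.70 thresholds are assumptions made for the example and would need tuning against real review data.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    item_id: str
    confidence: float          # model confidence in its top label, 0.0 to 1.0
    high_impact: bool = False  # set by a business rule, e.g. a regulated class


def route(pred, accept_at=0.95, review_at=0.70):
    """Return the queue for one prediction: auto_accept, review, or escalate."""
    # High-impact or unclear items always go to the most senior queue.
    if pred.high_impact or pred.confidence < review_at:
        return "escalate"
    if pred.confidence >= accept_at:
        return "auto_accept"
    return "review"


batch = [
    Prediction("doc_1", 0.99),
    Prediction("doc_2", 0.82),
    Prediction("doc_3", 0.41),
    Prediction("doc_4", 0.99, high_impact=True),
]
queues = {p.item_id: route(p) for p in batch}
print(queues)
```

Checking the risk flag before the confidence score means a confident prediction on a high-impact item still reaches a person.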

Better uncertainty scores will target reviews more precisely. Model-assist will speed up video and 3D labeling. Synthetic data will help cover rare events, but people will still screen it. RLHF will extend beyond text to policy-heavy outputs in other domains.

For ethical and fairness checks, start by writing bias-aware rules. Sample by subgroup and review those slices on a schedule. Use diverse annotator pools and occasional blind reviews. Keep audit trails, privacy controls, and consent records tight.
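"Sample by subgroup" can be sketched as a stratified draw that gives every slice the same review quota, so small groups are not drowned out by large ones. The record layout, the `region` key, and the quota below are hypothetical and only serve the illustration.

```python
import random
from collections import defaultdict


def sample_by_subgroup(items, group_key, per_group, seed=0):
    """Draw up to `per_group` items from each subgroup for scheduled human review."""
    rng = random.Random(seed)  # fixed seed so an audit can reproduce the draw
    groups = defaultdict(list)
    for item in items:
        groups[item[group_key]].append(item)
    return {
        group: rng.sample(members, min(per_group, len(members)))
        for group, members in groups.items()
    }


# Hypothetical labeled records with an imbalanced subgroup attribute.
records = (
    [{"id": i, "region": "north"} for i in range(50)]
    + [{"id": i, "region": "south"} for i in range(50, 55)]
    + [{"id": 55, "region": "east"}]
)

audit_sample = sample_by_subgroup(records, group_key="region", per_group=3)
for region, sample in audit_sample.items():
    print(region, [r["id"] for r in sample])
```

Subgroups with fewer items than the quota are reviewed in full, which is usually what a fairness audit wants for rare slices.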

These steps keep human-AI collaboration safe, traceable, and fit for real use.

Looking ahead: HITL in a future of autonomous AI

Models are getting better at self-checks and self-corrections. They will still need guardrails. High-stakes calls, long-tail patterns, and shifting policies call for human judgment.

Human input will change shape. More prompt design and policy setting up front. More feedback curation and dataset governance. Ethical review as a scheduled practice, not an afterthought. In reinforcement learning with human feedback, reviewers will focus on disputed cases and safety boundaries while tools handle routine ratings.

HITL is not a fallback. It is a strategic partner in ML operations: it sets standards, tunes thresholds, and audits results so systems stay aligned with real use.

Expect deeper integrations with labeling and MLOps tools, richer analytics for slice-level quality, and a workforce specialized by domain and task type. The aim is simple: keep automation fast, keep oversight sharp, and keep models useful as the world changes.

Conclusion

Human in the loop is the foundation of trustworthy AI because it keeps judgment in the workflow where it matters. It turns raw data into reliable signals. With planned reviews, clear rules, and active learning, models learn faster and fail safer.

Quality holds as you scale because people handle edge cases, bias checks, and policy shifts while automation does the routine. That is how data becomes intelligence with both scale and quality.

If you are choosing a partner, pick one that embeds HITL across data collection, annotation, QA, and monitoring. Ask for measurable targets, slice-level dashboards, and real escalation paths. That is our model at HitechDigital. We build and run HITL loops end to end so your systems stay accurate, accountable, and ready for real use.