Fashionable knowledge groups face a vital problem: their analytical datasets are scattered throughout a number of storage techniques and codecs, creating operational complexity that slows down insights and hampers collaboration. Knowledge scientists waste precious time navigating between totally different instruments to entry knowledge saved in numerous places, whereas knowledge engineers wrestle to take care of constant efficiency and governance throughout disparate storage options. Groups usually discover themselves locked into particular question engines or analytics instruments primarily based on the place their knowledge resides, limiting their potential to decide on the most effective device for every analytical job.

Amazon SageMaker Unified Studio addresses this fragmentation by offering a single setting the place groups can entry and analyze organizational knowledge utilizing AWS analytics and AI/ML providers. The brand new Amazon S3 Tables integration solves a elementary downside: it permits groups to retailer their knowledge in a unified, high-performance desk format whereas sustaining the pliability to question that very same knowledge seamlessly throughout a number of analytics engines—whether or not by means of JupyterLab notebooks, Amazon Redshift, Amazon Athena, or different built-in providers. This eliminates the necessity to duplicate knowledge or compromise on device alternative, permitting groups to give attention to producing insights slightly than managing knowledge infrastructure complexity.

Desk buckets are the third sort of S3 bucket, happening alongside the prevailing basic goal buckets, listing buckets, and now the fourth sort – vector buckets. You’ll be able to consider a desk bucket as an analytics warehouse that may retailer Apache Iceberg tables with numerous schemas. Moreover, S3 Tables ship the identical sturdiness, availability, scalability, and efficiency traits as S3 itself, and robotically optimize your storage to maximise question efficiency and to reduce price.

On this publish, you learn to combine SageMaker Unified Studio with S3 tables and question your knowledge utilizing Athena, Redshift, or Apache Spark in EMR and Glue.

Integrating S3 Tables with AWS analytics providers

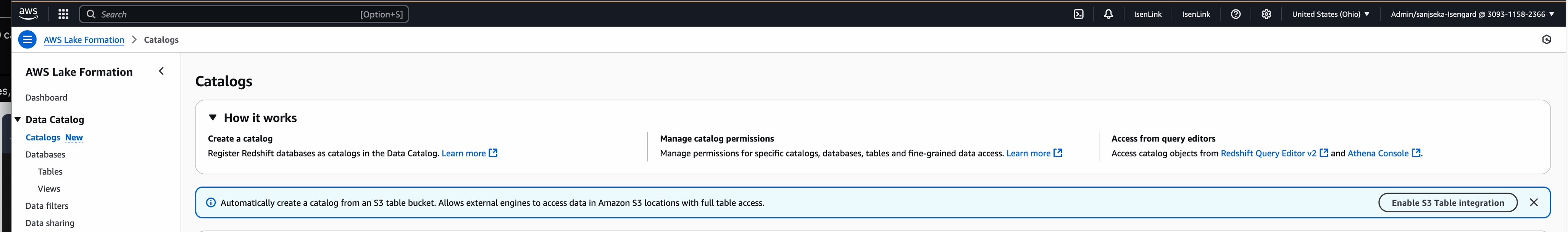

S3 desk buckets combine with AWS Glue Knowledge Catalog and AWS Lake Formation to permit AWS analytics providers to robotically uncover and entry your desk knowledge. For extra data, see creating an S3 Tables catalog.

Earlier than you get began with SageMaker Unified Studio, your administrator should first create a website within the SageMaker Unified Studio and give you the URL. For extra data, see the SageMaker Unified Studio Administrator Information.

Should you’ve by no means used S3 Tables in SageMaker Studio, you’ll be able to enable it to allow the S3 Tables analytics integration whenever you create a brand new S3 Tables catalog in SageMaker Unified Studio.

Be aware: This integration must be configured individually in every AWS Area.

Whenever you combine utilizing SageMaker Unified Studio, it takes the next actions in your account:

- Creates a brand new AWS Identification and Entry Administration (IAM) service position that offers AWS Lake Formation entry to all of your tables and desk buckets in the identical AWS Area the place you’ll provision the sources. This permits Lake Formation to handle entry, permissions, and governance for all present and future desk buckets.

- Creates a catalog from an S3 desk bucket within the AWS Glue Knowledge Catalog.

- Add the Redshift service position (

AWSServiceRoleForRedshift)as a Lake Formation Learn-only administrator permissions.

Conditions

Creating catalogs from S3 desk buckets in SageMaker Unified Studio

To get began utilizing S3 Tables in SageMaker Unified Studio you create a brand new Lakehouse catalog with S3 desk bucket supply utilizing the next steps.

- Open the SageMaker console and use the area selector within the high navigation bar to decide on the suitable AWS Area.

- Choose your SageMaker area.

- Choose or create a brand new mission you wish to create a desk bucket in.

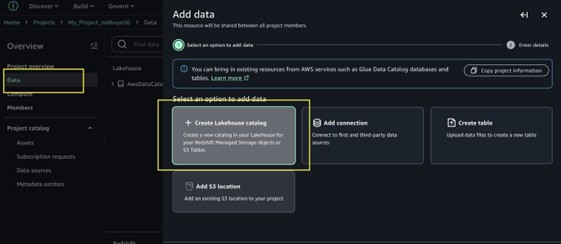

- Within the navigation menu choose Knowledge, then choose + so as to add a brand new knowledge supply.

- Select Create Lakehouse catalog.

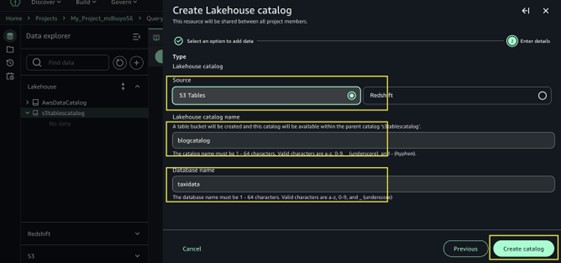

- Within the add catalog menu, select S3 Tables because the supply.

- Enter a reputation for the catalog blogcatalog.

- Enter database identify taxidata.

- Select Create catalog.

- The next steps will assist you to create these sources in your AWS account:

- A new S3 desk bucket and the corresponding Glue little one catalog beneath the father or mother Catalog

s3tablescatalog. - Go to Glue console, develop Knowledge Catalog, Click on databases, a brand new database inside that Glue little one catalog. The database identify will match the database identify you offered.

- Look forward to the catalog provisioning to complete.

- A new S3 desk bucket and the corresponding Glue little one catalog beneath the father or mother Catalog

- Create tables in your database, then use the Question Editor or a Jupyter pocket book to run queries in opposition to them.

Creating and querying S3 desk buckets

After including an S3 Tables catalog, it may be queried utilizing the format s3tablescatalog/blogcatalog. You’ll be able to start creating tables throughout the catalog and question them in SageMaker Studio utilizing the Question Editor or JupyterLab. For extra data, see Querying S3 Tables in SageMaker Studio.

Be aware: In SageMaker Unified Studio, you’ll be able to create S3 tables solely utilizing the Athena engine. Nonetheless, as soon as the tables are created, they are often queried utilizing Athena, Redshift, or by means of Spark in EMR and Glue.

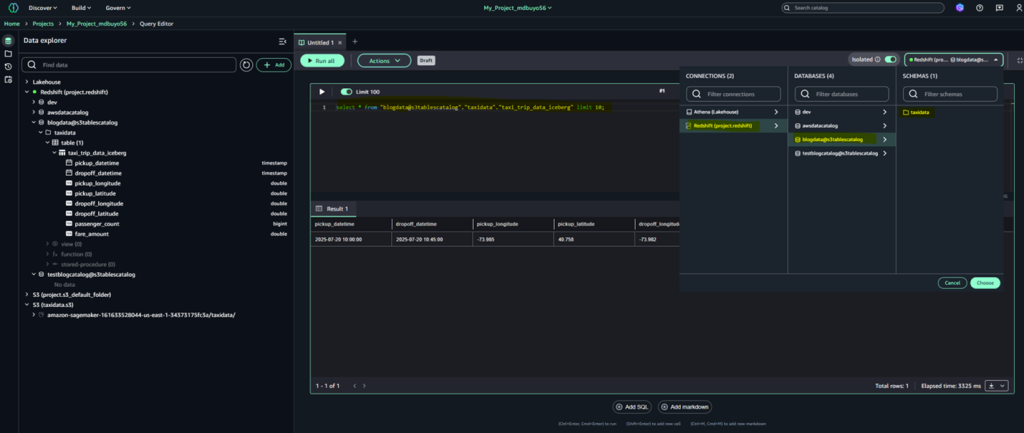

Utilizing the question editor

Making a desk within the question editor

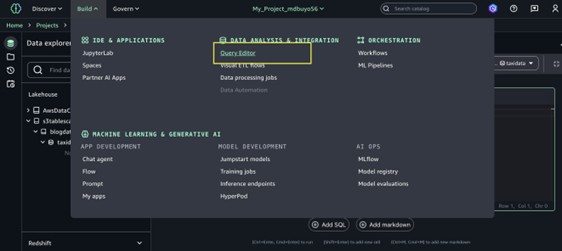

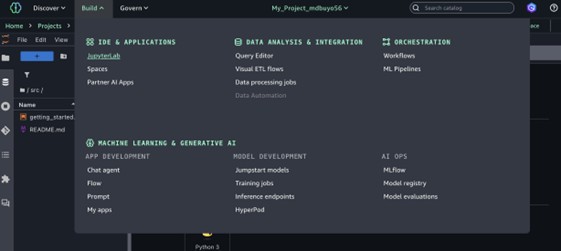

- Navigate to the mission you created within the high middle menu of the SageMaker Unified Studio dwelling web page.

- Broaden the Construct menu within the high navigation bar, then select Question editor.

- Launch a brand new Question Editor tab. This device capabilities as a SQL pocket book, enabling you to question throughout a number of engines and construct visible knowledge analytics options.

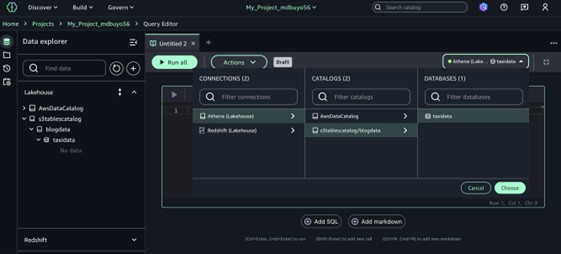

- Choose a knowledge supply in your queries through the use of the menu within the upper-right nook of the Question Editor.

- Underneath Connections, select Lakehouse (Athena) to connect with your Lakehouse sources.

- Underneath Catalogs, select S3tablescatalog/blogcatalog.

- Underneath Databases, select the identify of the database in your S3 tables.

- Choose Select to connect with the database and question engine.

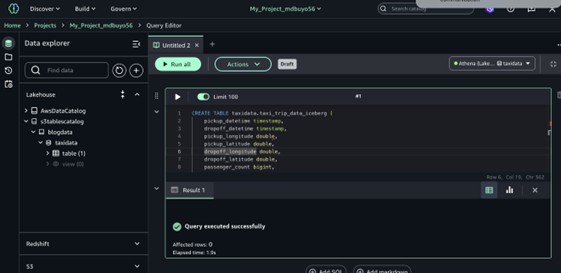

- Run the next SQL question to create a brand new desk within the catalog.

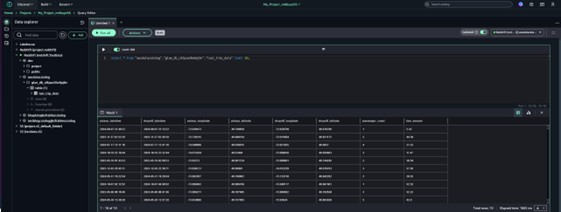

After you create the desk, you’ll be able to browse to it within the Knowledge explorer by selecting S3tablescatalog →s3tableCatalog →taxidata→taxi_trip_data_iceberg.

- Insert knowledge right into a desk with the next DML assertion.

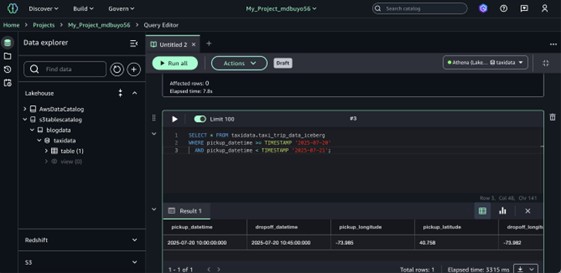

- Choose knowledge from a desk with the next question.

You’ll be able to be taught extra concerning the Question Editor and discover extra SQL examples within the SageMaker Unified Studio documentation.

Earlier than continuing with JupyterLab setup:

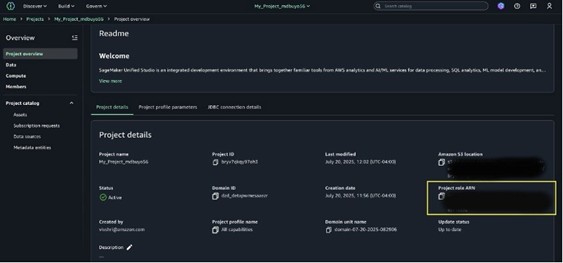

To create tables utilizing the Spark engine through a Spark connection, you should grant the S3TableFullAccess permission to the Undertaking Function ARN.

- Find the Undertaking Function ARN in SageMaker Unified Studio Undertaking Overview.

- Go to the IAM console then choose Roles.

- Seek for and choose the Undertaking Function.

- Connect the S3TableFullAccess coverage to the position, in order that the mission has full entry to work together with S3 Tables.

Utilizing JupyterLab

- Navigate to the mission you created within the high middle menu of the SageMaker Unified Studio dwelling web page.

- Broaden the Construct menu within the high navigation bar, then select JupyterLab.

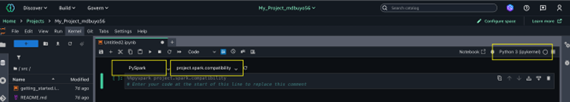

- Create a brand new pocket book.

- Choose Python3 Kernel.

- Select PySpark because the connection sort.

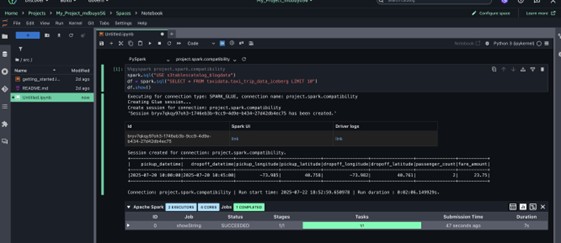

- Choose your desk bucket and namespace as the information supply in your queries:

- For Spark engine, execute question

USE s3tablescatalog_blogdata

- For Spark engine, execute question

Querying knowledge utilizing Redshift:

On this part, we stroll by means of tips on how to question the information utilizing Redshift inside SageMaker Unified Studio.

- From the SageMaker Studio dwelling web page, select your mission identify within the high middle navigation bar.

- Within the navigation panel, develop the Redshift mission folder.

- Open the blogdata@s3tablescatalog database.

- Broaden the taxidata schema.

- Underneath the Tables part, find and develop taxi_trip_data_iceberg.

- Evaluate the desk metadata to view all columns and their corresponding knowledge sorts.

- Open the Pattern knowledge tab to preview a small, consultant subset of information.

- Select Actions.

- Choose Preview knowledge from the dropdown to open and consider the total dataset within the knowledge viewer.

When you choose your desk, the Question Editor robotically opens with a pre-populated SQL question. This default question retrieves the high 10 information from the desk, providing you with an prompt preview of your knowledge. It makes use of customary SQL naming conventions, referencing the desk by its totally certified identify within the format database_schema.table_name. This strategy ensures the question precisely targets the meant desk, even in environments with a number of databases or schemas.

Finest practices and concerns

The next are some concerns you need to pay attention to.

- Whenever you create an S3 desk bucket utilizing the S3 console, integration with AWS analytics providers is enabled robotically by default. You may as well select to arrange the combination manually by means of a guided course of within the console. Additionally, whenever you create S3 Desk bucket programmatically utilizing the AWS SDK, or AWS CLI, or REST APIs, the combination with AWS analytics providers is just not robotically configured. It is advisable to manually carry out the steps required to combine the S3 Desk bucket with AWS Glue Knowledge Catalog and Lake Formation, permitting these providers to find and entry the desk knowledge.

- When creating an S3 desk bucket to be used with AWS analytics providers like Athena, we suggest utilizing all lowercase letters for the desk bucket identify. This requirement ensures correct integration and visibility throughout the AWS analytics ecosystem. Be taught extra about it from getting began with S3 tables.

- S3 Tables supply computerized desk upkeep options like compaction, snapshot administration, and unreferenced file elimination to optimize knowledge for analytics workloads. Nonetheless, there are some limitations to contemplate. Please learn extra on it from concerns and limitations for upkeep jobs.

Conclusion

On this publish, we mentioned tips on how to use SageMaker Unified Studio’s integration with S3 Tables to boost your knowledge analytics workflows. The publish defined the setup course of, together with making a Lakehouse catalog with S3 desk bucket supply, configuring mandatory IAM roles, and establishing integration with AWS Glue Knowledge Catalog and Lake Formation. We walked you thru sensible implementation steps, from creating and managing Apache Iceberg primarily based S3 tables to executing queries by means of each the Question Editor and JupyterLab with PySpark, in addition to accessing and analyzing knowledge utilizing Redshift.

To get began with SageMaker Unified Studio and S3 Tables integration, go to Entry Amazon SageMaker Unified Studio documentation.

About authors