Clients of all sizes have been efficiently utilizing Amazon OpenSearch Service to energy their observability workflows and achieve visibility into their functions and infrastructure. Throughout incident investigation, Web site Reliability Engineers (SREs) and operations middle personnel depend on OpenSearch Service to question logs, look at visualizations, analyze patterns, correlate traces to seek out the foundation reason behind the incident, and cut back Imply Time to Decision (MTTR). When an incident occurs that triggers alerts, SREs sometimes soar between a number of dashboards, write particular queries, examine current deployments, and correlate between logs and traces to piece collectively a timeline of occasions. Not solely is that this course of largely handbook, however it additionally creates a cognitive load on these personnel, even when all the info is available. That is the place agentic AI may help, by being an clever assistant that may perceive the best way to question, interpret numerous telemetry alerts, and systematically examine an incident.

On this publish, we current an observability agent utilizing OpenSearch Service and Amazon Bedrock AgentCore that may assist floor root trigger and get insights quicker, deal with a number of query-correlation cycles, and finally cut back MTTR even additional.

Resolution overview

The next diagram reveals the general structure for the observability agent.

Functions and infrastructure emit telemetry alerts within the type of logs, traces, and metrics. These alerts are then gathered by OpenTelemetry Collector (Step 1) and exported to Amazon OpenSearch Ingestion utilizing particular person pipelines for each sign: logs, traces, and metrics (Step 2). These pipelines ship the sign knowledge to an OpenSearch Service area and Amazon Managed Service for Prometheus (Step 3).

OpenTelemetry is the usual for instrumentation, and gives vendor-neutral knowledge assortment throughout a broad vary of languages and frameworks. Enterprises of assorted sizes are adopting this structure sample utilizing OpenTelemetry for his or her observability wants, particularly these dedicated to open supply instruments. Extra notably, this structure builds on open supply foundations, serving to enterprises keep away from vendor lock-in, profit from the open supply group, and implement it throughout on-premises and numerous cloud environments.

For this publish, we use the OpenTelemetry Demo utility to exhibit our observability use case. That is an ecommerce utility powered by about 20 completely different microservices, and generates real looking telemetry knowledge along with characteristic units to generate load and simulate failures.

Mannequin Context Protocol servers for observability sign knowledge

The Mannequin Context Protocol (MCP) gives a standardized mechanism to attach brokers to exterior knowledge sources and instruments. On this resolution, we constructed three distinct MCP servers, one for every kind of sign.

The Logs MCP server exposes software features for looking, filtering, and deciding on log knowledge that’s saved in an OpenSearch Service area for log knowledge. This allows the agent to question the logs utilizing numerous standards like easy key phrase matching, service title filter, log stage, or time ranges. This mimics the everyday queries you’ll run throughout an investigation. The next snippet reveals a pseudo code of what the software operate can appear like:

The Traces MCP server exposes software features for looking and retrieving details about distributed traces. These features may help lookup traces by hint ID and discover traces for a specific service, the spans belonging to a hint, the service map data constructed based mostly on the spans, and the speed, error, and period (also called RED metrics). This allows the agent to comply with a request’s path throughout the companies and pinpoint the place failures occurred or latency originated.

The Metrics MCP server exposes software features for querying time collection metrics. The agent can use these features to examine error fee percentiles and useful resource utilization, that are key alerts for understanding the general well being of the system and figuring out anomalous habits.

These three MCP servers span throughout the several types of knowledge utilized by investigation engineers, offering a whole working set for an agent to conduct investigations with autonomous correlation throughout logs, traces, and metrics to find out the doable root causes for a problem. Moreover, a customized MCP server exposes software features over enterprise knowledge on income, gross sales, and different enterprise metrics. For the OpenTelemetry demo utility, you possibly can develop artificial knowledge to assist in offering context for affect and different enterprise stage metrics. For brevity, we don’t present that server as part of this structure.

Observability agent

The observability agent is central to the answer. It’s constructed to assist with incident investigation. Conventional automations and handbook runbooks sometimes comply with predefined working procedures, however with an observability agent, you don’t must outline them. The agent can analyze, purpose based mostly on the info obtainable to it, and adapt its technique based mostly on what it discovers. It correlates findings throughout logs, traces, and metrics to reach at a root trigger.

The observability agent is constructed with the Strands Agent SDK, an open supply framework that simplifies improvement of AI brokers. The SDK gives a model-driven strategy with flexibility to deal with underlying orchestration and reasoning (the agent loop) by invoking uncovered instruments and sustaining coherent, turn-based interactions. This implementation additionally discovers instruments dynamically, so if there’s a change within the capabilities, the agent could make choices based mostly on up-to-date data.

The agent runs on Amazon Bedrock AgentCore Runtime, which gives absolutely managed infrastructure for internet hosting and working brokers. The runtime helps in style agent frameworks, together with Stands, LangGraph, and CrewAI. The runtime additionally gives scaling availability and compute that many enterprises require to run production-grade brokers.

We use Amazon Bedrock AgentCore Gateway to hook up with all three MCP servers. When deploying brokers at scale, gateways are indispensable parts to scale back administration duties like customized code improvement, infrastructure provisioning, complete ingress and egress safety, and unified entry. These are important enterprise features wanted when bringing a workload to manufacturing. On this utility, we create gateways that join all three MCP servers as targets utilizing server-sent occasions. Gateways work alongside Amazon Bedrock AgentCore Identities to offer safe credentials administration and safe identification propagation from the person to the speaking entities. The pattern utility makes use of AWS Identification and Entry Administration (IAM) for identification administration and propagation.

Incident investigation is commonly a multi-step course of. It entails iterative speculation testing, a number of rounds of querying, and constructing context over time. We use Amazon Bedrock AgentCore Reminiscence for this function. On this resolution, we use session-based namespaces to take care of separate dialog threads for various investigations. For instance, when a person asks “What about Fee service?” throughout an investigation, the agent retrieves current dialog historical past from reminiscence to take care of consciousness of prior findings. We retailer each person questions and agent responses with timestamps to assist the agent reconstruct the dialog chronologically and purpose about already accomplished findings.

We configured the observability agent to make use of Anthropic’s Claude Sonnet v4.5 in Amazon Bedrock for reasoning. The mannequin interprets questions, decides which MCP software to invoke, analyzes the outcomes, and formulates the set of questions or conclusions. We use a system immediate to instruct the mannequin to suppose like an skilled SRE or an operation middle engineer: “Beginning with a high-level examine, narrowing down affected parts, correlate throughout telemetry sign sorts and derive conclusion with substantiation. You ask the mannequin to additionally counsel logical subsequent steps resembling performing a drill down to analyze inter service dependencies.” This makes the agent versatile to research and purpose about frequent forms of incident investigations.

Observability agent in motion

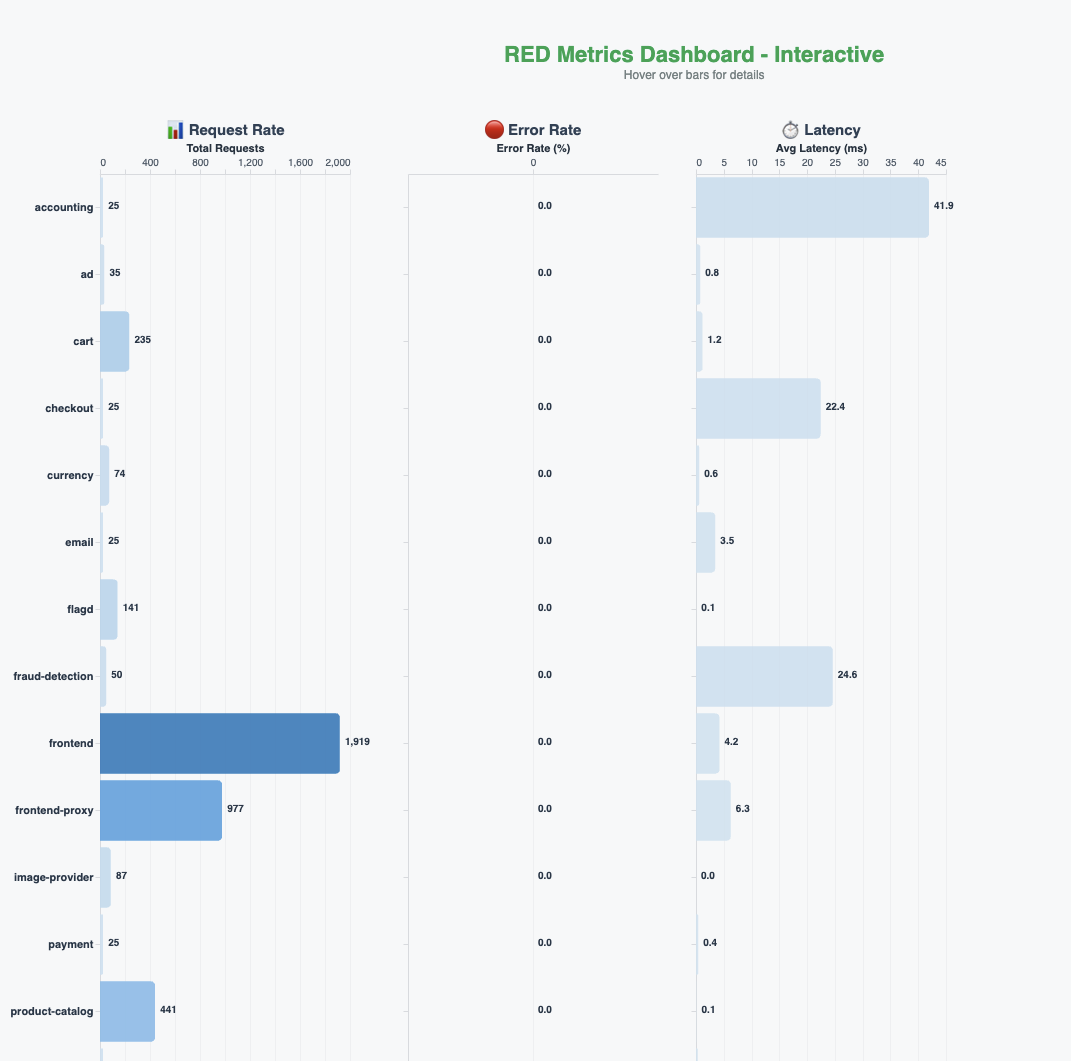

We constructed a real-time RED (fee, errors, period) metrics dashboards for your entire utility, as proven within the following determine.

To ascertain a baseline, we requested the agent the next query: “Are there any errors in my utility within the final 5 minutes?”The agent queries the traces and metrics, analyzes the outcomes, and responds saying there are not any errors within the system. It notes that every one the companies are energetic, traces are wholesome, and the system is processing requests usually. The agent additionally proactively suggests subsequent steps that is perhaps helpful for additional investigation.

Introducing failures

The OpenTelemetry demo utility has a characteristic flag that we will use to introduce deliberate failures within the system. It additionally contains load era so these errors can floor prominently. We use these options to introduce a couple of failures with the fee service. The actual-time RED metrics dashboards within the earlier determine mirror the affect and present the error charges climbing.

Investigation and root trigger evaluation

Now that we’re producing errors, we interact the agent once more. That is sometimes the beginning of the investigation session. Additionally, we’ve got workflows like alarms triggering or pages going out that can set off the beginning of an investigation.

We ask the query “Customers are complaining that it’s taking a very long time to purchase gadgets. Are you able to examine to see what’s going on?”

The agent retrieves the dialog historical past from reminiscence (if there may be any), invokes instruments to question RED metrics throughout companies, and analyzes the outcomes. It identifies a essential buy circulation efficiency subject: fee service is in a connectivity disaster and utterly unavailable, with excessive latency noticed in fraud detection, advert service, and advice service. The agent gives instant motion suggestions—restore fee service connectivity as the highest precedence—and suggests subsequent steps, together with investigating fee service logs.

Following the agent’s suggestion, we ask it to analyze the logs: “Examine fee service logs to grasp the connectivity subject.”

The agent searches logs for the checkout and fee companies, correlates them with hint knowledge, and analyzes service dependencies from the service map. It confirms that though cart service, product catalog service, and forex service are wholesome, the fee service is totally unreachable, efficiently figuring out the foundation reason behind our intentionally launched failure.

Past root trigger: Analyzing enterprise affect

As talked about earlier, we’ve got artificial enterprise gross sales and income knowledge in a separate MCP server, so when the person asks the agent “Analyze the enterprise affect of the checkout and fee service failures,” the agent makes use of this enterprise knowledge, examines the transaction knowledge from traces, calculates estimated income affect, and assesses buyer abandonment charges because of checkout failures. This reveals how the agent can transcend figuring out the foundation trigger and supply assist with operational actions like making a runbook for subject decision sooner or later, which could be first the step to offering computerized remediation with out involving SREs.

Advantages and outcomes

Though the failure situation on this publish is simplified for illustration, it highlights a number of key advantages that immediately contribute to decreasing MTTR.

Accelerated investigation cycles

Conventional workflows for troubleshooting contain a number of iterations of hypotheses, verification, querying, and knowledge evaluation at every step, requiring context switching and consuming hours of effort. The observability agent reduces these drastically to a couple minutes by autonomous reasoning, correlation, and actioning, which in flip reduces MTTR.

Dealing with complicated workflows

Actual-world manufacturing situations usually contain cascading failures and a number of system failures. The observability agent’s capabilities can prolong to those situations by utilizing historic knowledge and sample recognition. For example, it will probably distinguish associated points from false positives utilizing temporal or identity-based correlation, dependency graphs, and different methods, serving to SREs keep away from wasted investigation effort on unrelated anomalies.

Moderately than present a single reply, the agent can present probabilistic distribution throughout potential root causes, serving to SREs prioritize remediation strategies; for instance:

- Fee service community connectivity subject: 75%

- Downstream fee gateway timeout: 15%

- Database connection pool exhaustion: 8%

- Different/Unknown: 2%

The agent can examine present signs in opposition to previous incidents, figuring out whether or not related patterns have occurred prior to now, thereby evolving from a reactive question software right into a proactive diagnostic assistant.

Conclusion

Incident investigation stays largely handbook. SREs juggle dashboards, craft queries, and correlate alerts underneath strain, even when all the info is available. On this publish, we confirmed how an observability agent constructed with Amazon Bedrock AgentCore and OpenSearch Service can alleviate this cognitive burden by autonomously querying logs, traces, and metrics; correlating findings; and guiding SREs towards root trigger quicker. Though this sample represents one strategy, the pliability of Amazon Bedrock AgentCore mixed with the search and analytics capabilities of OpenSearch Service permits brokers to be designed and deployed in quite a few methods—at completely different phases of the incident lifecycle, with various ranges of autonomy, or centered on particular investigation duties—to fit your group’s distinctive operational wants. Agentic AI doesn’t exchange current observability funding, however amplifies them by offering an efficient manner to make use of your knowledge throughout incident investigations.

Concerning the authors