The number of options we have to configure and enrich a coding agent’s context has exploded over the past few months. Claude Code is leading the charge with innovations in this space, but other coding assistants are quickly following suit. Powerful context engineering is becoming a huge part of the developer experience of these tools.

Context engineering is relevant for all types of agents and LLM usage, of course. My colleague Bharani Subramaniam’s simple definition is: “Context engineering is curating what the model sees so that you get a better result.”

For coding agents, there is an emerging set of context engineering approaches and terms. The foundation is the set of configuration features offered by the tools (e.g. “rules”, “skills”), and the nitty-gritty part is how we conceptually use those features (“specs”, various workflows).

This memo is a primer about the current state of context configuration features, using Claude Code as an example at the end.

What is context in coding agents?

“Everything is context”. However, these are the main categories I think of as context configuration in coding agents.

Reusable Prompts

Almost all forms of AI coding context engineering ultimately involve a bunch of markdown files with prompts. I use “prompt” in the broadest sense here, like it’s 2023: a prompt is text that we send to an LLM to get a response back. To me there are two main categories of intentions behind these prompts. I’ll call them:

- Instructions: Prompts that tell an agent to do something, e.g. “Write an E2E test in the following way: …”

- Guidance: (aka rules, guardrails) General conventions that the agent should follow, e.g. “Always write tests that are independent of each other.”

These two categories often blend into each other, but I’ve still found it useful to distinguish them.

Context interfaces

I couldn’t really find an established term for what I’d call context interfaces: descriptions for the LLM of how it can get even more context, should it decide to.

- Tools: Built-in capabilities like calling bash commands, searching files, etc.

- MCP Servers: Custom programs or scripts that run on your machine (or on a server) and give the agent access to data sources and other actions.

- Skills: These latest entrants into coding context engineering are descriptions of additional resources, instructions, documentation, scripts, etc. that the LLM can load on demand when it thinks it’s relevant for the task at hand.

The more of these you configure, the more space they take up in the context. So it’s prudent to think strategically about which context interfaces are necessary for a particular task.

Files in your workspace

The most basic and powerful context interfaces in coding agents are file reading and searching, to understand your

If and when: Who decides to load context?

- LLM: Allowing the LLM to decide when to load context is a prerequisite for running agents in an unsupervised way. But there always remains some uncertainty (dare I say non-determinism) about whether the LLM will actually load the context when we would expect it to. Example: Skills

- Human: A human invocation of context gives us control, but reduces the level of automation overall. Example: Slash commands

- Agent software: Some context features are triggered by the agent software itself, at deterministic points in time. Example: Claude Code hooks

How much: Keeping the context as small as possible

One of the goals of context engineering is to balance the amount of context given: not too little, not too much. Even though context windows have technically gotten really big, that doesn’t mean that it’s a good idea to indiscriminately dump information in there. An agent’s effectiveness goes down when it gets too much context, and too much context is a cost factor as well, of course.

Some of this size management is up to the developer: how much context configuration we create, and how much text we put in there. My recommendation would be to build context like rules files up gradually, and not pump too much stuff in there right from the start. The models have gotten quite powerful, so what you might have had to put into the context half a year ago might not even be necessary anymore.

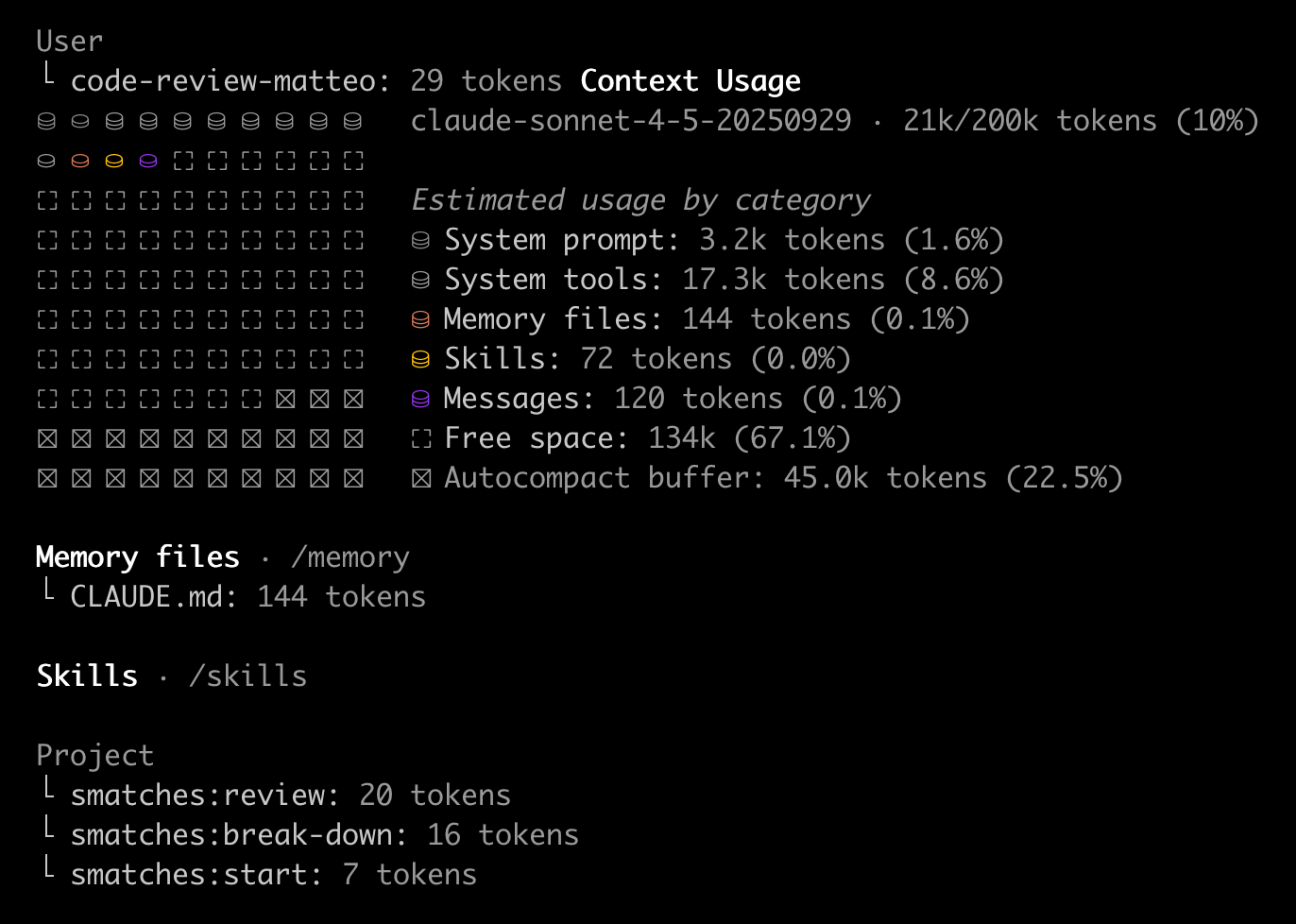

Transparency about how full the context is, and what is taking up how much space, is a crucial feature in the tools to help us navigate this balance.

But it’s not all up to us: some coding agent tools are also better at optimising context under the hood than others. They compact the conversation history periodically, or optimise the way tools are represented (like Claude Code’s Tool Search Tool).
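To make the idea of context transparency a bit more concrete, here is a minimal Python sketch that estimates how much of a context window a handful of configuration items would occupy. The item names and sizes, the 200,000-token window and the four-characters-per-token rule of thumb are all illustrative assumptions. Real tools use the model’s actual tokenizer, and this is not how any particular agent computes its usage.

```python
# Rough context budget report. All numbers are illustrative assumptions:
# CHARS_PER_TOKEN is a crude heuristic, not a real tokenizer.
CHARS_PER_TOKEN = 4
CONTEXT_WINDOW_TOKENS = 200_000  # hypothetical window size


def estimate_tokens(text: str) -> int:
    """Approximate the token count of a piece of text."""
    return max(1, len(text) // CHARS_PER_TOKEN) if text else 0


def context_report(items: dict[str, str], window: int = CONTEXT_WINDOW_TOKENS) -> dict[str, float]:
    """Return the estimated share of the window (in percent) per item, plus a total."""
    report = {name: 100 * estimate_tokens(text) / window for name, text in items.items()}
    report["total"] = sum(report.values())
    return report


# Hypothetical context items with made-up sizes.
example_items = {
    "CLAUDE.md": "x" * 8_000,
    "tool descriptions": "x" * 40_000,
    "conversation history": "x" * 120_000,
}

for name, share in context_report(example_items).items():
    print(f"{name}: {share:.1f}% of the window")
```

Even a crude report like this shows which category dominates, which is usually the first thing to know before trimming a rules file or removing a tool.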

Example: Claude Code

Here is an overview of Claude Code’s context configuration features as of January 2026, and where they fall in the dimensions described above:

CLAUDE.md

What: Guidance

Who decides to load: Claude Code (always used at the start of a session)

When to use: For the most frequently repeated general conventions that apply to the whole project

Example use cases:

- “we use yarn, not npm”

- “don’t forget to activate the virtual environment before running anything”

- “when we refactor, we don’t care about backwards compatibility”

Other coding assistants: Basically all coding assistants have this feature of a main “rules file”; there are attempts to standardise it as AGENTS.md

Rules

What: Guidance

Who decides to load: Claude Code, when files at the configured paths have been loaded

When to use: Helps organise and modularise guidance, and therefore limit the size of the always-loaded CLAUDE.md. Rules can be scoped to files (e.g. *.ts for all TypeScript files), which means they will then only be loaded when relevant.

Example use cases: “When writing bash scripts, variables should be referred to as ${var} not $var.” paths: **/*.sh

Other coding assistants: More and more coding assistants allow this path-based rules configuration, e.g. GH Copilot and Cursor
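The effect of path scoping can be pictured with a small Python sketch. It is a conceptual model only: the `Rule` class, the `rules_for` function and the TypeScript rule text are hypothetical, and the glob semantics of Python’s `fnmatch` are a simplification that may differ from how Claude Code or other assistants actually match paths.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Rule:
    """A piece of guidance plus the glob patterns it is scoped to (hypothetical model)."""
    text: str
    paths: tuple[str, ...] = ()  # empty means "always loaded"


def rules_for(file_path: str, rules: list[Rule]) -> list[str]:
    """Return the guidance texts that apply to a file the agent has just loaded."""
    return [
        rule.text
        for rule in rules
        if not rule.paths or any(fnmatchcase(file_path, p) for p in rule.paths)
    ]


RULES = [
    Rule("We use yarn, not npm."),  # unscoped, always applies
    Rule("In bash scripts, write ${var} not $var.", paths=("**/*.sh",)),
    Rule("Prefer explicit return types.", paths=("*.ts",)),  # made-up example rule
]

print(rules_for("scripts/deploy.sh", RULES))
print(rules_for("src/app.ts", RULES))
```

The unscoped rule is paid for in every session, while the two scoped rules only enter the context once a matching file is touched.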

Slash commands

What: Instructions

Who decides to load: Human

When to use: Common tasks (review, commit, test, …) that you have a specific longer prompt for, and that you want to trigger yourself, inside the main context. DEPRECATED in Claude Code, superseded by Skills

Example use cases: /code-review · /e2e-test · /prep-commit

Other coding assistants: Common feature, e.g. GH Copilot and Cursor

Skills

What: Guidance, instructions, documentation, scripts, …

Who decides to load: LLM (based on skill description) or Human

When to use: In its simplest form, this is for guidance or instructions that you only want to “lazy load” when relevant for the task at hand. But you can put whatever additional resources and scripts you want into a skill’s folder, and reference them from the main SKILL.md to be loaded.

Example use cases:

- JIRA access (skill e.g. describes how the agent can use a CLI to access JIRA)

- “Conventions to follow for React components”

- “How to integrate the XYZ API”

Other coding assistants: Cursor’s “Apply intelligently” rules were always a bit like this, but they’re now also switching to Claude Code style Skills
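The “lazy load” idea can be sketched in a few lines of Python. This is a conceptual model under stated assumptions, not Claude Code’s implementation: the `Skill` and `SkillRegistry` classes and the example skill name are hypothetical. The point is only that the short descriptions are always in the context, while the larger body is read when the skill is chosen.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Skill:
    name: str
    description: str              # short, always visible to the LLM
    load_body: Callable[[], str]  # deferred, e.g. would read SKILL.md from disk


@dataclass
class SkillRegistry:
    skills: dict[str, Skill] = field(default_factory=dict)
    loaded: dict[str, str] = field(default_factory=dict)

    def register(self, skill: Skill) -> None:
        self.skills[skill.name] = skill

    def always_in_context(self) -> str:
        """Only names and descriptions are paid for up front."""
        return "\n".join(f"{s.name}: {s.description}" for s in self.skills.values())

    def load(self, name: str) -> str:
        """Called when the LLM (or a human) decides the skill is relevant."""
        if name not in self.loaded:
            self.loaded[name] = self.skills[name].load_body()
        return self.loaded[name]


registry = SkillRegistry()
registry.register(Skill(
    name="react-conventions",  # hypothetical skill
    description="Conventions to follow for React components",
    load_body=lambda: "(full contents of SKILL.md would be read here)",
))

print(registry.always_in_context())
print(registry.load("react-conventions"))
```

This also shows why the description matters so much: it is the only thing the LLM sees when deciding whether to load the rest.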

Subagents

What: Instructions + configuration of model and set of available tools; will run in its own context window, can be parallelised

Who decides to load: LLM or Human

When to use:

- Common larger tasks that are suitable for and worth running in their own context for efficiency (to improve results with more intentional context, or to reduce costs).

- Tasks for which you usually want to use a model other than your default model

- Tasks that need specific tools / MCP servers that you don’t want to always have available in your default context

- Orchestratable workflows

Example use cases:

- Create an E2E test for everything that was just built

- Code review done by a separate context and with a different model to give you a “second opinion” without the baggage of your original session

- Subagents are foundational for swarm experiments like claude-flow or Gas Town

Other coding assistants: Roo Code has had subagents for quite a while, they call them “modes”; Cursor just got them; GH Copilot allows agent configuration, but they can only be triggered by humans for now

MCP Servers

What: A program that runs on your machine (or on a server) and gives the agent access to data sources and other actions via the Model Context Protocol

Who decides to load: LLM

When to use: Use when you want to give your agent access to an API, or to a tool running on your machine. Think of it as a script on your machine with lots of options, and those options are exposed to the agent in a structured way. Once the LLM decides to call this, the tool call itself is usually a deterministic thing. There is a trend now to supersede some MCP server functionality with skills that describe how to use scripts and CLIs.

Example use cases: JIRA access (MCP server that can execute API calls to Atlassian) · Browser navigation (e.g. Playwright MCP) · Access to a knowledge base on your machine

Other coding assistants: All common coding assistants support MCP servers at this point
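The picture of “a script with lots of options, exposed in a structured way” can be illustrated without any SDK. The Python sketch below is not an MCP implementation and does not speak the protocol; the tool name, its schema, the sample data and the `call_tool` dispatcher are all hypothetical. It only shows the split between a structured description that the LLM reads and a deterministic function that runs once the LLM has decided to call it.

```python
import json

# Structured descriptions: this is the part that takes up context space.
TOOLS = {
    "search_knowledge_base": {  # hypothetical tool
        "description": "Search the local knowledge base for a term.",
        "input_schema": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
    },
}

# Made-up data standing in for a real data source.
KNOWLEDGE_BASE = {"yarn": "We use yarn, not npm.", "tests": "Tests must be independent."}


def search_knowledge_base(query: str) -> list[str]:
    """Deterministic lookup: same query, same result."""
    return [text for key, text in KNOWLEDGE_BASE.items() if query.lower() in key]


HANDLERS = {"search_knowledge_base": search_knowledge_base}


def call_tool(name: str, arguments: dict) -> str:
    """Check required arguments against the schema, then dispatch to the handler."""
    schema = TOOLS[name]["input_schema"]
    missing = [key for key in schema["required"] if key not in arguments]
    if missing:
        raise ValueError(f"missing arguments: {missing}")
    return json.dumps(HANDLERS[name](**arguments))


print(call_tool("search_knowledge_base", {"query": "yarn"}))
```

The non-deterministic part is whether and with which arguments the LLM calls the tool; everything inside `call_tool` behaves like ordinary software.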

Hooks

What: Scripts

Who decides to load: Claude Code lifecycle events

When to use: When you want something to happen deterministically every single time you edit a file, execute a command, call an MCP server, etc.

Example use cases:

- Custom notifications

- After every file edit, check if it’s a JS file and if so, run prettier on it

- Claude Code observability use cases, like logging all executed commands somewhere

Other coding assistants: Hooks are a feature that is still quite rare. Cursor has just started supporting them.
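The prettier use case could look roughly like the following Python hook script. It is a sketch under assumptions: it assumes the agent passes a JSON event on standard input whose `tool_input.file_path` field holds the edited file, and that `npx prettier` is available on the machine. Check your tool’s hook documentation for the actual payload shape. The function names are hypothetical.

```python
import json
import subprocess
from typing import Callable, Optional

JS_SUFFIXES = (".js", ".jsx", ".mjs", ".cjs")


def prettier_command(event: dict) -> Optional[list[str]]:
    """Return the formatter command for an edit event, or None if it is not a JS file.

    Assumes the edited path is at event["tool_input"]["file_path"].
    """
    file_path = event.get("tool_input", {}).get("file_path", "")
    if not file_path.endswith(JS_SUFFIXES):
        return None
    return ["npx", "prettier", "--write", file_path]


def run_hook(raw_event: str, runner: Callable[..., object] = subprocess.run) -> bool:
    """Parse the event and run prettier if applicable. Returns True if it ran."""
    command = prettier_command(json.loads(raw_event))
    if command is None:
        return False
    runner(command, check=False)
    return True


# A real hook script would end with something like:
#     import sys; run_hook(sys.stdin.read())
```

Because the script is triggered by the agent software rather than by the LLM, the formatting step runs on every matching edit regardless of what the model decides.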

Plugins

What: A way to distribute all or any of these things

Example use cases: Distribute a common set of commands, skills and hooks to teams in an organisation

This is quite a long list! However, we are in a “storming” phase right now and will surely converge on a simpler set of features. I expect e.g. Skills to not only absorb slash commands, but also rules, which would reduce this list by two entries.

Sharing context configurations

As I said in the beginning, these features are just the foundation for humans to do the actual work of filling them with reasonable context. It takes quite a bit of time to build up a good setup, because you have to use a configuration for a while to be able to say if it’s working well or not; there are no unit tests for context engineering. Therefore, people are keen to share good setups with each other.

Challenges for sharing:

- The context of the sharer and the receiver should be as similar as possible; it works a lot better inside a team than between strangers on the internet

- There is a tendency to overengineer the context with unnecessary, copied and pasted instructions up front. In my experience it’s best to build this up iteratively

- Different experience levels might need different rules and instructions

- If you have low awareness of what’s in your context because you copied a lot from a stranger, you might inadvertently repeat instructions or contradict existing ones, or blame the poor coding agent for being ineffective when it’s just following your instructions

Beware: Illusion of control

Despite the name, ultimately this isn’t really engineering… Once the agent gets all these instructions and guidance, execution still depends on how well the LLM interprets them! Context engineering can definitely make a coding agent more effective and increase the probability of useful results quite a bit. However, sometimes people talk about these features with phrases like “ensure it does X”, or “prevent hallucinations”. But as long as LLMs are involved, we can never be certain of anything. We still need to think in probabilities and choose the right level of human oversight for the job.