Memory shapes how humans think and how AI agents act. Without it, an agent only responds to the current input; with it, the agent can keep context, recall past actions, and reuse useful knowledge.

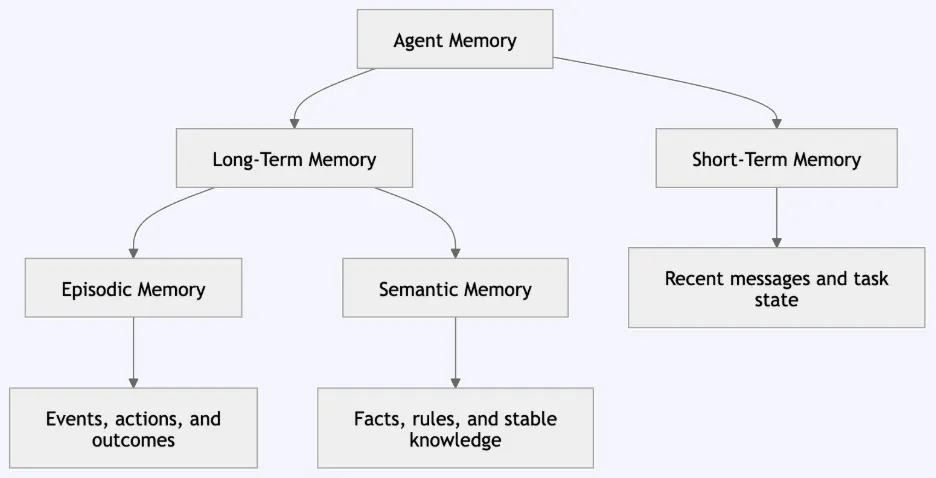

AI memory spans short-term, episodic, semantic, and long-term memory, each with different design trade-offs around storage, retention, retrieval, and control. In this article, we’ll explore agent memory patterns, a practical bridge between cognitive science and AI engineering.

What Agent Memory Means

Agent memory is the ability of an AI agent to store information, recall it later, and use it to improve future responses or actions. It allows the agent to remember past experiences, maintain context, recognize useful patterns, and adapt across interactions.

This is important because an LLM doesn’t automatically remember everything across sessions. By default, it mainly works with the input available in the current context window. Memory must be added as a separate design layer around the model. This layer decides what should be stored, how it should be organized, and when it should be retrieved.

In a simple chatbot, memory may only mean keeping the last few messages in the conversation. In a more advanced AI agent, memory can include user preferences, past actions, task history, tool outputs, decisions, errors, and learned facts. This helps the agent avoid starting from zero every time.

For example, a deployment assistant may remember that a user works on the api-gateway service. It may also remember that production deployments need approval on Fridays. When the user later asks, “Can I deploy today?”, the agent can use that stored information to give a more useful answer.

So, agent memory is not just storage. It is a full process: store information, retrieve it when it is relevant, and use it in the response.

Each step matters. A good memory system should store useful information, retrieve only what is relevant, and keep the final response grounded in reliable context. This is why agent memory must be treated as part of system design, not just as a database feature.
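The store, retrieve, and use steps can be sketched in a few lines of plain Python. This is a minimal illustration rather than a production design: the `SimpleMemory` class, its method names, and the word-overlap scoring are all assumptions made for this example.

```python
import re


class SimpleMemory:
    """Toy memory layer: stores text notes and retrieves them by word overlap."""

    def __init__(self):
        self.notes = []

    def store(self, text: str) -> None:
        # Storage step: keep the note as-is.
        self.notes.append(text)

    def retrieve(self, query: str, limit: int = 2) -> list[str]:
        # Retrieval step: rank notes by how many words they share with the query.
        query_words = set(re.findall(r"\w+", query.lower()))
        scored = []
        for note in self.notes:
            overlap = len(query_words & set(re.findall(r"\w+", note.lower())))
            if overlap > 0:
                scored.append((overlap, note))
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [note for _, note in scored[:limit]]

    def build_prompt(self, query: str) -> str:
        # Use step: place only the relevant notes in front of the question.
        relevant = self.retrieve(query)
        context = "\n".join(f"- {note}" for note in relevant) or "- (no relevant memory)"
        return f"Known context:\n{context}\n\nQuestion: {query}"


memory = SimpleMemory()
memory.store("The user works on the api-gateway service.")
memory.store("Production deployments need approval on Fridays.")
memory.store("The user prefers short answers.")

print(memory.build_prompt("Does production need approval today?"))
```

Word overlap is used here only because it needs no external service. It fails for a query such as “Can I deploy today?”, which shares no words with the stored rule, and that gap is what embedding-based retrieval is meant to close.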

Memory Types: From Cognitive Science to AI Agents

AI agent memory is easier to understand when we connect it with human memory. In cognitive science, memory is divided into different systems because each system has a different purpose. The same idea applies to AI agents. A well-designed agent shouldn’t store every memory in one place. It should use different memory types for different tasks.

- Short-term memory handles the current task using recent messages, temporary notes, tool outputs, or the current goal. It’s usually implemented through a rolling buffer, conversation state, or context window.

- Long-term memory stores information across sessions, such as user preferences, past interactions, policies, documents, or learned facts. It’s often implemented using databases, knowledge graphs, vector embeddings, or persistent stores.

- Episodic memory records specific past events, including user actions, tool calls, decisions, and outcomes. It helps with auditability, debugging, and learning from previous cases.

- Semantic memory stores reusable knowledge such as facts, rules, preferences, and concepts. For example, “Production deployments on Fridays require approval” is semantic memory because it can guide future responses.

A simple way to compare these memory types is shown below:

| Memory Type | What It Stores | AI Agent Example | Main Use |
| --- | --- | --- | --- |
| Short-term memory | Current context and recent turns | Last few user messages | Maintain conversation flow |
| Long-term memory | Information saved across sessions | User profile or project history | Personalization and continuity |
| Episodic memory | Specific events and outcomes | “User asked about deployment approval yesterday” | Traceability and learning from history |
| Semantic memory | Facts, rules, and concepts | “Friday production deploys need SRE approval” | Reusable knowledge and reasoning |

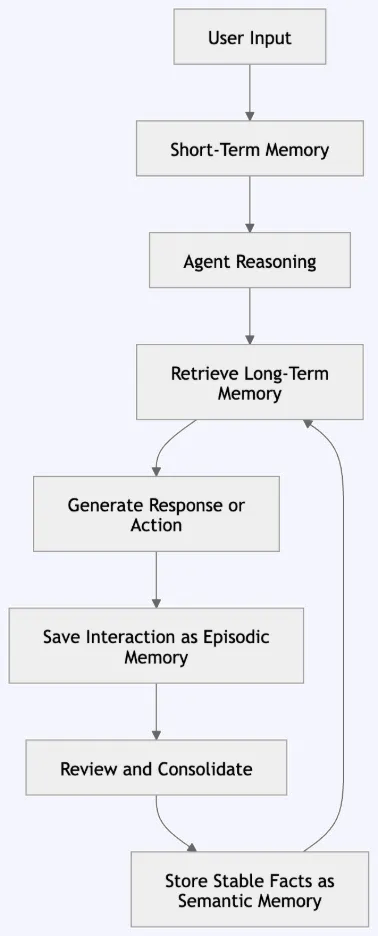
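To make the distinction concrete, the sketch below models three of these memory types with standard-library containers. The class and field names (`AgentMemory`, `short_term`, `episodes`, `facts`) are illustrative assumptions, and a real long-term layer would persist the episodic and semantic parts instead of holding them in process memory.

```python
from collections import deque
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentMemory:
    # Short-term: rolling buffer that keeps only the most recent turns.
    short_term: deque = field(default_factory=lambda: deque(maxlen=3))
    # Episodic: append-only log of specific events with timestamps.
    episodes: list = field(default_factory=list)
    # Semantic: reusable facts keyed by topic.
    facts: dict = field(default_factory=dict)

    def observe(self, role: str, text: str) -> None:
        """Record a turn in both the rolling buffer and the event log."""
        self.short_term.append((role, text))
        self.episodes.append(
            {
                "timestamp": datetime.now(timezone.utc).isoformat(),
                "role": role,
                "text": text,
            }
        )

    def learn(self, topic: str, fact: str) -> None:
        """Store a stable fact that can guide later answers."""
        self.facts[topic] = fact


memory = AgentMemory()
for turn in ["hello", "my service is api-gateway", "it runs in production", "can I deploy?"]:
    memory.observe("user", turn)
memory.learn("friday_deploys", "Friday production deploys need SRE approval")

print(len(memory.short_term))  # the rolling buffer dropped the oldest turn
print(len(memory.episodes))    # the event log kept every turn
```

The buffer size of three is arbitrary. The point is that the short-term buffer forgets by design, while the episodic log does not.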

Agent Memory Architecture and Data Flow

After understanding memory types, the next step is seeing how they work together inside an AI agent. A good memory system doesn’t store everything in one place. It separates memory into layers and moves information carefully between them.

The agent receives user input, uses short-term memory for the current conversation, and retrieves relevant long-term memory when needed. After responding or acting, it can save the interaction as episodic memory. Over time, important or repeated information can become semantic memory.

This flow keeps the agent useful without overloading the context window. Because memory lives outside the model, the system can store only useful information and retrieve only what is relevant.

In this architecture, short-term memory supports the current task. Episodic memory records what happened. Semantic memory stores stable facts, rules, and preferences. Long-term memory connects these layers and makes useful information available in future sessions.

A practical agent memory pipeline usually follows these steps:

| Step | What Happens | Example |
| --- | --- | --- |
| Input | The user sends a query | “Can I deploy today?” |
| Short-term memory | The agent checks recent context | User is working on api-gateway |
| Retrieval | The agent searches stored memory | Friday deployments need approval |
| Reasoning | The agent combines query and memory | Today is Friday, approval is required |
| Response | The agent gives an answer | “You can deploy only after SRE approval.” |
| Episodic write | The interaction is logged | User asked about Friday deployment |
| Semantic update | Stable facts may be saved | Production Friday deploys require approval |

This design keeps the system clean. Raw events are stored first. Stable knowledge is created later. The agent retrieves only the most relevant memories instead of placing all past data into the prompt. This makes the system faster, easier to evaluate, and safer to manage.
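One way to realize the “raw events first, stable knowledge later” idea is a small consolidation step that promotes statements seen repeatedly in the episodic log. The sketch below is only an assumption about how such a step could look: the `consolidate` function, the `min_count` threshold, and the exact-match normalization are hypothetical choices, and real systems often use an LLM or clustering instead.

```python
from collections import Counter


def consolidate(episodes: list[str], min_count: int = 2) -> list[str]:
    """Promote statements that appear at least `min_count` times to semantic facts."""
    # Normalize lightly so differences in case, spacing, and a trailing period
    # do not split the counts.
    normalized = [" ".join(e.lower().split()).rstrip(".") for e in episodes]
    counts = Counter(normalized)
    # Keep first-seen order so the result is deterministic.
    promoted = []
    for statement in normalized:
        if counts[statement] >= min_count and statement not in promoted:
            promoted.append(statement)
    return promoted


episodic_log = [
    "Friday deploys need SRE approval.",
    "User asked about rollback steps",
    "friday deploys need  SRE approval",
    "User works on api-gateway",
]

semantic_facts = consolidate(episodic_log)
print(semantic_facts)
```

Only the approval rule appears twice, so it is the only statement promoted. One-off events stay in the episodic log.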

Hands-on: Building Agent Memory with LangGraph in Google Colab

In this hands-on section, we will build one LangGraph agent that uses three memory patterns:

| Memory Type | Purpose |
| --- | --- |
| Short-term memory | Keeps the current conversation thread active |
| Episodic memory | Stores what happened in past interactions |
| Semantic memory | Stores reusable facts, rules, and preferences |

We want to build an agent that can:

1. Remember the current conversation.

2. Save past interactions as episodic memory.

3. Store reusable facts as semantic memory.

4. Retrieve useful memory before answering.

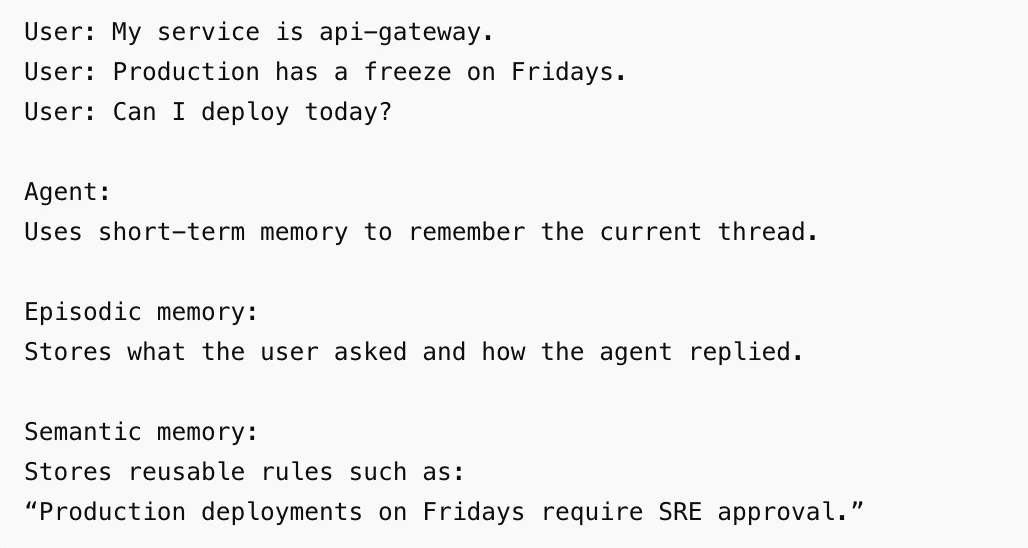

Instance circulation:

Step 1: Set up Required Packages

!pip -q set up -U langgraph langchain-openai Step 2: Set the API Key

In Colab, use getpass so the key is hidden.

```python
import os
from getpass import getpass

# Prompt for the key only if it is not already set in the environment.
if "OPENAI_API_KEY" not in os.environ:
    os.environ["OPENAI_API_KEY"] = getpass("Enter your OpenAI API key: ")
```

Step 3: Import Libraries

```python
from dataclasses import dataclass
from datetime import datetime, timezone
import uuid

from langchain_openai import ChatOpenAI, OpenAIEmbeddings
from langgraph.graph import StateGraph, MessagesState, START
from langgraph.checkpoint.memory import InMemorySaver
from langgraph.store.memory import InMemoryStore
from langgraph.runtime import Runtime
```

Step 4: Create the Model

```python
model = ChatOpenAI(
    model="gpt-4o-mini",
    temperature=0,
)
```

We use temperature=0 so the output is more stable during the demo.

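Step 5: Create Shared Memory Components

This demo uses one checkpointer and one memory store.

```python
embeddings = OpenAIEmbeddings(model="text-embedding-3-small")

# The store indexes saved items with embeddings so they can be searched by meaning.
store = InMemoryStore(
    index={
        "embed": embeddings,
        "dims": 1536,  # default vector size of text-embedding-3-small
    }
)

# The checkpointer keeps per-thread conversation state.
checkpointer = InMemorySaver()
```

Here is what each component does: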

| Component | Purpose |
| --- | --- |
| InMemorySaver | Stores short-term thread state |
| InMemoryStore | Stores episodic and semantic memories |
| OpenAIEmbeddings | Helps retrieve semantic memories using similarity search |
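Similarity search ranks stored items by how close their embedding vectors are to the query vector, commonly using cosine similarity. The sketch below shows the idea with hand-made three-dimensional vectors. The vectors and the `cosine_similarity` and `top_match` helpers are invented for illustration and say nothing about how `InMemoryStore` is implemented internally.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hand-made toy "embeddings"; a real system would get these from an embedding model.
stored = {
    "Friday production deploys need SRE approval": [0.9, 0.1, 0.0],
    "The user prefers short answers": [0.0, 0.2, 0.9],
}


def top_match(query_vector: list[float]) -> str:
    """Return the stored text whose vector is most similar to the query."""
    return max(stored, key=lambda text: cosine_similarity(stored[text], query_vector))


# A query vector that points in roughly the same direction as the deployment fact.
print(top_match([0.8, 0.2, 0.1]))
```

Because the comparison works on vectors and not on shared words, a question and a stored fact can match even when they use different wording, provided the embedding model places them close together.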

Step 6: Define User Context

We use user_id to keep memory separated by user.

```python
@dataclass
class AgentContext:
    user_id: str
```

This is important because one user’s memory should not appear in another user’s conversation.

Step 7: Add Helper Functions

This helper extracts a semantic memory when the user starts a message with “remember that”.

```python
def extract_semantic_memory(message: str):
    prefix = "remember that"
    # Match the prefix case-insensitively and strip only the leading occurrence,
    # so later uses of the phrase inside the fact are left untouched.
    if message.lower().startswith(prefix):
        return message[len(prefix):].strip()
    return None
```

This helper formats stored memories before passing them to the model.

```python
def format_memories(items, key):
    if not items:
        return "No relevant memories found."
    # One bullet per stored memory, using the requested field of each item.
    return "\n".join(
        f"- {item.value[key]}"
        for item in items
    )
```

Step 8: Define the Agent Node

This is the main part of the demo. The agent does four things:

1. Reads the latest user message.

2. Retrieves semantic memories.

3. Generates a response.

4. Saves episodic and semantic memory.

```python
def agent_node(state: MessagesState, runtime: Runtime[AgentContext]):
    user_id = runtime.context.user_id
    latest_user_message = state["messages"][-1].content

    # Namespaces keep each memory type separated per user.
    episodic_namespace = ("episodic_memory", user_id)
    semantic_namespace = ("semantic_memory", user_id)

    # Retrieve semantic memories related to the latest message.
    semantic_memories = runtime.store.search(
        semantic_namespace,
        query=latest_user_message,
        limit=5,
    )
    semantic_memory_text = format_memories(semantic_memories, key="fact")

    # Pass the retrieved memory to the model through the system prompt.
    system_message = {
        "role": "system",
        "content": f"""
You are a helpful deployment assistant.
Use the memory below only when it is relevant.

Semantic memory:
{semantic_memory_text}
""",
    }
    response = model.invoke([system_message] + state["messages"])

    # Log the interaction as an episodic memory.
    episode = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event": f"User asked: {latest_user_message}. Agent replied: {response.content}",
        "user_message": latest_user_message,
        "agent_response": response.content,
        "memory_type": "episodic",
    }
    runtime.store.put(episodic_namespace, str(uuid.uuid4()), episode)

    # Save a semantic memory only when the user asked the agent to remember a fact.
    semantic_fact = extract_semantic_memory(latest_user_message)
    if semantic_fact:
        runtime.store.put(
            semantic_namespace,
            str(uuid.uuid4()),
            {
                "fact": semantic_fact,
                "memory_type": "semantic",
                "created_at": datetime.now(timezone.utc).isoformat(),
            },
        )

    return {"messages": [response]}
```

Step 9: Build the LangGraph Agent

```python
builder = StateGraph(
    MessagesState,
    context_schema=AgentContext,
)
builder.add_node("agent", agent_node)
builder.add_edge(START, "agent")

# The checkpointer provides thread state; the store provides long-term memory.
graph = builder.compile(
    checkpointer=checkpointer,
    store=store,
)
```

At this point, the agent is ready.

Step 10: Create a Thread and User Context

```python
config = {
    "configurable": {
        "thread_id": "deployment-thread-1"
    }
}

context = AgentContext(user_id="user-123")
```

The thread_id controls short-term memory. The user_id controls long-term memory separation.
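The difference between the two identifiers can be mimicked with plain dictionaries. This sketch is independent of LangGraph: the `ScopedMemory` class and its methods are hypothetical, and it only illustrates that thread history is keyed by `thread_id` while long-term items are keyed by a `(memory_type, user_id)` namespace.

```python
from collections import defaultdict


class ScopedMemory:
    """Toy model of thread-scoped history and user-scoped long-term memory."""

    def __init__(self):
        self.threads = defaultdict(list)    # thread_id -> list of messages
        self.long_term = defaultdict(list)  # (memory_type, user_id) -> items

    def add_message(self, thread_id: str, message: str) -> None:
        self.threads[thread_id].append(message)

    def remember(self, memory_type: str, user_id: str, item: str) -> None:
        self.long_term[(memory_type, user_id)].append(item)

    def history(self, thread_id: str) -> list[str]:
        return list(self.threads.get(thread_id, []))

    def recall(self, memory_type: str, user_id: str) -> list[str]:
        return list(self.long_term.get((memory_type, user_id), []))


memory = ScopedMemory()
memory.add_message("deployment-thread-1", "My service is api-gateway.")
memory.remember("semantic_memory", "user-123", "Friday deploys need SRE approval")

# A new thread starts with empty history, but the same user keeps long-term facts.
print(memory.history("deployment-thread-2"))
print(memory.recall("semantic_memory", "user-123"))
# A different user sees none of user-123's facts.
print(memory.recall("semantic_memory", "user-456"))
```

Keeping the two keys separate means that a user can open a fresh thread without losing saved facts, and that no lookup can cross from one user’s namespace into another’s.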

Demo 1: Short-Term Memory

Short-term memory helps the agent remember the current conversation thread.

Run the first turn:

```python
response_1 = graph.invoke(
    {"messages": [{"role": "user", "content": "My service is api-gateway."}]},
    config=config,
    context=context,
)

print(response_1["messages"][-1].content)
```

Run the second turn:

```python
response_2 = graph.invoke(
    {"messages": [{"role": "user", "content": "Production has a freeze on Fridays."}]},
    config=config,
    context=context,
)

print(response_2["messages"][-1].content)
```

Now ask a follow-up question:

```python
response_3 = graph.invoke(
    {"messages": [{"role": "user", "content": "Can I deploy today?"}]},
    config=config,
    context=context,
)

print(response_3["messages"][-1].content)
```

Output:

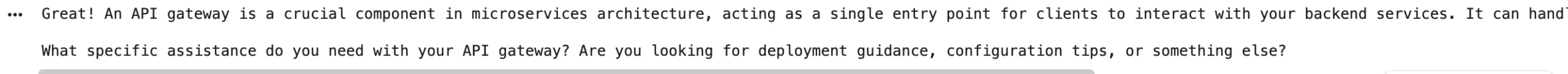

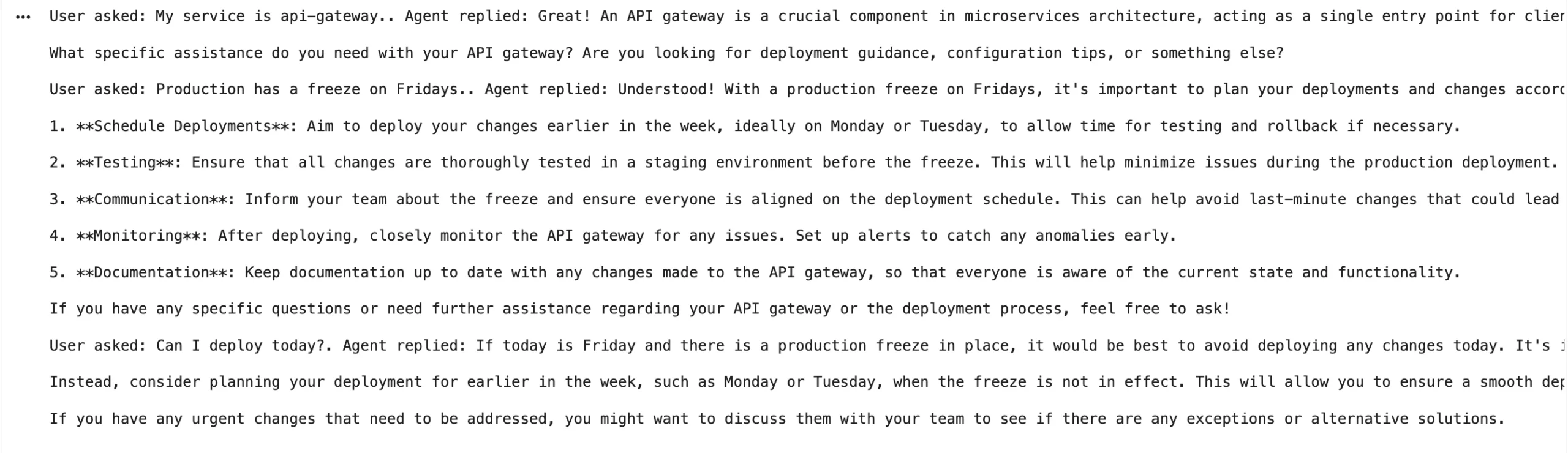

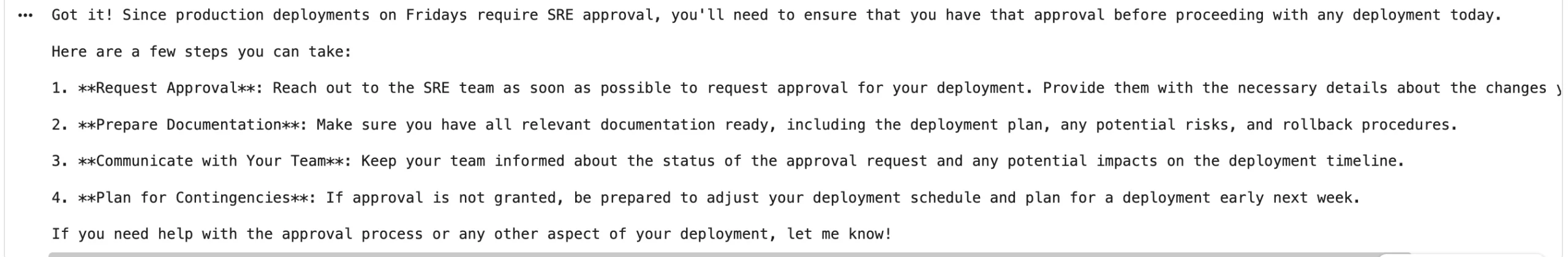

From the output we can see that the agent remembers that the service is api-gateway and that production has a freeze on Fridays.

This shows short-term memory because the agent uses previous messages from the same thread.

Demo 2: Episodic Reminiscence

Episodic reminiscence shops what occurred throughout interactions. In our agent, each person message and agent response is saved as an episode.

Run this cell to examine saved episodic recollections:

episodic_namespace = (

"episodic_memory",

"user-123"

)

episodes = retailer.search(

episodic_namespace,

restrict=10

)

for episode in episodes:

print(episode.worth["event"])

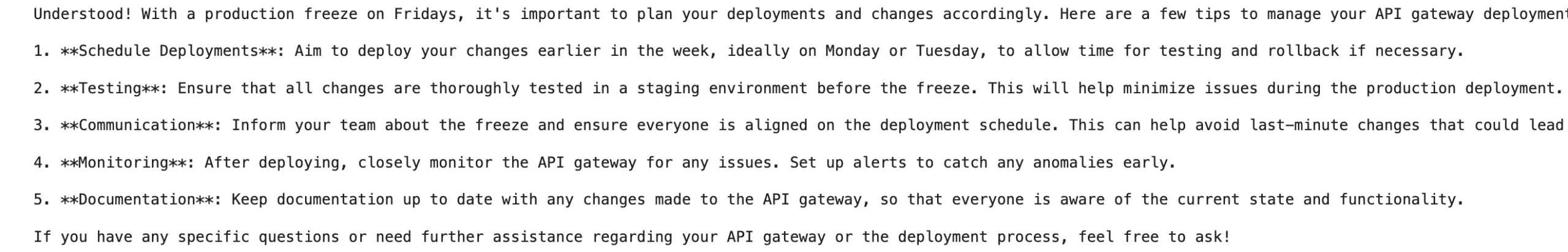

print()Output:

This is episodic memory because it stores specific events. It records what happened, when it happened, and how the agent responded.

Demo 3: Semantic Memory

Semantic memory stores reusable facts. In this demo, the agent saves a semantic memory when the user starts a message with “Remember that”.

Run this cell:

```python
response_4 = graph.invoke(
    {
        "messages": [
            {
                "role": "user",
                "content": "Remember that production deployments on Fridays require SRE approval.",
            }
        ]
    },
    config=config,
    context=context,
)

print(response_4["messages"][-1].content)
```

Now ask a query that ought to use this saved reality:

response_5 = graph.invoke(

{

"messages": [

{

"role": "user",

"content": "Can I deploy api-gateway on Friday?"

}

]

},

config=config,

context=context

)

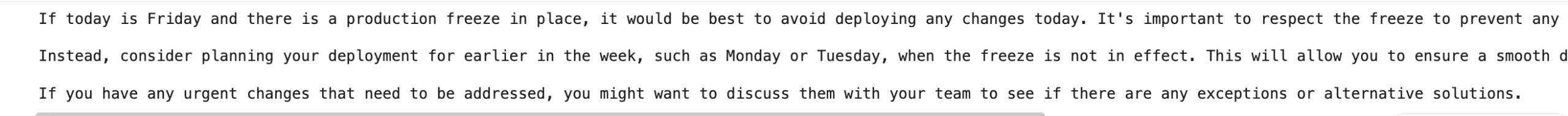

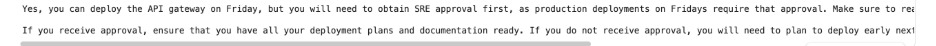

print(response_5["messages"][-1].content material)Output:

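We can see that the agent answered that Friday production deployments require SRE approval.

This shows semantic memory because the stored fact is reusable. It’s not just a record of one event. It’s knowledge the agent can use again later. Note that this call reuses the same thread_id, so the fact is also present in the conversation history; repeating the question under a new thread_id would isolate the effect of the semantic store.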

Inspect Semantic Memory

Run this cell to see the stored semantic facts:

```python
semantic_namespace = ("semantic_memory", "user-123")

semantic_memories = store.search(
    semantic_namespace,
    query="Friday deployment approval",
    limit=5,
)

for memory in semantic_memories:
    print(memory.value["fact"])
```

| Memory Type | Where It Appears in the Demo | What It Does |
| --- | --- | --- |
| Short-term memory | Same thread_id | Keeps the conversation connected |
| Episodic memory | episodic_memory namespace | Stores interaction history |
| Semantic memory | semantic_memory namespace | Stores reusable facts |
| User separation | user_id in namespace | Prevents memory mixing across users |

This hands-on demo shows how different memory types can work together in one LangGraph agent. Short-term memory keeps the current conversation active. Episodic memory stores what happened. Semantic memory stores reusable knowledge. In Google Colab, in-memory storage is simple and useful for learning. For production systems, these memory layers should be moved to persistent storage so the agent can preserve memory after restarts.

Choosing the Right Storage Backend

After building memory into an agent, the next question is where to store it. The best storage backend depends on how the memory will be used.

Short-term memory needs fast access during the current conversation. Episodic memory needs to store events and history. Semantic memory needs search over facts, rules, and preferences. Long-term memory needs to stay available across sessions.

| Memory Type | Good Storage Choice | Why |
| --- | --- | --- |
| Short-term memory | In-memory store, Redis, PostgreSQL checkpointer | Fast access during the active thread |
| Episodic memory | SQLite, PostgreSQL, MongoDB | Stores events, timestamps, and history |
| Semantic memory | Vector store, Chroma, FAISS, PostgreSQL with vector support | Supports search over meaning |
| Long-term memory | PostgreSQL, MongoDB, durable key-value store | Keeps memory across sessions |

A good memory backend should also support separation by user, thread, and memory type. This prevents memory from mixing across users and makes retrieval easier to control.

In short, choose the backend based on the memory’s job: speed for short-term memory, history for episodic memory, search for semantic memory, and durability for long-term memory. A well-designed agent separates these memory layers so the system stays fast, searchable, and easier to manage.
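As one illustration of a durable episodic backend, the sketch below writes episodes to SQLite using Python’s built-in `sqlite3` module. The table layout, the `save_episode` and `load_episodes` helpers, and the sample thread names are assumptions for this example. It uses an in-memory database so it runs anywhere; a file path would be needed for data to survive restarts.

```python
import sqlite3
from datetime import datetime, timezone

# ":memory:" keeps the example self-contained; pass a file path for real persistence.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE IF NOT EXISTS episodes (
        id INTEGER PRIMARY KEY AUTOINCREMENT,
        user_id TEXT NOT NULL,
        thread_id TEXT NOT NULL,
        created_at TEXT NOT NULL,
        event TEXT NOT NULL
    )
    """
)


def save_episode(user_id: str, thread_id: str, event: str) -> None:
    """Append one event; values are bound as parameters, not formatted into the SQL."""
    conn.execute(
        "INSERT INTO episodes (user_id, thread_id, created_at, event) VALUES (?, ?, ?, ?)",
        (user_id, thread_id, datetime.now(timezone.utc).isoformat(), event),
    )
    conn.commit()


def load_episodes(user_id: str, limit: int = 10) -> list[str]:
    """Return the most recent events for one user only, newest first."""
    rows = conn.execute(
        "SELECT event FROM episodes WHERE user_id = ? ORDER BY id DESC LIMIT ?",
        (user_id, limit),
    ).fetchall()
    return [row[0] for row in rows]


save_episode("user-123", "deployment-thread-1", "User asked about Friday deployment")
save_episode("user-456", "billing-thread-7", "User asked about invoices")
save_episode("user-123", "deployment-thread-1", "Agent replied that approval is required")

print(load_episodes("user-123"))
```

Filtering by `user_id` inside the query, instead of after loading, is what keeps one user’s history out of another user’s results.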

Security, Privacy, and Governance

Memory makes an agent more useful, but it also increases risk. When information is stored across sessions, incorrect or sensitive memories can affect future responses. A memory system must therefore control what is stored, who can access it, how long it stays, and how it can be deleted.

The main risks include memory poisoning, prompt injection through stored content, sensitive data leakage, cross-user memory leakage, and stale memory. For example, an agent should not save API keys, passwords, tokens, or private user data as memory.

A safe memory system should follow a few clear rules:

| Rule | Why It Matters |
| --- | --- |
| Store only useful information | Reduces noise and unnecessary risk |
| Avoid secrets and sensitive data | Prevents accidental exposure |
| Separate memory by user and project | Avoids cross-user leakage |
| Validate important memories | Prevents false or harmful memories |
| Support deletion | Allows unsafe or outdated memory to be removed |
| Keep memory below system rules | Prevents stored content from overriding core instructions |

Memory should also include provenance when possible. The system should know where a memory came from, when it was created, and whether it is still valid.

Agent memory should be useful, but it must also be controlled. A good memory system stores only safe and valuable information, separates users clearly, supports deletion, and prevents stored memories from overriding fixed system rules. This makes agent memory safer, more reliable, and easier to manage.
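A few of these rules can be enforced in code before anything is written to memory. The sketch below is a rough illustration under stated assumptions: the regular expressions are simplistic examples and not a complete secret detector, and the `looks_sensitive` and `make_memory_record` helpers and the `source` and `valid` fields are hypothetical names.

```python
import re
from datetime import datetime, timezone
from typing import Optional

# Simplistic example patterns; a real deployment would need a much broader detector.
SECRET_PATTERNS = [
    re.compile(r"sk-[A-Za-z0-9]{16,}"),                     # API-key-like strings
    re.compile(r"(?i)\b(password|token|secret)\b\s*[:=]"),  # "password: ..." style text
]


def looks_sensitive(text: str) -> bool:
    """Return True if the text matches any of the example secret patterns."""
    return any(pattern.search(text) for pattern in SECRET_PATTERNS)


def make_memory_record(text: str, user_id: str, source: str) -> Optional[dict]:
    """Build a record with provenance, or return None if the text should not be stored."""
    if looks_sensitive(text):
        return None
    return {
        "user_id": user_id,  # keeps memory separated by user
        "fact": text,
        "source": source,    # provenance: where the memory came from
        "created_at": datetime.now(timezone.utc).isoformat(),
        "valid": True,       # can be set to False when the fact becomes stale
    }


safe = make_memory_record("Friday deploys need SRE approval", "user-123", "user_message")
blocked = make_memory_record("my password: hunter2", "user-123", "user_message")

print(safe is not None)
print(blocked is None)
```

Rejecting a record at write time is cheaper than cleaning it up later, and the `source` and `created_at` fields give a reviewer enough context to decide whether a stored fact can still be trusted.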

Conclusion

Agent memory helps AI agents maintain context, recall past interactions, and reuse useful knowledge. By separating memory into short-term, episodic, semantic, and long-term layers, developers can build agents that are more organized and reliable. Short-term memory supports the current conversation. Episodic memory records events. Semantic memory stores reusable facts. Long-term memory keeps important information across sessions. The LangGraph demo shows how these ideas can be implemented in practice. However, memory must be managed carefully. A good system should store only useful information, protect sensitive data, support deletion, and prevent memory leakage. Well-designed memory makes agents more consistent, personalized, and trustworthy.

Frequently Asked Questions

A. Agent memory lets AI agents store, recall, and reuse information to improve future responses.

A. Different memory types handle current context, past events, reusable facts, and long-term continuity.

A. Safe memory stores only useful information, protects sensitive data, separates users, supports deletion, and prevents leakage.
