If you've been following the AI space lately, you've probably noticed something big: people don't just care what an AI answers anymore, they care how it reaches that answer. And that's exactly where DeepSeek Math V2 steps in. It's an open-source model built specifically for real mathematical reasoning.

In this guide, I'll walk you through what DeepSeek Math V2 is, why everyone is talking about its generator-verifier system, and how this model manages to solve complex proofs while checking its own work like a strict math teacher. If you're curious about how AI is finally getting good at formal math, keep reading.

What is DeepSeek Math V2?

DeepSeek Math V2 is DeepSeek-AI's latest open-source LLM built specifically for mathematical reasoning and theorem proving. Released at the end of 2025, it marks a big shift from AI models that simply return final answers to ones that actually show their work and justify every step.

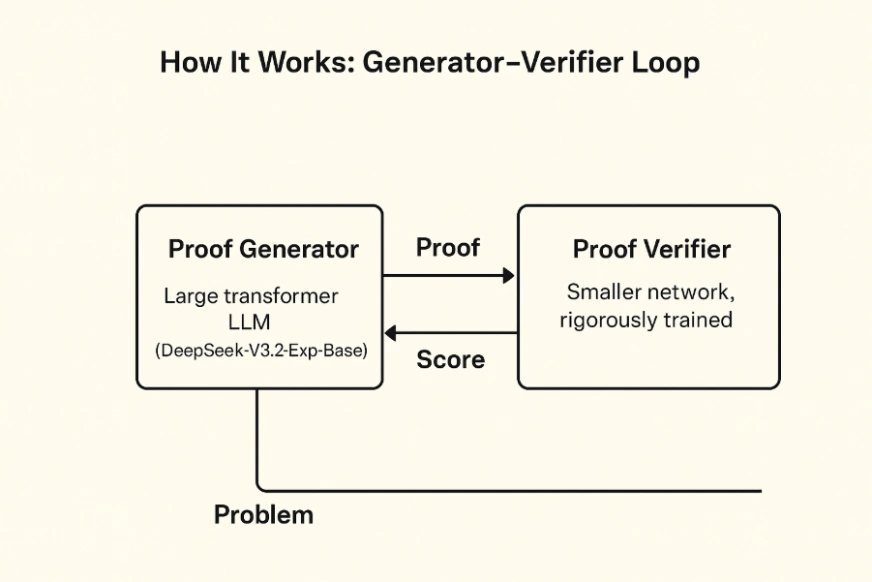

What makes it special is its two-model generator-verifier setup. One model writes the proof, and the second model checks each step like a logic inspector. So instead of just solving a problem, DeepSeek Math V2 also evaluates whether its own reasoning makes sense. The team trained it with reinforcement learning, rewarding not just correct answers but clear, rigorous derivations.

And the results speak for themselves. DeepSeek Math V2 performs at the top level in major math competitions, scoring around 83.3% on IMO 2025 and 98.3% on Putnam 2024. It surpasses earlier open models and comes surprisingly close to the best proprietary systems out there.

Key Features of DeepSeek Math V2

- Massive scale: With 685B parameters built on DeepSeek-V3.2-Exp-Base, the model handles extremely long proofs using multiple numeric formats (BF16, F8_E4M3, F32) and sparse attention for efficient computation.

- Self-verification: A dedicated verifier checks every proof step for logical consistency. If a step is wrong or a theorem is misapplied, the system flags it and the generator is retrained to avoid repeating the error. This feedback loop forces the model to refine its reasoning.

- Reinforcement training: The model was trained on mathematical literature and synthetic problems, then improved through proof-based reinforcement learning. The generator proposes solutions, the verifier scores them, and harder proofs yield stronger rewards, pushing the model toward deeper and more accurate derivations.

- Open source and accessible: The weights are released under Apache 2.0 and available on Hugging Face and GitHub. You can also try DeepSeek Math V2 directly through the free DeepSeek Chat interface, which supports non-commercial research and educational use.
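
To put the 685B figure from the first bullet in perspective, here is a rough back-of-the-envelope sketch of the weight footprint in each listed format. It assumes every parameter is stored in a single format and ignores activations and the KV cache, so treat the numbers as lower bounds rather than exact requirements; the function and variable names are illustrative.

```python
# Rough weight-only memory estimate for a 685B-parameter model.
# Assumption: all parameters use one format; the real checkpoint mixes
# formats, and activations / KV cache are not counted here.
PARAMS = 685e9

BYTES_PER_PARAM = {
    "F32": 4,
    "BF16": 2,
    "F8_E4M3": 1,
}


def weight_gigabytes(num_params: float, bytes_per_param: int) -> float:
    """Return the weight footprint in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


for name, size in BYTES_PER_PARAM.items():
    print(f"{name:8s} ~{weight_gigabytes(PARAMS, size):,.0f} GB")
```

Even in the most compact format the weights alone run to hundreds of gigabytes, which is why a multi-GPU setup is needed for local inference.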

The Two-Model Architecture of DeepSeek Math V2

DeepSeek Math V2's architecture has two main components that interact with each other:

- Proof Generator: This large transformer LLM (DeepSeek-V3.2-Exp-Base) is responsible for creating step-by-step mathematical proofs based on the problem statement.

- Proof Verifier: Although it is a smaller network, it is extensively trained. It represents each proof as a sequence of logical steps (for example, via an abstract syntax tree) and applies mathematical rules to them. It flags inconsistencies in the reasoning and invalid manipulations, and assigns a "score" to each proof.

Training happens in two stages. First, the verifier is trained on known correct and incorrect proofs. Then the generator is trained with the verifier acting as its reward model. Every time the generator produces a proof, the verifier scores it. Wrong steps get penalized, fully correct proofs get rewarded, and over time the generator learns to produce clear, valid derivations.
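
The article does not give the actual reward formula, so the following is only a toy sketch of the idea that wrong steps are penalized and fully correct proofs are rewarded. The per-step scores, the 0.5 threshold, and the function name are all assumptions made for illustration.

```python
from typing import List


def proof_reward(step_scores: List[float], valid_threshold: float = 0.5) -> float:
    """Toy reward: +1 for a fully valid proof, otherwise a penalty per bad step.

    step_scores are hypothetical per-step verifier scores in [0, 1].
    """
    if not step_scores:
        return -1.0  # an empty proof earns the maximum penalty
    bad_steps = sum(1 for s in step_scores if s < valid_threshold)
    if bad_steps == 0:
        return 1.0
    # The penalty scales with the fraction of steps the verifier rejected.
    return -bad_steps / len(step_scores)


print(proof_reward([0.9, 0.8, 0.95]))  # every step accepted
print(proof_reward([0.9, 0.2, 0.95]))  # one of three steps rejected
```

In a real reinforcement learning setup this scalar would feed a policy-gradient update for the generator; the sketch only shows how verifier scores could be turned into a single training signal.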

Multi-Pass Verification and Search

As the generator improves and starts producing harder proofs, the verifier receives extra compute, such as more search passes, to catch subtler errors. This creates a moving target where the verifier always stays slightly ahead, pushing the generator to improve continuously.

During normal operation, the model also uses a multi-pass inference process. It generates many candidate proof drafts, and the verifier checks each one. DeepSeek Math V2 can branch in an MCTS-style search where it explores different proof paths, removes the ones with low verifier scores, and iterates on the promising ones. In simple terms, it keeps rewriting its work until the verifier approves it.

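    def generate_verified_proof(problem):
        # Pseudocode: helper names are placeholders. The source listing breaks off
        # at the score check, so the pruning and selection follow the prose above.
        root = initialize_state(problem)
        while not root.is_complete():
            children = expand(root, generator)
            kept = []
            for child in children:
                score = verifier.evaluate(child.proof_step)
                if score < THRESHOLD:
                    continue  # prune low-scoring branches
                kept.append((score, child))
            root = select_best(kept)  # continue from the most promising branch
        return root

DeepSeek Math V2 ensures that every answer comes with clear, step-by-step reasoning, thanks to its combination of generation and real-time verification. This is a major upgrade from models that only aim for the final answer without showing how they reached it.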
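
To make the generate, score, prune, and iterate loop concrete, here is a small self-contained sketch that actually runs. It is a greedy simplification rather than a full MCTS, and the generator and verifier are replaced with toy stand-ins; none of the names below come from DeepSeek's code.

```python
from typing import Callable, List


def refine_until_approved(
    propose: Callable[[List[str]], List[List[str]]],
    score: Callable[[List[str]], float],
    is_complete: Callable[[List[str]], bool],
    min_score: float = 0.5,
    max_rounds: int = 10,
) -> List[str]:
    """Expand drafts, prune low-scoring ones, continue from the best survivor."""
    draft: List[str] = []
    for _ in range(max_rounds):
        if is_complete(draft):
            break
        candidates = propose(draft)
        survivors = [c for c in candidates if score(c) >= min_score]
        if not survivors:
            break  # every branch was rejected; stop extending this path
        draft = max(survivors, key=score)
    return draft


# Toy stand-ins: a "proof" is a list of step labels, and the stub verifier
# rejects any draft that contains a step marked "unjustified".
TARGET = ["expand", "apply AM-GM", "conclude"]


def toy_propose(draft: List[str]) -> List[List[str]]:
    next_step = TARGET[len(draft)]
    return [draft + [next_step], draft + ["unjustified leap"]]


def toy_score(draft: List[str]) -> float:
    return 0.0 if any("unjustified" in step for step in draft) else 1.0


def toy_is_complete(draft: List[str]) -> bool:
    return len(draft) == len(TARGET)


result = refine_until_approved(toy_propose, toy_score, toy_is_complete)
print(result)
```

Passing the generator and verifier in as plain callables keeps the search logic independent of any particular model, so the stubs could later be swapped for real model calls.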

How to Access DeepSeek Math V2?

The model weights and code are publicly available under an Apache 2.0 license (DeepSeek additionally mentions a non-commercial research-friendly license). To try it out, you can:

- Download from Hugging Face: The model is hosted on Hugging Face at deepseek-ai/DeepSeek-Math-V2. Using the Hugging Face Transformers library, you can load the model and tokenizer. Keep in mind that it is huge: you'll need at least several high-end GPUs (the repo recommends 8×A100) or TPU pods for inference.

- DeepSeek Chat interface: If you don't have massive compute, DeepSeek offers a free web demo at chat.deepseek.com. This "Chat with DeepSeek AI" interface allows interactive prompting (including math queries) without setup. It's an easy way to see the model's output on sample problems.

- APIs and integration: You can deploy the model via any standard serving framework (e.g. DeepSeek's GitHub has code for multi-pass inference). Tools like Apidog or FastAPI can help wrap the model in an API. For example, one might create an endpoint /solve-proof that takes a problem text and returns the model's proof and verifier comments.
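
As a rough illustration of the /solve-proof idea in the last bullet, here is a minimal sketch that uses only Python's standard library instead of FastAPI, so it runs without extra dependencies. The request field `problem`, the response fields, and `fake_prover` are assumptions for this example; a real deployment would call the model where the placeholder is.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Callable, Dict, Tuple


def fake_prover(problem: str) -> Dict[str, str]:
    """Placeholder for the real model call; returns canned text."""
    return {"proof": f"(proof of: {problem})", "verifier_comments": "(none)"}


def handle_solve_proof(
    body: bytes, prover: Callable[[str], Dict[str, str]] = fake_prover
) -> Tuple[int, dict]:
    """Validate the JSON payload and return (HTTP status, response dict)."""
    try:
        payload = json.loads(body)
    except (json.JSONDecodeError, UnicodeDecodeError):
        return 400, {"error": "body must be valid JSON"}
    problem = payload.get("problem") if isinstance(payload, dict) else None
    if not isinstance(problem, str) or not problem.strip():
        return 400, {"error": "missing 'problem' string"}
    return 200, prover(problem.strip())


class SolveProofHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/solve-proof":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        status, result = handle_solve_proof(self.rfile.read(length))
        data = json.dumps(result).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)


def make_server(port: int = 8000) -> HTTPServer:
    """Build (but do not start) a server exposing POST /solve-proof."""
    return HTTPServer(("127.0.0.1", port), SolveProofHandler)
```

Calling `make_server().serve_forever()` starts the server locally, after which a JSON body such as `{"problem": "..."}` can be posted to `/solve-proof`. Keeping the validation in `handle_solve_proof` makes the same logic easy to reuse behind FastAPI or another framework.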

Now, let's try the model out!

Task 1: Generate a Step-by-Step Proof

Prerequisites:

- GPUs with at least 40GB VRAM each (e.g., A100, H100, or similar); as noted above, the full model needs several of them.

- Python environment (Python 3.10+)

Install the latest versions of the required libraries:

    pip install --upgrade transformers accelerate bitsandbytes torch

Step 1: Choose a Math Problem

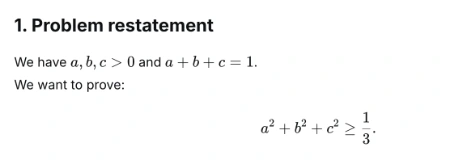

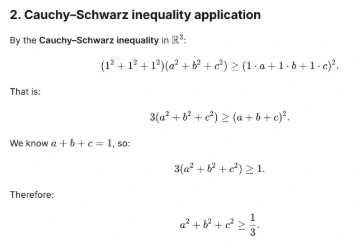

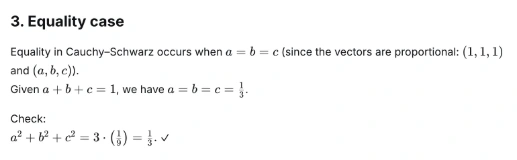

For this hands-on, we'll use the following problem, which is very common in math olympiads:

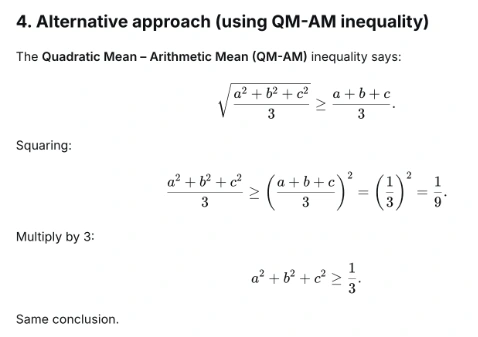

Let a, b, c be positive real numbers such that a + b + c = 1. Prove that a² + b² + c² ≥ 1/3.
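
For reference when judging the model's answer, here is one standard hand proof of this inequality. It is a well-known argument written out as a sketch, not the model's output:

```latex
\begin{align*}
a^2 + b^2 + c^2 - \tfrac{1}{3}
  &= a^2 + b^2 + c^2 - \tfrac{1}{3}(a + b + c)^2 && \text{since } a + b + c = 1 \\
  &= \tfrac{1}{3}\bigl(2a^2 + 2b^2 + 2c^2 - 2ab - 2bc - 2ca\bigr) \\
  &= \tfrac{1}{3}\bigl((a - b)^2 + (b - c)^2 + (c - a)^2\bigr) \;\ge\; 0 .
\end{align*}
```

Equality holds exactly when a = b = c = 1/3, which is a useful sanity check on any proof the model produces.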

Step 2: Python script to run the model

    from transformers import AutoTokenizer, AutoModelForCausalLM
    import torch

    # Load model and tokenizer
    model_id = "deepseek-ai/DeepSeek-Math-V2"
    tokenizer = AutoTokenizer.from_pretrained(model_id, trust_remote_code=True)
    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        torch_dtype=torch.bfloat16,
        device_map="auto",
        trust_remote_code=True,
    )

    # Prompt
    prompt = """You are DeepSeek-Math-V2, a competition-level mathematical reasoning model.
    Solve the following problem step-by-step. Provide a complete and rigorous proof.

    Problem: Let a, b, c be positive real numbers such that a + b + c = 1. Prove that a² + b² + c² ≥ 1/3.

    Solution:"""

    # Tokenize and generate
    inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
    outputs = model.generate(
        **inputs,
        max_new_tokens=512,
        temperature=0.2,
        top_p=0.95,
        do_sample=True,
    )

    # Decode and print result
    output_text = tokenizer.decode(outputs[0], skip_special_tokens=True)
    print("\n=== Proof Output ===\n")
    print(output_text)

Step 3: Run the script

In your terminal, run the following command:

    python deepseek_math_demo.py

Or, if you prefer, you can test it in the web interface as well.

Output:

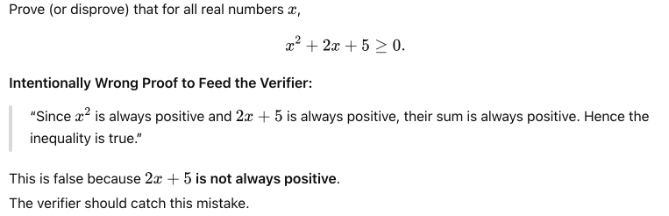

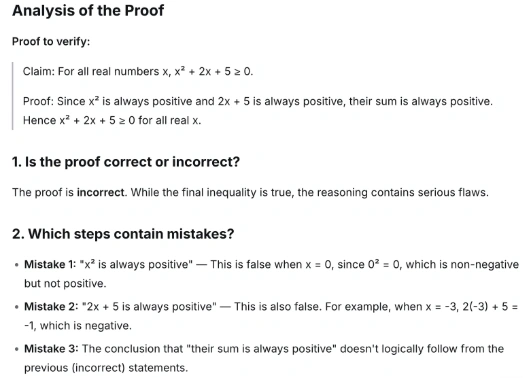

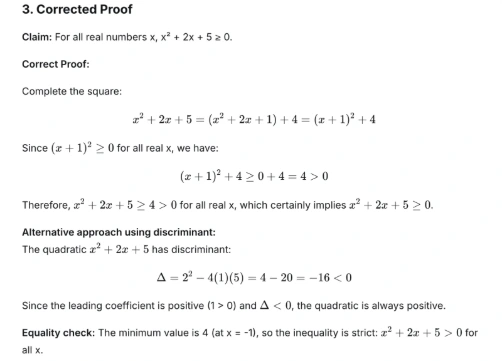

Task 2: Check the Correctness of a Mathematical Proof

In this task, we'll feed DeepSeek Math V2 a flawed math proof and ask its verifier component to critique and validate the reasoning. This demonstrates one of the most important features of DeepSeek Math V2: self-verification.

Step 1: Define the Problem:

Step 2: Add the Verifier Prompt code:

    from transformers import AutoTokenizer, AutoModelForCausalLM
    import torch

    model_id = "deepseek-ai/DeepSeek-Math-V2"
    tokenizer = AutoTokenizer.from_pretrained(model_id, trust_remote_code=True)
    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        torch_dtype=torch.bfloat16,
        device_map="auto",
        trust_remote_code=True,
    )

    # Incorrect proof for DeepSeek to verify
    incorrect_proof = """
    Claim: For all real numbers x, x^2 + 2x + 5 ≥ 0.
    Proof: Since x^2 is always positive and 2x + 5 is always positive, their sum is always positive. Hence x^2 + 2x + 5 ≥ 0 for all real x.
    """

    prompt = f"""You are the DeepSeek Math V2 Verifier.
    Your task is to critically analyze the following proof, identify incorrect reasoning,
    and provide a corrected, rigorous explanation.

    Proof to verify:
    {incorrect_proof}

    Please provide:
    1. Whether the proof is correct or incorrect.
    2. Which steps contain errors.
    3. A corrected proof.
    """

    inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
    outputs = model.generate(
        **inputs,
        max_new_tokens=600,
        temperature=0.2,
        top_p=0.95,
        do_sample=True,
    )

    print("\n=== Verifier Output ===\n")
    print(tokenizer.decode(outputs[0], skip_special_tokens=True))
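
Whatever the model returns, it helps to know what a good critique should catch. In the flawed proof from the script above, x^2 is only non-negative (it is 0 at x = 0), and 2x + 5 is negative whenever x < -5/2 (for example, x = -3 gives -1). The claim itself is still true, and a correct argument completes the square:

```latex
x^2 + 2x + 5 = (x + 1)^2 + 4 \;\ge\; 4 \;>\; 0 \quad \text{for all real } x .
```

A sound verifier response should therefore mark the proof as incorrect while confirming that the statement holds.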

Step 3: Run the script

In your terminal, run the following command:

    python deepseek_verifier_demo.py

Output:

Performance and Benchmarks

DeepSeek Math V2 delivers standout results across major math benchmarks:

- International Mathematical Olympiad (IMO) 2025: Scored around 83.3 percent by fully solving problems 1 to 5 and partially solving problem 6. This matches top closed-source systems, even before its official contest entry.

- China Mathematical Olympiad (CMO) 2024: Scored about 73.8 percent by fully solving 4 of 6 problems and partially solving the rest.

- Putnam Exam 2024: Achieved 98.3 percent (118 out of 120 points) under scaled compute, only missing partial credit on the hardest questions.

- ProofBench (DeepMind): Received about 99 percent approval on basic proofs and 62 percent on advanced proofs, outperforming GPT-4, Claude 4, and Gemini on structured reasoning.
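
The headline percentages are easy to check against the raw scores. The short sketch below assumes the standard IMO format of six problems worth 7 points each and takes the 118/120 Putnam score from the list above; the helper name is illustrative.

```python
def percent(points: int, max_points: int) -> float:
    """Convert a raw score to a percentage rounded to one decimal place."""
    return round(100 * points / max_points, 1)


# IMO: 6 problems worth 7 points each; five full solutions give 35 of 42.
imo = percent(5 * 7, 6 * 7)
# Putnam: 118 of 120 points, as stated in the list above.
putnam = percent(118, 120)

print(imo, putnam)  # 83.3 98.3
```

Note that 83.3 percent corresponds exactly to five full solutions, which suggests the partial attempt at problem 6 did not earn points; that reading is an inference from the arithmetic, not something the article states.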

In side-by-side comparisons, DeepSeek Math V2 consistently beats leading models on proof accuracy by 15 to 20 percent. Many models still guess or skip steps, while DeepSeek's strict verification loop reduces error rates significantly, with reports showing up to 40 percent fewer reasoning errors than speed-focused systems.

Applications and Significance

DeepSeek Math V2 is not just strong in competitions. It pushes AI closer to formal verification by treating every problem as a proof-checking task. Here are the main ways it can be used:

- Education and tutoring: It can grade math assignments, check student proofs, and provide step-by-step hints or practice problems.

- Research assistance: Useful for exploring early ideas, spotting weak reasoning, and generating new approaches in areas like cryptography and number theory.

- Theorem-proving systems: It can support tools like Lean or Coq by helping translate natural-language reasoning into formal proofs.

- Quality control: It can verify complex calculations in fields such as aerospace, cryptography, and algorithm design where accuracy is critical.


Conclusion

DeepSeek Math V2 is a powerful tool for AI's math-related tasks. It combines a massive transformer backbone with new proof-checking loops, achieves record scores in contests, and is made available to the community for free. DeepSeek Math V2 makes the case that self-verification, not only larger models or more data, is at the core of deep thinking.

Try it out today and let me know your thoughts in the comment section below!
