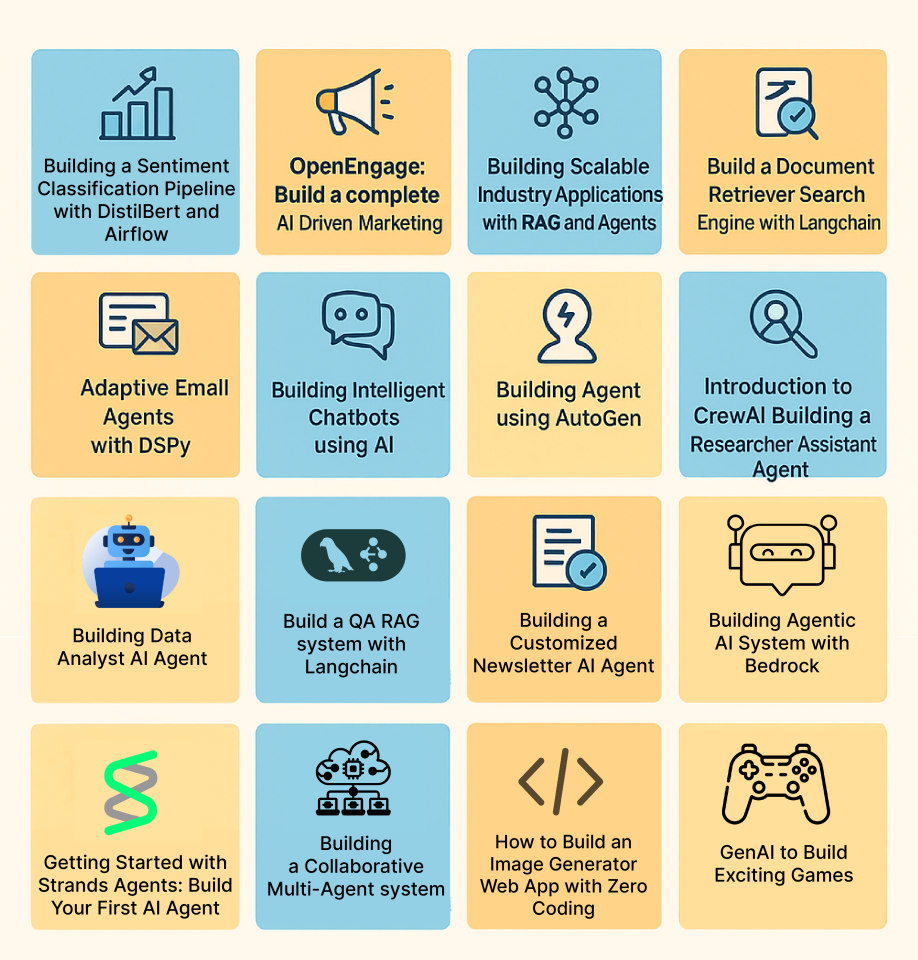

AI and data science are two of the fastest-growing fields in the world right now. If you know that and want to level up your portfolio, stand out in interviews, or simply understand these fields in depth, here is your ultimate wrap-up for 2025. In this article, we bring you 25+ fully solved, end-to-end AI and data science projects. They span machine learning, NLP, computer vision, RAG systems, automation, multi-agent collaboration, and more (read their beginner's guides at the links). While every project listed here covers a different topic, all of them follow one structured format. This lets you quickly see what you will learn, which tools you will master, and exactly how to solve each project with a step-by-step approach.

Taken together, these projects will help beginners and professionals alike in AI and data science hone their skills, build production-grade applications, and stay ahead of the industry curve.

Ideally, I'd suggest you bookmark this article and work through any or every project that interests you, one at a time. To make that easier, I have shared the link to each project. So without any delay, let's dive right into the best AI and data science projects of 2025.

Classical ML / Core Data Science

1. Loan Prediction Practice Problem (Using Python)

This project takes a real-world loan-approval scenario and guides you through building a binary classification model in Python. You'll predict whether a loan application gets approved based on applicant data. It gives you hands-on experience with an end-to-end data science workflow: from data exploration to model building and evaluation.

Key Skills to Learn

- Understanding binary classification and its use in real-life problems like loan approval.

- Exploratory Data Analysis (EDA): univariate and bivariate analysis to understand data distributions and relationships.

- Data preprocessing: handling missing values, treating outliers, encoding categorical variables, and preparing data for modelling.

- Building classification models in Python (e.g., logistic regression, decision trees, random forests, etc.).

- Model evaluation and validation: using a train-test split, metrics like accuracy (and optionally precision/recall), and comparing multiple models to choose the best performer.

Project Workflow

- Define the problem statement: predict whether a loan application should be approved or denied based on applicant attributes (income, credit history, loan amount, etc.).

- Load the dataset in Python (e.g., with pandas) and perform an initial inspection: check data types, missing values, and summary statistics.

- Perform Exploratory Data Analysis (EDA): analyse distributions and the relationships between features and the target to gain insights.

- Preprocess the data: handle missing values and outliers, encode categorical variables, and prepare the data for modelling.

- Build multiple classification models: start with simple ones (like logistic regression), then try more advanced models (decision tree, random forest, etc.) to see which works best.

- Evaluate and compare models: split the data into train and test sets, compute performance metrics, validate stability, and choose the best-performing model.

- Interpret results and draw insights: understand which features most influence loan approval predictions, and reflect on the implications for real-world loan-approval systems.
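The preprocessing, modelling, and evaluation steps above can be sketched end to end with only the Python standard library. This is a minimal illustration on synthetic, hypothetical applicant data (the fields `income`, `loan_amount`, `credit_history`, and `married` are assumptions loosely modelled on typical loan datasets); in the actual project you would more likely use pandas and scikit-learn's `LogisticRegression`.

```python
import math
import random
import statistics

def make_toy_loans(n=200, seed=0):
    """Hypothetical synthetic applicants: approval follows the true credit history."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        true_credit = 1 if rng.random() < 0.75 else 0
        observed = None if rng.random() < 0.1 else true_credit  # ~10% missing values
        rows.append({
            "income": rng.randint(2000, 10000),
            "loan_amount": rng.randint(50, 300),
            "credit_history": observed,
            "married": rng.choice(["Yes", "No"]),
            "approved": true_credit,
        })
    return rows

def preprocess(rows, credit_mode):
    """Impute missing credit history with the training mode; encode 'married' as 0/1."""
    X = []
    for r in rows:
        credit = r["credit_history"] if r["credit_history"] is not None else credit_mode
        X.append([r["income"], r["loan_amount"], credit, 1 if r["married"] == "Yes" else 0])
    return X

def standardize(X, means, stds):
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in X]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_logreg(X, y, lr=0.1, epochs=500):
    """Logistic regression fitted by batch gradient descent on the log-loss."""
    w, b = [0.0] * len(X[0]), 0.0
    for _ in range(epochs):
        gw, gb = [0.0] * len(w), 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j, xj in enumerate(xi):
                gw[j] += err * xj
            gb += err
        w = [wj - lr * g / len(X) for wj, g in zip(w, gw)]
        b -= lr * gb / len(X)
    return w, b

def predict(w, b, X):
    return [1 if sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) >= 0.5 else 0 for xi in X]

rows = make_toy_loans()
train, test = rows[:150], rows[150:]  # simple hold-out split
credit_mode = statistics.mode(r["credit_history"] for r in train if r["credit_history"] is not None)
X_train, X_test = preprocess(train, credit_mode), preprocess(test, credit_mode)
# Scaling statistics come from the training set only, to avoid leaking test information.
means = [statistics.mean(col) for col in zip(*X_train)]
stds = [statistics.pstdev(col) or 1.0 for col in zip(*X_train)]
w, b = train_logreg(standardize(X_train, means, stds), [r["approved"] for r in train])
preds = predict(w, b, standardize(X_test, means, stds))
accuracy = sum(p == r["approved"] for p, r in zip(preds, test)) / len(test)
print(f"test accuracy: {accuracy:.2f}")
```

Note that imputation values and scaling statistics are computed on the training split and then reused for the test split, which is the same discipline you would follow with a real dataset.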

2. Twitter Sentiment Analysis (Using Python)

This project teaches you how to perform sentiment analysis on Twitter data using Python. You'll learn to fetch tweets, clean and preprocess text, build machine-learning models, and classify sentiment (positive, negative, neutral). It's one of the most popular NLP starter projects because it combines real-world, noisy text data with practical ML workflows.

Key Skills to Learn

- Text preprocessing: cleaning tweets, removing noise, tokenization, stop-word removal

- Understanding sentiment analysis fundamentals using NLP

- Feature engineering using techniques like TF-IDF or Bag-of-Words

- Building ML models for text classification (Logistic Regression, Naive Bayes, SVM, etc.)

- Evaluating NLP models using accuracy, F1-score, and the confusion matrix

- Working with Python libraries like pandas, scikit-learn, and NLTK

Project Workflow

- Collect tweets: either use sample datasets or fetch live tweets via APIs.

- Preprocess the text data: clean out URLs, hashtags, mentions, and emojis; tokenize and normalize the text.

- Convert text to numerical features using TF-IDF, Bag-of-Words, or other vectorization techniques.

- Build sentiment classification models: start with baseline algorithms like Logistic Regression or Naive Bayes.

- Train and evaluate the model, using accuracy and F1-score to measure performance.

- Interpret results: identify the most influential words, patterns in sentiment, and how your model responds to different types of tweets.

- Apply the model to unseen tweets to generate insights from live or stored Twitter data.
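The cleaning and baseline-model steps can be sketched with the standard library alone: regex-based tweet cleaning followed by a multinomial Naive Bayes classifier with add-one (Laplace) smoothing. The six training tweets and the tiny stop-word list are made-up placeholders; in the project itself you would typically use NLTK's stop-word list and scikit-learn's `MultinomialNB` on a real labelled dataset.

```python
import math
import re
from collections import Counter

STOP_WORDS = {"the", "a", "an", "is", "to", "and", "i", "this", "it", "of"}  # illustrative only

def clean_tweet(text):
    """Strip URLs, @mentions, and '#' signs; lowercase; tokenize; drop stop words and emojis."""
    text = re.sub(r"https?://\S+", " ", text)
    text = re.sub(r"@\w+", " ", text)
    text = text.replace("#", " ")  # keep the hashtag word itself
    tokens = re.findall(r"[a-z']+", text.lower())
    return [t for t in tokens if t not in STOP_WORDS]

class NaiveBayes:
    """Multinomial Naive Bayes with Laplace smoothing over token counts."""

    def fit(self, docs, labels):
        self.classes = sorted(set(labels))
        self.priors = {c: math.log(labels.count(c) / len(labels)) for c in self.classes}
        self.counts = {c: Counter() for c in self.classes}
        for tokens, label in zip(docs, labels):
            self.counts[label].update(tokens)
        self.vocab = set().union(*self.counts.values())
        self.totals = {c: sum(self.counts[c].values()) for c in self.classes}
        return self

    def predict(self, tokens):
        def log_score(c):
            denom = self.totals[c] + len(self.vocab)
            return self.priors[c] + sum(
                math.log((self.counts[c][t] + 1) / denom) for t in tokens if t in self.vocab
            )
        return max(self.classes, key=log_score)

TRAIN = [  # hypothetical labelled tweets
    ("Loving the new update! https://t.co/x #happy", "positive"),
    ("@brand great service, thank you", "positive"),
    ("What an awesome day with friends", "positive"),
    ("This app keeps crashing, worst update ever", "negative"),
    ("@brand terrible support, very disappointed", "negative"),
    ("I hate waiting in this slow queue", "negative"),
]
model = NaiveBayes().fit([clean_tweet(t) for t, _ in TRAIN], [label for _, label in TRAIN])
print(model.predict(clean_tweet("@friend awesome service, loving it https://example.com")))
```

Working in log space avoids numerical underflow when many small word probabilities are multiplied together.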

3. Building Text Classification Models in NLP

This project helps you understand how to build end-to-end text classification systems using core NLP techniques. You'll work with raw text data, clean and transform it, and train machine-learning models that automatically classify text into predefined categories. The project focuses on NLP fundamentals and is a strong entry point for anyone learning how text-based ML pipelines work.

Key Skills to Learn

- Text preprocessing: cleaning, tokenization, normalization

- Converting text into numerical features (TF-IDF, Bag-of-Words, etc.)

- Building ML models for text classification (Logistic Regression, Naive Bayes, SVM, etc.)

- Understanding evaluation metrics for NLP classification tasks

- Structuring an end-to-end NLP pipeline, from data loading to model deployment

Project Workflow

- Start by loading and exploring the text dataset and understanding the target labels.

- Clean and preprocess the text: remove noise, tokenize, normalize, and prepare it for modelling.

- Convert text into numeric representations using TF-IDF or Bag-of-Words.

- Train classification models on the processed data and tune basic parameters.

- Evaluate model performance and compare results to choose the best approach.
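TF-IDF vectorization and a simple classifier can be sketched in plain Python. This version uses the smoothed IDF formula that scikit-learn's `TfidfVectorizer` applies by default, `log((1 + N) / (1 + df)) + 1`, with L2-normalised vectors. For brevity it swaps in a nearest-centroid classifier rather than the Logistic Regression or Naive Bayes models the project trains, and the six labelled sentences are invented examples.

```python
import math
from collections import Counter

def tokenize(text):
    return text.lower().split()

def normalize(vec):
    """Scale a sparse vector (dict) to unit L2 length."""
    norm = math.sqrt(sum(v * v for v in vec.values())) or 1.0
    return {t: v / norm for t, v in vec.items()}

def fit_idf(docs):
    """Smoothed inverse document frequency for every term in the corpus."""
    n = len(docs)
    df = Counter(term for doc in docs for term in set(tokenize(doc)))
    return {term: math.log((1 + n) / (1 + d)) + 1 for term, d in df.items()}

def tfidf(doc, idf):
    """Raw term counts weighted by IDF, then L2-normalised; unseen terms are ignored."""
    counts = Counter(t for t in tokenize(doc) if t in idf)
    return normalize({t: c * idf[t] for t, c in counts.items()})

def train_centroids(docs, labels, idf):
    """Sum each class's TF-IDF vectors and normalise: one prototype vector per class."""
    sums = {}
    for doc, label in zip(docs, labels):
        acc = sums.setdefault(label, {})
        for t, v in tfidf(doc, idf).items():
            acc[t] = acc.get(t, 0.0) + v
    return {label: normalize(vec) for label, vec in sums.items()}

def cosine(a, b):
    # Both vectors are unit length, so the dot product equals cosine similarity.
    return sum(v * b.get(t, 0.0) for t, v in a.items())

def classify(doc, centroids, idf):
    vec = tfidf(doc, idf)
    return max(centroids, key=lambda label: cosine(vec, centroids[label]))

DOCS = [
    "the match ended with a late goal", "the striker scored a goal",
    "the team won the league title", "the new phone has a faster chip",
    "the laptop ships with more memory", "this chip improves battery life",
]
LABELS = ["sports"] * 3 + ["tech"] * 3
idf = fit_idf(DOCS)
centroids = train_centroids(DOCS, LABELS, idf)
print(classify("a stunning goal won the match", centroids, idf))
```

Common words such as "the" receive a low IDF weight, so the classifier is driven mostly by distinctive terms like "goal" or "chip".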

4. Building Your First Computer Vision Model

This project guides you through building your very first computer vision model using deep learning. You'll learn how digital images are processed and how convolutional neural networks (CNNs) work, and then train a vision model on real image data. It's designed for beginners: a strong entry point into image-based ML and deep learning.

Key Skills to Learn

- Fundamentals of image processing and how images are represented digitally (pixels, channels, arrays)

- Understanding Convolutional Neural Networks (CNNs): convolution layers, pooling/striding, downsampling, etc.

- Building deep-learning-based vision models with Python libraries (e.g. TensorFlow, OpenCV)

- Training and evaluating image classification models on real datasets

- End-to-end CV pipeline: data loading, preprocessing, model design, training, inference

Project Workflow

- Load and preprocess the image dataset: read images, convert them to arrays, normalize, and resize as needed.

- Build a CNN model: define convolution, pooling, and fully-connected layers to learn from image data.

- Train the model on the training images and validate on a hold-out set to monitor performance.

- Evaluate results: check model accuracy (or other appropriate metrics), analyze misclassifications, and iterate if needed.

- Use the trained model for inference on new, unseen images to test real-world performance.
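To see what a convolution layer and a pooling layer actually compute, here is a dependency-free sketch. A hand-written vertical-edge kernel (an illustrative choice; in a real CNN, frameworks such as TensorFlow learn kernel weights during training) slides over a tiny synthetic grayscale image, followed by ReLU and 2x2 max pooling.

```python
def conv2d(image, kernel):
    """'Valid' 2-D cross-correlation with stride 1 (what deep-learning libraries call convolution)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [[sum(image[i + a][j + b] * kernel[a][b] for a in range(kh) for b in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]

def relu(fmap):
    return [[max(0, v) for v in row] for row in fmap]

def max_pool(fmap, size=2):
    """Non-overlapping max pooling (stride == size) that downsamples the feature map."""
    return [[max(fmap[i + a][j + b] for a in range(size) for b in range(size))
             for j in range(0, len(fmap[0]) - size + 1, size)]
            for i in range(0, len(fmap) - size + 1, size)]

# 6x6 synthetic image: dark left half, bright right half (a vertical edge).
image = [[0, 0, 0, 1, 1, 1] for _ in range(6)]
# Responds where intensity increases from left to right.
vertical_edge = [[-1, 0, 1],
                 [-1, 0, 1],
                 [-1, 0, 1]]

feature_map = relu(conv2d(image, vertical_edge))  # 4x4: strong response around the edge
pooled = max_pool(feature_map)                    # 2x2: downsampled summary
print(feature_map)
print(pooled)
```

The feature map lights up only in the columns where the edge sits, and pooling halves each spatial dimension while keeping that strong response.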

GenAI, LLMs, and RAG

5. Building a Deep Research AI Agent

This AI project walks you through building a full-blown research-and-report generation agent using a graph-based agent framework (such as LangGraph). The agent automatically fetches data from the web, analyzes it, and compiles a structured research report. The project gives you a hands-on understanding of agentic AI workflows, in which the agent autonomously breaks down a research task, gathers sources, and assembles a readable report.

Key Skills to Learn

- Understanding agent-based AI design: planning agents, task decomposition, sub-agent orchestration

- Integrating web-search tools/APIs to fetch real-time data for analysis

- Designing pipelines that combine search, data collection, content generation, and report assembly

- Orchestrating parallel execution: letting sub-tasks run concurrently for faster results

- Prompt engineering and template design for structured report generation

Project Workflow

- Define your research objective: pick a topic or question for the agent to explore (e.g. "Latest trends in AI agents in 2025").

- Set up the agent framework using a graph-based agent tool; create core modules such as a planner node, section-builder sub-agents, and a final report compiler.

- Integrate web-search capabilities so the agent can dynamically fetch data from the internet when needed.

- Design a report template that defines sections such as introduction, background, insights, and conclusion, so the agent knows the structure in advance.

- Run the agent workflow: the planner decomposes tasks → sub-agents fetch data and write sections → the final compiler collates the sections into a full report.

- Review and refine the generated report, validate sources and data, and tweak prompts or the workflow for better coherence and reliability.
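The planner → sub-agents → compiler flow can be sketched without any framework. Here `plan`, `web_search`, `write_section`, and `compile_report` are hypothetical stand-ins (the search and writing steps are stubs where a real agent would call a search API and an LLM), and `concurrent.futures.ThreadPoolExecutor` provides the parallel execution of section sub-agents. This is not LangGraph's API; in LangGraph each function would become a node in the graph.

```python
from concurrent.futures import ThreadPoolExecutor

REPORT_TEMPLATE = ["Introduction", "Background", "Insights", "Conclusion"]

def plan(topic):
    """Planner node: decompose the topic into one sub-task per template section."""
    return [{"section": s, "query": f"{topic}: {s.lower()}"} for s in REPORT_TEMPLATE]

def web_search(query):
    # Hypothetical stub: a real agent would call a web-search tool/API here.
    return [f"source snippet about '{query}'"]

def write_section(task):
    """Section sub-agent: gather sources, then draft the section (an LLM call in practice)."""
    sources = web_search(task["query"])
    return {"section": task["section"], "text": " ".join(sources), "sources": sources}

def compile_report(topic, sections):
    """Final compiler: collate the drafted sections in template order."""
    lines = [f"# {topic}"]
    for s in sections:
        lines.append(f"\n## {s['section']}\n{s['text']}")
    return "\n".join(lines)

def run_research_agent(topic, max_workers=4):
    tasks = plan(topic)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Sections are researched concurrently; map() returns results in input order.
        sections = list(pool.map(write_section, tasks))
    return compile_report(topic, sections)

report = run_research_agent("Latest trends in AI agents in 2025")
print(report)
```

Threads suit this step because sub-agents spend most of their time waiting on network calls; the compiler still receives sections in template order.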

6. Build your first RAG system using LlamaIndex

This project helps you build a complete Retrieval-Augmented Generation (RAG) system using LlamaIndex. You'll learn how to ingest documents (PDFs, text, etc.), split them into manageable chunks, build a semantic search index (usually vector-based), and then connect that index to a language model to serve context-aware responses or QA. The result is a system that answers user queries based on your document collection. Because its answers are grounded in passages retrieved from your own documents, they tend to be more accurate and easier to verify than answers from the LLM alone.

Key Skills to Learn

- Document ingestion and preprocessing: loading documents, cleaning text, chunking/splitting for indexing

- Working with indexes and embedding/vector stores to enable semantic retrieval

- Building a retrieval + generation pipeline: using LlamaIndex to fetch relevant context and feed it to an LLM for answer synthesis

- Configuring retrieval parameters: chunk size, embedding model, and query engine settings to optimize retrieval quality

- Integrating retrieval and LLM-based generation into a seamless QA/application flow

Project Workflow

- Prepare your document corpus: PDFs, text files, or any unstructured content you want the system to "know."

- Preprocess and split documents into chunks or nodes so they can be indexed effectively.

- Build an index using LlamaIndex (vector-based or semantic), embedding the document chunks into a searchable store.

- Set up a query engine that retrieves relevant chunks for a user's question or prompt.

- Integrate the index with an LLM: feed the retrieved context and the user query to the LLM and let it generate a context-aware response.

- Test the system: ask diverse questions and check the correctness and relevance of responses. Adjust indexing or retrieval settings if needed (chunk size, embedding model, etc.).
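Chunk size and overlap are usually the first retrieval parameters you tune. The sketch below splits text into overlapping word windows and wraps each window as a "node" that records which document it came from. It counts words for simplicity, whereas LlamaIndex's splitters measure chunks in tokens and try to respect sentence boundaries; `chunk_words` and `make_nodes` are hypothetical helpers, not LlamaIndex functions.

```python
def chunk_words(text, chunk_size=50, chunk_overlap=10):
    """Split text into windows of `chunk_size` words; consecutive windows share `chunk_overlap` words."""
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be >= 0 and smaller than chunk_size")
    words = text.split()
    step = chunk_size - chunk_overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):  # this window already reached the end
            break
    return chunks

def make_nodes(doc_id, text, **kwargs):
    """Attach provenance to each chunk so answers can cite their source document."""
    return [{"id": f"{doc_id}-{i}", "doc_id": doc_id, "text": chunk}
            for i, chunk in enumerate(chunk_words(text, **kwargs))]

sample = " ".join(f"w{i}" for i in range(12))
for node in make_nodes("report.pdf", sample, chunk_size=5, chunk_overlap=2):
    print(node["id"], "|", node["text"])
```

The overlap means a sentence that straddles a chunk boundary still appears intact in at least one chunk, at the cost of indexing some text twice.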

7. Build a Document Retriever Search Engine with LangChain

This project helps you build a document-retriever-style search engine using LangChain. You'll learn how to process large text corpora, break them into chunks, create embeddings, and connect everything to a vector database so that user queries return the most relevant documents. It's a compact but powerful introduction to the retrieval systems at the core of modern RAG applications.

Key Skills to Learn

- Fundamentals of document retrieval and search engines

- Using LangChain for document loading, chunking, and embedding generation

- Indexing documents in a vector database for efficient similarity search

- Implementing retrievers that fetch the most relevant chunks for a given query

- Understanding how such retrieval systems plug into larger RAG or QA pipelines

Project Workflow

- Load a text corpus (for example, Wikipedia-like documents or knowledge-base content) using LangChain document loaders.

- Chunk the documents into smaller pieces and generate an embedding for each chunk.

- Store these embeddings in a vector database or an in-memory vector store.

- Implement a retriever that, given a user query, finds and returns the most relevant document chunks.

- Test the search engine with different queries and refine chunking, embeddings, or retrieval settings to improve relevance.
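The embed → store → retrieve loop can be illustrated with a toy in-memory vector store. Here the "embedding" is just an L2-normalised bag-of-words vector, a deliberate simplification of the neural embedding models you would use in the project. The method name mirrors LangChain's `similarity_search`, but `InMemoryVectorStore` below is a hypothetical stand-in, not LangChain's class.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy embedding: L2-normalised word counts (real systems use neural embedding models)."""
    counts = Counter(re.findall(r"\w+", text.lower()))
    norm = math.sqrt(sum(c * c for c in counts.values())) or 1.0
    return {w: c / norm for w, c in counts.items()}

class InMemoryVectorStore:
    """Hypothetical minimal vector store: a list of (vector, chunk) pairs searched exhaustively."""

    def __init__(self):
        self.items = []

    def add(self, chunks):
        self.items.extend((embed(c), c) for c in chunks)

    def similarity_search(self, query, k=2):
        q = embed(query)
        # Cosine similarity, since every vector is unit length.
        scored = [(sum(v * vec.get(w, 0.0) for w, v in q.items()), chunk)
                  for vec, chunk in self.items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [chunk for _, chunk in scored[:k]]

store = InMemoryVectorStore()
store.add([
    "Python lists are mutable sequences.",
    "Tuples are immutable sequences in Python.",
    "The Eiffel Tower is in Paris.",
])
print(store.similarity_search("Where is the Eiffel Tower?", k=1))
```

Exhaustive search is fine for a handful of chunks; vector databases exist precisely to replace that linear scan with approximate nearest-neighbour indexes at scale.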

8. Build a QA RAG system with LangChain

This project walks you through building a complete question-answering RAG system using LangChain. You'll combine retrieval (vector search) with an LLM to create a robust pipeline in which the model answers questions using context pulled from your documents, making responses factual, grounded, and context-aware.

Key Skills to Learn

- Fundamentals of Retrieval-Augmented Generation (RAG)

- Integrating LLMs with vector databases for context-aware QA

- Using LangChain's retrievers, indexes, and chains

- Building end-to-end QA pipelines with prompt templates and retrieval logic

- Improving RAG performance through chunking, choice of embedding model, and prompt design

Project Workflow

- Load documents, chunk them, and embed them for vector storage.

- Build a retriever that fetches the most relevant chunks for any query.

- Connect the retriever to an LLM using LangChain's QA or RAG-style chains.

- Configure prompts so that the model uses the retrieved context when answering.

- Test the QA system with various questions and refine chunking, retrieval, or prompts to improve accuracy.
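The step that makes answers grounded is the prompt that tells the model to rely only on retrieved context. Below is a framework-free sketch of the retrieve → prompt → generate chain. `toy_retriever` and the `llm` callable are hypothetical placeholders for a LangChain retriever and chat model, and the prompt wording is only one reasonable choice.

```python
PROMPT_TEMPLATE = """Answer the question using only the context below.
If the context does not contain the answer, reply "I don't know."

Context:
{context}

Question: {question}
Answer:"""

def build_prompt(question, chunks):
    """Number the retrieved chunks so the model (and you) can trace which one it used."""
    context = "\n".join(f"[{i}] {c}" for i, c in enumerate(chunks, start=1))
    return PROMPT_TEMPLATE.format(context=context, question=question)

def answer(question, retriever, llm, k=3):
    chunks = retriever(question, k)
    if not chunks:
        return "I don't know."  # nothing relevant retrieved: skip the LLM call entirely
    return llm(build_prompt(question, chunks))

DOCS = ["Refunds are processed within 5 business days.", "Support is available 24/7 via chat."]

def toy_retriever(question, k):
    """Hypothetical stand-in for a vector-store retriever: naive keyword overlap."""
    q = set(question.lower().strip("?").split())
    return [d for d in DOCS if q & set(d.lower().strip(".").split())][:k]

print(build_prompt("How long do refunds take?", toy_retriever("How long do refunds take?", 3)))
```

Passing `llm` in as a plain callable keeps the chain testable: during development you can swap in a stub and inspect the exact prompt before paying for real model calls.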

9. Coding a ChatGPT-style Language Model From Scratch in PyTorch

This project shows you how to build a small transformer-based language model with the same decoder-only design as ChatGPT, from the ground up in PyTorch. You get hands-on with every component: tokenization, embeddings, positional encodings, masked self-attention, and a decoder-only transformer. By the end, you'll have coded, trained, and even deployed your own simple language model capable of generating text.

Key Skills to Learn

- Fundamentals of transformer-based language models: embeddings, positional encoding, masked self-attention, decoder-only transformer architecture

- Practical PyTorch skills: data preparation, model coding, training, and fine-tuning

- NLP fundamentals for generative tasks: tokenization and language-model inputs and outputs

- Training and evaluating a custom LLM: loss functions, avoiding overfitting, and setting up the inference pipeline

- Deploying a custom language model: understanding how to go from prototype code to an inference-ready model

Project Workflow

- Prepare your text dataset and build tokenization and input-label pipelines.

- Implement the core model components: embeddings, positional encodings, attention layers, and the decoder-only transformer.

- Train your model on the prepared data, monitoring training progress and tuning hyperparameters if needed.

- Validate the model's text generation: sample outputs, inspect coherence, and check for typical errors.

- Optionally fine-tune or iterate on model parameters and data to improve generation quality before deployment.
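Masked self-attention is the piece most worth understanding line by line. The sketch below implements single-head scaled dot-product attention with a causal mask in plain Python, so position t only sees positions 0..t. It deliberately omits the learned query/key/value projections, multiple heads, and batching that a PyTorch implementation would include (there you would typically apply a lower-triangular mask with `Tensor.masked_fill` before the softmax).

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def causal_self_attention(q, k, v):
    """Single-head scaled dot-product attention with a causal mask.

    q, k, v: lists of T vectors (one per position). Position t attends only to
    positions 0..t, which is what lets a decoder-only model learn next-token prediction.
    """
    d_k = len(q[0])
    out = []
    for t in range(len(q)):
        # Future positions (s > t) are excluded, equivalent to a -inf score before softmax.
        scores = [sum(a * b for a, b in zip(q[t], k[s])) / math.sqrt(d_k) for s in range(t + 1)]
        weights = softmax(scores)
        out.append([sum(w * v[s][j] for s, w in enumerate(weights)) for j in range(len(v[0]))])
    return out

# Toy sequence of three 2-d token representations (projections omitted: q = k = v = x).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out = causal_self_attention(x, x, x)
print(out)
```

A quick sanity check of the mask: changing the last token must not change the outputs at earlier positions.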

10. Building Intelligent Chatbots using AI

This project teaches you how to build a modern AI-powered chatbot that understands user queries, retrieves relevant information, and generates intelligent, context-aware responses. You'll work with LLMs, retrieval pipelines, and chatbot frameworks to create an assistant that answers accurately, handles documents, and, depending on your setup, supports multimodal interactions.

Key Skills to Learn

- Designing end-to-end conversational AI systems

- Building retrieval-augmented chatbot pipelines using embeddings and semantic search

- Loading documents, generating embeddings, and enabling contextual question answering

- Structuring conversational flows and maintaining context

- Incorporating responsible-AI practices: safety, bias checks, and transparent responses

Project Workflow

- Start by loading your knowledge base: PDFs, text documents, or custom datasets.

- Preprocess your content and generate embeddings for semantic retrieval.

- Build a retrieval-plus-generation pipeline: retrieval supplies context, and the LLM generates accurate answers.

- Integrate the pipeline into a chatbot interface that supports conversational interactions.

- Test the chatbot end-to-end, evaluate response accuracy, and refine prompts and retrieval settings for better performance.
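Maintaining context across turns is usually handled by replaying recent messages to the LLM on every call. The class below is a minimal, hypothetical sketch of that idea: a fixed-size window of past turns, with retrieved context folded into the system message, using the widely adopted role/content message format. Production chatbots often summarise older turns instead of simply dropping them.

```python
from collections import deque

class ConversationMemory:
    """Keeps the most recent turns so each LLM call sees recent context (a sliding window)."""

    def __init__(self, max_turns=3,
                 system_prompt="You are a helpful assistant. Answer from the provided context."):
        self.system_prompt = system_prompt
        self.turns = deque(maxlen=max_turns)  # (user, assistant) pairs; oldest dropped first

    def add_turn(self, user, assistant):
        self.turns.append((user, assistant))

    def build_messages(self, user_message, context=""):
        """Assemble the message list for the next LLM call."""
        system = self.system_prompt + (f"\n\nContext:\n{context}" if context else "")
        messages = [{"role": "system", "content": system}]
        for user, assistant in self.turns:
            messages.append({"role": "user", "content": user})
            messages.append({"role": "assistant", "content": assistant})
        messages.append({"role": "user", "content": user_message})
        return messages

memory = ConversationMemory(max_turns=2)
memory.add_turn("Q1", "A1")
memory.add_turn("Q2", "A2")
memory.add_turn("Q3", "A3")  # evicts the Q1/A1 turn
for m in memory.build_messages("Q4", context="Retrieved passage goes here."):
    print(m["role"], "->", m["content"].splitlines()[0])
```

Bounding the window keeps prompts within the model's context limit and cost budget, at the price of forgetting early turns.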

Agentic / Multi-Agent / Automation

11. Building a Collaborative Multi-Agent System

This project teaches you how to build a collaborative multi-agent AI system using a graph-based framework. Instead of a single agent doing every task, you design multiple agents (nodes) that communicate, coordinate, and share responsibilities. This cross-communication and coordinated action enable modular, scalable AI workflows for complex, multi-step problems.

Key Skills to Learn

- Understanding multi-agent architecture: how agents function as nodes and coordinate via message passing.

- Using LangGraph to define agents, their roles, dependencies, and interactions.

- Designing workflows in which different agents specialize (for example, in data retrieval, processing, summarization, or decision-making) and collaborate.

- Managing state and context across agents, enabling sequences of operations, information flow, and context sharing.

- Building modular, maintainable AI systems that are easier to extend or debug than monolithic agent setups.

Project Workflow

- Define the overall task or problem that needs multiple capabilities (e.g., research + summarization + reporting, or data pipeline + analysis + alerting).

- Decompose the problem into sub-tasks, then design a set of agents in which each agent handles a specific sub-task or role.

- Model the agents and their dependencies using LangGraph: set up nodes, define inputs/outputs, and specify the communication or data flow between them.

- Implement the agent logic for each node: for example, a data-fetcher agent, an analyzer agent, a summarizer agent, etc.

- Run the multi-agent system end-to-end: supply input, let the agents collaborate according to the flow defined in the graph, and capture the final output.

- Test and refine the workflow: evaluate output quality, debug agent interactions, and adjust data flows or agent responsibilities for better performance.
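Stripped of framework details, a multi-agent graph is a set of node functions that read and update shared state, executed in dependency order. The sketch below uses the standard library's `graphlib.TopologicalSorter` (Python 3.9+) for that ordering. The three agent functions are hypothetical stubs, and LangGraph adds much that this omits, such as conditional edges, cycles, and checkpointing.

```python
from graphlib import TopologicalSorter

# Each agent is a node: it reads the shared state and returns updates to merge into it.
def fetcher(state):
    # Hypothetical stub for a data-retrieval agent.
    return {"documents": [f"notes on {state['topic']}", f"stats on {state['topic']}"]}

def analyzer(state):
    return {"findings": [f"insight from {doc}" for doc in state["documents"]]}

def summarizer(state):
    return {"summary": f"{len(state['findings'])} findings about {state['topic']}"}

AGENTS = {"fetcher": fetcher, "analyzer": analyzer, "summarizer": summarizer}
DEPENDENCIES = {"analyzer": {"fetcher"}, "summarizer": {"analyzer"}}  # node -> prerequisites

def run_graph(initial_state):
    """Run every agent once, in an order that respects the declared dependencies."""
    state, trace = dict(initial_state), []
    for node in TopologicalSorter(DEPENDENCIES).static_order():
        state.update(AGENTS[node](state))
        trace.append(node)
    return state, trace

state, trace = run_graph({"topic": "EV sales"})
print(trace)
print(state["summary"])
```

Keeping agents as pure "state in, updates out" functions is what makes each one easy to test and swap independently.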

12. Creating Problem-Solving Agents with GenAI for Actions

This project teaches you how to build GenAI-powered problem-solving agents that can think, plan, and execute actions autonomously. Instead of merely generating responses, these agents learn to break tasks down into smaller steps, compose actions intelligently, and complete end-to-end workflows. It's an essential foundation for the modern agentic AI systems used in automation, assistants, and enterprise workflows.

Key Skills to Learn

- Understanding agentic AI: how reasoning-driven agents differ from traditional ML models

- Task decomposition: breaking large problems into action-level steps

- Designing agent architectures that plan and execute actions

- Using GenAI models to enable reasoning, planning, and dynamic decision-making

- Building real, action-based AI workflows instead of static prompt-response systems

Project Workflow

- Start with the fundamentals of agentic systems: what agents are, how multi-agent structures work, and why reasoning matters.

- Define a clear problem for the agent to solve, such as data extraction, chained automation, or multi-step tasks.

- Design the action-composition framework: how the agent decides on steps, plans execution, and handles branching logic.

- Implement the agent using GenAI models to enable reasoning and action selection.

- Test the agent end-to-end and refine its planning or execution logic based on performance.
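The plan-then-execute loop at the heart of such agents can be sketched in a few lines. Here the planner is a hard-coded stand-in for an LLM call, the two tools and their exchange rates are hypothetical, and the `$prev` placeholder is an illustrative convention for feeding one step's output into the next. The branching logic is deliberately simple: an unknown tool is skipped, and a failing tool stops the run.

```python
RATES = {"EUR": 0.9, "GBP": 0.8}  # hypothetical fixed exchange rates

TOOLS = {
    "lookup_rate": lambda currency: RATES[currency],
    "multiply": lambda args: args[0] * args[1],
}

def plan(task):
    """Stand-in planner: a real agent would ask an LLM to produce these steps."""
    return [("lookup_rate", task["to"]), ("multiply", (task["amount"], "$prev"))]

def execute(steps, tools):
    """Run steps in order, substituting '$prev' with the previous step's result."""
    result, log = None, []
    for tool_name, arg in steps:
        if tool_name not in tools:
            log.append(f"skip unknown tool {tool_name}")
            continue
        if isinstance(arg, tuple):
            arg = tuple(result if a == "$prev" else a for a in arg)
        try:
            result = tools[tool_name](arg)
            log.append(f"{tool_name} -> {result}")
        except KeyError as exc:
            # Branch: record the failure and stop rather than continue with bad state.
            log.append(f"{tool_name} failed: {exc}")
            return None, log
    return result, log

result, log = execute(plan({"amount": 100, "to": "EUR"}), TOOLS)
print(result, log)
```

In a full agent, the failure branch is where you would hand the error back to the model so it can re-plan.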

13. Build a Resume Review Agentic System with CrewAI

This project guides you through building an AI-powered resume review system using an agent framework. The system automatically analyses submitted resumes, evaluates key attributes (skills, experience, relevance), and provides structured feedback or scores. It mimics how a recruiter screens applications, but in an automated, scalable way.

Key Skills to Learn

- Building agentic systems tailored to document analysis and evaluation

- Parsing and extracting structured information from unstructured documents (resumes)

- Designing evaluation criteria and scoring logic aligned with job requirements

- Combining NLP techniques with agent orchestration to assess content (skills, experience, education, etc.)

- Automating feedback generation and structured output (review reports)

Project Workflow

- Begin by defining the evaluation criteria or rubric your resume-review agent should apply (e.g. skill match, years of experience, role relevance).

- Build or configure the agent framework (using CrewAI) to accept resumes as input, whether PDF, DOCX, or plain text.

- Implement parsing logic to extract the relevant fields (skills, experience, education, etc.) from each resume.

- Have the agent evaluate the extracted data against your criteria and generate structured feedback or scores.

- Test the system with multiple resumes to check consistency, accuracy, and robustness, and refine the parsing and evaluation logic as needed.
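Whatever framework orchestrates the agents, the rubric itself is plain logic worth writing down explicitly. This sketch assumes a hypothetical rubric (three required skills, a three-year experience minimum, 70/30 weighting) and uses simple regex extraction. In the CrewAI project, an LLM-backed agent would do the extraction and write the feedback; a deterministic scorer like this one can serve as a consistency check on its output.

```python
import re

RUBRIC = {  # hypothetical job requirements and weights
    "required_skills": {"python", "sql", "machine learning"},
    "min_years": 3,
    "weights": {"skills": 0.7, "experience": 0.3},
}

def extract_fields(resume_text, rubric=RUBRIC):
    """Regex-based extraction of required skills and the largest 'N years' figure."""
    text = resume_text.lower()
    skills = {s for s in rubric["required_skills"] if re.search(rf"\b{re.escape(s)}\b", text)}
    years = [int(y) for y in re.findall(r"(\d+)\+?\s+years", text)]
    return {"skills": skills, "years": max(years, default=0)}

def score_resume(resume_text, rubric=RUBRIC):
    """Weighted score in [0, 1] plus structured feedback fields."""
    fields = extract_fields(resume_text, rubric)
    skill_score = len(fields["skills"]) / len(rubric["required_skills"])
    exp_score = min(fields["years"] / rubric["min_years"], 1.0)  # capped at full marks
    total = rubric["weights"]["skills"] * skill_score + rubric["weights"]["experience"] * exp_score
    return {
        "score": round(total, 2),
        "matched_skills": sorted(fields["skills"]),
        "missing_skills": sorted(rubric["required_skills"] - fields["skills"]),
        "years_experience": fields["years"],
    }

print(score_resume("Data analyst with 4 years of experience in Python and SQL dashboards."))
```

Returning matched and missing skills alongside the number gives candidates and reviewers actionable feedback rather than an opaque score.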

14. Building a Data Analyst AI Agent

This project teaches you how to build an AI-powered data analyst agent that automates your entire data workflow, from loading raw datasets to generating insights, visualizations, summaries, and reports. The agent interprets user queries in natural language, decides which analytical steps to perform, and returns meaningful results without requiring manual coding.

Key Skills to Learn

- Understanding the fundamentals of agentic AI and how agents can automate analytical tasks

- Building data-oriented agent workflows for cleaning, preprocessing, analysis, and reporting

- Automating core analytics functions: EDA, summarisation, visualization, and pattern detection

- Designing decision-making logic so the agent chooses the right analytical operation for each user query

- Integrating natural-language interfaces so users can ask questions in plain English and get data insights

Project Workflow

- Define the analysis scope: the dataset, the kinds of insights needed, and the typical questions the agent should answer.

- Set up the agent framework and configure modules for data loading, cleaning, transformation, and analysis.

- Implement analytical functions: summaries, correlations, charts, trend analysis, etc.

- Build a natural-language query interface that maps user questions to the relevant analytical steps.

- Test with real queries and refine the agent's decision logic for accuracy and reliability.
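The decision-making step, mapping a natural-language question to an analysis, can be prototyped with a keyword router before an LLM takes over that job. Everything here is hypothetical: the six-month `SALES` table, the keyword lists, and the three analysis functions. Only the standard library is used, so the routing logic stays visible.

```python
import math
import statistics

SALES = {  # hypothetical monthly data
    "month": [1, 2, 3, 4, 5, 6],
    "revenue": [100.0, 120.0, 130.0, 150.0, 160.0, 180.0],
    "ad_spend": [10.0, 12.0, 12.0, 15.0, 16.0, 18.0],
}

def pearson(xs, ys):
    mx, my = statistics.mean(xs), statistics.mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return cov / math.sqrt(sum((x - mx) ** 2 for x in xs) * sum((y - my) ** 2 for y in ys))

def slope(xs, ys):
    """Ordinary least-squares slope of ys on xs."""
    mx, my = statistics.mean(xs), statistics.mean(ys)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)

def summary(data):
    return {col: {"mean": statistics.mean(v), "min": min(v), "max": max(v)}
            for col, v in data.items() if col != "month"}

def correlation(data):
    return pearson(data["ad_spend"], data["revenue"])

def trend(data):
    return slope(data["month"], data["revenue"])  # revenue change per month

ROUTES = [  # keyword -> analysis; an LLM-based router would replace this table
    (("correlat", "relationship"), correlation),
    (("trend", "growth", "over time"), trend),
    (("summary", "summarize", "overview"), summary),
]

def answer_query(query, data=SALES):
    q = query.lower()
    for keywords, fn in ROUTES:
        if any(k in q for k in keywords):
            return fn.__name__, fn(data)
    return "unsupported", None

print(answer_query("What's the revenue trend over time?"))
print(answer_query("Is there a relationship between ad spend and revenue?"))
```

Returning the name of the chosen analysis alongside the result makes the agent's decision auditable, which helps when you later replace the keyword table with an LLM.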

15. Building Agents using AutoGen

This project teaches you how to use AutoGen, a multi-agent AI framework, to build intelligent agents that plan, communicate, and solve tasks collaboratively. You'll learn how to structure agents with specific roles, let them exchange messages, integrate tools or models, and orchestrate complete end-to-end agentic workflows.

Key Skills to Learn

- Fundamentals of agentic AI and multi-agent system design

- Creating AutoGen agents with defined roles and capabilities

- Structuring communication flows between agents

- Integrating tools, LLMs, and external functions into agents

- Designing multi-agent workflows for research, automation, coding tasks, and reasoning-heavy problems

Project Workflow

- Set up the AutoGen environment and understand how agents, messages, and tools fit together.

- Define agent roles such as planner, assistant, or executor based on the task you want to automate.

- Build a minimal agent team and configure its communication logic.

- Integrate tools (such as code execution or retrieval functions) to extend the agents' capabilities.

- Run a collaborative workflow: let the agents plan, delegate, and execute tasks through structured interactions.

- Refine prompts, agent roles, and workflow steps to improve reliability and performance.
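Under the hood, a two-agent workflow like those built with AutoGen is a loop of message exchanges plus a termination rule. The framework-free sketch below mimics that pattern: agents take turns replying to a shared history until one emits `TERMINATE` or a turn limit is reached (AutoGen's AgentChat offers comparable `TextMentionTermination` and `MaxMessageTermination` conditions). The `Agent` dataclass and both reply functions are hypothetical stand-ins for LLM-backed agents, not AutoGen's API.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Message = Tuple[str, str]  # (sender, content)

@dataclass
class Agent:
    name: str
    reply: Callable[[List[Message]], str]  # maps the conversation so far to the next message

def run_chat(agents, task, max_turns=10, stop_word="TERMINATE"):
    """Round-robin message passing until an agent says `stop_word` or `max_turns` is reached."""
    history: List[Message] = [("user", task)]
    for turn in range(max_turns):
        agent = agents[turn % len(agents)]
        content = agent.reply(history)
        history.append((agent.name, content))
        if stop_word in content:
            break
    return history

# Hypothetical stand-ins for LLM-backed agents.
def planner_reply(history):
    last = history[-1][1]
    if last.startswith("RESULT"):
        return f"Verified {last}. TERMINATE"
    return "PLAN: compute the sum of 1..10"

def executor_reply(history):
    # A real executor might run generated code in a sandbox; here it is a trusted computation.
    return f"RESULT {sum(range(1, 11))}"

history = run_chat([Agent("planner", planner_reply), Agent("executor", executor_reply)],
                   "Add the numbers 1 to 10.")
for sender, content in history:
    print(f"{sender}: {content}")
```

The turn limit matters as much as the stop word: without it, two agents that never agree would loop forever.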

16. Getting Started with Strands Agents: Build Your First AI Agent

This project helps you build your first AI agent using Strands, a framework that enables agents to perform tasks, reason, and act. It's designed for beginners and offers a hands-on introduction to building agentic systems that carry out structured tasks and workflows.

Key Skills to Learn

- Fundamentals of agentic AI: what agents are and how they reason and act.

- Understanding the Strands framework for building AI agents.

- Setting up an agent pipeline: from input intake to output or action.

- Designing tasks and actions: how to define what the agent needs to do.

- Testing and refining agent behaviour for reliability and correctness.

Project Workflow

- Install and configure the Strands environment and dependencies.

- Define a simple task you want your agent to perform (e.g. information retrieval, data summarization, simple automation).

- Build the agent logic: define the inputs, the expected actions or outputs, and how the agent processes requests.

- Run and test the agent: feed it sample input, observe the outputs, and evaluate correctness.

- Iterate and refine: adjust the prompt logic, input/output formatting, or agent behaviour for better results.
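Many agent SDKs, Strands among them, let you expose plain Python functions as tools through a decorator, with the tool's description drawn from its docstring and type hints. The sketch below imitates that idea with a hypothetical `@tool` decorator and registry built on the standard `inspect` module. It is not the Strands SDK, and the keyword-based `choose_tool` stands in for the model's own tool-selection step.

```python
import inspect

TOOL_REGISTRY = {}

def tool(fn):
    """Hypothetical decorator: register fn and derive a tool spec from its signature and docstring."""
    params = {name: p.annotation.__name__ for name, p in inspect.signature(fn).parameters.items()}
    TOOL_REGISTRY[fn.__name__] = {"fn": fn, "description": inspect.getdoc(fn), "parameters": params}
    return fn

@tool
def word_count(text: str) -> int:
    """Count the words in a piece of text."""
    return len(text.split())

@tool
def to_upper(text: str) -> str:
    """Convert text to upper case."""
    return text.upper()

def choose_tool(request):
    # Stand-in for model-driven tool selection: match tool names mentioned in the request.
    for name in TOOL_REGISTRY:
        if name.replace("_", " ") in request.lower():
            return name
    return None

def run_agent(request, payload):
    name = choose_tool(request)
    if name is None:
        return "No suitable tool; answering directly."
    return TOOL_REGISTRY[name]["fn"](payload)

print(TOOL_REGISTRY["word_count"]["description"], TOOL_REGISTRY["word_count"]["parameters"])
print(run_agent("Please give me the word count", "agents reason and act"))
```

Deriving the spec from the function itself keeps the description the model sees in sync with the code it actually calls.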

17. Building a Customized Newsletter AI Agent

This project teaches you how to build an AI-powered system that automatically generates customized newsletters. Using an agent framework, you'll create a pipeline that fetches content, summarises and formats it, and delivers a ready-to-send newsletter, automating what is traditionally a tedious, manual process.

Key Skills to Learn

- Understanding agentic AI design: goal-setting, constraint modelling, task orchestration

- Using modern frameworks (e.g. for agents + LLMs) to build workflow-based AI systems for content automation

- Automating content gathering and summarisation for dynamic content sources

- Deploying and delivering results: integrating with deployment platforms (e.g. via Replit/Streamlit) and producing output in newsletter format

- Hands-on pipeline creation: from data ingestion to the final newsletter output

Project Workflow

- Define the newsletter's objective: the content you want (e.g. a news summary, an AI-trends roundup, curated articles), the frequency, and the target audience.

- Fetch or ingest content: gather articles, news, or posts from web sources or datasets.

- Use an AI agent to process the content: summarise, filter, and format the information according to the newsletter's requirements.

- Generate the newsletter: compile the summaries into a structured newsletter layout.

- Deploy the system, optionally on a platform (e.g. a simple web app), so you can trigger newsletter generation and delivery easily.
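The fetch → filter → summarise → format stages can be sketched as small functions. The article records, the topic filter, and the first-sentence "summariser" below are hypothetical placeholders (in the project, an LLM agent does the summarising); the output is a Markdown digest you could render in a simple web app.

```python
from datetime import date

ARTICLES = [  # hypothetical fetched items
    {"title": "New open-source agent framework released", "published": date(2025, 6, 2),
     "body": "A new framework simplifies multi-agent workflows. It ships with tracing tools."},
    {"title": "Quarterly earnings roundup", "published": date(2025, 6, 3),
     "body": "Several retailers reported earnings. Margins were mixed."},
    {"title": "Benchmark for AI agents updated", "published": date(2025, 5, 1),
     "body": "The benchmark adds harder tasks. Scores dropped across the board."},
]

def is_relevant(article, topics, since):
    """Keep recent articles that mention at least one topic keyword."""
    text = (article["title"] + " " + article["body"]).lower()
    return article["published"] >= since and any(t in text for t in topics)

def summarize(body):
    # Stand-in for an LLM summariser: keep only the first sentence.
    return body.split(". ")[0].rstrip(".") + "."

def build_newsletter(articles, topics, since, title="AI Weekly"):
    picked = sorted((a for a in articles if is_relevant(a, topics, since)),
                    key=lambda a: a["published"], reverse=True)  # newest first
    lines = [f"# {title} ({since.isoformat()} onwards)", ""]
    for a in picked:
        lines.append(f"- **{a['title']}** ({a['published'].isoformat()}): {summarize(a['body'])}")
    return "\n".join(lines)

newsletter = build_newsletter(ARTICLES, topics=["agent"], since=date(2025, 6, 1))
print(newsletter)
```

Keeping filtering separate from summarisation means you only pay for LLM calls on articles that will actually appear in the issue.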

18. Adaptive Email Agents with DSPy

This project teaches you how to build adaptive, context-aware email agents using DSPy. Unlike fixed, prompt-based responders, these agents dynamically select relevant context, retrieve past interactions, optimize their prompts, and generate polished email replies automatically. The focus is on making email automation smarter, more adaptive, and more reliable using DSPy's structured framework.

Key Skills to Learn

- Designing adaptive agents that retrieve, filter, and use context intelligently

- Understanding DSPy workflows for building robust LLM pipelines

- Implementing context-engineering techniques: context selection, compression, and relevance filtering

- Using DSPy optimization techniques (like MePro-style refinement) to improve output quality

- Automating email responses end-to-end: reading inputs, retrieving context, generating coherent replies

Project Workflow

- Set up the DSPy environment and understand its core workflow components.

- Build the context-handling logic: how the agent selects emails, threads, and relevant information from past conversations.

- Create the adaptive email pipeline: retrieval → prompt formation → optimization → response generation.

- Test the agent on sample email threads and evaluate the quality, tone, and relevance of its responses.

- Refine the agent by tuning context rules and improving prompt-optimization strategies for more adaptive behaviour.
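Context selection and compression can be prototyped before bringing in DSPy. This hypothetical helper ranks past emails by word overlap with the incoming message, drops irrelevant ones, and packs the most relevant into a fixed word budget. Its output would then be passed to the model as an input field (for example, a `context` field in a DSPy signature). Word overlap is a deliberately simple stand-in for embedding-based retrieval.

```python
import re

def tokens(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def select_context(incoming, past_emails, budget_words=40, min_overlap=1):
    """Rank past emails by word overlap with the incoming one, then pack the best into a word budget."""
    query = tokens(incoming)
    scored = []
    for email in past_emails:
        overlap = len(query & tokens(email))
        if overlap >= min_overlap:  # relevance filter
            scored.append((overlap, email))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    selected, used = [], 0
    for _, email in scored:
        n = len(email.split())
        if used + n > budget_words:
            continue  # skip emails that would overflow the budget (a crude form of compression)
        selected.append(email)
        used += n
    return selected

past = [  # hypothetical mailbox history
    "Invoice 4521 was paid on March 3.",
    "Our office will be closed on Friday.",
    "Please resend invoice 4521 with the corrected VAT number and the updated billing address for the Berlin office.",
]
incoming = "Hi, can you confirm whether invoice 4521 has been paid?"
print(select_context(incoming, past))
print(select_context(incoming, past, budget_words=10))
```

The budget is the lever that matters: tightening it forces the agent to keep only the most relevant history, which is the trade-off the optimization step then tunes.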

19. Building an Agentic AI System with Bedrock

This project shows you how to build production-ready agentic AI systems using Amazon Bedrock as the backend. You'll learn how to combine multi-agent design, managed LLM services, orchestration, and deployment to create intelligent, context-aware agents that can reason, collaborate, and execute complex workflows, all without heavy infrastructure overhead.

Key Skills to Learn

- Fundamentals of agentic AI systems: what makes an agentic system different from simple LLM apps

- How to use Bedrock for agents: creating agents, setting up agent orchestration, and leveraging managed AI services

- Multi-agent orchestration: designing workflows where multiple agents collaborate to solve tasks

- Integrating external tools/APIs with agents: enabling agents to interact with data stores, databases, or other services for real-world use cases

- Building scalable, production-ready AI systems by combining agents with managed cloud infrastructure

Project Workflow

- Start by understanding the concept: what "agentic AI" is, and how Bedrock supports building such systems.

- Design the agent architecture: define the number of agents, their roles, and how they communicate or collaborate to achieve goals.

- Set up agents on Bedrock: configure and initialize agents using Bedrock's agent-management capabilities.

- Integrate the required external tools/services (APIs, databases, etc.) as per task requirements, so agents can fetch data, persist state, or interact with external systems.

- Implement orchestration logic so agents coordinate: pass context/state, trigger sub-agents, and handle dependencies.

- Test the full agentic workflow end-to-end: feed inputs, let agents collaborate, and check outputs.

- Iterate to refine logic, error handling, orchestration, and integration to make the system robust and production-ready.
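The orchestration step (passing state, triggering agents in order, and handling tool failures) can be sketched independently of Bedrock. In this minimal sketch each agent is a plain Python function and `call_model` is a stub standing in for a managed LLM call; in a real Bedrock build that stub might wrap a Bedrock model or agent invocation, which is an assumption not shown here. The agent names, the `lookup_order` tool, the order-ID pattern, and the shared-state dictionary are all hypothetical.

```python
import re
from typing import Any, Callable, Dict, List

State = Dict[str, Any]

# Hypothetical data store and tool registry; real tools would call APIs or databases.
ORDERS = {"A100": "shipped", "B200": "processing"}


def lookup_order(order_id: str) -> str:
    if order_id not in ORDERS:
        raise ValueError(f"unknown order {order_id}")
    return ORDERS[order_id]


TOOLS: Dict[str, Callable[[str], str]] = {"lookup_order": lookup_order}


def call_model(prompt: str) -> str:
    """Stub for a managed LLM call (where a Bedrock request would go)."""
    return f"Answer based on: {prompt}"


def planner_agent(state: State) -> State:
    """Decides whether a tool is needed, e.g. when the request mentions an order ID."""
    match = re.search(r"\b[A-Z]\d{3}\b", state["request"])
    state["plan"] = {"tool": "lookup_order", "arg": match.group()} if match else None
    return state


def tool_agent(state: State) -> State:
    """Executes the planned tool call and records failures instead of crashing."""
    plan = state["plan"]
    state["tool_result"] = None
    if plan is not None:
        try:
            state["tool_result"] = TOOLS[plan["tool"]](plan["arg"])
        except ValueError as exc:
            state["error"] = str(exc)
    return state


def writer_agent(state: State) -> State:
    """Turns the shared state into a user-facing reply."""
    if "error" in state:
        state["reply"] = f"Sorry, I could not complete that: {state['error']}"
    elif state["tool_result"] is None:
        state["reply"] = call_model(f"General question: {state['request']}")
    else:
        state["reply"] = call_model(f"Order status is {state['tool_result']}")
    return state


PIPELINE: List[Callable[[State], State]] = [planner_agent, tool_agent, writer_agent]


def orchestrate(request: str) -> State:
    """Runs agents in dependency order, passing one shared state dict between them."""
    state: State = {"request": request, "trace": []}
    for agent in PIPELINE:
        state = agent(state)
        state["trace"].append(agent.__name__)
    return state


print(orchestrate("Where is order A100?")["reply"])
```

Keeping all context in one explicit state object makes each agent easy to test in isolation and gives you a `trace` to inspect when a multi-agent run goes wrong.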

20. Introduction to CrewAI: Building a Researcher Assistant Agent

This project teaches you how to build a "Researcher Assistant" AI agent using CrewAI. You learn how agents are defined, how they collaborate within a crew, and how to automate research tasks such as information gathering, summarization, and structured note creation. It's the perfect starting point for understanding CrewAI's agent-based workflow.

Key Skills to Learn

- Fundamentals of agentic AI and how CrewAI structures agents, tasks, and crews

- Defining agent roles and responsibilities within a research workflow

- Using CrewAI components to orchestrate multi-step research tasks

- Automating research tasks such as data retrieval, summarization, note-making, and report generation

- Building a functional Research Assistant that can handle end-to-end research prompts

Project Workflow

- Understand CrewAI's architecture: how agents, tasks, and crews interact to form a workflow.

- Define the Research Assistant Agent's scope: what information it should gather, summarize, or compile.

- Set up agents and tools within CrewAI, assigning each agent a clear role within the research flow.

- Assemble your agents into a crew so they can collaborate and pass information between steps.

- Run the agent on a research prompt: observe how it retrieves data, summarizes content, and generates structured output.

- Refine agent prompts, behaviour, or crew structure to improve accuracy and output quality.
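To make the agent/task/crew vocabulary concrete, here is a dependency-free sketch of how a sequential crew can pass each task's output to the next task as context. It is not CrewAI's API: the `Agent`, `Task`, and `Crew` dataclasses below are hypothetical stand-ins, and `fake_llm` is a stub where a real model call would go. CrewAI's own classes are built around similar ideas (agents with roles and goals, tasks assigned to agents, a crew that runs them), so check its documentation for the actual constructors and parameters.

```python
from dataclasses import dataclass, field
from typing import Callable, List

LLM = Callable[[str], str]


def fake_llm(prompt: str) -> str:
    """Stub model: echoes the prompt's first and last lines so the data flow is visible."""
    lines = prompt.splitlines()
    return f"{lines[0]} | {lines[-1]}"


@dataclass
class Agent:
    role: str
    goal: str
    llm: LLM = fake_llm

    def perform(self, description: str, context: str) -> str:
        prompt = (f"{self.role}: {description}\n"
                  f"Goal: {self.goal}\n"
                  f"Context: {context or 'none'}")
        return self.llm(prompt)


@dataclass
class Task:
    description: str  # may contain a {topic} placeholder
    agent: Agent


@dataclass
class Crew:
    tasks: List[Task]
    log: List[str] = field(default_factory=list)

    def run(self, topic: str) -> str:
        """Run tasks sequentially; each task sees the previous task's output as context."""
        context = ""
        for task in self.tasks:
            context = task.agent.perform(task.description.format(topic=topic), context)
            self.log.append(f"{task.agent.role} -> {context}")
        return context


researcher = Agent("Researcher", "Find key facts")
writer = Agent("Writer", "Summarize into notes")
crew = Crew([
    Task("Collect facts about {topic}", researcher),
    Task("Write structured notes on {topic}", writer),
])
result = crew.run("vector databases")
print(result)
```

The final output contains the researcher's output inside the writer's context, which is exactly the hand-off you want to verify when you refine prompts or restructure the crew.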

Applied / Domain-Specific AI

21. No-Code Predictive Analytics with Orange

One of the most beginner-friendly data science projects, this one teaches you how to perform predictive analytics using Orange. For those unaware, Orange is a fully no-code, drag-and-drop data-mining platform. You'll learn to build machine-learning workflows, run experiments, compare models, and extract insights from data without writing a single line of code. It's ideal for learners who want to understand ML concepts through visual, interactive workflows rather than programming.

Key Skills to Learn

- Core machine-learning concepts: supervised & unsupervised learning

- Data preprocessing and feature exploration

- Building regression, classification, and clustering models

- Model evaluation: accuracy, RMSE, train-test split, cross-validation

- Visual, workflow-based ML experimentation with Orange

Project Workflow

- Start with the problem statement: understand what you want to predict using your dataset.

- Load your data into Orange using its simple drag-and-drop widgets.

- Preprocess your dataset by handling missing values, selecting features, and visualizing patterns.

- Choose your ML approach: regression, classification, or clustering, depending on your task.

- Experiment with multiple models by connecting different model widgets and observing how each performs.

- Evaluate the results using built-in evaluation widgets, comparing accuracy or error metrics.

- Interpret the insights and learn how predictive analytics can guide decision-making in real-world scenarios.
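For reference when reading Orange's evaluation output, these are the standard definitions of two of the metrics listed above, for $n$ test examples with true values $y_i$ and predictions $\hat{y}_i$:

$$
\text{Accuracy} = \frac{1}{n}\sum_{i=1}^{n} \mathbf{1}\left[\hat{y}_i = y_i\right],
\qquad
\text{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n} \left(y_i - \hat{y}_i\right)^2}
$$

Accuracy applies to classification (higher is better); RMSE applies to regression and is expressed in the same units as the target (lower is better). For example, prediction errors of 1, −1, and 2 give $\text{RMSE} = \sqrt{(1 + 1 + 4)/3} = \sqrt{2} \approx 1.41$. Under $k$-fold cross-validation, a metric is typically computed on each held-out fold and then averaged.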

22. Generative AI on AWS (Case Study Project)

This project walks you through building generative AI applications on cloud infrastructure using AWS services. You'll learn how to leverage AWS's AI/ML stack, including foundation model services, inference endpoints, and AI-driven tools. This will help you learn how to build, host, and deploy GenAI apps in a scalable, production-ready environment.

Key Skills to Learn

- Working with AWS AI/ML services, especially SageMaker and Amazon Bedrock

- Building and deploying generative AI applications (text, language, possibly multimodal) on AWS

- Integrating AWS tools/services (model hosting, inference, storage, API endpoints)

- Managing real-world deployment constraints: scalability, resource management, environment setup

- Understanding cloud-based ML workflows: from model selection to deployment and inference

Project Workflow

- Define your generative AI use case: decide what kind of GenAI app you want (for example: text generation, summarisation, content creation).

- Select models through AWS services: use Bedrock (or SageMaker) to pick or load foundation/pre-trained models suitable for your use case.

- Configure cloud infrastructure: set up compute resources, storage (for data and model artifacts), and inference endpoints through AWS.

- Deploy the model to AWS: host the model on AWS and create endpoints or APIs so the model can serve real requests.

- Integrate input/output pipelines: manage user inputs (text, prompts, data), feed them to the model endpoint, and handle generated outputs.

- Test and iterate on the system: run generative tasks, check results for correctness, latency, and reliability; tweak parameters or prompts as needed.

- Scale and optimize the deployment: make sure the system is production-ready by addressing security, efficient resource usage, cost optimization, and reliability.
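The input/output integration and reliability steps can be sketched as a thin wrapper around a model endpoint. This sketch assumes Amazon Bedrock's Converse API as exposed by boto3's `bedrock-runtime` client (a `converse` method taking `modelId`, `messages`, and `inferenceConfig`); the model ID, retry settings, and helper names are placeholders. A fake client is used so the example runs without AWS credentials; verify the request and response shapes against the current boto3 documentation before relying on them.

```python
import time
from typing import Any, Dict, Protocol


class ConverseClient(Protocol):
    """Anything with a Bedrock-style `converse` method (e.g. boto3.client("bedrock-runtime"))."""
    def converse(self, **kwargs: Any) -> Dict[str, Any]: ...


def build_request(prompt: str, model_id: str, max_tokens: int = 512,
                  temperature: float = 0.5) -> Dict[str, Any]:
    """Validate user input and build Converse-style keyword arguments."""
    prompt = prompt.strip()
    if not prompt:
        raise ValueError("prompt must not be empty")
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": temperature},
    }


def extract_text(response: Dict[str, Any]) -> str:
    """Pull the generated text out of a Converse-style response."""
    blocks = response["output"]["message"]["content"]
    return "".join(block.get("text", "") for block in blocks)


def generate(client: ConverseClient, prompt: str, model_id: str,
             retries: int = 3, backoff_s: float = 0.5) -> Dict[str, Any]:
    """Call the endpoint with exponential backoff between attempts and report latency."""
    if retries < 1:
        raise ValueError("retries must be >= 1")
    request = build_request(prompt, model_id)
    for attempt in range(1, retries + 1):
        start = time.perf_counter()
        try:
            response = client.converse(**request)
        except Exception:  # in production, catch specific throttling/service errors
            if attempt == retries:
                raise
            time.sleep(backoff_s * 2 ** (attempt - 1))
            continue
        return {"text": extract_text(response),
                "latency_s": time.perf_counter() - start,
                "attempts": attempt}
    raise AssertionError("unreachable")


class FlakyFakeClient:
    """Test double: fails once, then returns a canned Converse-style response."""
    def __init__(self) -> None:
        self.calls = 0

    def converse(self, **kwargs: Any) -> Dict[str, Any]:
        self.calls += 1
        if self.calls == 1:
            raise RuntimeError("throttled")
        text = kwargs["messages"][0]["content"][0]["text"]
        return {"output": {"message": {"role": "assistant",
                                       "content": [{"text": f"Summary of: {text}"}]}}}


result = generate(FlakyFakeClient(), "Summarise our Q3 notes", "example-model-id", backoff_s=0.0)
print(result["text"], result["attempts"])
```

Injecting the client keeps the pipeline testable offline; in deployment you would pass a real boto3 client and tune retries and timeouts to your latency budget.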

23. Building a Sentiment Classification Pipeline with DistilBERT and Airflow

This project teaches you how to build an end-to-end sentiment-analysis pipeline using a modern transformer model (DistilBERT) combined with Apache Airflow for workflow automation. You'll work with real review data, clean and preprocess it, fine-tune a transformer for sentiment prediction, and then orchestrate the entire pipeline so it runs in a structured, automated way. You also build a simple local interface so users can enter text and instantly get sentiment results.

Key Skills to Learn

- Using DistilBERT for transformer-based sentiment classification

- Text preprocessing and cleaning for real-world review datasets

- Workflow orchestration with Airflow: DAG creation, task scheduling, dependencies

- Automating ML pipelines end-to-end (data → model → inference)

- Building a simple local prediction interface for user-friendly model interaction

Project Workflow

- Load and clean the review dataset; preprocess the text and prepare it for transformer inputs.

- Fine-tune or train a DistilBERT-based sentiment classifier on the cleaned data.

- Create an Airflow DAG that automates all steps: ingestion, preprocessing, inference, and output generation.

- Build a minimal local application to enter new text and retrieve sentiment predictions from the model.

- Test the full pipeline end-to-end and refine steps for stability, accuracy, and efficiency.
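Airflow's core idea is that tasks form a directed acyclic graph (DAG) and each task runs only after its upstream dependencies finish. The sketch below reproduces that ordering with Python's standard-library `graphlib.TopologicalSorter` (Python 3.9+), so it runs without an Airflow installation; in the real project each function would become an Airflow task, dependencies would be declared in the DAG file (for example with the `>>` operator), and data would move through XCom or storage rather than a shared dict. The keyword-based `predict` function is a hypothetical placeholder for the fine-tuned DistilBERT model, and the task names and sample reviews are illustrative.

```python
import re
from graphlib import TopologicalSorter
from typing import Any, Dict, List, Tuple

POSITIVE = {"great", "love", "excellent", "good"}
NEGATIVE = {"bad", "terrible", "hate", "poor"}


def clean_text(text: str) -> str:
    """Strip HTML tags and URLs, lowercase, drop punctuation, collapse whitespace."""
    text = re.sub(r"<[^>]+>", " ", text)
    text = re.sub(r"https?://\S+", " ", text)
    text = re.sub(r"[^a-z0-9' ]+", " ", text.lower())
    return re.sub(r"\s+", " ", text).strip()


def predict(text: str) -> str:
    """Placeholder for DistilBERT inference: counts sentiment keywords (ties -> positive)."""
    words = text.split()
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    return "positive" if score >= 0 else "negative"


def ingest(ctx: Dict[str, Any]) -> None:
    ctx["raw"] = ["<p>I LOVE this phone!</p>", "Terrible battery... see http://example.com"]


def preprocess(ctx: Dict[str, Any]) -> None:
    ctx["clean"] = [clean_text(t) for t in ctx["raw"]]


def infer(ctx: Dict[str, Any]) -> None:
    ctx["preds"] = [predict(t) for t in ctx["clean"]]


def output(ctx: Dict[str, Any]) -> None:
    ctx["report"] = list(zip(ctx["clean"], ctx["preds"]))


# Each task maps to the set of upstream tasks it depends on.
DAG = {"preprocess": {"ingest"}, "infer": {"preprocess"}, "output": {"infer"}}
TASKS = {"ingest": ingest, "preprocess": preprocess, "infer": infer, "output": output}


def run_dag() -> Tuple[List[str], Dict[str, Any]]:
    """Execute tasks in a dependency-respecting order, like a single DAG run."""
    ctx: Dict[str, Any] = {}
    order = list(TopologicalSorter(DAG).static_order())
    for name in order:
        TASKS[name](ctx)
    return order, ctx


order, ctx = run_dag()
print(order)
print(ctx["report"])
```

Keeping each step a small pure function makes the later move to Airflow mostly a matter of wrapping functions as tasks and declaring the same dependencies.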

24. OpenEngage: Build a Complete AI-Driven Marketing Engine

This project/course shows you how to build an end-to-end AI-powered marketing engine that automates personalised customer journeys, engagement, and campaign management. You learn how large language models (LLMs) and automation can transform traditional marketing workflows into scalable, data-driven, and personalised marketing systems.

Key Skills to Learn

- How LLMs can be used to generate personalised content and tailor marketing messages at scale

- Designing and orchestrating customer journeys: mapping user behaviours to automated engagement flows

- Building AI-driven marketing pipelines: data capture, tracking user behaviour, segmentation, and multi-channel delivery (email, messages, etc.)

- Integrating AI-based personalization with traditional marketing/CRM workflows to optimize engagement and conversions

- Understanding how to build an AI marketing engine that reduces manual effort and scales with the user base

Project Workflow

- Understand the role of AI and LLMs in modern marketing systems and how they can improve personalization and engagement.

- Define the marketing objectives and customer journey: which campaigns, which user interactions, and what personalization logic.

- Build or configure the marketing engine's components: data capture/tracking, user segmentation, content generation via LLMs, and delivery mechanisms.

- Design automated pipelines that trigger personalised messages based on user behaviour or segments, leveraging AI for content and timing.

- Test the pipelines with sample users/data, monitor performance (engagement, response rates), and refine segmentation or content logic.
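The segmentation-and-trigger logic described above can be prototyped with plain rules before any LLM is involved. In this minimal sketch the segment names, the 30-day inactivity threshold, the event names, and the message templates are all hypothetical; `personalise` fills a template where the real engine would prompt an LLM to write the copy.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional, Tuple


@dataclass
class User:
    name: str
    last_active: date
    events: List[str] = field(default_factory=list)


def segment(user: User, today: date) -> str:
    """Rule-based segmentation from behaviour events and recency (first match wins)."""
    if "cart_add" in user.events and "purchase" not in user.events:
        return "cart_abandoner"
    if (today - user.last_active).days > 30:
        return "inactive"
    if "signup" in user.events and "purchase" not in user.events:
        return "new_user"
    return "engaged"


TEMPLATES = {
    "cart_abandoner": "Hi {name}, you left something in your cart. Want to finish checking out?",
    "inactive": "Hi {name}, we miss you! Here is what's new since your last visit.",
    "new_user": "Welcome aboard, {name}! Here are three tips to get started.",
}


def personalise(user: User, seg: str) -> Optional[str]:
    """Stand-in for LLM copy generation; engaged users get no automated message."""
    template = TEMPLATES.get(seg)
    return template.format(name=user.name) if template else None


def run_campaign(users: List[User], today: date) -> List[Tuple[str, str, str]]:
    """Trigger step: decide who receives which message on a given day."""
    outbox = []
    for user in users:
        seg = segment(user, today)
        message = personalise(user, seg)
        if message:
            outbox.append((user.name, seg, message))
    return outbox


today = date(2025, 12, 1)
users = [
    User("Asha", date(2025, 11, 30), ["signup", "cart_add"]),
    User("Ben", date(2025, 9, 1), ["signup", "purchase"]),
    User("Chen", date(2025, 11, 28), ["signup"]),
    User("Dev", date(2025, 11, 29), ["signup", "purchase"]),
]
for entry in run_campaign(users, today):
    print(entry)
```

Rule order matters here: a user who both abandoned a cart and went inactive is treated as a cart abandoner. Making that priority explicit is one of the decisions worth revisiting when you refine segmentation.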

25. How to Build an Image Generator Web App with Zero Coding

This project guides you to build a web application that generates images using generative AI, all without writing any code. It's a drag-and-drop, no-programming route for anyone who wants to launch an image generator web app quickly, using prebuilt components and interfaces.

Key Skills to Learn

- Understanding generative AI for images: how AI models can create visuals from prompts

- Using no-code or low-code tools to build web applications that integrate AI image generation

- Designing the user interface and user flow for a web app without coding

- Deploying a functioning web app that connects to an AI backend for real-time image generation

- Managing image input/output, prompt handling, and user requests in a no-code environment

Project Workflow

- Choose a no-code/low-code platform or tool that supports AI image generation + web-app building.

- Configure the backend with a (pre-trained) generative AI model that can generate images based on user prompts.

- Design the front end using drag-and-drop UI components: a prompt input field, a generate button, and a display area for results.

- Link the front end to the AI backend: ensure user inputs are passed correctly, and generated images are returned and displayed.

- Test the app thoroughly by submitting different prompts, checking output images, and verifying usability and performance.

- Optionally deploy/publish the web app so others can use it (on a hosting platform or a web-app hosting service).

26. GenAI to Build Exciting Games

This project teaches you how to build fun and interactive games powered by Generative AI. You'll explore how AI can drive game logic, generate dynamic content, respond to player inputs, and create engaging experiences, all without needing an advanced game-development background. It's a creative, hands-on way to understand how GenAI can be used beyond traditional data or text applications.

Key Skills to Learn

- Applying Generative AI models to design game mechanics

- Integrating AI tools/APIs to create dynamic, responsive gameplay

- Designing user interaction flows for AI-powered games

- Handling prompt-based generation and diverse user inputs

- Building lightweight interactive applications using AI as the core engine

Project Workflow

- Start by choosing a simple game concept where AI generation adds value, for example a guessing game, storytelling challenge, or AI-generated puzzle.

- Define the game loop: how the user interacts, what input they give, and what the AI generates in response.

- Integrate a generative AI model to produce dynamic content, hints, storylines, or choices.

- Build the interaction flow: capture user input, call the AI model, format outputs, and return results to the player.

- Test the game with different inputs, refine prompts for better responses, and improve the overall gameplay experience.
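A word-guessing game is a small way to see the game loop from the workflow above: capture input, call the model, format the output, repeat. In this sketch `generate_hint` is a hypothetical stub that returns canned hints so the loop is testable offline; in the real project it would call a generative model with a prompt along the lines described in its docstring. The secret word, hint wording, and turn limit are illustrative, and the hint function is injectable so a real model call can be swapped in.

```python
from typing import Callable, Iterable, List


def generate_hint(secret: str, turn: int) -> str:
    """Stub for a GenAI call. A real version might send a prompt such as
    'Give a one-line hint for the word X without saying it' to an LLM."""
    canned = [
        f"It has {len(secret)} letters.",
        f"It starts with '{secret[0]}'.",
        f"It ends with '{secret[-1]}'.",
    ]
    return canned[min(turn, len(canned) - 1)]


def play(secret: str, guesses: Iterable[str], max_turns: int = 3,
         hint_fn: Callable[[str, int], str] = generate_hint) -> List[str]:
    """Run the game loop over a sequence of player guesses; return the transcript."""
    transcript: List[str] = []
    for turn, guess in enumerate(guesses):
        if turn >= max_turns:
            break
        guess = guess.strip().lower()  # normalise free-form player input
        if guess == secret:
            transcript.append(f"'{guess}' is correct! You won in {turn + 1} turn(s).")
            return transcript
        transcript.append(f"'{guess}' is not it. Hint: {hint_fn(secret, turn)}")
    transcript.append(f"Game over! The word was '{secret}'.")
    return transcript


for line in play("python", ["java", "perl", " Python "]):
    print(line)
```

Passing guesses as an iterable keeps the loop testable; for an interactive version you could feed it a generator that reads from `input()`.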

Conclusion

If you have managed to follow all or any of the AI and data science projects above, I'm sure you gained much more practical experience than you would have from a theoretical understanding of these topics alone. The best part: these projects cover everything from classical ML to advanced agentic systems, RAG pipelines, and even game-building with GenAI. Each project is designed to help you turn skills into real, portfolio-ready results. Whether you're just starting out or levelling up as a professional, these projects are sure to help you understand how modern AI systems work in a whole new way.

This is your 2025 blueprint for learning AI and data science. Now dive into the ones that excite you most, follow the structured workflows, and create something extraordinary.


![25+ AI and Data Science Solved Projects [2025 Wrap-up]](https://i1.wp.com/cdn.analyticsvidhya.com/wp-content/uploads/2025/12/25-AI-and-Data-Science-Solved-Projects-2025-Wrap-up.png?w=696&resize=696,0&ssl=1)