The term harness has emerged as a shorthand for everything in an AI agent except the model itself: Agent = Model + Harness. That is a very broad definition, and therefore worth narrowing down for common categories of agents. I want to take the liberty here of defining its meaning in the bounded context of using a coding agent. In coding agents, part of the harness is already built in (e.g. via the system prompt, the chosen code retrieval mechanism, or even a sophisticated orchestration system). But coding agents also provide us, their users, with many features to build an outer harness specifically for our use case and system.

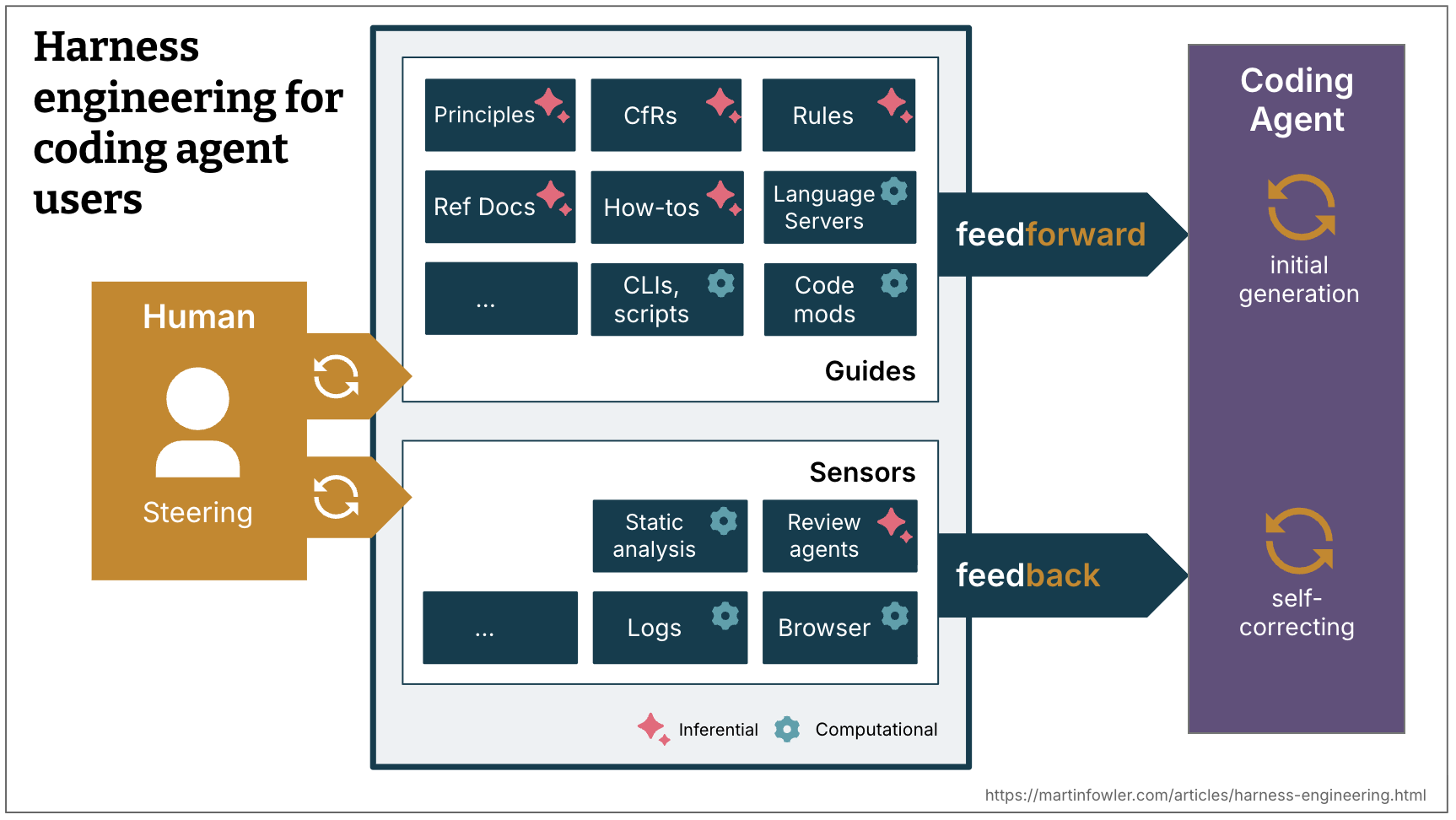

Figure 1: The term “harness” means different things depending on the bounded context.

A well-built outer harness serves two goals: it increases the probability that the agent gets it right in the first place, and it provides a feedback loop that self-corrects as many issues as possible before they even reach human eyes. Ultimately it should reduce the review toil and increase the system quality, all with the added benefit of fewer wasted tokens along the way.

Feedforward and Feedback

To harness a coding agent we both anticipate unwanted outputs and try to prevent them, and we put sensors in place to allow the agent to self-correct:

- Guides (feedforward controls): anticipate the agent’s behaviour and aim to steer it before it acts. Guides increase the probability that the agent creates good results in the first attempt.

- Sensors (feedback controls): observe after the agent acts and help it self-correct. Particularly powerful when they produce signals that are optimised for LLM consumption, e.g. custom linter messages that include instructions for the self-correction, a positive kind of prompt injection.

Used separately, you get either an agent that keeps repeating the same mistakes (feedback-only) or an agent that encodes rules but never finds out whether they worked (feedforward-only).
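To make the idea of a sensor signal optimised for LLM consumption more concrete, here is a minimal sketch of a custom check whose finding carries its own fix instructions. The threshold, the `Finding` type and the wording of the message are assumptions made for illustration, not part of any particular linter.

```python
import ast
from dataclasses import dataclass

MAX_FUNCTION_LINES = 40  # hypothetical threshold, tune per codebase


@dataclass
class Finding:
    function: str
    line: int
    message: str


def check_function_length(source: str, max_lines: int = MAX_FUNCTION_LINES) -> list[Finding]:
    """Flag overly long functions with a message written for the agent."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            length = node.end_lineno - node.lineno + 1
            if length > max_lines:
                findings.append(Finding(
                    function=node.name,
                    line=node.lineno,
                    # The message states the problem AND how to self-correct.
                    message=(
                        f"Function '{node.name}' is {length} lines long "
                        f"(limit: {max_lines}). To fix: extract cohesive steps "
                        "into private helper functions, keep the public "
                        "signature unchanged, and re-run the tests."
                    ),
                ))
    return findings
```

A plain "function too long" warning would leave the remedy to the agent's guesswork; the second half of the message is what turns the sensor output into guidance.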

Computational vs Inferential

There are two execution types of guides and sensors:

- Computational: deterministic and fast, run by the CPU. Tests, linters, type checkers, structural analysis. Run in milliseconds to seconds; results are reliable.

- Inferential: semantic analysis, AI code review, “LLM as judge”. Typically run by a GPU or NPU. Slower and more expensive; results are non-deterministic.

Computational guides increase the probability of good results with deterministic tooling. Computational sensors are cheap and fast enough to run on every change, alongside the agent. Inferential controls are of course more expensive and non-deterministic, but they allow us both to provide rich guidance and to add semantic judgment. In spite of their non-determinism, inferential sensors can particularly increase our trust when used with a strong model, or rather a model that is suitable to the task at hand.

Examples

| Control | Direction | Computational / Inferential | Example implementations |
|---|---|---|---|
| Coding conventions | feedforward | Inferential | AGENTS.md, Skills |
| Instructions on how to bootstrap a new project | feedforward | Both | Skill with instructions and a bootstrap script |
| Code mods | feedforward | Computational | A tool with access to OpenRewrite recipes |
| Structural tests | feedback | Computational | A pre-commit (or coding agent) hook running ArchUnit tests that check for violations of module boundaries |
| Instructions on how to review | feedback | Inferential | Skills |
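As an illustration of the structural test row, the following is a minimal sketch of a module boundary check. It is a simplified Python stand-in for the kind of rule an ArchUnit test would express, not ArchUnit itself; the `shop.*` package names and the `FORBIDDEN_IMPORTS` rule table are hypothetical.

```python
import ast

# Hypothetical layering rule: modules in the key package must not
# import anything from the listed packages.
FORBIDDEN_IMPORTS = {
    "shop.domain": ("shop.web", "shop.persistence"),
}


def imported_modules(source: str) -> set[str]:
    """Collect the absolute module names imported by a source file."""
    modules = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules.update(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            modules.add(node.module)
    return modules


def _in_package(module: str, package: str) -> bool:
    return module == package or module.startswith(package + ".")


def boundary_violations(module_name: str, source: str) -> list[str]:
    """Return one message per import that crosses a forbidden boundary."""
    violations = []
    for package, forbidden in FORBIDDEN_IMPORTS.items():
        if not _in_package(module_name, package):
            continue
        for imported in sorted(imported_modules(source)):
            if any(_in_package(imported, f) for f in forbidden):
                violations.append(
                    f"{module_name} must not import {imported} "
                    f"({package} may not depend on it)"
                )
    return violations
```

Run from a pre-commit or agent hook over the changed files, a non-empty result would fail the check and hand the violation messages back to the agent.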

The steering loop

The human’s job in this is to steer the agent by iterating on the harness. Whenever an issue happens multiple times, the feedforward and feedback controls should be improved to make the issue less likely to occur in the future, or even prevent it.

In the steering loop, we can of course also use AI to improve the harness. Coding agents now make it much cheaper to build more custom controls and more custom static analysis. Agents can help write structural tests, generate draft rules from observed patterns, scaffold custom linters, or create how-to guides from codebase archaeology.
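One lightweight way to decide when the harness needs another iteration is to keep a log of issues found in review and flag the ones that recur. This is only a sketch under assumptions: the issue labels and the recurrence threshold of three are made up for illustration.

```python
from collections import Counter

RECURRENCE_THRESHOLD = 3  # hypothetical: how often an issue may recur before we act


def harness_improvement_candidates(issue_log, threshold=RECURRENCE_THRESHOLD):
    """Return (issue, count) pairs that recurred often enough to justify
    a new or improved guide or sensor, most frequent first."""
    counts = Counter(issue_log)
    return [(issue, n) for issue, n in counts.most_common() if n >= threshold]
```

Each candidate then becomes a prompt for the human: is this better prevented with a guide, caught with a sensor, or both?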

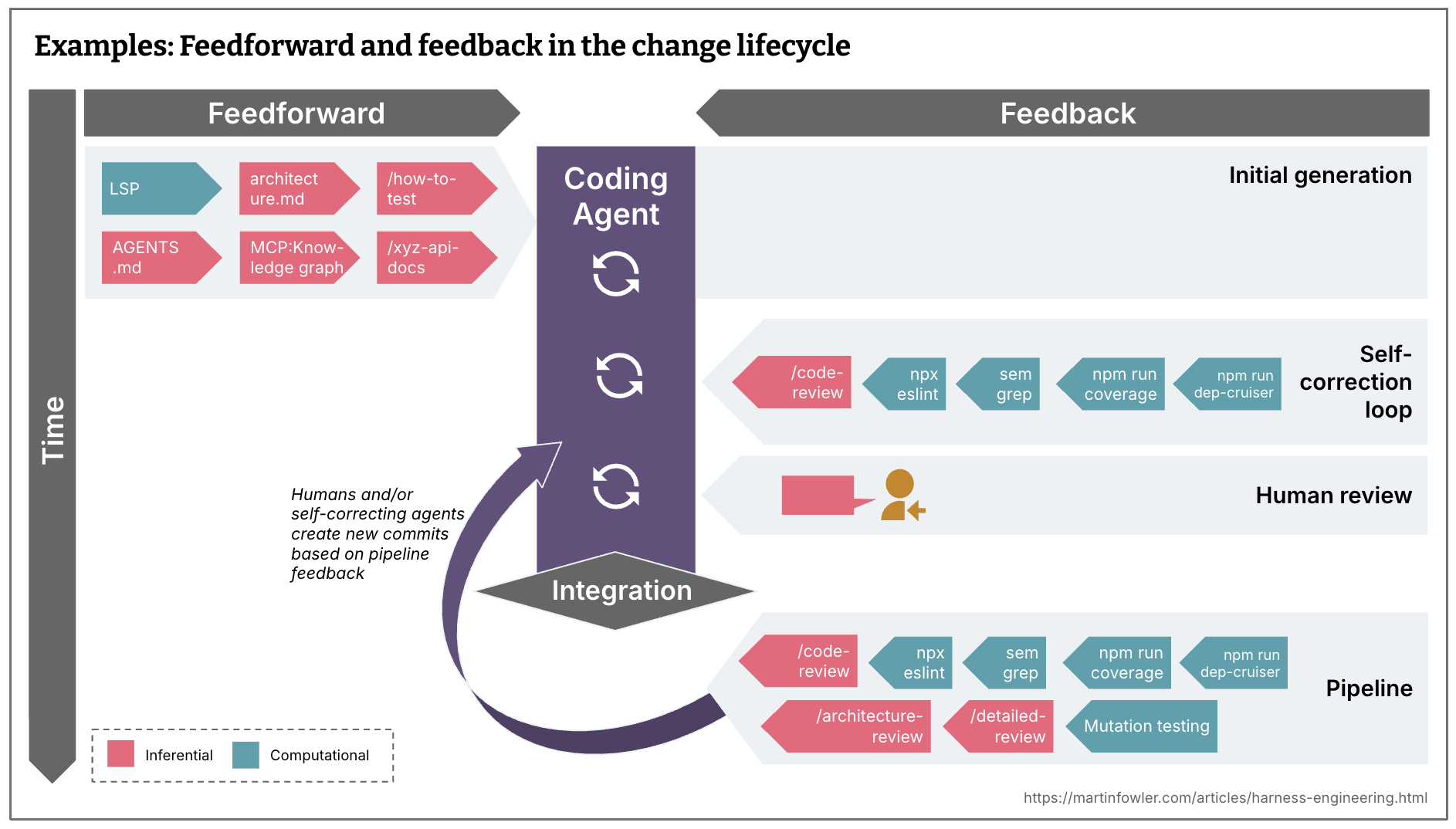

Timing: Keep quality left

Teams who are continuously integrating have always faced the challenge of spreading tests, checks and human reviews across the development timeline according to their cost, speed and criticality. When you aspire to continuously deliver, you ideally even want every commit state to be deployable. You want to have checks as far left in the path to production as possible, since the earlier you find issues, the cheaper they are to fix. Feedback sensors, including the new inferential ones, need to be distributed across the lifecycle accordingly.

Feedforward and feedback in the change lifecycle

- What is reasonably fast and should be run before integration, or even before a commit is made? (e.g. linters, fast test suites, a basic code review agent)

- What is more expensive and should therefore only be run post-integration in the pipeline, in addition to a repetition of the fast controls? (e.g. mutation testing, a broader code review that can take the bigger picture into account)
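The two questions above amount to a placement rule based on cost. A minimal sketch of such a rule, assuming a hypothetical 30-second budget for anything that runs before a commit and made-up sensor names and durations:

```python
from dataclasses import dataclass

PRE_COMMIT_BUDGET_SECONDS = 30  # hypothetical budget for pre-commit feedback


@dataclass(frozen=True)
class Sensor:
    name: str
    typical_seconds: float
    inferential: bool = False


def stages_for(sensor: Sensor) -> list[str]:
    """Fast sensors run before the commit and are repeated in the pipeline;
    expensive ones run only post-integration in the pipeline."""
    if sensor.typical_seconds <= PRE_COMMIT_BUDGET_SECONDS:
        return ["pre-commit", "pipeline"]
    return ["pipeline"]


sensors = [
    Sensor("linter", 2),
    Sensor("fast test suite", 20),
    Sensor("basic code review agent", 25, inferential=True),
    Sensor("mutation testing", 1800),
]
plan = {s.name: stages_for(s) for s in sensors}
```

In practice criticality matters as well as speed, so a real placement would weigh more than a single duration threshold.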

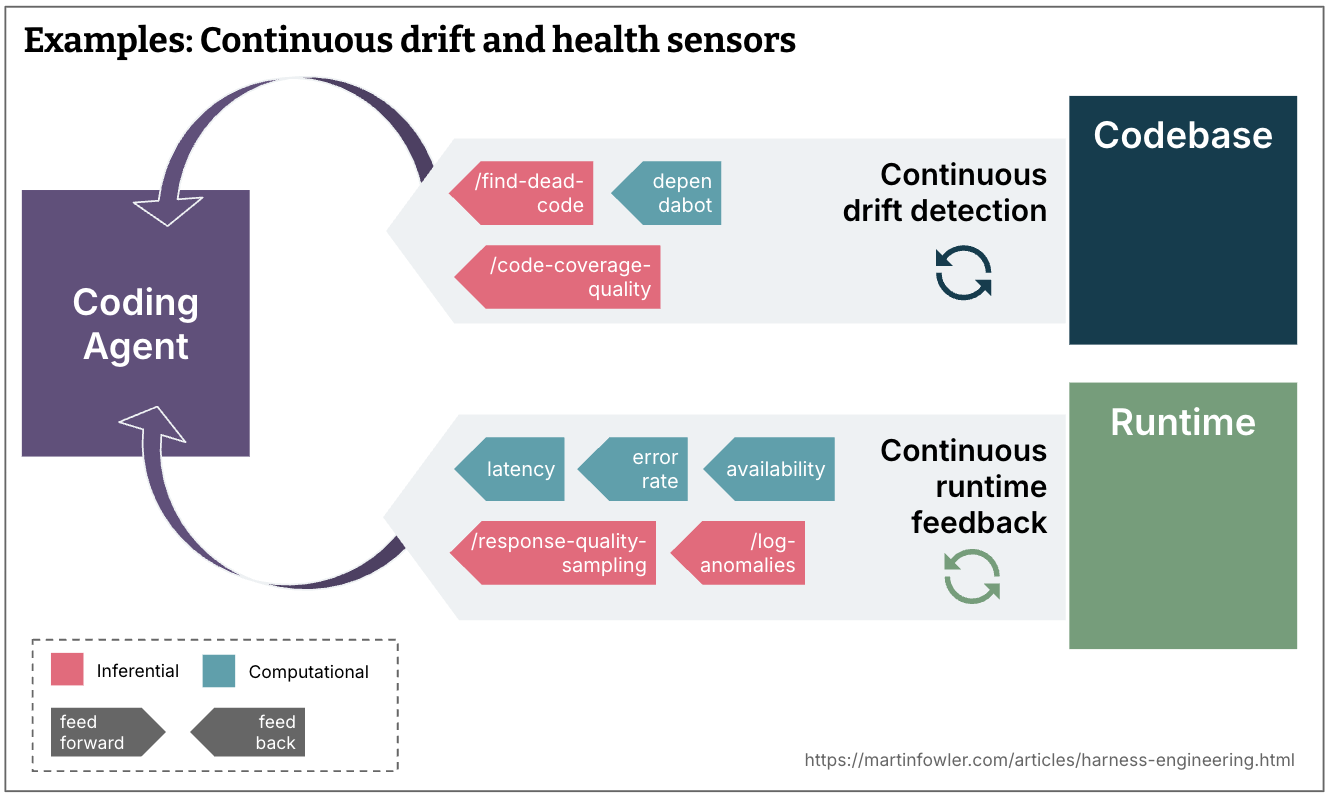

Continuous drift and health sensors

- What type of drift accumulates gradually and should be monitored by sensors running continuously against the codebase, outside the change lifecycle? (e.g. dead code detection, analysis of the quality of the test coverage, dependency scanners)

- What runtime feedback could agents be monitoring? (e.g. having them look for degrading SLOs and suggest how to improve them, or AI judges continuously sampling response quality and flagging log anomalies)

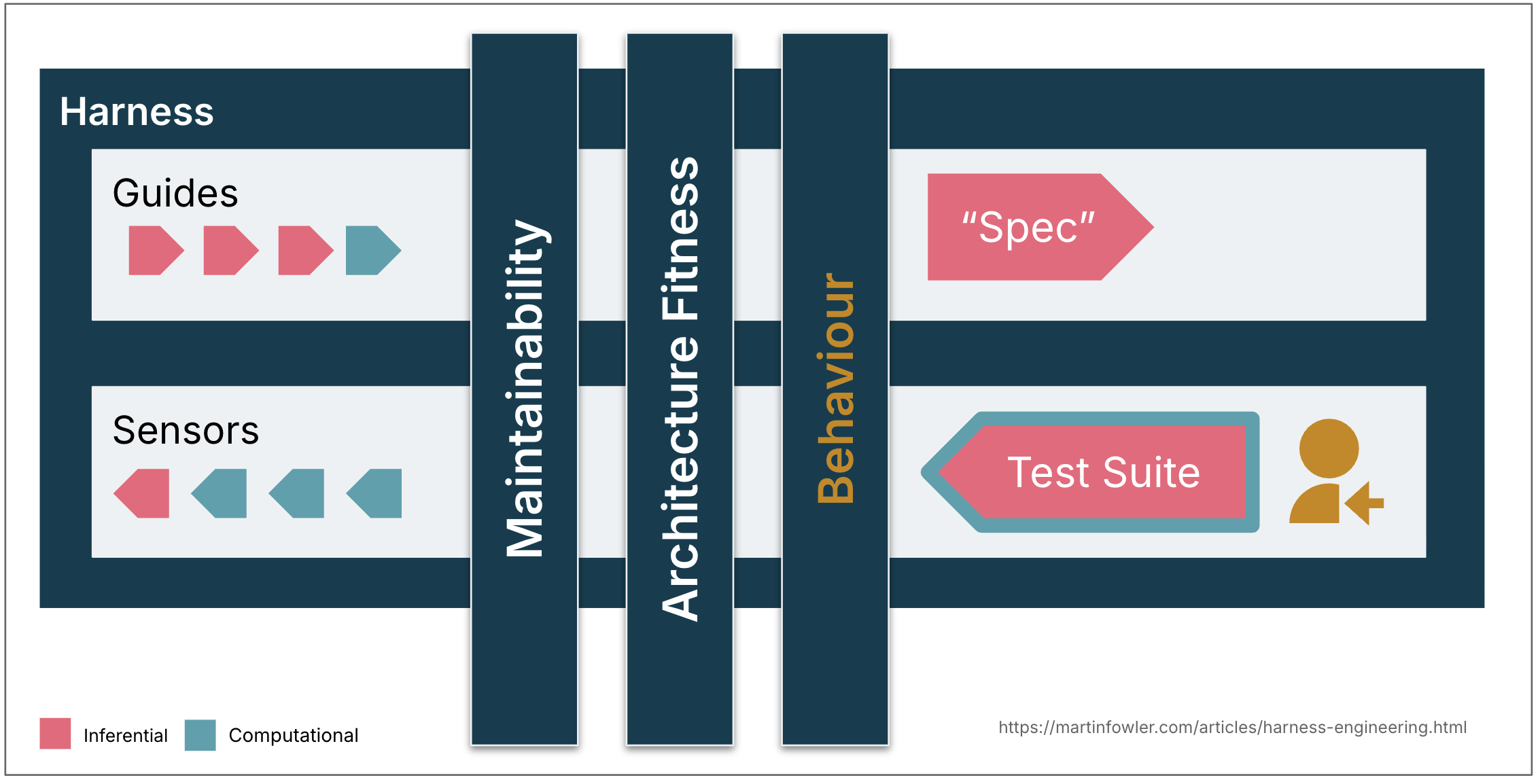

Regulation categories

The agent harness acts like a cybernetic governor, combining feedforward and feedback to regulate the codebase towards its desired state. It is useful to distinguish between multiple dimensions of that desired state, categorised by what the harness is supposed to regulate. Distinguishing between these categories helps because harnessability and complexity vary across them, and qualifying the word gives us more precise language for a term that is otherwise very generic.

The following are three categories that seem useful to me as of now:

Maintainability harness

More or less all of the examples I am giving in this article are about regulating internal code quality and maintainability. This is at the moment the easiest type of harness, as we have a lot of pre-existing tooling that we can use for it.

To reflect on how much these maintainability harness ideas increase my trust in agents, I mapped common coding agent failure modes that I had catalogued before against them.

Computational sensors catch the structural stuff reliably: duplicate code, cyclomatic complexity, missing test coverage, architectural drift, style violations. These are cheap, proven, and deterministic.

LLMs can partially address problems that require semantic judgment (semantically duplicate code, redundant tests, brute-force fixes, over-engineered solutions) but expensively and probabilistically. Not on every commit.

Neither reliably catches some of the higher-impact problems: misdiagnosis of issues, over-engineering and unnecessary features, misunderstood instructions. They will sometimes catch them, but not reliably enough to reduce supervision. Correctness is outside any sensor’s remit if the human did not clearly specify what they wanted in the first place.

Architecture fitness harness

This groups guides and sensors that define and check the architecture characteristics of the application. Basically: Fitness Functions.

Examples:

- Skills that feed forward our performance requirements, and performance tests that feed back to the agent whether it improved or degraded them.

- Skills that describe coding conventions for better observability (like logging standards), and debugging instructions that ask the agent to reflect on the quality of the logs it had available.

Behaviour harness

This is the elephant in the room: how do we guide and sense whether the application functionally behaves the way we need it to? At the moment, I see most people who give high autonomy to their coding agents do this:

- Feedforward: A functional specification (of varying levels of detail, from a short prompt to multi-file descriptions)

- Feedback: Check if the AI-generated test suite is green and has reasonably high coverage; some might even monitor its quality with mutation testing. Then combine that with manual testing.

This approach puts a lot of faith in the AI-generated tests, and that is not good enough yet. Some of my colleagues are seeing good results with the approved fixtures pattern, but it is easier to apply in some areas than others. They use it selectively where it fits; it is not a wholesale answer to the test quality problem.

So overall, we still have a lot to do to figure out good harnesses for functional behaviour that increase our confidence enough to reduce supervision and manual testing.
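A toy example shows why a green suite with high coverage is not enough on its own, and what mutation testing adds as a sensor. Everything here is made up for illustration: the function under test, the two suites, and the hand-written mutants (real mutation testing tools generate mutants automatically).

```python
from typing import Callable


def is_adult(age: int) -> bool:
    return age >= 18


# Hand-written mutants of is_adult, each a small deliberate defect.
MUTANTS: dict[str, Callable[[int], bool]] = {
    ">= replaced by >": lambda age: age > 18,
    ">= replaced by <": lambda age: age < 18,
    "always True": lambda age: True,
}


def weak_suite(fn: Callable[[int], bool]) -> bool:
    """Green on is_adult and executes its only line, but skips the boundary."""
    return fn(30) is True and fn(5) is False


def strong_suite(fn: Callable[[int], bool]) -> bool:
    """Same checks plus the boundary case age == 18."""
    return weak_suite(fn) and fn(18) is True


def surviving_mutants(suite: Callable[[Callable[[int], bool]], bool]) -> list[str]:
    """A mutant survives if the suite still passes when run against it."""
    return [name for name, mutant in MUTANTS.items() if suite(mutant)]
```

Both suites pass against `is_adult`, yet `surviving_mutants(weak_suite)` reports the boundary mutant while `surviving_mutants(strong_suite)` reports none. Fed back to the agent, the surviving mutant tells it which assertion is missing.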

Harnessability

Not every codebase is equally amenable to harnessing. A codebase written in a strongly typed language naturally has type-checking as a sensor; clearly definable module boundaries afford architectural constraint rules; frameworks like Spring abstract away details the agent does not even have to worry about and therefore implicitly increase the agent’s chances of success. Without these properties, those controls are not available to build.

This plays out differently for greenfield versus legacy. Greenfield teams can bake harnessability in from day one: technology decisions and architecture choices determine how governable the codebase will be. Legacy teams, especially with applications that have accrued a lot of technical debt, face the harder problem: the harness is most needed where it is hardest to build.

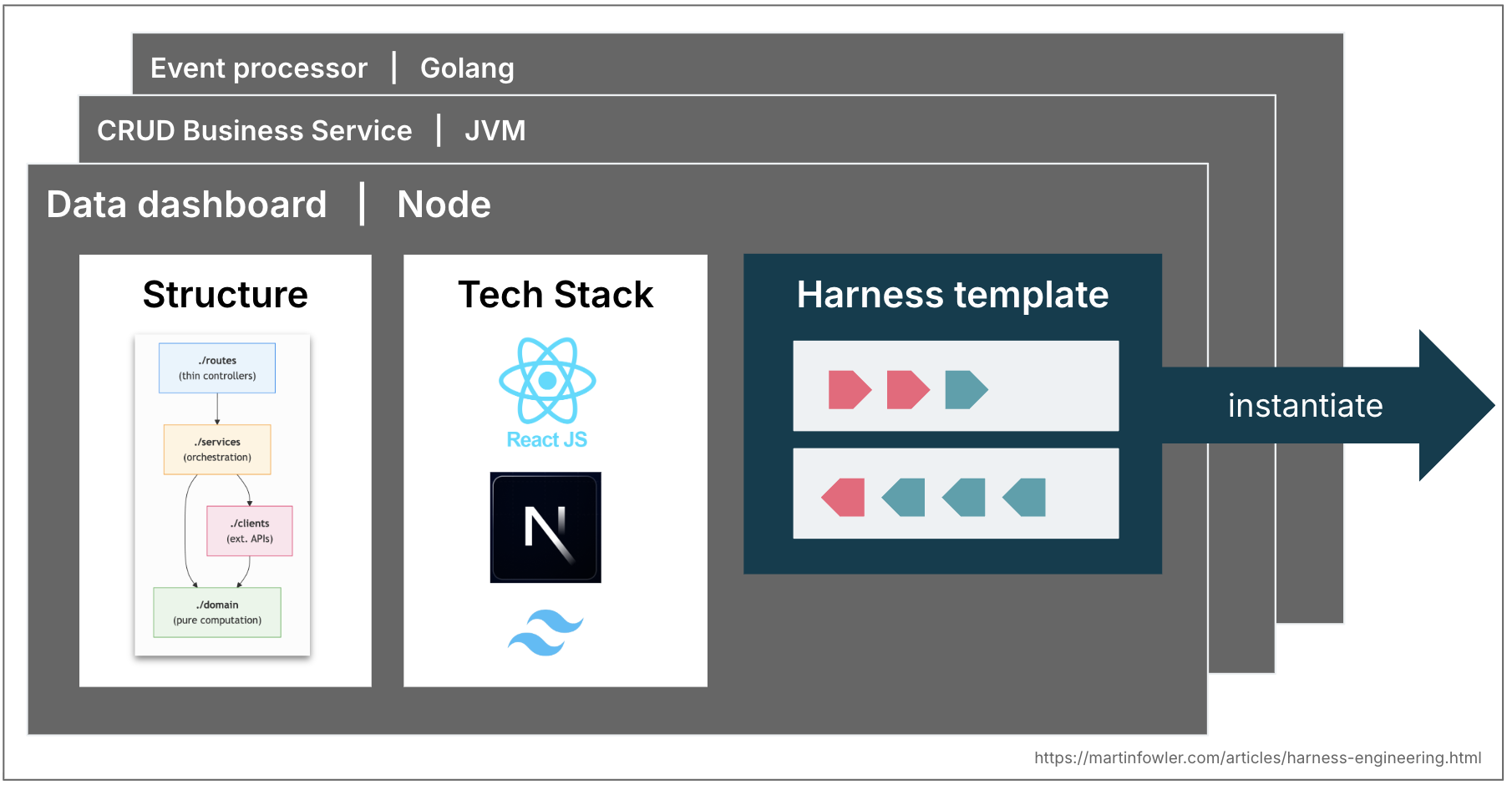

Harness templates

Most enterprises have a few common topologies of services that cover 80% of what they need: business services that expose data via APIs, event processing services, data dashboards. In many mature engineering organizations these topologies are already codified in service templates. These might evolve into harness templates in the future: a bundle of guides and sensors that leash a coding agent to the structure, conventions and tech stack of a topology. Teams may start picking tech stacks and structures partly based on what harnesses are already available for them.

We would of course face similar challenges as with service templates. As soon as teams instantiate them, they start to fall out of sync with upstream improvements. Harness templates would face the same versioning and contribution problems, maybe even worse with non-deterministic guides and sensors that are harder to test.

The role of the human

As human developers we bring our skills and experience as an implicit harness to every codebase. We have absorbed conventions and good practices, we have felt the cognitive pain of complexity, and we know that our name is on the commit. We also carry organisational alignment: awareness of what the team is trying to achieve, which technical debt is tolerated for business reasons, and what “good” looks like in this specific context. We go in small steps and at our human pace, which creates the thinking space for that experience to get triggered and applied.

A coding agent has none of this: no social accountability, no aesthetic disgust at a 300-line function, no intuition that “we don't do it that way here,” and no organisational memory. It does not know which convention is load-bearing and which is just habit, or whether the technically correct solution fits what the team is trying to do.

Harnesses are an attempt to externalise and make explicit what human developer experience brings to the table, but that can only go so far. Building a coherent system of guides, sensors and self-correction loops is expensive, so we have to prioritise with a clear goal in mind: a harness should not necessarily aim to fully eliminate human input, but to direct it to where our input is most important.

A starting point, and open questions

The mental model I have laid out here describes techniques that are already happening in practice and helps frame discussions about what we still need to figure out. Its goal is to raise the conversation above the feature level: from skills and MCP servers to how we strategically design a system of controls that gives us genuine confidence in what agents produce.

Here are some harness-related examples from the current discourse:

- An OpenAI team documented what their harness looks like: layered architecture enforced by custom linters and structural tests, and recurring “garbage collection” that scans for drift and has agents suggest fixes. Their conclusion: “Our most difficult challenges now center on designing environments, feedback loops, and control systems.”

- Stripe’s write-up about their minions describes things like pre-push hooks that run relevant linters based on a heuristic. They highlight how important “shift feedback left” is to them, and their “blueprints” show how they are integrating feedback sensors into the agent workflows.

- Mutation and structural testing are examples of computational feedback sensors that have been underused in the past, but are now having a resurgence.

- There is increased chatter among developers about the integration of LSPs and code intelligence in coding agents, which are examples of computational feedforward guides.

- I hear stories from teams at Thoughtworks about tackling architecture drift with both computational and inferential sensors, e.g. increasing API quality with a mix of agents and custom linters, or increasing code quality with a “janitor army”.

There is plenty still to figure out, not just the already mentioned behaviour harness. How do we keep a harness coherent as it grows, with guides and sensors in sync and not contradicting each other? How far can we trust agents to make sensible trade-offs when instructions and feedback signals point in different directions? If sensors never fire, is that a sign of high quality or of inadequate detection mechanisms? We need a way to evaluate harness coverage and quality, similar to what code coverage and mutation testing do for tests. Feedforward and feedback controls are currently scattered across delivery steps, and there is real potential for tooling that helps configure, sync, and reason about them as a system. Building this outer harness is emerging as an ongoing engineering practice, not a one-time configuration.