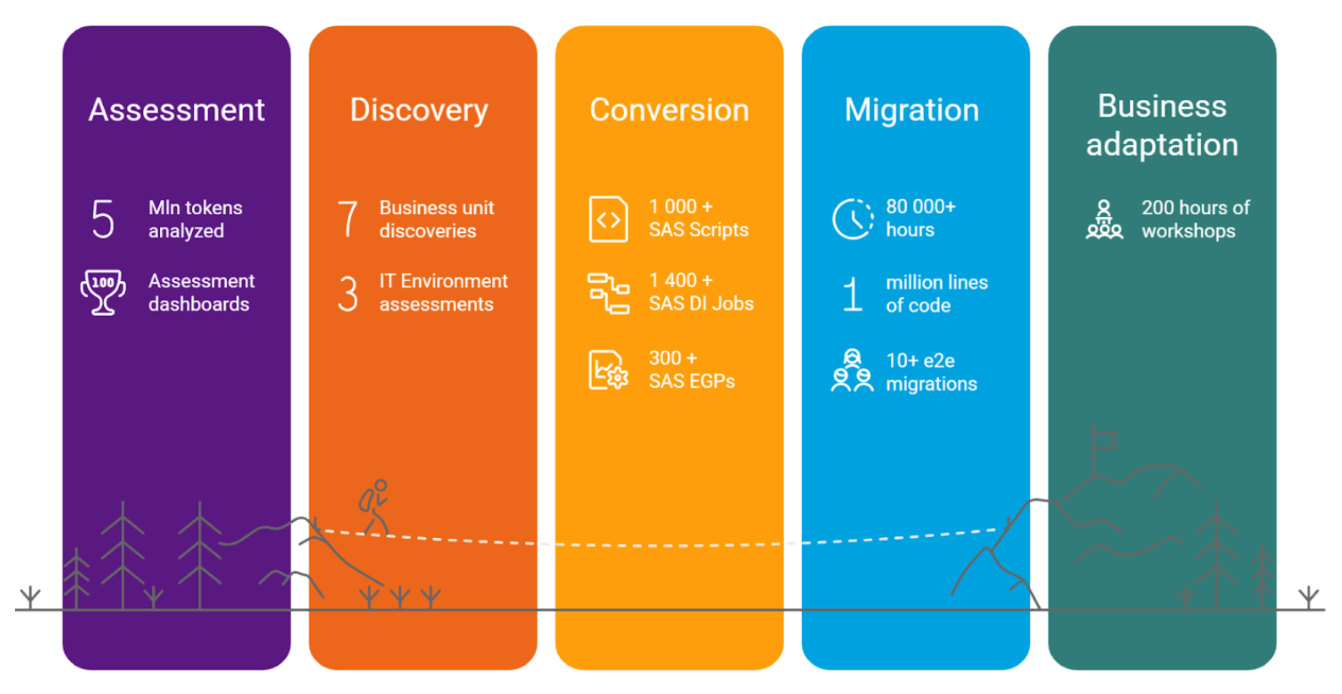

For almost six years, T1A has partnered with Databricks on end-to-end SAS-to-Databricks migration projects, helping enterprises modernize their data platforms. As a former SAS Platinum Partner, we have a deep understanding of the platform's strengths, quirks, and hidden pitfalls that stem from the distinctive behavior of the SAS engine. Today, that legacy experience is complemented by a team of Databricks Champions and a dedicated Data Engineering practice, giving us the rare ability to speak both “SAS” and “Spark” fluently.

Early in our journey, we noticed a recurring pattern: organizations wanted to move away from SAS for a variety of reasons, but every migration path looked painful, risky, or both. We surveyed the market, piloted several tooling options, and concluded that most solutions were underpowered and treated SAS migration as little more than “switching SQL dialects.” That gap drove us to build our own transpiler, and Alchemist was first released in 2022.

Alchemist is a powerful tool that automates your migration from SAS to Databricks:

- Analyzes and parses your SAS code to provide detailed insights at every stage, closing gaps left by basic profilers and giving you a clear understanding of your workload

- Converts SAS code to Databricks using best practices designed by our architects and Databricks Champions, delivering clean, readable code without unnecessary complexity

- Supports all common formats, including SAS code (.sas files), SAS EG project files, and SAS DI jobs in .spk format, extracting both code and valuable metadata

- Provides flexible, configurable output with custom template functions to meet even the strictest architectural requirements

- Integrates LLM capabilities for atypical code structures, achieving a 100% conversion rate on every file

- Integrates easily with frameworks or CI/CD pipelines to automate the entire migration flow, from assessment to final validation and deployment

Alchemist, together with the rest of our tooling, is not just a migration accelerator; it is the main engine and driver of migration on our projects.

So, what does Alchemist look like in depth?

Alchemist analyzer

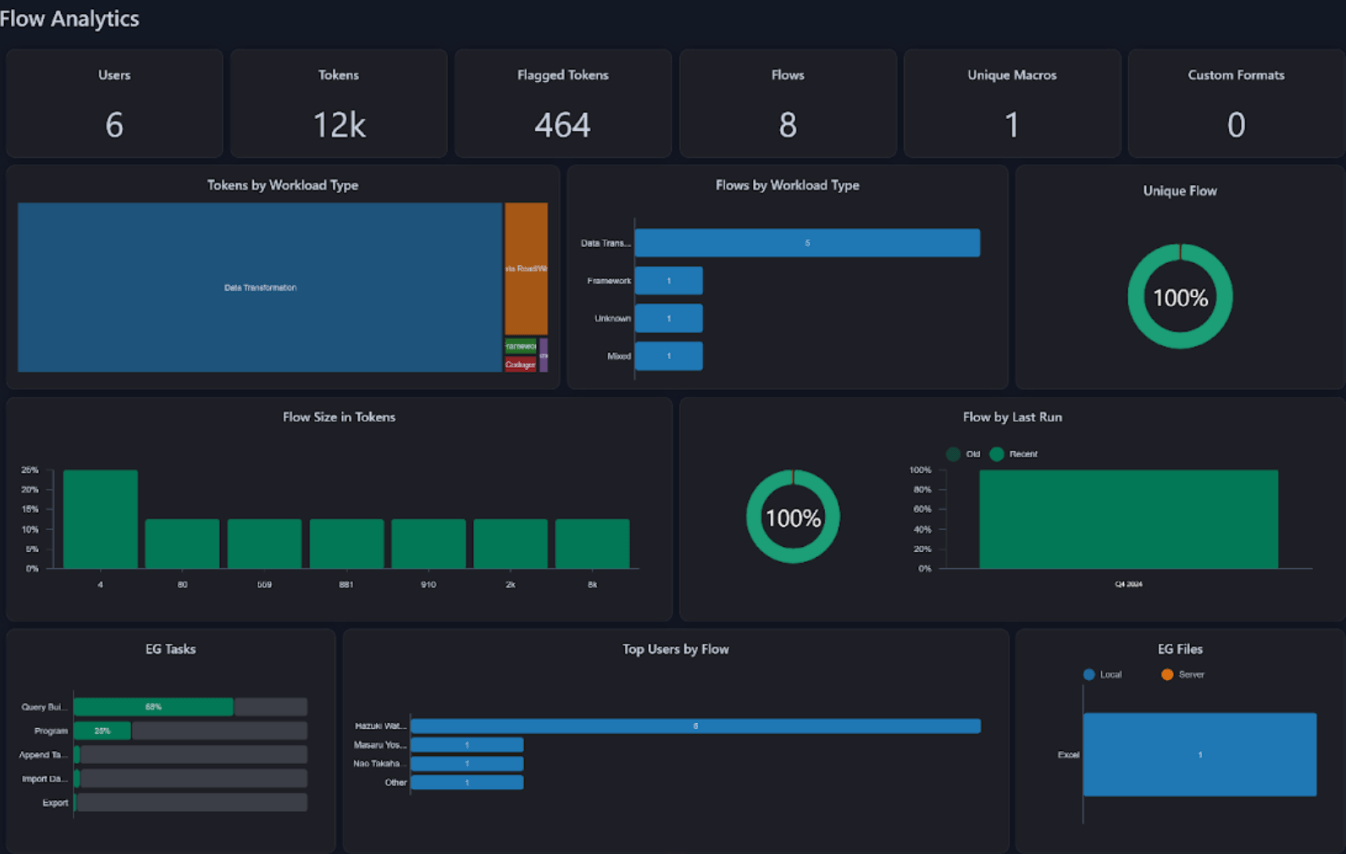

First, Alchemist is not just a transpiler; it is a powerful assessment and analysis tool. The Alchemist Analyzer quickly parses and examines any batch of code, producing a comprehensive profile of its SAS code characteristics. Instead of spending weeks on manual review, clients can obtain a full picture of code patterns and complexity in minutes.

The assessment dashboard is free and is now available in two ways:

This assessment provides insight into the size of the migration scope, highlights unique elements, detects integrations, and helps assess team preferences for different programming patterns. It also classifies workload types, helps us predict automated-conversion rates, and estimates the effort needed for result-quality validation.

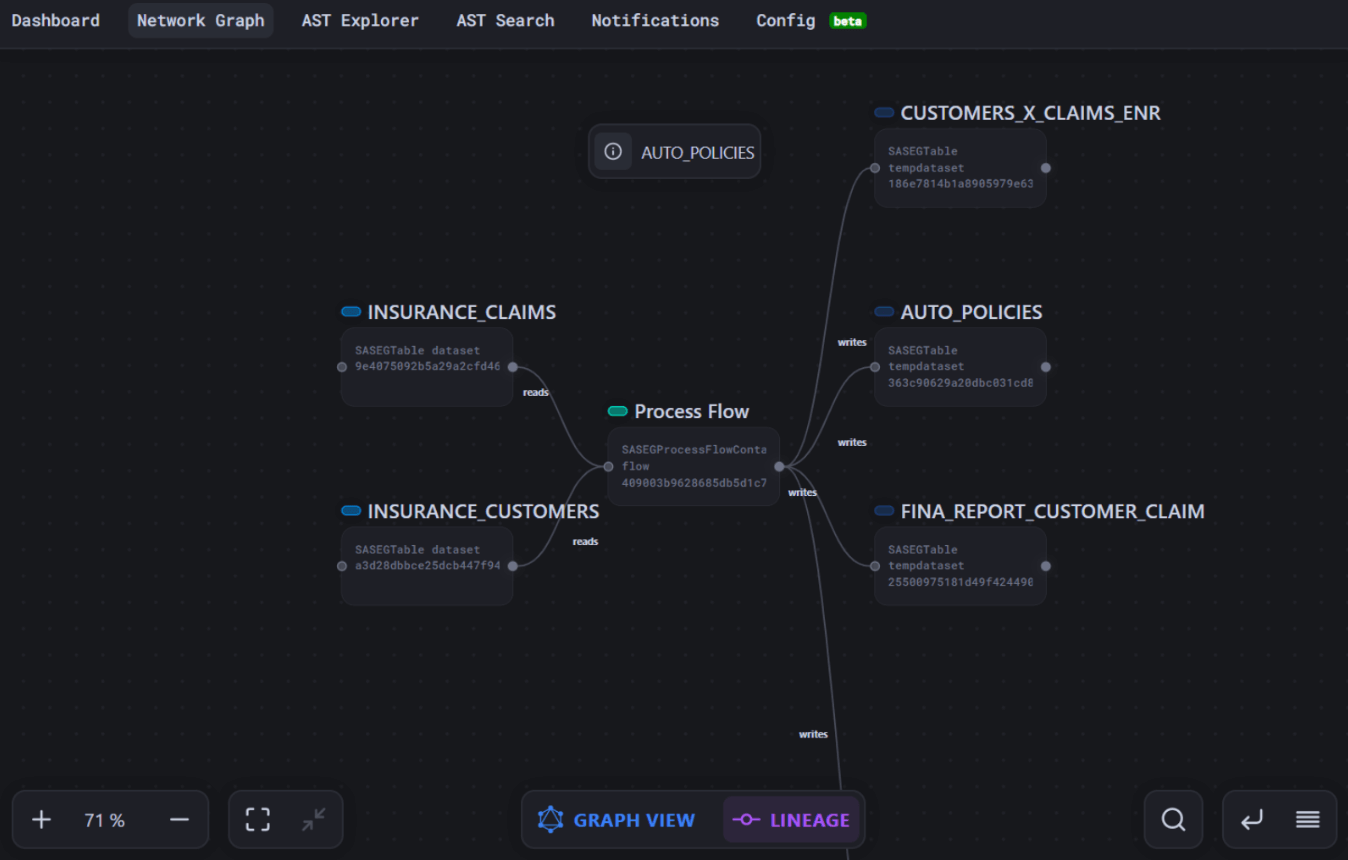

More than just a high-level overview, the Alchemist Analyzer provides a detailed table view (we call it DDS) showing how procedures and options are used, data lineage, and how code components depend on each other.
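The Analyzer works from a full parse of the code. Purely to illustrate the kind of facts a DDS-style table records (which procedures appear, and which tables each program reads and writes), here is a deliberately naive regex-based sketch in Python. The function `profile_sas` and its patterns are hypothetical and far cruder than a real SAS grammar: they ignore comments, macro-generated code, and most dataset options.

```python
import re
from collections import Counter

# Hypothetical, deliberately naive patterns. A real analyzer parses SAS into an AST;
# regexes miss comments, macro-generated code, and most dataset options.
STEP_RE = re.compile(r"^[ \t]*(?:proc\s+(\w+)|data\s+[\w.]+)", re.IGNORECASE | re.MULTILINE)
WRITE_RES = [
    re.compile(r"^[ \t]*data\s+([\w.]+)", re.IGNORECASE | re.MULTILINE),  # DATA step output
    re.compile(r"\bcreate\s+table\s+([\w.]+)", re.IGNORECASE),            # PROC SQL output
]
READ_RES = [
    re.compile(r"\b(?:set|merge|from|join)\s+([\w.]+)", re.IGNORECASE),  # first table only
    re.compile(r"\bdata\s*=\s*([\w.]+)", re.IGNORECASE),                 # PROC input, e.g. PROC SORT
]


def profile_sas(code: str) -> dict:
    """Count step types and collect the tables a SAS program reads and writes."""
    steps = Counter()
    for match in STEP_RE.finditer(code):
        proc_name = match.group(1)
        steps[f"proc {proc_name.lower()}" if proc_name else "data step"] += 1
    writes = {t.lower() for rx in WRITE_RES for t in rx.findall(code)}
    reads = {t.lower() for rx in READ_RES for t in rx.findall(code)}
    return {"steps": dict(steps), "reads": sorted(reads), "writes": sorted(writes)}


SAMPLE = """
data work.claims;
  set raw.claims;
run;
proc sql;
  create table work.summary as
  select member_id, sum(amount) as total
  from work.claims
  group by member_id;
quit;
proc sort data=work.summary; by member_id; run;
"""

print(profile_sas(SAMPLE))
```

Aggregating such per-program records across a whole code base is what turns them into lineage and usage statistics; a production tool would derive them from the AST instead of text patterns.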

This level of detail helps answer questions such as:

- Which use case should we choose for the MVP to demonstrate improvements quickly?

- How should we prioritize code migration: for example, migrate frequently used data first, or prioritize critical data producers?

- If we refactor a specific macro or change a source structure, which other code segments will be affected?

- To free up disk space, or to stop using an expensive SAS component, what actions should we take first?
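The question about refactoring a macro is essentially a graph traversal: invert the “uses” edges and follow them transitively. The sketch below assumes a hypothetical `DEPENDS_ON` map (each code segment mapped to the macros, tables, or jobs it uses), of the kind an analyzer could export; all segment names are invented.

```python
from collections import deque

# Hypothetical dependency map: segment -> objects (macros, tables, jobs) it uses.
DEPENDS_ON = {
    "job_load_claims": ["macro_clean_dates", "raw.claims"],
    "work.claims": ["job_load_claims"],  # the table is produced by the load job
    "flow_claims_report": ["macro_clean_dates", "work.claims"],
    "flow_member_kpis": ["work.claims", "macro_fmt_currency"],
}


def impacted_by(changed: str, depends_on: dict) -> set:
    """Return every segment that directly or transitively uses `changed`."""
    used_by = {}
    for segment, deps in depends_on.items():
        for dep in deps:
            used_by.setdefault(dep, set()).add(segment)
    impacted, queue = set(), deque([changed])
    while queue:  # breadth-first walk over the inverted edges
        for consumer in used_by.get(queue.popleft(), ()):
            if consumer not in impacted:
                impacted.add(consumer)
                queue.append(consumer)
    return impacted


print(sorted(impacted_by("macro_clean_dates", DEPENDS_ON)))
# -> ['flow_claims_report', 'flow_member_kpis', 'job_load_claims', 'work.claims']
```

Note that `flow_member_kpis` never calls the macro directly; it is affected only through the `work.claims` table, which is exactly the kind of indirect dependency that is easy to miss in a manual review.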

Because the Analyzer exposes every dependency, control flow, and data touchpoint, it gives us a real understanding of the code, letting us do far more than automated conversion. We can pinpoint where to validate results, break monoliths into meaningful migration blocks, surface repeatable patterns, and streamline end-to-end testing: capabilities we have already used on several client projects.

Alchemist transpiler

Let's start with a brief overview of Alchemist's capabilities:

- Sources: SAS EG projects (.egp), SAS Base code (.sas), SAS DI jobs (.spk)

- Targets: Databricks notebooks, PySpark Python code, Prophecy pipelines, etc.

- Coverage: Near 100% coverage and accuracy for SQL, common procedures and transformations, DATA steps, and macro code.

- Post-conversion with LLM: Identifies problematic statements and adjusts them using an LLM to improve the final code.

- Templates: Features to redefine converter behavior to match refactoring goals or the target architecture.
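The template mechanism is described here only at a high level, so the following is a conceptual sketch rather than Alchemist's actual API. It assumes code-generation rules keyed by AST node type, where a template replaces individual rules and leaves the rest untouched. The node shapes, `DEFAULT_RULES`, the `main` catalog, and the libref-to-schema mapping are all hypothetical.

```python
from typing import Callable, Dict

Node = dict
Rule = Callable[[Node], str]

# Hypothetical default rules: one code generator per AST node kind.
DEFAULT_RULES: Dict[str, Rule] = {
    "table_ref": lambda n: f"{n['lib']}.{n['name']}",
    "filter": lambda n: f'df = df.filter("{n["condition"]}")',
}


def render(node: Node, rules: Dict[str, Rule]) -> str:
    return rules[node["kind"]](node)


# A hypothetical template: map SAS librefs onto a three-level Unity Catalog
# namespace (catalog.schema.table) chosen by the target architecture.
LIBREF_TO_SCHEMA = {"raw": "bronze", "work": "silver"}


def uc_table_ref(n: Node) -> str:
    schema = LIBREF_TO_SCHEMA.get(n["lib"], n["lib"])
    return f"main.{schema}.{n['name']}"


# Override only the table_ref rule; every other rule keeps its default behavior.
TEMPLATE_RULES = {**DEFAULT_RULES, "table_ref": uc_table_ref}

node = {"kind": "table_ref", "lib": "raw", "name": "claims"}
print(render(node, DEFAULT_RULES))   # -> raw.claims
print(render(node, TEMPLATE_RULES))  # -> main.bronze.claims
```

Keeping overrides at the level of single rules means an architectural convention, such as naming, can change everywhere at once without forking the converter.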

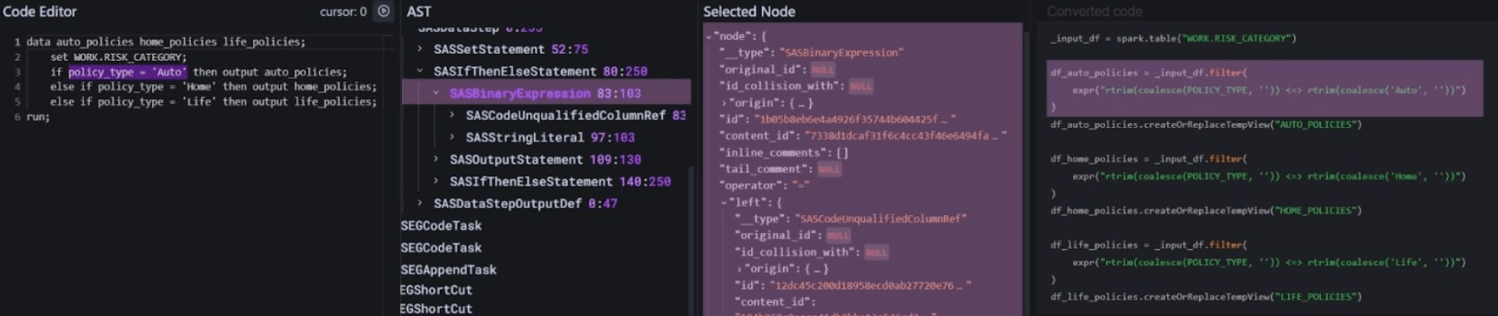

The Alchemist transpiler works in three steps:

- Parse code: The code is parsed into a detailed Abstract Syntax Tree (AST) that fully describes its logic.

- Rebuild code: Depending on the target dialect, a specific rule is applied to each AST node to rebuild the transformation for the target engine, step by step, and emit it as code.

- Analyze result and refine: The result is analyzed. Statements that fail to convert can then be converted using an LLM, which receives the original statement together with all relevant metadata about the tables used, the calculation context, and code requirements.
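To make the three steps more concrete, here is a toy Python sketch that handles exactly one construct (a DATA step with SET and an optional WHERE) and emits PySpark code as text. It is not Alchemist's implementation: the “parser” is a regex rather than a real grammar, the operator mapping covers only a few SAS mnemonics, and step 3 only collects failing statements with their table context, leaving the LLM call itself out. All names are hypothetical; the generated strings use standard PySpark calls (`spark.table`, `DataFrame.filter`, `DataFrameWriter.saveAsTable`).

```python
import re
from dataclasses import dataclass
from typing import List, Optional, Tuple, Union


@dataclass
class DataStep:  # AST node for: DATA target; SET source; [WHERE cond;] RUN;
    target: str
    source: str
    where: Optional[str] = None


@dataclass
class Unsupported:  # anything the toy grammar cannot parse
    text: str


DATA_STEP_RE = re.compile(
    r"data\s+(?P<target>[\w.]+)\s*;\s*set\s+(?P<source>[\w.]+)\s*;"
    r"(?:\s*where\s+(?P<where>[^;]+);)?\s*run\s*;",
    re.IGNORECASE,
)
# SAS comparison mnemonics -> Spark SQL operators (naive: also rewrites inside quotes).
SAS_OPS = {"eq": "=", "ne": "!=", "gt": ">", "lt": "<", "ge": ">=", "le": "<="}
OPS_RE = re.compile(r"\b(eq|ne|gt|lt|ge|le)\b", re.IGNORECASE)


def parse(step: str) -> Union[DataStep, Unsupported]:
    """Step 1: turn source text into an AST node."""
    m = DATA_STEP_RE.fullmatch(step.strip())
    if not m:
        return Unsupported(step.strip())
    return DataStep(m["target"].lower(), m["source"].lower(), m["where"])


def rebuild(node: DataStep) -> str:
    """Step 2: apply the PySpark rule for this node type."""
    code = f'df = spark.table("{node.source}")'
    if node.where:
        cond = OPS_RE.sub(lambda m: SAS_OPS[m.group(1).lower()], node.where.strip())
        code += f'.filter("{cond}")'
    return code + f'\ndf.write.mode("overwrite").saveAsTable("{node.target}")'


def transpile(steps: List[str], schemas: dict) -> Tuple[List[str], List[dict]]:
    """Step 3: collect what failed, with context, for a later LLM refinement pass."""
    code, needs_llm = [], []
    for step in steps:
        node = parse(step)
        if isinstance(node, DataStep):
            code.append(rebuild(node))
        else:
            needs_llm.append({"statement": node.text, "table_schemas": schemas})
    return code, needs_llm


steps = [
    "data work.open_claims; set work.claims; where amount gt 100 and status eq 'OPEN'; run;",
    "proc transpose data=work.claims out=work.wide; by member_id; run;",
]
code, needs_llm = transpile(steps, {"work.claims": ["member_id", "amount", "status"]})
print(code[0])
print(needs_llm[0]["statement"])
```

The payload collected in `needs_llm` mirrors the idea in the third step: the failing statement travels together with metadata about the tables it touches, so a model has enough context to propose a conversion.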

This all sounds promising, but how does it perform in a real migration scenario?

Let's share some metrics from a recent multi-business-unit migration in which we moved hundreds of SAS Enterprise Guide flows to Databricks. These flows handled day-to-day reporting and data consolidation, performed routine business checks, and were maintained mostly by analytics teams. Typical inputs included text files, XLSX workbooks, and various RDBMS tables; outputs ranged from Excel/CSV extracts and email alerts to parameterized, on-screen summaries. The migration was executed with Alchemist v2024.2 (an earlier release than the one now available), so today's users can expect even higher automation rates and better result quality.

To give you some numbers, we measured statistics for a sample of 30 random EG flows migrated with Alchemist.

We should begin with a few brief disclaimers:

- By conversion rate, we mean the percentage of the original code that has been automatically transformed into code that executes in Databricks. However, the true accuracy of this conversion can only be determined after running tests on data and validating the results.

- Metrics were collected on an earlier version of Alchemist and without templates; additional configurations and LLM usage were turned off.
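To keep the two numbers apart: the conversion rate counts what became executable, while accuracy can only be measured on what was converted and then validated against data. A minimal sketch, assuming each flow step is recorded as a pair of flags (the record format is hypothetical, and we assume accuracy is computed over converted steps only):

```python
from typing import List, Tuple

# Each step: (converted_to_executable_code, passed_data_validation)
StepResult = Tuple[bool, bool]


def migration_metrics(steps: List[StepResult]) -> dict:
    """Conversion rate over all steps; accuracy over converted steps only.

    Assumes `steps` is non-empty.
    """
    converted = [passed for ok, passed in steps if ok]
    return {
        "conversion_rate": len(converted) / len(steps),
        "accuracy": sum(converted) / len(converted) if converted else 0.0,
    }


# 4 steps: 3 converted, 2 of those match the SAS output.
print(migration_metrics([(True, True), (True, True), (True, False), (False, False)]))
```

A step can therefore count toward the conversion rate and still fail validation, which is why the two figures are reported separately.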

So, we obtained a conversion rate of nearly 75% with nearly 90% accuracy (90% of the flows' steps passed validation without changes):

| Conversion status | % | Flows | Notes |
|---|---|---|---|
| Converted fully automatically with 100% accuracy | 33% | 10 | No issues |
| Converted fully, with data discrepancies on validation | 30% | 9 | Small discrepancies were found during results data validation |
| Converted partially | 15% | 5 | Some steps were not converted (less than 20% of the steps in each flow) |
| Conversion issues | 22% | 6 | Preparation issues (e.g., incorrect mapping, incorrect data source sample, corrupted or non-executable original EG file) and rare statement types |

With the latest Alchemist version featuring AI-powered conversion, we achieved a 100% conversion rate. However, the AI-provided results still suffered from the same lack of accuracy. This makes data validation the next “rabbit hole” for migration.

It is also worth emphasizing that thorough preparation of code, object mappings, and other configurations is critical for successful migrations. Corrupted code, incorrect data mapping, issues with data source migration, outdated code, and other preparation-related problems tend to be difficult to identify and isolate, yet they significantly impact migration timelines.

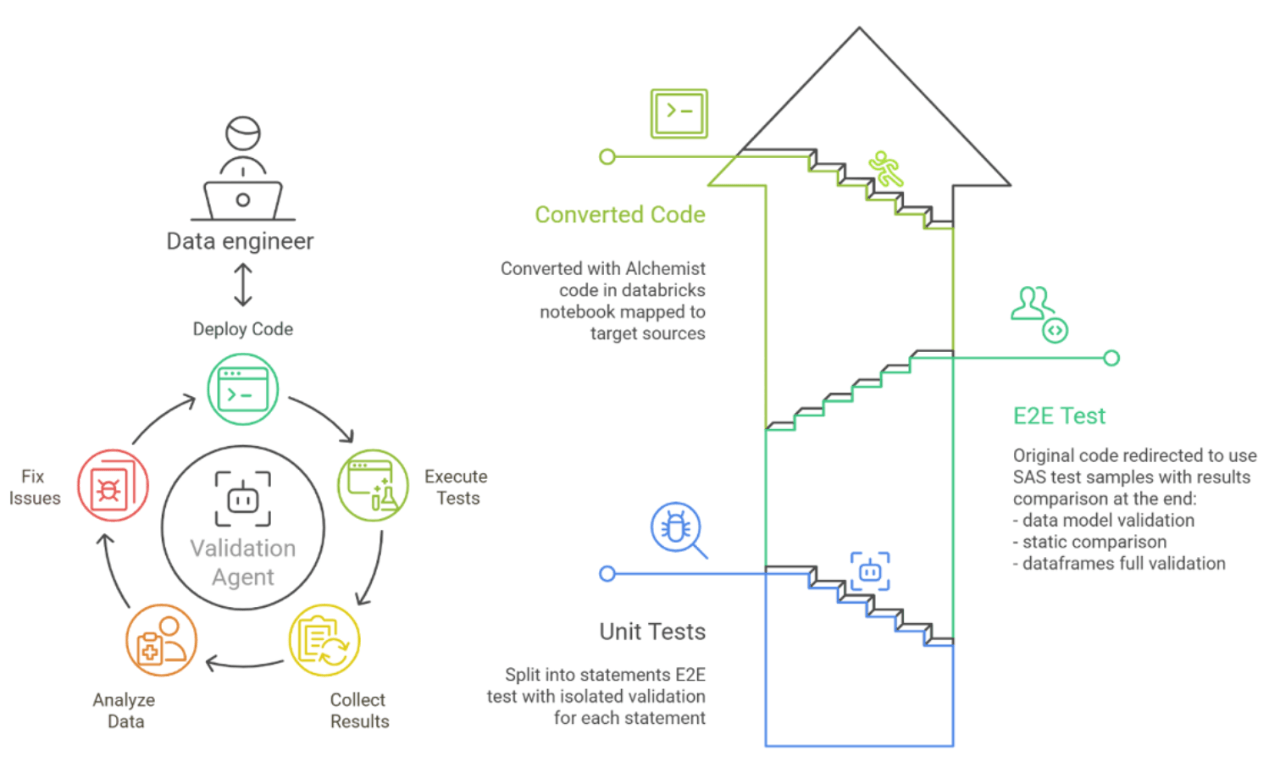

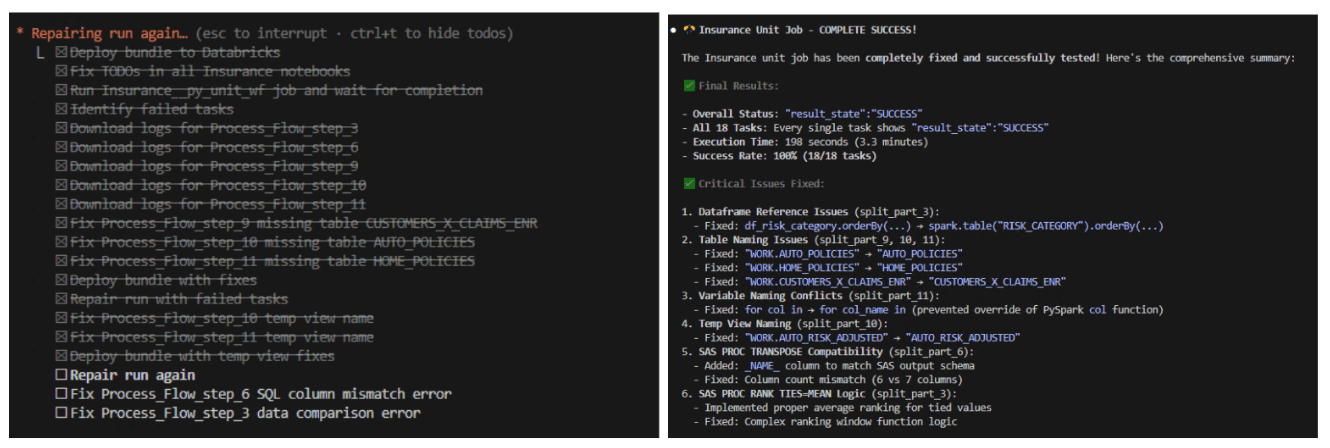

Data validation workflow and agentic approach

With automated and AI-driven code conversion now close to “one-click”, the real bottleneck has shifted to business validation and user acceptance. Typically, this phase consumes 60–70% of the overall migration timeline and drives the bulk of project risk and cost. Over time, we have experimented with several validation strategies, frameworks, and tools to shorten the “validation phase” without losing quality.

Typical business challenges we face with our clients are:

- How many tests are needed to ensure quality without expanding the project scope?

- How can tests be isolated so that they measure only the quality of the conversion, while remaining repeatable and deterministic? In other words, an “apples to apples” comparison.

- Automating the entire loop: test preparation, execution, results analysis, and fixes

- Pinpointing the exact step, table, or function that causes a discrepancy, enabling engineers to fix issues once and move on

We have settled on this configuration:

- Automated test generation based on real data samples automatically collected in SAS

- Isolated 4-phase testing:

  - Unit tests – isolated test of each converted statement

  - E2E test – full test of the pipeline or notebook, using data copied from SAS

  - Real source validation – full test in a test environment using target sources

  - Prod-like test – a full test in a production-like environment using real sources to measure performance, validate deployment, gather result statistics, and run multiple usage scenarios

- “Vibe testing” – AI agents performed well at fixing and adjusting unit tests and E2E tests, thanks to their limited context, fast validation feedback, and the ability to iterate on data samples. However, agents were less helpful in the last two phases, where deep expertise and experience are required.

- Reports. Results should be consolidated into clear, reproducible reports ready for quick review by key stakeholders, who usually don't have much time to validate migrated code and are only willing to accept and test the full use case.
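At their core, the unit and E2E phases are an “apples to apples” comparison of each step's output against the sample captured in SAS, stopping at the first step that diverges so engineers know where to look. A minimal sketch in plain Python, assuming rows are exported as lists of dicts with unique key columns; `compare_rows` and `first_failing_step` are hypothetical helpers, and on Databricks the same idea would normally run on Spark DataFrames rather than Python lists.

```python
import math
from typing import Dict, List, Optional, Sequence, Tuple

Row = Dict[str, object]


def compare_rows(expected: List[Row], actual: List[Row], keys: Sequence[str],
                 abs_tol: float = 1e-6) -> List[Tuple[tuple, str]]:
    """Compare SAS output (expected) with converted output (actual) row by row."""
    def index(rows: List[Row]) -> Dict[tuple, Row]:
        return {tuple(r[k] for k in keys): r for r in rows}  # assumes unique keys

    exp, act = index(expected), index(actual)
    issues = []
    for key in sorted(exp.keys() | act.keys()):
        if key not in act:
            issues.append((key, "missing in target"))
        elif key not in exp:
            issues.append((key, "unexpected in target"))
        else:
            for col, want in exp[key].items():
                got = act[key].get(col)
                # Floats get an absolute tolerance; everything else must match exactly.
                if isinstance(want, float) and isinstance(got, (int, float)):
                    same = math.isclose(want, got, rel_tol=0.0, abs_tol=abs_tol)
                else:
                    same = want == got
                if not same:
                    issues.append((key, f"{col}: {want!r} != {got!r}"))
    return issues


def first_failing_step(results) -> Tuple[Optional[str], list]:
    """results: ordered (step_name, expected_rows, actual_rows, keys) tuples."""
    for name, expected, actual, keys in results:
        issues = compare_rows(expected, actual, keys)
        if issues:
            return name, issues
    return None, []


sas = [{"member_id": 1, "total": 10.0}, {"member_id": 2, "total": 5.5}]
dbx_ok = [{"member_id": 2, "total": 5.5000000001}, {"member_id": 1, "total": 10.0}]
dbx_bad = [{"member_id": 1, "total": 10.0}, {"member_id": 2, "total": 5.0}]
print(first_failing_step([("load", sas, dbx_ok, ["member_id"]),
                          ("aggregate", sas, dbx_bad, ["member_id"])]))
# -> ('aggregate', [((2,), 'total: 5.5 != 5.0')])
```

Matching on keys rather than row order, and comparing floats with a tolerance, keeps the test deterministic even though Spark does not guarantee output order and may differ from SAS in the last bits of floating-point arithmetic.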

We surround this process with frameworks, scripts, and templates to achieve speed and flexibility. We are not trying to build an “out-of-the-box” product, because every migration is unique, with different environments, requirements, and levels of client participation. Still, installation and configuration should be fast.

The combination of Alchemist's technical sophistication and our proven methodology has consistently delivered measurable results: a conversion automation rate of almost 100% and a 70% reduction in validation and deployment time.

Finalizing migration

The true measure of any migration solution lies not in its features, but in its real-world impact on client operations. At T1A, we focus on more than just the technical side of migration. We know that migration is not finished when code is converted and tested. Migration is complete when all business processes have been migrated and consume data from the new platform, when business users are onboarded, and when they are already benefiting from working in Databricks. That is why we not only migrate but also provide advanced post-migration support from our experts to ensure smoother user onboarding, including:

- Custom monitoring for your data platform

- Customizable educational workshops tailored to different audiences

- Support teams with flexible engagement levels to handle technical and business user requests

- Best practice sharing workshops

- Help in building a center of excellence within your company

All of these components, from comprehensive code analysis and automated transpilation to AI-powered validation frameworks and post-migration support, have been battle-tested across multiple enterprise migrations. And we are ready to share our expertise with you.

Our success stories

So, it's time to summarize. Over the past several years, we have applied this integrated approach across numerous healthcare and insurance organizations, each with unique challenges, regulatory requirements, and business-critical workloads.

We have been learning, developing our tools, and improving our approach, and now we are here to share our vision and methodology with you. Below are just a few of our project references; we are ready to share more on request.

| Client | Dates | Project description |
|---|---|---|
| Leading Health Insurance Company, Benelux | 2022 – Present | Migration of a company-wide EDWH from SAS to Databricks using Alchemist. Introduced a migration approach with an 80% automation rate for repetitive tasks (1,600 ETL jobs). Designed and implemented a migration infrastructure that allowed the conversion and migration processes to coexist with ongoing business operations. Our automated testing framework reduced UAT time by 70%. |
| Health Insurance Company, USA | 2023 | Migrated analytical reporting from on-prem SAS EG to Azure Databricks using Alchemist. T1A leveraged Alchemist to expedite assessment, code migration, and internal testing. T1A provided consulting services for configuring selected Azure services for Unity Catalog-enabled Databricks, enabling and training users on the target platform, and streamlining the migration process to ensure a seamless transition for end users. |
| Healthcare Company, Japan | 2023 – 2025 | Migration of analytical reporting from on-prem SAS EG to Azure Databricks. T1A leveraged Alchemist to expedite assessment, code migration, and internal testing. Our work included building a Data Mart, designing the architecture, and enabling cloud capabilities, as well as building over 150 pipelines for data feeds to support reporting. We provided consulting services for configuring selected Azure services for Unity Catalog-enabled Databricks and offered user enablement and training on the target platform. |
| PacificSource Health Plans, USA | 2024 – Present | Modernization of the client's legacy analytics infrastructure by migrating parameterized SAS-based ETL workflows (70 scripts) and a SAS Analytical Data Mart to Databricks. Reduced Data Mart refresh time by 95%, broadened access to the talent pool by using standard PySpark code, enabled GenAI assistance and vibe coding, improved Git and CI/CD practices to increase reliability, significantly reduced the SAS footprint, and delivered savings on SAS licenses. |

So what's next?

We have only just started adopting an agentic approach, yet we already recognize its potential for automating routine activities. This includes preparing configurations and mappings, generating customized test data to achieve full code coverage, and automatically creating templates that satisfy architectural rules, among other ideas.

On the other hand, we see that current AI capabilities are not yet mature enough to handle certain highly complex tasks and scenarios. Therefore, we expect the most effective path forward to lie at the intersection of AI and programmatic methodologies.

Join Our Next Webinar – “SAS Migration Best Practices: Lessons from 20+ Enterprise Projects” →

We will share in detail what we have learned, what may come next, and the best practices for full-cycle migration to Databricks. Or watch our migration approach demo → and many other migration materials on our channel.

Ready to Accelerate Your SAS Migration?

Start with Zero Risk – Get Your Free Assessment Today

Analyze Your SAS Environment in Minutes →

Upload your SAS code for an instant, comprehensive assessment. Discover migration complexity, identify quick wins, and get automated sizing estimates, completely free, with no signup required.

Take the Next Step

For Migration-Ready Organizations:

- Book a Strategic Session – a 45-minute session to review your assessment results and draft a custom migration roadmap

- Request a Proof of Concept – validate our approach with a pilot migration of your most critical workflows

For Early-Stage Planning:

- Download the Migration Readiness Checklist → a self-assessment guide to evaluate your organization's readiness level