Anthropic today is releasing a preview of Claude Code Review, which uses agents to catch bugs in every pull request. It is available in research preview to Team and Enterprise customers.

With AI tools generating code at a remarkable pace, code reviews have become a bottleneck. As a result, most PRs get light scans instead of deeper reviews, which can lead to bugs entering production.

In the announcement, Anthropic explained that when a pull request is opened, the agents look for and verify bugs, filter out false positives, and rank the remaining findings by severity. The results appear in the PR as a high-signal overview comment, along with in-line comments for specific bugs. The more complex the changes, the more agents are deployed, so complex PRs get a deeper read than trivial ones. The company said that, based on its testing, the average code review takes approximately 20 minutes.
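To make the find, verify, filter, rank flow described above concrete, here is a minimal Python sketch. It is not Anthropic's implementation: the `Finding` type, the `Severity` levels, the `verified` flag, and the sample data are all hypothetical names invented for illustration, and the real system's data model has not been published in this article.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Hypothetical severity scale; higher means more serious.
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class Finding:
    path: str
    line: int
    message: str
    severity: Severity
    verified: bool  # assumed flag: True if a verification pass confirmed the bug


def rank_findings(candidates: list[Finding]) -> list[Finding]:
    """Drop unverified candidates (likely false positives), then sort most severe first."""
    confirmed = [f for f in candidates if f.verified]
    # Ties on severity are broken by file path and line number for stable output.
    return sorted(confirmed, key=lambda f: (-f.severity, f.path, f.line))


# Made-up candidate findings for a single pull request.
candidates = [
    Finding("api/auth.py", 42, "token expiry is never checked", Severity.HIGH, True),
    Finding("api/auth.py", 10, "unused import", Severity.LOW, True),
    Finding("db/query.py", 7, "possible SQL injection", Severity.HIGH, False),
    Finding("ui/form.py", 88, "off-by-one in pagination", Severity.MEDIUM, True),
]

ranked = rank_findings(candidates)
for f in ranked:
    print(f"[{f.severity.name}] {f.path}:{f.line} {f.message}")
```

In this sketch the unverified finding is discarded before ranking, which mirrors the article's point that false positives are filtered out before anything is posted to the PR.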

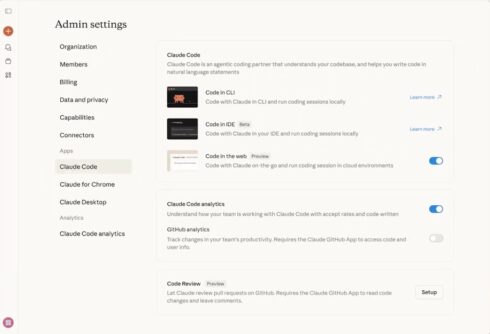

Claude Code Review is a more in-depth solution than the Claude Code GitHub Action. For developers, no configuration is required; it starts automatically every time a PR is opened. Admins must enable Code Review in their Claude Code settings, install the GitHub App, and select the repositories they would like to run reviews on, the company explained.

“We run Code Review on nearly every PR at Anthropic,” the company wrote in a blog post announcing the release. “Before, 16% of PRs got substantive review comments. Now 54% do. It won’t approve PRs (that’s still a human call), but it closes the gap so reviewers can actually cover what’s shipping.”

Reviews are billed on token usage and generally average $15–25, scaling with PR size and complexity, the company wrote.
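For a rough sense of what that could mean for a team's budget, the following back-of-the-envelope Python sketch multiplies a PR count by the quoted average range. The function name and the figure of 200 PRs per month are hypothetical, and because billing is based on token usage, actual costs will vary with PR size and complexity rather than follow a flat per-review price.

```python
def monthly_review_cost(
    prs_per_month: int, low: float = 15.0, high: float = 25.0
) -> tuple[float, float]:
    """Estimate a monthly cost range from the quoted $15-25 average per review.

    Assumes every PR is reviewed and each review falls within the quoted range.
    """
    return prs_per_month * low, prs_per_month * high


# Hypothetical team opening 200 PRs per month.
low_total, high_total = monthly_review_cost(200)
print(f"Estimated monthly cost: ${low_total:,.0f} to ${high_total:,.0f}")
# prints "Estimated monthly cost: $3,000 to $5,000"
```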

See the docs for more information.