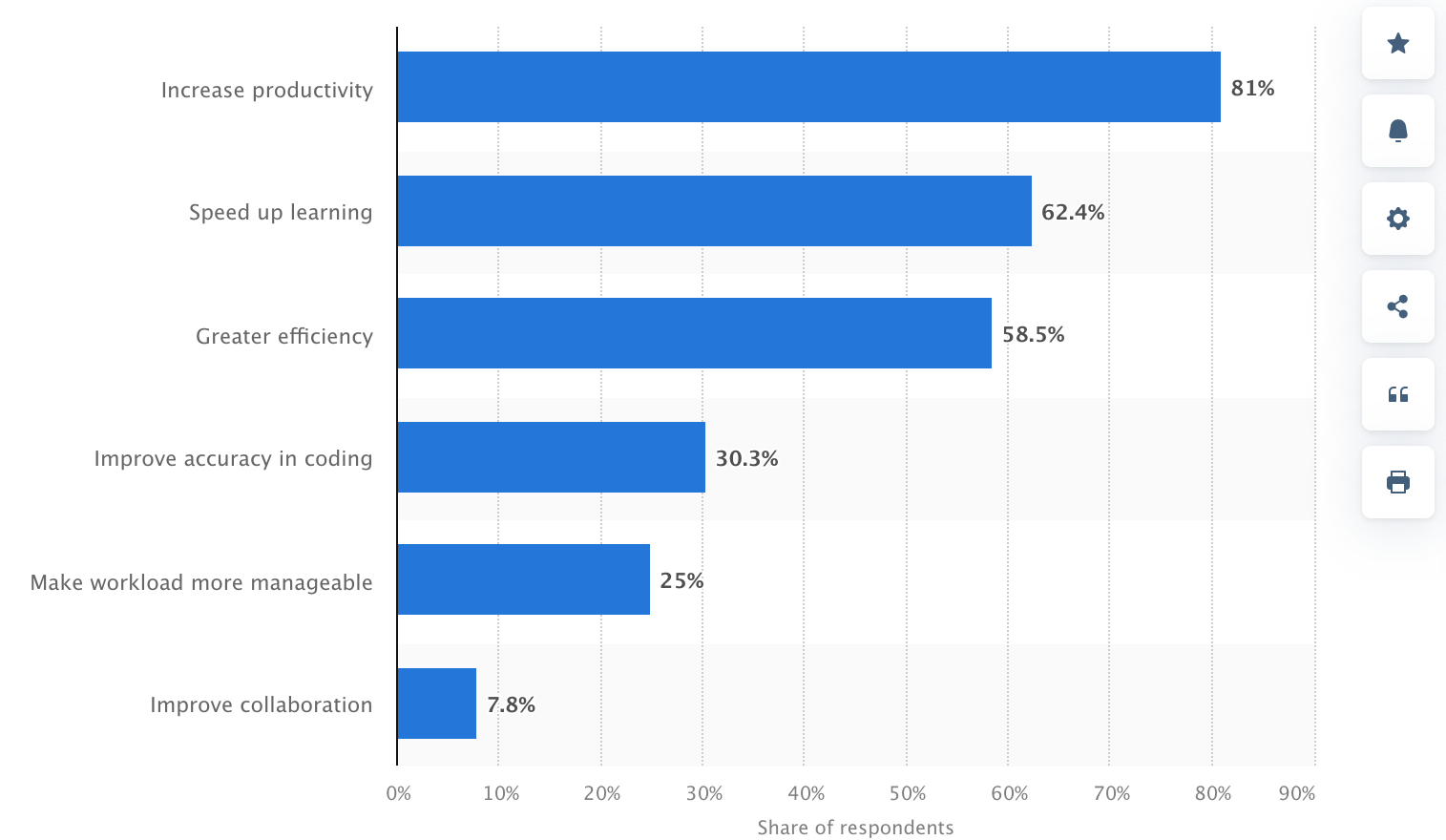

Over the last two to three years, artificial intelligence (AI) agents have become more embedded in the software development process. According to Statista, three out of four developers, or around 75%, use GitHub Copilot, OpenAI Codex, ChatGPT, and other generative AI in their daily work.

However, while AI shows promise in handling software development tasks, it also creates a wave of legal uncertainty.

Potential of Artificial Intelligence in Managing Complex Tasks, Statista

Who owns the code written by an AI? What happens if AI-generated code infringes on someone else’s intellectual property? And what are the privacy risks when business data is processed through AI models?

To answer these pressing questions, we’ll explain how AI-assisted development is viewed from a legal standpoint, especially in outsourcing, and cover everything companies should understand before bringing these tools into their workflows.

What Is AI in Custom Software Development?

The market for AI technologies is huge, amounting to around $244 billion in 2025. AI is commonly divided into machine learning and its subfield, deep learning, which in turn power areas such as natural language processing, computer vision, and more.

In software development, AI tools are intelligent systems that can assist with or automate parts of the programming process. They can suggest lines of code, complete functions, and even generate entire modules based on the context or prompts provided by the developer.

In outsourcing projects, where speed matters as much as quality, AI technologies are quickly becoming staples of the development environment.

They boost productivity by taking over repetitive tasks, cut the time spent on boilerplate code, and support developers who may be working in unfamiliar frameworks or languages.

Benefits of Using Artificial Intelligence, Statista

How AI Tools Can Be Integrated into Outsourcing Projects

In 2025, AI has become a sought-after skill in nearly every technical profession.

While the occasional bartender or plumber may not need the same level of AI mastery, AI skills have clearly become a must in a software developer’s toolkit, because in software development outsourcing, AI tools can be used in many ways:

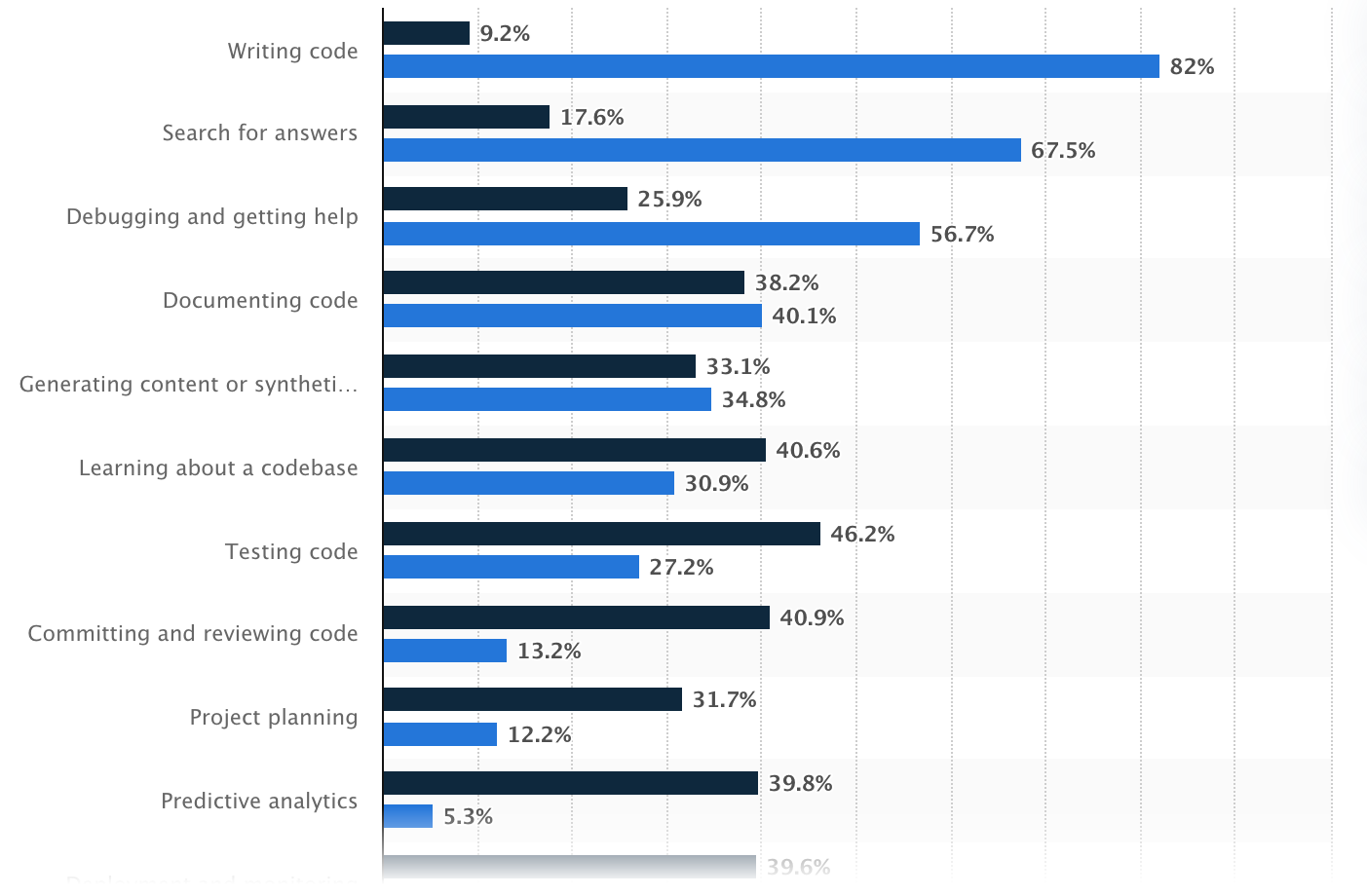

- Code Generation: GitHub Copilot and other AI tools assist outsourced developers by offering hints or auto-completing functions as they code.

- Bug Detection: Instead of waiting for human verification during testing, AI can flag errors or risky code so teams can fix flaws before they turn into serious problems.

- Writing Tests: AI can generate test cases directly from the code, making testing faster and more thorough.

- Documentation Support: AI can add comments and draft documentation explaining what the code does.

- Multi-language Support: If the project needs to switch programming languages, AI can help translate or rewrite segments of code, reducing the need for specialized knowledge of every language involved.

Most popular uses of AI in development, Statista

Legal Implications of Using AI in Custom Software Development

AI tools can be extremely helpful in software development, especially when outsourcing. But using them also raises legal questions that businesses need to be aware of, mainly around ownership, privacy, and accountability.

Intellectual Property (IP) Issues

When developers use AI tools like GitHub Copilot, ChatGPT, or other code-writing assistants, it’s natural to ask: who actually owns the code that gets written? This is one of the trickiest legal questions right now.

At present, there’s no clear global consensus. Generally, the AI itself doesn’t own anything, and the developer who uses the tool is considered the “creator”; however, this varies by jurisdiction.

The catch is that AI tools learn from vast amounts of existing code on the internet. Sometimes they generate code that is very similar (or even identical) to the code they were trained on, including code from open-source projects.

If that code is copied too closely and it’s under a strict open-source license, you could run into legal problems, especially if you didn’t realize it and didn’t follow the license terms.

Outsourcing can make this even more complicated. If you’re working with an outsourcing team and they use AI tools during development, your contracts need to be especially clear on:

- Who owns the final code?

- What happens if the AI tool accidentally reuses licensed code?

- Is the outsourced team allowed to use AI tools at all?

To stay on the safe side, you can:

- Make sure contracts clearly state who owns the code.

- Double-check that the code doesn’t violate any licenses.

- Consider using tools that run locally, or limit what the AI can see, to avoid leaking or copying restricted content.
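As a first pass at the license double-check above, a team could scan AI-generated snippets for explicit license markers before accepting them. The Python sketch below is a minimal illustration under stated assumptions: the `RESTRICTIVE_MARKERS` list and the `find_license_markers` helper are hypothetical, and a check like this only catches code that still carries a license header or SPDX tag. Verbatim copies without headers need dedicated code-provenance or similarity-scanning tools, plus legal review.

```python
# Hypothetical policy: text fragments that usually indicate copyleft or
# otherwise restrictive licenses. Adjust the list to your own legal guidance.
RESTRICTIVE_MARKERS = {
    "GPL family": [
        "gnu general public license",
        "gnu affero general public license",
        "gnu lesser general public license",
        "spdx-license-identifier: gpl",
        "spdx-license-identifier: agpl",
        "spdx-license-identifier: lgpl",
    ],
    "MPL": ["mozilla public license", "spdx-license-identifier: mpl"],
}


def find_license_markers(snippet: str) -> list[str]:
    """Return the license families whose markers appear in the snippet (case-insensitive)."""
    text = snippet.lower()
    return sorted(
        family
        for family, markers in RESTRICTIVE_MARKERS.items()
        if any(marker in text for marker in markers)
    )


if __name__ == "__main__":
    generated = '''
    # SPDX-License-Identifier: GPL-3.0-or-later
    def add(a, b):
        return a + b
    '''
    print(find_license_markers(generated))  # ['GPL family']
```

A non-empty result would simply route the snippet to a human for a closer license review rather than block it automatically.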

Data Security and Privacy

When using AI tools in software development, especially in outsourcing, another major consideration is data privacy and security. So what’s the risk?

Most AI tools, such as ChatGPT and Copilot, run in the cloud, which means the information developers enter may be transmitted to external servers.

If developers copy and paste proprietary code, login credentials, or business data into these tools, that information could be retained, reused, and later exposed. The situation becomes even worse if:

- You’re sharing confidential business information

- Your project involves customer or user details

- You’re in a regulated industry such as healthcare or finance

So what does the law say about it? Different countries have different regulations, but the most notable are:

- GDPR (Europe): In simple terms, GDPR protects personal data. If you collect data from people in the EU, you must explain what you’re collecting and why you need it, and you need a lawful basis for processing it, such as their consent. People can ask to see their data, correct anything that’s wrong, or have it deleted.

- HIPAA (US, healthcare): HIPAA covers private health information and medical records. Under HIPAA, you can’t simply paste anything related to patient records into an AI tool or chatbot, especially one that runs online. Also, if you work with other companies (outsourcing teams or software vendors) that handle this data, they must follow the same rules and sign a Business Associate Agreement (BAA) to make it all legal.

- CCPA (California): CCPA is a privacy law that gives people more control over their personal information. If your business collects data from California residents, you have to tell them what you’re collecting and why. People can ask to see their data, have it deleted, or stop you from selling or sharing it. Even if your company is based elsewhere, CCPA can still apply if you process data from California residents and meet its revenue or data-volume thresholds.

The obvious next question is how to protect data. First, don’t put anything sensitive (passwords, customer records, or private company data) into public AI tools unless you’re sure they’re safe.
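One way to back up the “nothing sensitive” rule is to sanitize prompts before they reach any cloud-hosted assistant. The Python sketch below assumes a small, hypothetical set of regex rules (e-mail addresses, AWS-style access key IDs, and `password`/`secret`/`token` assignments); pattern-based redaction will miss many kinds of sensitive data, so treat it as a safety net rather than a substitute for a data-handling policy.

```python
import re

# Hypothetical redaction rules, applied in order before a prompt leaves the
# developer's machine. Real projects would need much broader coverage.
REDACTION_RULES = [
    # E-mail addresses (simplified pattern)
    (re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), "[EMAIL]"),
    # Strings shaped like AWS access key IDs
    (re.compile(r"\bAKIA[0-9A-Z]{16}\b"), "[AWS_KEY]"),
    # Assignments such as password = "hunter2" or db_token: abc123
    (
        re.compile(r"(?i)\b(\w*(?:password|passwd|secret|token))(\s*[:=]\s*)(['\"]?)[^\s'\"]+\3"),
        r"\1\2\3[REDACTED]\3",
    ),
]


def redact(prompt: str) -> str:
    """Apply every redaction rule in order and return the sanitized prompt."""
    for pattern, replacement in REDACTION_RULES:
        prompt = pattern.sub(replacement, prompt)
    return prompt
```

For example, `redact('Fix login for alice@example.com, password = "hunter2"')` returns `'Fix login for [EMAIL], password = "[REDACTED]"'`, so the assistant still sees the structure of the problem without the actual credentials.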

For projects involving confidential information, it’s better to use AI assistants that run on local machines and don’t send anything to the internet.
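As an illustration of the local route, the sketch below targets Ollama, one of the local tools mentioned in the FAQ. It assumes an Ollama server running on its default port (11434) with a model named `llama3` already pulled; both are assumptions about your setup, and the helper names are hypothetical. It calls Ollama’s `/api/generate` endpoint and adds a guard that refuses any non-loopback URL, so a misconfigured endpoint cannot quietly send code to an external server.

```python
import json
import urllib.request
from urllib.parse import urlparse

# Assumed default Ollama endpoint on a stock local installation.
OLLAMA_URL = "http://localhost:11434/api/generate"
LOCAL_HOSTS = {"localhost", "127.0.0.1", "::1"}


def build_local_request(prompt: str, model: str = "llama3",
                        url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a generate request, refusing any host that is not a loopback address."""
    host = urlparse(url).hostname
    if host not in LOCAL_HOSTS:
        raise ValueError(f"refusing to send prompt to non-local host: {host}")
    # stream=False asks Ollama for a single JSON object instead of a stream.
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}, method="POST"
    )


def ask_local(prompt: str, model: str = "llama3") -> str:
    """Send the prompt to the local server and return the generated text."""
    req = build_local_request(prompt, model)
    with urllib.request.urlopen(req, timeout=120) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, `ask_local("Explain what this function does: ...")` returns the model’s answer as a string; the prompt and the code in it never leave the machine.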

Also, review your contracts with any outsourcing partners carefully to make sure they follow the right practices for keeping data safe.

Accountability and Responsibility

AI tools can perform many tasks, but they don’t take responsibility when something goes wrong. The blame still falls on people: the developers, the outsourcing team, and the business that owns the project.

If the code has a flaw, opens a security hole, or causes damage, it’s not the AI’s fault; the people using it are responsible. If no one takes ownership, small mistakes can turn into big (and expensive) problems.

To avoid this, businesses need clear guidelines and human oversight:

- Always review AI-generated code. It’s only a starting point, not a finished product. Developers still need to test, debug, and verify every part of it.

- Assign responsibility. Whether it’s an in-house team or an outsourced partner, make sure someone is clearly responsible for quality control.

- Include AI in your contracts. Your agreement with an outsourcing provider should state:

  - Whether they may use AI tools.

  - Who is responsible for reviewing the AI’s work.

  - Who pays for fixes if something goes wrong because of AI-generated code.

- Keep a record of AI usage. Document when and how AI tools are used, especially for major code contributions. That way, if problems emerge, you can trace back what happened.
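A lightweight way to keep such a record is an append-only log. The Python sketch below is one possible shape, not a standard format: the `AIUsageRecord` fields, the JSON Lines file, and the `append_record` and `unreviewed` helpers are all hypothetical. Storing the reviewer next to each entry also makes it easy to spot AI-assisted changes that skipped human review.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AIUsageRecord:
    """One hypothetical log entry describing an AI-assisted contribution."""
    tool: str                        # assistant and model that produced the code
    files: list[str]                 # files that received AI-generated code
    author: str                      # developer who accepted the suggestion
    reviewer: Optional[str] = None   # human reviewer; None means not yet reviewed
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def append_record(path: str, record: AIUsageRecord) -> None:
    """Append the record as one JSON line instead of rewriting the file."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record)) + "\n")


def unreviewed(path: str) -> list[dict]:
    """Return every logged contribution that still lacks a human reviewer."""
    with open(path, encoding="utf-8") as f:
        entries = [json.loads(line) for line in f if line.strip()]
    return [e for e in entries if not e.get("reviewer")]
```

A team could run `unreviewed("ai_usage.jsonl")` before each release and treat any non-empty result as a blocker until someone signs off on the listed files.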

Case Studies and Examples

AI in software development is already common practice at many tech giants, although, statistically, smaller companies with fewer employees are more likely to use AI than larger ones.

Below, we’ve compiled some real-world examples that show how different businesses are applying AI and the lessons they’re learning along the way.

Nabla (Healthcare AI Startup)

Nabla, a French healthtech company, integrated GPT-3 (via OpenAI) to help doctors write medical notes and summaries during consultations.

How they use it:

- AI listens to patient-doctor conversations and creates structured notes.

- The time doctors spend on administrative work noticeably shrinks.

Legal and privacy measures:

- Because they operate in a healthcare setting, Nabla deliberately chose not to use OpenAI’s API directly, due to concerns about data privacy and GDPR compliance.

- Instead, they built their own secure infrastructure using open-source models like GPT-J, hosted locally, to ensure no patient data leaves their servers.

Lesson learned: In privacy-sensitive industries, self-hosted or private AI models are often a safer path than commercial cloud-based APIs.

Replit and Ghostwriter

Replit, a collaborative online coding platform, developed Ghostwriter, its own AI assistant similar to Copilot.

How it’s used:

- Ghostwriter helps users (including beginners) write and complete code right in the browser.

- It’s integrated across Replit’s development platform and is often used in education and at startups.

Challenge:

- Replit has to balance ease of use with license compliance and transparency.

- The company provides disclaimers encouraging users to review and edit the generated code, stressing that it’s only a suggestion.

Lesson learned: AI-generated code is powerful but not always safe to use “as is.” Even platforms that build AI tools themselves push for human review and caution.

Amazon’s Internal AI Coding Tools

Amazon has developed its own internal AI-powered tools, similar to Copilot, to support its developers.

How they use it:

- AI helps developers write and review code across multiple teams and services.

- It’s used internally to improve developer productivity and speed up delivery.

Why they don’t use external tools like Copilot:

- Amazon has strict internal policies around intellectual property and data privacy.

- They prefer to build and host tools internally to sidestep legal risks and protect proprietary code.

Lesson learned: Large enterprises often avoid third-party AI tools because of concerns about IP leakage and loss of control over sensitive data.

How to Safely Use AI Tools in Outsourcing Projects: General Recommendations

Using AI tools in outsourced development can bring faster delivery, lower costs, and higher coding productivity. But to do it safely, companies need to set up the right processes and safeguards from the start.

First, make AI usage expectations explicit in contracts with outsourcing partners. Agreements should specify whether AI tools can be used, under what conditions, and who is responsible for reviewing and validating AI-generated code.

These contracts should also include strong intellectual property clauses spelling out who owns the final code and what happens if AI accidentally introduces open-source or other third-party licensed content.

Data security is another critical concern. If developers use AI tools that send data to the cloud, they must never enter sensitive or proprietary information unless the tool complies with the regulations that apply to the project, such as GDPR, HIPAA, or CCPA.

In highly regulated industries, it’s safer to use self-hosted AI models or versions that run in a controlled environment to minimize the risk of data exposure.

To avoid legal and quality issues, companies should also enforce human oversight at every stage. AI tools are great for suggestions, but they don’t understand business context or legal requirements.

Developers must still test, audit, and review all code before it goes live. A code review workflow in which senior engineers double-check AI output helps ensure safety and accountability.

It’s also wise to document when and how AI tools are used in the development process. Keeping a record helps trace the source of any future defects or legal problems and demonstrates good faith during regulatory audits.

Finally, make sure your team (or your outsourcing partner’s team) receives basic training in AI best practices. Developers should understand the limitations of AI suggestions, know how to detect licensing risks, and understand why code must be validated before it ships.

FAQ

Q: Who owns code generated by AI tools?

Ownership usually goes to the company commissioning the software, but only if that’s clearly stated in your agreement. The complication comes when AI tools generate code that resembles open-source material. If that content is under a license and isn’t properly attributed, it can raise intellectual property issues. That’s why clear contracts and manual checks are key.

Q: Is AI-generated code safe to use as-is?

Not always. AI tools can accidentally reproduce licensed or copyrighted code, especially if they were trained on public codebases. The suggestions are useful, but they should be treated as starting points: developers still need to review, edit, and verify the code before it’s used.

Q: Is it safe to enter sensitive data into AI tools like ChatGPT?

Usually, no. Unless you’re using a private or enterprise version of the AI that guarantees data privacy, you shouldn’t enter any confidential or proprietary information. Public tools process data in the cloud, which can expose it to privacy risks and regulatory violations.

Q: What data protection laws should we consider?

That depends on where you operate and what kind of data you handle. In Europe, the GDPR requires transparency and a lawful basis, such as consent, for processing personal data. In the U.S., HIPAA protects medical data, while the CCPA in California gives consumers control over how their personal information is collected and deleted. If your AI tools touch sensitive data, they must comply with these regulations.

Q: Who is responsible if AI-generated code causes a problem?

Ultimately, responsibility falls on the development team, not the AI tool. Whether your team is in-house or outsourced, someone needs to validate the code before it goes live. AI can speed things up, but it can’t take responsibility for mistakes.

Q: How can we safely use AI tools in outsourced projects?

Start by putting everything in writing: your contracts should cover AI usage, IP ownership, and review processes. Use only trusted tools, avoid feeding in sensitive data, and make sure developers are trained to use AI responsibly. Most importantly, keep a human in the loop for quality assurance.

Q: Does SCAND use AI for software development?

Yes, but only if the client agrees. If public AI tools are permitted, we use Microsoft Copilot in VSCode and Cursor IDE, with models like ChatGPT 4o, Claude Sonnet, DeepSeek, and Qwen. If a client requests a private setup, we use local AI assistants in VSCode, Ollama, LM Studio, and llama.cpp, with everything kept on secure machines.

Q: Does SCAND use AI to test software?

Yes, but only with the client’s permission. We use AI tools like ChatGPT 4o and Qwen Vision for automated testing, and Playwright and Selenium for browser testing. When required, we automatically generate unit tests using AI models in Copilot, Cursor, or locally available tools like Llama, DeepSeek, Qwen, and Starcoder.