Large language models are improving rapidly; to date, this improvement has largely been measured via academic benchmarks. These benchmarks, such as MMLU and BIG-Bench, have been adopted by researchers in an attempt to compare models across various dimensions of capability related to general intelligence. However, enterprises care about the quality of AI systems in specific domains, which we call domain intelligence. Domain intelligence involves data and tasks that deal with the inner workings of business processes: details, jargon, history, internal practices and workflows, and the like.

Therefore, enterprise practitioners deploying AI in real-world settings need evaluations that directly measure domain intelligence. Without domain-specific evaluations, organizations may overlook models that would excel at their specialized tasks in favor of those that score well on possibly misaligned general benchmarks. We developed the Domain Intelligence Benchmark Suite (DIBS) to help Databricks customers build better AI systems for their specific use cases, and to advance our research on models that can leverage domain intelligence. DIBS measures performance on datasets curated to reflect specialized domain knowledge and common enterprise use cases that traditional academic benchmarks often overlook.

In the remainder of this blog post, we discuss how current models perform on DIBS in comparison to similar academic benchmarks. Our key takeaways include:

- Models’ rankings across academic benchmarks do not necessarily map to their rankings across industry tasks. We find discrepancies in performance between academic and enterprise rankings, emphasizing the need for domain-specific testing.

- There is room for improvement in core capabilities. Some enterprise needs like structured data extraction show clear paths for improvement, while more complex domain-specific tasks require more sophisticated reasoning capabilities.

- Developers should choose models based on specific needs. There is no single best model or paradigm. From open-source options to retrieval strategies, different solutions excel in different scenarios.

This underscores the need for developers to test models on their actual use cases and avoid limiting themselves to any single model option.

Introducing our Domain Intelligence Benchmark Suite (DIBS)

DIBS focuses on three of the most common enterprise use cases surfaced by Databricks customers:

- Data Extraction: Text to JSON

  - Converting unstructured text (like emails, reports, or contracts) into structured JSON formats that can be easily processed downstream.

- Tool Use: Function Calling

  - Enabling LLMs to interact with external tools and APIs by generating properly formatted function calls.

- Agent Workflows: Retrieval Augmented Generation (RAG)

  - Enhancing LLM responses by first retrieving relevant information from a company’s knowledge base or documents.

We evaluated fourteen popular models across DIBS and three academic benchmarks, spanning enterprise domains in finance, software, and manufacturing. We are expanding our evaluation scope to include legal, data analysis, and other verticals, and welcome collaboration opportunities to assess additional industry domains and tasks.

In Table 1, we briefly provide an overview of each task, the benchmark we have been using internally, and academic counterparts if available. Later, in Benchmark Overviews, we discuss these in more detail.

| Task Category | Dataset Name | Enterprise or Academic | Domain | Task Description |
|---|---|---|---|---|
| Data Extraction: Text to JSON | Text2JSON | Enterprise | Misc. Info | Given a prompt containing a schema and a few Wikipedia-style paragraphs, extract relevant information into the schema. |
| Tool Use: Function Calling | BFCL-Full Universe | Enterprise | Function calling | Modification of BFCL where, for each query, the model has to select the correct function from the full set of functions present in the BFCL universe. |
| Tool Use: Function Calling | BFCL-Retrieval | Enterprise | Function calling | Modification of BFCL where, for each query, we use text-embedding-3-large to select 10 candidate functions from the full set of functions present in the BFCL universe. The task then becomes to choose the correct function from that set. |
| Tool Use: Function Calling | Nexus | Academic | APIs | Single-turn function calling evaluation across 7 APIs of varying difficulty. |
| Tool Use: Function Calling | Berkeley Function Calling Leaderboard (BFCL) | Academic | Function calling | See original BFCL blog. |
| Agent Workflows: RAG | DocsQA | Enterprise | Software – Databricks Documentation with Code | Answer real user questions based on public Databricks documentation web pages. |
| Agent Workflows: RAG | ManufactQA | Enterprise | Manufacturing – Semiconductors – Customer FAQs | Given a technical customer query about debugging or product issues, retrieve the most relevant page from a corpus of hundreds of product manuals and datasheets, and construct an answer like a customer support agent. |
| Agent Workflows: RAG | FinanceBench | Enterprise | Finance – SEC Filings | Perform financial analysis on SEC filings, from Patronus AI. |
| Agent Workflows: RAG | Natural Questions | Academic | Wikipedia | Extractive QA over Wikipedia articles. |

Table 1. We evaluate the set of models across 9 tasks spanning 3 enterprise task categories: data extraction, tool use, and agent workflows. The three categories we discuss were selected due to their relative frequency in enterprise workloads. Beyond these categories, we are continuing to expand to a broader set of evaluation tasks in collaboration with our customers.

What We Learned Evaluating LLMs on Enterprise Tasks

Academic Benchmarks Obscure Enterprise Performance Gaps

In Figure 1, we show a comparison of RAG and function calling (FC) capabilities between the enterprise and academic benchmarks, with average scores plotted for all fourteen models. While the academic RAG average has a larger range (91.14% at the top, and 26.65% at the bottom), the overwhelming majority of models score between 85% and 90%. The enterprise RAG set of scores has a narrower range because it has a lower ceiling, which reveals that there is more room to improve in RAG settings than a benchmark like NQ might suggest.

Figure 1 visually reveals wider performance gaps in enterprise RAG scores, shown by the more dispersed distribution of data points, in contrast to the tighter clustering seen in the academic RAG column. This is most likely because academic benchmarks are based on general domains like Wikipedia, are public, and are several years old; there is therefore a high probability that retrieval models and LLM providers have already trained on the data. For a customer with private, domain-specific data, though, the capabilities of the retrieval and LLM models are more accurately measured with a benchmark tailored to their data and use case. A similar effect can be observed, though it is less pronounced, in the function calling setting.

Structured Extraction (Text2JSON) presents an achievable target

At a high level, we see that most models have significant room for improvement in prompt-based Text2JSON; we did not evaluate model performance when using structured generation.

Figure 2 shows that on this task, there are three distinct tiers of model performance:

- Most closed-source models, as well as Llama 3.1 405B and 70B, score around just 60%.

- Claude 3.5 Haiku, Llama 3.1 8B, and Gemini 1.5 Flash bring up the middle of the pack with scores between 50% and 55%.

- The smaller Llama 3.2 models are much worse performers.

Taken together, this suggests that prompt-based Text2JSON may not be sufficient for production use off-the-shelf, even from leading model providers. While structured generation options are available, they may impose restrictions on viable JSON schemas and be subject to different data usage stipulations. Fortunately, we have had success fine-tuning models to improve at this capability.

Other tasks may require more sophisticated capabilities

We also found FinanceBench and Function Calling with Retrieval to be challenging tasks for most models. This is likely because the former requires a model to be proficient with numerical complexity, and the latter requires an ability to ignore distractor information.

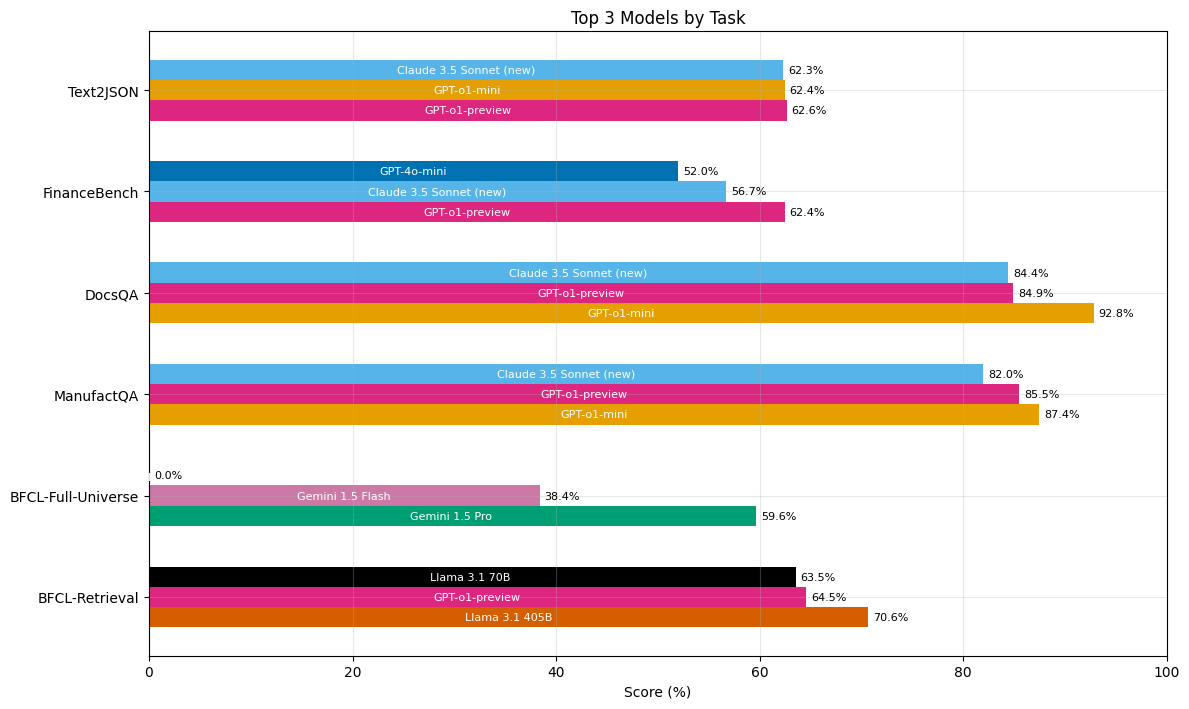

No Single Model Dominates all Tasks

Our evaluation results do not support the claim that any one model is strictly superior to the rest. Figure 3 demonstrates that the most consistently high-performing models were o1-preview, Claude Sonnet 3.5 (New), and o1-mini, achieving top scores in 5, 4, and 3 out of the 6 enterprise benchmark tasks respectively. These same three models were overall the best performers for data extraction and RAG tasks. However, only Gemini models currently have the context length necessary to perform the function calling task over all possible functions. Meanwhile, Llama 3.1 405B outperformed all other models on the function calling as retrieval task.

Small models were surprisingly strong performers: they mostly performed similarly to their larger counterparts, and sometimes significantly outperformed them. The only notable degradation was between o1-preview and o1-mini on the FinanceBench task. This is interesting given that, as we can see in Figure 3, o1-mini outperforms o1-preview on the other two enterprise RAG tasks. This underscores the task-dependent nature of model selection.

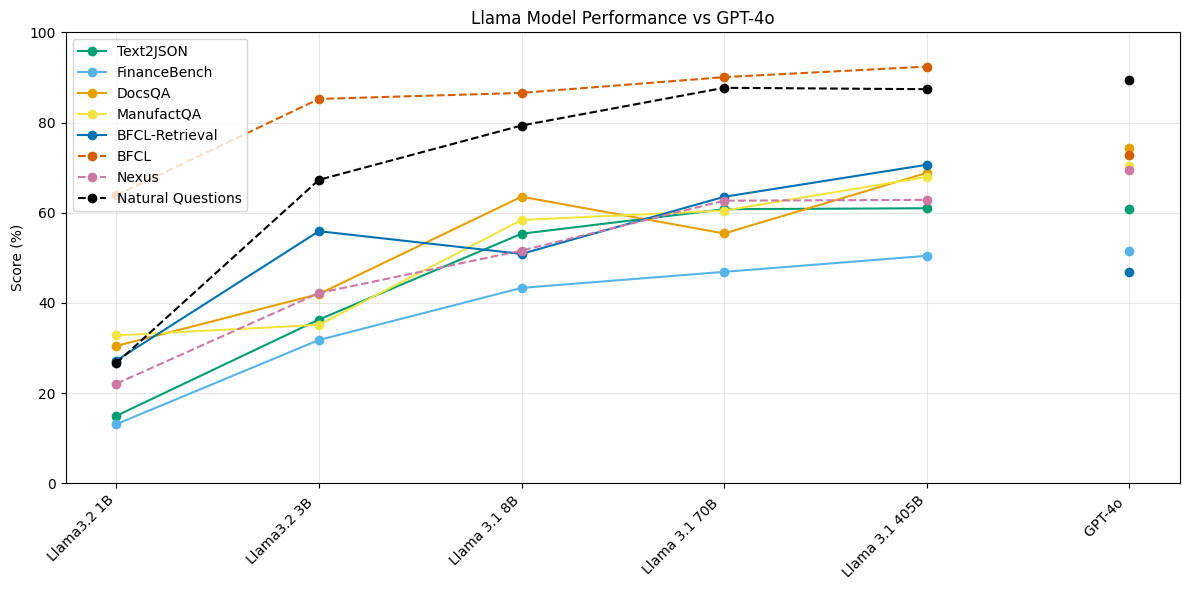

Open Source vs. Closed Source Models

We evaluated five different Llama models, each at a different size. In Figure 4, we plot the scores of each of these models on each of our benchmarks against GPT-4o’s scores for comparison. We find that Llama 3.1 405B and Llama 3.1 70B perform extremely competitively on Text2JSON and Function Calling tasks as compared to closed-source models, surpassing or performing similarly to GPT-4o. However, the gap between these model classes is more pronounced on RAG tasks.

Additionally, we note that the Llama 3.1 and 3.2 series of models show diminishing returns regarding model scale and performance. The performance gap between Llama 3.1 405B and Llama 3.1 70B is negligible on the Text2JSON task, and significantly smaller on every other task than the gap to Llama 3.1 8B. However, we observe that Llama 3.2 3B outperforms Llama 3.1 8B on the function calling with retrieval task (BFCL-Retrieval in Figure 4).

This suggests two things. First, open-source models are off-the-shelf viable for at least two high-frequency enterprise use cases. Second, there is room to improve these models’ ability to leverage retrieved information.

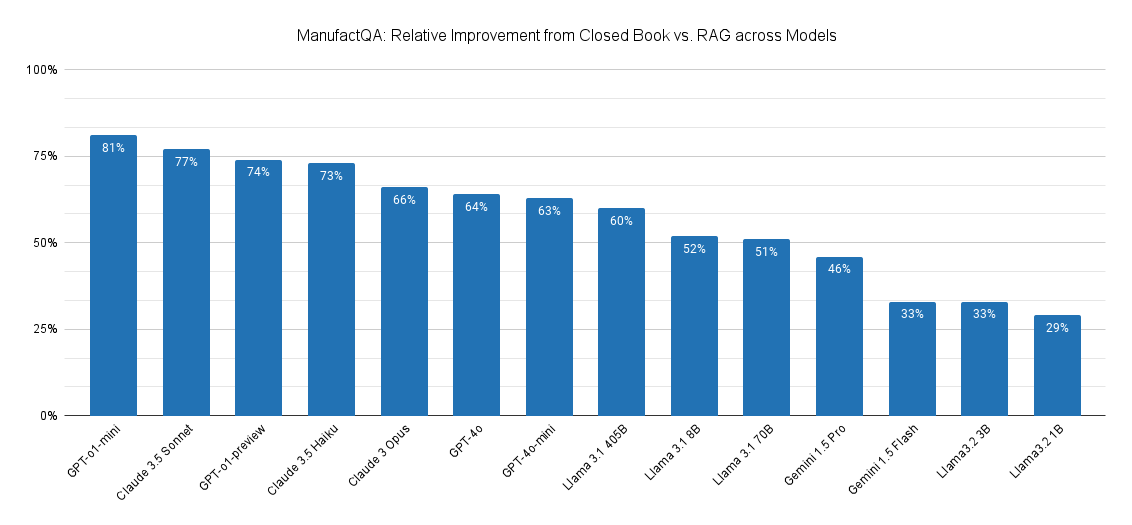

To further investigate this, we compared how much better each model would perform on ManufactQA under a closed book setting vs. a default RAG setting. In a closed book setting, models are asked to answer the queries without any given context, which measures a model’s pretrained knowledge. In the default RAG setting, the LLM is provided with the top 10 documents retrieved by OpenAI’s text-embedding-3-large, which had a recall@10 of 81.97%. This represents the most realistic configuration in a RAG system. We then calculated the relative error reduction between the RAG and closed book settings.
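The two metrics named above can be sketched as follows. This is a minimal illustration under assumptions, not the evaluation code behind the post: the post does not give its exact formula, so relative error reduction is taken here to be the share of closed-book errors that disappear once retrieved context is added, and recall@k assumes one gold document per query. The function names and the numbers in the usage note are hypothetical.

```python
def relative_error_reduction(closed_book_acc: float, rag_acc: float) -> float:
    """Fraction of closed-book errors eliminated by adding retrieved context.

    Accuracies are fractions in [0, 1]. This definition is an assumption;
    the post does not spell out its exact formula.
    """
    closed_book_error = 1.0 - closed_book_acc
    rag_error = 1.0 - rag_acc
    if closed_book_error == 0.0:
        raise ValueError("closed-book error is zero; reduction is undefined")
    return (closed_book_error - rag_error) / closed_book_error


def recall_at_k(retrieved: list[list[str]], relevant: list[str], k: int = 10) -> float:
    """Share of queries whose gold document id appears in the top-k results.

    Assumes exactly one gold document id per query (a simplification).
    """
    hits = sum(1 for docs, gold in zip(retrieved, relevant) if gold in docs[:k])
    return hits / len(relevant)
```

For example, with made-up accuracies of 0.50 closed book and 0.75 with RAG, half of the closed-book errors are removed, so the relative error reduction is 0.5. A value near zero would indicate that a model gains little from the retrieved context.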

Based on Figure 5, we observe that GPT-o1-mini (surprisingly!) and Claude-3.5 Sonnet are able to leverage retrieved context the most, followed by GPT-o1-preview and Claude 3.5 Haiku. The open source Llama models and Gemini models all trail behind, suggesting that these models have more room to improve in leveraging domain-specific context for RAG.

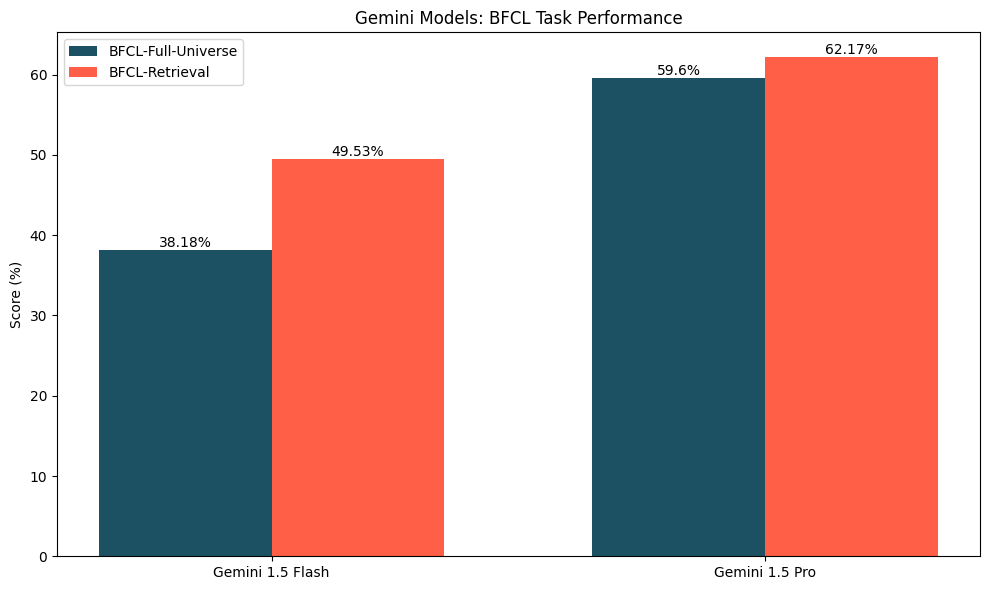

For function calling at scale, high quality retrieval may be more valuable than larger context windows

Our function calling evaluations show something interesting: just because a model can fit an entire set of functions into its context window does not mean that it should. The only models capable of doing this today are Gemini 1.5 Flash and Gemini 1.5 Pro; as Figure 6 displays, these models perform better on the function calling with retrieval variant, where a retriever selects a subset of the full set of functions relevant to the query. The improvement in performance was more pronounced for Gemini 1.5 Flash (~11% improvement) than for Gemini 1.5 Pro (~2.5%). This improvement likely stems from the fact that a well-tuned retriever can increase the likelihood that the correct function is in the context while vastly reducing the number of distractor functions present. Additionally, we have previously seen that models may struggle with long-context tasks for a variety of reasons.

Benchmark Overviews

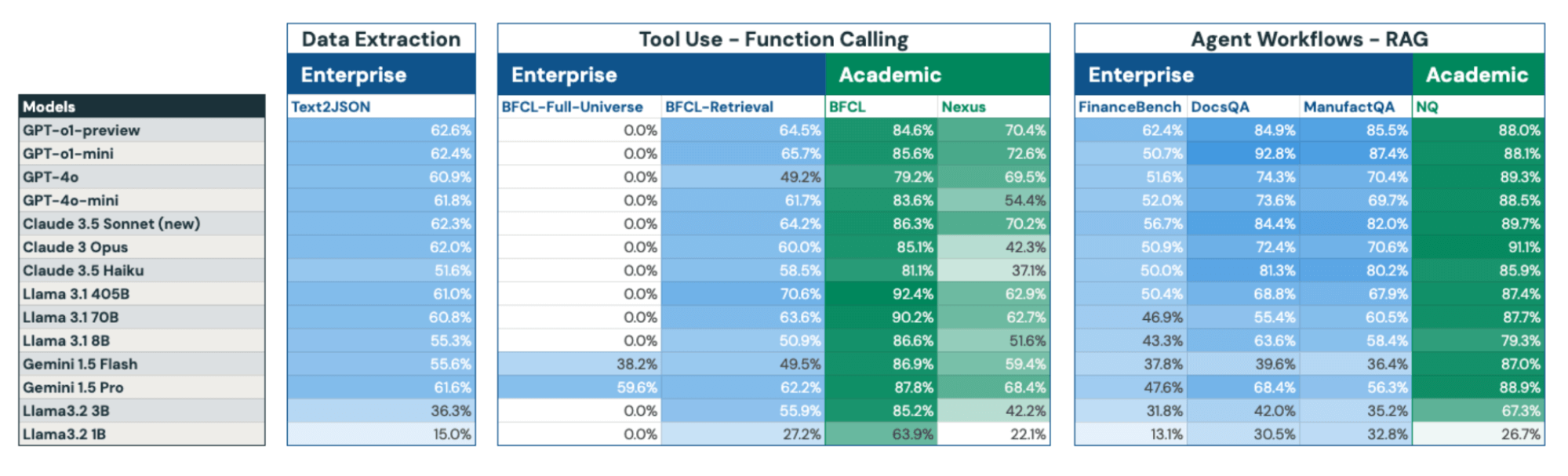

Having outlined DIBS’s structure and key findings, we present a comprehensive summary of fourteen open and closed-source models’ performance across our enterprise and academic benchmarks in Figure 7. The remainder of this section describes each benchmark in detail.

Data Extraction: Text to JSON

In today’s data-driven landscape, the ability to transform vast amounts of unstructured data into actionable information has become increasingly valuable. A key challenge many enterprises face is building unstructured-to-structured data pipelines, either as standalone pipelines or as part of a larger system.

One common variant we have seen in the field is converting unstructured text, often a large corpus of documents, to JSON. While this task shares similarities with traditional entity extraction and named entity recognition, it goes further, often requiring a sophisticated blend of open-ended extraction, summarization, and synthesis capabilities.

No open-source academic benchmark sufficiently captures this complexity; we therefore procured human-written examples and created a custom Text2JSON benchmark. The examples we procured involve extracting and summarizing information from passages into a specified JSON schema. We also evaluate multi-turn capabilities, e.g. editing existing JSON outputs to incorporate additional fields and information. To ensure our benchmark reflects actual business needs and provides a relevant assessment of extraction capabilities, we used the same evaluation techniques as our customers.
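To make the task concrete, here is a small sketch of the kind of structural check one might run on a model’s Text2JSON output before any content-level scoring. It is not the benchmark’s scoring method, which the post does not describe; the `check_extraction` helper, the field-to-type schema format, and the sample outputs are all hypothetical.

```python
import json


def check_extraction(raw_output: str, required_fields: dict[str, type]) -> list[str]:
    """Return a list of structural problems in a model's JSON output (empty if none)."""
    try:
        parsed = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(parsed, dict):
        return ["top-level value is not a JSON object"]
    problems = []
    for field, expected_type in required_fields.items():
        if field not in parsed:
            problems.append(f"missing field: {field}")
        elif not isinstance(parsed[field], expected_type):
            problems.append(f"wrong type for field: {field}")
    return problems


# Hypothetical schema and model outputs, for illustration only.
SCHEMA = {"name": str, "founded": int, "products": list}
good = '{"name": "Acme", "founded": 1999, "products": ["anvils"]}'
bad = '{"name": "Acme", "founded": "1999"}'

good_problems = check_extraction(good, SCHEMA)
bad_problems = check_extraction(bad, SCHEMA)
```

A check like this only catches malformed or incomplete output. Judging whether the extracted and summarized values are actually correct requires comparison against human-written references, which is where the harder part of the task lies.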

Tool Use: Function Calling

Tool use capabilities enable LLMs to act as part of a larger compound AI system. We have seen sustained enterprise interest in function calling as a tool, and we previously wrote about how to effectively evaluate function calling capabilities.

Recently, organizations have taken to tool calling at a much larger scale. While academic evaluations typically test models with small function sets (often ten or fewer options), real-world applications frequently involve hundreds or thousands of available functions. In practice, this means enterprise function calling is similar to a needle-in-a-haystack test, with many distractor functions present during any given query.

To better reflect these enterprise scenarios, we have adapted the established BFCL academic benchmark to evaluate both function calling capabilities and the role of retrieval at scale. In its original version, the BFCL benchmark requires a model to choose at most one function from a predefined set of four functions. We built on top of our previous modification of the benchmark to create two variants: one that requires the model to pick from the full set of functions that exist in BFCL for each query, and one that leverages a retriever to identify the ten functions that are most likely to be relevant.
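The retrieval variant can be pictured with a short sketch: embed the query and every function description, rank the functions by similarity, and pass only the top candidates to the model. The code below is an illustration under assumptions rather than the benchmark pipeline. It substitutes a toy bag-of-words vector for text-embedding-3-large so that it runs without any external service, and the function universe and helper names are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse token-count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k_functions(query: str, functions: dict[str, str], k: int = 10) -> list[str]:
    """Return the names of the k functions whose descriptions best match the query."""
    query_vec = embed(query)
    ranked = sorted(
        functions,
        key=lambda name: cosine(query_vec, embed(functions[name])),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical function universe, for illustration only.
FUNCTIONS = {
    "get_weather": "Get the current weather forecast for a city",
    "convert_currency": "Convert an amount of money from one currency to another",
    "send_email": "Send an email message to a recipient",
}

candidates = top_k_functions("What is the weather forecast in Paris?", FUNCTIONS, k=2)
```

Only the shortlisted function definitions would then be placed in the model’s prompt, which keeps the context short and removes most distractors, at the cost of occasionally dropping the correct function when the retriever misses it.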

Agent Workflows: Retrieval-Augmented Generation

RAG makes it possible for LLMs to interact with proprietary documents, augmenting existing LLMs with domain intelligence. In our experience, RAG is one of the most popular ways to customize LLMs in practice. RAG systems are also critical for enterprise agents, because any such agent must learn to operate within the context of the particular organization in which it is being deployed.

While the differences between industry and academic datasets are nuanced, their implications for RAG system design are substantial. Design choices that appear optimal based on academic benchmarks may prove suboptimal when applied to real-world industry data. This means that architects of industrial RAG systems must carefully validate their design decisions against their specific use case, rather than relying solely on academic performance metrics.

Natural Questions remains a popular academic benchmark even as others, such as HotpotQA, have fallen out of favor. Both of these datasets deal with Wikipedia-based question answering. In practice, LLMs have indexed much of this information already. For more realistic enterprise settings, we use FinanceBench and DocsQA, as discussed in our earlier explorations on long context RAG, as well as ManufactQA, a synthetic RAG dataset simulating technical customer support interactions with product manuals, designed for manufacturing companies’ use cases.

Conclusion

To determine whether academic benchmarks could sufficiently inform tasks relating to domain intelligence, we evaluated a total of fourteen models across nine tasks. We developed a domain intelligence benchmark suite comprising six enterprise benchmarks that represent data extraction (text to JSON), tool use (function calling), and agentic workflows (RAG). We selected models to evaluate based on customer interest in using them for their AI/ML needs; we additionally evaluated the Llama 3.2 models for more datapoints on the effects of model size.

Our findings show that relying on academic benchmarks to make decisions about enterprise tasks may be insufficient. These benchmarks are overly saturated, which hides true model capabilities, and somewhat misaligned with enterprise needs. Furthermore, the field of models is muddied: there are several models that are generally strong performers, and models that are unexpectedly capable at specific tasks. Finally, academic benchmark performance may lead one to believe that models are sufficiently capable; in reality, there may still be room for improvement towards being production-workload ready.

At Databricks, we are continuing to help our customers by investing resources into more comprehensive enterprise benchmarking systems, and towards developing sophisticated approaches to domain expertise. As part of this, we are actively working with companies to ensure we capture a broad spectrum of enterprise-relevant needs, and welcome collaborations. If you are a company looking to create domain-specific agentic evaluations, please take a look at our Agent Evaluation Framework. If you are a researcher interested in these efforts, consider applying to work with us.