Data preprocessing remains essential for machine learning success, yet real-world datasets often contain errors. Cleanlab offers an efficient solution: it is a Python package that implements confident learning algorithms. By automating the detection and correction of label errors, it simplifies data preprocessing for machine learning. Cleanlab uses statistical methods to identify problematic data points, which improves model reliability and streamlines workflows with minimal effort.

Why Data Preprocessing Matters?

Data preprocessing directly impacts model performance. Dirty data with incorrect labels, outliers, and inconsistencies leads to poor predictions and unreliable insights. Models trained on flawed data perpetuate these errors, creating a cascading effect of inaccuracies throughout your system. Quality preprocessing eliminates these issues before modeling begins.

Effective preprocessing also saves time and resources. Cleaner data means fewer model iterations, faster training, and reduced computational costs. It prevents the frustration of debugging complex models when the real problem lies in the data itself. Preprocessing transforms raw data into valuable information that algorithms can effectively learn from.

How to Preprocess Data Using Cleanlab?

Cleanlab helps clean and validate your data before training. It finds bad labels, duplicates, and low-quality samples using ML models. It’s best suited to label and data quality checks, not basic text cleaning.

Key Features of Cleanlab:

- Detects mislabeled data (noisy labels)

- Flags duplicates and outliers

- Checks for low-quality or inconsistent samples

- Provides label distribution insights

- Works with any ML classifier to improve data quality
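To make the idea of detecting noisy labels from a classifier's output more concrete, here is a minimal pure-Python sketch of a confident-learning style check. It is a simplification for illustration, not Cleanlab's actual implementation: the function name `flag_label_issues` and the toy probabilities are assumptions, and the real library does considerably more (for example, estimating a joint distribution of given and true labels).

```python
from statistics import mean

def flag_label_issues(labels, pred_probs):
    """Simplified confident-learning style check (illustrative only)."""
    num_classes = len(pred_probs[0])

    # Per-class threshold: the average predicted probability of class k
    # over the examples that were labeled k.
    thresholds = []
    for k in range(num_classes):
        probs_k = [p[k] for p, y in zip(pred_probs, labels) if y == k]
        thresholds.append(mean(probs_k) if probs_k else 1.0)

    flagged = []
    for i, (p, y) in enumerate(zip(pred_probs, labels)):
        # Classes the model is confident about for this example.
        confident = [k for k in range(num_classes) if p[k] >= thresholds[k]]
        if confident:
            best = max(confident, key=lambda k: p[k])
            if best != y:
                # The model confidently prefers a class other than the given label.
                flagged.append(i)
    return flagged

# Toy data (invented): six examples, two classes.
labels = [0, 0, 0, 1, 1, 1]
pred_probs = [
    [0.90, 0.10],
    [0.80, 0.20],
    [0.10, 0.90],  # labeled 0, but the model strongly predicts 1
    [0.15, 0.85],
    [0.30, 0.70],
    [0.10, 0.90],
]

flagged = flag_label_issues(labels, pred_probs)
print(flagged)
```

Using per-class thresholds rather than a single cutoff means a class the model is generally less sure about is not unfairly penalized.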

Now, let’s walk through how you can use Cleanlab step by step.

Step 1: Installing the Libraries

Before starting, we need to install a few essential libraries. These will help us load the data and run Cleanlab tools smoothly.

!pip install cleanlab
!pip install pandas
!pip install numpy

- cleanlab: Detects label and data quality issues.

- pandas: Reads and handles the CSV data.

- numpy: Supports the fast numerical computations used by Cleanlab.
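After installing, it can be useful to confirm which versions ended up in the environment. The snippet below is a small sketch using only the standard library; the helper name `installed_version` is hypothetical, and scikit-learn is included in the check because the later steps import from `sklearn`.

```python
from importlib import metadata

def installed_version(package_name):
    """Return the installed version string, or None if the package is absent."""
    try:
        return metadata.version(package_name)
    except metadata.PackageNotFoundError:
        return None

# Report the packages this walkthrough relies on.
for name in ("cleanlab", "pandas", "numpy", "scikit-learn"):
    print(f"{name}: {installed_version(name) or 'not installed'}")
```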

Step 2: Loading the Dataset

Now we load the dataset using pandas to begin preprocessing.

import pandas as pd

# Load dataset

df = pd.read_csv("/content material/Tweets.csv")

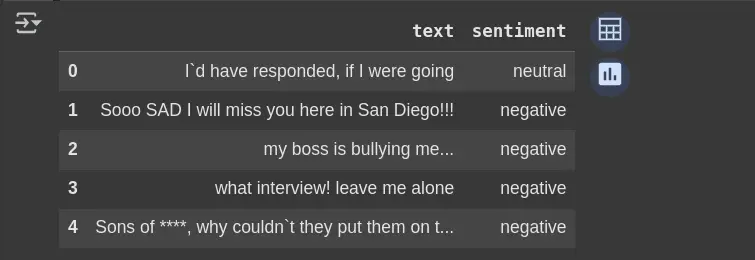

df.head(5)- pd.read_csv():

- df.head(5):

Now that we have loaded the data, we will focus only on the columns we need and check for any missing values.

# Focus on relevant columns
df_clean = df.drop(columns=['selected_text'], errors="ignore")
df_clean.head(5)

Removes the selected_text column if it exists and avoids an error if it doesn’t. This keeps only the columns needed for analysis.
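The missing-value check itself can be illustrated without any dependencies. The sketch below counts empty cells per column in a tiny in-memory CSV; the rows, the `textID` column, and the helper name `count_missing` are invented for illustration. With pandas, the equivalent check on the real data would be `df_clean.isna().sum()`.

```python
import csv
import io

# Tiny stand-in for the tweets file; the rows are invented for illustration.
sample_csv = io.StringIO(
    "textID,text,sentiment\n"
    "a1,good morning,positive\n"
    "a2,,neutral\n"
    "a3,so tired today,\n"
)

def count_missing(csv_file):
    """Count empty cells per column in a CSV file object."""
    reader = csv.DictReader(csv_file)
    missing = {column: 0 for column in reader.fieldnames}
    for row in reader:
        for column in reader.fieldnames:
            value = row[column]
            if value is None or value.strip() == "":
                missing[column] += 1
    return missing

missing_counts = count_missing(sample_csv)
print(missing_counts)
```

Columns with a non-zero count are the ones that a later `dropna()` call would act on.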

Step 3: Verify Label Points

from cleanlab.dataset import health_summary

from sklearn.linear_model import LogisticRegression

from sklearn.pipeline import make_pipeline

from sklearn.feature_extraction.textual content import TfidfVectorizer

from sklearn.model_selection import cross_val_predict

from sklearn.preprocessing import LabelEncoder

# Put together information

df_clean = df.dropna()

y_clean = df_clean['sentiment'] # Unique string labels

# Convert string labels to integers

le = LabelEncoder()

y_encoded = le.fit_transform(y_clean)

# Create mannequin pipeline

mannequin = make_pipeline(

TfidfVectorizer(max_features=1000),

LogisticRegression(max_iter=1000)

)

# Get cross-validated predicted possibilities

pred_probs = cross_val_predict(

mannequin,

df_clean['text'],

y_encoded, # Use encoded labels

cv=3,

technique="predict_proba"

)

# Generate well being abstract

report = health_summary(

labels=y_encoded, # Use encoded labels

pred_probs=pred_probs,

verbose=True

)

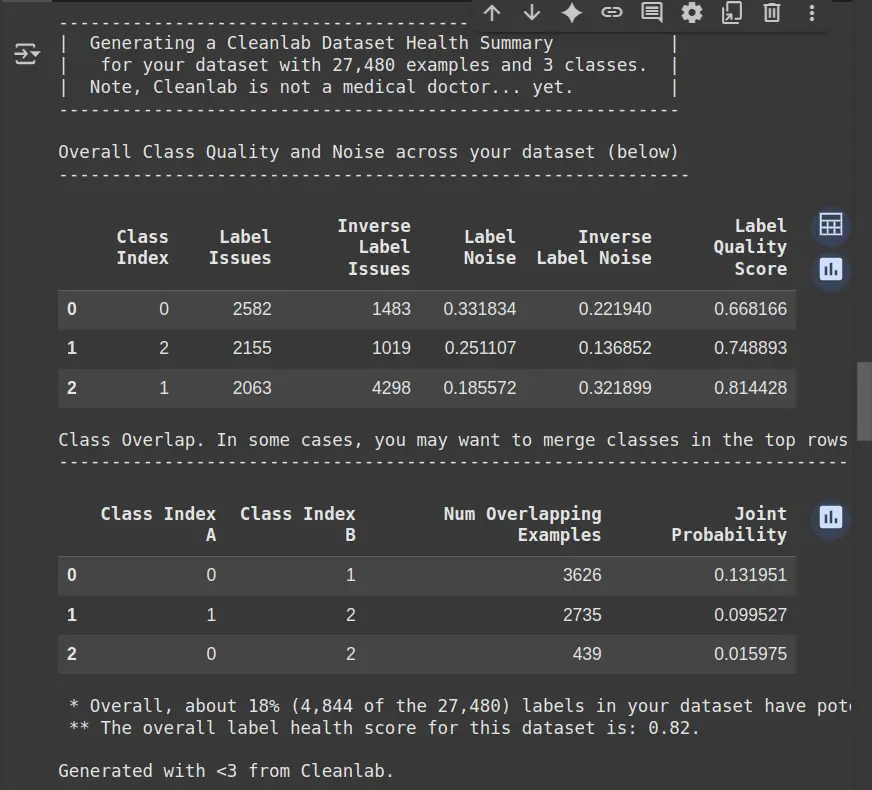

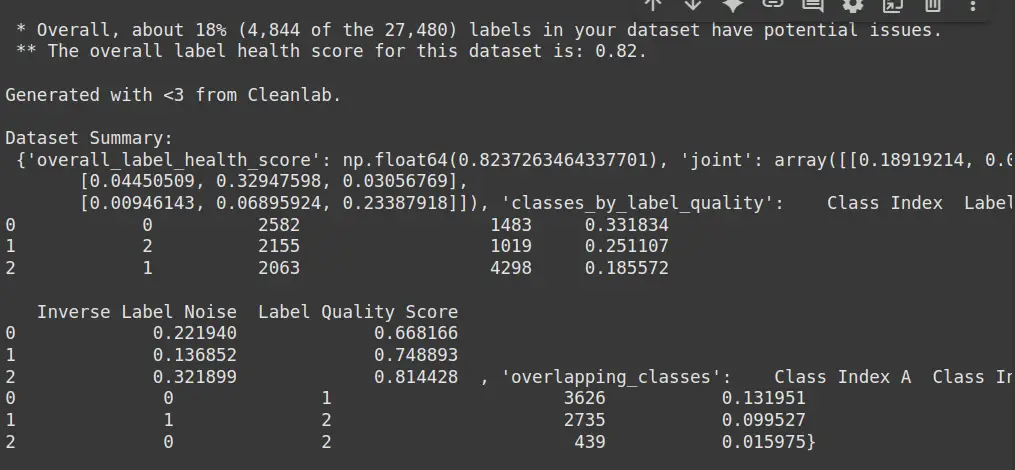

print("Dataset Abstract:n", report)- df.dropna(): Removes rows with lacking values, making certain clear information for coaching.

- LabelEncoder(): Converts string labels (e.g., “positive”, “negative”) into integer labels for model compatibility.

- make_pipeline(): Creates a pipeline with a TF-IDF vectorizer (converts text to numeric features) and a logistic regression model.

- cross_val_predict(): Performs 3-fold cross-validation and returns predicted probabilities instead of labels.

- health_summary(): Uses Cleanlab to analyze the predicted probabilities and labels, identifying potential label issues such as mislabeled examples.

- print(report): Displays the health summary report, highlighting any label inconsistencies or errors in the dataset.

The health summary reports the following:

- Label Issues: Estimates how many samples in a class have potentially incorrect or ambiguous labels.

- Inverse Label Issues: Estimates how many samples that actually belong to a class were given a different label.

- Label Noise: Estimates the fraction of samples in each class that are mislabeled.

- Label Quality Score: Reflects the overall quality of labels in a class (a higher score means better quality).

- Class Overlap: Identifies how many examples overlap between different classes, and the probability of such overlaps occurring.

- Overall Label Health Score: Gives an overall indication of the dataset’s label quality (a higher score means better health).
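The relationship between these figures is easier to see with numbers. The sketch below assumes the per-class values are derived from an estimated joint distribution of given and true labels, which is how confident learning frames the problem. The matrix, the dataset size, and the helper name `summarize_classes` are made up for illustration, and this is not Cleanlab's own code.

```python
# Hypothetical joint distribution over (given label, true label) for 3 classes.
# Rows are given labels, columns are estimated true labels; entries sum to 1.
joint = [
    [0.30, 0.02, 0.01],
    [0.03, 0.35, 0.02],
    [0.01, 0.04, 0.22],
]
num_examples = 1000  # assumed dataset size

def summarize_classes(joint, num_examples):
    """Derive per-class label statistics from a joint distribution."""
    summary = []
    for k in range(len(joint)):
        given_mass = sum(joint[k])                # share of data labeled k
        true_mass = sum(row[k] for row in joint)  # share of data truly k
        correct_mass = joint[k][k]                # labeled k and truly k
        label_noise = (given_mass - correct_mass) / given_mass
        summary.append({
            "class": k,
            # Labeled k, but estimated to belong elsewhere.
            "label_issues": round((given_mass - correct_mass) * num_examples),
            # Truly k, but given some other label.
            "inverse_label_issues": round((true_mass - correct_mass) * num_examples),
            "label_noise": label_noise,
            "label_quality_score": 1 - label_noise,
        })
    return summary

summary = summarize_classes(joint, num_examples)
# Overall health: estimated share of examples whose given label is correct.
overall_health = sum(joint[k][k] for k in range(len(joint)))

for row in summary:
    print(row)
print("overall label health:", round(overall_health, 2))
```

Reading the table this way also explains why Label Issues and Inverse Label Issues can differ for the same class: one looks along a row of the joint, the other along a column.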

Step 4: Detect Low-High quality Samples

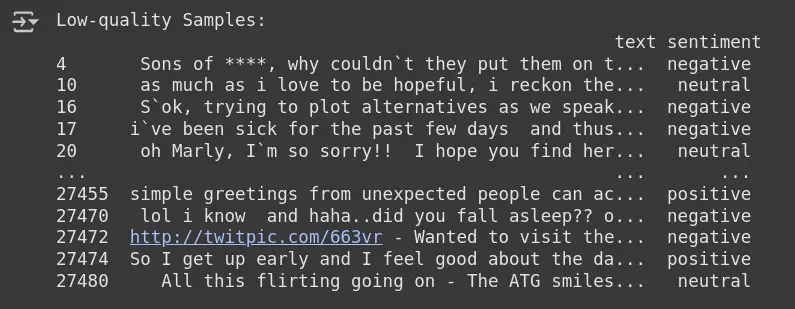

This step includes detecting and isolating the samples within the dataset which will have labeling points. Cleanlab makes use of the anticipated possibilities and the true labels to establish low-quality samples, which may then be reviewed and cleaned.

# Get low-quality sample indices
from cleanlab.filter import find_label_issues

issue_indices = find_label_issues(
    labels=y_encoded,
    pred_probs=pred_probs,
    return_indices_ranked_by="self_confidence"  # return row positions, most likely errors first
)

# Display problematic samples
low_quality_samples = df_clean.iloc[issue_indices]
print("Low-quality Samples:\n", low_quality_samples)

- find_label_issues(): A Cleanlab function that detects samples with label issues by comparing the predicted probabilities (pred_probs) with the given labels (y_encoded). With return_indices_ranked_by set, it returns the indices of those samples, most likely errors first; by default it returns a boolean mask instead.

- issue_indices: Stores the indices of the samples that Cleanlab identified as having potential label issues (i.e., low-quality samples).

- df_clean.iloc[issue_indices]: Extracts the problematic rows from the cleaned dataset (df_clean) using the positions of the low-quality samples.

- low_quality_samples: Holds the samples identified as having label issues, which can be reviewed further for potential corrections.
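One simple way to prioritize which flagged samples to review first is the self-confidence score: the probability the model assigns to the label an example was actually given. The sketch below shows that ranking in plain Python on invented data; the helper name `rank_by_self_confidence` is hypothetical, and it only illustrates the scoring idea, not Cleanlab's full procedure.

```python
def rank_by_self_confidence(labels, pred_probs):
    """Return example indices sorted from least to most trustworthy label."""
    # Self-confidence: predicted probability of the given label.
    scores = [probs[label] for label, probs in zip(labels, pred_probs)]
    order = sorted(range(len(labels)), key=lambda i: scores[i])
    return order, scores

# Toy data (invented): four examples, three classes.
labels = [0, 1, 2, 1]
pred_probs = [
    [0.70, 0.20, 0.10],
    [0.60, 0.30, 0.10],  # labeled 1, but the model favors class 0
    [0.05, 0.05, 0.90],
    [0.10, 0.85, 0.05],
]

order, scores = rank_by_self_confidence(labels, pred_probs)
print(order)   # indices, lowest self-confidence first
print(scores)  # self-confidence per example
```

Examples at the front of the ordering are the ones most worth a manual look.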

Step 5: Detect Noisy Labels via Model Prediction

This step uses CleanLearning, a Cleanlab class, to detect noisy labels in the dataset by training a model and using its predictions to identify samples with inconsistent or noisy labels.

from cleanlab.classification import CleanLearning

from cleanlab.filter import find_label_issues

from sklearn.feature_extraction.textual content import TfidfVectorizer

from sklearn.linear_model import LogisticRegression

from sklearn.preprocessing import LabelEncoder

# Encode labels numerically

le = LabelEncoder()

df_clean['encoded_label'] = le.fit_transform(df_clean['sentiment'])

# Vectorize textual content information

vectorizer = TfidfVectorizer(max_features=3000)

X = vectorizer.fit_transform(df_clean['text']).toarray()

y = df_clean['encoded_label'].values

# Prepare classifier with CleanLearning

clf = LogisticRegression(max_iter=1000)

clean_model = CleanLearning(clf)

clean_model.match(X, y)

# Get prediction possibilities

pred_probs = clean_model.predict_proba(X)

# Discover noisy labels

noisy_label_indices = find_label_issues(labels=y, pred_probs=pred_probs)

# Present noisy label samples

noisy_label_samples = df_clean.iloc[noisy_label_indices]

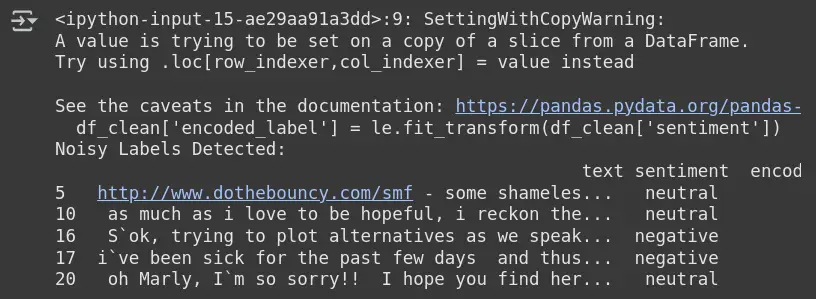

print("Noisy Labels Detected:n", noisy_label_samples.head())

- Label Encoding (LabelEncoder()): Converts string labels (e.g., “positive”, “negative”) into numerical values, making them suitable for machine learning models.

- Vectorization (TfidfVectorizer()): Converts text data into numerical features using TF-IDF, keeping the 3,000 most frequent terms from the “text” column.

- Train Classifier (LogisticRegression()): Uses logistic regression as the classifier, trained on the encoded labels and vectorized text data.

- CleanLearning (CleanLearning()): Wraps the logistic regression model so that likely label errors are identified and accounted for during training.

- Prediction Probabilities (predict_proba()): After training, the model predicts class probabilities for each sample, which are used to identify potential noisy labels.

- find_label_issues(): Uses the predicted probabilities and the given labels to detect which samples have noisy labels (i.e., are likely mislabeled).

- Display Noisy Labels: Retrieves and displays the samples with noisy labels, allowing you to review and potentially clean them.

Observation

Output: Noisy Labels Detected

- Cleanlab flags samples where the predicted sentiment (from the model) doesn’t match the provided label.

- Example: Row 5 is labeled neutral, but the model thinks it might not be.

- These samples are likely mislabeled or ambiguous based on model behaviour.

- This helps you identify, relabel, or remove problematic samples for better model performance.
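Once samples have been flagged, the two usual follow-ups are removing them or correcting their labels after review. The sketch below shows both on a few invented rows; `flagged_indices` and `corrections` stand in for the output of a label-issue check and a reviewer's decisions, and the helper names are hypothetical.

```python
# Invented rows standing in for a labeled text dataset.
rows = [
    {"text": "love this phone", "sentiment": "positive"},
    {"text": "worst service ever", "sentiment": "positive"},  # suspicious label
    {"text": "meeting at noon", "sentiment": "neutral"},
]
flagged_indices = [1]           # assumed output of a label-issue check
corrections = {1: "negative"}   # assumed decision after manual review

def drop_flagged(rows, flagged_indices):
    """Remove flagged rows, keeping the rest in their original order."""
    flagged = set(flagged_indices)
    return [row for i, row in enumerate(rows) if i not in flagged]

def relabel(rows, corrections):
    """Return a copy of rows with reviewed labels applied."""
    return [
        {**row, "sentiment": corrections.get(i, row["sentiment"])}
        for i, row in enumerate(rows)
    ]

dropped = drop_flagged(rows, flagged_indices)
relabeled = relabel(rows, corrections)
print(len(dropped), "rows kept after dropping flagged samples")
print(relabeled[1])
```

Both helpers return new lists rather than editing the input, so the original labels remain available for comparison.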

Conclusion

Preprocessing is key to building reliable machine learning models. It removes inconsistencies, standardises inputs, and improves data quality. But most workflows miss one thing: noisy labels. Cleanlab fills that gap. It detects mislabeled data, outliers, and low-quality samples automatically, with no manual checks needed to find them. This makes your dataset cleaner and your models smarter.

Cleanlab preprocessing doesn’t just improve accuracy, it also saves time. By removing bad labels early, you reduce training load. Fewer errors mean faster convergence. More signal, less noise. Better models, less effort.

Frequently Asked Questions

Ans. Cleanlab helps detect and fix mislabeled, noisy, or low-quality data in labeled datasets. It’s useful across domains like text, image, and tabular data.

Ans. No. Cleanlab works with the output of existing models. It doesn’t need retraining to detect label issues.

Ans. Not necessarily. Cleanlab can be used with both traditional ML models and deep learning models, as long as you provide predicted probabilities.

Ans. Yes, Cleanlab is designed for easy integration. You can quickly start using it with just a few lines of code, without major changes to your workflow.

Ans. Cleanlab can handle various types of label noise, including mislabeling, outliers, and uncertain labels, making your dataset cleaner and more reliable for training models.
