In this post, we show how Notebooks in Amazon SageMaker Unified Studio help you get to insights faster by simplifying infrastructure configuration. You’ll see how to analyze housing price data, create scalable data tables, run distributed profiling, and train machine learning (ML) models within a single notebook environment.

Data scientists and analysts often spend days configuring infrastructure and managing authentication across multiple data sources before they can begin analysis. When working with data across Amazon Simple Storage Service (Amazon S3), Amazon Redshift, Snowflake, and local files, teams face repeated authentication setup, manual compute scaling decisions, and tool-switching overhead that delays insights.

Notebooks in Amazon SageMaker Unified Studio provide instant access to 12+ data sources, compute scaling from local to distributed processing, and AI-powered code generation within a single browser-based environment. You’ll learn to use polyglot programming, multi-engine compute, and AI-assisted development to accelerate your path from question to insight.

What are Notebooks in Amazon SageMaker Unified Studio?

Notebooks in Amazon SageMaker Unified Studio provide an interactive environment for data analysis, exploration, engineering, and machine learning workflows. They deliver five built-in capabilities:

- Polyglot programming: Write code in Python and SQL interchangeably within the same notebook environment

- Unified data access: Connect directly to data stored in Amazon S3, AWS Glue Data Catalog, Apache Iceberg tables, and third-party sources like Snowflake and BigQuery

- Native visualization: Create charts directly from Python and SQL results for immersive data analytics

- AI-powered development: Generate code through natural language prompts using SageMaker Data Agent, with an intelligent chat interface for data analytics, data science, and ML tasks

- Flexible compute: Scale from basic instances to GPU-powered environments as your needs grow

Architecture

This section covers the architecture of Notebooks, which delivers enterprise-scale analytics with browser-based simplicity through a cloud-native design that integrates multiple compute engines, diverse data sources, and AI-powered assistance.

Presentation layer

You access the notebook interface through Amazon SageMaker Unified Studio, working with a familiar layout that includes code cells for execution, markdown cells for documentation, and visualization cells for charts and tables.

Compute layer

A dedicated notebook server manages your kernel lifecycle and session state. Key components include a Language Server for code completion, a Python 3.11 runtime with pre-loaded data science libraries, and a Polyglot Kernel that handles your Python, PySpark, and SQL execution within the same notebook. Persistent Amazon Elastic Block Store (Amazon EBS) storage backs each notebook you create.

Execution layer

Notebooks support multiple execution engines, automatically routing your code to the optimal processing engine. In-memory execution handles your smaller datasets and rapid prototyping. Apache Spark on Amazon Athena provides distributed processing for your large-scale analytics through Spark Connect. Native connectivity to Amazon Athena (Trino), Amazon Redshift, Snowflake, and BigQuery processes your SQL queries.

Data integration

You get unified access to 12+ data sources, including AWS-native (Amazon S3, AWS Glue, Amazon Athena, Amazon Redshift) and third-party (Snowflake, BigQuery, PostgreSQL, MySQL) data sources. For the latest supported data sources, see Connect to data sources.

AI layer

The SageMaker Data Agent operates in two modes to assist you: an Agent Panel for multi-step analytical workflows and Inline Assistance for focused, cell-level code generation. For a detailed overview, see Accelerate context-aware data analysis and ML workflows with Amazon SageMaker Data Agent.

Security is embedded throughout the architecture to protect your work. Data access respects your AWS Identity and Access Management (AWS IAM) permissions. The notebook and the agent can only access data sources you’re authorized to use. Communication between components uses encrypted channels, and your notebook storage is encrypted at rest. The AI agent includes built-in guardrails to help prevent harmful operations and logs interactions for your compliance and auditing purposes.

Prerequisites

Before you begin, you need:

- An AWS account with appropriate permissions to create Amazon SageMaker Unified Studio resources. See Set up IAM-based domains for full permission requirements.

- Basic familiarity with Python programming and SQL queries

- Understanding of data analysis concepts and ML workflows

- Access to the sample housing dataset (provided in the walkthrough)

Getting started with Notebooks

To get started, open the Amazon SageMaker console and choose Get started.

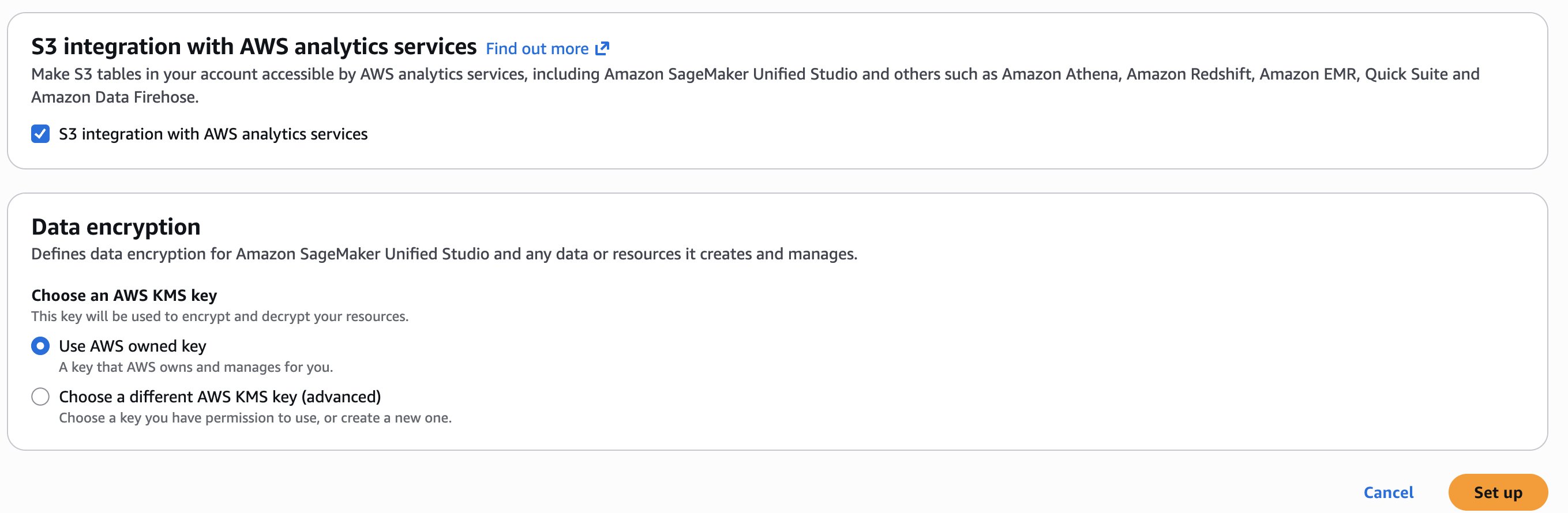

You’ll be prompted either to select an existing AWS Identity and Access Management (AWS IAM) role that has access to your data and compute, or to create a new role. For this walkthrough, choose Create a new role and leave the other options at their defaults.

Choose Set up. It takes a few minutes to set up your environment.

Use case

In this post, you’ll use a Notebook and the SageMaker Data Agent to perform the following:

- Working with the dataset: Upload the sample dataset housing.csv and explore it with the data explorer

- Polyglot programming: Query dataframes with SQL through DuckDB

- Multi-engine access through AWS Glue: Create an AWS Glue table to unlock the Athena SQL and Athena Spark engines for distributed processing

- Advanced analytics: Use Athena Spark for data profiling

- AI-assisted development: Generate profiling and ML code with the Data Agent

- ML workflow: Train a Random Forest model and evaluate the results

First, let’s walk through the interface and explore its core capabilities.

Understanding the interface

The Notebooks interface follows familiar notebook conventions with cells for code execution and markdown for documentation. Within the notebook, you’ll see your current programming environment (such as Python 3.11) and compute profile specifications. The interface lets you:

- Access your data by browsing files, exploring data catalogs, and managing third-party connections

- Monitor variables created within your notebook context

- Scale compute resources on demand by adjusting virtual CPUs and RAM based on your workload requirements, even scaling up to GPU instances

- Manage packages by installing and configuring Python packages as needed

Working with the dataset

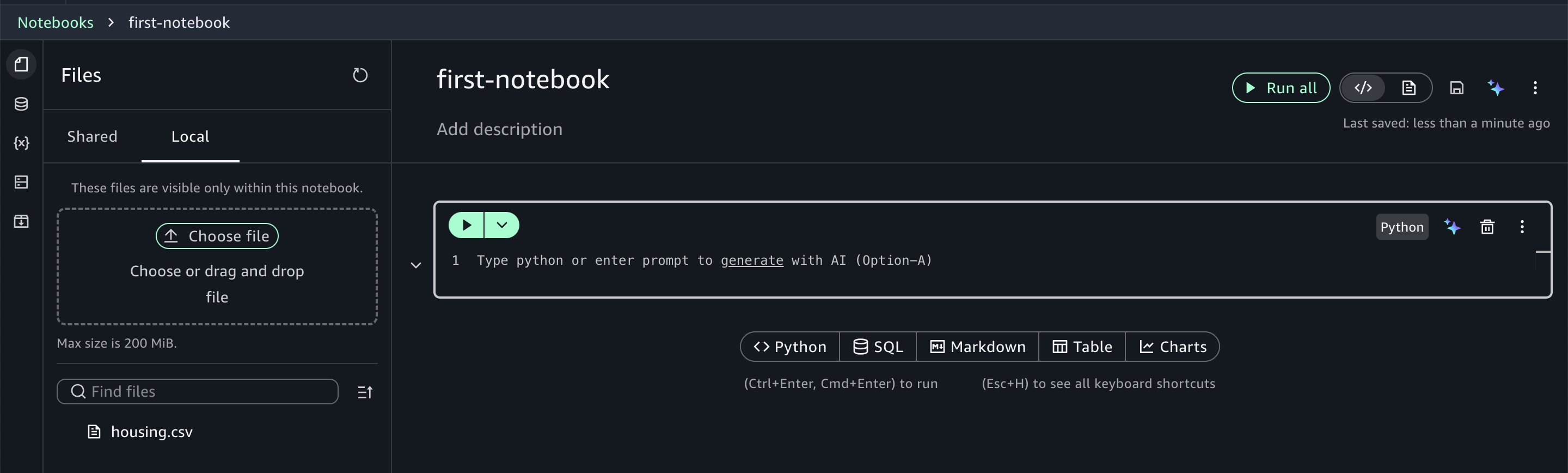

For this walkthrough, you’ll use the housing.csv pattern dataset which you’ll obtain from this web page. (the file is called canvas-sample-housing.csv on the linked web page). Select the Information icon within the left panel and select the Native tab. Add the CSV file to the pocket book on the Native tab.

Notebooks offer you immediate entry to your knowledge belongings. Utilizing the information explorer, you may browse your AWS Glue Information Catalog, Amazon S3 desk catalogs, Amazon S3 buckets, and configured third-party connections.

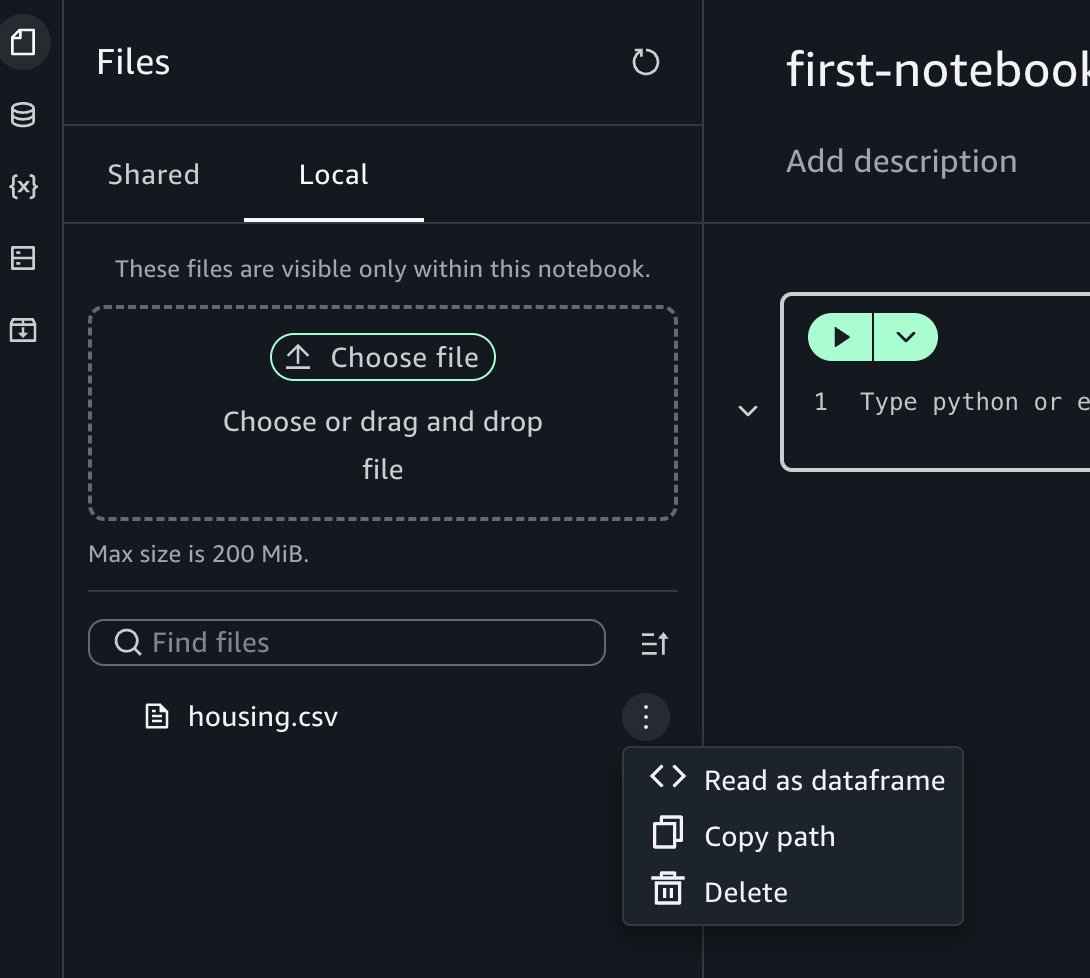

Select the three-dot choices menu.

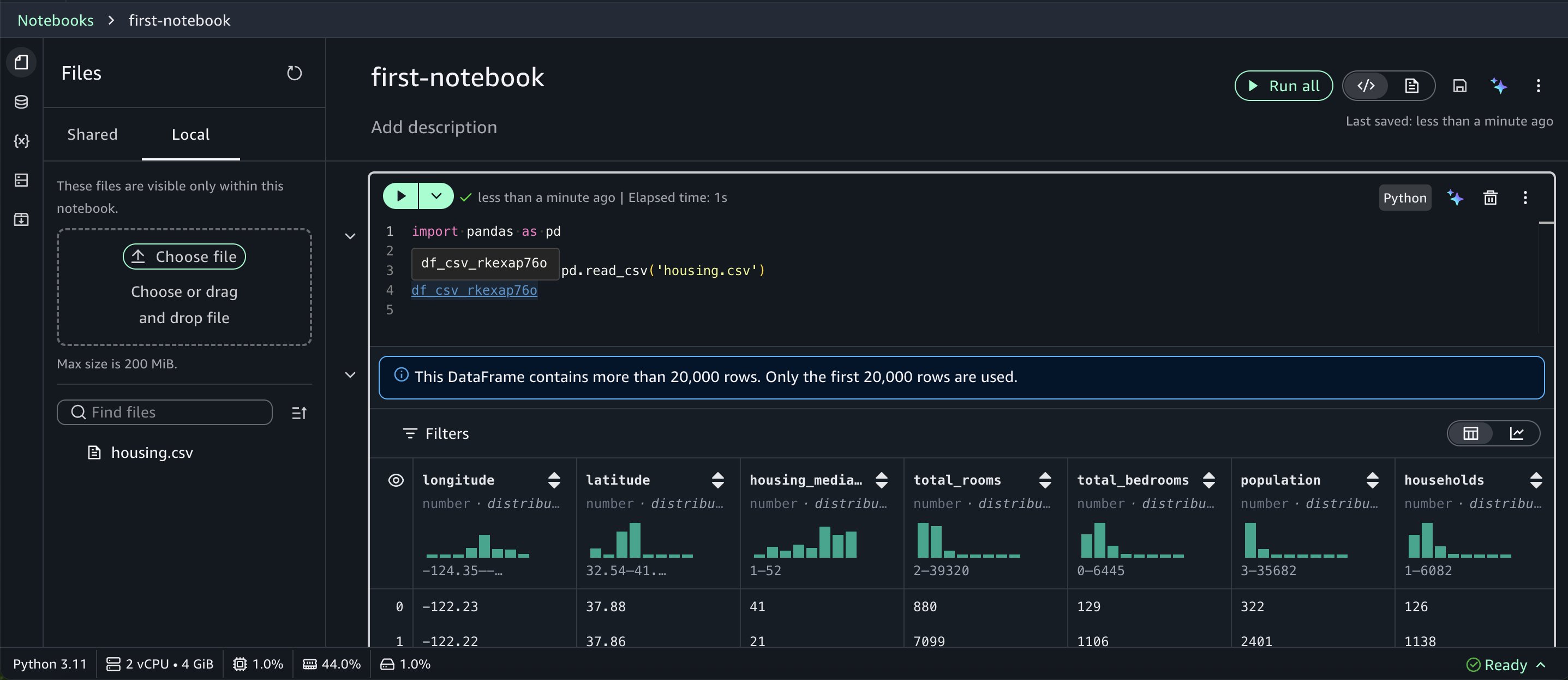

Select Learn as dataframe, then run the inserted cell within the pocket book to view the outcomes.

While you return a dataframe, Notebooks render it in a wealthy desk format with computerized knowledge profiling.

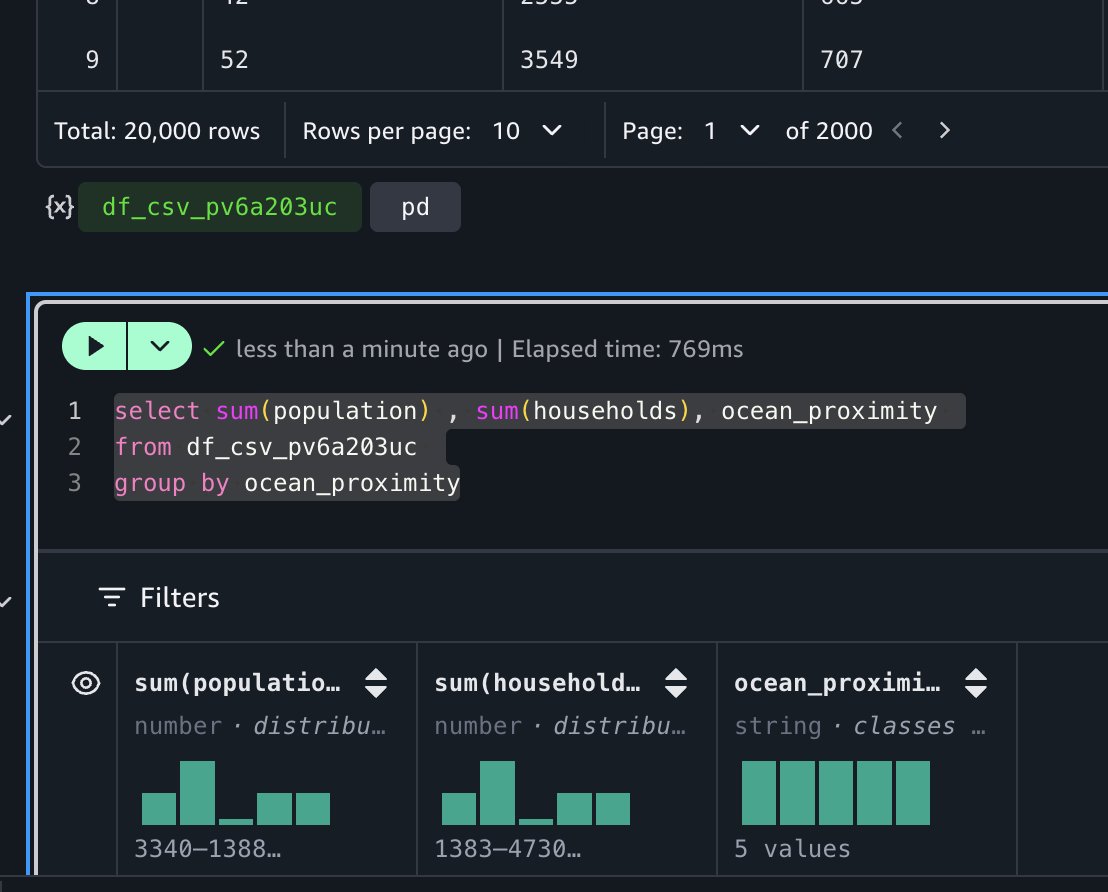

Polyglot programming: Python and SQL collectively

One of the most powerful features in Notebooks is the interoperability between Python and SQL. After you load data into a Python dataframe, you can immediately query it using SQL. For example, you can run a SQL query that calculates total population and households by ocean proximity.

The notebook’s autocomplete functionality recognizes dataframes in your context, making SQL queries intuitive.

This SQL query runs on DuckDB (an in-memory SQL database engine), which requires no separate installation or server maintenance on your part. DuckDB’s lightweight design integrates into Python, Java, and other environments, making it well suited to rapid interactive data analysis. For distributed processing needs, you can use engines such as Apache Spark or Trino after creating an AWS Glue table for this dataset.
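To make the shape of such a query concrete, here is a minimal sketch rather than the notebook’s own cell. It assumes the dataset exposes columns named ocean_proximity, population, and households, uses a handful of made-up rows instead of housing.csv, and swaps in Python’s built-in sqlite3 module as a stand-in for DuckDB so that it runs with only the standard library. The function name totals_by_ocean_proximity is hypothetical.

```python
import sqlite3

# Illustrative rows only; these are NOT values from housing.csv.
# Column names are assumed to match the sample dataset.
SAMPLE_ROWS = [
    ("NEAR BAY", 300, 120),
    ("NEAR BAY", 500, 200),
    ("INLAND", 1000, 350),
    ("INLAND", 250, 90),
    ("NEAR OCEAN", 700, 260),
]

# Aggregate population and households per ocean_proximity category.
QUERY = """
SELECT ocean_proximity,
       SUM(population) AS total_population,
       SUM(households) AS total_households
FROM housing
GROUP BY ocean_proximity
ORDER BY ocean_proximity
"""


def totals_by_ocean_proximity(rows):
    """Load rows into an in-memory table and aggregate them with SQL."""
    conn = sqlite3.connect(":memory:")  # sqlite3 stands in for DuckDB here
    try:
        conn.execute(
            "CREATE TABLE housing ("
            "ocean_proximity TEXT, population INTEGER, households INTEGER)"
        )
        conn.executemany("INSERT INTO housing VALUES (?, ?, ?)", rows)
        return conn.execute(QUERY).fetchall()
    finally:
        conn.close()


if __name__ == "__main__":
    for row in totals_by_ocean_proximity(SAMPLE_ROWS):
        print(row)
```

In a notebook SQL cell, the same SELECT statement would presumably reference your dataframe by name in place of the housing table created here.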

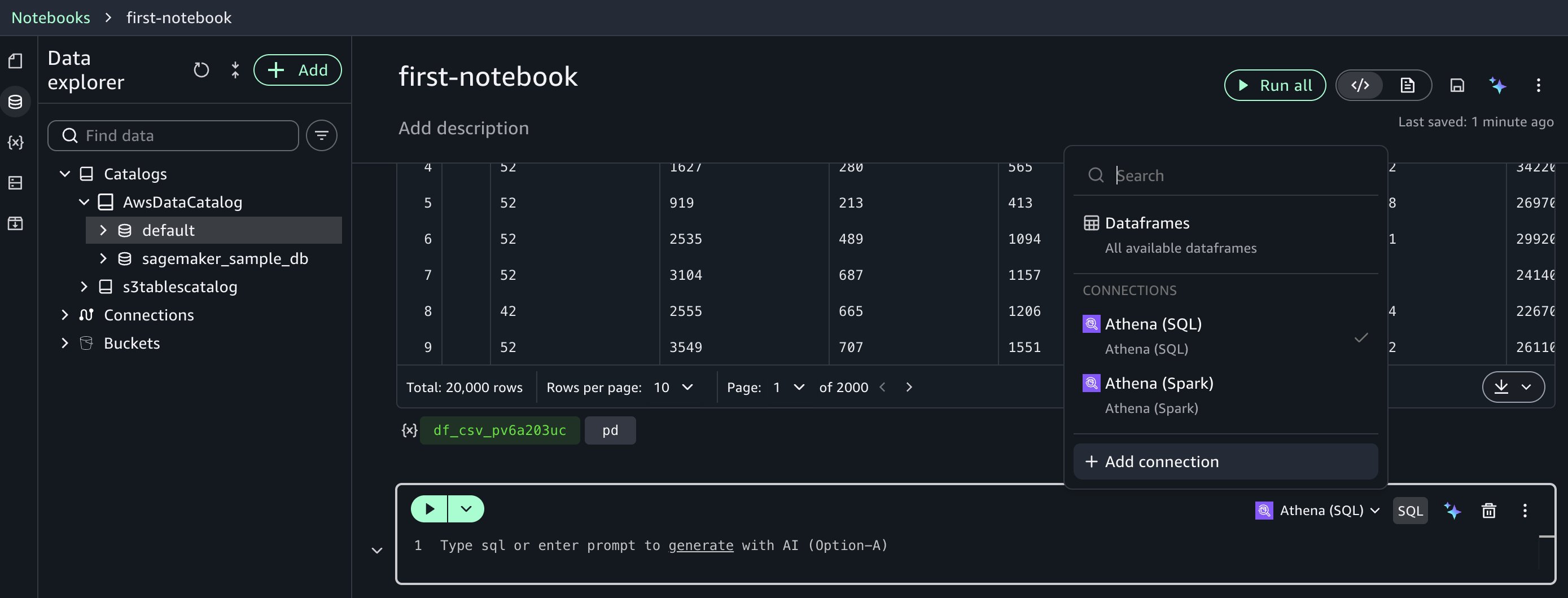

Create an AWS Glue table for the dataset

After you create an AWS Glue table, you can query the dataset using various AWS Glue catalog-compatible engines, including Amazon Athena SQL (Trino) and Amazon Athena Spark. You can then pick the engine whose price-performance best fits your specific workload.

Start by creating an AWS Glue database. To do that, create a new cell in the notebook by choosing SQL and selecting Amazon Athena (SQL).

Run this SQL to create a database: create database demo;

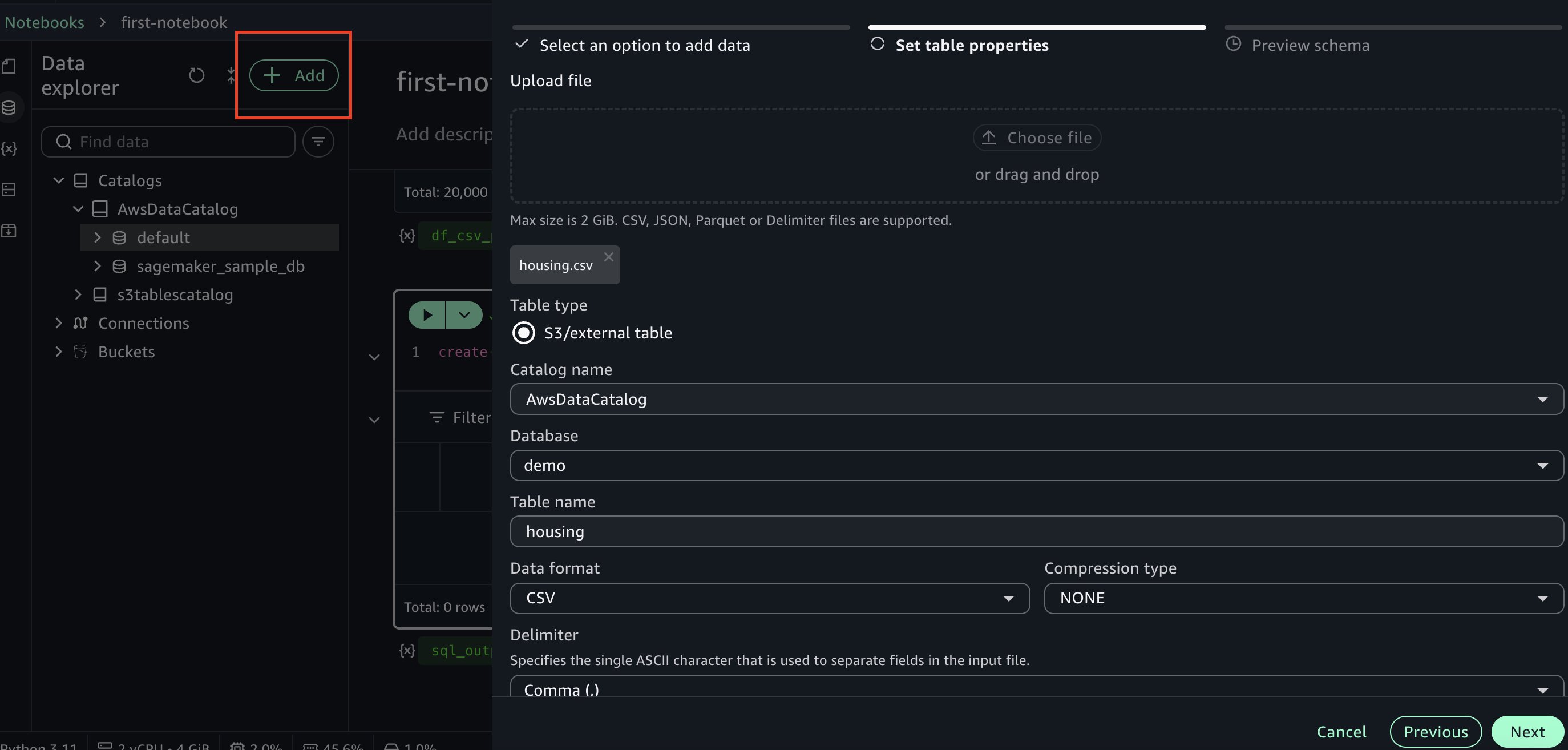

Next, go to the data explorer and choose +Add at the top left, then choose Create table. Choose the database you created earlier and enter a name for the table. Upload the housing.csv dataset file used earlier. Continue by choosing Next in the side panel to create the table.

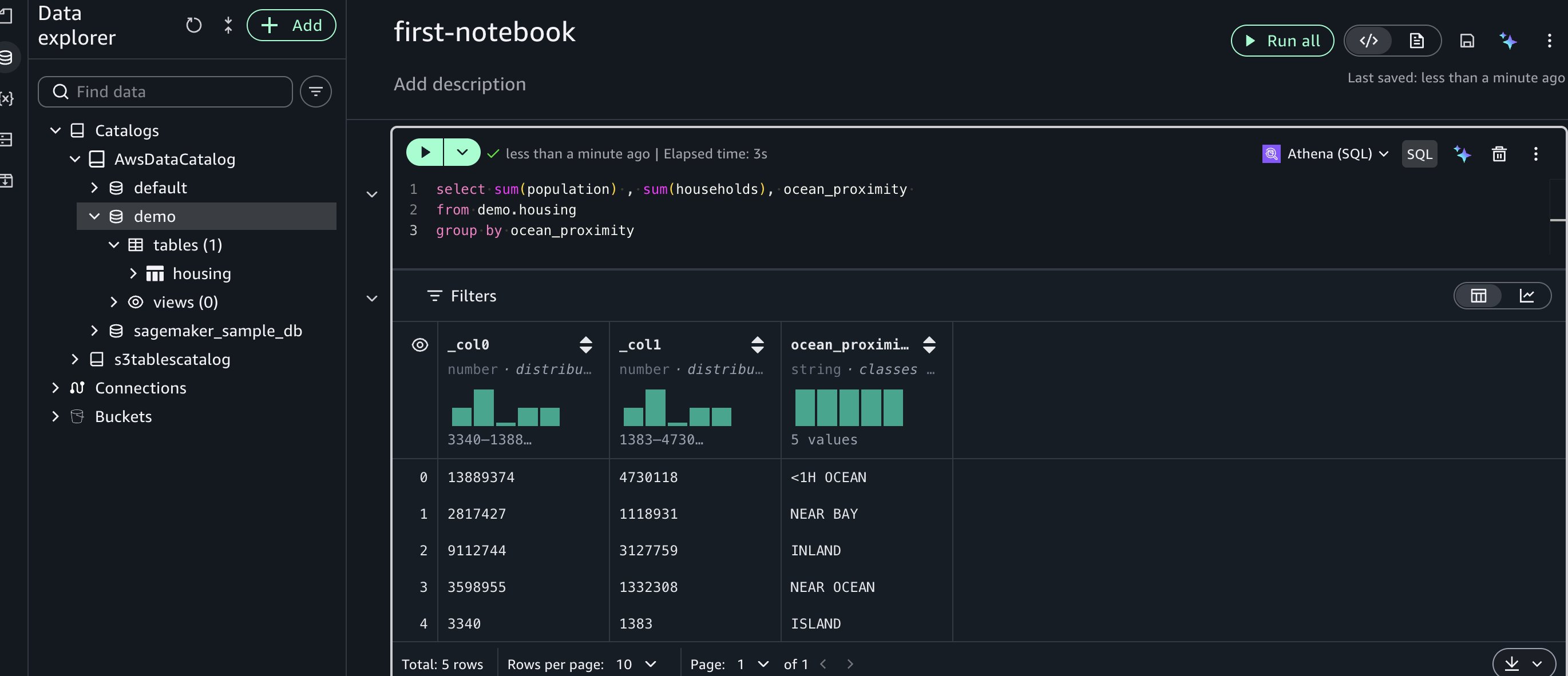

Next, let’s run a sample SQL query in a new cell using Amazon Athena SQL:

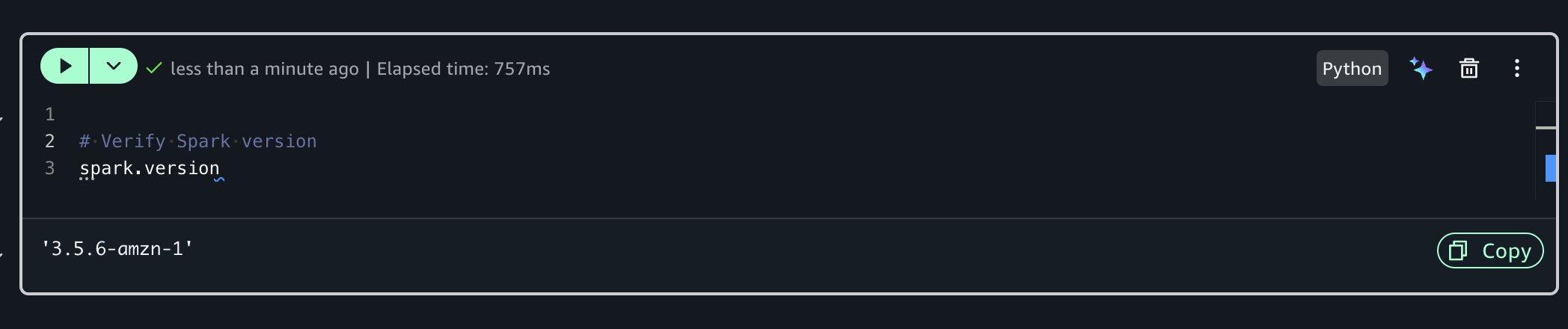

Advanced capabilities with Athena Spark

Before you build an ML model to predict house prices, analyze the dataset further and run data profiling for more insights. For advanced exploration, you can use Amazon Athena Spark within your notebook. To do that, create a new Python cell, which has a built-in Spark session. You can then check the Spark version from that cell.
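As a rough sketch of that check, the helper below reads the version attribute that a SparkSession object exposes. The function name spark_version_summary is hypothetical, the built-in session is assumed to be available under the name spark, and the version string in the demo is a placeholder rather than the version Athena Spark actually runs.

```python
from types import SimpleNamespace


def spark_version_summary(session):
    """Return a short description of the Spark version for a session object."""
    # A SparkSession exposes the running Spark version as `session.version`.
    return f"Spark version: {session.version}"


if __name__ == "__main__":
    # Stand-in object so the sketch runs outside a notebook. In a notebook
    # cell you would pass the built-in session instead (assumed name: spark).
    placeholder_session = SimpleNamespace(version="x.y.z")
    print(spark_version_summary(placeholder_session))
```

Inside the notebook, calling spark_version_summary(spark) or simply evaluating spark.version should give the same information.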

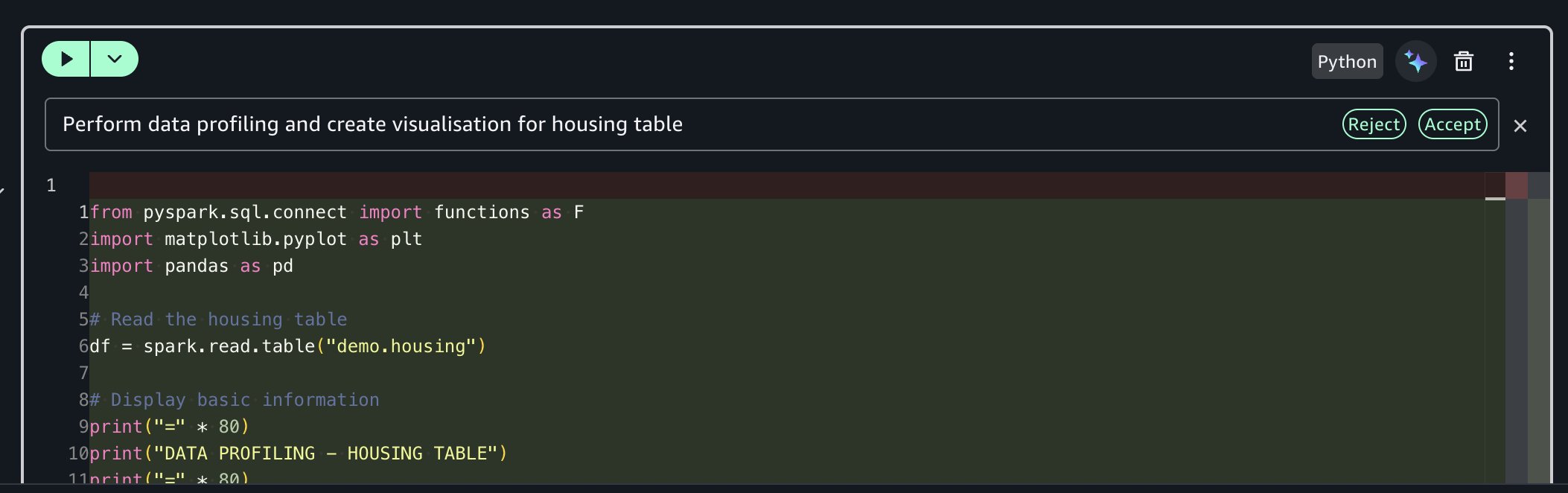

Using the SageMaker Data Agent for data profiling

Instead of writing boilerplate code manually, you can use the built-in generative AI capability.

Prompt: “Perform data profiling and create visualization for housing table”

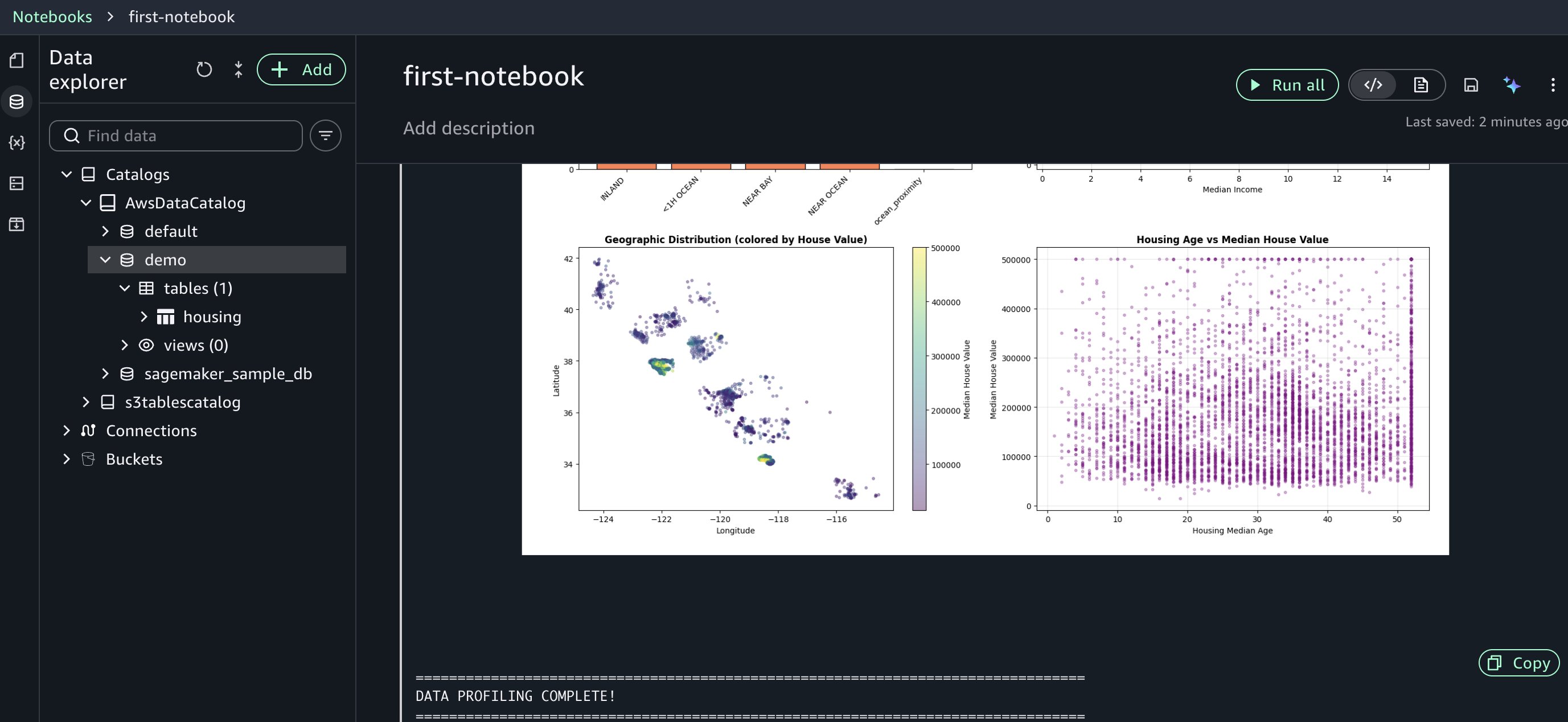

The AI assistant generates comprehensive profiling code for you, including basic statistics calculation, column-level profiling, data type analysis, and missing value detection.

The agent accessed your AWS Glue Data Catalog, understood your housing table structure, and generated profiling code tailored to your specific columns and data types. This context awareness reduces the trial-and-error cycle you’d typically face when adapting generic code snippets to your environment. Review the generated code and run it. The fast response times help you iterate on your analysis efficiently.
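To give a sense of what column-level profiling involves, here is a minimal standard-library sketch. It is not the code the agent generates, which runs against the AWS Glue table. The function name profile_columns, the sample rows, and the column names are assumptions made for illustration.

```python
import csv
import io
from statistics import mean

# Made-up rows for illustration; NOT values from housing.csv.
SAMPLE_CSV = """median_income,total_bedrooms,ocean_proximity
8.0,100,NEAR BAY
4.0,,INLAND
6.0,300,INLAND
"""


def profile_columns(rows):
    """Compute per-column count, missing count, inferred type, and basic stats."""
    profile = {}
    if not rows:
        return profile
    for column in rows[0].keys():
        values = [row[column] for row in rows]
        present = [v for v in values if v not in ("", None)]
        stats = {"count": len(values), "missing": len(values) - len(present)}
        try:
            # Treat the column as numeric only if every present value parses.
            numbers = [float(v) for v in present]
        except ValueError:
            stats["type"] = "string"
            stats["distinct"] = len(set(present))
        else:
            stats["type"] = "numeric"
            if numbers:
                stats.update(min=min(numbers), max=max(numbers), mean=mean(numbers))
        profile[column] = stats
    return profile


if __name__ == "__main__":
    sample_rows = list(csv.DictReader(io.StringIO(SAMPLE_CSV)))
    for name, stats in profile_columns(sample_rows).items():
        print(name, stats)
```

The same four ideas appear here in miniature: basic statistics, per-column profiling, a simple data type check, and a count of missing values.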

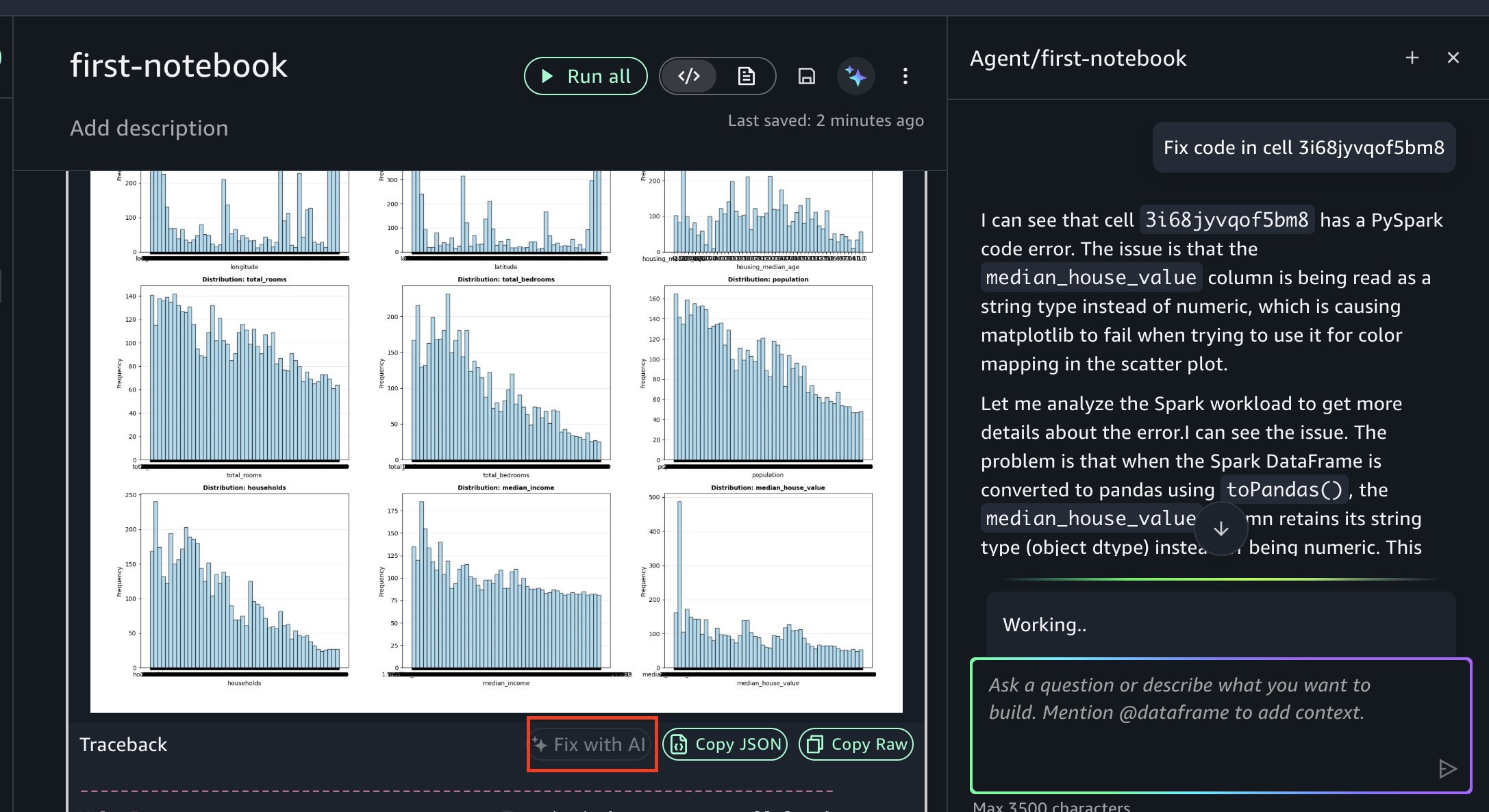

If you encounter an error, you can resolve it using Fix with AI as shown in the following figure. When errors occur during execution, the “Fix with AI” feature analyzes the traceback, diagnoses the root cause, and generates corrected code, so you can keep your analysis moving forward.

Training ML models

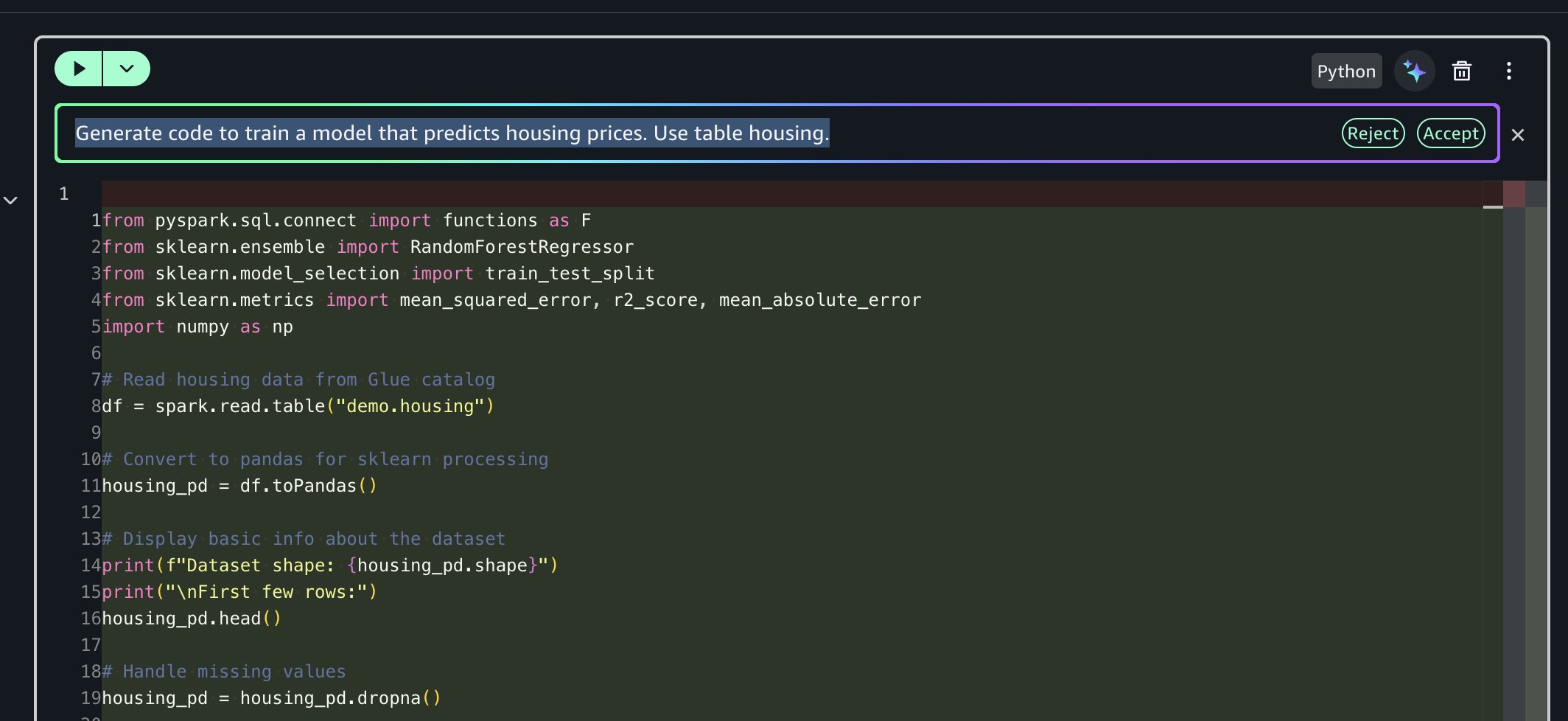

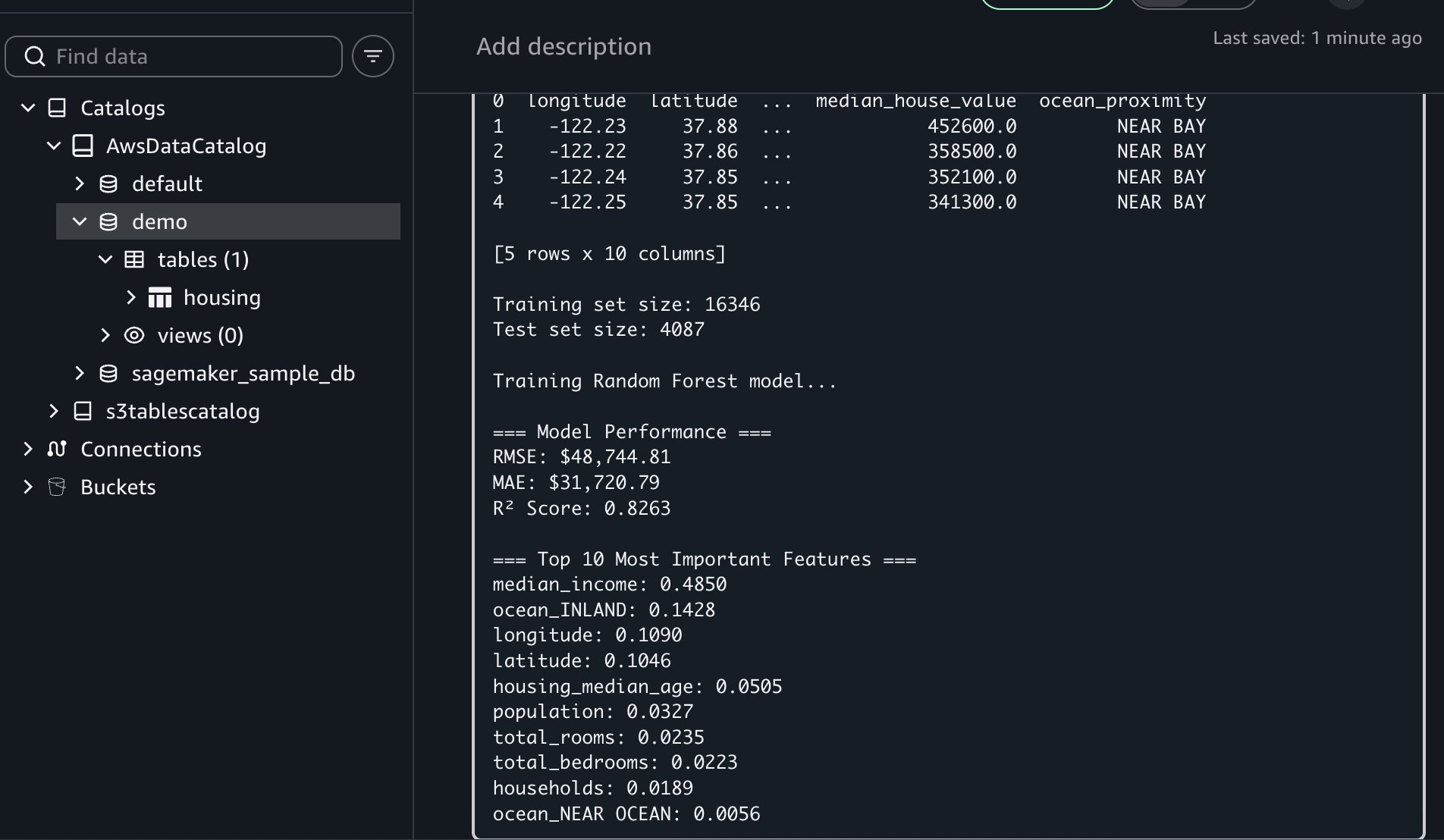

Next, you’ll use the data agent to generate code for training a model that predicts housing prices.

Prompt: “Generate code to train a model that predicts housing prices. Use table housing.”

The AI assistant generates end-to-end code for you that:

- Reads housing data from the AWS Glue catalog using Amazon Athena Spark and converts it to pandas

- Converts string columns to numeric, applies one-hot encoding, and removes missing values

- Trains a Random Forest model to predict median house values

- Evaluates model performance (RMSE, MAE, R-squared)

- Displays the top 10 most important features for predictions
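The preprocessing step in the list above can be sketched in plain Python. This is an illustration of the two techniques named there (dropping rows with missing values and one-hot encoding a categorical column), not the agent’s generated code, which works on a pandas dataframe. The function names and sample rows are hypothetical.

```python
def drop_missing(rows):
    """Keep only rows where every value is present (not None or empty)."""
    return [row for row in rows if all(v not in ("", None) for v in row.values())]


def one_hot_encode(rows, column):
    """Replace one categorical column with 0/1 indicator columns."""
    categories = sorted({row[column] for row in rows})
    encoded = []
    for row in rows:
        new_row = {k: v for k, v in row.items() if k != column}
        for category in categories:
            new_row[f"{column}_{category}"] = 1 if row[column] == category else 0
        encoded.append(new_row)
    return encoded


if __name__ == "__main__":
    # Made-up rows for illustration; NOT values from housing.csv.
    sample = [
        {"median_income": "8.0", "total_bedrooms": "100", "ocean_proximity": "NEAR BAY"},
        {"median_income": "4.0", "total_bedrooms": "", "ocean_proximity": "INLAND"},
        {"median_income": "6.0", "total_bedrooms": "300", "ocean_proximity": "INLAND"},
    ]
    cleaned = drop_missing(sample)
    # Convert the remaining string columns to numbers, then encode the category.
    numeric = [
        {k: (v if k == "ocean_proximity" else float(v)) for k, v in row.items()}
        for row in cleaned
    ]
    for row in one_hot_encode(numeric, "ocean_proximity"):
        print(row)
```

Each category becomes its own indicator column, which is the form tree-based models such as Random Forest can consume directly.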

This multi-step orchestration can save you hours of development time by handling the entire workflow from data access to model evaluation.
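For reference, the three evaluation metrics that the generated code reports can be written out in a few lines. The sketch below uses the standard definitions of RMSE, MAE, and R-squared. The function name regression_metrics and the sample numbers are illustrative and are not results from the housing model.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return RMSE, MAE, and R-squared for paired true and predicted values."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and the same length")
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)          # residual sum of squares
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    if ss_tot == 0:
        raise ValueError("R-squared is undefined when y_true is constant")
    return {
        "rmse": math.sqrt(ss_res / n),
        "mae": sum(abs(e) for e in errors) / n,
        "r2": 1 - ss_res / ss_tot,
    }


if __name__ == "__main__":
    # Made-up values for illustration only.
    metrics = regression_metrics([100, 200, 300], [110, 190, 300])
    for name, value in metrics.items():
        print(f"{name}: {value:.4f}")
```

RMSE and MAE are in the same units as the target (here, house value), while R-squared is unitless and measures the share of variance the model explains.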

If you encounter an error, you can resolve it using Fix with AI, available in the results traceback section.

This workflow showcased Notebooks’ unified capabilities: you uploaded files locally, created AWS Glue tables for multi-engine access, used Amazon Athena Spark for distributed profiling, and used AI-assisted ML development to predict housing prices. All of this happened within a single notebook environment without switching tools.

Key benefits and best practices

Notebooks in Amazon SageMaker Unified Studio deliver several advantages:

- Faster time to insights: With traditional environments, you might spend hours on configuration before analysis begins. Notebooks bypass this overhead, so you can start work immediately.

- Improved collaboration: You can share notebooks with consistent environments, supporting reproducibility and reducing “works on my machine” issues.

- Reduced complexity: You can access multiple data sources and compute engines from one interface rather than navigating separate tools for each data source or processing engine.

- AI-accelerated development: Generate task-specific code and receive intelligent suggestions, reducing time spent on repetitive coding tasks.

- Scalable performance: Handle datasets from megabytes to petabytes with appropriate compute resources. The system scales automatically as data volumes grow.

Finest practices

- Start with appropriate compute profiles by beginning with smaller instances and scaling up as your needs grow.

- Use AI assistance with natural language prompts for your repetitive tasks and complex operations.

- Combine engines strategically by using Amazon Athena Spark for your large-scale processing, Amazon Redshift for data warehousing, and other specialized engines for your specific workloads.

- Document your work using markdown cells to create living documentation alongside your code.

- Organize your notebook into multiple cells by breaking complex workflows into logical steps for better readability and debugging.

Cleaning up

To avoid incurring future charges, delete the resources you created in this walkthrough:

- In the Amazon SageMaker Unified Studio console, navigate to the Notebook page

- Delete the notebook

- Delete the demo database and housing table from the AWS Glue Data Catalog

- Delete the Amazon SageMaker Unified Studio domain created during this walkthrough

- If you created a new IAM role specifically for this walkthrough, delete it from the IAM console

Conclusion

In this post, we demonstrated how Notebooks in Amazon SageMaker Unified Studio help you work more efficiently and deliver insights more quickly. By combining familiar notebook interfaces with enterprise-scale compute, multi-engine support, and generative AI assistance, teams can streamline data and AI workflows.

The integration of Python and SQL, instant access to diverse data sources, and intelligent code generation capabilities make Notebooks a valuable tool for modern data teams. Teams can perform exploratory data analysis, build complex data pipelines, or train ML models with the flexibility and power they need within a single, intuitive environment.

Ready to get started? Create your first notebook in Amazon SageMaker Unified Studio and begin analyzing data within minutes.

Explore additional capabilities:

- Time series analysis workflows with seasonal decomposition and forecasting

- Natural language processing pipelines for text classification and sentiment analysis

- Integration with Amazon SageMaker Model Registry for ML model versioning

- Advanced Spark optimization techniques for petabyte-scale processing

Learn more:

About the authors