As people, we be taught to do new issues, like ballet or boxing (each actions I had the chance to do this summer season!), by means of trial and error. We enhance by attempting issues out, studying from our errors, and listening to steerage. I do know this suggestions loop effectively—a part of my intern mission for the summer season was educating a reward mannequin to determine higher code fixes to point out customers, as a part of Databricks’ effort to construct a top-tier Code Assistant.

Nonetheless, my mannequin wasn’t the one one studying by means of trial and error. Whereas educating my mannequin to tell apart good code fixes from dangerous ones, I realized tips on how to write sturdy code, steadiness latency and high quality considerations for an impactful product, clearly talk to a bigger staff, and most of all, have enjoyable alongside the best way.

Databricks Assistant Fast Repair

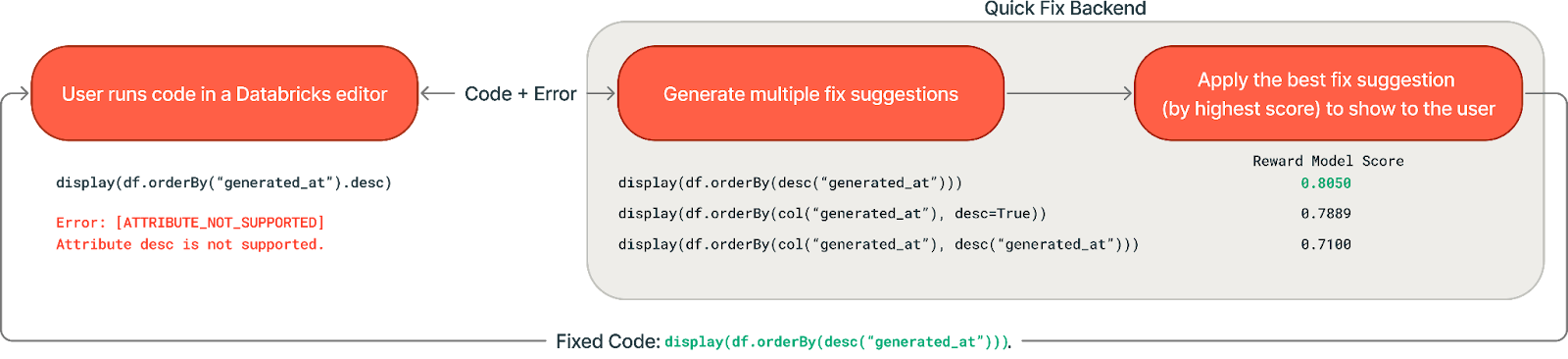

In the event you’ve ever written code and tried to run it, solely to get a pesky error, then you definitely would respect Fast Repair. Constructed into Databricks Notebooks and SQL Editors, Fast Repair is designed for high-confidence fixes that may be generated in 1-3 seconds—best for syntax errors, misspelled column names, and easy runtime errors. When Fast Repair is triggered, it takes code and an error message, then makes use of an LLM to generate a focused repair to resolve the error.

What drawback did my intern mission deal with?

Whereas Fast Repair already existed and was serving to Databricks customers repair their code, there have been loads of methods to make it even higher! For instance, after we generate a code repair and do some fundamental checks that it passes syntax conventions, how can we be sure that the repair we find yourself exhibiting a consumer is probably the most related and correct? Enter best-of-k sampling—generate a number of attainable repair recommendations, then use a reward mannequin to decide on one of the best one.

My mission construction

My mission concerned a mixture of backend implementation and analysis experimentation, which I discovered to be enjoyable and stuffed with studying.

Producing a number of recommendations

I first expanded the Fast Repair backend stream to generate various recommendations in parallel utilizing completely different prompts and contexts. I experimented with methods like including chain-of-thought reasoning, predicted outputs reasoning, system immediate variations, and selective database context to maximise the standard and variety of recommendations. We discovered that producing recommendations with extra reasoning elevated our high quality metrics but additionally induced some latency price.

Selecting one of the best repair suggestion to point out to the consumer

After a number of recommendations are generated, we’ve to decide on one of the best one to return. I began by implementing a easy majority voting baseline, which offered the consumer with probably the most incessantly steered repair—working on the precept {that a} extra generally generated answer would probably be the simplest. This baseline carried out effectively within the offline evaluations however didn’t carry out considerably higher than the present implementation in on-line consumer A/B testing, so it was not rolled out to manufacturing.

Moreover, I developed reward fashions to rank and choose probably the most promising recommendations. I skilled the fashions to foretell which fixes customers would settle for and efficiently execute. We used classical machine studying approaches (logistic regression and gradient boosted resolution tree utilizing the LightGBM bundle) and fine-tuned LLMs.

Outcomes and influence

Surprisingly, for the duty of predicting consumer acceptance and execution success of candidate fixes, the classical fashions carried out comparably to the fine-tuned LLMs in offline evaluations. The choice tree mannequin specifically may need carried out effectively as a result of code edits that “look proper” for the sorts of errors that Fast Repair handles are likely to actually be appropriate: the options that turned out to be significantly informative have been the similarity between the unique line of code and the generated repair, in addition to the error sort.

Given this efficiency, we determined to deploy the choice tree (LightGBM) mannequin in manufacturing. One other consider favor of the LightGBM mannequin was its considerably quicker inference time in comparison with the fine-tuned LLM. Pace is vital for Fast Repair since recommendations should seem earlier than the consumer manually edits their code, and any extra latency means fewer errors fastened. The small measurement of the LightGBM mannequin made it way more useful resource environment friendly and simpler to productionize—alongside some mannequin and infrastructure optimizations, we have been in a position to lower our common inference time by virtually 100x.

With the best-of-k method and reward mannequin carried out, we have been in a position to increase our inside acceptance charge, rising high quality for our customers. We have been additionally in a position to hold our latency inside acceptable bounds of our unique implementation.

If you wish to be taught extra in regards to the Databricks Assistant, try the touchdown web page or the Assistant Fast Repair Announcement.

My Internship Expertise

Databricks tradition in motion

This internship was an unbelievable expertise to contribute on to a high-impact product. I gained firsthand perception into how Databricks’ tradition encourages a robust bias for motion whereas sustaining a excessive bar for system and product high quality.

From the beginning, I seen how clever but humble everybody was. That impression solely grew stronger over time, as I noticed how genuinely supportive the staff was. Even very senior engineers often went out of their means to assist me succeed, whether or not by speaking by means of technical challenges, providing considerate suggestions, or sharing their previous approaches and learnings.

I’d particularly like to provide a shoutout to my mentor Will Tipton, my managers Phil Eichmann and Shanshan Zheng, my casual mentors Rishabh Singh and Matt Hayes, the Editor / Assistant staff, the Utilized AI staff, and the MosaicML people for his or her mentorship. I’ve realized invaluable expertise and life classes from them, which I’ll take with me for the remainder of my profession.

The opposite superior interns!

Final however not least, I had a good time attending to know the opposite interns! The recruiting staff organized many enjoyable occasions that helped us join—considered one of my favorites was the Intern Olympics (pictured beneath). Whether or not it was chatting over lunch, attempting out native exercise lessons, or celebrating birthdays with karaoke, I actually appreciated how supportive and close-knit the intern group was, each in and out of doors of labor.

Intern Olympics! Go Crew 2!

Shout-out to the opposite interns who tried boxing with me!

This summer season taught me that one of the best studying occurs while you’re fixing actual issues with actual constraints—particularly while you’re surrounded by good, pushed, and supportive individuals. Probably the most rewarding a part of my internship wasn’t simply finishing mannequin coaching or presenting attention-grabbing outcomes to the staff, however realizing that I’ve grown in my means to ask higher questions, cause by means of design trade-offs, and ship a concrete characteristic from begin to end on a platform as extensively used as Databricks.

If you wish to work on cutting-edge tasks with wonderful teammates, I’d suggest you to use to work at Databricks! Go to the Databricks Careers web page to be taught extra about job openings throughout the corporate.