As enterprises construct agent programs to ship prime quality AI apps, we proceed to ship optimizations to ship greatest general cost-efficiency for our clients. We’re excited to announce the provision of the Meta Llama 3.3 mannequin on the Databricks Knowledge Intelligence Platform, and vital updates to Mosaic AI’s Mannequin Serving pricing and effectivity. These updates collectively will scale back your inference prices by as much as 80%, making it considerably less expensive than earlier than for enterprises constructing AI brokers or doing batch LLM processing.

- 80% Price Financial savings: Obtain vital value financial savings with the brand new Llama 3.3 mannequin and decreased pricing.

- Quicker Inference Speeds: Get 40% sooner responses and decreased batch processing time, enabling higher buyer experiences and sooner insights.

- Entry to the brand new Meta Llama 3.3 mannequin: leverage the newest from Meta to attain larger high quality and efficiency.

Construct Enterprise AI Brokers with Mosaic AI and Llama 3.3

We’re proud to accomplice with Meta to deliver Llama 3.3 70B to Databricks. This mannequin rivals the bigger Llama 3.1 405B in instruction-following, math, multilingual, and coding duties whereas providing a cost-efficient answer for domain-specific chatbots, clever brokers, and large-scale doc processing.

Whereas Llama 3.3 units a brand new benchmark for open basis fashions, constructing production-ready AI brokers requires greater than only a highly effective mannequin. Databricks Mosaic AI is probably the most complete platform for deploying and managing Llama fashions, with a strong suite of instruments to construct safe, scalable, and dependable AI agent programs that may purpose over your enterprise knowledge.

- Entry Llama with a Unified API: Simply entry Llama and different main basis fashions, together with OpenAI and Anthropic, by means of a single interface. Experiment, evaluate, and swap fashions effortlessly for max flexibility.

- Safe and Monitor Visitors with AI Gateway: Monitor utilization and request/response utilizing Mosaic AI Gateway whereas imposing security insurance policies like PII detection and dangerous content material filtering for safe, compliant interactions.

- Construct Quicker Actual-Time Brokers: Create high-quality real-time brokers with 40% sooner inference speeds, function-calling capabilities and help for guide or automated agent analysis.

- Course of Batch Workflows at Scale: Simply apply LLMs to massive datasets straight in your ruled knowledge utilizing a easy SQL interface, with 40% sooner processing speeds and fault tolerance.

- Customise Fashions to Get Excessive High quality: Fantastic-tune Llama with proprietary knowledge to construct domain-specific, high-quality options.

- Scale with Confidence: Develop deployments with SLA-backed serving, safe configurations, and compliance-ready options designed to auto-scale together with your enterprise’s evolving calls for.

Making GenAI Extra Reasonably priced with New Pricing:

We’re rolling out proprietary effectivity enhancements throughout our inference stack, enabling us to cut back costs and make GenAI much more accessible to everybody. Right here’s a better take a look at the brand new pricing modifications:

Pay-per-Token Serving Worth Cuts:

- Llama 3.1 405B mannequin: 50% discount in enter token value, 33% discount in output token value.

- Llama 3.3 70B and Llama 3.1 70B mannequin: 50% discount for each enter and output tokens.

Provisioned Throughput Worth Cuts:

- Llama 3.1 405B: 44% value discount per token processed.

- Llama 3.3 70B and Llama 3.1 70B: 49% discount in {dollars} per complete tokens processed.

Decreasing Complete Price of Deployment by 80%

With the extra environment friendly and high-quality Llama 3.3 70B mannequin, mixed with the pricing reductions, now you can obtain as much as an 80% discount in your complete TCO.

Let’s take a look at a concrete instance. Suppose you’re constructing a customer support chatbot agent designed to deal with 120 requests per minute (RPM). This chatbot processes a median of three,500 enter tokens and generates 300 output tokens per interplay, creating contextually wealthy responses for customers.

Utilizing Llama 3.3 70B, the month-to-month value of operating this chatbot, focusing solely on LLM utilization, could be 88% decrease value in comparison with Llama 3.1 405B and 72% more cost effective in comparison with main proprietary fashions.

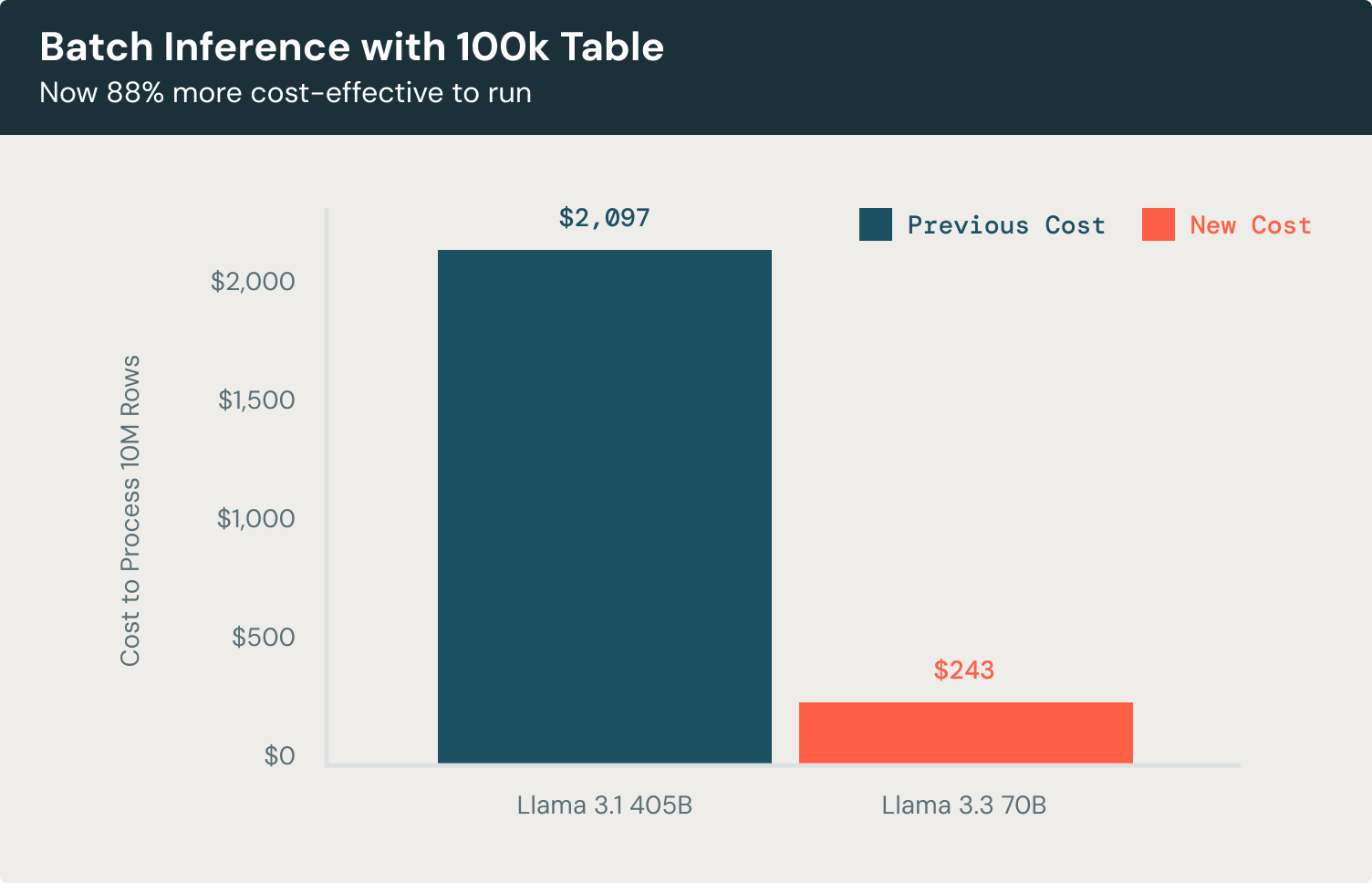

Now let’s check out a batch inference instance. For duties like doc classification or entity extraction throughout a 100K-record dataset, the Llama 3.3 70B mannequin gives outstanding effectivity in comparison with Llama 3.1 405B. Processing rows with 3500 enter tokens and producing 300 output tokens every, the mannequin achieves the identical high-quality outcomes whereas chopping prices by 88%, that’s 58% more cost effective than utilizing main proprietary fashions. This allows you to classify paperwork, extract key entities, and generate actionable insights at scale with out extreme operational bills.

Get Began Right now

Go to the AI Playground to rapidly attempt Llama 3.3 straight out of your workspace. For extra data, please confer with the next sources: