Organizations today handle huge volumes of data, much of it stored according to initial use cases and business needs. As requirements for this data evolve, whether for real-time reporting, advanced machine learning (ML), or cross-team data sharing, the original storage formats and structures often become a bottleneck. When this happens, data teams frequently find that datasets that worked well for their original purpose now require complex transformations; custom extract, transform, and load (ETL) pipelines; and extensive redesign to unblock new analytical workflows. This creates a significant barrier between valuable data and actionable insights.

Amazon Athena offers a solution through its serverless, SQL-based approach to data transformation. With the CREATE TABLE AS SELECT (CTAS) functionality in Athena, you can transform existing data and create new tables in the process. Because it uses standard SQL statements, it can reduce the need for custom ETL pipeline development.

This CTAS experience now supports Amazon S3 Tables, which provide built-in optimization, Apache Iceberg support, automatic table maintenance, and ACID transaction capabilities. This combination can help organizations modernize their data infrastructure, improve performance, and reduce operational overhead.

You can use this approach to transform data from commonly used tabular formats, including CSV, TSV, JSON, Avro, Parquet, and ORC. The resulting tables are immediately available for querying from Athena, Amazon Redshift, Amazon EMR, and supported third-party applications, including Apache Spark, Trino, DuckDB, and PyIceberg.

This post demonstrates how Athena CTAS simplifies the data transformation process through a practical example: migrating an existing Parquet dataset into S3 Tables.

Solution overview

Consider a global apparel ecommerce retailer processing thousands of daily customer reviews across marketplaces. Their dataset is currently stored in Parquet format in Amazon Simple Storage Service (Amazon S3), and it requires updates whenever customers modify ratings and review content. The business needs a solution that supports ACID transactions (the ability to atomically insert, update, and delete records while maintaining data consistency), because review data changes frequently as customers edit their feedback.

The data team also faces several operational challenges:

- Manual table maintenance tasks, such as compaction and metadata management

- No built-in support for time travel queries to analyze historical changes

- The need for custom processes to handle concurrent data modifications safely

These requirements call for an analytics-friendly solution that can handle transactional workloads and provide automated table maintenance, reducing the operational overhead that currently burdens the retailer's analysts and engineers.

S3 Tables and Athena together address these requirements. S3 Tables provide storage optimized for analytics workloads, with Iceberg support, automatic table maintenance, and continuous optimization. Athena is a serverless, interactive query service that you can use to analyze data with standard SQL without managing infrastructure. In combination, S3 Tables handle storage optimization and maintenance automatically, and Athena provides the SQL interface for data transformation and querying. This can reduce the operational overhead of manual table maintenance while providing efficient data management and good performance across supported data processing and query engines.

In the following sections, we show how to use the CTAS functionality in Athena to transform the Parquet-formatted review data into S3 Tables with a single SQL statement. We then demonstrate how to manage changing data using INSERT, UPDATE, and DELETE operations, showcasing the ACID transaction capabilities and metadata query features of S3 Tables.

Prerequisites

In this walkthrough, we work with synthetic customer review data that we've made publicly available at s3://aws-bigdata-blog/generated_synthetic_reviews/data/. To follow along, you need the following:

- AWS account setup:

  - An IAM user or role with the following permissions:

    - The AmazonAthenaFullAccess managed policy

    - S3 Tables permissions for creating and managing table buckets

    - S3 Tables permissions for creating and managing tables within buckets

    - Read access to the public dataset location: s3://aws-bigdata-blog/generated_synthetic_reviews/data/

As part of this walkthrough, you will create an S3 table bucket named athena-ctas-s3table-demo. Make sure this name is available in your chosen AWS Region.

Set up a database and tables in Athena

Let's start by creating a database and a source table to hold our Parquet data. This table serves as the data source for our CTAS operation.

Navigate to the Athena query editor to run the following queries:
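The original DDL for this step is not reproduced here. The following Python sketch assembles statements you could paste into the query editor. The database name reviews_db and the column list are hypothetical: this post only mentions product_category (the partition key) and review_date, so check the actual Parquet schema before you run anything. By default, Athena matches Parquet columns by name. A declared column that doesn't match a name in the files returns NULL, and a declared type that is incompatible with the file type causes the query to fail.

```python
# Hypothetical names: the post does not list the source schema, so adjust
# DATABASE and COLUMNS to match the actual Parquet files before running.
DATABASE = "reviews_db"
SOURCE_TABLE = "customer_reviews"
SOURCE_LOCATION = "s3://aws-bigdata-blog/generated_synthetic_reviews/data/"

# (column name, Athena/Hive type) pairs; assumed, not read from the dataset.
COLUMNS = [
    ("review_id", "string"),
    ("customer_id", "string"),
    ("product_id", "string"),
    ("star_rating", "int"),
    ("review_body", "string"),
    ("review_date", "date"),
]


def create_database_sql(database: str) -> str:
    """DDL for the Glue database that holds the source table."""
    return f"CREATE DATABASE IF NOT EXISTS {database}"


def create_source_table_sql(database: str, table: str, location: str,
                            columns: list[tuple[str, str]] = COLUMNS) -> str:
    """DDL for an external Parquet table partitioned by product_category."""
    column_list = ",\n  ".join(f"`{name}` {dtype}" for name, dtype in columns)
    return (
        f"CREATE EXTERNAL TABLE IF NOT EXISTS `{database}`.`{table}` (\n"
        f"  {column_list}\n"
        ")\n"
        # The partition column is declared here, not in the column list.
        "PARTITIONED BY (`product_category` string)\n"
        "STORED AS PARQUET\n"
        f"LOCATION '{location}'"
    )


print(create_database_sql(DATABASE) + ";")
print(create_source_table_sql(DATABASE, SOURCE_TABLE, SOURCE_LOCATION) + ";")
```

Athena runs one statement per query execution, so submit the two printed statements as separate queries.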

Because the data is partitioned by product category, you must add the partition information to the table metadata using MSCK REPAIR TABLE:

The preview query should return sample review data, confirming that the table is ready for transformation:
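If you'd rather script these setup steps than use the query editor, the following sketch submits each statement through an Athena client and polls until the query finishes. It assumes a boto3-style client that exposes start_query_execution and get_query_execution. The client is passed in as a parameter, so you can try the helper with a stand-in object. The statements are MSCK REPAIR TABLE followed by a 10-row preview. The database name used when calling the helper is up to you (reviews_db in the usage note is hypothetical).

```python
import time
from typing import Any

# States after which Athena no longer changes a query's status.
TERMINAL_STATES = {"SUCCEEDED", "FAILED", "CANCELLED"}

# Setup statements for this step; the database context is supplied per call.
SETUP_STATEMENTS = [
    "MSCK REPAIR TABLE customer_reviews",
    "SELECT * FROM customer_reviews LIMIT 10",
]


def run_query(client: Any, sql: str, database: str, output_location: str,
              poll_seconds: float = 1.0) -> str:
    """Submit one statement, wait for a terminal state, and return its query ID.

    `client` is assumed to behave like boto3.client("athena").
    Raises RuntimeError if the query fails or is cancelled.
    """
    response = client.start_query_execution(
        QueryString=sql,
        QueryExecutionContext={"Database": database},
        ResultConfiguration={"OutputLocation": output_location},
    )
    query_id = response["QueryExecutionId"]
    while True:
        status = client.get_query_execution(QueryExecutionId=query_id)["QueryExecution"]["Status"]
        if status["State"] in TERMINAL_STATES:
            break
        time.sleep(poll_seconds)  # QUEUED or RUNNING: wait, then poll again
    if status["State"] != "SUCCEEDED":
        reason = status.get("StateChangeReason", "no reason reported")
        raise RuntimeError(f"Query {query_id} ended in {status['State']}: {reason}")
    return query_id
```

With credentials configured, you could call `run_query(boto3.client("athena"), SETUP_STATEMENTS[0], "reviews_db", "s3://amzn-s3-demo-bucket/athena-results/")`. Here the output location is a placeholder for a bucket you own. A workgroup that enforces its own result location can override this setting.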

Create a table bucket

Table buckets are designed to store tabular data and metadata as objects for analytics workloads. Follow these steps to create a table bucket:

- Sign in to the console in your preferred Region and open the Amazon S3 console.

- In the navigation pane, choose Table buckets.

- Choose Create table bucket.

- For Table bucket name, enter athena-ctas-s3table-demo.

- For Integration with AWS analytics services, select Enable integration if it isn't already enabled.

- Leave the encryption option at its default.

- Choose Create table bucket.

You can now see athena-ctas-s3table-demo listed under Table buckets.

Create a namespace

Namespaces provide logical organization for tables within your S3 table bucket, which helps you manage tables at scale. In this step, we create reviews_namespace to organize our customer review tables. Follow these steps to create the namespace:

- In the navigation pane under Table buckets, choose your newly created bucket, athena-ctas-s3table-demo.

- On the bucket details page, choose Create table with Athena.

- For Namespace configuration, choose Create a namespace.

- For Namespace name, enter reviews_namespace.

- Choose Create namespace.

- Choose Create table with Athena to navigate to the Athena query editor.

You should now see your S3 Tables configuration automatically selected under Data, as shown in the following screenshot.

When you enable Integration with AWS analytics services while creating an S3 table bucket, AWS Glue creates a new catalog named s3tablescatalog in your account's default Data Catalog for your Region. The integration maps the S3 table bucket resources in your account and Region into this catalog.

This configuration makes sure that subsequent queries target your S3 Tables namespace. You're now ready to create tables using the CTAS functionality.
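For repeatable environments, you could also create the bucket and namespace with the S3 Tables API. The following sketch assumes a boto3-style s3tables client that exposes create_table_bucket(name=...) and create_namespace(tableBucketARN=..., namespace=[...]). It does not enable the AWS analytics services integration, so you would still need to turn that on separately. The athena_table_ref helper shows how the resulting table could be addressed in Athena SQL, assuming the catalog is exposed to Athena as s3tablescatalog/<bucket name>.

```python
from typing import Any

TABLE_BUCKET_NAME = "athena-ctas-s3table-demo"
NAMESPACE = "reviews_namespace"


def create_bucket_and_namespace(s3tables: Any, bucket_name: str, namespace: str) -> str:
    """Create a table bucket plus one namespace and return the bucket ARN.

    `s3tables` is assumed to behave like boto3.client("s3tables"). The AWS
    analytics services integration is NOT enabled by this helper.
    """
    bucket_arn = s3tables.create_table_bucket(name=bucket_name)["arn"]
    # The API takes the namespace as a list containing a single name.
    s3tables.create_namespace(tableBucketARN=bucket_arn, namespace=[namespace])
    return bucket_arn


def athena_table_ref(bucket_name: str, namespace: str, table: str) -> str:
    """Quote a three-part Athena name whose catalog is s3tablescatalog/<bucket>."""
    return f'"s3tablescatalog/{bucket_name}"."{namespace}"."{table}"'


print(athena_table_ref(TABLE_BUCKET_NAME, NAMESPACE, "customer_reviews_s3table"))
```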

Create a new S3 table from the customer_reviews table

A table is a structured dataset that consists of the underlying table data and related metadata, stored in the Iceberg table format. In the following steps, we use an Athena CTAS statement to transform the customer_reviews table (which we created earlier over the Parquet dataset) into an S3 table. We partition by date using Iceberg's day() partition transform.

Run the following CTAS query:

This query creates an S3 table with the following optimizations:

- Parquet format – Efficient columnar storage for analytics

- Day-level partitioning – Uses Iceberg's day() transform on review_date for fast queries when filtering on dates

- Filtered data – Includes only reviews from 2016 onwards to demonstrate selective transformation
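The CTAS statement itself isn't shown above. Here is a hedged reconstruction based on the three optimizations just listed. It assumes a source named "awsdatacatalog"."reviews_db"."customer_reviews", where the database name is hypothetical. It uses Athena's Iceberg table properties format and partitioning, so confirm the exact property names against the Athena CTAS documentation for S3 Tables.

```python
# Hypothetical fully qualified names; the source database name is assumed.
TARGET = '"s3tablescatalog/athena-ctas-s3table-demo"."reviews_namespace"."customer_reviews_s3table"'
SOURCE = '"awsdatacatalog"."reviews_db"."customer_reviews"'


def ctas_sql(target: str, source: str, min_year: int) -> str:
    """Build a CTAS statement that writes an Iceberg table into S3 Tables.

    format = 'PARQUET' selects the data file format, partitioning applies
    Iceberg's day() transform to review_date, and the WHERE clause keeps
    reviews from min_year onwards.
    """
    return (
        f"CREATE TABLE {target}\n"
        "WITH (\n"
        "  format = 'PARQUET',\n"
        "  partitioning = ARRAY['day(review_date)']\n"
        ")\n"
        "AS SELECT *\n"
        f"FROM {source}\n"
        f"WHERE year(review_date) >= {int(min_year)}"
    )


print(ctas_sql(TARGET, SOURCE, 2016) + ";")
```

The filter is on review_date rather than on product_category, the source table's only partition column. As a result, Athena scans every source partition, which is usually acceptable for a one-time migration.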

You have successfully transformed your Parquet dataset into S3 Tables using a single CTAS statement.

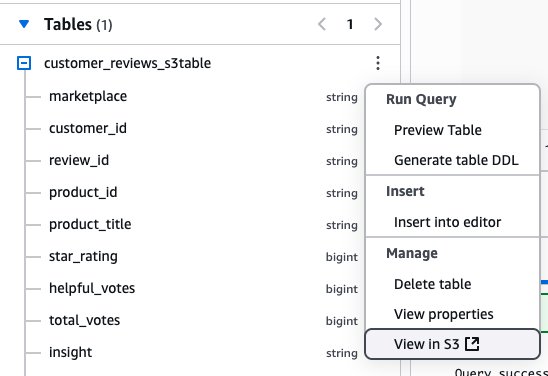

After you create the table, customer_reviews_s3table appears under Tables in the Athena console. You can also view the table on the Amazon S3 console: choose the options menu (three vertical dots) next to the table name, and then choose View in S3.

Run a preview query to confirm the data transformation:

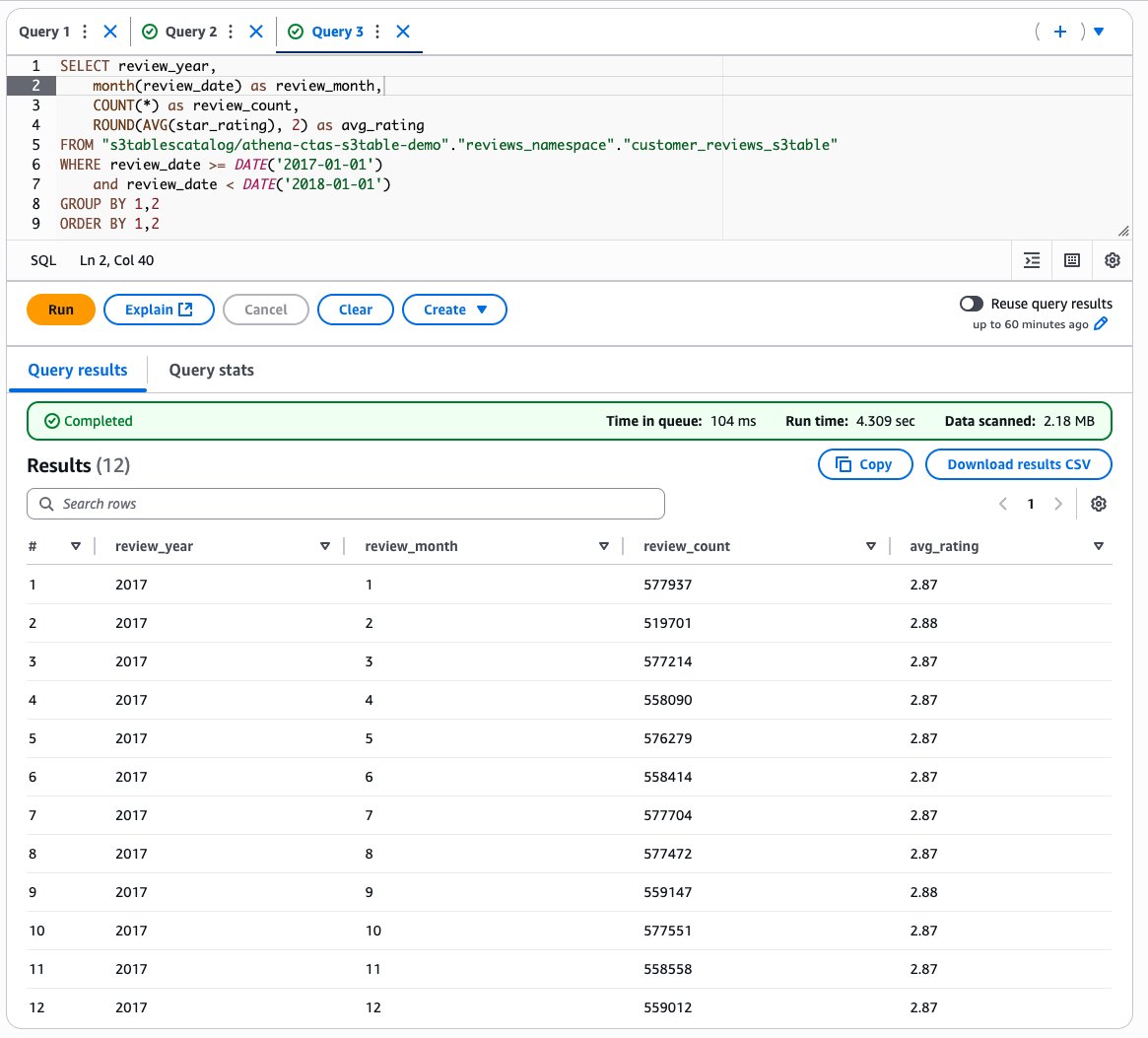

Next, let's analyze monthly review trends:
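One plausible form of the monthly trend query is sketched below as a Python helper that returns Athena SQL. It groups rows by date_trunc('month', review_date). It also assumes a hypothetical star_rating column for the average rating, which may not exist under that name in the dataset.

```python
# Hypothetical target name; star_rating is an assumed column.
S3_TABLE = '"s3tablescatalog/athena-ctas-s3table-demo"."reviews_namespace"."customer_reviews_s3table"'


def monthly_trends_sql(table: str) -> str:
    """Review count and average rating per calendar month, oldest first."""
    return (
        "SELECT date_trunc('month', review_date) AS review_month,\n"
        "       count(*) AS review_count,\n"
        "       round(avg(star_rating), 2) AS avg_rating\n"
        f"FROM {table}\n"
        "GROUP BY 1\n"  # positional reference to review_month
        "ORDER BY 1"
    )


print(monthly_trends_sql(S3_TABLE) + ";")
```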

The following screenshot shows our output.

ACID operations on S3 Tables

Athena supports standard SQL DML operations (INSERT, UPDATE, DELETE, and MERGE INTO) on S3 Tables with full ACID transaction guarantees. To demonstrate these capabilities, we add historical data and perform data quality checks.

Insert additional data into the table using INSERT

Use the following query to insert review data from 2014 and 2015, which wasn't included in the initial CTAS operation:
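The following is a sketch of the backfill statement. It assumes a source named "awsdatacatalog"."reviews_db"."customer_reviews", where the database name is hypothetical. It also assumes that the target was created with SELECT * from that same source, so the column order lines up for INSERT INTO ... SELECT *. The BETWEEN bounds are inclusive, so both 2014 and 2015 are appended.

```python
TARGET = '"s3tablescatalog/athena-ctas-s3table-demo"."reviews_namespace"."customer_reviews_s3table"'
SOURCE = '"awsdatacatalog"."reviews_db"."customer_reviews"'  # database name is hypothetical


def backfill_insert_sql(target: str, source: str, first_year: int, last_year: int) -> str:
    """Append source rows whose review_date year falls in [first_year, last_year]."""
    if first_year > last_year:
        raise ValueError("first_year must not be later than last_year")
    # SELECT * relies on the target having been created from the same source,
    # so the column order matches; list the columns explicitly otherwise.
    return (
        f"INSERT INTO {target}\n"
        "SELECT *\n"
        f"FROM {source}\n"
        f"WHERE year(review_date) BETWEEN {int(first_year)} AND {int(last_year)}"
    )


print(backfill_insert_sql(TARGET, SOURCE, 2014, 2015) + ";")
```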

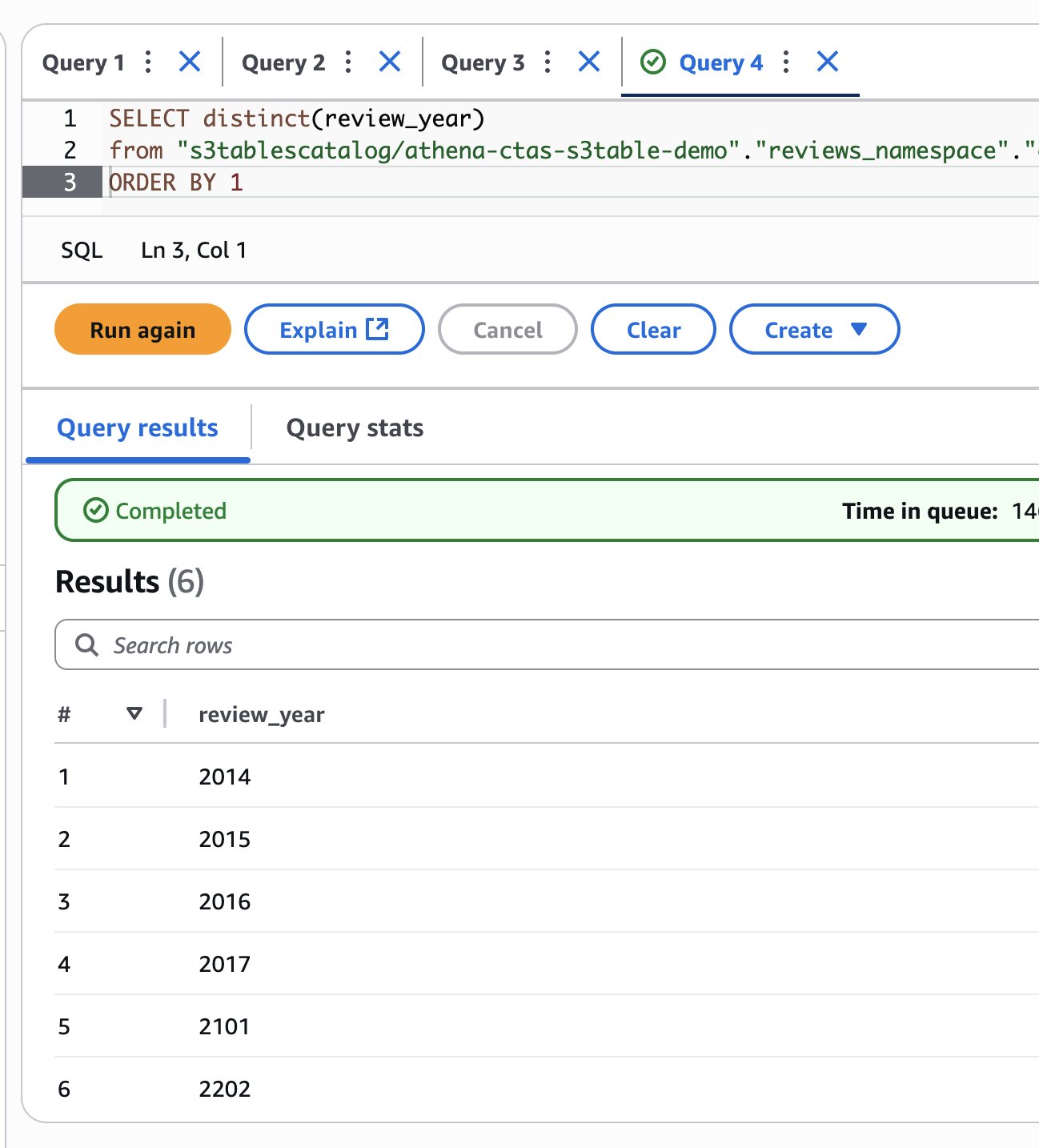

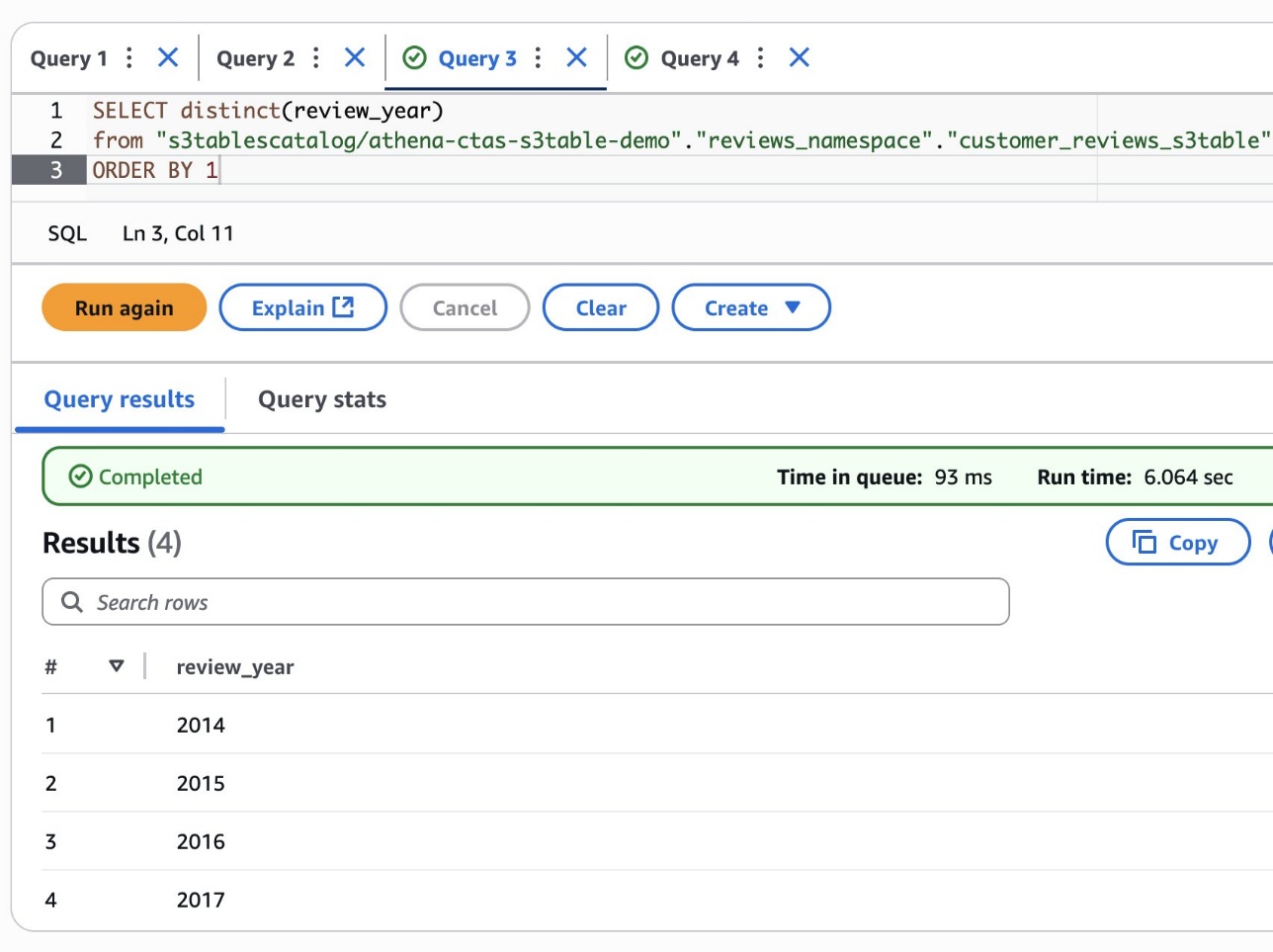

Check which years are now present in the table:

The following screenshot shows our output.

The results show that you have successfully added the 2014 and 2015 data. However, you may also notice some invalid years, such as 2101 and 2202, which appear to be data quality issues in the source dataset.

Clean invalid data using DELETE

Remove the records with incorrect years using the DELETE capability of S3 Tables:

Verify that the invalid records have been removed.
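You can try the check, delete, and verify sequence locally before touching the real table. The sketch below swaps in Python's built-in sqlite3 module as a stand-in. SQLite has no Iceberg or S3 Tables support, so this only illustrates the SQL logic on five toy rows. In Athena, the year expression would be year(review_date), and the statement would look like `DELETE FROM <table> WHERE year(review_date) > <cutoff>`. The cutoff of 2025 used here is a hypothetical value; you would choose the real cutoff after inspecting the year counts.

```python
import sqlite3

# Local stand-in only: SQLite has no Iceberg or S3 Tables support.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE reviews (review_id TEXT, review_date TEXT)")
conn.executemany(
    "INSERT INTO reviews VALUES (?, ?)",
    [
        ("r1", "2014-03-02"),
        ("r2", "2015-07-19"),
        ("r3", "2016-01-05"),
        ("r4", "2101-05-01"),  # invalid future year
        ("r5", "2202-11-30"),  # invalid future year
    ],
)
conn.commit()

# SQLite's equivalent of Athena's year(review_date).
YEAR_EXPR = "CAST(strftime('%Y', review_date) AS INTEGER)"


def years_present(connection: sqlite3.Connection) -> list[int]:
    """The 'check' query: sorted distinct review years."""
    rows = connection.execute(f"SELECT DISTINCT {YEAR_EXPR} FROM reviews ORDER BY 1")
    return [year for (year,) in rows]


def delete_after_year(connection: sqlite3.Connection, max_valid_year: int) -> int:
    """Delete rows dated after max_valid_year in one transaction; return rows removed."""
    with connection:  # commits on success, rolls back if the statement raises
        cursor = connection.execute(
            f"DELETE FROM reviews WHERE {YEAR_EXPR} > ?", (max_valid_year,)
        )
    return cursor.rowcount


print("before:", years_present(conn))
removed = delete_after_year(conn, 2025)  # hypothetical cutoff
print("removed:", removed, "after:", years_present(conn))
```

Running the script first prints the years before the delete, including 2101 and 2202. It then reports two removed rows and prints only 2014 through 2016.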

Update product categories using UPDATE

To demonstrate the UPDATE operation, consider a business scenario. Imagine the company decides to rebrand the Movies_TV product category as Entertainment_Media to better reflect customer preferences.

First, examine the current product categories and their record counts:

You should see a row for the Movies_TV product_category with approximately 5,690,101 reviews. Use the following query to update all Movies_TV records to the new category name:

Verify that the category name changed and that the record count remains the same:

The results now show Entertainment_Media with the same record count (5,690,101). This confirms that the UPDATE operation changed the category name while preserving data integrity.
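The same local sqlite3 stand-in can illustrate why comparing counts before and after the rename is a useful integrity check. The toy rows are invented for illustration. The helper raises an error if the renamed category does not carry exactly the rows of the old one, or if the total row count changes. In Athena, the statement would be `UPDATE <table> SET product_category = 'Entertainment_Media' WHERE product_category = 'Movies_TV'`.

```python
import sqlite3

# Local stand-in only: toy rows, not the real dataset.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE reviews (review_id TEXT, product_category TEXT)")
conn.executemany(
    "INSERT INTO reviews VALUES (?, ?)",
    [("r1", "Movies_TV"), ("r2", "Movies_TV"), ("r3", "Books"), ("r4", "Movies_TV")],
)
conn.commit()


def category_counts(connection: sqlite3.Connection) -> dict[str, int]:
    """The 'examine' query: row count per product_category."""
    rows = connection.execute(
        "SELECT product_category, count(*) FROM reviews GROUP BY product_category"
    )
    return dict(rows.fetchall())


def rename_category(connection: sqlite3.Connection, old: str, new: str) -> dict[str, int]:
    """Rename a category in one transaction, then check that no rows were lost."""
    before = category_counts(connection)
    with connection:  # the UPDATE is committed atomically or not at all
        connection.execute(
            "UPDATE reviews SET product_category = ? WHERE product_category = ?",
            (new, old),
        )
    after = category_counts(connection)
    expected = before.get(old, 0) + before.get(new, 0)
    if old in after or after.get(new, 0) != expected or sum(after.values()) != sum(before.values()):
        raise RuntimeError("row counts changed unexpectedly during the rename")
    return after


print(rename_category(conn, "Movies_TV", "Entertainment_Media"))
```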

These examples demonstrate transactional support in S3 Tables through Athena. Combined with automated table maintenance, this support helps you build scalable, transactional data lakes more efficiently and with minimal operational overhead.

Additional transformation scenarios using CTAS

The Athena CTAS functionality supports multiple transformation paths to S3 Tables. The following scenarios demonstrate how organizations can use this capability for various data modernization needs:

- Convert from various data formats – Athena can query data in a wide range of formats, as well as federated data sources, and you can use CTAS to convert any of these queryable sources to an S3 table. For example, to create an S3 table from a federated data source, use the following query:
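A hedged sketch of such a federated CTAS follows. The federated catalog "my_federated_catalog", its sales.orders table, the column names, and the target table name orders_s3table are all hypothetical; substitute the data source you registered with Athena. Listing the columns explicitly, rather than using SELECT *, means you choose the schema of the resulting S3 table instead of inheriting whatever the connector exposes.

```python
# Hypothetical federated source and target; replace with your own names.
FEDERATED_SOURCE = '"my_federated_catalog"."sales"."orders"'
TARGET = '"s3tablescatalog/athena-ctas-s3table-demo"."reviews_namespace"."orders_s3table"'


def federated_ctas_sql(target: str, source: str, columns: list[str]) -> str:
    """CTAS from a federated source into S3 Tables, selecting explicit columns."""
    if not columns:
        raise ValueError("select at least one column")
    column_list = ",\n       ".join(columns)
    return (
        f"CREATE TABLE {target}\n"
        "WITH (format = 'PARQUET')\n"
        f"AS SELECT {column_list}\n"
        f"FROM {source}"
    )


print(federated_ctas_sql(TARGET, FEDERATED_SOURCE, ["order_id", "customer_id", "order_date"]) + ";")
```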

Pathik Shah is a Sr. Analytics Architect on Amazon Athena. He joined AWS in 2015 and has focused on the big data analytics space since then, helping customers build scalable and robust solutions using AWS Analytics services.

Aritra Gupta is a Senior Technical Product Manager on the Amazon S3 team at Amazon Web Services. He helps customers build and scale data lakes. Based in Seattle, he likes to play chess and badminton in his spare time.
