Knowledge technique can use geospatial knowledge to supply organizations with insights for decision-making and operational optimization. By incorporating geospatial knowledge (resembling GPS coordinates, factors, polygons and geographic boundaries), companies can uncover patterns, developments, and relationships which may in any other case stay hidden throughout a number of industries, from aviation and transportation to environmental research and concrete planning. Processing and analyzing this geospatial knowledge at scale may be difficult, particularly when coping with billions of every day observations.

On this put up, we discover find out how to use Apache Sedona with AWS Glue to course of and analyze large geospatial datasets.

Introduction to geospatial knowledge

Geospatial knowledge is info that has a geographic element. It describes objects, occasions, or phenomena together with their location on the Earth’s floor. This knowledge consists of coordinates (latitude and longitude), shapes (factors, strains, polygons), and related attributes (such because the title of a metropolis or the kind of street).

Key forms of geospatial geometries (and examples of every in parentheses) embrace:

- Level – Represents a single coordinate (a climate station).

- MultiPoint – A set of factors (bus stops in a metropolis).

- LineString – A sequence of factors related in a line (a river or a flight path).

- MultiLineString – A number of strains (a number of flight routes).

- Polygon – A closed space (the boundary of a metropolis).

- MultiPolygon – A number of polygons (nationwide parks in a rustic).

Geospatial datasets come in numerous codecs, every designed to retailer and characterize various kinds of geographic info. Frequent codecs for geospatial knowledge are vector codecs (Shapefile, GeoJSON), raster codecs (GeoTIFF, ESRI Grid), GPS codecs (GPX, NMEA), net codecs (WMS, GeoRSS) amongst others.

Core ideas of Apache Sedona

Apache Sedona is an open-source computing framework for processing large-scale geospatial knowledge. Constructed on high of Apache Spark, Sedona extends Spark’s capabilities to deal with spatial operations effectively. At its core, Sedona introduces a number of key ideas that allow distributed spatial processing. These embrace Spatial Resilient Distributed Datasets (SRDDs), which permit for the distribution of spatial knowledge throughout a cluster, and Spatial SQL, which offers a well-recognized SQL-like interface for spatial queries. A few of the core capabilities of Apache Sedona are:

- Environment friendly spatial knowledge varieties like factors, strains and polygons.

- Spatial operations and capabilities resembling

ST_Contains(test if level is within a polygon),ST_Intersects(test if level is within a polygon),ST_H3CellIDs(geospatial indexing system developed by Uber, return the H3 cell ID(s) that comprise the given level on the specified decision). - Spatial joins to mix completely different spatial datasets.

- Integration with Spark SQL (geospatial capabilities to run spatial SQL queries).

- Spatial indexing strategies, resembling quad-trees and R-trees, to optimize question efficiency.

For extra details about the capabilities obtainable in Apache Sedona, go to the official Sedona Capabilities documentation.

Use case

This use case consists of a world air site visitors visualization and evaluation platform that processes and shows real-time or historic plane monitoring knowledge on an interactive world map. Utilizing distinctive plane identifiers from the Worldwide Civic Aviation Group (ICAO), the system ingests trajectory data containing info resembling geographic place (latitude and longitude), altitude, pace, and flight route, then transforms this uncooked knowledge into two complementary visible layers. The Flight Tracks Layer plots the routes traveled by every plane individually, permitting for the evaluation of particular trajectories and navigation patterns. The Flight Density Layer makes use of hexagonal spatial indexing (H3) to combination and establish areas of upper air site visitors focus worldwide, revealing busy air corridors, aviation hubs, and high-density flight zones.

The dataset used for this use case is historic flight tracker knowledge from ADSB.lol. ADSB.lol offers unfiltered flight tracker with a give attention to open knowledge. Knowledge can also be freely obtainable by way of the API. The info incorporates a file per plane, a JSON gzip file containing the information for that plane for the day.

It is a JSON hint file format pattern:

For this use case, it is a simplified schema of the dataset after processing:

icao -Distinctive plane identifiertimestamp -Epoch timestamp of the statement (transformed to readable format)hint.lat / hint.lon -Latitude and longitude of the planehint.altitude -Plane altitudehint.ground_speed -Floor pacegeometry -Geospatial geometry of the statement level (Level)

Answer overview

This answer allows plane monitoring and evaluation. The info may be visualized on maps and used for aviation administration and security purposes. The method begins with knowledge acquisition, extracting the compressed JSON recordsdata from TAR archives, then transforms this uncooked knowledge into geospatial objects, aggregating them into H3 cells for environment friendly evaluation. The processed knowledge schema consists of ICAO plane identifiers, timestamps, latitude/longitude coordinates, and derived fields resembling H3 cell identifiers and level counts per cell. This construction permits detailed monitoring of particular person flights and combination evaluation of site visitors patterns. For visualization, you’ll be able to generate density maps utilizing the H3 grid system and create visible representations of particular person flight tracks. The structure knowledge movement is as follows:

- Knowledge ingestion – Plane statement knowledge saved as JSON compressed recordsdata in Amazon Easy Storage Service (Amazon S3).

- Knowledge processing – AWS Glue jobs utilizing Apache Sedona for geospatial processing.

- Knowledge visualization – Spark SQL with Sedona’s spatial capabilities to extract insights and export knowledge to visualise the data in a map on Kepler.gl.

The next determine illustrates this answer.

Conditions

You will want the next for this answer:

- An AWS Account and a consumer with AWS Console entry.

- Entry to a Linux terminal and the AWS Command Line Interface (AWS CLI).

- An IAM position for AWS Glue with checklist, learn, and write permissions for Amazon S3 buckets.

- An Amazon S3 Bucket for flight recordsdata. For this instance, title the bucket

blog-sedona-nessie-, utilizing your account quantity and area.- - An Amazon S3 bucket for artifacts and Sedona libraries. For this instance, title the bucket

blog-sedona-artifacts-, utilizing your account quantity and area.- - Obtain a day of historic knowledge from ADSB.lol. In our examples, we used v2025.05.29-planes-readsb-prod-0tmp.tar.aa and v2025.05.29-planes-readsb-prod-0tmp.tar.ab.

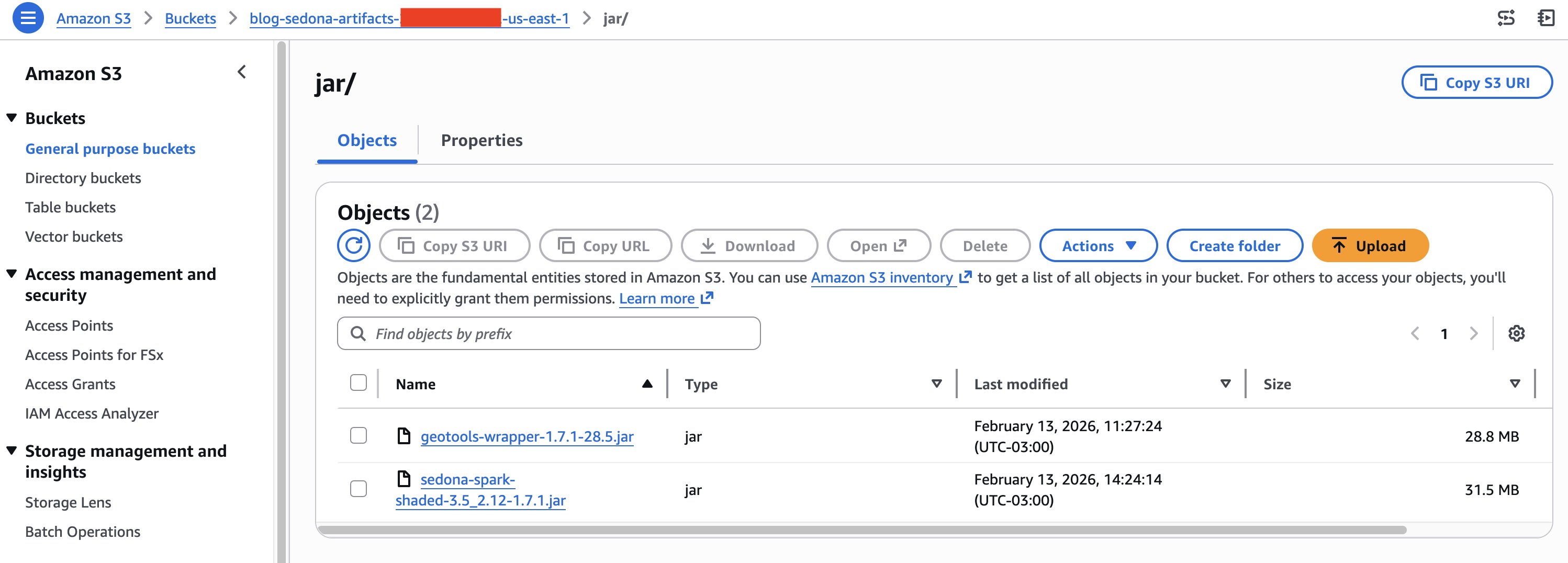

- Obtain the Apache Sedona libraries. The instance was created utilizing sedona-spark-shaded-3.5_2.12-1.7.1.jar and geotools-wrapper-1.7.1-28.5.jar.

- Obtain the AWS Glue script from AWS Pattern to course of the geospatial knowledge.

- Assessment the AWS Glue safety finest practices, particularly IAM least-privilege, encryption for delicate knowledge at relaxation and in transit, and configuring VPC Endpoints to stop knowledge from routing via the general public web.

Answer walkthrough

Any more, executing the following steps will incur prices on AWS. This step-by-step walkthrough demonstrates an strategy to processing and analyzing large-scale geospatial flight knowledge utilizing Apache Sedona and Uber’s H3 spatial indexing system, utilizing AWS Glue for distributed processing and Apache Sedona for environment friendly geospatial computations. It explains find out how to ingest uncooked flight knowledge, remodel it utilizing Sedona’s geospatial capabilities, and index it with H3 for optimized spatial queries. Lastly, it additionally demonstrates find out how to visualize the information utilizing Kepler.gl. For knowledge processing, it’s doable to make use of each Glue scripts and Glue notebooks. On this put up, we are going to focus solely on Glue scripts.

Add the Apache Sedona libraries to Amazon S3

- Open your OS terminal command line.

- Create a folder to obtain the Sedona libraries and title it jar.

# Create a listing for the Sedona libraries (JARs recordsdata) mkdir jar # Go to the folder JARs folder cd jar - Obtain the Apache Sedona libraries.

# Obtain required Sedona libraries (JARs recordsdata) wget https://repo1.maven.org/maven2/org/apache/sedona/sedona-spark-shaded-3.5_2.12/1.7.1/sedona-spark-shaded-3.5_2.12-1.7.1.jar wget https://repo1.maven.org/maven2/org/datasyslab/geotools-wrapper/1.7.1-28.5/geotools-wrapper-1.7.1-28.5.jar - Add the Sedona libraries (JARs recordsdata) to Amazon S3. On this instance, we use the S3 path

s3://aws-blog-post-sedona-artifacts/jar/.# Add the JARs recordsdata to Amazon S3 bucket aws s3 cp . s3://blog-sedona-artifacts-- /jar/ --recursive - Your Amazon S3 folder ought to now look just like the next picture:

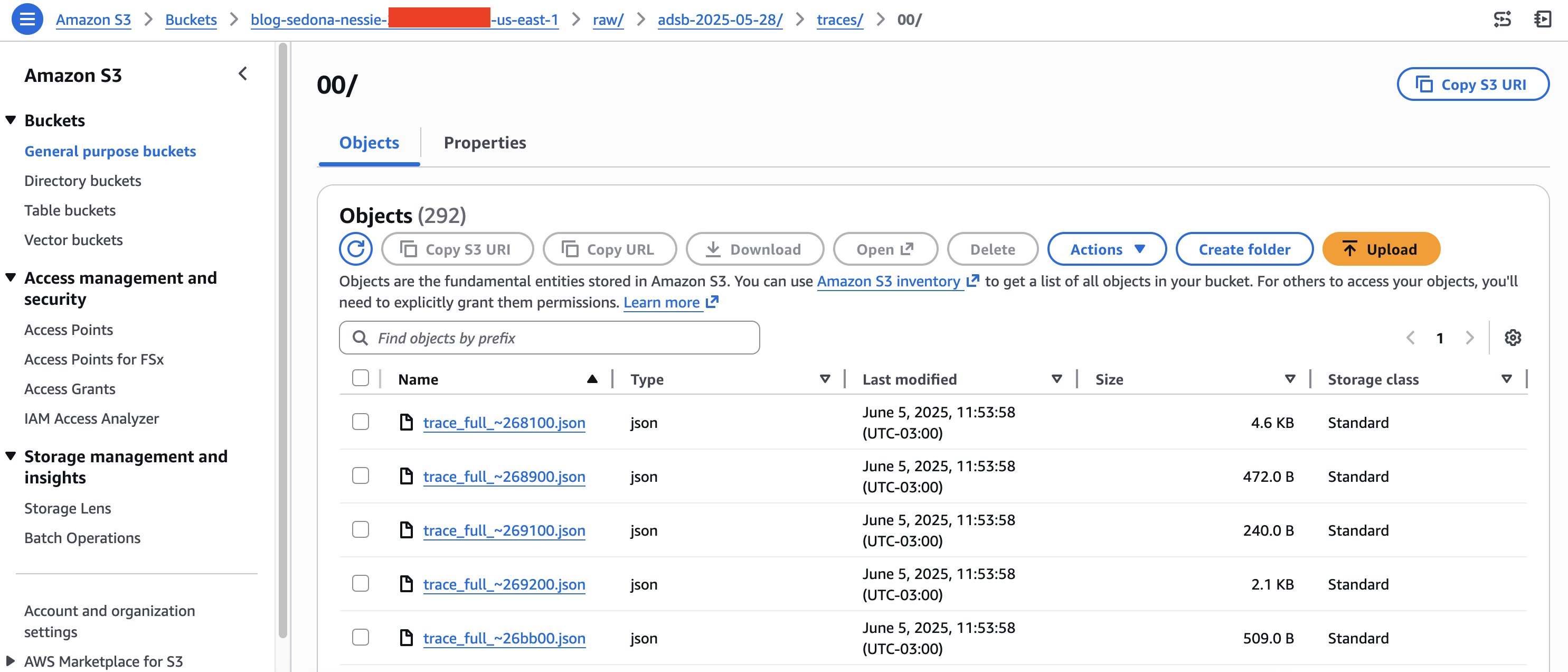

Obtain and add the geospatial knowledge to Amazon S3

- Open your OS terminal command line.

- Create a folder to obtain the flight recordsdata and title it adsb_dataset.

# Create a listing for obtain the geospatial flight recordsdata mkdir adsb_dataset # Go to the folder for geospatial flight recordsdata cd adsb_dataset - Obtain the flight recordsdata knowledge from adsblol GitHub repository.

# Obtain the geospatial flight recordsdata within the folder created wget https://github.com/adsblol/globe_history_2025/releases/obtain/v2025.05.29-planes-readsb-prod-0tmp/v2025.05.29-planes-readsb-prod-0tmp.tar.aa wget https://github.com/adsblol/globe_history_2025/releases/obtain/v2025.05.29-planes-readsb-prod-0tmp/v2025.05.29-planes-readsb-prod-0tmp.tar.ab - Extract the flight recordsdata.

# Mix the 2 the tar recordsdata collectively cat v2025.05.29* >> mixed.tar # Extract the json flight recordsdata from the tar file tar xf mixed.tar - Copy the flight recordsdata to Amazon S3. On this case, we’re utilizing the S3 folder:

s3://blog-sedona-nessie-.- /uncooked/adsb-2025-05-28/traces/ # Copy the json flight recordsdata to Amazon S3 aws s3 cp ./traces/ s3://blog-sedona-nessie-- /uncooked/adsb-2025-05-28/traces/ --recursive - Your Amazon S3 folder ought to now look just like the next picture.

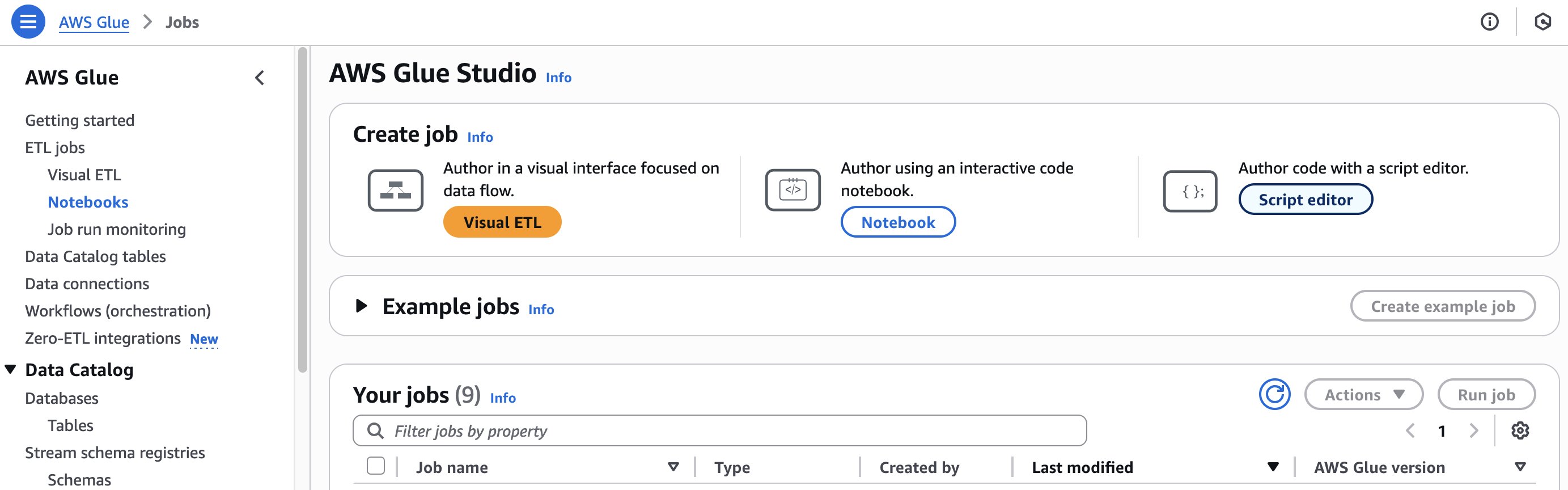

Create an AWS Glue job and arrange the job

Now, we’re able to outline the AWS Glue job utilizing Apache Sedona to learn the geospatial knowledge recordsdata. To create a Glue job:

- Open the AWS Glue console.

- On the Notebooks web page, select Script editor.

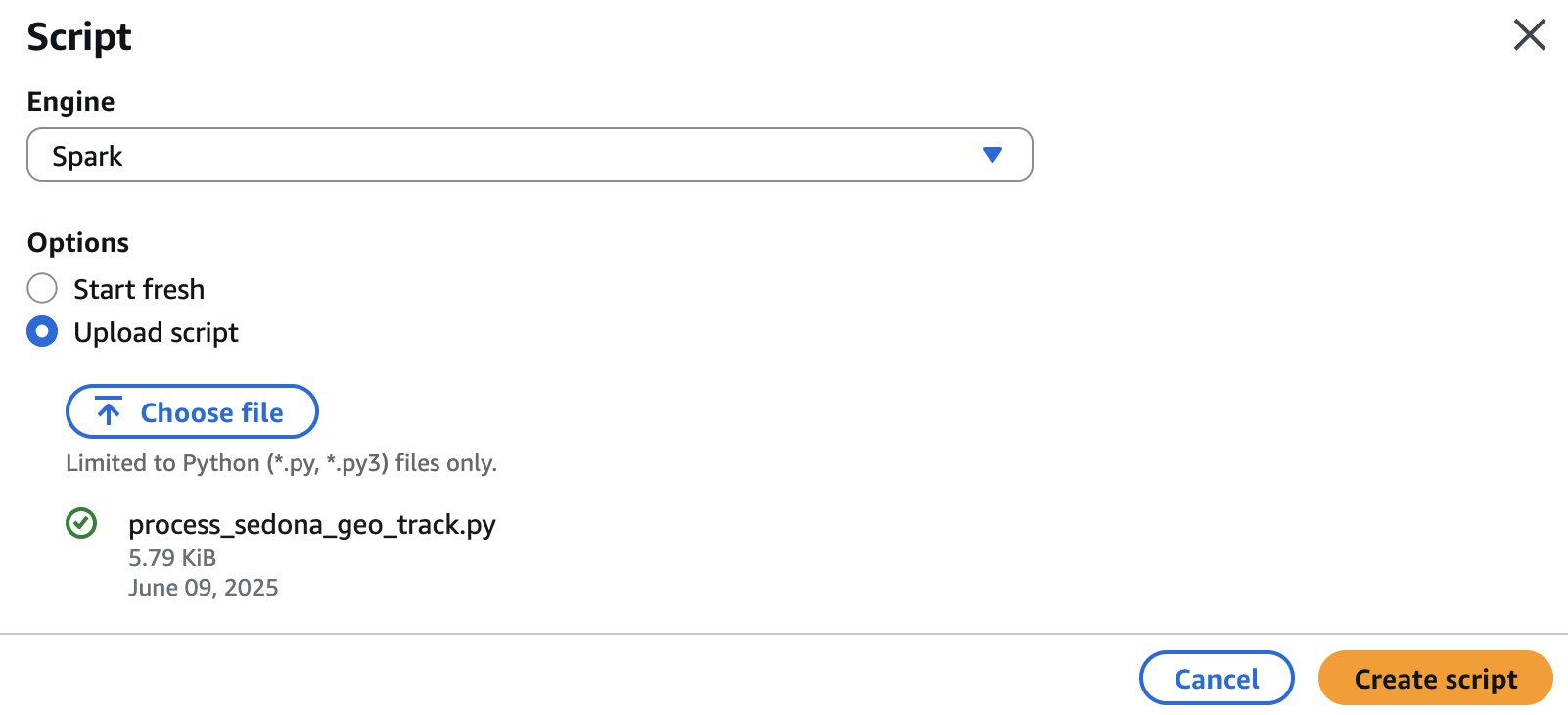

- On the Script display screen, for the engine, select Spark, then choose the choice Add script.

- Select Select file. Discover the

process_sedona_geo_track.pyfile, then select Create script.

- Rename the job from Untitled to process_sedona_geo_track.

- Select Save.

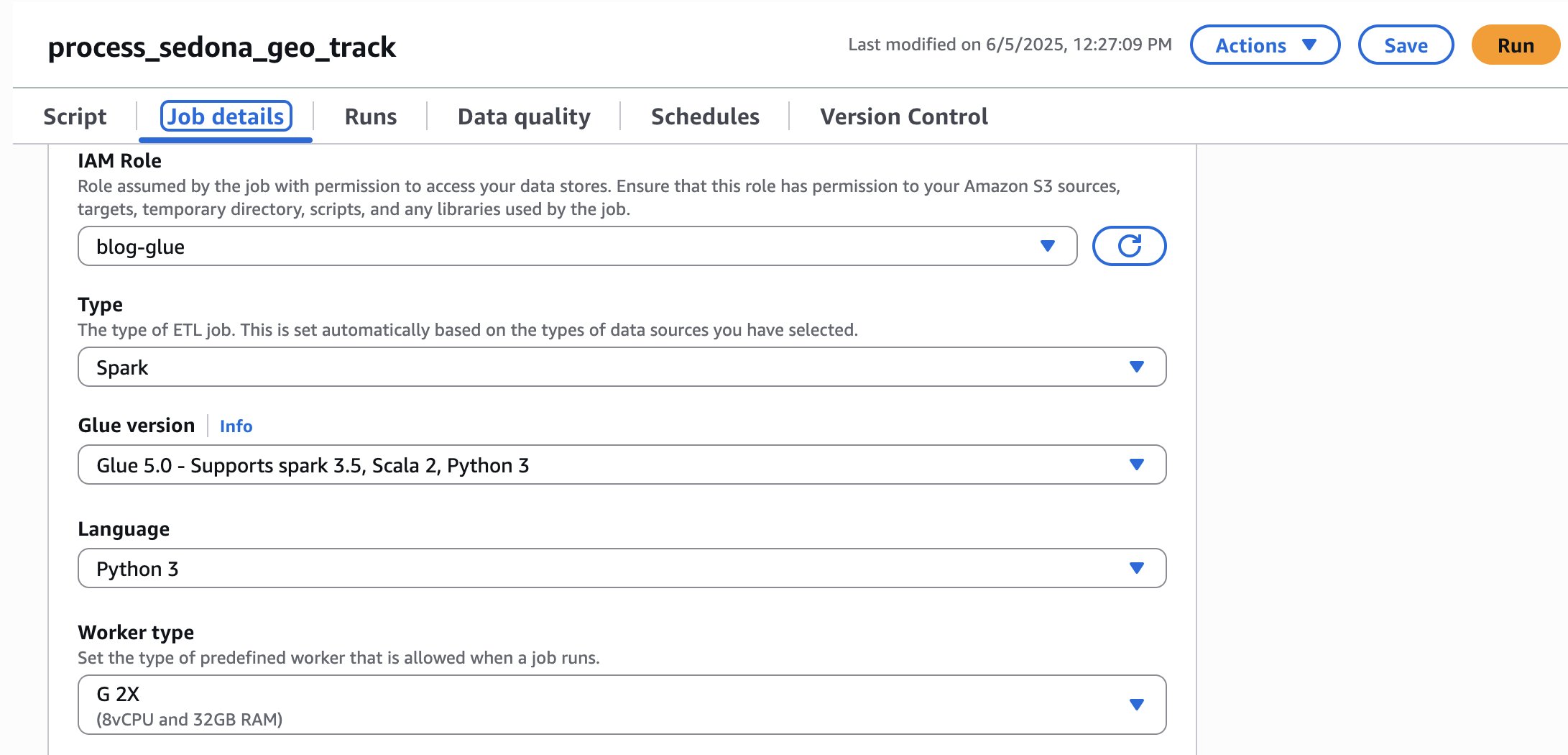

- Now, let’s arrange the AWS Glue job. Select Job Particulars.

- Select the IAM Function created for use with Glue. For this instance, we use blog-glue.

- Set the Glue model to Glue 5.0 and the Employee kind as wanted. For this instance, G.1X is adequate, however we use G.2X to hurry up processing.

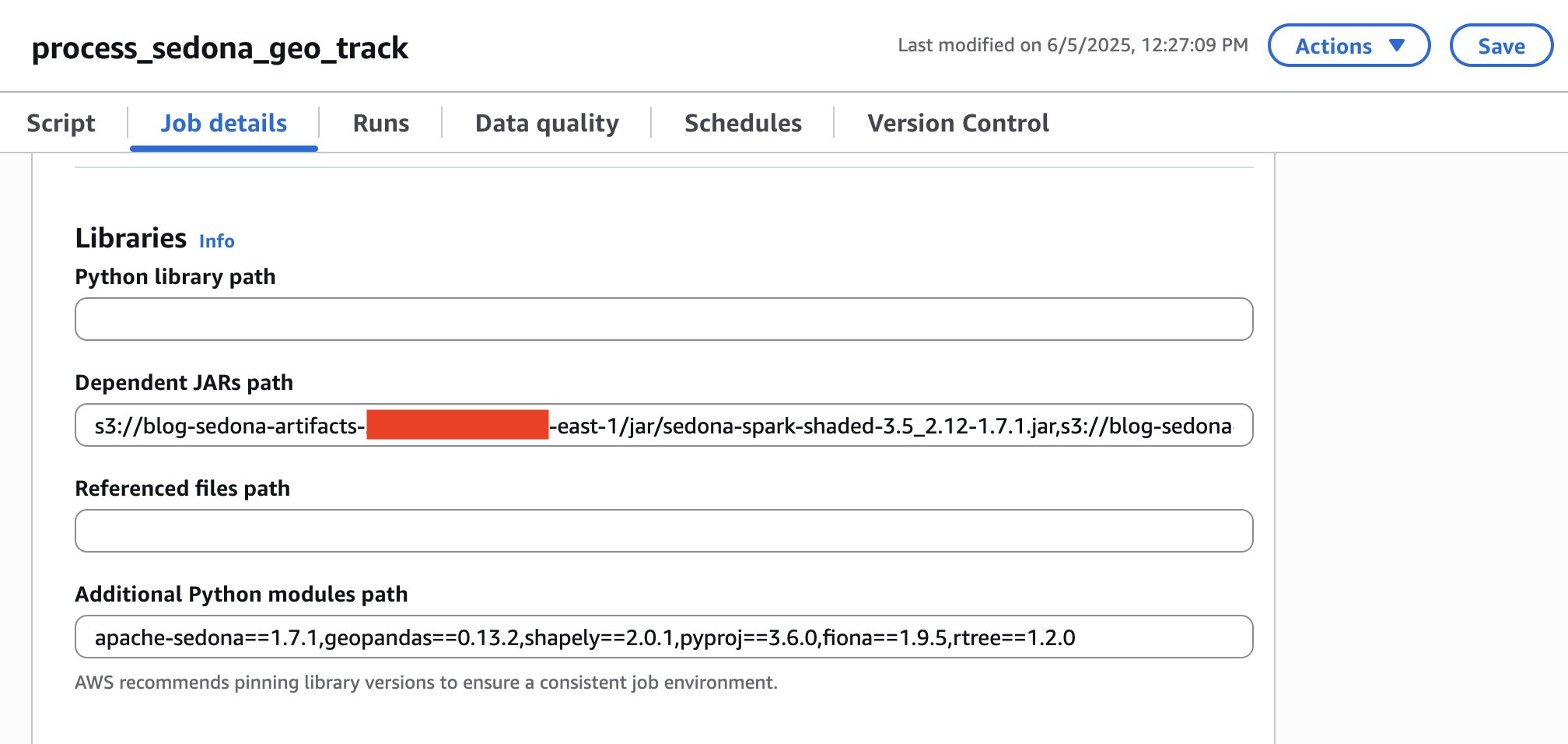

- Now, let’s import the libraries for Apache Sedona.

- Within the Dependent JARs path, kind the trail of the JAR recordsdata for Apache Sedona that you just uploaded within the previous steps. For this instance, we used

s3://blog-sedona-artifacts-- /jar/sedona-spark-shaded-3.5_2.12-1.7.1.jar,s3://blog-sedona-artifacts- - /jar/geotools-wrapper-1.7.1-28.5.jar - In Extra Python modules path, enter the modules for Apache Sedona: apache-sedona==1.7.1,geopandas==0.13.2,shapely==2.0.1,pyproj==3.6.0,fiona==1.9.5,rtree==1.2.0

- Within the Job parameters part, within the Key subject, kind —BUCKET_NAME. For its Worth, enter your bucket title. On this instance, ours is

blog-sedona-nessie-.-

- Select Save.

Processing the geospatial flights knowledge

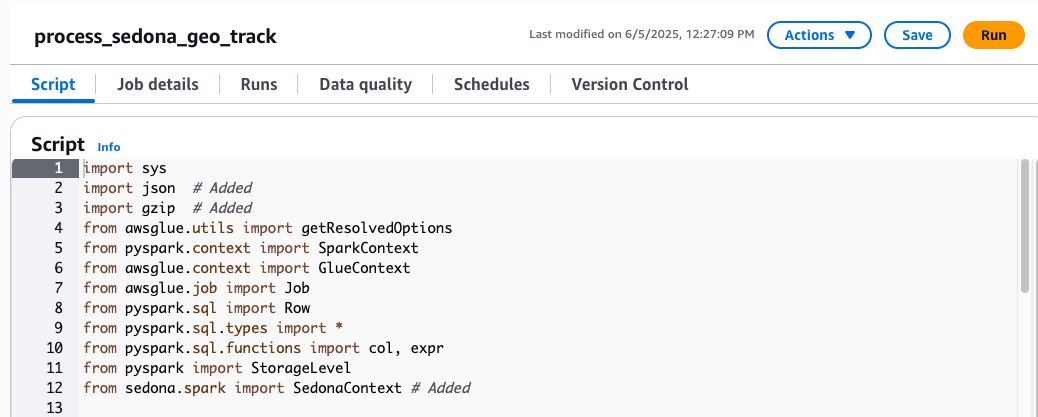

Earlier than we run the job, let’s perceive how the code works. First, import the Apache Sedona libraries:

Subsequent, initialize the Sedona context utilizing an current Spark session:

After that, create a operate for dealing with compressed JSON knowledge:

Add a operate to remodel uncooked monitoring knowledge right into a structured format appropriate for a legitimate coordinates course of:

The flat_rdd variable applies these capabilities to the structured knowledge from the unique gzipped JSON. Every ingredient on this RDD is a Row object representing a single knowledge level from an plane’s hint, with fields for ICAO, timestamp, latitude, and longitude.

The ADSB hint recordsdata comprise a deeply nested JSON construction the place the hint subject holds an array of mixed-type arrays, compressed in Gzip format. For this particular case, creating a UDF represented one of the crucial sensible and environment friendly options. Since Gzip is a non-splittable format, Spark is unable to parallelize processing, constraining each strategies to a single employee per file and processing the information a number of instances throughout JVM decompression, full JSON parsing, and subsequent re-parsing operations. The UDF bypasses all of this by studying uncooked bytes and doing all the pieces in a single Python cross: decompress → parse → extract → validate, returning solely the small set of wanted fields on to Spark.

The Spark SQL question processes geographic hint knowledge utilizing the H3 hexagonal grid system, changing level knowledge right into a regularized hexagonal grid that may assist establish areas of excessive level density. A decision of 5 was adopted, producing hexagons of roughly 253 km² (roughly the identical measurement as town of Edinburgh, Scotland, which is roughly 264 km²), for its skill to successfully seize route density patterns on the metropolis and metropolitan degree.

Lastly, this code prepares the datasets for visualization functions. The primary dataset is predicated on the plane distinctive identifier. The entire dataset for a single day can comprise greater than 80 million knowledge factors. A random sampling price of 0.1% was utilized, which proves adequate as an example route density patterns with out overwhelming the Kepler.gl browser renderer. The second dataset aggregates hint factors into hexagonal spatial cells (consequence from the question above).

Now that we perceive the code, let’s run it.

- Open the AWS Glue console.

- On the ETL jobs >> Notebooks web page, select the job title process_sedona_geo_track.

- Select Run.

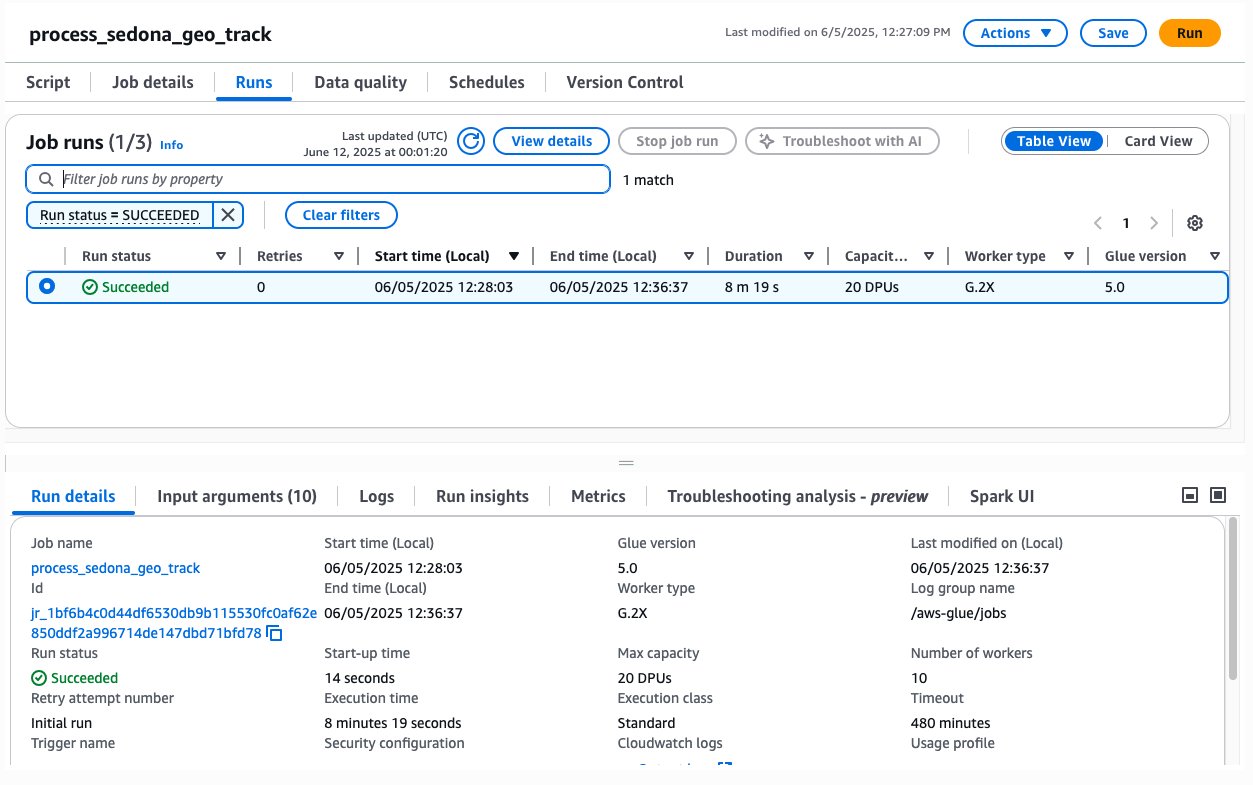

- Now, it’s doable to watch the job by selecting the Runs tab.

- It might take a couple of minutes to run the whole job. It took practically 8 minutes to course of roughly 2.50 GB (67,540 compressed recordsdata) with 20 DPUs. After the job is processed, you need to see your job with the standing Succeeded.

Now your knowledge ought to be saved for a preview visualization demo in a folder named s3://blog-sedona-nessie-.

Efficiency insights

The workload characterization of this job reveals a CPU-intensive profile, primarily due to the processing of small binary recordsdata with GZIP compression and subsequent JSON parsing. Given the inherent nature of this pipeline, which incorporates Python UDF serialization and partial single-partition write levels, linear scaling doesn’t yield proportional efficiency positive aspects. The next desk presents an evaluation of AWS Glue configurations, evaluating the trade-off between computational capability, execution period, and related prices:

| Length | Capability (DPUs) | Employee kind | Glue model | Estimated Price* |

| 10 m 7 s | 32 DPUs | G.1X | 5 | $2.34 |

| 11 m 50 s | 10 DPUs | G.1X | 5 | $0.88 |

| 19 m 7 s | 4 DPUs | G.1X | 5 | $0.59 |

| 8 m 19 s | 20 DPUs | G.2X | 5 | $1.32 |

*Estimated Price = DPUs x Length (hours) x $0.44 per DPU-hour (us-east-1)

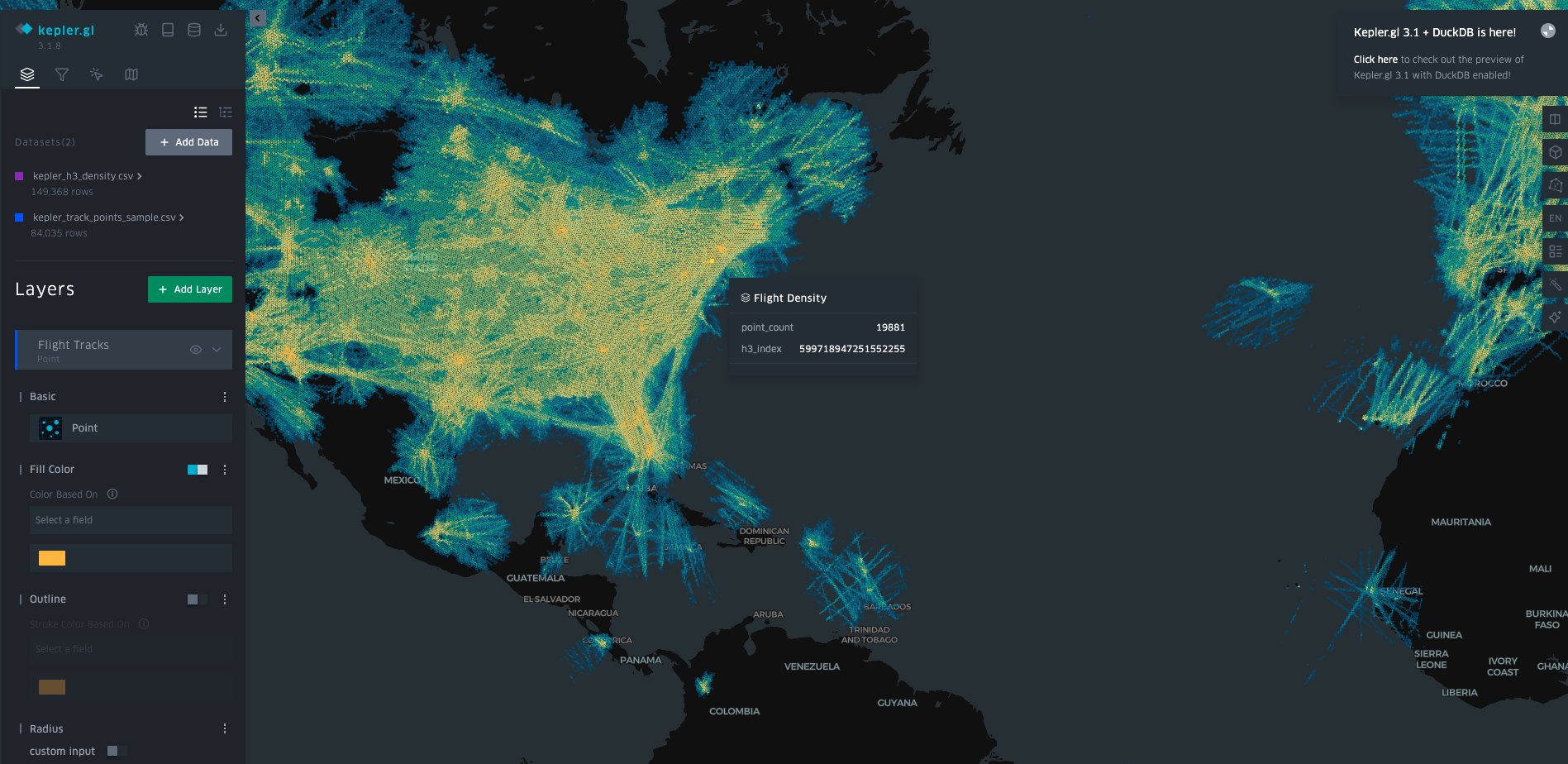

Visualizing and analyzing geospatial knowledge with Kepler.gl

Kepler.gl is an open-source geospatial evaluation software developed by Uber with code obtainable at Github. Kepler.gl is designed for large-scale knowledge exploration and visualization, providing a number of map layers, together with level, arc, heatmap, and 3D hexagon. It helps numerous file codecs like CSV, GeoJSON, and KML. On this use case, we are going to use Kepler.gl to current interactive visualizations that illustrate flight patterns, routes, and densities throughout international airspace.

Downloading the geospatial recordsdata

Earlier than we are able to view the graph, we might want to obtain the flight recordsdata to our native machine, unzip them, and rename them (to make it simpler to establish the recordsdata).

- Open your OS terminal command line.

- Create the folders to obtain the information processed within the steps earlier than. On this case, we create kepler and kepler_csv.

#create kepler folders: first folder is to obtain the recordsdata, #second folder is to prepare the recordsdata to make use of within the subsequent step mkdir kepler mkdir kepler_csv - Substitute the bracketed variables along with your account and listing info, then obtain all of the CSV recordsdata.

#copy the recordsdata from Amazon S3 to native machine aws s3 cp s3://blog-sedona-nessie-- /visualization/ / /kepler --recursive - Extract the recordsdata, rename them, and transfer them to a different folder.

# Extract the recordsdata processed by Spark and Sedona gzip -d ./kepler/kepler_h3_density/*.gz gzip -d ./kepler/kepler_track_points_sample/*.gz # Rename the Spark output recordsdata to extra readable names cd ./kepler/kepler_h3_density/ ls mv part-00000-*.csv kepler_h3_density.csv cd .. cd ./kepler/kepler_track_points_sample/ ls mv part-00000-*.csv kepler_track_points_sample.csv cd .. # Make sure the output folder exists mkdir -p ../kepler_csv # Copy the renamed CSV recordsdata to the folder that shall be used as enter in kepler.gl cp ./kepler/kepler_h3_density/*.csv ../kepler_csv cp ./kepler/kepler_track_points_sample/*.csv ../kepler_csv - Your kepler_csv folder ought to look just like the return of the command under.

#checklist the recordsdata within the kepler_csv listing ls -l whole 11684 -rw-rw-r-- 1 ec2-user ec2-user 8630110 Jun 12 14:47 kepler_h3_density.csv -rw-rw-r-- 1 ec2-user ec2-user 3331763 Jun 12 14:47 kepler_track_points_sample.csv

Visualizing the information in a graph

Now that you’ve got saved the information to your native machine, you’ll be able to analyze the flight knowledge via interactive map graphics. To import the information into the Kepler.gl net visualization software:

- Open the Kepler.gl Demo net software.

- Load knowledge into Kepler.gl:

- Select Add Knowledge within the left panel.

- Drag and drop each CSV recordsdata (

flight_pointsandh3_density) into the add space. - Affirm that each datasets are loaded efficiently.

- Delete all layers.

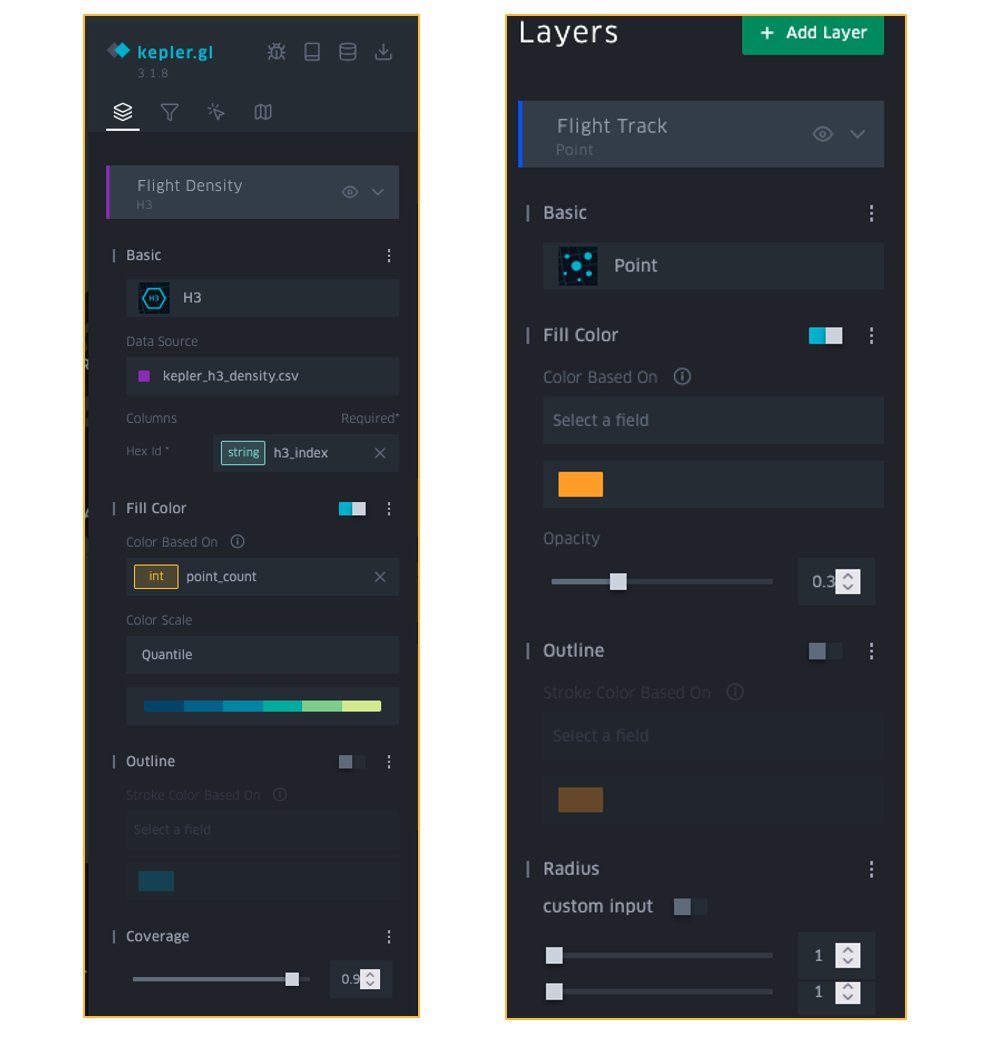

- Create the Flight Density Layer:

- Select Add Layer within the left panel.

- In Primary, select H3 because the layer kind, then add the next configuration:

- Layer Identify: Flight Density

- Knowledge Supply: kepler_h3_density.csv

- Hex ID: h3_index

- Within the Fill Colour part:

- Colour: point_count

- Colour Scale: Quantile.

- Colour Vary: Select a blue/inexperienced gradient.

- Set Opacity to 0.7.

- Within the Protection part, set it to 0.9.

- Create the Flight Tracks Layer:

- Select Add Layer within the left panel.

- In Primary, select Level because the layer kind, then add the next configuration:

- Layer Identify: Flight Tracks

- Knowledge Supply: kepler_track_points_sample.csv

- Columns:

- Latitude: lat

- Longitude: lon

- Within the Fill Colour part:

- Stable Colour: Orange

- Opacity: 0.3

- Set the Level’s Radius to 1

- The layers ought to look just like the next determine.

- The graph visualization ought to now present flight density via color-coded hexagons, with particular person flight tracks seen as orange factors:

There you go! Now that you’ve got data about geospatial knowledge and have created your first use case, take the chance to do some evaluation and be taught some attention-grabbing info about flight patterns.

It’s doable to experiment with different attention-grabbing forms of evaluation in Kepler.gl, resembling Time Playback.

Clear up

To scrub up your sources, full the next duties:

- Delete the AWS Glue job

process_sedona_geo_track. - Delete content material from the Amazon S3 buckets:

blog-sedona-artifacts-and- blog-sedona-nessie-.-

Conclusion

On this put up, we confirmed how processing geospatial knowledge can current important challenges resulting from its complicated nature (from huge knowledge to knowledge construction format). For this use case of flight trackers, it entails huge quantities of data throughout a number of dimensions resembling time, location, altitude, and flight paths, nevertheless, the mix of Spark’s distributed computing capabilities and Sedona’s optimized geospatial capabilities helps overcome these challenges. The spatial partitioning and indexing options of Sedona, coupled with Spark’s framework, allow us to carry out complicated spatial joins and proximity analyses effectively, simplifying the general knowledge processing workflow.

The serverless nature of AWS Glue eliminates the necessity for managing infrastructure whereas robotically scaling sources primarily based on workload calls for, making it a really perfect platform for processing rising volumes of flight knowledge. As the amount of flight knowledge grows or as processing necessities fluctuate, with AWS Glue, you’ll be able to shortly alter sources to fulfill demand, guaranteeing optimum efficiency with out the necessity for cluster administration.

By changing the processed outcomes into CSV format and visualizing them in Kepler.gl, it’s doable to create interactive visualizations that reveal patterns in flight paths, and you’ll effectively analyze air site visitors patterns, routes, and different insights. This end-to-end answer demonstrates how a contemporary knowledge technique in AWS with the help of open-source instruments can remodel uncooked geospatial knowledge into actionable insights.

In regards to the authors