Every organization is challenged with appropriately prioritizing new vulnerabilities that affect a large set of third-party libraries used within the organization. The sheer volume of vulnerabilities published daily makes manual monitoring impractical and resource-intensive.

At Databricks, one of our company objectives is to secure our Data Intelligence Platform. Our engineering team has designed an AI-based system that proactively detects, classifies, and prioritizes vulnerabilities as soon as they are disclosed, based on their severity, potential impact, and relevance to Databricks infrastructure. This approach enables us to effectively mitigate the risk of critical vulnerabilities remaining unnoticed. Our system achieves an accuracy rate of approximately 85% in identifying business-critical vulnerabilities. By leveraging our prioritization algorithm, the security team has reduced their manual workload by over 95%. They are now able to focus their attention on the 5% of vulnerabilities that require immediate action, rather than sifting through hundreds of issues.

In the next few sections, we explore how our AI-driven approach helps identify, categorize, and rank vulnerabilities.

How Our System Continuously Flags Vulnerabilities

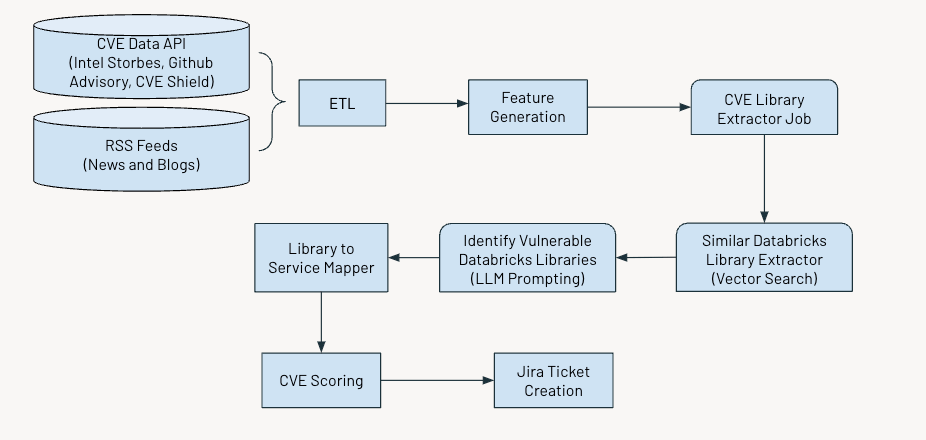

The system operates on a regular schedule to identify and flag critical vulnerabilities. The process involves several key steps:

- Gathering and processing data

- Generating relevant features

- Utilizing AI to extract information about Common Vulnerabilities and Exposures (CVEs)

- Assessing and scoring vulnerabilities based on their severity

- Generating Jira tickets for further action

The figure below shows the overall workflow.

Data Ingestion

We ingest Common Vulnerabilities and Exposures (CVE) data, which identifies publicly disclosed cybersecurity vulnerabilities, from multiple sources such as:

- Intel Strobes API: This provides information and details on the software packages and versions.

- GitHub Advisory Database: Vulnerabilities that are not recorded as CVEs often appear as GitHub advisories.

- CVE Shield: This provides trending vulnerability data from recent social media feeds.

Additionally, we gather RSS feeds from sources like securityaffairs and hackernews, along with other news articles and blogs that mention cybersecurity vulnerabilities.

Feature Generation

Next, we extract the following features for each CVE:

- Description

- Age of CVE

- CVSS score (Common Vulnerability Scoring System)

- EPSS score (Exploit Prediction Scoring System)

- Impact score

- Availability of exploit

- Availability of patch

- Trending status on X

- Number of advisories

While the CVSS and EPSS scores provide valuable insights into the severity and exploitability of vulnerabilities, they may not fully apply for prioritization in certain contexts.

The CVSS score does not fully capture an organization’s specific context or environment, meaning that a vulnerability with a high CVSS score might not be as critical if the affected component is not in use or is adequately mitigated by other security measures.

Similarly, the EPSS score estimates the probability of exploitation but does not account for an organization’s specific infrastructure or security posture. A high EPSS score might therefore indicate a vulnerability that is likely to be exploited in general, yet it may still be irrelevant if the affected systems are not part of the organization’s attack surface on the internet.

Relying solely on CVSS and EPSS scores can lead to a deluge of high-priority alerts, which makes managing and prioritizing them challenging.

Scoring Vulnerabilities

We developed an ensemble of scores based on the above features (severity score, component score, and topic score) to prioritize CVEs. The details of each are given below.

Severity Score

This score helps to quantify the importance of a CVE to the broader community. We calculate the score as a weighted average of the CVSS, EPSS, and Impact scores. The data input from CVE Shield and other news feeds allows us to gauge how the security community and our peer companies perceive the impact of any given CVE. A high value of this score corresponds to CVEs deemed critical to the community and our organization.
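As a rough illustration, a weighted average of this kind could look like the sketch below. The weights, the 0-1 scaling of each input, and the names `CveSignals` and `severity_score` are assumptions made for the example; the post does not state the values or code used in production.

```python
from dataclasses import dataclass


@dataclass
class CveSignals:
    cvss: float    # CVSS base score, 0-10
    epss: float    # EPSS probability, 0-1
    impact: float  # impact score from news and social feeds, assumed to be 0-1


# Hypothetical weights, chosen only for illustration.
WEIGHTS = {"cvss": 0.4, "epss": 0.3, "impact": 0.3}


def severity_score(signals: CveSignals) -> float:
    """Weighted average of the three signals after scaling each to 0-1."""
    scaled = {
        "cvss": signals.cvss / 10.0,
        "epss": signals.epss,
        "impact": signals.impact,
    }
    total_weight = sum(WEIGHTS.values())
    return sum(WEIGHTS[name] * scaled[name] for name in WEIGHTS) / total_weight
```

Dividing by the total weight keeps the result in the 0-1 range even if the weights are later changed and no longer sum to one.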

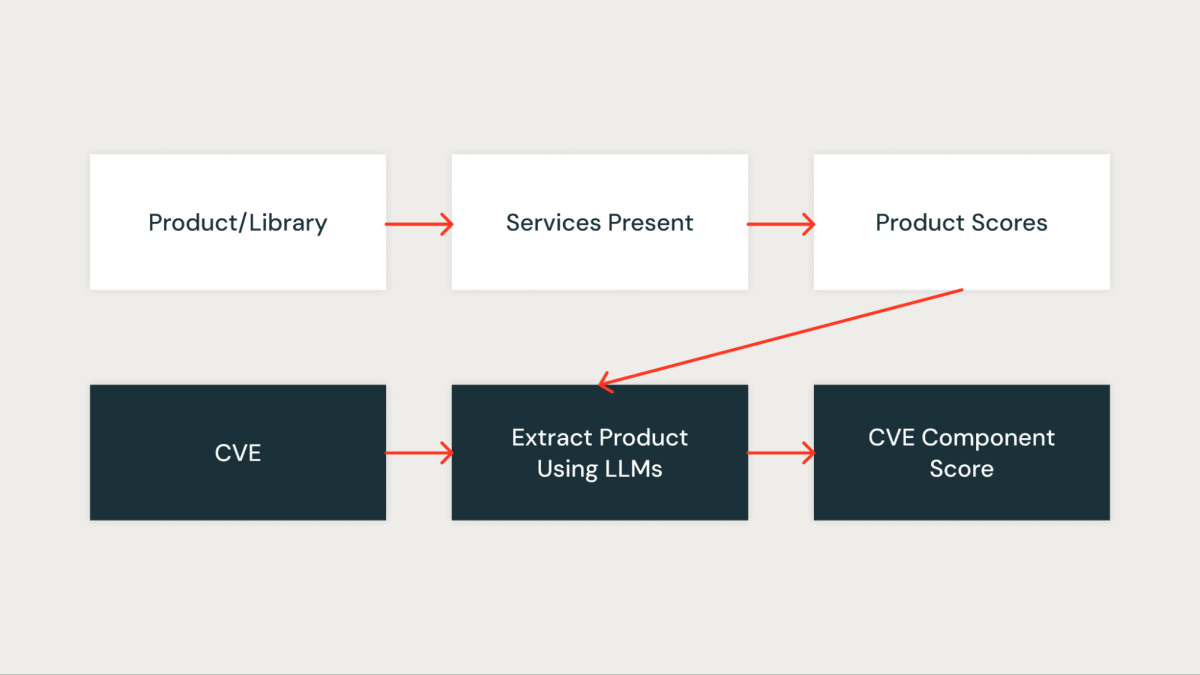

Component Score

This score quantitatively measures how important the CVE is to our organization. Every library in the organization is first assigned a score based on the services impacted by the library. A library that is present in critical services gets a higher score, while a library that is present in non-critical services gets a lower score.
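One simple way to express this idea is to let a library inherit the criticality of the most critical service that uses it. The sketch below assumes that rule; the service names, criticality values, and the choice of `max` as the aggregation are all hypothetical and are not taken from the actual system.

```python
# Hypothetical criticality tiers for services; values are illustrative only.
SERVICE_CRITICALITY = {
    "auth-service": 1.0,
    "runtime": 0.9,
    "internal-dashboard": 0.3,
}

# Hypothetical inventory: which services ship which library.
LIBRARY_SERVICES = {
    "openssl": ["auth-service", "runtime"],
    "left-pad": ["internal-dashboard"],
}


def library_score(library: str) -> float:
    """Score a library by the most critical service that uses it."""
    services = LIBRARY_SERVICES.get(library, [])
    return max((SERVICE_CRITICALITY.get(s, 0.0) for s in services), default=0.0)


def component_score(matched_libraries: list[str]) -> float:
    """A CVE takes the highest score among the libraries it affects."""
    return max((library_score(lib) for lib in matched_libraries), default=0.0)
```

Taking the maximum rather than the mean means one critical service is enough to raise a library's score, which errs on the side of caution.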

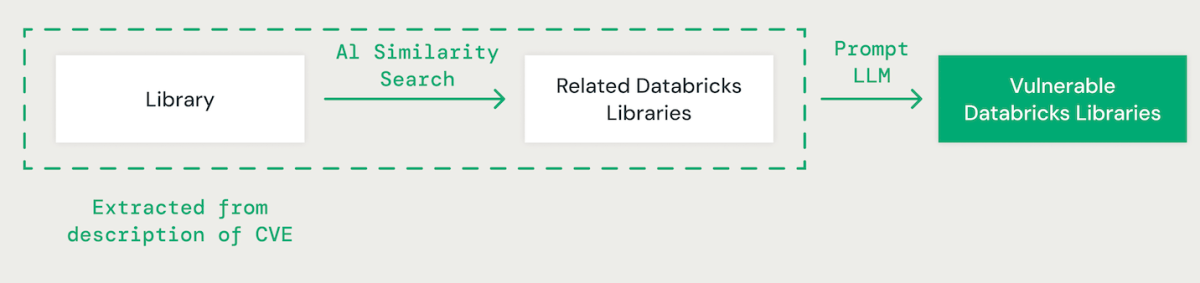

AI-Powered Library Matching

Using few-shot prompting with a large language model (LLM), we extract the relevant library for each CVE from its description. Subsequently, we employ an AI-based vector similarity approach to match the identified library with existing Databricks libraries. This involves converting each word in the library name into an embedding for comparison.
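The matching step can be pictured with a small stand-in. The sketch below replaces learned embeddings with character-trigram count vectors and ranks inventory libraries by cosine similarity. This is a deliberately simple substitute for illustration, not the embedding model described above, and the function names are hypothetical.

```python
import math
import re
from collections import Counter


def normalize(name: str) -> str:
    """Lowercase and drop separators so 'scikit-learn' and 'scikitlearn' agree."""
    return re.sub(r"[^a-z0-9]", "", name.lower())


def trigram_vector(name: str) -> Counter:
    """Bag of character trigrams, padded so short names still yield vectors."""
    padded = f"##{normalize(name)}##"
    return Counter(padded[i:i + 3] for i in range(len(padded) - 2))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def best_matches(cve_library: str, inventory: list[str], top_k: int = 3):
    """Rank inventory libraries by similarity to the library named in the CVE."""
    query = trigram_vector(cve_library)
    scored = [(lib, cosine(query, trigram_vector(lib))) for lib in inventory]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:top_k]
```

For example, `best_matches("sklearn", ["scikit-learn", "numpy", "openssl"])` ranks `scikit-learn` first. A learned embedding would be expected to handle aliases that share few characters, which trigram overlap cannot.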

When matching CVE libraries with Databricks libraries, it is essential to understand the dependencies between different libraries. For example, while a vulnerability in IPython may not directly affect CPython, an issue in CPython could impact IPython. Additionally, variations in library naming conventions, such as “scikit-learn”, “scikitlearn”, “sklearn” or “pysklearn”, must be considered when identifying and matching libraries. Furthermore, version-specific vulnerabilities should be accounted for. For instance, OpenSSL versions 1.0.1 to 1.0.1f might be vulnerable, whereas patches in later versions, like 1.0.1g to 1.1.1, may address these security risks.
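The version-range check in the OpenSSL example can be sketched as follows. The parser assumes versions of the form `1.0.1f` (dotted numbers with an optional trailing letter); other versioning schemes would need their own rules, and both function names are hypothetical.

```python
import re


def parse_version(version: str) -> tuple:
    """Turn '1.0.1f' into ((1, 0, 1), 'f'); a missing letter becomes ''."""
    match = re.fullmatch(r"(\d+(?:\.\d+)*)([a-z]*)", version.strip().lower())
    if match is None:
        raise ValueError(f"unsupported version format: {version!r}")
    numbers = tuple(int(part) for part in match.group(1).split("."))
    return (numbers, match.group(2))


def is_vulnerable(installed: str, first_bad: str, last_bad: str) -> bool:
    """True when the installed version lies in the inclusive vulnerable range."""
    return parse_version(first_bad) <= parse_version(installed) <= parse_version(last_bad)
```

With the range from the text, `is_vulnerable("1.0.1c", "1.0.1", "1.0.1f")` is true, while `1.0.1g` and `1.1.1` fall outside the range. Tuple comparison orders the numeric parts first and the letter suffix second, so `1.0.1` sorts before `1.0.1a`.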

LLMs enhance the library matching process by leveraging advanced reasoning and industry expertise. We fine-tuned various models using a ground truth dataset to improve accuracy in identifying vulnerable dependent packages.

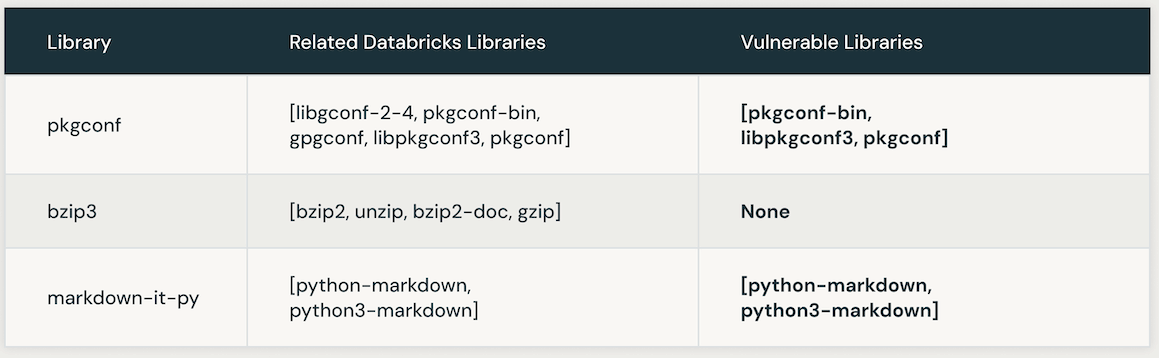

The following table presents instances of vulnerable Databricks libraries linked to a specific CVE. Initially, AI similarity search is used to pinpoint libraries closely associated with the CVE library. Subsequently, an LLM is employed to determine the vulnerability of those similar libraries within Databricks.

Automating LLM Instruction Optimization for Accuracy and Efficiency

Manually optimizing instructions in an LLM prompt can be laborious and error-prone. A more efficient approach uses an iterative method to automatically produce multiple sets of instructions and optimize them for better performance on a ground-truth dataset. This method minimizes human error and leads to a more effective and precise refinement of the instructions over time.

We applied this automated instruction optimization technique to improve our own LLM-based solution. Initially, we provided an instruction and the desired output format to the LLM for dataset labeling. The results were then compared against a ground truth dataset, which contained human-labeled data provided by our product security team.

Subsequently, we used a second LLM known as an “Instruction Tuner”. We fed it the initial prompt and the errors identified in the ground truth evaluation. This LLM iteratively generated a series of improved prompts. Following a review of the options, we selected the best-performing prompt to optimize accuracy.
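The loop described above can be sketched in a few lines. In this sketch the two LLMs are passed in as plain callables (`labeler` and `tuner`), which are hypothetical stand-ins for real model calls, and the best prompt is chosen automatically by accuracy, whereas the process described above included a manual review of the candidates.

```python
from typing import Callable

Example = tuple[str, str]  # (CVE description, human-assigned label)


def accuracy(prompt: str, labeler: Callable[[str, str], str], truth: list[Example]) -> float:
    """Fraction of ground-truth examples the labeler gets right with this prompt."""
    correct = sum(labeler(prompt, text) == label for text, label in truth)
    return correct / len(truth)


def optimize_prompt(
    initial_prompt: str,
    labeler: Callable[[str, str], str],
    tuner: Callable[[str, list[Example]], str],
    truth: list[Example],
    rounds: int = 3,
) -> tuple[str, float]:
    """Ask the tuner for improved prompts and keep the best-scoring one."""
    best_prompt = initial_prompt
    best_acc = accuracy(initial_prompt, labeler, truth)
    prompt = initial_prompt
    for _ in range(rounds):
        # Collect the examples the current prompt still gets wrong.
        errors = [(text, label) for text, label in truth if labeler(prompt, text) != label]
        if not errors:
            break
        # The tuner sees the current prompt plus its errors and proposes a new one.
        prompt = tuner(prompt, errors)
        acc = accuracy(prompt, labeler, truth)
        if acc > best_acc:
            best_prompt, best_acc = prompt, acc
    return best_prompt, best_acc
```

Tracking the best prompt separately from the current one matters because a tuning round can make results worse; the loop then still returns the strongest candidate seen so far.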

After applying the LLM instruction optimization technique, we developed the following refined prompt:

Choosing the right LLM

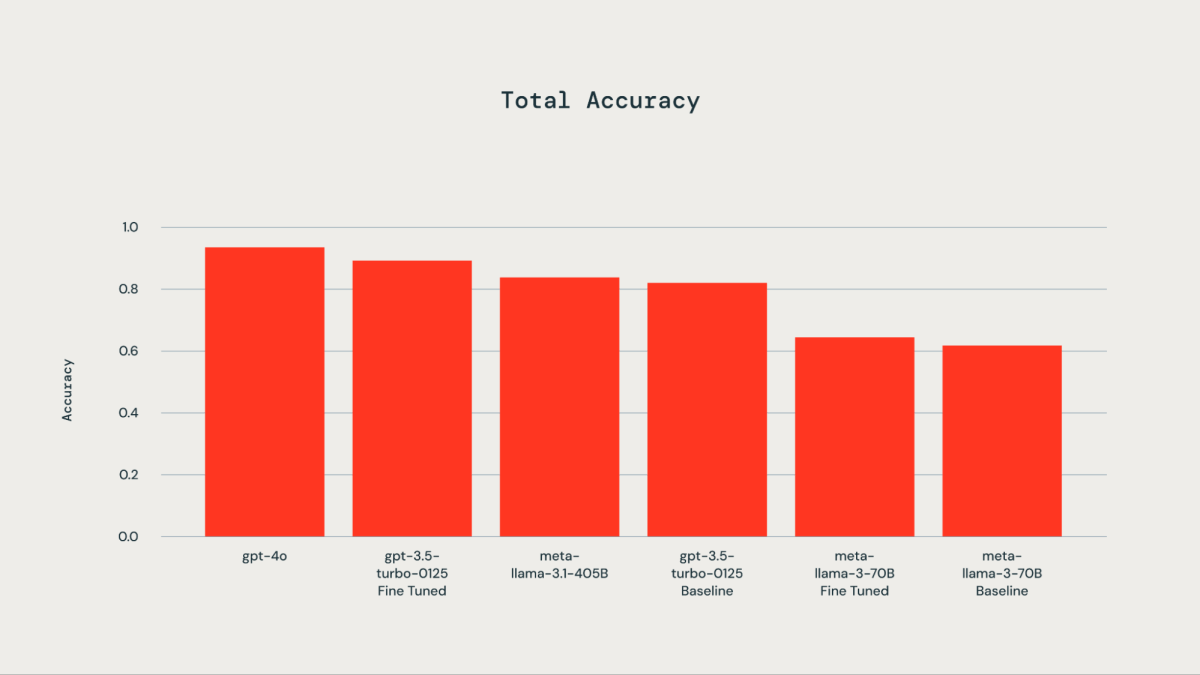

A ground truth dataset comprising 300 manually labeled examples was used for fine-tuning. The tested LLMs included gpt-4o, gpt-3.5-Turbo, llama3-70B, and llama-3.1-405b-instruct. As illustrated by the accompanying plot, fine-tuning on the ground truth dataset improved accuracy for gpt-3.5-turbo-0125 compared to the base model. Fine-tuning llama3-70B using the Databricks fine-tuning API led to only marginal improvement over the base model. The accuracy of the fine-tuned gpt-3.5-turbo-0125 model was comparable to or slightly lower than that of gpt-4o. Similarly, the accuracy of llama-3.1-405b-instruct was comparable to, and slightly lower than, that of the fine-tuned gpt-3.5-turbo-0125 model.

Once the Databricks libraries in a CVE are identified, the corresponding score of the library (library_score as described above) is assigned as the component score of the CVE.

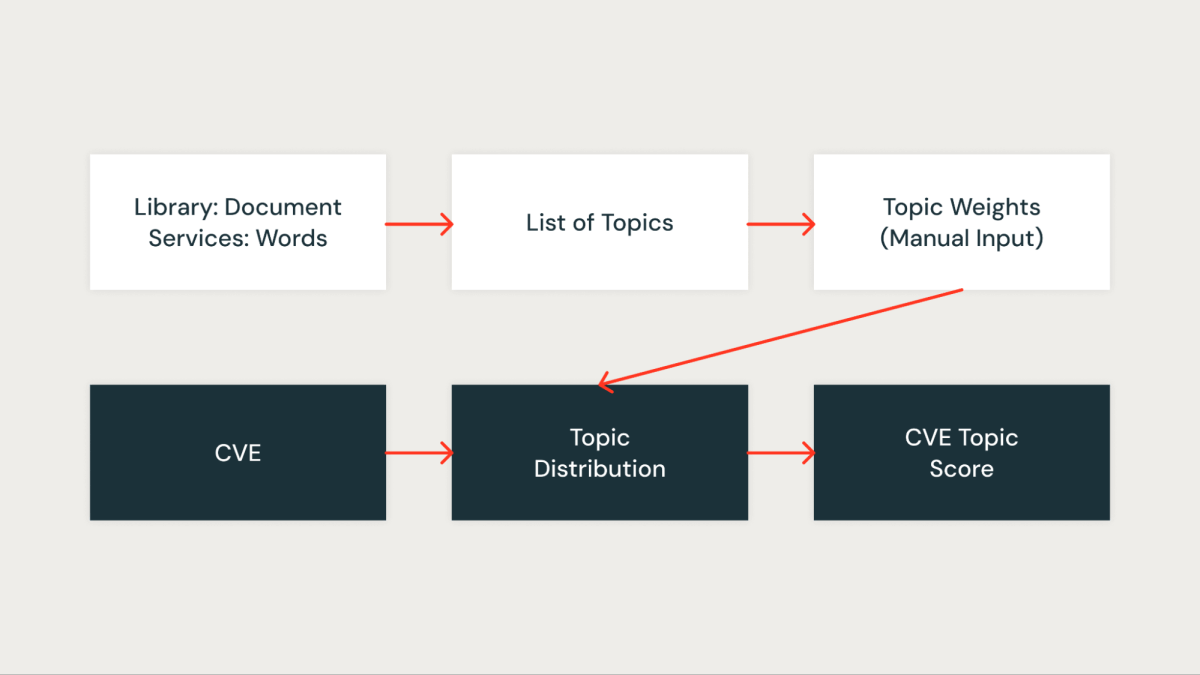

Topic Score

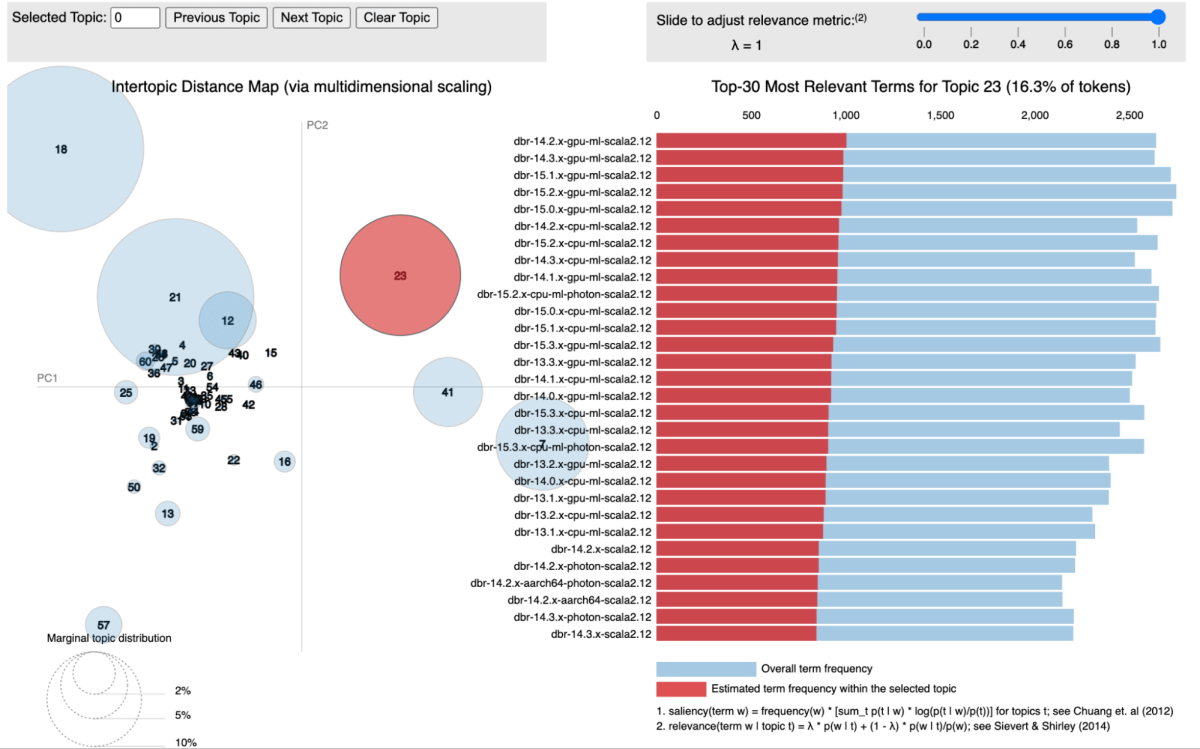

In our approach, we used topic modeling, specifically Latent Dirichlet Allocation (LDA), to cluster libraries according to the services they are associated with. Each library is treated as a document, with the services it appears in acting as the words within that document. This method allows us to group libraries into topics that effectively represent shared service contexts.
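To make the "library as document, services as words" framing concrete, the sketch below builds the count matrix that a topic model would consume, with one row per library and one column per service. It covers only the corpus construction step and does not fit LDA itself; the library and service names are hypothetical. A matrix of this shape is the kind of input that an LDA implementation such as scikit-learn's `LatentDirichletAllocation` accepts.

```python
from collections import Counter

# Hypothetical inventory: library -> services it appears in.
LIBRARY_SERVICES = {
    "pyarrow": ["dbr-core", "dbr-ml", "notebook"],
    "netty": ["dbr-core", "gateway"],
    "flask": ["internal-dashboard"],
}


def build_corpus(library_services: dict[str, list[str]]):
    """Return (libraries, vocabulary, count matrix) with one row per library."""
    libraries = sorted(library_services)
    vocabulary = sorted({svc for svcs in library_services.values() for svc in svcs})
    column = {svc: idx for idx, svc in enumerate(vocabulary)}
    matrix = []
    for lib in libraries:
        counts = Counter(library_services[lib])
        row = [0] * len(vocabulary)
        for svc, n in counts.items():
            row[column[svc]] = n
        matrix.append(row)
    return libraries, vocabulary, matrix
```

Libraries that share many services end up with similar rows, which is what lets a topic model group them into the same topic.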

The figure below shows a specific topic where all the Databricks Runtime (DBR) services are clustered together and visualized using pyLDAvis.

For each identified topic, we assign a score that reflects its importance within our infrastructure. This scoring allows us to prioritize vulnerabilities more accurately by associating each CVE with the topic score of the relevant libraries. For example, if a library is present in multiple critical services, the topic score for that library will be higher, and thus the CVE affecting it will receive a higher priority.
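A minimal sketch of this lookup is shown below, assuming each library has already been assigned to a single topic and each topic has been given a score. The mappings, the score values, and the use of the maximum across affected libraries are assumptions for illustration only.

```python
# Hypothetical outputs of the topic model and of a manual scoring pass.
LIBRARY_TOPIC = {"pyarrow": "dbr", "netty": "dbr", "flask": "internal-tools"}
TOPIC_SCORE = {"dbr": 0.9, "internal-tools": 0.2}


def topic_score(affected_libraries: list[str]) -> float:
    """Highest topic score among the libraries a CVE touches; 0.0 if none match."""
    scores = [
        TOPIC_SCORE.get(LIBRARY_TOPIC[lib], 0.0)
        for lib in affected_libraries
        if lib in LIBRARY_TOPIC
    ]
    return max(scores, default=0.0)
```

A CVE that affects both `flask` and `netty` would take the score of the `dbr` topic here, since that is the more important of the two contexts.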

Impact and Results

We used a range of aggregation techniques to consolidate the scores mentioned above. Our model was tested on three months’ worth of CVE data, during which it achieved a true positive rate of approximately 85% in identifying CVEs relevant to our business. The model has successfully pinpointed critical vulnerabilities on the day they are published (day 0) and has also highlighted vulnerabilities warranting security investigation.

To gauge the false negatives produced by the model, we looked at vulnerabilities flagged by external sources or manually identified by our security team that the model failed to detect. This allowed us to calculate the percentage of missed critical vulnerabilities. Notably, there were no false negatives in the back-tested data. However, we recognize the need for ongoing monitoring and evaluation in this area.

Our system has effectively streamlined our workflow, transforming the vulnerability management process into a more efficient and focused security triage step. It has significantly mitigated the risk of overlooking a CVE with direct customer impact and has reduced the manual workload by over 95%. This efficiency gain has enabled our security team to concentrate on a select few vulnerabilities, rather than sifting through the hundreds published daily.

Acknowledgments

This work is a collaboration between the Data Science team and the Product Security team. Thank you to Mrityunjay Gautam, Aaron Kobayashi, Anurag Srivastava, and Ricardo Ungureanu from the Product Security team, and Anirudh Kondaveeti, Benjamin Ebanks, Jeremy Stober, and Chenda Zhang from the Security Data Science team.