Large language models (LLMs) are now more accessible than ever. Google’s Gemini API offers a powerful and flexible tool for developers and creators. This guide explores the many practical applications you can build with the free Gemini API. We will walk through several hands-on examples. You will learn key Gemini API prompting techniques, from simple queries to complex tasks. We will cover techniques like zero-shot and few-shot prompting. You will also learn to perform tasks such as basic code generation with Gemini, making your workflow more efficient.

How to Get Your Free Gemini API Key

Before we begin, you need to set up your environment. This process is simple and takes just a few minutes. You will need a Google AI Studio API key to get started.

First, install the necessary Python library. This package lets you communicate with the Gemini API easily.

```python
!pip install -U -q "google-genai>=1.0.0"
```

Next, configure your API key. You can get a free key from Google AI Studio. Store it securely and use the following code to initialize the client. This setup is the foundation for all the examples that follow.

```python
from google import genai
from IPython.display import Markdown, display

# Make sure to keep your API key secure,
# for example by using userdata in Colab:
# from google.colab import userdata
# GOOGLE_API_KEY = userdata.get('GOOGLE_API_KEY')

client = genai.Client(api_key="YOUR_API_KEY")

MODEL_ID = "gemini-1.5-flash"
```

Part 1: Foundational Prompting Techniques

Let’s start with two fundamental Gemini API prompting techniques. These techniques form the basis for more advanced interactions.

Technique 1: Zero-Shot Prompting for Quick Answers

Zero-shot prompting is the simplest way to interact with an LLM. You ask a question directly without providing any examples. This method works well for straightforward tasks where the model’s existing knowledge is sufficient. For effective zero-shot prompting, clarity is key.

Let’s use it to classify the sentiment of a customer review.

Prompt:

```python
prompt = """
Classify the sentiment of the following review as positive, negative, or neutral:

Review: "I go to this restaurant every week, I love it so much."
"""

response = client.models.generate_content(
    model=MODEL_ID,
    contents=prompt,
)

print(response.text)
```

Output:

This simple approach gets the job done quickly. It is one of the most common things you can do with Gemini’s free API.
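The model’s reply is free text, so if your code needs to act on the label, it helps to map the reply onto the three allowed classes. A minimal sketch, assuming the reply contains one of the label words from the prompt; `normalize_sentiment` is our own helper, not part of the Gemini SDK:

```python
import re
from typing import Optional

ALLOWED_LABELS = ("positive", "negative", "neutral")


def normalize_sentiment(reply: str) -> Optional[str]:
    """Return the first allowed sentiment label found in a model reply, or None."""
    # Whole-word, case-insensitive match; the earliest occurrence wins.
    pattern = r"\b(" + "|".join(ALLOWED_LABELS) + r")\b"
    match = re.search(pattern, reply, flags=re.IGNORECASE)
    return match.group(1).lower() if match else None


print(normalize_sentiment("Sentiment: **Positive**"))  # positive
```

This is a deliberately simple heuristic: a reply such as "not positive" would still map to `positive`, so for stricter control you can combine it with the few-shot and JSON techniques that follow.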

Technique 2: Few-Shot Prompting for Custom Formats

Sometimes, you need the output in a specific format. Few-shot prompting guides the model by providing a few examples of input and expected output. This technique helps the model understand your requirements precisely.

Here, we’ll extract cities and their countries into a JSON format.

Prompt:

````python
prompt = """
Extract cities from the text and include the country they are in.

USER: I visited Mexico City and Poznan last year
MODEL: {"Mexico City": "Mexico", "Poznan": "Poland"}

USER: She wanted to visit Lviv, Monaco and Maputo
MODEL: {"Lviv": "Ukraine", "Monaco": "Monaco", "Maputo": "Mozambique"}

USER: I am currently in Austin, but I will be moving to Lisbon soon
MODEL:
"""

# We also specify that the response should be JSON
response = client.models.generate_content(
    model=MODEL_ID,
    contents=prompt,
    config={"response_mime_type": "application/json"},
)

display(Markdown(f"```json\n{response.text}\n```"))
````

Output:

By providing examples, you teach the model the exact structure you want. This is a powerful step up from basic zero-shot prompting.
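Even with `response_mime_type` set to JSON, it is worth validating the reply before using it. A minimal sketch, assuming the reply is a flat object mapping city names to country names as in the examples above; `parse_city_countries` is a hypothetical helper name:

```python
import json


def parse_city_countries(reply: str) -> dict:
    """Parse a JSON reply like {"Austin": "United States"} and check its shape."""
    data = json.loads(reply)  # raises json.JSONDecodeError (a ValueError) on malformed JSON
    if not isinstance(data, dict):
        raise ValueError(f"expected a JSON object, got {type(data).__name__}")
    for city, country in data.items():
        if not isinstance(country, str):
            raise ValueError(f"country for {city!r} is not a string: {country!r}")
    return data


cities = parse_city_countries('{"Austin": "United States", "Lisbon": "Portugal"}')
print(cities["Lisbon"])  # Portugal
```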

Part 2: Guiding the Model’s Behavior and Knowledge

You can control the model’s persona and provide it with specific knowledge. These Gemini API prompting techniques make your interactions more targeted.

Technique 3: Role Prompting to Define a Persona

You can assign a role to the model to influence its tone and style. This makes the response feel more authentic and tailored to a specific context.

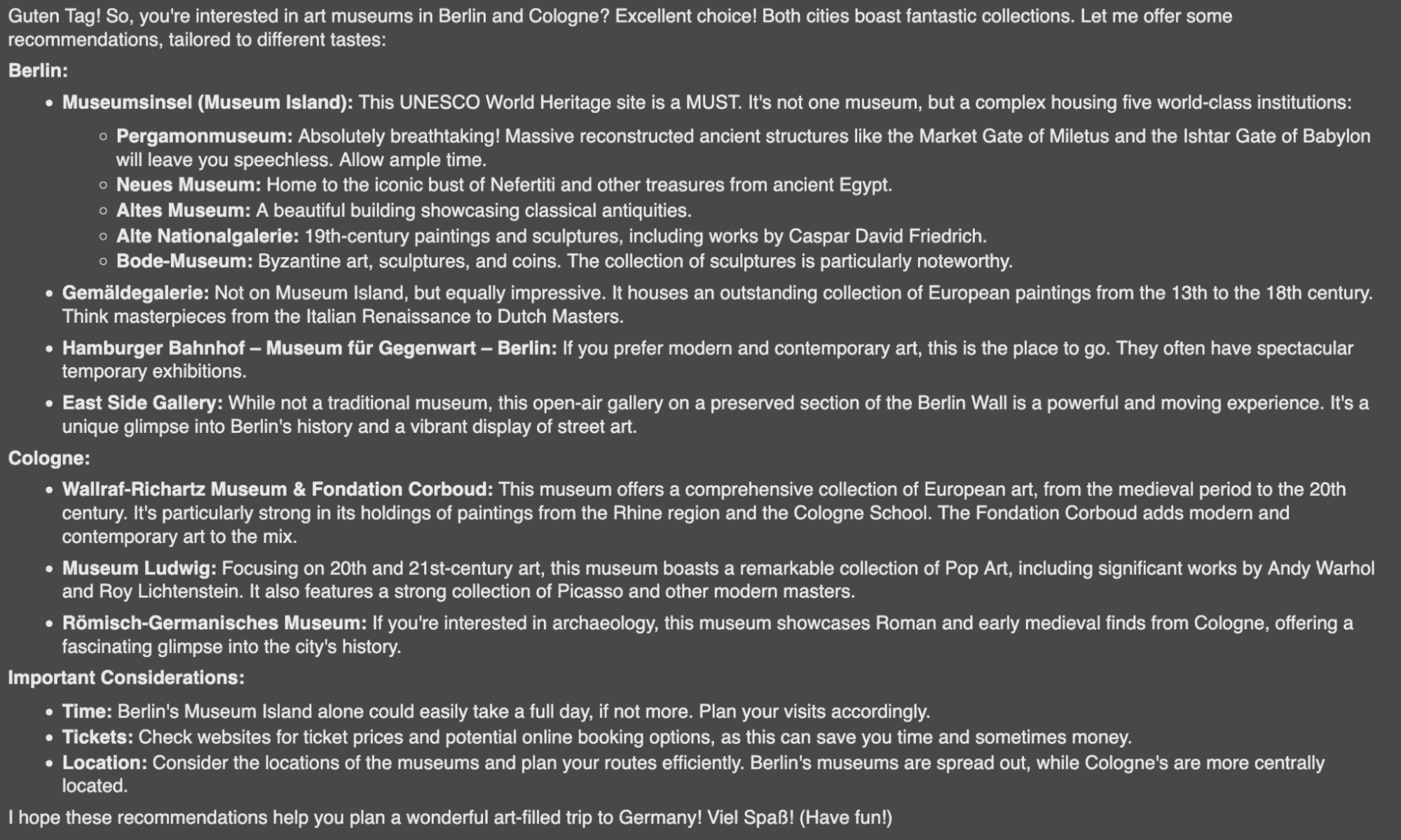

Let’s ask for museum recommendations from the perspective of a German tour guide.

Prompt:

```python
system_instruction = """
You are a German tour guide. Your task is to give recommendations
to people visiting your country.
"""

prompt = "Could you give me some recommendations on art museums in Berlin and Cologne?"

# The persona is passed as a system instruction in the request config
response = client.models.generate_content(
    model="gemini-2.5-flash",
    contents=prompt,
    config={"system_instruction": system_instruction},
)

display(Markdown(response.text))
```

Output:

The model adopts the persona, making the response more engaging and helpful.

Technique 4: Adding Context to Answer Niche Questions

LLMs don’t know everything. You can provide specific information in the prompt to help the model answer questions about new or private data. This is a core concept behind Retrieval-Augmented Generation (RAG).

Here, we give the model a table of Olympic athletes to answer a specific query.

Prompt:

```python
prompt = """
QUERY: Provide a list of athletes that competed in the Olympics exactly 9 times.

CONTEXT:
Table title: Olympic athletes and number of times they've competed
Ian Millar, 10
Hubert Raudaschl, 9
Afanasijs Kuzmins, 9
Nino Salukvadze, 9
Piero d'Inzeo, 8
"""

response = client.models.generate_content(model=MODEL_ID, contents=prompt)

display(Markdown(response.text))
```

Output:

The model uses only the provided context to give an accurate answer.

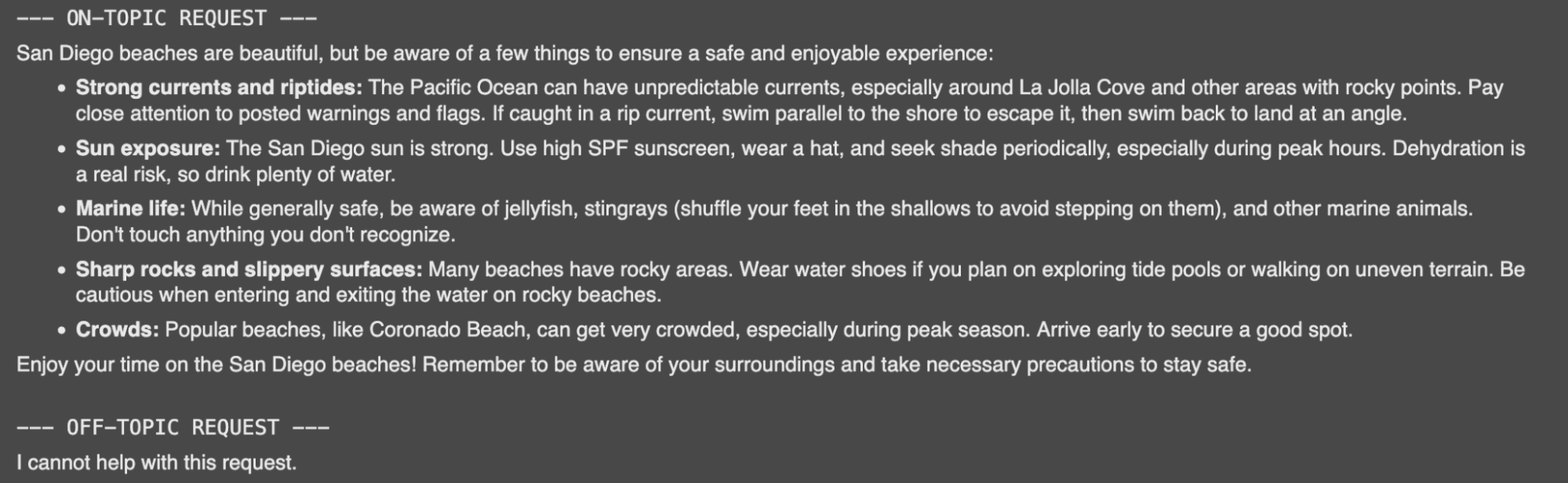
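In a real application the context usually comes from your own data rather than being typed into the prompt by hand. A minimal sketch of that assembly step, assuming the table is held as Python tuples; `build_context_prompt` is our own illustrative helper, and a full RAG system would also add a retrieval step that selects which rows to include:

```python
from typing import List, Tuple

# The table from the example above, stored as (athlete, number of Olympics) pairs.
ATHLETES: List[Tuple[str, int]] = [
    ("Ian Millar", 10),
    ("Hubert Raudaschl", 9),
    ("Afanasijs Kuzmins", 9),
    ("Nino Salukvadze", 9),
    ("Piero d'Inzeo", 8),
]


def build_context_prompt(query: str, table_title: str, rows: List[Tuple[str, int]]) -> str:
    """Assemble a QUERY/CONTEXT prompt in the same layout as the example above."""
    lines = [f"QUERY: {query}", "CONTEXT:", f"Table title: {table_title}"]
    lines += [f"{name}, {count}" for name, count in rows]
    return "\n".join(lines)


context_prompt = build_context_prompt(
    "Provide a list of athletes that competed in the Olympics exactly 9 times.",
    "Olympic athletes and number of times they've competed",
    ATHLETES,
)

# Ground truth computed from the same rows, handy for checking the model's answer.
expected = [name for name, count in ATHLETES if count == 9]
print(expected)  # ['Hubert Raudaschl', 'Afanasijs Kuzmins', 'Nino Salukvadze']
```

The resulting `context_prompt` string can be passed as `contents` to `client.models.generate_content` exactly like the hand-written prompt.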

Technique 5: Providing Base Cases for Clear Boundaries

It is important to define how a model should behave when it cannot fulfill a request. Providing base cases or default responses helps prevent unexpected or off-topic answers.
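A default response is most useful when your application can recognize it and branch on it, for example to route the user elsewhere. A minimal sketch, assuming the model returns the exact fallback sentence used in this technique’s vacation-assistant instruction, possibly wrapped in quotes; `is_fallback` is a hypothetical helper name:

```python
FALLBACK = "I cannot help with this request."


def is_fallback(reply: str, fallback: str = FALLBACK) -> bool:
    """True if the model answered with the agreed default response."""
    # Compare loosely: ignore surrounding whitespace, quote characters, and case.
    cleaned = reply.strip().strip("\"'").strip().lower()
    return cleaned == fallback.lower()


for reply in ['"I cannot help with this request."', "Watch out for rip currents."]:
    print(is_fallback(reply))
```

An exact comparison keeps false positives low; if the model paraphrases the fallback, you would need a looser check.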

Let’s create a vacation assistant with a limited set of tasks.

Prompt:

```python
system_instruction = """
You are an assistant that helps tourists plan their vacation. Your responsibilities are:
1. Helping book the hotel.
2. Recommending restaurants.
3. Warning about potential dangers.
If another request is asked, return "I cannot help with this request."
"""

# The rules are passed as a system instruction in the request config
config = {"system_instruction": system_instruction}

# On-topic request
response_on_topic = client.models.generate_content(
    model=MODEL_ID,
    contents="What should I look out for on the beaches in San Diego?",
    config=config,
)
print("--- ON-TOPIC REQUEST ---")
display(Markdown(response_on_topic.text))

# Off-topic request
response_off_topic = client.models.generate_content(
    model=MODEL_ID,
    contents="What bowling places do you recommend in Moscow?",
    config=config,
)
print("\n--- OFF-TOPIC REQUEST ---")
display(Markdown(response_off_topic.text))
```

Output:

Part 3: Unlocking Advanced Reasoning

For complex problems, you need to guide the model’s thinking process. These reasoning techniques improve accuracy for multi-step tasks.

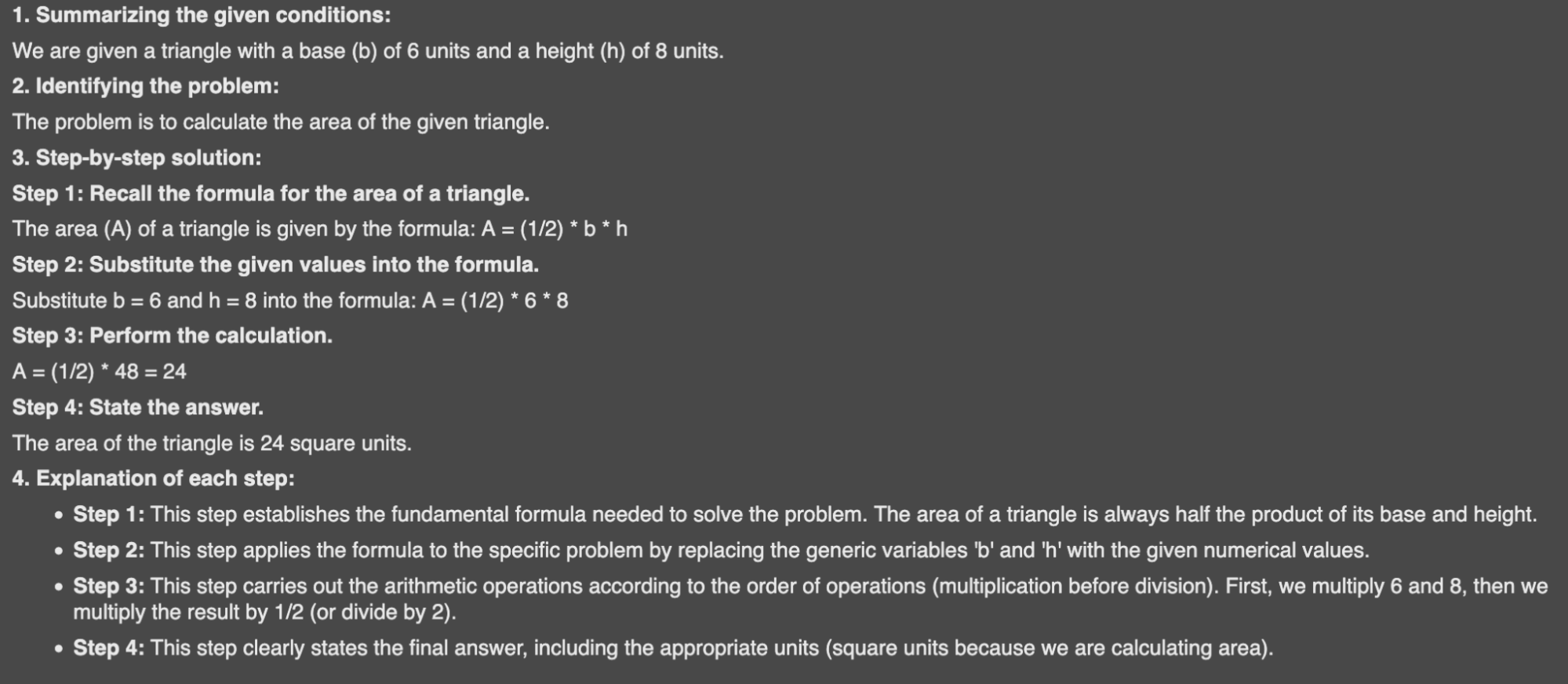

Technique 6: Basic Reasoning for Step-by-Step Solutions

You can instruct the model to break down a problem and explain its steps. This is useful for mathematical or logical problems where the process is as important as the answer.

Here, we ask the model to solve for the area of a triangle and show its work.

Prompt:

```python
system_instruction = """
You are a teacher solving mathematical problems. Your task:
1. Summarize given conditions.
2. Identify the problem.
3. Provide a clear, step-by-step solution.
4. Provide an explanation for each step.
"""

math_problem = "Given a triangle with base b=6 and height h=8, calculate its area."

response = client.models.generate_content(
    model=MODEL_ID,
    contents=math_problem,
    config={"system_instruction": system_instruction},
)

display(Markdown(response.text))
```

Output:

This structured output is clear and easy to follow.

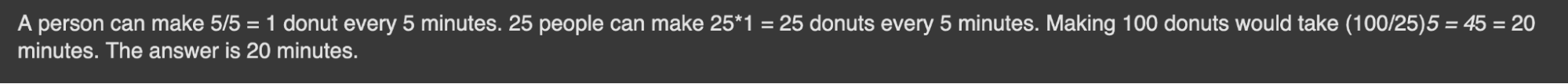
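Because this problem has a known answer, A = ½ · b · h = ½ · 6 · 8 = 24, you can check the model’s work programmatically. A minimal sketch; `mentions_value` is a deliberately crude heuristic of our own that only looks for the expected number anywhere in the reply:

```python
import re


def triangle_area(base: float, height: float) -> float:
    """Area of a triangle: half the base times the height."""
    return 0.5 * base * height


def mentions_value(reply: str, expected: float, tol: float = 1e-9) -> bool:
    """Check whether any number written in the reply equals the expected value."""
    numbers = [float(n) for n in re.findall(r"-?\d+(?:\.\d+)?", reply)]
    return any(abs(n - expected) <= tol for n in numbers)


expected_area = triangle_area(6, 8)
print(expected_area)  # 24.0
print(mentions_value("So the area is A = 24 square units.", expected_area))  # True
```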

Technique 7: Chain-of-Thought for Complex Problems

Chain-of-thought (CoT) prompting encourages the model to think step by step. Research from Google shows that this significantly improves performance on complex reasoning tasks. Instead of just giving the final answer, the model explains its reasoning path.

Let’s solve a logic puzzle using CoT.

Prompt:

```python
prompt = """
Question: 11 factories can make 22 cars per hour. How much time would it take 22 factories to make 88 cars?
Answer: A factory can make 22/11=2 cars per hour. 22 factories can make 22*2=44 cars per hour. Making 88 cars would take 88/44=2 hours. The answer is 2 hours.
Question: 5 people can create 5 donuts every 5 minutes. How much time would it take 25 people to make 100 donuts?
Answer:
"""

response = client.models.generate_content(model=MODEL_ID, contents=prompt)

display(Markdown(response.text))
```

Output:

The model follows the pattern, breaking the problem down into logical steps.

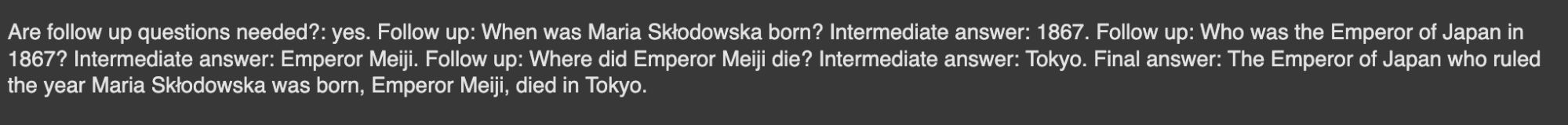
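The same steps can be written as plain arithmetic, which gives you a ground truth to compare against the model’s chain of thought. For the donut question, each person makes 5 / (5 × 5) = 1/5 donut per minute, so 25 people make 5 donuts per minute and 100 donuts take 20 minutes. A minimal sketch; `time_needed` is our own helper, and it assumes each worker (or factory) produces at a constant, independent rate, which is the assumption the worked example makes:

```python
from fractions import Fraction


def time_needed(units: int, workers: int, period: Fraction,
                target_workers: int, target_units: int) -> Fraction:
    """Solve a rate problem step by step, mirroring the chain of thought."""
    # Step 1: output per worker per unit of time.
    rate_per_worker = Fraction(units) / (workers * period)
    # Step 2: combined output of the new workforce per unit of time.
    combined_rate = rate_per_worker * target_workers
    # Step 3: time needed for the target output.
    return Fraction(target_units) / combined_rate


# Worked example from the prompt: 11 factories, 22 cars per hour -> 22 factories, 88 cars.
print(time_needed(22, 11, Fraction(1), 22, 88))  # 2 (hours)
# Question posed to the model: 5 people, 5 donuts per 5 minutes -> 25 people, 100 donuts.
print(time_needed(5, 5, Fraction(5), 25, 100))  # 20 (minutes)
```

`Fraction` keeps the intermediate rates exact, so rates like 1/5 donut per minute do not pick up floating-point error.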

Technique 8: Self-Ask Prompting to Deconstruct Questions

Self-ask prompting is similar to CoT. The model breaks down a main question into smaller follow-up questions and answers each sub-question before arriving at the final answer.

Let’s use this to solve a multi-part historical question.

Prompt:

```python
prompt = """
Question: Who was the president of the United States when Mozart died?
Are follow up questions needed?: yes.
Follow up: When did Mozart die?
Intermediate answer: 1791.
Follow up: Who was the president of the United States in 1791?
Intermediate answer: George Washington.
Final answer: When Mozart died George Washington was the president of the USA.

Question: Where did the Emperor of Japan, who ruled the year Maria Skłodowska was born, die?
"""

response = client.models.generate_content(model=MODEL_ID, contents=prompt)

display(Markdown(response.text))
```

Output:

This structured thinking process helps ensure accuracy for complex queries.
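Because self-ask uses fixed line prefixes, the model’s reply is easy to post-process, for example to log the intermediate steps or show only the final answer. A minimal sketch, assuming the reply keeps the exact prefixes from the example (`Follow up:`, `Intermediate answer:`, `Final answer:`) at the start of each line; the model is not guaranteed to do so, and `parse_self_ask` is our own helper:

```python
import re
from typing import List, Optional, Tuple

MOZART_EXAMPLE = """Question: Who was the president of the United States when Mozart died?
Are follow up questions needed?: yes.
Follow up: When did Mozart die?
Intermediate answer: 1791.
Follow up: Who was the president of the United States in 1791?
Intermediate answer: George Washington.
Final answer: When Mozart died George Washington was the president of the USA."""


def parse_self_ask(transcript: str) -> Tuple[List[Tuple[str, str]], Optional[str]]:
    """Split a self-ask transcript into (follow-up, answer) pairs and a final answer."""
    follow_ups = re.findall(r"^Follow up:\s*(.+)$", transcript, flags=re.MULTILINE)
    answers = re.findall(r"^Intermediate answer:\s*(.+)$", transcript, flags=re.MULTILINE)
    final = re.search(r"^Final answer:\s*(.+)$", transcript, flags=re.MULTILINE)
    # zip() pairs each follow-up with its answer and drops an unanswered trailing question.
    steps = list(zip(follow_ups, answers))
    return steps, (final.group(1).strip() if final else None)


steps, final = parse_self_ask(MOZART_EXAMPLE)
for question, answer in steps:
    print(f"{question} -> {answer}")
print("Final:", final)
```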

Part 4: Real-World Applications with Gemini

Now let’s see how these techniques apply to common real-world tasks. These examples show the wide range of things you can do with Gemini’s free API.

Technique 9: Classifying Text for Moderation and Analysis

You can use Gemini to automatically classify text. This is useful for tasks like content moderation, where you need to identify spam or abusive comments. Few-shot prompting helps the model learn your specific categories.

Prompt:

```python
classification_template = """
Topic: Where can I buy a cheap phone?
Comment: You have just won an IPhone 15 Pro Max!!! Click the link!!!
Class: Spam

Topic: How long do you boil eggs?
Comment: Are you stupid?
Class: Offensive

Topic: {topic}
Comment: {comment}
Class:
"""

spam_topic = "I am looking for a vet in our neighbourhood."
spam_comment = "You can win 1000$ by just following me!"

spam_prompt = classification_template.format(topic=spam_topic, comment=spam_comment)

response = client.models.generate_content(model=MODEL_ID, contents=spam_prompt)

display(Markdown(response.text))
```

Output:

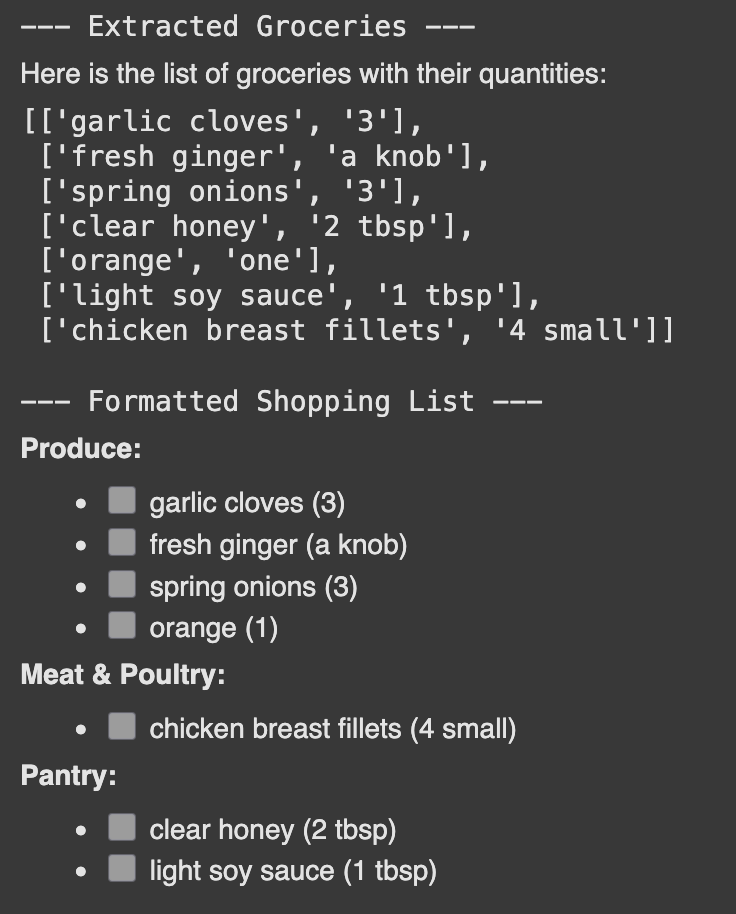
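Hard-coding the examples works for a demo, but in practice you may keep labeled examples as data and render the prompt from them, which makes it easy to add categories or examples later. A minimal sketch; `build_few_shot_prompt` and the (topic, comment, class) tuple layout are our own assumptions, not an SDK feature:

```python
from typing import Iterable, Tuple

# Labeled examples copied from the hand-written template above.
EXAMPLES = [
    ("Where can I buy a cheap phone?",
     "You have just won an IPhone 15 Pro Max!!! Click the link!!!", "Spam"),
    ("How long do you boil eggs?", "Are you stupid?", "Offensive"),
]


def build_few_shot_prompt(examples: Iterable[Tuple[str, str, str]],
                          topic: str, comment: str) -> str:
    """Render labeled (topic, comment, class) examples plus one unlabeled item."""
    blocks = [f"Topic: {t}\nComment: {c}\nClass: {label}" for t, c, label in examples]
    blocks.append(f"Topic: {topic}\nComment: {comment}\nClass:")
    return "\n\n".join(blocks)


few_shot_prompt = build_few_shot_prompt(
    EXAMPLES,
    "I am looking for a vet in our neighbourhood.",
    "You can win 1000$ by just following me!",
)
print(few_shot_prompt)
```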

Technique 10: Extracting Information from Text

Gemini can extract specific pieces of information from unstructured text. This helps you convert plain text, like a recipe, into a structured format, like a shopping list.

Prompt:

```python
recipe = """
Grind 3 garlic cloves, a knob of fresh ginger, and three spring onions to a paste.
Add 2 tbsp of clear honey, juice from one orange, and 1 tbsp of light soy sauce.
Pour the mixture over 4 small chicken breast fillets.
"""

# Step 1: Extract the list of groceries
extraction_prompt = f"""
Your task is to extract to a list all the groceries with their quantities based on the provided recipe.
Make sure that groceries are in the order of appearance.

Recipe:{recipe}
"""

extraction_response = client.models.generate_content(model=MODEL_ID, contents=extraction_prompt)
grocery_list = extraction_response.text

print("--- Extracted Groceries ---")
display(Markdown(grocery_list))

# Step 2: Format the extracted list into a shopping list
formatting_system_instruction = "Organize groceries into categories for easier shopping. List each item with a checkbox []."

formatting_prompt = f"""
LIST: {grocery_list}

OUTPUT:
"""

formatting_response = client.models.generate_content(
    model="gemini-2.5-flash",
    contents=formatting_prompt,
    config={"system_instruction": formatting_system_instruction},
)

print("\n--- Formatted Shopping List ---")
display(Markdown(formatting_response.text))
```

Output:

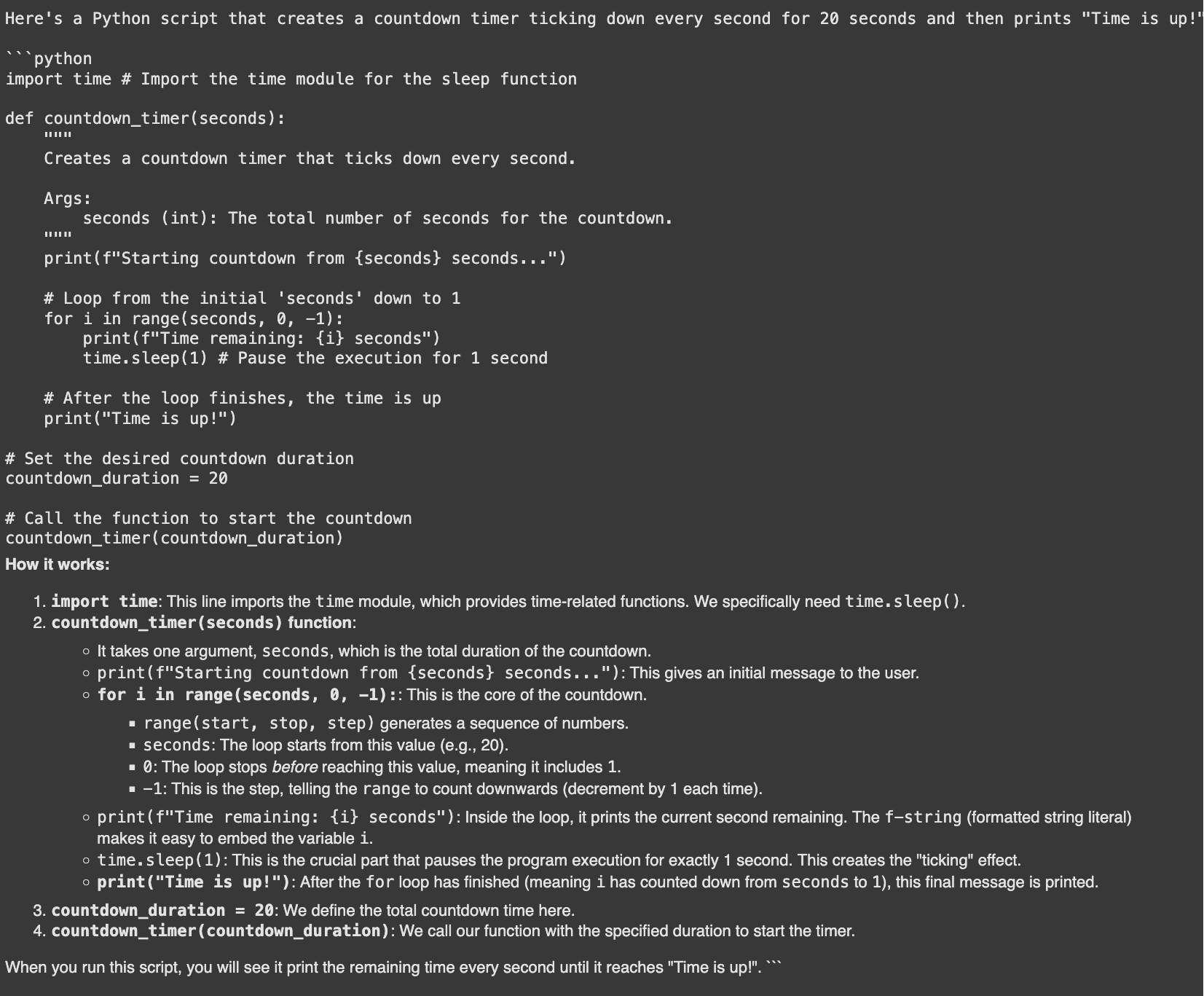
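The two steps above are an instance of prompt chaining: the output of one call becomes the input of the next. A minimal, model-agnostic sketch of that pattern; `run_chain` is our own helper, the step prompts are shortened versions of the ones in this technique, and the stub `fake_generate` stands in for a real Gemini call so the logic can be run offline:

```python
from typing import Callable, List


def run_chain(templates: List[str], first_input: str,
              generate: Callable[[str], str]) -> List[str]:
    """Feed each step's output into the next step's template and collect all outputs."""
    outputs = []
    current = first_input
    for template in templates:
        # Each template has exactly one {input} slot and no other braces.
        prompt = template.format(input=current)
        current = generate(prompt)
        outputs.append(current)
    return outputs


def fake_generate(prompt: str) -> str:
    # Stand-in for a model call: just upper-cases the prompt.
    return prompt.upper()


steps = [
    "Extract the groceries from this recipe:\n{input}",
    "Organize this list into categories:\n{input}",
]
outputs = run_chain(steps, "2 tbsp honey", fake_generate)
print(outputs[-1])
```

To run it against Gemini instead, you could pass something like `lambda p: client.models.generate_content(model=MODEL_ID, contents=p).text` as `generate`. This simplified version puts every instruction in the prompt text rather than using a `system_instruction` for the second step.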

Technique 11: Basic Code Generation and Debugging

A powerful application is basic code generation with Gemini. It can write code snippets, explain errors, and suggest fixes. A GitHub study found that developers using AI coding tools completed tasks up to 55% faster.

Let’s ask Gemini to generate a Python script for a countdown timer.

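Prompt:

```python
code_generation_prompt = """
Create a countdown timer in Python that ticks down every second and prints
"Time is up!" after 20 seconds.
"""

response = client.models.generate_content(model=MODEL_ID, contents=code_generation_prompt)

# The reply is usually Markdown that already contains its own code fence,
# so it is displayed as-is rather than wrapped in a second fence
display(Markdown(response.text))
```

Output: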

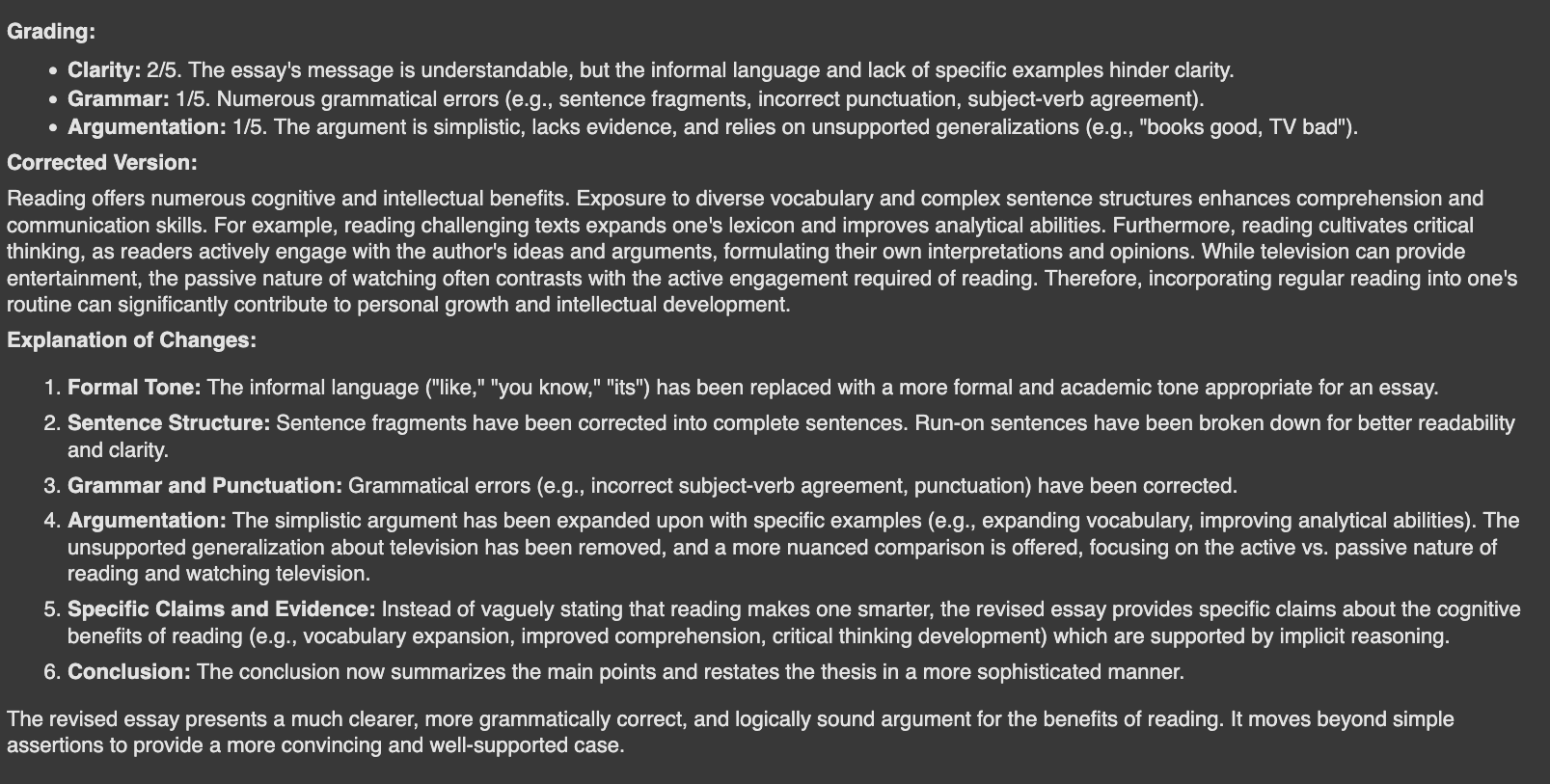
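For comparison with whatever Gemini generates, here is one hand-written way to meet the same prompt; it is our own sketch, not model output. The `sleep` and `out` parameters are an assumption added so the timer can be exercised without waiting 20 seconds:

```python
import time
from typing import Callable


def countdown(seconds: int = 20,
              sleep: Callable[[float], None] = time.sleep,
              out: Callable[[str], None] = print) -> None:
    """Print the remaining seconds once per second, then announce the end."""
    for remaining in range(seconds, 0, -1):
        out(str(remaining))
        sleep(1)
    out("Time is up!")


# Instant demo: skip the real waiting. Call countdown() for the actual 20-second timer.
countdown(3, sleep=lambda _: None)
```

Sleeping one second per tick drifts slightly over long runs because printing also takes time; for precise timing you would schedule each tick against `time.monotonic()`.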

Technique 12: Evaluating Text for Quality Assurance

You can even use Gemini to evaluate text. This is useful for grading, providing feedback, or ensuring content quality. Here, we’ll have the model act as a teacher and grade a poorly written essay.
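If you want the grades in machine-readable form, one option is to request JSON with `response_mime_type`, as in Technique 2, and validate the reply before storing it. A minimal sketch, assuming a reply shaped like `{"clarity": 2, "grammar": 1, "argumentation": 2}`; that schema and the `validate_grades` helper are our own, not something the teacher prompt in this technique asks for:

```python
import json
from typing import Dict

# Assumed criteria, taken from the teacher instruction used in this technique.
CRITERIA = ("clarity", "grammar", "argumentation")


def validate_grades(reply: str) -> Dict[str, int]:
    """Parse a JSON grading reply and check that each criterion is an integer from 1 to 5."""
    data = json.loads(reply)  # raises json.JSONDecodeError (a ValueError) on malformed JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    grades = {}
    for criterion in CRITERIA:
        score = data.get(criterion)
        # bool is a subclass of int in Python, so it is rejected explicitly.
        if isinstance(score, bool) or not isinstance(score, int) or not 1 <= score <= 5:
            raise ValueError(f"{criterion!r} must be an integer from 1 to 5, got {score!r}")
        grades[criterion] = score
    return grades


print(validate_grades('{"clarity": 2, "grammar": 1, "argumentation": 2}'))
```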

Prompt:

```python
teacher_system_instruction = """
As a teacher, you are tasked with grading a student's essay.
1. Evaluate the essay on a scale of 1-5 for clarity, grammar, and argumentation.
2. Write a corrected version of the essay.
3. Explain the changes made.
"""

essay = "Reading is like, a really good thing. It's useful, you know? Like, a lot. When you read, you learn new words and that's good. Its like a secret code to being smart. I read a book last week and I know it was making me smarter. Therefore, books good, TV bad. So everyone should read more."

response = client.models.generate_content(
    model=MODEL_ID,
    contents=essay,
    config={"system_instruction": teacher_system_instruction},
)

display(Markdown(response.text))
```

Output:

Conclusion

The Gemini API is a flexible and powerful resource. We have explored a range of things you can do with the free Gemini API, from simple questions to advanced reasoning and code generation. By mastering these Gemini API prompting techniques, you can build smarter, more efficient applications. The key is to experiment. Use these examples as a starting point for your own projects and discover what you can create.

Frequently Asked Questions

A. You can perform text classification, information extraction, and summarization. It is also excellent for tasks like basic code generation and complex reasoning.

A. Zero-shot prompting involves asking a question directly without examples. Few-shot prompting provides the model with a few examples to guide its response format and style.

A. Yes, Gemini can generate code snippets, explain programming concepts, and debug errors. This helps speed up the development process for many programmers.

A. Assigning a role gives the model a specific persona and context. This results in responses with a more appropriate tone, style, and domain knowledge.

A. It is a technique where the model breaks down a problem into smaller, sequential steps. This helps improve accuracy on complex tasks that require reasoning.
