Transport software program shortly whereas sustaining reliability is a continuing rigidity. As Databricks has grown, so has the complexity of safely rolling out modifications throughout lots of of providers, a number of clouds, and 1000’s of buyer workloads. Characteristic flags assist us handle this complexity by separating the choice to deploy code from the choice to allow it. This separation permits engineers to isolate failures and mitigate incidents sooner, with out sacrificing delivery velocity.

One of many key elements of Databricks’ stability posture is our in-house characteristic flagging and experimentation platform, referred to as “SAFE”. Databricks engineers use SAFE each day to rollout options, management service conduct dynamically, and measure the effectiveness of their options with A/B experiments.

Background

SAFE was began with the “north star” purpose of absolutely decoupling service binary releases from characteristic enablement, permitting groups to roll out options independently from their binary deployment. This enables for a lot of side-benefits, like the flexibility to reliably ramp-up a characteristic to progressively bigger populations of customers, and shortly mitigate incidents brought on by a rollout.

At Databricks’ scale, serving 1000’s of enterprise clients throughout a number of clouds with a quickly rising product floor space, we would have liked a characteristic flagging system that might meet our distinctive necessities:

- Excessive requirements for security and alter administration. The principle worth proposition for SAFE was to enhance the steadiness and operational posture of Databricks, so almost the entire different necessities flowed from this.

- Multi-cloud, seamless world supply throughout Azure, AWS, and GCP, with sub-millisecond flag analysis latency to help high-throughput and latency-sensitive manufacturing providers.

- Clear help for all locations the place Databricks engineers write code, together with our management aircraft, the Databricks UI, Databricks Runtime Setting, and Databricks’ Serverless information aircraft.

- An interface that was opinionated sufficient about Databricks’ launch practices to make frequent flag releases “secure by default”, but versatile sufficient to help a big set of extra esoteric use instances.

- Extraordinarily rigorous availability necessities, as providers can not safely launch with out flag definitions loaded.

After fastidiously contemplating these necessities, we finally opted to construct a customized in-house characteristic flagging system. We would have liked an answer that might evolve alongside our structure, and which would offer the governance controls required to soundly handle flags throughout lots of of providers and 1000’s of engineers. Attaining our scaling and security objectives efficiently required deep integration with our infrastructure information mannequin, service frameworks, and CI programs.

As of late 2025, SAFE has roughly 25k energetic flags, with 4k weekly flag flips. At peak, SAFE runs over 300M evaluations per second, all whereas sustaining a p95 latency of ~10μs for flag evaluations.

This submit explores how we constructed SAFE to satisfy these necessities and the learnings we have encountered alongside the way in which.

Characteristic Flags in Motion

To start out, we’ll focus on a typical person journey for a SAFE flag. At its core, a characteristic flag is a variable that may be accessed in a service’s management circulation which may take completely different values relying on situations managed from an exterior config. One extraordinarily frequent use case for characteristic flags is to regularly allow a brand new code path in a managed vogue, first beginning with a small portion of visitors and regularly enabling globally.

SAFE customers first begin by defining their flag of their service code, and use it as a conditional gate to the brand new characteristic’s logic:

The person then goes to the inner SAFE UI and registers this flag and selects a template to roll out their flag. This template defines a gradual rampup plan consisting of a listing of ordered levels. Every stage is ramped up slowly by percentages. The person is introduced with a UI that appears like this as soon as the flag has been created:

From right here, the person can both manually roll out their flag one stage at a time, or arrange a schedule to have the flag flips be created on their behalf. Internally, the supply of reality for the flag configuration is a jsonnet file checked in to the Databricks monorepo, that makes use of a light-weight domain-specific language (DSL) to handle the flag config:

When customers change a flag from the UI, the output of that change is a Pull Request that must be reviewed by not less than one different engineer. SAFE additionally runs quite a lot of pre-merge checks to protect towards unsafe or unintended modifications. As soon as the change is merged, the person’s service will decide up the change and begin emitting the brand new worth inside 2-5 minutes of the PR being merged.

Use instances

Apart from the use case described above for characteristic rollout, SAFE can also be used for different facets of dynamic service configuration, equivalent to: long-lived dynamic configurations (e.g. timeouts or price limits), state machine management for infrastructure migrations, or to ship small configuration blobs (e.g. focused logging insurance policies).

Structure

Consumer Libraries

SAFE offers shopper “SDKs” in a number of internally supported languages, with the Scala SDK being probably the most mature and broadly adopted. The SDK is basically a standards analysis library, mixed with a configuration loading element. For every flag, there’s a set of standards which management which worth the SDK ought to return at runtime. The SDK manages loading the most recent set of configuration, and must shortly return the results of evaluating that standards at runtime.

In pseudocode, the standards appears to be like one thing like internally:

The factors might be modeled as one thing akin to a sequence of boolean expression timber. Every conditional expression must be evaluated effectively to return a fast consequence.

To fulfill our efficiency necessities, the SAFE SDK design embodies a number of architectural rules: (1) separation of configuration supply from analysis, and (2) separation of static and runtime analysis dimensions.

- Separation of supply from analysis: The SAFE shopper libraries all the time deal with supply as an asynchronous course of, and by no means block the “sizzling path” of flag analysis on configuration supply. As soon as the shopper has a snapshot of a flag configuration, it should proceed to return outcomes based mostly on that snapshot till an asynchronous background course of does an atomic replace of that snapshot to a more recent snapshot.

- Separation of dimension sorts: Flag analysis in SAFE operates on two kinds of dimensions:

- Static dimensions characterize traits of the working binary itself, issues like cloud supplier, cloud area, and atmosphere (dev/staging/prod). These values stay fixed for the lifetime of a course of.

- Runtime dimensions seize request-specific context, like workspace IDs, account IDs, application-provided values, and different per-request attributes that change with every analysis.

To reliably obtain sub-millisecond analysis latency at scale, SAFE employs preevaluation of components of the boolean expression tree that are static. When a SAFE configuration bundle is delivered to a service, the SDK instantly evaluates all static dimensions towards the in-memory illustration of the flag configuration. This produces a simplified configuration tree that accommodates solely the logic related to that particular service occasion.

When a flag analysis is requested throughout request processing, the SDK solely wants to judge the remaining runtime dimensions towards this pre-compiled configuration. This considerably reduces the computational price of every analysis. Since many flags solely use static dimensions of their boolean expression timber, many flags can successfully be fully pre-evaluated.

Flag Supply

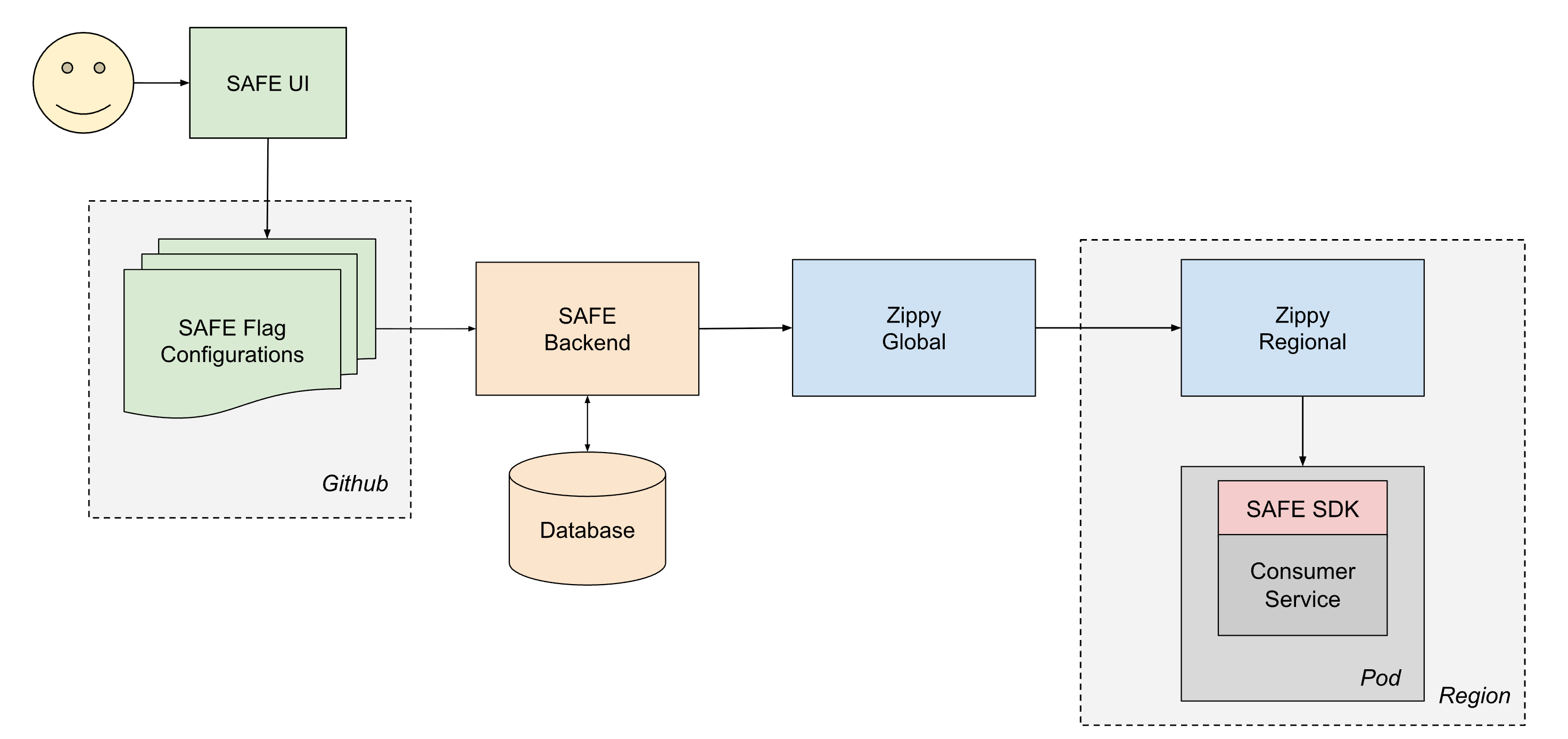

To reliably ship configuration to all providers at Databricks, SAFE operates hand-in-hand with our in-house dynamic configuration supply platform, Zippy. An in-depth description of the Zippy structure is left as a subject for one more submit, however briefly, Zippy makes use of a multi-tiered world/regional structure and per-cloud blob storage to move arbitrary configuration blobs from a central supply to (amongst different surfaces) all Kubernetes pods working within the Databricks Management Aircraft.

The lifetime of a delivered flag is as follows:

- A person creates and merges a PR to one in every of their flag configuration jsonnet recordsdata, which then will get merged into the Databricks monorepo in Github.

- Inside ~1 minute, a post-merge CI job picks up the modified file and sends it to the SAFE backend, which subsequently shops a duplicate of the brand new configuration in a database.

- Periodically (~1 minute intervals), the SAFE backend bundles up all of the SAFE flag configurations and sends them to the Zippy World backend.

- Zippy World distributes these configurations to every of its Zippy Regional cases, inside ~30 seconds.

- The SAFE SDK, working in every service pod, periodically receives the brand new model bundles utilizing a mixture of push and pull based mostly supply.

- As soon as delivered, the SAFE SDK can use the brand new configuration throughout analysis.

Finish-to-end, a flag change usually propagates to all providers inside 3-5 minutes of a PR being merged.

Flag Configuration Pipeline

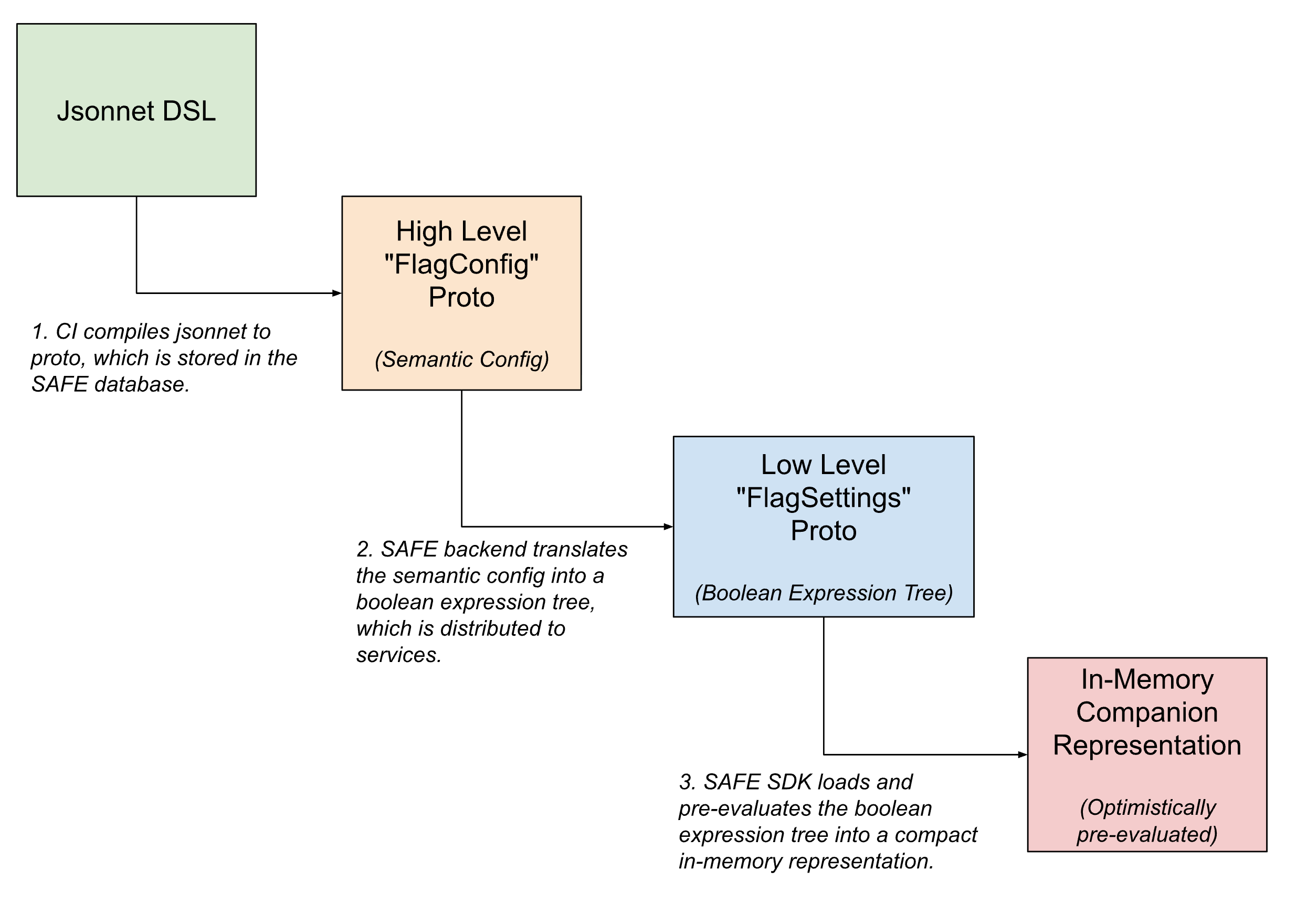

Inside the flag supply pipeline, the flag configurations take a number of types – being progressively translated from higher-level, human readable semantic configurations to compact machine-readable variations because the flag will get nearer to being evaluated.

Within the user-facing interface, flags are outlined utilizing Jsonnet with a customized DSL to permit for arbitrarily difficult flag configurations. This DSL has affordances for frequent use instances, like configuring a flag to rollout utilizing a pre-defined template, or for setting particular overrides on slices of visitors.

As soon as checked-in, this DSL is translated into an inner protobuf equal, which captures the semantic intent of the configuration. The SAFE backend then additional interprets this semantic configuration right into a boolean expression tree. A protobuf description of this boolean expression tree is delivered to the SAFE SDK, which masses it into an additional compacted in-memory illustration of the configuration.

UI

Most flag flips are initiated from an inner UI for managing SAFE flags. This UI permits customers to create, modify, and retire flags by way of a workflow that abstracts away a lot of the Jsonnet complexity for easy modifications whereas nonetheless offering entry to a lot of the full energy of the DSL for superior use instances.

A wealthy UI has additionally allowed us to floor extra quality-of-life options, equivalent to the flexibility to schedule flag flips, help for post-merge well being checks, and debugging tooling for figuring out latest flag flips which impacted a specific area or service.

Flag Config Evaluation

All SAFE flag modifications are created as regular Github PRs and are validated utilizing an in depth set of pre-merge validators. This set of validators has grown to embody dozens of particular person checks, as we have discovered extra about learn how to finest safeguard towards doubtlessly unsafe flag modifications. In the course of the preliminary introduction of SAFE, autopsy opinions of incidents that had been both brought on by or mitigated by way of a SAFE flag flip knowledgeable many of those checks. We now have checks that, for instance, require specialised evaluation on massive blast radius modifications, require {that a} specific service binary model be deployed earlier than a flag might be enabled, stop refined frequent misconfiguration patterns, and so forth.

Groups may also outline their very own flag- or team-specific pre-merge checks, to implement invariants for his or her configurations.

Dealing with Failure Modes

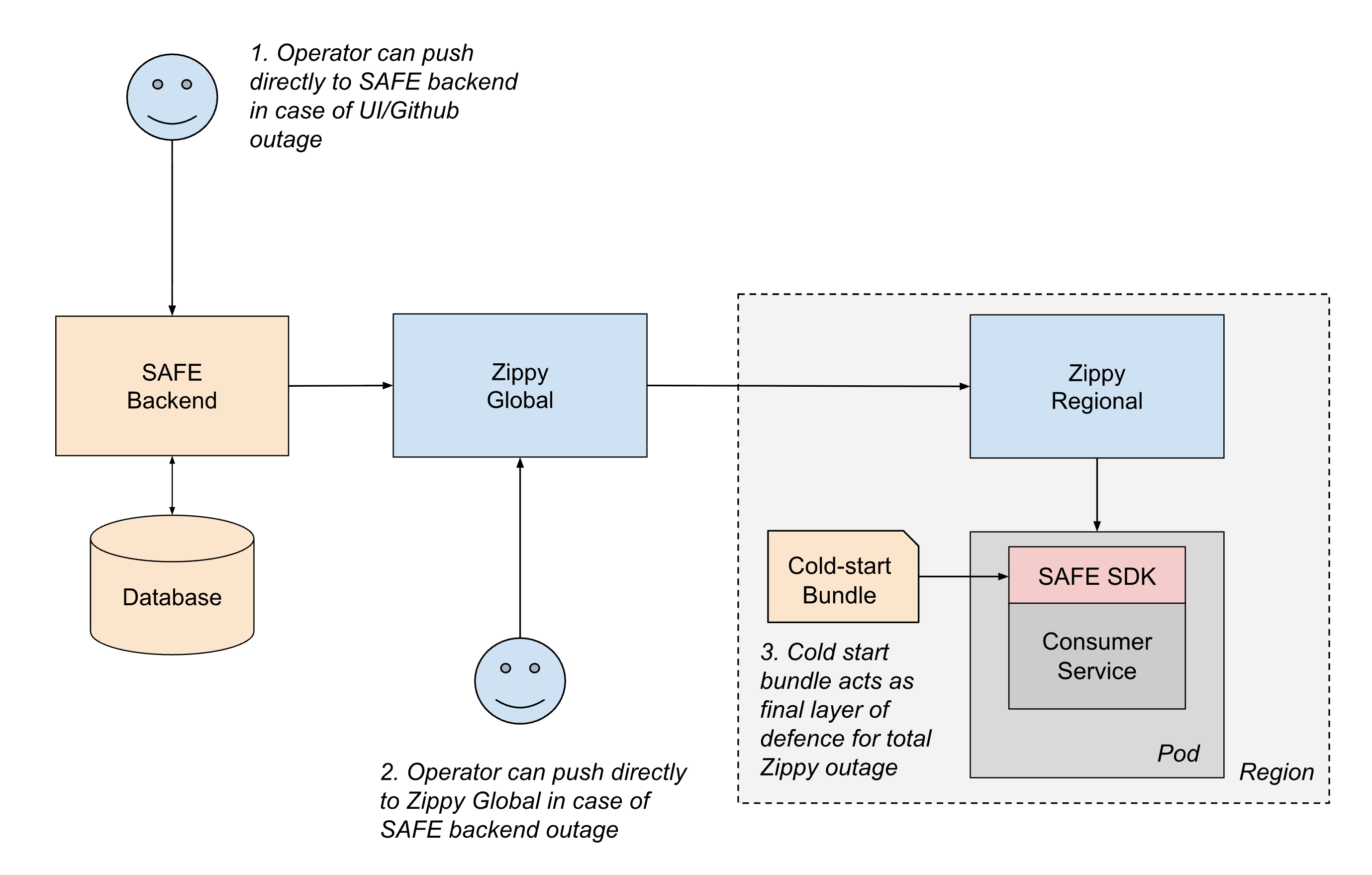

Given SAFE’s essential function in service stability, the system is designed with a number of layers of resilience to make sure continued operation even when components of the supply pipeline fail.

The commonest failure state of affairs includes disruptions to the configuration supply path. If something within the supply path leads to a failure to replace configurations, providers merely proceed serving their final recognized configuration till the supply path is restored. This “fail static” strategy ensures that present service conduct stays secure even throughout upstream outages.

For extra extreme situations, we preserve a number of fallback mechanisms:

- Out-of-band supply: If any piece of CI or Github push path is unavailable, operators can push configurations on to the SAFE backend utilizing emergency tooling.

- Regional failover: If the SAFE backend or Zippy World are down, operators can quickly push configurations on to Zippy Regional cases. Companies may also ballot cross-region to mitigate the affect of a single Zippy Regional outage.

- Chilly-start bundles: To deal with instances the place Zippy itself is unavailable throughout service startup, SAFE periodically distributes configuration bundles to providers through an artifact registry. Whereas these bundles could also be a number of hours stale, they supply enough backup for providers to begin safely moderately than blocking on dwell supply.

Inside the SAFE SDK itself, defensive design ensures that configuration errors have restricted blast radius. If a specific flag’s configuration is malformed, solely that single flag is affected. The SDK additionally maintains the contract of by no means throwing exceptions, and all the time fails open to the code default worth, so software builders don’t must deal with flag analysis as a fallible. The SDK additionally instantly alerts on-call engineers when any configuration parsing or analysis faults happen. As a result of maturity of SAFE and intensive pre-merge validation, such failures are actually extraordinarily rare in manufacturing.

This layered strategy to resilience ensures that SAFE degrades gracefully, and minimizes the danger of it turning into a single level of failure.

Classes Realized

Minimizing dependencies and layered redundant fallback scale back operational burden. Regardless of being deployed in and closely utilized by almost each compute floor at Databricks, the operational burden of sustaining SAFE has been fairly manageable. Including layered redundancies, such because the chilly begin bundle and the SDK’s “fail static” conduct, has made a lot of the SAFE structure self-healing.

Developer expertise is paramount. Scaling the “individuals side” of a strong flagging system required a powerful UX focus. SAFE is a mission essential system, usually used to mitigate incidents. As such, constructing a user-friendly UX for flipping flags throughout emergencies was excessive leverage. Adopting a product-focussed mindset led to fewer papercuts, much less confusion, and finally a decrease company-wide mean-time-to-recovery (MTTR) for incidents.

Make “finest practices” the low-friction route. One in all our largest learnings was that you simply can not solely doc finest practices and count on engineers to comply with them. Engineers have many competing priorities when delivery options. SAFE makes the secure path the simple path: gradual rollouts require much less effort and have extra quality-of-life options accessible than riskier enablement patterns. When the system incentivises safer conduct, the platform can nudge engineers in the direction of a tradition of accountable change administration.

Present State and Future Work

SAFE is now a matured inner platform inside Databricks, and is broadly used. The investments made into availability and developer expertise pay dividends as we see continued discount in each mean-time-to-resolution and blast radius manufacturing incidents by way of utilization of SAFE flags.

As Databricks’ product floor space continues to increase, the infrastructure primitives underlying these merchandise increase in each breadth and complexity. Consequently, there was important ongoing funding to make sure that SAFE helps all locations the place Databricks engineers write and deploy code.

In case you’re desirous about scaling mission-critical infrastructure like this, please discover open roles at Databricks!