It is a visitor put up by Thomas Cardenas, Employees Software program Engineer at Ancestry, in partnership with AWS.

Ancestry, the worldwide chief in household historical past and shopper genomics, makes use of household bushes, historic data, and DNA to assist individuals on their journeys of private discovery. Ancestry has the most important assortment of household historical past data, consisting of 40 billion data. They serve greater than 3 million subscribers and have over 23 million individuals of their rising DNA community. Their prospects can use this knowledge to find their household story.

Ancestry is proud to attach customers with their households previous and current. They assist individuals be taught extra about their very own id by studying about their ancestors. Customers construct a household tree by which we floor related data, historic paperwork, photographs, and tales which may comprise particulars about their ancestors. These artifacts are surfaced by Hints. The Hints dataset is likely one of the most attention-grabbing datasets at Ancestry. It’s used to alert customers that potential new data is on the market. The dataset has a number of shards, and there are at present 100 billion rows being utilized by machine studying fashions and analysts. Not solely is the dataset giant, it additionally modifications quickly.

On this put up, we share the very best practices that Ancestry used to implement an Apache Iceberg-based hints desk able to dealing with 100 billion rows with 7 million hourly modifications. The optimizations coated right here resulted in price reductions of 75%.

Overview of answer

Ancestry’s Enterprise Information Administration (EDM) crew confronted a important problem— present a unified, performant knowledge ecosystem that would serve various analytical workloads throughout monetary, advertising and marketing, and product analytics groups. The ecosystem wanted to assist every little thing from knowledge scientists coaching advice fashions to geneticists creating inhabitants research—all requiring entry to the identical Hints knowledge.

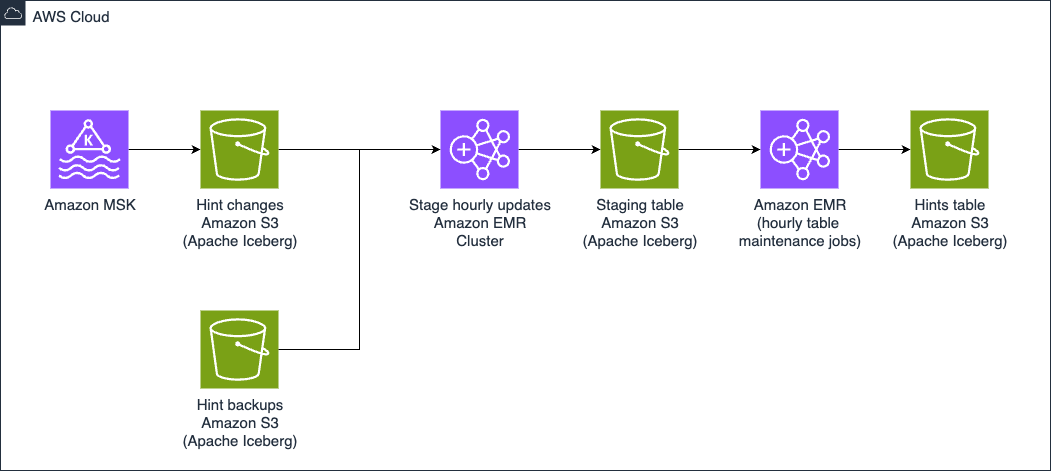

The ecosystem round Hints knowledge had been developed organically, with no well-defined structure. Groups independently accessed Hints knowledge by direct service calls, Kafka subject subscriptions, or warehouse queries, creating important knowledge duplication and pointless system load. To scale back price and enhance efficiency, EDM applied a centralized Apache Iceberg knowledge lake on Amazon Easy Storage Service (Amazon S3), with Amazon EMR offering the processing energy. This structure, proven within the following picture, creates a single supply of fact for the Hints dataset whereas utilizing Iceberg’s ACID transactions, schema evolution, and partition evolution capabilities to deal with scale and replace frequency.

Hints desk administration structure

Managing datasets exceeding one billion rows presents distinctive challenges, and Ancestry confronted this problem with the bushes assortment of 20–100 billion rows throughout a number of tables. At this scale, dataset updates require cautious execution to manage prices and stop reminiscence points. To unravel these challenges, EDM selected Amazon EMR on Amazon EC2 operating Spark to write down Iceberg tables on Amazon S3 for storage. With giant and regular Amazon EMR workloads, operating the clusters on Amazon EC2, versus Serverless, proved price efficient. EDM has scheduled an Apache Spark job to run each hour on their Amazon EMR on EC2. This job makes use of the merge operation to replace the Iceberg desk with lately modified rows. Performing updates like this on such a big dataset can simply result in runaway prices and out-of-memory errors.

Key optimization methods

The engineers wanted to allow quick, row-level updates with out impacting question efficiency or incurring substantial price. To attain this, Ancestry used a mixture of partitioning methods, desk configurations, Iceberg procedures, and incremental updates. The next is roofed intimately:

- Partitioning

- Sorting

- Merge-on-read

- Compaction

- Snapshot administration

- Storage-partitioned joins

Partitioning technique

Growing an efficient partitioning technique was essential for the 100-billion-row Hints desk. Iceberg helps varied partition transforms together with column worth, temporal features (12 months, month, day, hour), and numerical transforms (bucket, truncate). Following AWS finest practices, Ancestry rigorously analyzed question patterns to determine a partitioning method that will assist these queries whereas balancing these two competing issues:

- Too few partitions would drive queries to scan extreme knowledge, degrading efficiency and rising prices.

- Too many partitions would create small recordsdata and extreme metadata, inflicting administration overhead and slower question planning. It’s usually finest to keep away from parquet recordsdata smaller than 100 MB.

By question sample evaluation, Ancestry found that almost all analytical queries filtered on trace standing (notably pending standing) and trace kind. This perception led us to implement a two-level partitioning strategy-first on standing after which on kind, which dramatically lowered the quantity of knowledge scanned throughout typical queries.

Sorting

To additional optimize question efficiency, Ancestry applied strategic knowledge group inside partitions utilizing Iceberg’s type orders. Whereas Iceberg doesn’t keep excellent ordering, even approximate sorting considerably improves knowledge locality and compression ratios.

For the Hints desk with 100 billion rows, Ancestry confronted a novel problem: the first identifiers (PersonId and HintId) are high-cardinality numeric columns that will be prohibitively costly to type fully. The answer makes use of Iceberg’s truncate remodel perform to assist sorting on only a portion of the quantity, successfully creating one other partition by grouping a group of IDs collectively. For instance, we are able to specify truncate(100_000_000, hintId) to create teams of 100 million trace IDs, drastically bettering the efficiency of queries that specify that column.

Merge on learn

With 7 million modifications to the Hints desk occurring hourly, optimizing write efficiency turned important to the structure. Along with ensuring queries carried out properly, Ancestry additionally wanted to ensure our frequent updates would carry out properly in each time and value. It was rapidly found that the default copy-on-write (CoW) technique, which copies a whole file when any a part of it modifications, was too gradual and costly for his or her use case. Ancestry was capable of get the efficiency we wanted by as a substitute specifying the merge-on-read (MoR) replace technique, which maintains new data in diff recordsdata which might be reconciled on learn. The big updates that occur each hour led us to decide on sooner updates at the price of slower reads.

File compaction

The frequent updates imply recordsdata are always needing to be re-written to keep up efficiency. Iceberg offers the rewrite_data_files process for compaction, however default configurations proved inadequate for our scale. Leaving the default configuration in place, the rewrite operation wrote to 5 partitions at a time and didn’t meet our efficiency goal. We discovered that rising the concurrent writes improved efficiency. We used the next set of parameters, setting a comparatively excessive max-concurrent-file-group-rewrites worth of 100 to extra effectively take care of our 1000’s of partitions. The default of rewriting just one file at a time couldn’t sustain with the frequency of our updates.

Key optimizations in Ancestry’s method embrace:

- Excessive concurrency: We elevated

max-concurrent-file-group-rewritesfrom the default 5 to 100, enabling parallel processing of our 1000’s of partitions. This elevated compute prices however was mandatory to assist be certain that the roles completed. - Resilience at scale: We enabled

partial-progressto create compaction checkpoints, important when working at our scale the place failures are notably pricey. - Complete delta elimination: Setting

rewrite-alltotruehelps be certain that each knowledge recordsdata and delete recordsdata are compacted, stopping the buildup of delete recordsdata. By default, the delete recordsdata created as a part of this technique aren’t re-written and would proceed to build up, slowing queries.

We arrived at these optimizations by successive trials and evaluations. For instance, with our very giant dataset, we found that we may use a WHERE clause to restrict re-writes to a single partition. Based mostly on the partitions, we see different execution occasions and useful resource utilization. For some partitions, we wanted to cut back concurrency to keep away from operating into out of reminiscence errors.

Snapshot administration

Iceberg tables keep snapshots to protect the historical past of the desk, permitting you to time journey by the modifications. As these snapshots accrue, they add to storage prices and degrade efficiency. Because of this sustaining an Iceberg desk requires you to periodically name the expire_snapshots process. We discovered we wanted to allow concurrency for snapshot administration in order that it might full in a well timed method:

Think about steadiness efficiency, price, and the necessity to preserve historic data relying in your use case. Whenever you accomplish that, word that there’s a table-level setting for optimum snapshot age which might override the retain_last parameter and retain solely the energetic snapshot.

Lowering shuffle with Storage-Partitioned Joins

We use Storage-Partitioned Joins (SPJ) in Iceberg tables to reduce costly shuffles throughout knowledge processing. SPJ is a complicated Iceberg characteristic (accessible in Spark 3.3 or later with Iceberg 1.2 or later) that makes use of the bodily storage structure of tables to remove shuffle operations fully. For our Hints replace pipeline, this optimization was transformational.

SPJ is very helpful throughout MERGE INTO operations, the place datasets have equivalent partitioning. Correct configuration helps guarantee efficient use of SPJ to optimize joins.

SPJ has a number of necessities corresponding to each tables should be Iceberg partitioned the identical method and joined on the partition key. Then Iceberg will know that it doesn’t should shuffle the info when the tables are loaded. This even works when there are a distinct variety of partitions on both facet.

Updates to the Hints database are first staged within the Trace Adjustments database the place knowledge is reworked from the unique Kafka knowledge format into the way it will look within the goal (Hints) desk. It is a momentary Iceberg desk the place we’re capable of carry out audits utilizing Write-Audit-Publish (WAP) sample. Along with utilizing the WAP sample we’re in a position to make use of the SPJ performance.

The Hints knowledge pipeline

Lowering full-table scans

One other technique to cut back shuffle is minimizing the info concerned in joins by dynamically pushing down filters. In manufacturing, these filters fluctuate between batches, so a multi-step operation is usually mandatory for organising merges. The next instance code first limits its scope by setting minimal and most values for the ID, then performs an replace or delete to the goal desk relying on whether or not a goal worth exists.

This system reduces price in a number of methods: the bounded merge reduces the variety of affected rows, it permits for predicate pushdown optimization, which filters on the storage layer, and it reduces shuffle operations compared with a be a part of.

Further insights

Aside from the Hints desk, we’ve applied over 1,000 Iceberg tables in our knowledge ecosystem. The next are some key insights that we noticed:

- Updating a desk utilizing

MERGEis usually the most costly motion, so that is the place we spent probably the most time optimizing. It was nonetheless our greatest possibility. - Utilizing advanced knowledge varieties may help co-locate related knowledge within the desk.

- Monitor prices of every pipeline as a result of whereas following good apply you’ll be able to stumble throughout belongings you miss which might be inflicting prices to extend.

Conclusion

Organizations can use Apache Iceberg tables on Amazon S3 with Amazon EMR to handle huge datasets with frequent updates. Many purchasers will be capable of obtain glorious efficiency with a low upkeep burden by utilizing the AWS Glue desk optimizer for computerized, asynchronous compaction. Some prospects, like Ancestry, would require customized optimizations of their upkeep procedures to fulfill their price and efficiency targets. These prospects ought to begin with a cautious evaluation of question patterns to develop a partitioning technique to reduce the quantity of knowledge that must be learn and processed. Replace frequency and latency necessities will dictate different decisions, like whether or not merge-on-read or copy-on-write is the higher technique.

In case your group faces related challenges with excessive volumes of knowledge requiring frequent updates, you need to use a mixture of Apache Iceberg’s superior options with AWS providers like Amazon EMR Serverless, Amazon S3, and AWS Glue to construct a very trendy knowledge lake that delivers the dimensions, efficiency, and cost-efficiency you want.

Additional studying

Concerning the authors