You have got heard the well-known quote “Knowledge is the brand new Oil” by British mathematician Clive Humby it’s the most influential quote that describes the significance of knowledge within the twenty first century however, after the explosive improvement of the Giant Language Mannequin and its coaching what we don’t have proper is the info. as a result of the event pace and coaching pace of the LLM mannequin practically surpass the info era pace of people. The answer is making the info extra refined and particular to the duty or the Artificial information era. The previous is the extra area skilled loaded duties however the latter is extra outstanding to the large starvation of at present’s issues.

The high-quality coaching information stays a important bottleneck. This weblog put up explores a sensible strategy to producing artificial information utilizing LLama 3.2 and Ollama. It is going to display how we are able to create structured academic content material programmatically.

Studying Outcomes

- Perceive the significance and strategies of Native Artificial Knowledge Technology for enhancing machine studying mannequin coaching.

- Discover ways to implement Native Artificial Knowledge Technology to create high-quality datasets whereas preserving privateness and safety.

- Achieve sensible information of implementing sturdy error dealing with and retry mechanisms in information era pipelines.

- Study JSON validation, cleansing strategies, and their function in sustaining constant and dependable outputs.

- Develop experience in designing and using Pydantic fashions for making certain information schema integrity.

What’s Artificial Knowledge?

Artificial information refers to artificially generated info that mimics the traits of real-world information whereas preserving important patterns and statistical properties. It’s created utilizing algorithms, simulations, or AI fashions to handle privateness considerations, increase restricted information, or check programs in managed situations. Not like actual information, artificial information could be tailor-made to particular necessities, making certain variety, steadiness, and scalability. It’s extensively utilized in fields like machine studying, healthcare, finance, and autonomous programs to coach fashions, validate algorithms, or simulate environments. Artificial information bridges the hole between information shortage and real-world purposes whereas decreasing moral and compliance dangers.

Why We Want Artificial Knowledge At present?

The demand for artificial information has grown exponentially because of a number of elements

- Knowledge Privateness Laws: With GDPR and comparable rules, artificial information provides a secure various for improvement and testing

- Price Effectivity: COllecting and annotating actual information is pricey and time-consuming.

- Scalabilities: Artificial information could be generated in Giant portions with managed variations

- Edge Case Protection: We are able to generate information for uncommon situations that is likely to be tough to gather naturally

- Fast Prototyping: Fast iteration on ML fashions with out ready for actual information assortment.

- Much less Biased: The info collected from the true world could also be error inclined and filled with gender biases, racistic textual content, and never secure for youngsters’s phrases so to make a mannequin with any such information, the mannequin’s habits can also be inherently with these biases. With artificial information, we are able to management these behaviors simply.

Impression on LLM and Small LM Efficiency

Artificial information has proven promising ends in bettering each giant and small language fashions

- Effective-tuning Effectivity: Fashions fine-tuned on high-quality artificial information usually present comparable efficiency to these skilled on actual information

- Area Adaptation: Artificial information helps bridge area gaps in specialised purposes

- Knowledge Augmentation: Combining artificial and actual information usually yields higher outcomes utilizing both alone.

Undertaking Construction and Setting Setup

Within the following part, we’ll break down the undertaking structure and information you thru configuring the required setting.

undertaking/

├── major.py

├── necessities.txt

├── README.md

└── english_QA_new.jsonNow we are going to arrange our undertaking setting utilizing conda. Observe beneath steps

Create Conda Setting

$conda create -n synthetic-data python=3.11

# activate the newly created env

$conda activate synthetic-dataSet up Libraries in conda env

pip set up pydantic langchain langchain-community

pip set up langchain-ollamaNow we’re all set as much as begin the code implementation

Undertaking Implementation

On this part, we’ll delve into the sensible implementation of the undertaking, protecting every step intimately.

Importing Libraries

Earlier than beginning the undertaking we are going to create a file identify major.py within the undertaking root and import all of the libraries on that file:

from pydantic import BaseModel, Discipline, ValidationError

from langchain.prompts import PromptTemplate

from langchain_ollama import OllamaLLM

from typing import Record

import json

import uuid

import re

from pathlib import Path

from time import sleepNow it’s time to proceed the code implementation half on the primary.py file

First, we begin with implementing the Knowledge Schema.

EnglishQuestion information schema is a Pydantic mannequin that ensures our generated information follows a constant construction with required fields and computerized ID era.

Code Implementation

class EnglishQuestion(BaseModel):

id: str = Discipline(

default_factory=lambda: str(uuid.uuid4()),

description="Distinctive identifier for the query",

)

class: str = Discipline(..., description="Query Sort")

query: str = Discipline(..., description="The English language query")

reply: str = Discipline(..., description="The right reply to the query")

thought_process: str = Discipline(

..., description="Clarification of the reasoning course of to reach on the reply"

)Now, that we’ve created the EnglishQuestion information class.

Second, we are going to begin implementing the QuestionGenerator class. This class is the core of undertaking implementation.

QuestionGenerator Class Construction

class QuestionGenerator:

def __init__(self, model_name: str, output_file: Path):

move

def clean_json_string(self, textual content: str) -> str:

move

def parse_response(self, outcome: str) -> EnglishQuestion:

move

def generate_with_retries(self, class: str, retries: int = 3) -> EnglishQuestion:

move

def generate_questions(

self, classes: Record[str], iterations: int

) -> Record[EnglishQuestion]:

move

def save_to_json(self, query: EnglishQuestion):

move

def load_existing_data(self) -> Record[dict]:

moveLet’s step-by-step implement the important thing strategies

Initialization

Initialize the category with a language mannequin, a immediate template, and an output file. With this, we are going to create an occasion of OllamaLLM with model_name and arrange a PromptTemplate for producing QA in a strict JSON format.

Code Implementation:

def __init__(self, model_name: str, output_file: Path):

self.llm = OllamaLLM(mannequin=model_name)

self.prompt_template = PromptTemplate(

input_variables=["category"],

template="""

Generate an English language query that checks understanding and utilization.

Deal with {class}.Query shall be like fill within the blanks,One liner and mut not be MCQ sort. write Output on this strict JSON format:

{{

"query": "",

"reply": "",

"thought_process": ""

}}

Don't embody any textual content outdoors of the JSON object.

""",

)

self.output_file = output_file

self.output_file.contact(exist_ok=True) JSON Cleansing

Responses we are going to get from the LLM in the course of the era course of can have many pointless additional characters which can poise the generated information, so you need to move these information via a cleansing course of.

Right here, we are going to repair the frequent formatting subject in JSON keys/values utilizing regex, changing problematic characters reminiscent of newline, and particular characters.

Code implementation:

def clean_json_string(self, textual content: str) -> str:

"""Improved model to deal with malformed or incomplete JSON."""

begin = textual content.discover("{")

finish = textual content.rfind("}")

if begin == -1 or finish == -1:

increase ValueError(f"No JSON object discovered. Response was: {textual content}")

json_str = textual content[start : end + 1]

# Take away any particular characters which may break JSON parsing

json_str = json_str.exchange("n", " ").exchange("r", " ")

json_str = re.sub(r"[^x20-x7E]", "", json_str)

# Repair frequent JSON formatting points

json_str = re.sub(

r'(?Response Parsing

The parsing technique will use the above cleansing course of to wash the responses from the LLM, validate the response for consistency, convert the cleaned JSON right into a Python dictionary, and map the dictionary to an EnglishQuestion object.

Code Implementation:

def parse_response(self, outcome: str) -> EnglishQuestion:

"""Parse the LLM response and validate it towards the schema."""

cleaned_json = self.clean_json_string(outcome)

parsed_result = json.hundreds(cleaned_json)

return EnglishQuestion(**parsed_result)Knowledge Persistence

For, persistent information era, though we are able to use some NoSQL Databases(MongoDB, and many others) for this, right here we use a easy JSON file to retailer the generated information.

Code Implementation:

def load_existing_data(self) -> Record[dict]:

"""Load current questions from the JSON file."""

attempt:

with open(self.output_file, "r") as f:

return json.load(f)

besides (FileNotFoundError, json.JSONDecodeError):

return []Strong Technology

On this information era section, we’ve two most essential strategies:

- Generate with retry mechanism

- Query Technology technique

The aim of the retry mechanism is to pressure automation to generate a response in case of failure. It tries producing a query a number of instances(the default is 3 times) and can log errors and add a delay between retries. It is going to additionally increase an exception if all makes an attempt fail.

Code Implementation:

def generate_with_retries(self, class: str, retries: int = 3) -> EnglishQuestion:

for try in vary(retries):

attempt:

outcome = self.prompt_template | self.llm

response = outcome.invoke(enter={"class": class})

return self.parse_response(response)

besides Exception as e:

print(

f"Try {try + 1}/{retries} failed for class '{class}': {e}"

)

sleep(2) # Small delay earlier than retry

increase ValueError(

f"Didn't course of class '{class}' after {retries} makes an attempt."

)The Query era technique will generate a number of questions for a listing of classes and save them within the storage(right here JSON file). It is going to iterate over the classes and name generating_with_retries technique for every class. And within the final, it’s going to save every efficiently generated query utilizing save_to_json technique.

def generate_questions(

self, classes: Record[str], iterations: int

) -> Record[EnglishQuestion]:

"""Generate a number of questions for a listing of classes."""

all_questions = []

for _ in vary(iterations):

for class in classes:

attempt:

query = self.generate_with_retries(class)

self.save_to_json(query)

all_questions.append(query)

print(f"Efficiently generated query for class: {class}")

besides (ValidationError, ValueError) as e:

print(f"Error processing class '{class}': {e}")

return all_questionsDisplaying the outcomes on the terminal

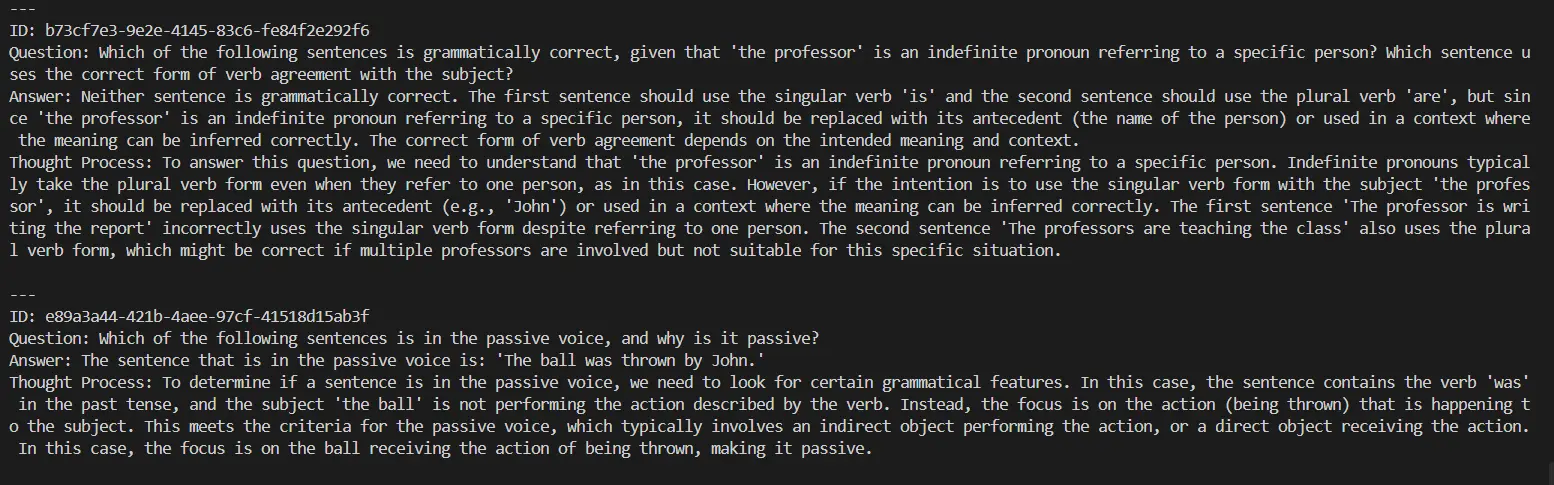

To get some thought of what are the responses producing from LLM right here is an easy printing operate.

def display_questions(questions: Record[EnglishQuestion]):

print("nGenerated English Questions:")

for query in questions:

print("n---")

print(f"ID: {query.id}")

print(f"Query: {query.query}")

print(f"Reply: {query.reply}")

print(f"Thought Course of: {query.thought_process}")Testing the Automation

Earlier than operating your undertaking create an english_QA_new.json file on the undertaking root.

if __name__ == "__main__":

OUTPUT_FILE = Path("english_QA_new.json")

generator = QuestionGenerator(model_name="llama3.2", output_file=OUTPUT_FILE)

classes = [

"word usage",

"Phrasal Ver",

"vocabulary",

"idioms",

]

iterations = 2

generated_questions = generator.generate_questions(classes, iterations)

display_questions(generated_questions)

Now, Go to the terminal and kind:

python major.pyOutput:

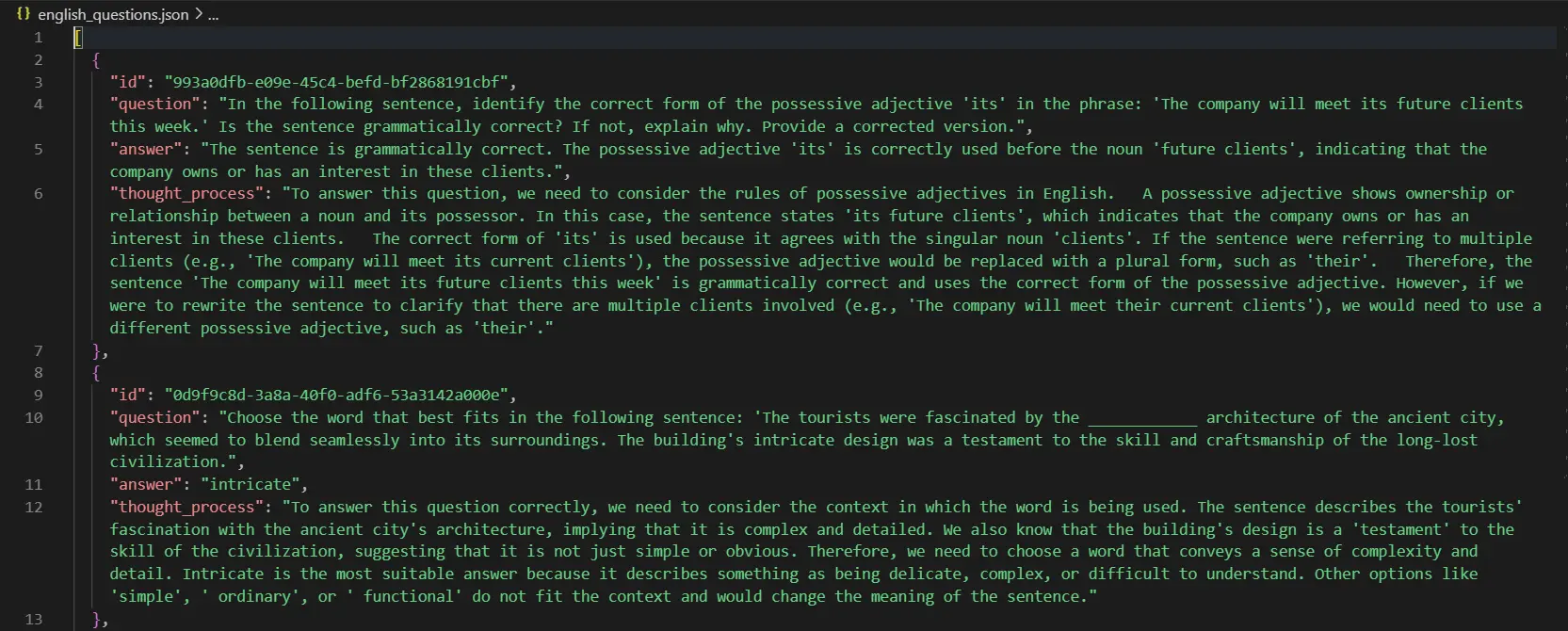

These questions shall be saved in your undertaking root. Saved Query seem like:

All of the code used on this undertaking is right here.

Conclusion

Artificial information era has emerged as a strong answer to handle the rising demand for high-quality coaching datasets within the period of fast developments in AI and LLMs. By leveraging instruments like LLama 3.2 and Ollama, together with sturdy frameworks like Pydantic, we are able to create structured, scalable, and bias-free datasets tailor-made to particular wants. This strategy not solely reduces dependency on expensive and time-consuming real-world information assortment but additionally ensures privateness and moral compliance. As we refine these methodologies, artificial information will proceed to play a pivotal function in driving innovation, bettering mannequin efficiency, and unlocking new potentialities in numerous fields.

Key Takeaways

- Native Artificial Knowledge Technology allows the creation of numerous datasets that may enhance mannequin accuracy with out compromising privateness.

- Implementing Native Artificial Knowledge Technology can considerably improve information safety by minimizing reliance on real-world delicate information.

- Artificial information ensures privateness, reduces biases, and lowers information assortment prices.

- Tailor-made datasets enhance adaptability throughout numerous AI and LLM purposes.

- Artificial information paves the best way for moral, environment friendly, and progressive AI improvement.

Steadily Requested Questions

A. Ollama supplies native deployment capabilities, decreasing price and latency whereas providing extra management over the era course of.

A. To keep up high quality, The implementation makes use of Pydantic validation, retry mechanisms, and JSON cleansing. Further metrics and keep validation could be carried out.

A. Native LLMs may need lower-quality output in comparison with bigger fashions, and era pace could be restricted by native computing assets.

A. Sure, artificial information ensures privateness by eradicating identifiable info and promotes moral AI improvement by addressing information biases and decreasing the dependency on real-world delicate information.

A. Challenges embody making certain information realism, sustaining area relevance, and aligning artificial information traits with real-world use circumstances for efficient mannequin coaching.