Organizations increasingly need to ingest and gain faster access to insights from SAP systems without maintaining complex data pipelines. AWS Glue zero-ETL with SAP now supports data ingestion and replication from SAP data sources such as Operational Data Provisioning (ODP) managed SAP Business Warehouse (BW) extractors, Advanced Business Application Programming (ABAP) Core Data Services (CDS) views, and other non-ODP data sources. Zero-ETL data replication and schema synchronization writes extracted data to AWS services like Amazon Redshift, Amazon SageMaker lakehouse, and Amazon S3 Tables, alleviating the need for manual pipeline development. This creates a foundation for AI-driven insights when used with AWS services such as Amazon Q and Amazon Quick Suite, where you can use natural language queries to analyze SAP data, create AI agents for automation, and generate contextual insights across your enterprise data landscape.

In this post, we show how to create and monitor a zero-ETL integration with various ODP and non-ODP SAP sources.

Solution overview

The key component of SAP integration is the AWS Glue SAP OData connector, which is designed to work with SAP data structures and protocols. The connector provides connectivity to ABAP-based SAP systems and adheres to the SAP security and governance frameworks. Key features of the AWS SAP connector include:

- Uses the OData protocol for data extraction from various SAP NetWeaver systems

- Provides managed replication for complex SAP data models such as BW extractors (such as 2LIS_02_ITM) and CDS views (such as C_PURCHASEORDERITEMDEX)

- Handles both ODP and non-ODP entities using the SAP change data capture (CDC) technology

The SAP connector works with both AWS Glue Studio and AWS managed replication with zero-ETL. Self-managed replication in AWS Glue Studio provides full control over data processing units, replication frequencies, price-performance tuning, page size, data filters, destinations, file formats, data transformation, and writing your own code with a chosen runtime. AWS managed data replication in zero-ETL removes the burden of custom configurations and provides an AWS managed alternative, allowing replication frequencies between 15 minutes and 6 days. The following solution architecture demonstrates the approaches of ingesting ODP and non-ODP SAP data using zero-ETL from various SAP sources and writing to Amazon Redshift, SageMaker lakehouse, and S3 Tables.

Change data capture for ODP sources

SAP ODP is a data extraction framework that enables incremental data replication from SAP source systems to target systems. The ODP framework lets applications (subscribers) request data from supported objects, such as BW extractors, CDS views, and BW objects, in an incremental manner.

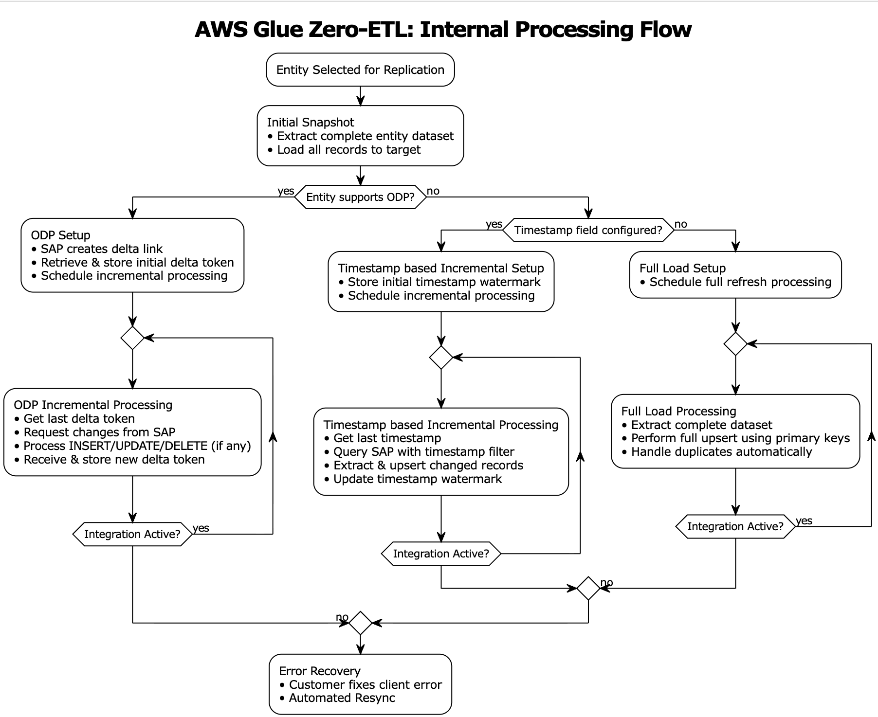

AWS Glue zero-ETL data ingestion begins with a full initial load of entity data to establish the baseline dataset in the target system. After the initial full load is complete, SAP provisions a delta queue called the Operational Delta Queue (ODQ), which captures data changes, including deletions. The delta token is sent to the subscriber during the initial load and persisted within the zero-ETL internal state management system.

The incremental processing retrieves the last saved delta token from the state store, then sends a delta change request to SAP with this token using the OData protocol. The system processes the returned INSERT/UPDATE/DELETE operations through the SAP ODQ mechanism and receives a new delta token from SAP even in scenarios where no records were changed. This new token is persisted in the state management system after successful ingestion. In error scenarios, the system preserves the existing delta token state, enabling retry mechanics without data loss.
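The token handling described above can be illustrated with a minimal Python sketch. It is not the AWS Glue implementation: `StateStore`, `fetch_delta`, and the in-memory `target` dictionary are hypothetical stand-ins for the zero-ETL state management system, the OData delta request, and the target table. The point it shows is the ordering: the new token is saved only after the changes are applied, so a failed cycle retries from the same token.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical shapes for illustration only; not AWS Glue or SAP APIs.
Change = Tuple[str, str, Optional[dict]]            # (operation, key, row)
FetchDelta = Callable[[str], Tuple[List[Change], str]]


@dataclass
class StateStore:
    """Stand-in for the zero-ETL internal state management system."""
    delta_token: str


def apply_changes(target: Dict[str, dict], changes: List[Change]) -> None:
    """Apply INSERT/UPDATE/DELETE operations to an in-memory target."""
    for operation, key, row in changes:
        if operation == "DELETE":
            target.pop(key, None)
        else:
            # INSERT and UPDATE are both applied as an upsert on the key.
            target[key] = row


def run_incremental_cycle(state: StateStore, fetch_delta: FetchDelta,
                          target: Dict[str, dict]) -> bool:
    """Run one cycle; return True on success, False if the cycle failed."""
    try:
        # Request changes since the last saved token.
        changes, new_token = fetch_delta(state.delta_token)
        # Work on a copy so a failure leaves the target untouched.
        staged = dict(target)
        apply_changes(staged, changes)
    except Exception:
        # The token is not advanced, so the next run retries the same delta.
        return False
    target.clear()
    target.update(staged)
    # A new token is stored even when the change list is empty.
    state.delta_token = new_token
    return True
```

Because the token is advanced as the last step, a retry after a failure asks SAP for the same delta again rather than skipping it.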

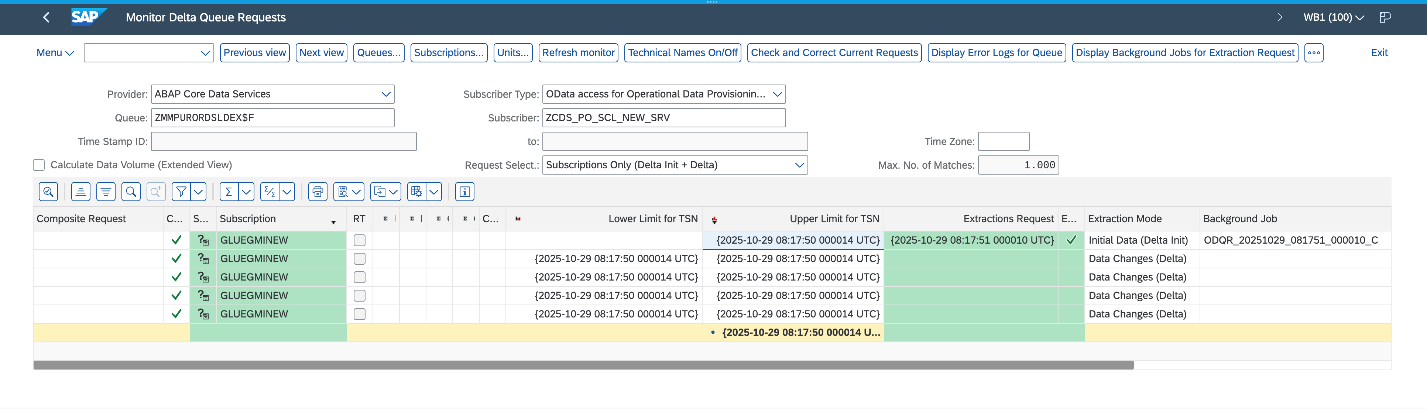

The following screenshot illustrates a successful initial load followed by four incremental data ingestions on the SAP system.

Change data capture for non-ODP sources

Non-ODP structures are OData services that aren’t ODP enabled. These are APIs, functions, views, or CDS views that are exposed directly without the ODP framework. Data can still be extracted from these services; however, incremental data extraction depends on the nature of the object. For example, if the object contains a “last modified date” field, that field is used to track changes and provide incremental data extraction.

AWS Glue zero-ETL provides out-of-the-box incremental data extraction for non-ODP OData services, provided the entity includes a field to track changes (last modified date or time). For such SAP services, zero-ETL provides two approaches for data ingestion: timestamp-based incremental processing and full load.

Timestamp-based incremental processing

Timestamp-based incremental processing uses the timestamp fields that customers configure in zero-ETL to optimize the data extraction process. The zero-ETL system establishes a starting timestamp that serves as the foundation for subsequent incremental processing operations. This timestamp, called the watermark, is crucial for data consistency. The query construction mechanism builds OData filters based on timestamp comparisons. These queries extract records that were created or modified since the last successful processing execution. The system’s watermark management tracks the highest timestamp value from each processing cycle and uses it as the starting point for subsequent executions. The zero-ETL system performs an upsert on the target using the configured primary keys. This approach handles updates accurately while maintaining data integrity. After each successful target system update, the watermark timestamp is advanced, creating a reliable checkpoint for future processing cycles.

However, the timestamp-based approach has a limitation: it can’t track physical deletions because SAP systems don’t maintain deletion timestamps. In scenarios where timestamp fields are either unavailable or not configured, the system transitions to a full load with upsert processing.
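As a rough illustration of the watermark cycle, the following Python sketch builds a filter from the current watermark, upserts a batch on its primary keys, and advances the watermark to the highest timestamp seen. The function names and the field name `LastChangeDateTime` are hypothetical, and the filter uses OData V4 literal syntax as an assumption; OData V2 services write the literal as `datetime'...'` instead. The exact comparison operator that zero-ETL uses is not stated in this post, so `gt` is also an assumption.

```python
from datetime import datetime, timezone
from typing import Dict, List, Sequence, Tuple


def build_odata_filter(field: str, watermark: datetime) -> str:
    """Build a $filter expression selecting rows changed after the watermark.

    Assumes OData V4 literal syntax and a timezone-aware watermark.
    """
    stamp = watermark.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
    return f"{field} gt {stamp}"


def apply_batch(rows: List[dict], target: Dict[Tuple, dict],
                key_fields: Sequence[str], ts_field: str,
                watermark: datetime) -> datetime:
    """Upsert rows on their primary key and return the advanced watermark."""
    for row in rows:
        key = tuple(row[name] for name in key_fields)
        target[key] = row  # insert new keys, overwrite existing ones
    # The highest timestamp in the batch becomes the next starting point;
    # an empty batch leaves the watermark where it was.
    newest = max((row[ts_field] for row in rows), default=watermark)
    return max(newest, watermark)
```

In this sketch a row that was physically deleted in SAP never shows up in a batch, which is why the target keeps it: the limitation described above.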

Full load

The full load approach serves as both a standalone approach and a fallback mechanism when timestamp-based processing isn’t feasible. This method extracts the complete entity dataset during each processing cycle, making it suitable for scenarios where change tracking isn’t available or required. The extracted dataset is upserted in the target system. The upsert processing logic handles both new record insertions and updates to existing records.
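The way the ingestion modes relate to each other can be summarized in a few lines of Python. This is only a sketch of the rules stated in this post (delta tokens for ODP entities that offer them, timestamp-based processing when a timestamp field is configured, full load otherwise); `LoadMode` and `select_load_mode` are hypothetical names, not part of any AWS API.

```python
from enum import Enum
from typing import Optional


class LoadMode(Enum):
    DELTA_TOKEN = "delta_token"                       # ODP entities
    TIMESTAMP_INCREMENTAL = "timestamp_incremental"   # non-ODP with a timestamp field
    FULL_LOAD = "full_load"                           # fallback


def select_load_mode(odp_delta_capable: bool,
                     timestamp_field: Optional[str]) -> LoadMode:
    """Pick the ingestion mode for one entity."""
    if odp_delta_capable:
        # ODP entities that offer a delta token always use it.
        return LoadMode.DELTA_TOKEN
    if timestamp_field:
        # Non-ODP entity with a configured change-tracking field.
        return LoadMode.TIMESTAMP_INCREMENTAL
    # No timestamp field available or configured: full load with upsert.
    return LoadMode.FULL_LOAD
```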

When to choose incremental or full load

The timestamp-based incremental processing approach offers the best performance and resource utilization for large datasets with frequent updates. Because only modified records are transferred, data transfer volumes and network traffic are reduced. This optimization translates directly into lower operational costs. The full load with upsert keeps data synchronized in scenarios where incremental processing isn’t feasible.

Together, these approaches form a complete solution for zero-ETL integration with non-ODP SAP structures, addressing the varied requirements of enterprise data integration scenarios. Organizations should evaluate their specific use cases, data volumes, and performance requirements when choosing between the two.

The following diagram illustrates the SAP data ingestion workflow.

Observing SAP zero-ETL integrations

AWS Glue maintains state management, logs, and metrics using Amazon CloudWatch logs. For instructions to configure observability, refer to Monitoring an integration. Make sure AWS Identity and Access Management (IAM) roles are configured for log delivery. The integration is monitored on both sides: source ingestion and writing to the selected target.

Monitoring source ingestion

The integration of AWS Glue zero-ETL with CloudWatch provides monitoring capabilities to track and troubleshoot the data integration processes. Through CloudWatch, you can access detailed logs, metrics, and events that help identify issues, monitor performance, and maintain the operational health of your SAP data integrations. Let’s look at a few success and error scenarios.

Scenario 1: Missing permissions on your role

This error occurred during a data integration process in AWS Glue when attempting to access SAP data. The connection encountered a CLIENT_ERROR with a 400 Bad Request status code, indicating that the role has missing permissions.

Scenario 2: Broken delta links

The CloudWatch log indicates an issue with missing delta tokens during data synchronization from SAP to AWS Glue. The error occurs when attempting to access the SAP sales document item table FactsOfCSDSLSDOCITMDX through the OData service. The absence of delta tokens, which are needed for incremental data loading and tracking changes, resulted in a CLIENT_ERROR (400 Bad Request) when the system tried to open the data extraction API RODPS_REPL_ODP_OPEN.

Scenario 3: Client errors on SAP data ingestion

This CloudWatch log shows a client exception scenario where the SAP entity EntityOf0VENDOR_ATTR can’t be located or accessed through the OData service. This CLIENT_ERROR occurs when the AWS Glue connector attempts to parse the response from the SAP system but fails, either because the entity doesn’t exist in the source SAP system or because the SAP instance is temporarily unavailable.
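All three scenarios surface as CLIENT_ERROR entries, so one way to find them is to filter the integration’s log group for that term. The following Python sketch pages through the CloudWatch Logs `filter_log_events` API; the helper name `iter_client_errors` is hypothetical, and the log group name depends on how log delivery was configured for your integration.

```python
from typing import Any, Dict, Iterator


def iter_client_errors(logs_client: Any, log_group: str,
                       start_ms: int) -> Iterator[Dict[str, Any]]:
    """Yield log events containing the term CLIENT_ERROR.

    logs_client is expected to behave like boto3.client("logs").
    start_ms is the earliest event time in epoch milliseconds.
    """
    kwargs: Dict[str, Any] = {
        "logGroupName": log_group,
        "filterPattern": '"CLIENT_ERROR"',  # quoted term match
        "startTime": start_ms,
    }
    while True:
        page = logs_client.filter_log_events(**kwargs)
        yield from page.get("events", [])
        token = page.get("nextToken")
        if not token:
            break
        kwargs["nextToken"] = token  # continue with the next page


# Usage (requires boto3 and AWS credentials; the log group is a placeholder):
#   import boto3
#   for event in iter_client_errors(boto3.client("logs"), "<your-log-group>", 0):
#       print(event["timestamp"], event["message"])
```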

Monitoring target write

Zero-ETL employs monitoring mechanisms that depend on the target system. For Amazon Redshift targets, it uses the svv_integration system view, which provides detailed information about integration status, job execution, and data movement statistics. When working with SageMaker lakehouse targets, zero-ETL tracks integration states through the zetl_integration_table_state table, which maintains metadata about synchronization status, timestamps, and execution details. Additionally, you can use CloudWatch logs to monitor the integration progress, capturing information about successful commits, metadata updates, and potential issues during the data writing process.

Scenario 1: Successful processing on SageMaker lakehouse target

The CloudWatch logs show successful data synchronization activity for the plant table using CDC mode. The first log entry (IngestionCompleted) confirms the successful completion of the ingestion process at timestamp 1757221555568, with a last sync timestamp of 1757220991999. The second log (IngestionTableStatistics) provides detailed statistics of the data changes, showing that during this CDC sync 300 new records were inserted, 8 records were updated, and 2 records were deleted from the target database gluezetl. This level of detail helps in monitoring the volume and types of changes being propagated to the target system.
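The timestamps in these log entries are easier to reason about as dates. Assuming they are Unix epoch values in milliseconds (the post doesn’t state the unit, but the magnitude is consistent with it), a short Python sketch converts them and computes the gap between the last sync and the ingestion completion:

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def from_epoch_ms(ms: int) -> datetime:
    """Convert epoch milliseconds to a UTC datetime using integer arithmetic."""
    return EPOCH + timedelta(milliseconds=ms)


completed = from_epoch_ms(1757221555568)   # IngestionCompleted timestamp
last_sync = from_epoch_ms(1757220991999)   # last sync timestamp

print(completed.isoformat(timespec="milliseconds"))  # 2025-09-07T05:05:55.568+00:00
print(last_sync.isoformat(timespec="milliseconds"))  # 2025-09-07T04:56:31.999+00:00
print((completed - last_sync).total_seconds())       # 563.569
```

Under that assumption, the ingestion completed a little over nine minutes after the last sync point.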

Scenario 2: Metrics on Amazon SageMaker lakehouse target

The zetl_integration_table_state table in SageMaker lakehouse provides a view of integration status and data modification metrics. In this example, the table shows a successful integration for an SAP CDS view table with integration ID 62b1164f-5b85-45e4-b8db-9aa7ab841e98 in the testdb database. The record indicates that at timestamp 1733000485999, 10 inserted records were processed (recent_insert_record_count: 10), with no updates or deletions (both counts at 0). This table serves as a monitoring tool, providing a centralized view of integration states and detailed statistics about data changes, making it easy to track and verify data synchronization activities in the lakehouse.

Scenario 3: Redshift monitoring system uses two views to track zero-ETL integration status

svv_integration provides a high-level overview of the integration status, showing that integration ID 03218b8a-9c95-4ec2-81ad-dd4d5398e42a has successfully replicated 18 tables with no failures, and the last checkpoint was at transaction sequence 1761289852999.

svv_integration_table_state offers table-level monitoring details, showing the status of individual tables within the integration. In this case, the SAP material group text entity table is in Synced state, with its last replication checkpoint matching the integration checkpoint (1761289852999). The table currently shows 0 rows and 0 size, suggesting it’s newly created.

Together, these views provide a comprehensive monitoring solution for tracking both overall integration health and individual table synchronization status in Amazon Redshift.

Prerequisites

In the following sections, we walk through the steps required to set up an SAP connection and use that connection to create a zero-ETL integration. Before implementing this solution, you must have the following in place:

- An SAP account

- An AWS account with administrator access

- Create an S3 Tables target and associate the S3 bucket sap_demo_table_bucket as a location of the database

- Update AWS Glue Data Catalog settings using the following IAM policy for fine-grained access control of the Data Catalog for zero-ETL

- Create an IAM role named zero_etl_bulk_demo_role, to be used by zero-ETL to access data from your SAP account

- Create the secret zero_etl_bulk_demo_secret in AWS Secrets Manager to store SAP credentials

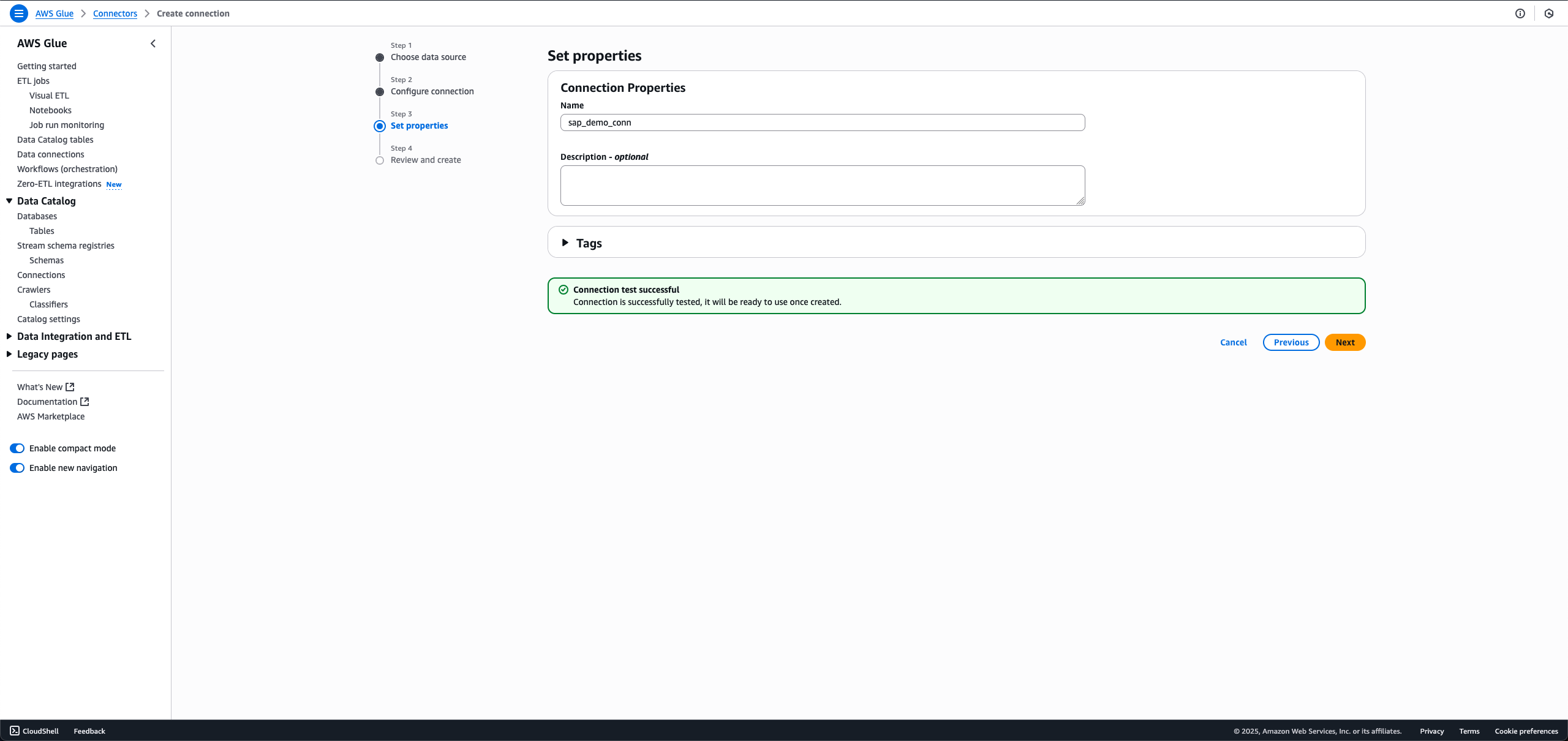

Create connection to SAP instance

To set up a connection to your SAP instance and provide data to access, complete the following steps:

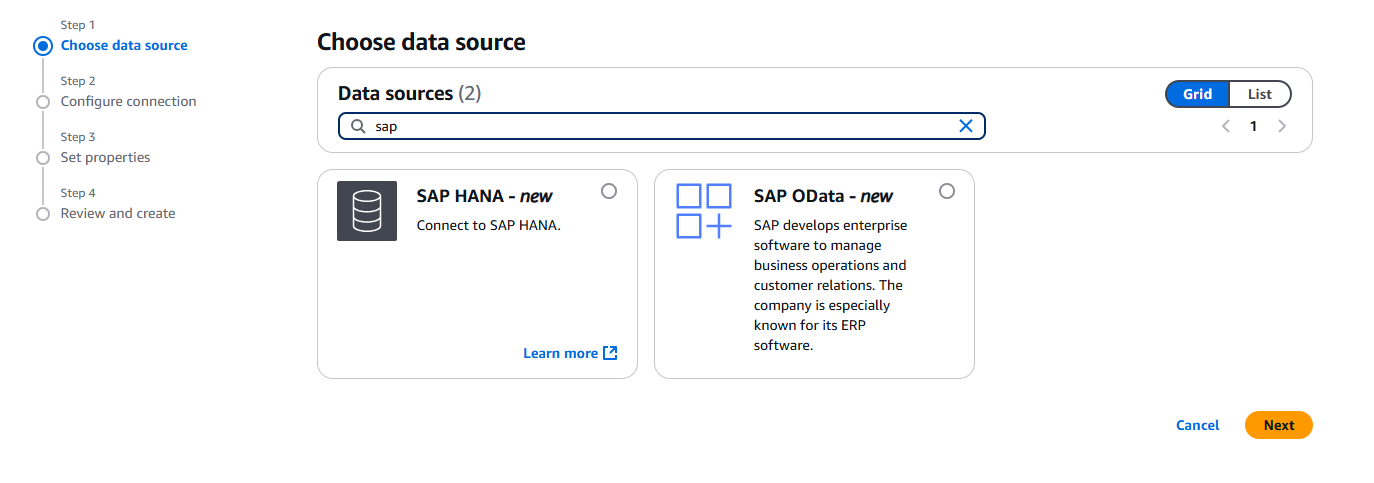

- On the AWS Glue console, in the navigation pane under Data catalog, choose Connections, then choose Create Connection.

- For Data sources, select SAP OData, then choose Next.

- Enter the SAP instance URL.

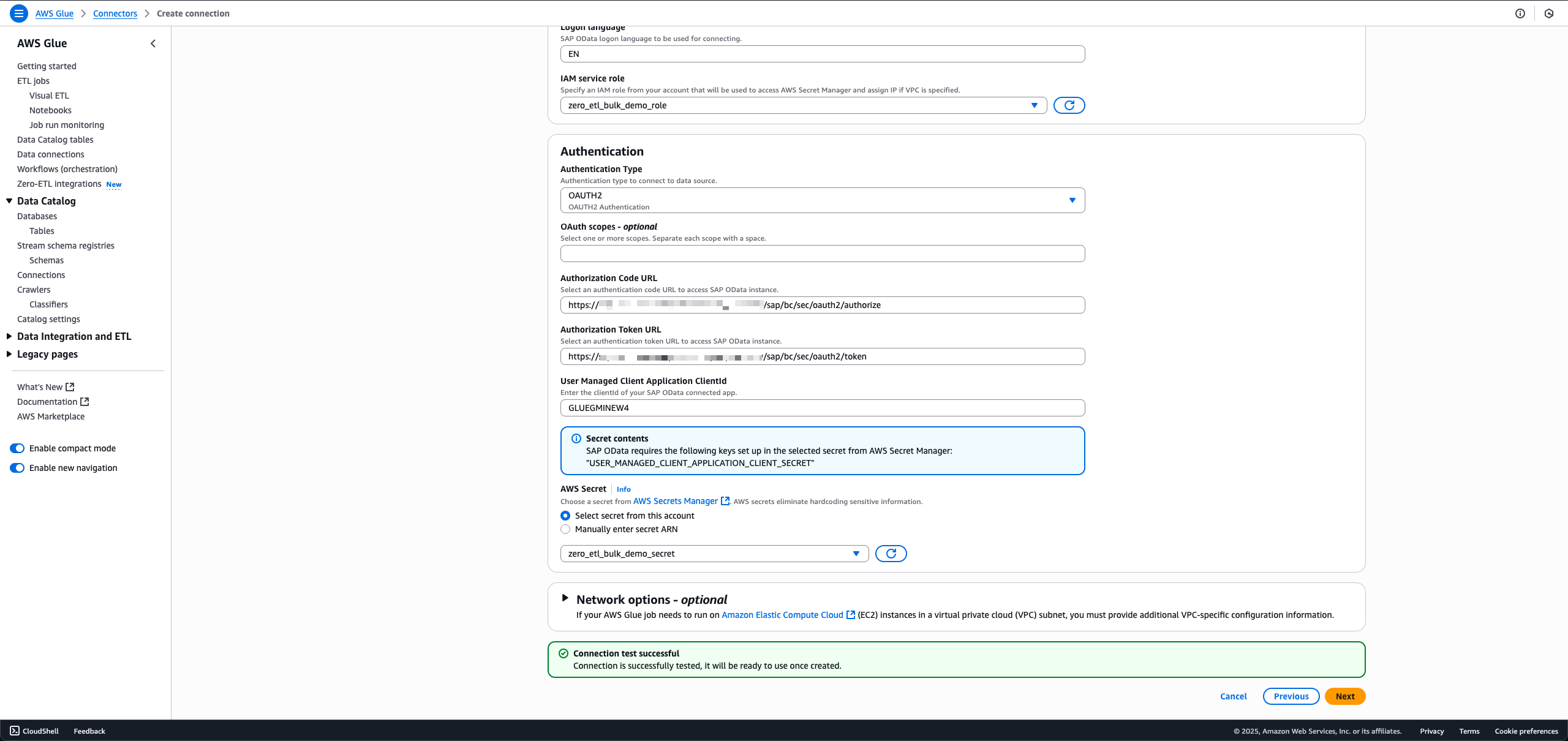

- For IAM service role, choose the role zero_etl_bulk_demo_role (created as a prerequisite).

- For Authentication Type, choose the authentication type that you’re using for SAP.

- For AWS Secret, choose the secret zero_etl_bulk_demo_secret (created as a prerequisite).

- Choose Next.

- For Name, enter a name, such as sap_demo_conn.

- Choose Next.

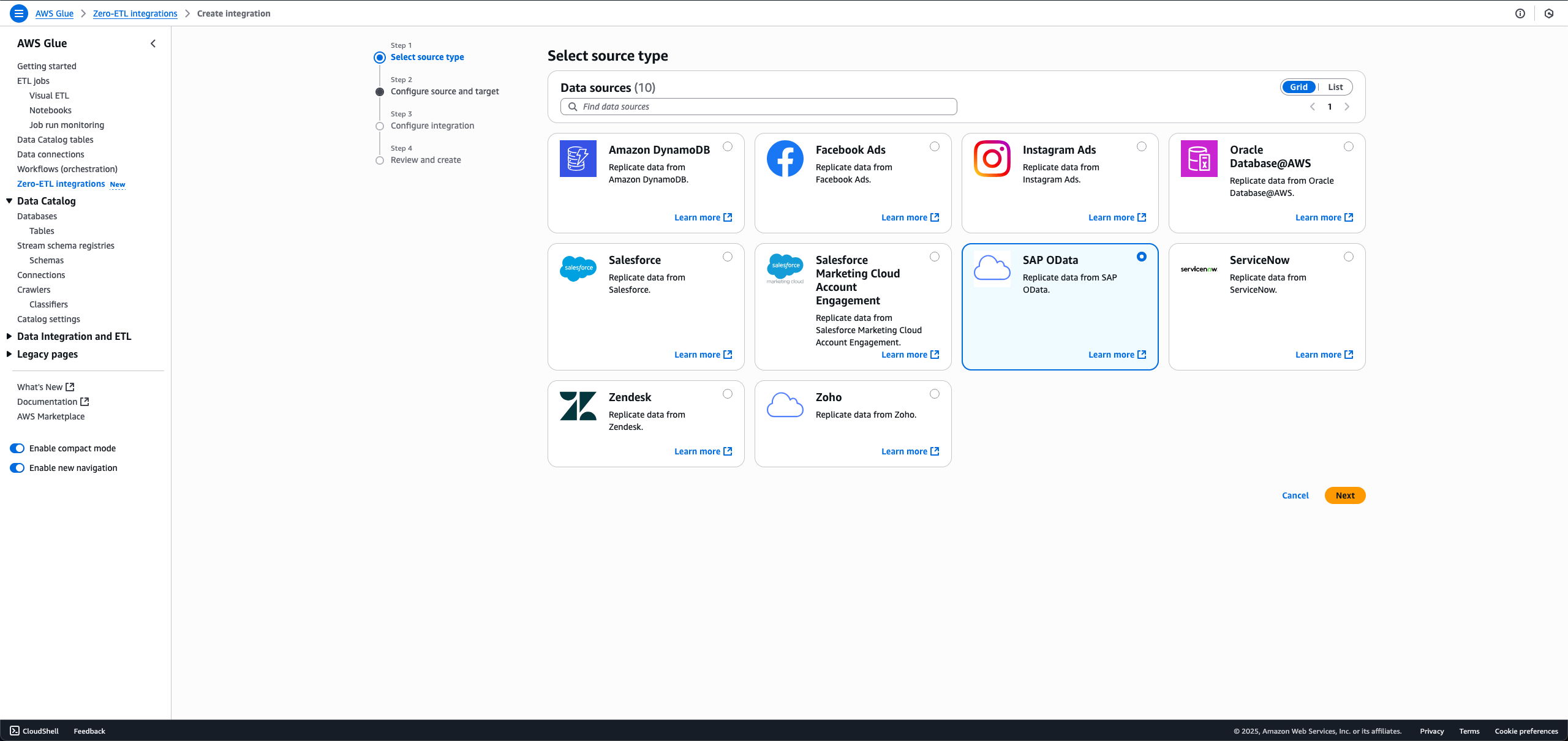

Create zero-ETL integration

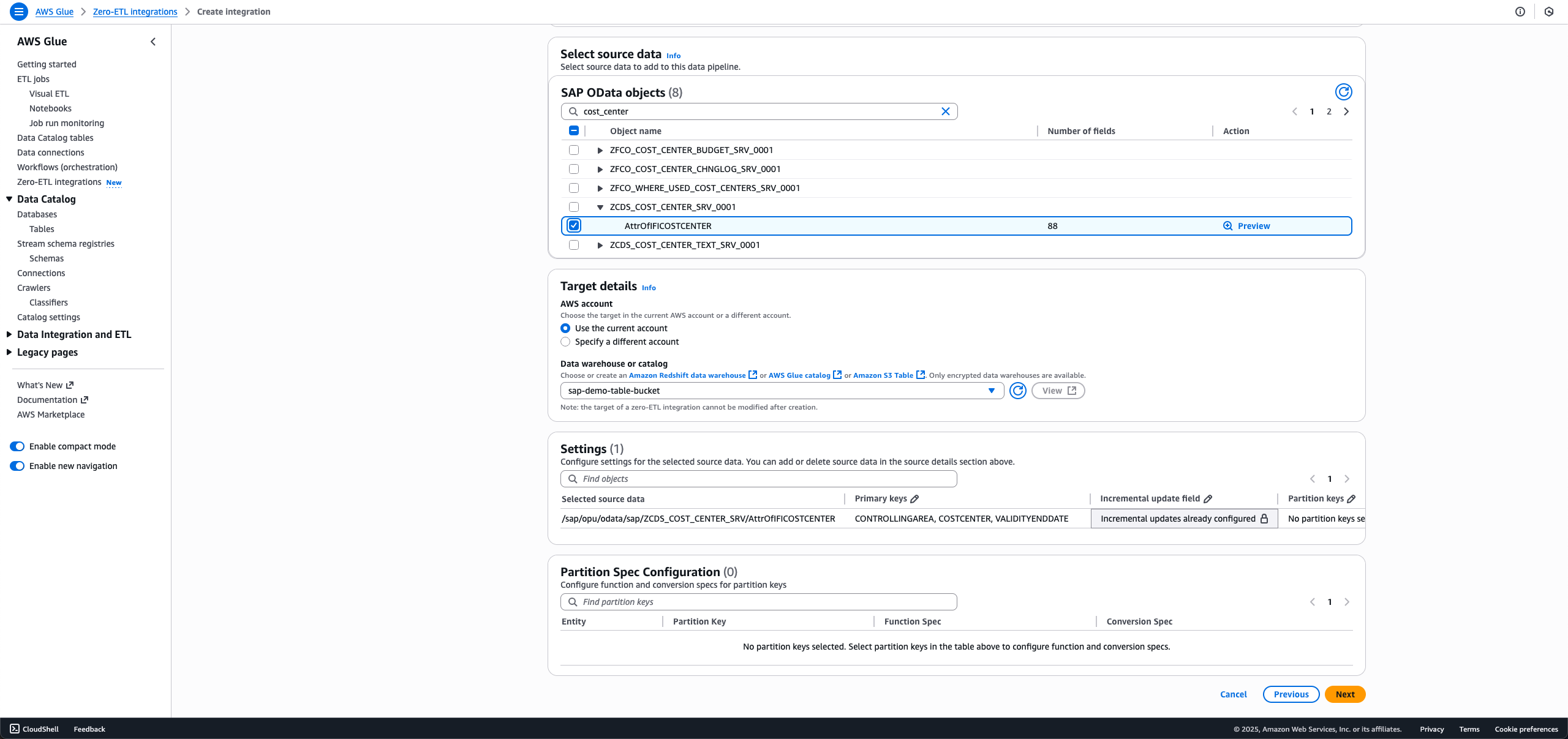

To create the zero-ETL integration, complete the following steps:

- On the AWS Glue console, in the navigation pane under Data catalog, choose Zero-ETL integrations, then choose Create zero-ETL integration.

- For Data source, select SAP OData, then choose Next.

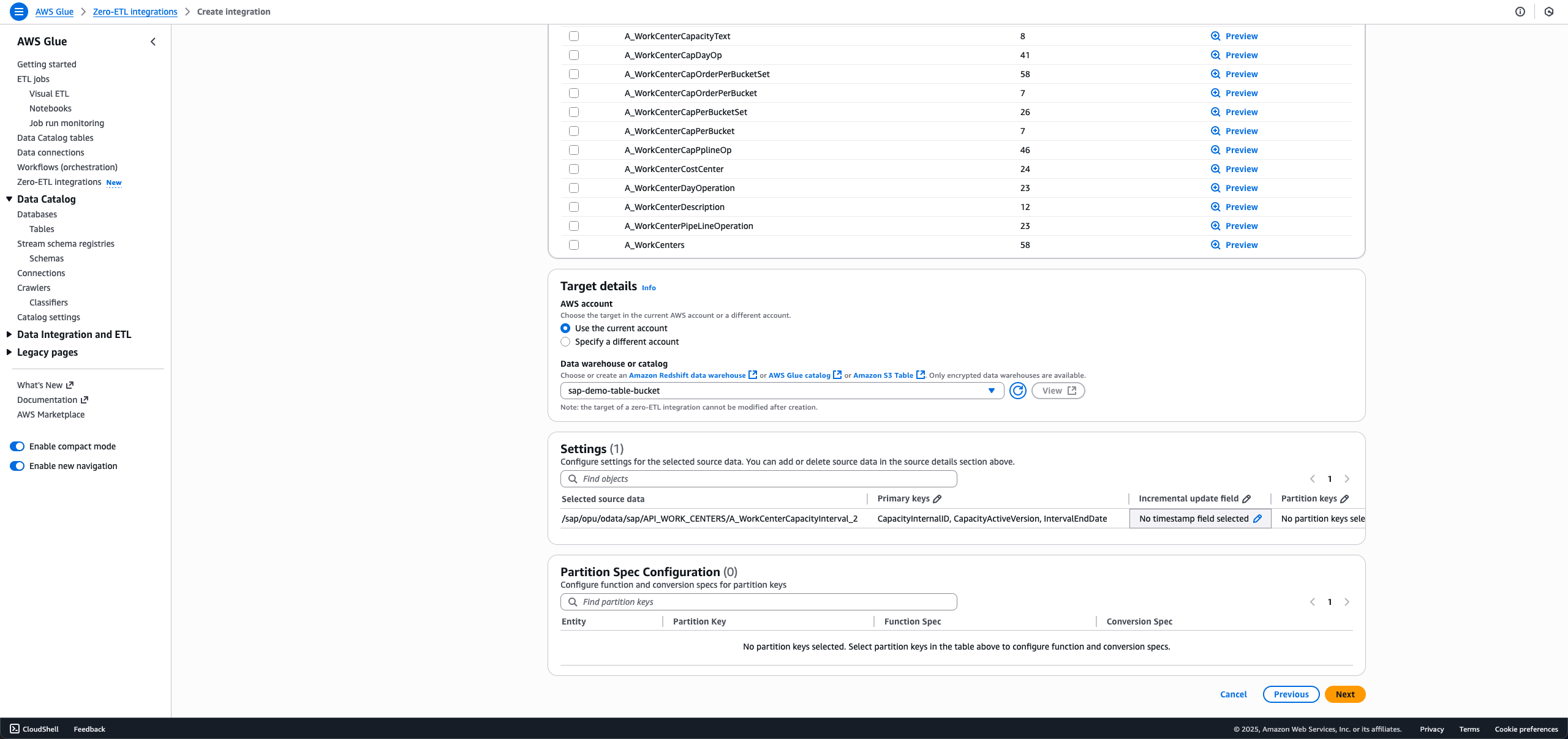

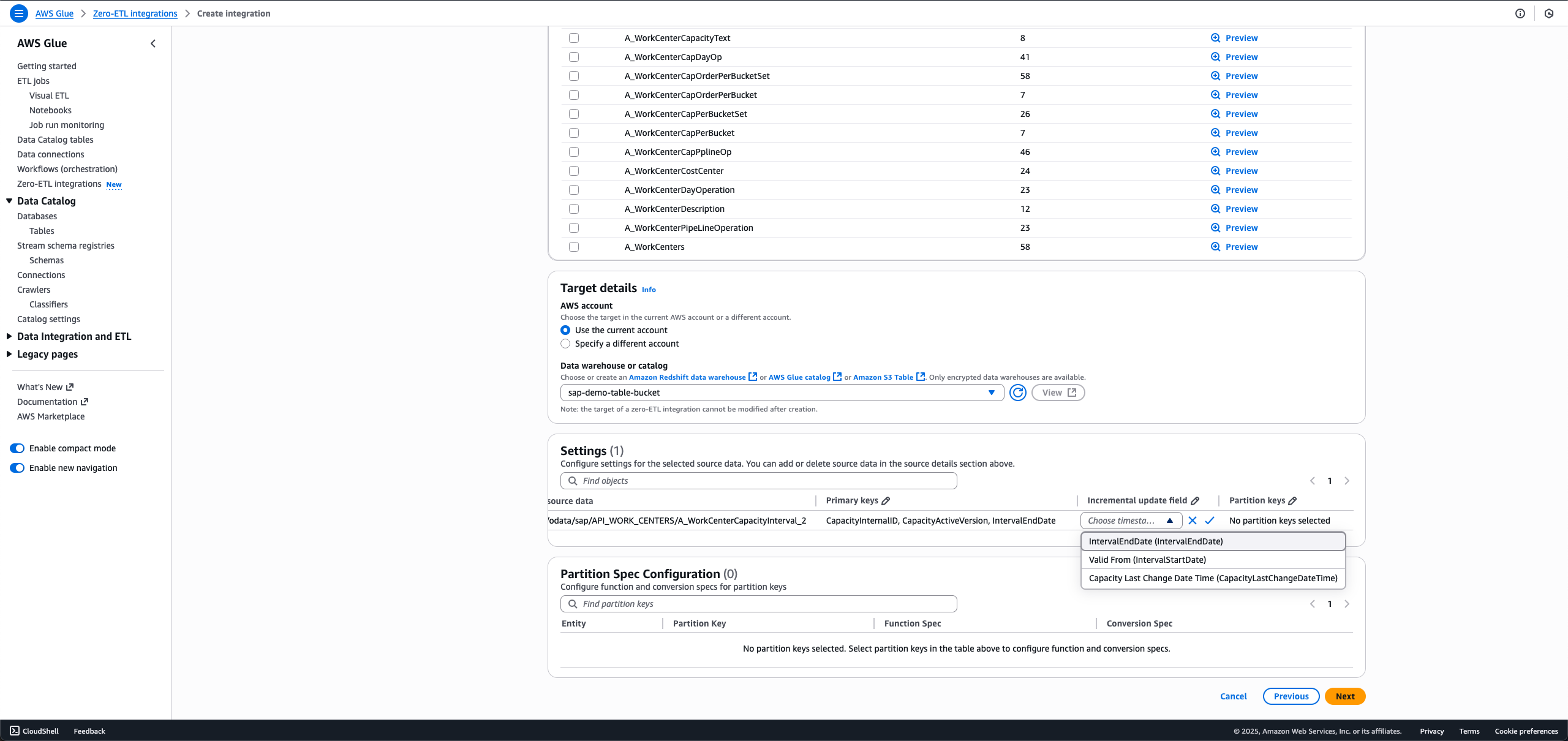

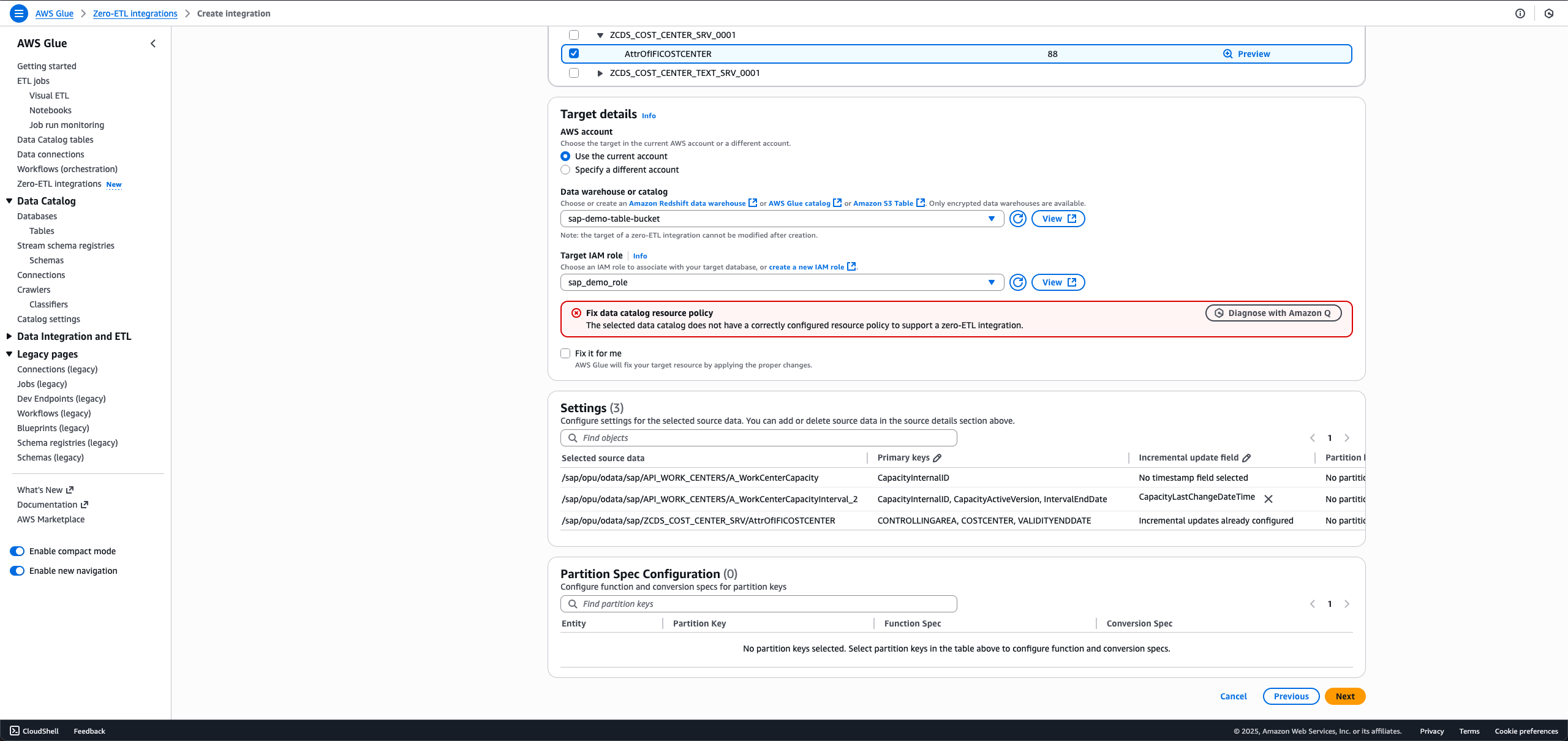

- Choose the connection name and IAM role that you created in the previous step.

- Choose the SAP objects you want in your integration. Non-ODP objects are configured for either full load or incremental load, and ODP objects are automatically configured for incremental ingestion.

- For full load, leave Incremental update field set as No timestamp field selected.

- For incremental load, choose the edit icon for Incremental update field and choose a timestamp field.

- For ODP entities that offer a delta token, the incremental update field is pre-selected, and no customer action is necessary.

When creating a new integration using the same SAP connection and entity in the data filter, you can’t select an incremental update field different from the one used in the first integration.

- For Target details, choose sap_demo_table_bucket (created as a prerequisite).

- For Target IAM role, choose sap_demo_role (created as a prerequisite).

- Choose Next.

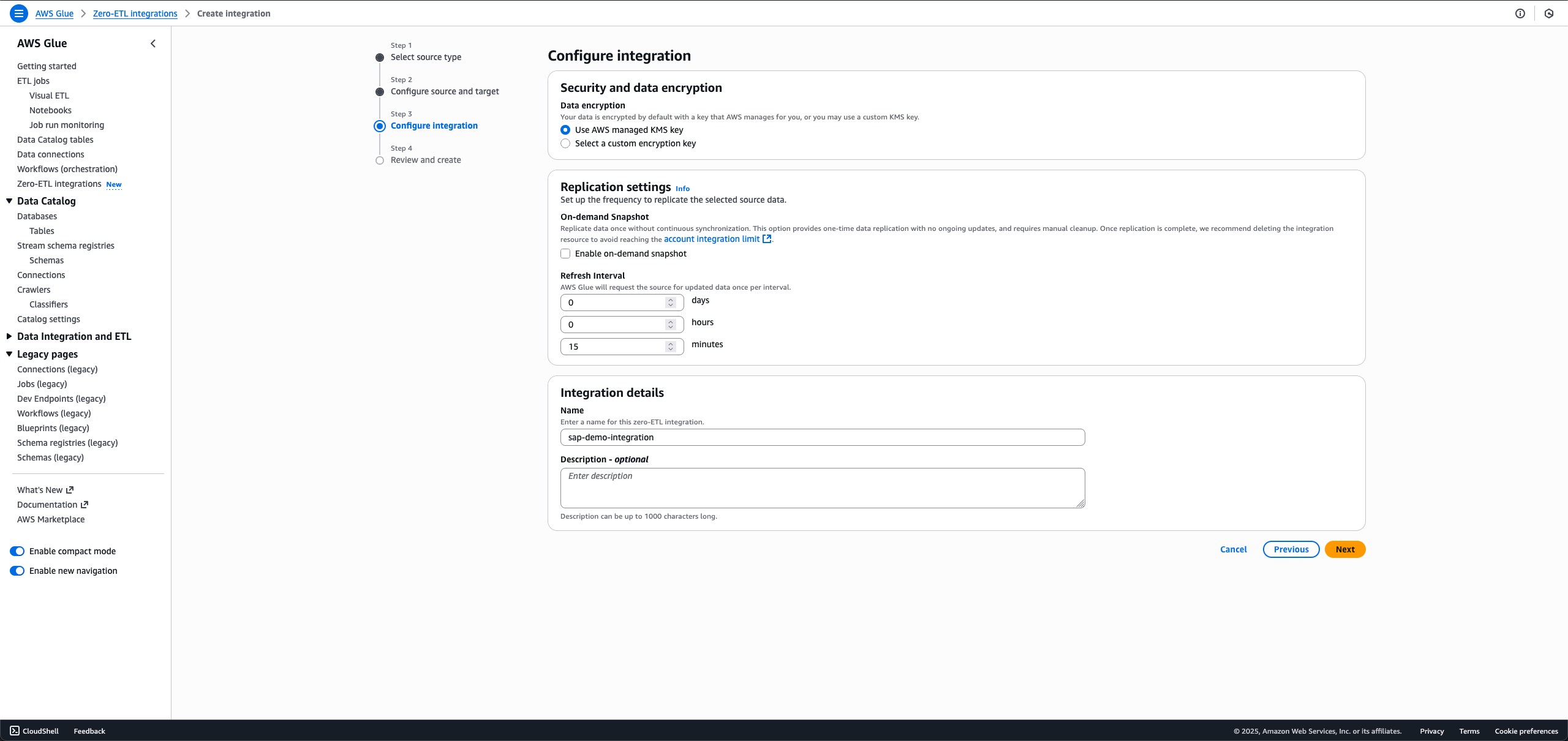

- In the Integration details section, for Name, enter sap-demo-integration.

- Choose Next.

- Review the details and choose Create and launch integration.

The newly created integration is shown as Active in about a minute.

Clean up

To clean up your resources, complete the following steps. This process will permanently delete the resources created in this post; back up important data before proceeding.

- Delete the zero-ETL integration sap-demo-integration.

- Delete the S3 Tables target bucket sap_demo_table_bucket.

- Delete the Data Catalog connection sap_demo_conn.

- Delete the Secrets Manager secret zero_etl_bulk_demo_secret.

Conclusion

You can now transform your SAP data analytics without the complexity of traditional ETL processes. With AWS Glue zero-ETL, you can gain immediate access to your SAP data while maintaining its structure across S3 Tables, SageMaker lakehouse, and Amazon Redshift. Your teams can use ACID-compliant storage with time travel capabilities, schema evolution, and concurrent reads/writes at scale, while keeping data in cost-effective cloud storage. The solution’s AI capabilities through Amazon Q and SageMaker can help your business create on-demand data products, run text-to-SQL queries, and deploy AI agents using Amazon Bedrock and Quick Suite.


Ready to modernize your SAP data strategy? Explore AWS Glue zero-ETL and enrich your organization’s data analytics capabilities.

About the authors